Smart manufacturing depends on deterministic data flow between PLCs, edge analytics, and machine vision systems. When links flap, latency spikes, or optics drift, production lines feel it immediately as missed cycles and rework. This article helps industrial engineers and network leads choose and deploy fiber optic transceivers for industry applications with a focus on operational reliability, switch compatibility, and measurable ROI.

Prerequisites: what you must measure before buying optics

Before selecting transceivers, confirm the actual traffic pattern and physical-layer constraints. For industry applications, the optical layer must survive vibration, temperature swings, and frequent maintenance access without producing intermittent bit errors (a rising BER, or bit error rate). Plan for both the link budget and the operational envelope so your optics selection matches the reality of the plant floor.

Inventory ports, media, and required speeds

Expected outcome: you produce a port-by-port requirements sheet that maps each uplink to a transceiver type and fiber type. Start by pulling switch transceiver diagnostics (vendor CLI or GUI) and recording port speed (for example 1G, 10G, 25G, 40G), connector type (LC duplex vs MPO), and current optics model. If you are standardizing on IEEE 802.3 Ethernet PHYs, capture the line rate and expected maximum payload throughput, not just the nominal bitrate.
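The gap between nominal bit rate, serial line rate, and usable payload throughput can be made concrete with a minimal Python sketch. It assumes untagged IEEE 802.3 frames and the usual PCS encodings (8b/10b for 1000BASE-X, 64b/66b for 10GBASE-R and 25GBASE-R); the function names and the encoding table are illustrative, not taken from any vendor tool.

```python
"""Nominal MAC rate vs. serial line rate vs. payload throughput (sketch)."""

# Bytes on the wire per frame besides the payload (untagged Ethernet).
HEADER_FCS_BYTES = 18     # 14-byte MAC header + 4-byte FCS
PREAMBLE_IFG_BYTES = 20   # 8-byte preamble/SFD + 12-byte minimum inter-frame gap

# Assumed PCS encoding ratio per nominal MAC rate in Gb/s.
ENCODING_RATIO = {1: 10 / 8, 10: 66 / 64, 25: 66 / 64}


def line_rate_gbaud(mac_rate_gbps: int) -> float:
    """Serial signaling rate once encoding overhead is added."""
    return mac_rate_gbps * ENCODING_RATIO[mac_rate_gbps]


def payload_throughput_gbps(mac_rate_gbps: int, payload_bytes: int = 1500) -> float:
    """Maximum payload rate for back-to-back frames of a single payload size."""
    if not 46 <= payload_bytes <= 1500:
        raise ValueError("standard untagged Ethernet payload is 46 to 1500 bytes")
    wire_bytes = payload_bytes + HEADER_FCS_BYTES + PREAMBLE_IFG_BYTES
    return mac_rate_gbps * payload_bytes / wire_bytes


for payload in (1500, 46):
    print(f"10G, {payload}-byte payload: "
          f"{payload_throughput_gbps(10, payload):.2f} Gb/s "
          f"(line rate {line_rate_gbaud(10)} GBd)")
```

Under these assumptions a 10G port carries about 9.75 Gb/s of payload at 1500-byte frames but only about 5.48 Gb/s at minimum-size frames, which is a reason to record expected frame sizes next to port speeds in the requirements sheet.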

Measure fiber plant loss and connector quality

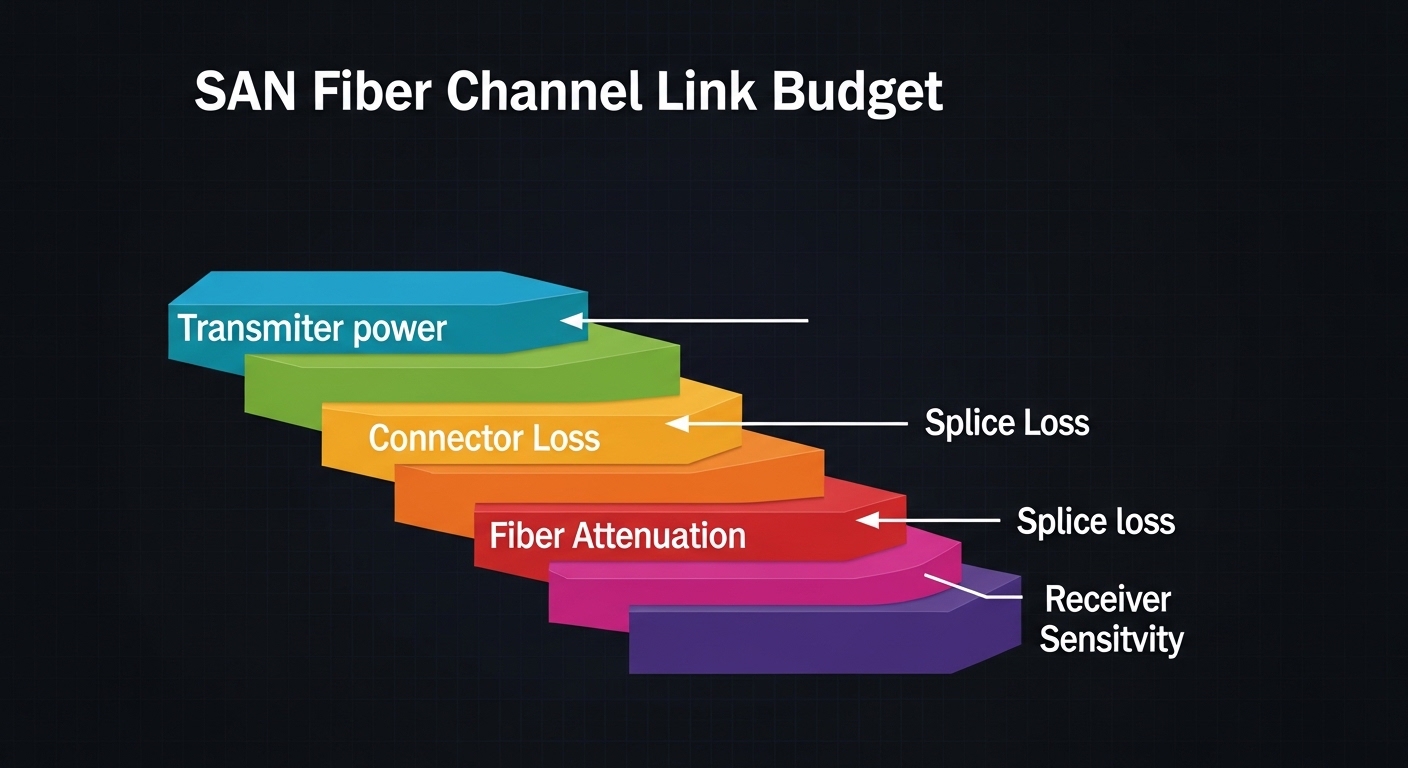

Expected outcome: you calculate a worst-case link budget with margin for aging and cleaning variability. Use an OTDR, or an optical loss test set (calibrated light source plus power meter) with reference patch cords. Record the worst-case loss in dB at the transceiver wavelength, including connector insertion loss; for example, clean and properly terminated LC mated pairs typically add about 0.2 to 0.5 dB each, and field conditions can be worse.
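The loss tally can be written as a small worst-case calculator. This Python sketch assumes per-element allowances you should replace with your own measurements: 0.5 dB per mated pair (the upper end of the range quoted above), a hypothetical 0.3 dB per splice, and a cable attenuation you supply per wavelength (3.5 dB/km is used below as an assumed multimode figure at 850 nm).

```python
"""Worst-case passive channel loss estimate for one fiber link (sketch)."""
from dataclasses import dataclass


@dataclass
class FiberLink:
    length_km: float
    atten_db_per_km: float  # assumed cable attenuation at the transceiver wavelength
    mated_pairs: int        # connector pairs along the channel, patch panels included
    splices: int = 0


# Assumed worst-case per-element losses; replace with measured values.
WORST_CONNECTOR_DB = 0.5
WORST_SPLICE_DB = 0.3   # hypothetical fusion-splice allowance


def worst_case_loss_db(link: FiberLink) -> float:
    """Sum fiber attenuation, connector, and splice losses in dB."""
    return (link.length_km * link.atten_db_per_km
            + link.mated_pairs * WORST_CONNECTOR_DB
            + link.splices * WORST_SPLICE_DB)


# Example: 120 m of OM4 at 850 nm with an assumed four mated pairs.
cabinet_run = FiberLink(length_km=0.12, atten_db_per_km=3.5, mated_pairs=4)
print(f"{worst_case_loss_db(cabinet_run):.2f} dB")  # 2.42 dB
```

Connector allowances dominate this short run, which is consistent with the article's emphasis on cleaning and inspection over fiber length.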

Validate environmental limits and airflow

Expected outcome: you confirm the transceiver temperature range and any derating assumptions. Industrial control rooms and machine cabinets can exceed typical office conditions, especially near drives and compressors. Choose optics whose operating temperature range covers your enclosure: commercial-grade modules are commonly rated 0 to 70 °C, while industrial-grade variants are commonly rated -40 to 85 °C.
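One way to apply this is to pick the narrowest grade that still covers the measured enclosure range plus headroom. In this Python sketch the grade table uses the commonly quoted ranges above, and the 10 °C headroom (standing in for module case temperature running above enclosure air) is a hypothetical allowance; always confirm against the module datasheet.

```python
"""Choose the narrowest transceiver temperature grade for an enclosure (sketch)."""

# Commonly quoted operating ranges in deg C, narrowest first; confirm per datasheet.
GRADES = [
    ("commercial", 0.0, 70.0),
    ("industrial", -40.0, 85.0),
]


def pick_grade(measured_min_c: float, measured_max_c: float,
               headroom_c: float = 10.0) -> str:
    """Return the first grade covering the measured range widened by headroom."""
    low, high = measured_min_c - headroom_c, measured_max_c + headroom_c
    for name, grade_min, grade_max in GRADES:
        if grade_min <= low and high <= grade_max:
            return name
    raise ValueError(f"no grade covers {low}..{high} deg C; revisit cooling or heating")


print(pick_grade(18, 35))  # commercial (climate-controlled control room)
print(pick_grade(5, 62))   # industrial (cabinet near drives)
```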

Pro Tip: In smart manufacturing deployments, the most common “mystery” outage is not the transceiver itself; it is a marginally cleaned connector or a patch-cord swap that changes the effective link budget. Before you blame the optics, build an inspect-clean-verify workflow based on end-face inspection criteria such as IEC 61300-3-35.
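The inspect-clean-verify workflow can be expressed as a bounded loop, so technicians never connect an uninspected end-face and never clean indefinitely. In this Python sketch, `inspect` and `clean` are hypothetical callbacks standing in for your inspection scope's pass/fail result and one cleaning pass from your SOP; the limit of three cleaning attempts is an assumption.

```python
"""Bounded inspect-clean-verify loop for one connector end-face (sketch)."""
from typing import Callable


def inspect_clean_verify(inspect: Callable[[], bool],
                         clean: Callable[[], None],
                         max_cleans: int = 3) -> str:
    """Return 'pass' once the end-face passes inspection, else 'replace'."""
    if inspect():
        return "pass"          # already clean: connect without extra handling
    for _ in range(max_cleans):
        clean()                # one cleaning pass per the site SOP
        if inspect():
            return "pass"
    return "replace"           # persistent defects suggest damage, not dirt


readings = iter([False, False, True])  # fails twice, passes after the second clean
print(inspect_clean_verify(lambda: next(readings), lambda: None))  # pass
```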

Optical module types for smart manufacturing: what actually fits

In industry applications, you usually choose between short-reach multimode for in-building runs and single-mode for longer backbones or EMI-robust paths. The decision is driven by distance, wavelength, connector style, and how your switch vendor validates optics. For Ethernet, ensure the module matches the switch’s expected electrical interface and optical standard.

Common module families and where they land

- SFP/SFP+ and SFP28: often used for 1G/10G/25G edge links where port density is moderate.

- SFP-DD and SFP56: used when switches support 50G PAM4 lanes; SFP56 carries a single 50G lane, while SFP-DD's two lanes support up to 100G.

- QSFP+/QSFP28/QSFP56: typical for 40G/100G/200G aggregation and spine uplinks, with MPO connectors on parallel-fiber variants (for example SR4) and LC duplex on WDM variants (for example LR4).

- Coherent optics: for metro and long-haul backbones, well beyond most machine-level needs.
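These families differ mainly in lane count, per-lane rate, and modulation (NRZ or PAM4). The Python sketch below encodes a common mapping and filters it by the cage types a switch actually has; the table reflects common MSA configurations and should be checked against your switch documentation, and `switch_cages` is a hypothetical input you would fill from the port inventory.

```python
"""Map an Ethernet port speed to candidate pluggable form factors (sketch)."""

# speed in Gb/s -> (form factor, lanes, per-lane Gb/s, modulation)
FORM_FACTORS = {
    10: [("SFP+", 1, 10, "NRZ")],
    25: [("SFP28", 1, 25, "NRZ")],
    40: [("QSFP+", 4, 10, "NRZ")],
    50: [("SFP56", 1, 50, "PAM4")],
    100: [("QSFP28", 4, 25, "NRZ"), ("SFP-DD", 2, 50, "PAM4")],
    200: [("QSFP56", 4, 50, "PAM4")],
}

# Sanity check: lanes x per-lane rate must equal the nominal speed.
for speed, options in FORM_FACTORS.items():
    for _, lanes, lane_rate, _ in options:
        assert lanes * lane_rate == speed, (speed, lanes, lane_rate)


def candidates(speed_gbps: int, switch_cages: set[str]) -> list[str]:
    """Form factors that carry the speed and fit a cage present on the switch."""
    return [name for name, _, _, _ in FORM_FACTORS.get(speed_gbps, [])
            if name in switch_cages]


print(candidates(100, {"QSFP28", "SFP+"}))  # ['QSFP28']
```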

Single-mode vs multimode in plant networks

Multimode is common for short in-building runs due to lower cost and easier handling, especially with OM3/OM4 fiber. Single-mode is favored when you need longer reach, easier splicing across buildings, or future-proofing for higher-speed migrations. In practice, many factories deploy a hybrid architecture: multimode in machine rooms and single-mode for aggregation and inter-building paths.

Specs that matter: wavelength, reach, power, and temperature

Transceivers are not interchangeable just because they share a connector. For industry applications, the engineering question is whether the optics meet the optical power and receiver sensitivity requirements under worst-case loss. Below is a comparison of representative modules frequently used in enterprise and industrial Ethernet deployments.

| Module example (typical use) | Data rate | Wavelength | Reach class | Fiber type | Connector | Tx power / Rx sensitivity (typical) | Operating temperature | Where it fits in smart manufacturing |
|---|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR or FS.com SFP-10GSR-85 (10G SR) | 10G Ethernet | 850 nm | ~300 m (OM3), ~400 m (OM4) | MMF OM3/OM4 | LC duplex | Tx roughly -7.3 to -1 dBm; Rx sensitivity around -9.9 dBm | Commercial or extended variants | Machine-room edge switches to nearby control cabinets |
| Finisar FTLX8571D3BCL (representative 10G SR) | 10G Ethernet | 850 nm | ~300 m class | MMF OM3 | LC duplex | Tx/Rx values per datasheet; verify against switch budget | Commercial | Short multimode patch links inside a plant |
| Cisco SFP-10G-LR or FS.com SFP-10GLR (10G LR) | 10G Ethernet | 1310 nm | ~10 km | SMF | LC duplex | Tx roughly -8.2 to +0.5 dBm; Rx sensitivity around -14.4 dBm | Commercial or industrial options | Inter-building aggregation and long uplinks |
| QSFP+/QSFP28 modules, SR4 or LR4 (for aggregation) | 40G/100G | 850 nm (SR4) or 1310 nm band (LR4) | ~100 to 150 m on OM4 (SR4) to ~10 km (LR4) | MMF (SR4) or SMF (LR4) | MPO (SR4) or LC duplex (LR4) | Per standard; verify laser safety and budget | Commercial | Leaf-spine links between industrial edge clusters and the OT core |

When you validate the design, compute the margin as: margin (dB) = (minimum Tx power - receiver sensitivity) - (fiber loss + patch-cord loss + connector loss + aging allowance), with both powers in dBm. For industry applications, aim for conservative headroom so you do not operate at the edge of the receiver sensitivity curve, especially after maintenance.
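The margin is the power budget (minimum Tx power minus receiver sensitivity) minus the total channel loss. This Python sketch computes it using the 10G LR datasheet-style values from the table above (Tx minimum -8.2 dBm, Rx sensitivity -14.4 dBm); the 3 km length, 0.4 dB/km single-mode attenuation, and the patch, connector, and aging allowances are assumptions chosen only for illustration.

```python
"""Optical power budget and link margin in dB (sketch)."""


def link_margin_db(tx_min_dbm: float, rx_sens_dbm: float, fiber_db: float,
                   patch_db: float, connector_db: float, aging_db: float) -> float:
    """Margin = power budget - total loss; dBm minus dBm yields dB."""
    power_budget_db = tx_min_dbm - rx_sens_dbm
    total_loss_db = fiber_db + patch_db + connector_db + aging_db
    return power_budget_db - total_loss_db


# Hypothetical 3 km inter-building 10G LR uplink.
margin = link_margin_db(
    tx_min_dbm=-8.2, rx_sens_dbm=-14.4,
    fiber_db=3 * 0.4,  # assumed 0.4 dB/km at 1310 nm
    patch_db=0.5, connector_db=1.5, aging_db=1.0,
)
print(f"margin = {margin:.1f} dB")  # margin = 2.0 dB
```

A 2.0 dB result falls inside the 1 to 2 dB zone the selection checklist warns against, so this hypothetical link would need lower-loss patching, fewer mated pairs, or a module class with a larger power budget to clear a 3 dB floor.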

Deployment scenario: optical links in a leaf-spine smart factory

Consider a 3-tier smart manufacturing topology in a mid-size plant: 48-port 10G ToR switches connect to machine cells, and each cell uses 12 ports for PLC gateways, machine vision cameras, and industrial PCs. The plant uses 10G short-reach optics for machine-room patching, with multimode OM4 fiber for runs up to 120 m and single-mode fiber for inter-building uplinks up to 3 km. During peak production, the edge sees bursts of 6 to 8 Gbps per rack, while latency-sensitive control traffic must stay under 1 ms.

Engineers typically standardize on LC duplex 10G SR for ToR to machine cabinets and LC duplex 10G LR for aggregation to the OT core. A practical design approach is to target a link budget margin of at least 3 to 6 dB under measured worst-case loss, then enforce a connector inspection and cleaning SOP. This reduces “random” link resets caused by contaminated end-faces and maintains stable BER during temperature cycling.
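One way to enforce the SOP is a before-and-after check on DOM receive power whenever patching changes. This Python sketch assumes you can export per-port Rx power readings (in dBm) from your switch before and after the work; the port names and the 1 dB drop threshold are hypothetical.

```python
"""Flag ports whose DOM Rx power dropped after a maintenance window (sketch)."""


def rx_drop_flags(baseline_dbm: dict[str, float], after_dbm: dict[str, float],
                  max_drop_db: float = 1.0) -> list[str]:
    """Ports whose Rx power fell by more than max_drop_db, or lost their reading."""
    flagged = []
    for port, before in baseline_dbm.items():
        after = after_dbm.get(port)
        if after is None or before - after > max_drop_db:
            flagged.append(port)  # re-inspect and clean before returning to production
    return flagged


baseline = {"cell1-uplink": -3.1, "cell2-uplink": -2.8}
after = {"cell1-uplink": -3.3, "cell2-uplink": -4.6}
print(rx_drop_flags(baseline, after))  # ['cell2-uplink']
```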

Selection criteria checklist for industry applications

Use this ordered checklist when choosing transceivers and planning spares. It reflects how field teams avoid downtime and how security and compliance teams reduce operational risk.

- Distance and fiber type: confirm OM3/OM4 vs single-mode; validate worst-case attenuation with OTDR and patch-cord testing.

- Switch compatibility: verify the switch’s optics support matrix; confirm whether it supports third-party optics and how it treats DOM.

- Data rate and Ethernet standard: align with IEEE 802.3 PHY requirements for the target speed and lane configuration.

- DOM support and monitoring: ensure Digital Optical Monitoring is supported (temperature, bias current, transmit power, receive power) so you can alert before failure.

- Operating temperature and derating: select industrial temperature variants if optics sit in machine cabinets; confirm whether the vendor specifies derating curves.

- Vendor lock-in risk: if you buy only OEM optics, plan for supply continuity; if you buy third-party optics, validate across multiple batches to catch batch-to-batch calibration differences.

- Power budget margin: compute link budget with connector losses and aging allowance; avoid designs that run within 1 to 2 dB of receiver sensitivity.

- Safety and compliance: confirm laser class labeling and documentation for your facility’s compliance requirements.
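The checklist can be applied mechanically once each criterion has a number or a yes/no answer. The Python sketch below screens one candidate against one link's requirements; the field names are hypothetical, the margin input is assumed to come from your link-budget calculation, and the 3 dB default floor is an assumption that sits above the 1 to 2 dB zone the checklist warns against.

```python
"""Screen a candidate transceiver against the selection checklist (sketch)."""
from dataclasses import dataclass


@dataclass
class Candidate:
    reach_m: float
    temp_range_c: tuple[float, float]
    has_dom: bool
    on_switch_support_list: bool
    margin_db: float  # from the link-budget calculation for this link


@dataclass
class LinkRequirement:
    distance_m: float
    enclosure_range_c: tuple[float, float]
    min_margin_db: float = 3.0  # hypothetical floor


def checklist_failures(c: Candidate, r: LinkRequirement) -> list[str]:
    """Return the names of failed checklist items (empty list means pass)."""
    failures = []
    if c.reach_m < r.distance_m:
        failures.append("distance")
    lo, hi = c.temp_range_c
    if not (lo <= r.enclosure_range_c[0] and r.enclosure_range_c[1] <= hi):
        failures.append("operating temperature")
    if not c.has_dom:
        failures.append("DOM")
    if not c.on_switch_support_list:
        failures.append("switch compatibility")
    if c.margin_db < r.min_margin_db:
        failures.append("power budget margin")
    return failures


sr_module = Candidate(400, (0, 70), True, True, 4.1)
print(checklist_failures(sr_module, LinkRequirement(120, (-10, 55))))  # ['operating temperature']
```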

Common pitfalls and troubleshooting for optical links

Even well-designed optical links in industry applications fail in predictable ways. Below are the most common field failure modes, with root causes and corrective actions that reduce mean time to repair.

Failure point 1: link up/down after patch changes

Root cause: contaminated or mismatched connectors (wrong polarity, dirty end-faces, or damaged ferrules) introduced during maintenance. Solution: clean using a validated fiber cleaning workflow, inspect with a microscope, and re-test with an optical power meter. If the link remains unstable, replace the patch cord with a known-good cord and re-run OTDR to confirm no microbends or connector damage.

Failure point 2: high error counters with stable optical power

Root cause: lane mismatch, incorrect transceiver standard, or marginal signal integrity due to bad patching or wrong fiber type (for example, using OM3 in a design expecting OM4 performance). Solution: verify the transceiver part number and confirm the switch negotiates the expected mode. Check for interface errors (CRC, symbol errors) and compare receive power readings from DOM against the datasheet thresholds.
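The order of checks in this failure mode (confirm the part, then the receive power, then suspect fiber type or negotiated mode) can be captured as a small triage function. This Python sketch is a hypothetical rule set, not a vendor diagnostic; the inputs are assumed to come from the switch's interface counters and DOM readings, and the low-warning threshold is whatever your module reports or its datasheet specifies.

```python
"""Rule-based triage for a link with rising error counters (sketch)."""


def triage(installed_part: str, expected_part: str, rx_dbm: float,
           rx_low_warn_dbm: float, crc_delta: int) -> str:
    """Return the next check to perform, following the order described above."""
    if installed_part != expected_part:
        return "replace module: part number does not match the design"
    if rx_dbm < rx_low_warn_dbm:
        return "optical power problem: inspect, clean, and re-test the path"
    if crc_delta > 0:
        return "errors at normal power: check fiber type, patching, and negotiated mode"
    return "no fault indicated"


print(triage("SFP-10G-SR", "SFP-10G-SR", rx_dbm=-4.0,
             rx_low_warn_dbm=-9.0, crc_delta=1200))
```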

Failure point 3: optics work in lab but fail in cabinets under heat

Root cause: temperature outside the module’s specified operating range or insufficient airflow, leading to laser bias drift and receiver sensitivity degradation. Solution: deploy industrial temperature variants or improve cabinet cooling and airflow paths. Use DOM alerts to track temperature and transmit power trends across production cycles, then adjust alarm thresholds to catch drift early.
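Trend tracking can be as simple as fitting a least-squares slope to daily DOM samples and alerting when transmit power declines steadily or temperature approaches its warning threshold. In this Python sketch the sample data, the -0.05 dB/day slope limit, and the 5 °C guard band are hypothetical values to tune against your own production cycles.

```python
"""DOM drift alerts from daily Tx power and temperature samples (sketch)."""


def ls_slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


def drift_alerts(days: list[float], tx_dbm: list[float], temp_c: list[float],
                 temp_high_warn_c: float, tx_slope_limit: float = -0.05,
                 guard_c: float = 5.0) -> list[str]:
    """Alert on a steady Tx power decline or temperature near the warning level."""
    alerts = []
    if ls_slope(days, tx_dbm) < tx_slope_limit:
        alerts.append("tx power declining")
    if max(temp_c) >= temp_high_warn_c - guard_c:
        alerts.append("temperature near high warning")
    return alerts


days = [0, 1, 2, 3, 4]
tx = [-2.0, -2.1, -2.2, -2.35, -2.45]
temps = [55, 58, 63, 66, 67]
print(drift_alerts(days, tx, temps, temp_high_warn_c=70))
# ['tx power declining', 'temperature near high warning']
```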

Cost and ROI note: what to budget for optics in production

In industry applications, optics are a mix of capex and operational risk. Typical pricing ranges vary widely by speed and reach: 10G SR LC modules often cost roughly $50 to $200 per unit depending on brand and industrial temperature grade, while 10G LR LC modules can be $200 to $600. Third-party optics can reduce upfront costs, but total cost of ownership depends on validation effort, failure rates, and whether your team must stock additional spares to cover compatibility surprises.
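The trade-off between lower unit prices and extra validation and spares can be compared with a simple expected-cost model. This Python sketch is an assumption-heavy illustration: the unit prices sit inside the 10G LR range quoted above, while the spare ratios, validation cost, annual failure rates, and five-year horizon are hypothetical placeholders for your own data, and downtime cost is deliberately left out.

```python
"""Simple multi-year optics cost comparison (sketch; ignores downtime cost)."""
import math


def optics_tco(units: int, unit_price: float, spare_ratio: float,
               validation_cost: float, annual_failure_rate: float,
               years: int) -> float:
    """Initial buy plus spares, expected replacement purchases, and validation effort."""
    spares = math.ceil(units * spare_ratio)
    initial = (units + spares) * unit_price
    expected_replacements = units * annual_failure_rate * years
    return initial + expected_replacements * unit_price + validation_cost


oem = optics_tco(48, 400.0, 0.10, 0.0, 0.01, 5)
third_party = optics_tco(48, 150.0, 0.20, 3000.0, 0.02, 5)
print(f"OEM: ${oem:,.0f}  third-party: ${third_party:,.0f}")
```

Adding an expected downtime cost per failure is the natural next extension, since the ROI discussion that follows centers on downtime and mean time to repair.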

ROI usually comes from reducing downtime and lowering mean time to repair. If your maintenance plan includes DOM-based monitoring and a tested cleaning workflow, you can reduce repeat failures and avoid emergency truck rolls. Also factor in power and cooling impacts: while transceiver power is small compared to switch chassis, high-density fabrics increase aggregate heat, making temperature-appropriate optics and airflow planning financially relevant.

FAQ

What industry applications benefit most from fiber optics instead of copper?

Machine rooms with EMI sensitivity, long cable runs, and noisy environments benefit most. Fiber also reduces ground-loop risk and supports higher speeds without copper reach limitations.

How do I verify that a transceiver will work with my switch?

Check the switch vendor’s optics compatibility list and confirm the transceiver’s electrical interface matches the port type. Then validate in a staging area using your exact patch cords and measured link loss.

Is DOM support required for smart manufacturing monitoring?

DOM is strongly recommended because it enables proactive alerts for temperature drift, transmit power reduction, and receive power changes. Without DOM, you typically detect issues only after errors increase or links flap.

Should I standardize on multimode or single-mode for new builds?

For short in-building runs, multimode OM4 is often cost-effective. For future migration, inter-building links, and longer reach, single-mode is usually the safer standard and reduces redesign risk.

What is the most common reason for intermittent link errors in production?

Dirty or damaged connectors and patch cords are the most common causes. Even when optical power looks acceptable, microscale contamination can increase error rates and cause sporadic resets.

Are third-party optics acceptable for industry applications?

They can be acceptable if you validate thoroughly and confirm switch support for third-party optics and DOM behavior. Keep a controlled pilot batch and track error counters and DOM trends across temperature cycles.

Optical deployments for industry applications succeed when engineering choices match the plant’s distance, environment, and maintenance reality. Next, map your current port speeds and fiber plant loss into a transceiver shortlist, using the selection criteria checklist above as your working template.

Author bio: I lead network architecture and reliability programs for industrial Ethernet, focusing on PHY compatibility, link-budget engineering, and operational monitoring. I have deployed fiber-based fabrics across OT environments where uptime is measured in production cycles, not office hours.