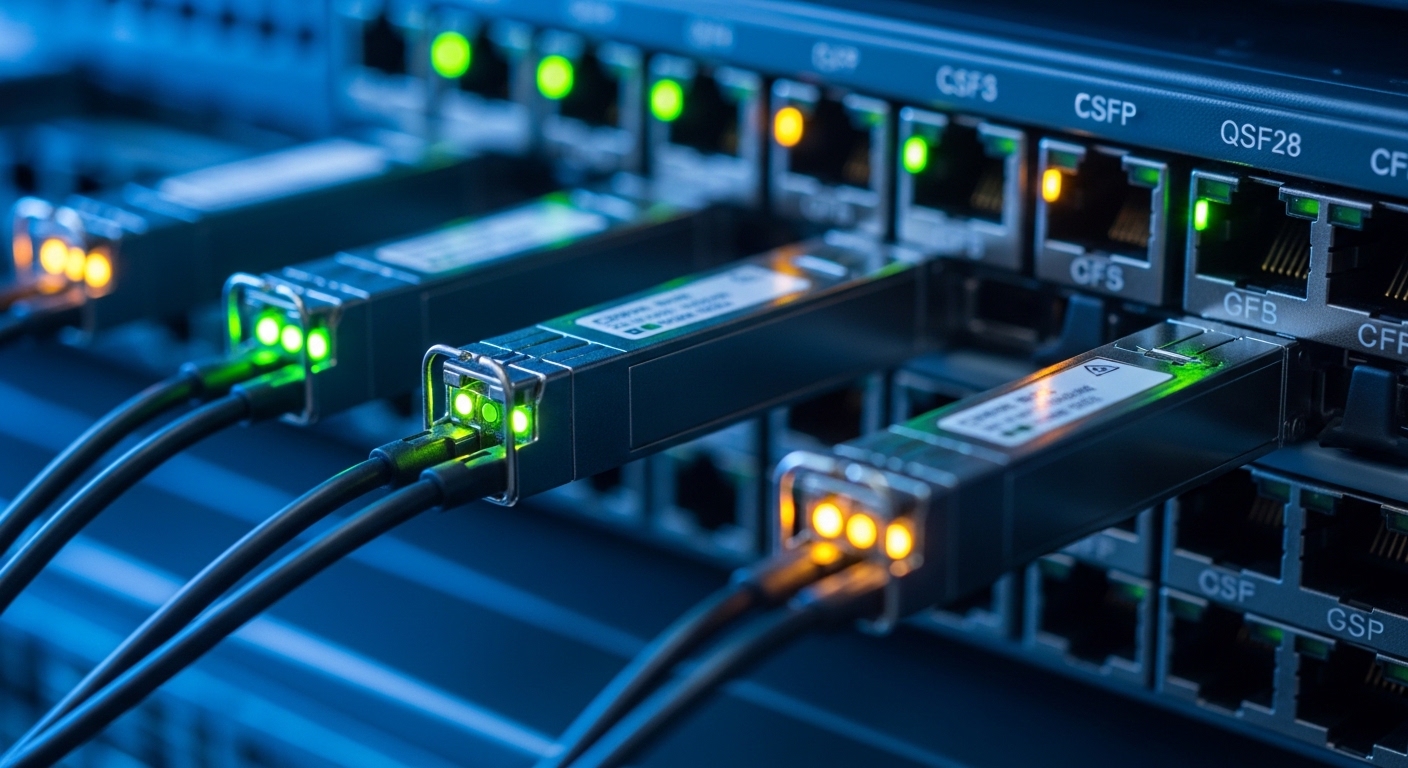

A 2025 refresh of a leaf-spine data center exposed familiar pain points once the team scaled fiber density: link failures, optical power drift, and incompatible transceivers. This article helps network engineers, field technicians, and procurement leads choose optical modules that will actually survive a high-speed rollout. You will get a real deployment case, the exact selection checklist, and troubleshooting patterns seen on live racks.

Problem and challenge: scaling optical modules without breaking links

In our case, the operations team planned to upgrade aggregation and ToR uplinks from 10G to 25G, while reserving a path to 100G at the spine tier. The environment used 48-port ToR switches and 100G spine uplinks, pushing module density to the point where airflow and thermal margins became a first-order constraint. During a pilot, two failure modes appeared quickly: intermittent link flaps in cold aisles and “no module detected” events after cable reseating.

These issues were not simply “bad optics.” They came from mismatches between transceiver type, platform firmware expectations, and operational limits such as DOM reporting, lane mapping, and temperature rise under high port utilization. Standards compliance helps but does not settle the question: IEEE 802.3 defines the Ethernet PHYs for 10G/25G/50G/100G, while optics qualification and digital diagnostics behavior remain vendor-specific. [Source: IEEE 802.3 Working Group]

Environment specs: what the optics had to survive

The target fabric was a 3-tier leaf-spine design: ToR access at the edge, aggregation in the middle, and spines in the core. Each ToR used optics for server downlinks and uplinks; each spine used high-density modules for east-west traffic. Operationally, the site ran at 22–27 C ambient in the bays, with measured switch-module cage temperatures peaking around 78 C near the densest airflow bottlenecks.

Fiber plant constraints were equally specific. Most links ran over OM4 multimode fiber (MMF), but some racks used OS2 single-mode fiber (SMF) for longer reach. Operations also required consistent diagnostics: engineers wanted DOM telemetry for Tx bias, Rx power, and temperature to drive alarms before failures. That requirement narrowed acceptable optical modules to those with reliable digital diagnostic interfaces (exposed over I2C, per SFF-8472 for SFP28 and SFF-8636 for QSFP28).

Reference module types and key specs

We evaluated two families: short-reach multimode for 25G and short/medium-reach options for 100G. The most common standards-based targets included 25G SFP28 for OM4 and 100G interfaces using QSFP28 (4x25G lanes) or equivalent 100G optics depending on switch port breakout behavior.

| Optical module type | Wavelength / lanes | Reach (typical) | Connector | DOM / diagnostics | Operating temperature (case) | Common form factor |
|---|---|---|---|---|---|---|
| 25G multimode “SR” | 850 nm VCSEL, 1 lane | ~100 m on OM4, ~70 m on OM3 (verify per SKU) | LC duplex | Yes (Tx/Rx power, temp) | ~0 to 70 C (check datasheet) | SFP28 |
| 100G multimode “SR4” | 850 nm VCSEL, 4 parallel lanes | ~100 m on OM4 | MPO-12 | Yes (DOM varies by SKU) | ~0 to 70 C (check datasheet) | QSFP28 |
| 100G single-mode “LR4” | ~1295 to 1310 nm LAN-WDM (4 wavelengths) | ~10 km on OS2 (depends on optic) | LC duplex | Yes (often required for alarms) | ~0 to 70 C (check datasheet) | QSFP28 |

As concrete reference points, teams often cite vendor part numbers such as Cisco SFP-10G-SR and Finisar FTLX8571D3BCL (both 10G SFP+ SR parts, relevant only to the legacy 10G links being replaced), alongside FS.com SR and LR equivalents in SFP28 and QSFP28 form factors. Always verify the exact SKU against your switch compatibility matrix and confirm its DOM behavior in the datasheet. [Source: Cisco SFP module datasheets; Source: Finisar (now Coherent) optical module datasheets; Source: FS.com product pages]

Chosen solution: a compatibility-first optical module mix

The final design used a compatibility-first approach, not a “one-size-fits-all” procurement strategy. For OM4, we standardized on 25G SFP28 SR optics for ToR-to-aggregation and on 100G QSFP28 SR4 optics for spine uplinks where MPO trunking and lane mapping were stable. For longer reach segments and inter-bay runs, we used 100G QSFP28 LR4 optics over OS2.

We explicitly avoided mixing optics vendors within a single switch model during the pilot window. That decision reduced variables when troubleshooting DOM telemetry differences and vendor-specific firmware quirks. We also required DOM telemetry to be present and readable before rollout, using a pre-check script that queried module vendor ID, serial number, and real-time Tx bias values at link-up.
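A pre-check of this kind can be sketched in Python as follows, assuming DOM values have already been read from the switch into a simple record. The `DomReading` fields, the `precheck` and `baseline` helpers, and every numeric limit in `LIMITS` are hypothetical placeholders for illustration; real thresholds belong in the module datasheet, and real field names depend on your switch CLI or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DomReading:
    """One DOM snapshot for a port (hypothetical field names; map them from
    whatever your switch CLI or API actually returns)."""
    port: str
    vendor_id: Optional[str]
    serial: Optional[str]
    temp_c: Optional[float]
    tx_bias_ma: Optional[float]
    tx_power_dbm: Optional[float]
    rx_power_dbm: Optional[float]


# Hypothetical acceptance windows (low, high); replace with datasheet limits.
LIMITS = {
    "temp_c": (0.0, 70.0),
    "tx_bias_ma": (2.0, 12.0),
    "tx_power_dbm": (-8.0, 3.0),
    "rx_power_dbm": (-10.0, 3.0),
}


def precheck(reading: DomReading) -> list[str]:
    """Return blocking problems for one port; an empty list means it passes."""
    problems = []
    if not reading.vendor_id or not reading.serial:
        problems.append("missing vendor ID or serial number")
    for field, (low, high) in LIMITS.items():
        value = getattr(reading, field)
        if value is None:
            problems.append(f"{field} not present")
        elif not low <= value <= high:
            problems.append(f"{field}={value} outside [{low}, {high}]")
    return problems


def baseline(readings: list[DomReading]) -> dict[str, dict]:
    """Record link-up Tx bias and Rx power for every port that passes."""
    return {
        r.port: {"tx_bias_ma": r.tx_bias_ma, "rx_power_dbm": r.rx_power_dbm}
        for r in readings
        if not precheck(r)
    }
```

Ports with a non-empty `precheck` result are held back from rollout; the `baseline` output gives the link-up reference values that later drift alarms can compare against.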

Pro Tip: In high-density deployments, “link comes up” is not the acceptance test. We found the earliest predictor of future flaps was a slow drift in module Tx bias versus temperature after the first 24 hours. Enforce alarms on abnormal Tx bias slope and Rx power margin, not just link state, to catch marginal optics before they fail.

Implementation steps that prevented the pilot failures

- Thermal qualification: before mass install, we measured cage temperatures and verified the optics stayed within their specified operating range under worst-case port utilization. For the densest blocks, we improved front-to-back airflow with baffles and confirmed airflow direction.

- DOM pre-check: we blocked deployment if Tx power, Rx power, or temperature sensors returned out-of-range or “not present.” We also recorded baseline Tx bias and Rx power at stable link-up.

- Fiber hygiene and polarity: we enforced connector inspection, cleaning, and polarity mapping for LC duplex and MPO trunks. We used a standard labeling convention per patch panel row to reduce swap events during maintenance.

- Switch compatibility validation: we confirmed each module SKU appeared in the switch vendor compatibility guidance. Where the switch supported “vendor-agnostic optics,” we still ran a constrained test matrix to ensure consistent lane mapping and breakout behavior.

- Versioned rollout: we rolled in batches by rack and port group, holding one known-good optics set as a rollback baseline.
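Polarity mapping for MPO trunks can be checked on paper before anything is patched. The Python sketch below traces the four SR4 transmit fibers through an ordered list of MPO-12 cable segments and reports whether each lands on a receive position. It assumes the commonly specified SR4 lane assignment (transmit on positions 1 to 4, receive on 9 to 12) and TIA-style Type A (straight), Type B (reversed), and Type C (pair-flipped) mappings; it ignores adapter keying, so treat it as a planning aid and confirm against your cabling vendor's polarity documentation.

```python
# 100GBASE-SR4 MPO-12 lane positions (assumption: transmit on positions 1-4,
# receive on 9-12, center four unused).
SR4_TX = (1, 2, 3, 4)
SR4_RX = {9, 10, 11, 12}


def far_end_position(pos: int, cable_type: str) -> int:
    """Map a near-end MPO-12 fiber position to the far end of one segment."""
    if cable_type == "A":   # straight-through: 1->1 ... 12->12
        return pos
    if cable_type == "B":   # reversed: 1->12, 2->11 ... 12->1
        return 13 - pos
    if cable_type == "C":   # pair-flipped: 1<->2, 3<->4 ... 11<->12
        return pos + 1 if pos % 2 else pos - 1
    raise ValueError(f"unknown MPO cable type {cable_type!r}")


def sr4_polarity_ok(segments: list[str]) -> bool:
    """True if every SR4 transmit fiber reaches a receive position after
    passing through the ordered cable segments, e.g. ["B"] or ["A", "B", "A"]."""
    for start in SR4_TX:
        end = start
        for cable_type in segments:
            end = far_end_position(end, cable_type)
        if end not in SR4_RX:
            return False
    return True
```

For example, a single Type B trunk passes, while a lone Type A trunk fails because each transmit fiber lands on a transmit position at the far end.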

Measured results: what improved after the change

After the pilot and the standardized rollout, the team measured operational stability over six weeks. The most visible improvement was a reduction in link flaps during peak utilization: intermittent disruptions dropped from ~2.4% of ports during the pilot to <0.2% after enforcing compatibility-first selection and DOM acceptance checks. “No module detected” events dropped from 18 incidents in the first month to 3 incidents during the same expansion window.
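Expressed as relative reductions, using only the figures above (the post-change flap rate is reported as an upper bound, so the first value is a lower bound, and the incident comparison assumes the two observation windows are comparable in length):

$$
\text{flap reduction} \ge \frac{2.4\% - 0.2\%}{2.4\%} \approx 91.7\%,
\qquad
\text{incident reduction} = \frac{18 - 3}{18} \approx 83.3\%
$$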

Power and cooling also improved indirectly. By correcting airflow constraints and reducing repeated reseating events, switch-module temperature excursions above 80 C became rare, and average cage temperature in the densest blocks stabilized within 2–3 C of the initial baseline. That mattered because many optical modules are specified for operation up to about 70 C case temperature in datasheets, and real cages can run hotter under poor airflow. [Source: Vendor optical module datasheets; Source: ANSI/TIA-942 for data center facility guidance]
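A simple headroom check makes that comparison explicit. The Python sketch below assumes you already have per-port cage temperature measurements and uses the roughly 70 C case rating cited above as a default; the `CageSample` record, the port labels, and the 5 C guard band are hypothetical, and measured cage temperature is only an approximation of the module's own case temperature.

```python
from dataclasses import dataclass


@dataclass
class CageSample:
    port: str            # hypothetical port label
    cage_temp_c: float   # measured at or near the module cage


def thermal_margin_report(samples, module_max_case_c=70.0, guard_band_c=5.0):
    """Return {port: headroom_c} for ports with less than `guard_band_c` of
    headroom below the module's rated maximum case temperature.

    Defaults are assumptions: use the datasheet limit for the exact SKU and a
    guard band your team agrees on.
    """
    flagged = {}
    for sample in samples:
        headroom = module_max_case_c - sample.cage_temp_c
        if headroom < guard_band_c:
            flagged[sample.port] = round(headroom, 1)
    return flagged
```

A port at the 78 C peak measured in the densest blocks would be reported with -8.0 C of headroom, which is exactly the kind of port that needs airflow work before it gets an optic.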

Lessons learned: constraints that do not show up on a spec sheet

First, high-density racks turn “ambient temperature” into a misleading metric; module-specific temperature rise and airflow mixing dominate. Second, DOM telemetry consistency across vendors is not guaranteed even when the optics advertise “digital diagnostics.” Third, fiber polarity and MPO keying practices are operational risk multipliers; a single swapped patch can look like an optics fault.

Common mistakes / troubleshooting for optical modules at scale

Below are failure modes we repeatedly saw during the rollout, with root causes and fixes.

- Mistake: Installing optics that are “electrically compatible” but not qualified by the switch vendor for that exact SKU.
  Root cause: Vendor-specific initialization sequences and DOM parsing differences can cause link instability or “module not supported” behavior.
  Solution: Validate against the switch compatibility guidance and run a limited test matrix before broad deployment.

- Mistake: Treating DOM as optional and relying only on link status.
  Root cause: Some optics report temperature or Tx bias with delayed updates, and marginal components can degrade well before the link starts to flap.
  Solution: Alert on Tx bias drift rate and Rx power margin thresholds after the first 24 hours, not just on link up/down.

- Mistake: Skipping fiber cleaning and polarity verification for MPO trunks and LC jumpers.
  Root cause: Contamination adds insertion loss and reflections that erode receive power margin, which can present as intermittent errors under higher traffic; polarity or keying mistakes usually stop the link from coming up at all.
  Solution: Use inspection tools, enforce cleaning SOPs, and verify polarity/keying per patch label before blaming the optics.

- Mistake: Assuming ambient temperature is sufficient for thermal qualification.
  Root cause: Module cage temperature depends on airflow, baffle placement, and adjacent port utilization; the densest blocks can exceed optic operating limits.
  Solution: Measure module cage temperatures (or use validated thermal modeling), adjust airflow with baffles, and confirm with field sensors.
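These failure modes suggest a rough order for first-line triage. The Python sketch below encodes one possible decision order from a single port's DOM readings; the `triage` function, its default thresholds, and the suggested actions are hypothetical, and the logic is a simplification that looks only at local DOM, not at the far-end module or interface error counters.

```python
def triage(detected, temp_c=None, tx_power_dbm=None, rx_power_dbm=None,
           max_temp_c=70.0, min_tx_dbm=-8.0, min_rx_dbm=-10.0):
    """Suggest where to look first for one dark or flapping port.

    Thresholds are hypothetical defaults; take real ones from the module
    datasheet. Only local DOM is considered.
    """
    if not detected:
        return "not detected: check SKU compatibility, seating/latch, firmware"
    if None in (temp_c, tx_power_dbm, rx_power_dbm):
        return "DOM incomplete: treat as unqualified, verify SKU and DOM support"
    if temp_c > max_temp_c:
        return "thermal: check airflow, baffles, and adjacent port load"
    if tx_power_dbm < min_tx_dbm:
        return "weak local Tx: suspect this module"
    if rx_power_dbm < min_rx_dbm:
        # Local transmitter looks healthy, so suspect the path or the far end.
        return "low Rx, healthy local Tx: clean/inspect fiber, check polarity, far-end Tx"
    return "optical levels nominal: check interface error counters and far-end module"
```

The ordering mirrors the advice above: rule out compatibility and thermal causes first, and look at fiber hygiene and polarity before swapping a module whose own transmitter reads healthy.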

Cost and ROI note: balancing OEM, third-party, and failure risk

Street pricing varies by region and volume, but OEM-branded optics typically carry a premium over third-party units. As a rough planning range, 25G SR SFP28 modules may land in the low-to-mid hundreds of USD per unit in moderate quantities, while 100G QSFP28 optics can cost several times that, depending on whether the link needs multimode SR4 (OM4) or a single-mode variant (OS2) and whether you buy OEM or compatible third-party modules. LR4 optics are usually the most expensive of the three, because they combine single-mode lasers on four wavelengths with WDM multiplexing and demultiplexing optics.

TCO is not just purchase price. If a third-party optic increases failure probability or causes more troubleshooting hours, the labor and downtime cost can outweigh savings. In our deployment, the ROI came from fewer field interventions and reduced escalation time: the team avoided repeated swap-and-retest cycles by enforcing compatibility and DOM acceptance checks up front.
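The trade-off can be made explicit with a very rough model. The Python sketch below adds the expected cost of handling failures to the purchase price; the `optic_tco` function and every input in the example calls (prices, failure rates, troubleshooting hours, labor and downtime costs) are hypothetical planning assumptions, not figures from this deployment.

```python
def optic_tco(unit_price, units, annual_failure_rate, years,
              hours_per_incident, labor_rate, downtime_cost_per_incident):
    """Rough TCO: purchase cost plus expected cost of handling failures.

    Every input is a planning assumption, not a measured value.
    """
    purchase = unit_price * units
    expected_incidents = units * annual_failure_rate * years
    cost_per_incident = hours_per_incident * labor_rate + downtime_cost_per_incident
    return purchase + expected_incidents * cost_per_incident


# Hypothetical comparison: same 100-module order, different price and risk profile.
oem = optic_tco(400, 100, 0.01, 3, 4, 100, 500)
third_party = optic_tco(150, 100, 0.05, 3, 8, 100, 500)
```

With these made-up inputs the third-party option still comes out ahead (about 34,500 versus 42,700); the value of the model is in plugging in your own failure and troubleshooting figures to see how quickly that gap closes.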

FAQ: selecting optical modules for high-density data centers

What optics reach should I plan for on OM4 multimode?

IEEE 802.3by specifies 25GBASE-SR for up to 100 m on OM4 (70 m on OM3), but the usable reach depends on your link attenuation, connector loss, and patch cord quality. Always calculate an optical link budget that includes worst-case patching, and verify vendor reach claims for your specific SKU. [Source: IEEE 802.3 and vendor datasheets]
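A basic loss-budget check can be sketched in Python as follows. The `mmf_link_margin_db` function is hypothetical, and all inputs (minimum launch power, receiver sensitivity, fiber attenuation, connector loss, penalties) are assumptions to be replaced with values from the module datasheet and your cabling specification.

```python
def mmf_link_margin_db(tx_min_dbm, rx_sens_dbm, length_m, atten_db_per_km,
                       connector_pairs, loss_per_pair_db, penalties_db=0.0):
    """Worst-case optical margin (dB) for a multimode link.

    Positive margin means the loss budget closes. All inputs are assumptions
    to replace with datasheet and cabling-spec values.
    """
    power_budget = tx_min_dbm - rx_sens_dbm
    fiber_loss = (length_m / 1000.0) * atten_db_per_km
    connector_loss = connector_pairs * loss_per_pair_db
    return power_budget - fiber_loss - connector_loss - penalties_db


# Illustrative inputs only: 90 m of OM4 at an assumed 3.0 dB/km,
# four connector pairs at 0.5 dB each, and 1.0 dB of assumed penalties.
margin = mmf_link_margin_db(-6.0, -10.3, 90, 3.0, 4, 0.5, 1.0)
```

With these illustrative numbers the margin is about 1.03 dB. A positive loss margin is necessary but not sufficient at 25G: modal dispersion also limits multimode reach, which is why the standard caps reach by fiber type regardless of remaining power margin.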

Do optical modules always include DOM diagnostics?

Most modern SFP28 and QSFP28 optics include digital optical monitoring, but not every compatible SKU behaves identically. Confirm that your switch can read DOM values reliably and that telemetry fields you plan to alarm on (Tx bias, temperature, Rx power) are present and stable. If DOM is critical, require it in the acceptance criteria.

What is the biggest cause of intermittent link flaps?

In dense racks, the most frequent causes are thermal stress and marginal optical power budgets, often amplified by fiber contamination or polarity mistakes. A common pattern is degradation after warm-up; that is why Tx bias drift and Rx power margin alerts are more effective than link-only monitoring.

Can I mix optical module vendors in the same switch?

You can sometimes, but it increases operational variability. For a high-density rollout, we recommend starting with one vendor family per switch model during the pilot and only broadening after you prove compatibility and telemetry consistency.

How do I avoid “no module detected” errors?

Verify the exact module SKU and compatibility guidance for your switch model, check DOM readability, and ensure correct seating and latch engagement. Also confirm that the module form factor matches the port type and that firmware supports that transceiver class.

What should procurement require in the purchase spec?

Require wavelength and reach class, connector type, DOM support, and an operating temperature statement aligned to your measured cage conditions. Add acceptance tests: DOM pre-check, link-up stability window, and a fiber inspection and polarity verification step before first traffic.
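Those purchase requirements can also be checked mechanically against vendor quotes. The Python sketch below compares a quoted module with a purchase spec and lists every gap; the `PurchaseSpec` and `Candidate` records, their fields, and the example SKU labels are hypothetical, and treating measured cage temperature as a stand-in for module case temperature is an approximation.

```python
from dataclasses import dataclass


@dataclass
class PurchaseSpec:
    form_factor: str         # e.g. "SFP28" or "QSFP28"
    wavelength_nm: int       # nominal center wavelength, e.g. 850
    min_reach_m: int         # longest planned link for this optic class
    connector: str           # e.g. "LC duplex" or "MPO-12"
    dom_required: bool
    max_cage_temp_c: float   # worst case measured in your own racks


@dataclass
class Candidate:
    sku: str                 # hypothetical SKU label from a vendor quote
    form_factor: str
    wavelength_nm: int
    reach_m: int
    connector: str
    has_dom: bool
    max_case_temp_c: float   # upper operating limit from the datasheet


def spec_gaps(spec: PurchaseSpec, c: Candidate) -> list[str]:
    """List every way a quoted module misses the purchase spec."""
    gaps = []
    if c.form_factor != spec.form_factor:
        gaps.append(f"form factor {c.form_factor} != {spec.form_factor}")
    if c.wavelength_nm != spec.wavelength_nm:
        gaps.append(f"wavelength {c.wavelength_nm} nm != {spec.wavelength_nm} nm")
    if c.reach_m < spec.min_reach_m:
        gaps.append(f"reach {c.reach_m} m < {spec.min_reach_m} m")
    if c.connector != spec.connector:
        gaps.append(f"connector {c.connector} != {spec.connector}")
    if spec.dom_required and not c.has_dom:
        gaps.append("no DOM support")
    if c.max_case_temp_c < spec.max_cage_temp_c:
        gaps.append(f"rated to {c.max_case_temp_c} C, racks measured {spec.max_cage_temp_c} C")
    return gaps
```

An empty list only means the quote matches the paper spec; the acceptance tests (DOM pre-check, stability window, inspection and polarity) still decide whether the module goes into production.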

As a next step, map your current fiber plant and switch port types to a rollout plan using an optical module compatibility matrix. That will help you turn “optics shopping” into a controlled deployment with measurable stability targets.

Author bio: I have led field deployments of high-speed optics in leaf-spine fabrics, including DOM-based monitoring and thermal qualification in live racks. I write implementation-focused guidance that reflects what fails in production and how to prevent it.