When AI applications stall, the root cause is often not the model, but the fiber link: link flaps, CRC errors, degraded optical power, or polarity mistakes. This article helps data center and network engineers troubleshoot fiber optic Ethernet links used in AI applications, with a head-to-head comparison of common transceiver options. You will get practical failure-mode diagnostics, selection criteria, and a decision matrix that ties technical risk to operational cost.

AI applications fiber links: what “healthy” actually looks like

In AI application networks, the traffic pattern is bursty and latency sensitive, so small physical-layer issues become visible quickly as retransmits and queue buildup. On 10G/25G/40G/100G Ethernet, you typically monitor link state stability, interface counters (FCS/CRC, symbol errors), and optical receive power against vendor thresholds. IEEE 802.3 defines the physical coding and link behavior, but the practical “health” window is defined by the transceiver datasheet and the switch vendor’s optics support matrix. [Source: IEEE 802.3]

For optics, “good” usually means the receiver is comfortably above its sensitivity floor while staying below maximum input power. In the field, engineers also correlate DOM readings (Tx bias, Tx power, Rx power) with intermittent failures during warm restarts or connector re-seating. If you see rising CRC or FCS errors without a corresponding optical drop, suspect lane mapping, channel swap, or marginal optics compatibility rather than pure light loss.
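The "comfortably above the floor, below the maximum" rule can be sketched as a small classifier. This is a minimal illustration, not a vendor tool: the `RxWindow` type, the 2 dB margin, and the example limits are all hypothetical placeholders, and real limits should come from the module datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RxWindow:
    """Receiver limits in dBm; take real values from the module datasheet."""
    sensitivity_dbm: float   # weakest signal the receiver is specified for
    overload_dbm: float      # strongest signal the receiver tolerates
    margin_db: float = 2.0   # hypothetical safety margin above the floor


def classify_rx_power(rx_dbm: float, window: RxWindow) -> str:
    """Place a DOM Rx power reading relative to the healthy window."""
    if rx_dbm > window.overload_dbm:
        return "overload"
    if rx_dbm < window.sensitivity_dbm:
        return "below_sensitivity"
    if rx_dbm < window.sensitivity_dbm + window.margin_db:
        return "marginal"
    return "healthy"


# Placeholder limits for illustration only, not taken from any datasheet.
example = RxWindow(sensitivity_dbm=-10.0, overload_dbm=0.5)
for reading in (-3.2, -9.1, -11.4, 1.0):
    print(reading, classify_rx_power(reading, example))
```

A "marginal" result is worth treating as an early warning: the link may be clean today and start erroring after the next reseat or temperature swing.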

Transceiver choice comparison for troubleshooting speed and failure risk

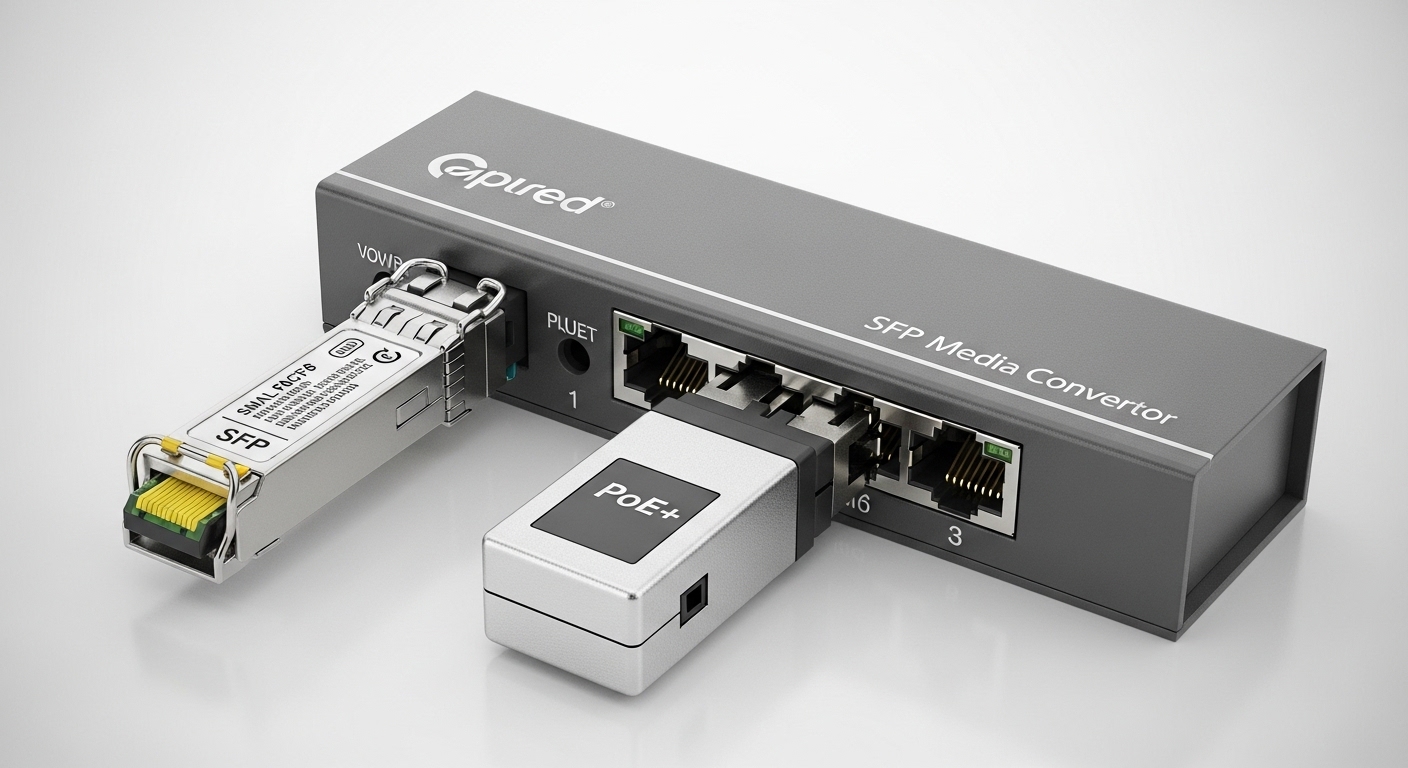

Choosing optics is not just about reach; it changes how quickly you can diagnose failures. In AI applications, you want predictable DOM support, stable compliance with the host switch’s electrical requirements, and consistent thermal behavior under high port density. Below is a practical comparison of widely deployed pluggables used for fiber runs in AI clusters, focusing on parameters that directly affect troubleshooting outcomes.

| Option (examples) | Typical data rate | Wavelength | Reach (typical) | Connector | DOM / diagnostics | Operating temp (typical) | Common troubleshooting angle |
|---|---|---|---|---|---|---|---|
| SFP+ 10G SR (e.g., Cisco SFP-10G-SR) | 10G | 850 nm | ~300 m over OM3 / ~400 m over OM4 | LC | Yes (per SFF-8472) | 0 to 70 C (varies by vendor) | Check fiber cleanliness and Rx power drift; watch for wrong fiber type |
| SFP28 25G SR (e.g., Cisco SFP-25G-SR-S) | 25G | 850 nm | ~70 m over OM3 / ~100 m over OM4 (varies) | LC | Yes (per SFF-8472) | -5 to 70 C (varies) | Bit-level errors; verify Tx/Rx polarity and connector seating |
| QSFP28 100G SR4 (e.g., Cisco QSFP-100G-SR4-S) | 100G | 850 nm (4 lanes) | ~70 m over OM3 / ~100 m over OM4 (varies) | MPO/MTP | Yes (per SFF-8636) | 0 to 70 C (varies) | Polarity and lane mapping are critical; inspect MPO endfaces |

Standards matter here: SFP and QSFP digital diagnostics are standardized in SFF documents (SFF-8472 for SFP-class modules, SFF-8636 for QSFP28), and most modern modules expose DOM data for Tx bias, Tx power, and Rx power. [Source: [SNIA SFF specifications](https://www.snia.org/technology/sff)] For host compatibility, the switch reads the module's identity fields and calibration parameters; mismatches can present as “unsupported optics” or intermittent link bring-up issues even when the light budget seems adequate.
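Most switch CLIs already convert DOM values, but when you pull raw words from a module it helps to know the scaling. The sketch below assumes an internally calibrated SFP-class module following SFF-8472 units (optical power in 0.1 µW steps, temperature in 1/256 °C steps, Tx bias in 2 µA steps). The function names are illustrative, and externally calibrated modules or other form factors need their own handling.

```python
import math


def power_raw_to_dbm(raw: int) -> float:
    """Convert a 16-bit optical power word (LSB = 0.1 uW) to dBm."""
    if raw <= 0:
        return float("-inf")  # no measurable light
    milliwatts = raw * 0.0001  # 0.1 uW equals 0.0001 mW
    return 10.0 * math.log10(milliwatts)


def temperature_raw_to_celsius(raw: int) -> float:
    """Convert a signed 16-bit temperature word (LSB = 1/256 C) to Celsius."""
    if raw >= 0x8000:
        raw -= 0x10000  # interpret the word as two's complement
    return raw / 256.0


def bias_raw_to_milliamps(raw: int) -> float:
    """Convert a 16-bit Tx bias word (LSB = 2 uA) to milliamps."""
    return raw * 0.002


# Example raw words chosen for illustration only.
print(round(power_raw_to_dbm(5000), 2), "dBm")
print(temperature_raw_to_celsius(0x1900), "C")
print(round(bias_raw_to_milliamps(3000), 3), "mA")
```

Working in dBm rather than microwatts makes it easier to compare readings against datasheet sensitivity and overload figures, which are normally quoted in dBm.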

Pro Tip: When troubleshooting AI applications links, don’t rely on “it links up.” Instead, capture a short time series of DOM Rx power and CRC/FCS counters during the same window. If CRC rises while Rx power stays flat and within range, the failure is more often a mapping or compatibility problem (lane order, polarity, or host support) than optical loss.
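One way to apply this tip is to sample both signals together and triage on how they move relative to each other. The following is a rough sketch under stated assumptions: the `Sample` type, the 1 dB swing threshold, and the result labels are hypothetical, and how you collect the samples (CLI scrape, SNMP, streaming telemetry) is left out.

```python
from typing import NamedTuple, Sequence


class Sample(NamedTuple):
    t_s: float       # seconds since the capture started
    rx_dbm: float    # DOM Rx power at that moment
    crc_total: int   # cumulative CRC/FCS error counter at that moment


def triage(samples: Sequence[Sample],
           rx_swing_db: float = 1.0,
           crc_delta_min: int = 1) -> str:
    """Suggest where to look first, based on one capture window."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    crc_delta = samples[-1].crc_total - samples[0].crc_total
    rx_values = [s.rx_dbm for s in samples]
    rx_swing = max(rx_values) - min(rx_values)
    if crc_delta < crc_delta_min:
        return "no_new_errors"
    if rx_swing >= rx_swing_db:
        return "suspect_optical_path"
    return "suspect_mapping_or_compatibility"


window = [
    Sample(0.0, -3.1, 120),
    Sample(10.0, -3.0, 180),
    Sample(20.0, -3.1, 260),
]
print(triage(window))
```

The result is only a starting hint. A flat but very low Rx reading still points at the optical path, so pair this with an absolute check against the module's sensitivity floor.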

Field workflow: isolate physical, optical, and logical causes

Use a repeatable workflow so you can separate physical-layer defects from configuration problems. In most AI application fabrics, you will have multiple identical ports, predictable cabling patterns, and access to switch telemetry. Start with optics and the fiber path, then confirm Ethernet negotiation and finally validate application impact.

Validate link state and error counters

On the switch, check for link down/up events, autoneg behavior (where applicable), and interface errors: CRC/FCS, symbol errors, and discarded packets. A stable link with rising CRC can indicate marginal optical power or a connector contamination issue. A link that flaps after a brief time often points to thermal stress, poor insertion, or marginal lane alignment in MPO assemblies.
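To make "flapping" and "rising CRC" measurable, you can reduce the raw event log and counter samples to two numbers. This sketch assumes you already have link events as `(timestamp_s, state)` pairs and cumulative counter samples as `(timestamp_s, count)` pairs; both input shapes and the function names are illustrative, not tied to any switch OS.

```python
from typing import List, Sequence, Tuple


def count_flaps(events: Sequence[Tuple[float, str]], window_s: float) -> int:
    """Count up-to-down transitions in the last `window_s` seconds.

    `events` must be sorted by time; each state is "up" or "down".
    """
    if not events:
        return 0
    cutoff = events[-1][0] - window_s
    flaps = 0
    previous = None
    for ts, state in events:
        if previous == "up" and state == "down" and ts >= cutoff:
            flaps += 1
        previous = state
    return flaps


def crc_rate_per_min(samples: Sequence[Tuple[float, int]]) -> List[float]:
    """Per-interval CRC rate from a cumulative counter.

    Intervals where the counter went backwards (cleared or reset)
    or where timestamps are out of order are skipped.
    """
    rates = []
    for (t0, c0), (t1, c1) in zip(samples, samples[1:]):
        if t1 <= t0 or c1 < c0:
            continue
        rates.append((c1 - c0) * 60.0 / (t1 - t0))
    return rates


events = [(0, "up"), (100, "down"), (105, "up"), (400, "down"), (402, "up")]
counters = [(0, 0), (60, 30), (120, 30), (180, 10), (240, 70)]
print(count_flaps(events, window_s=600))
print(crc_rate_per_min(counters))
```

A rate is more useful than a raw total because counters accumulate since the last clear; a port with a large but static total is healthier than one with a small but growing count.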

Compare DOM readings to module thresholds

Read Rx power, Tx power, and temperature from the DOM. If Rx power is below the vendor’s sensitivity or trending downward after reseating, treat it as an optical path issue: dirty endfaces, fiber microbends, or a damaged patch cord. If DOM shows “flat” optics but errors persist, shift focus to polarity, lane mapping, and switch port compatibility.
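"Trending downward" is easier to act on when expressed as a slope. Below is a minimal least-squares sketch over `(timestamp_s, rx_dbm)` samples; the -0.5 dB per hour limit is a hypothetical threshold chosen for illustration, not a vendor figure.

```python
from typing import Sequence, Tuple


def rx_slope_db_per_hour(samples: Sequence[Tuple[float, float]]) -> float:
    """Least-squares slope of Rx power (dBm) over time, in dB per hour."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_p = sum(p for _, p in samples) / n
    num = sum((t - mean_t) * (p - mean_p) for t, p in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("timestamps must not all be identical")
    return num / den * 3600.0


def is_degrading(samples: Sequence[Tuple[float, float]],
                 limit_db_per_hour: float = -0.5) -> bool:
    """True when Rx power is falling faster than the chosen limit."""
    return rx_slope_db_per_hour(samples) <= limit_db_per_hour


readings = [(0.0, -3.0), (1800.0, -3.5), (3600.0, -4.0)]
print(round(rx_slope_db_per_hour(readings), 3))
print(is_degrading(readings))
```

Fitting a line across several samples is less sensitive to a single noisy DOM reading than comparing only the first and last values.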

Inspect and clean connectors before swapping optics

Dust is the most common real-world cause of degraded receive power in high-density AI racks. Inspect the LC or MPO endfaces with a fiber scope; clean with lint-free wipes and approved cleaning tools, then re-check Rx power. If you must swap optics, do it after cleaning so you do not burn time comparing two “dirty-but-different” components.

Common mistakes and troubleshooting tips in AI application fiber links

Even experienced teams lose time when they skip the order of operations. Below are concrete failure modes seen in AI clusters and how to correct them.

- Mistake: Swapping transceivers without cleaning connectors

Root cause: contamination remains on the fiber endface, so the new optic inherits the same loss and errors.

Solution: inspect and clean first; then reseat and verify DOM Rx power before concluding that compatibility is the issue.

- Mistake: Ignoring MPO polarity and lane mapping on 100G SR4

Root cause: multi-lane optics require correct polarity mapping; a wrong polarity type usually prevents link, while a marginal or mismatched MPO assembly can pass link bring-up yet produce high CRC.

Solution: confirm the polarity method end to end (TIA-568 Type A/B/C, or a manufacturer-specific mapping) and re-terminate or use correct-polarity jumpers.

- Mistake: Using an optics type beyond the switch support list

Root cause: host compatibility checks may allow insertion but fail under load due to parameter mismatch or calibration differences.

Solution: use the switch vendor optics compatibility list; confirm DOM format and compliance with the relevant SFF specification.

- Mistake: Overlooking OM type and budget at short distances

Root cause: a link that exceeds the rated reach for its fiber type (for example, OM3 where OM4 was assumed) can come up and look clean at low utilization, then show errors under sustained traffic because connector loss has consumed the remaining margin.

Solution: verify fiber type and measure link attenuation; compare to module reach and your patch-cord budget.
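The budget comparison in the last item is simple arithmetic, sketched below. All numeric inputs are placeholders to be replaced with datasheet and measured values, and the function names are illustrative. The sketch covers only insertion loss; standards-based budgets also account for penalties such as dispersion, which are not modeled here.

```python
def link_loss_db(length_m: float,
                 fiber_db_per_km: float,
                 connector_pairs: int,
                 loss_per_pair_db: float) -> float:
    """Estimated insertion loss: fiber attenuation plus mated connector pairs."""
    fiber_loss = (length_m / 1000.0) * fiber_db_per_km
    connector_loss = connector_pairs * loss_per_pair_db
    return fiber_loss + connector_loss


def margin_db(tx_min_dbm: float,
              rx_sensitivity_dbm: float,
              loss_db: float) -> float:
    """Remaining margin: worst-case power budget minus estimated loss."""
    return (tx_min_dbm - rx_sensitivity_dbm) - loss_db


# Placeholder inputs for illustration; use datasheet and measured values.
loss = link_loss_db(length_m=90.0, fiber_db_per_km=3.0,
                    connector_pairs=4, loss_per_pair_db=0.5)
margin = margin_db(tx_min_dbm=-7.0, rx_sensitivity_dbm=-10.0, loss_db=loss)
print(f"estimated loss: {loss:.2f} dB, margin: {margin:.2f} dB")
```

Note how connector pairs dominate at short distances: in this example the fiber itself contributes well under 1 dB, while four mated pairs contribute 2 dB. That is why extra patch panels can break a link that is comfortably within rated reach.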

Cost and ROI: OEM vs third-party optics for AI applications

In AI applications, downtime is expensive because training and inference workloads are time-critical. OEM optics often cost more per module, but they tend to have predictable compatibility behavior with specific switch models and better documentation for DOM thresholds. Third-party optics can reduce upfront spend, but you may see higher iteration costs during troubleshooting if compatibility or DOM behavior differs.

Typical market pricing varies by speed and vendor, but a realistic engineering expectation is: 10G SR modules often land roughly in the low tens of USD, while 25G SR is commonly higher, and 100G SR4 can be substantially more. TCO should include expected failure rates, labor hours for cleaning and swaps, and the operational cost of link instability during peak training windows. If you can standardize on one optics family with known DOM behavior and compatibility, you reduce mean time to repair and avoid repeat incidents.
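The TCO factors above can be combined in a rough model. This is a sketch under loud assumptions: the `OpticsOption` fields, the cost formula, and every number in the example are hypothetical and exist only to show the shape of the comparison, not to represent real pricing or failure rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticsOption:
    unit_price_usd: float
    annual_failure_rate: float             # fraction of modules replaced per year
    labor_hours_per_incident: float        # cleaning, swapping, verification
    downtime_cost_per_incident_usd: float  # cost of link instability


def total_cost_usd(option: OpticsOption,
                   modules: int,
                   years: float,
                   labor_rate_usd_per_hour: float) -> float:
    """Purchase cost plus expected incident cost over the period."""
    purchase = option.unit_price_usd * modules
    incidents = option.annual_failure_rate * modules * years
    per_incident = (option.labor_hours_per_incident * labor_rate_usd_per_hour
                    + option.downtime_cost_per_incident_usd
                    + option.unit_price_usd)  # replacement module
    return purchase + incidents * per_incident


# Hypothetical inputs for illustration only.
option_a = OpticsOption(100.0, 0.02, 2.0, 500.0)
option_b = OpticsOption(40.0, 0.05, 3.0, 500.0)
for name, opt in (("A", option_a), ("B", option_b)):
    cost = total_cost_usd(opt, modules=100, years=3, labor_rate_usd_per_hour=80.0)
    print(name, round(cost, 2))
```

The useful part of a model like this is the sensitivity: if downtime cost per incident is high, small differences in failure rate or time to repair can outweigh a large difference in unit price.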

Selection checklist: decide fast, then troubleshoot smarter

Use this ordered checklist when selecting optics for AI applications so you minimize future link faults and speed up repair.

- Distance and fiber type: confirm OM3 vs OM4, patch cord length, and worst-case attenuation.

- Switch compatibility: choose optics from the host vendor optics support list; verify DOM and digital diagnostics.

- Connector and polarity requirements: LC for single-lane, MPO/MTP for multi-lane; ensure correct polarity jumpers.

- DOM support and thresholds: ensure Rx power and temperature telemetry are available and interpretable.

- Operating temperature and airflow: high-density AI racks can exceed spec if airflow is blocked; validate module temperature behavior.

- Standardization and vendor lock-in risk: standardize module families where possible to reduce troubleshooting variance.

Decision matrix: which option reduces your troubleshooting time

The matrix below helps you choose based on operational priorities common in AI application networks.

| Priority | Best fit | Why it helps troubleshooting | Watch-outs |
|---|---|---|---|
| Fast diagnosis with consistent telemetry | Modules with well-supported DOM on your switch platform | DOM makes Rx power and temperature trends visible during faults | Confirm DOM interpretation and thresholds per vendor docs |
| Lowest optical path sensitivity to contamination | Proper cleanliness process + correct connector type (LC vs MPO) | Less time wasted on “mystery” CRC increases | Still requires inspection and cleaning discipline |
| Compatibility confidence in multi-vendor environments | OEM or explicitly validated third-party optics | Fewer unsupported module edge cases under load | Higher unit cost; plan spares accordingly |
| Budget optimization with controlled risk | Third-party modules standardized by model and batch | Repeatable behavior reduces troubleshooting variance | Validate in a lab or with staged deployment |
| Highest throughput AI fabrics (100G+) | QSFP28 SR4 with correct MPO polarity management | Clear lane-level fault isolation when DOM and counters align | MPO polarity mistakes are the dominant failure mode |

Which Option Should You Choose?

If you are running AI applications on a 10G or 25G footprint and you need predictable operations, choose the optics family that your switch vendor explicitly supports, and standardize on one module model per port group. If you operate 100G SR4 in dense pods, prioritize correct MPO polarity procedures and modules with reliable DOM so you can pinpoint lane vs optical loss issues quickly. If budget pressure is high, third-party optics can work, but you should stage-roll them, monitor DOM and CRC trends for at least one full maintenance window, and keep a short rollback path to OEM optics.

Next step: review your current optics inventory and fiber patching documentation, then align it with the selection checklist above using fiber cleaning and MPO polarity best practices.

FAQ

Q: How do I tell if an AI applications fiber issue is optical loss or a polarity problem?

If Rx power is low or drifting after reseating, it is usually optical loss from contamination, microbends, or damaged patch cords. If Rx power is stable but CRC/FCS rises, suspect polarity, lane mapping, or switch-host optics compatibility.

Q: What DOM readings should I capture during troubleshooting?

Capture Rx power, Tx power, and module temperature, then correlate them with interface error counters over a tight time window. This prevents misattributing electrical mapping issues to optical budget problems.

Q: Can I use third-party optics in AI application networks?

Yes, but only if they are validated for your switch model and meet the optics support list requirements. Stage deployment and monitor link stability and error counters before scaling.