In enterprise IT, the jump to 400G is rarely just a purchase order; it is a choreography of switch ASIC headroom, optics compatibility, fiber plant limits, and change-control windows. This article helps network and finance leaders quantify the cost-benefit of a 400G migration with enough technical specificity to survive an audit. You will get a practical decision checklist, a deployment scenario with measured port counts, and troubleshooting patterns engineers actually see in the field.

Why 400G migration feels expensive in enterprise IT

At first glance, the bill looks like switch ports plus optics. In practice, 400G migration in enterprise IT often expands into adjacent spend: upgrade labor, spare inventory, fanout cabling and patching, and sometimes line card or fabric module refreshes. The hidden driver is capacity planning: many enterprises find that 100G-to-200G upgrades only delayed congestion, whereas 400G forces them to rework oversubscription ratios and traffic engineering.

From a standards perspective, 400G Ethernet is anchored in the IEEE 802.3 family (for example, 400GBASE-R and the related 400G PHY clauses first standardized in IEEE 802.3bs). Optics interoperability, by contrast, depends on each vendor's implementation of the module's electrical interface and its management interface, which for QSFP-DD and OSFP is typically CMIS over a two-wire I2C bus and includes digital diagnostics. For procurement, this matters because a “works on my bench” module can still fail in production under thermal or link margin constraints.

Cost model: what you actually pay for during a 400G cutover

To build a credible cost-benefit analysis, split expenses into three buckets: capital (CAPEX), operational (OPEX), and risk (downtime and rework). In enterprise IT programs, risk is not a line item on the invoice, but it shows up as additional labor, extended change windows, and emergency spares.

CAPEX components

- Switch and line card upgrades if the target 400G ports require specific hardware support.

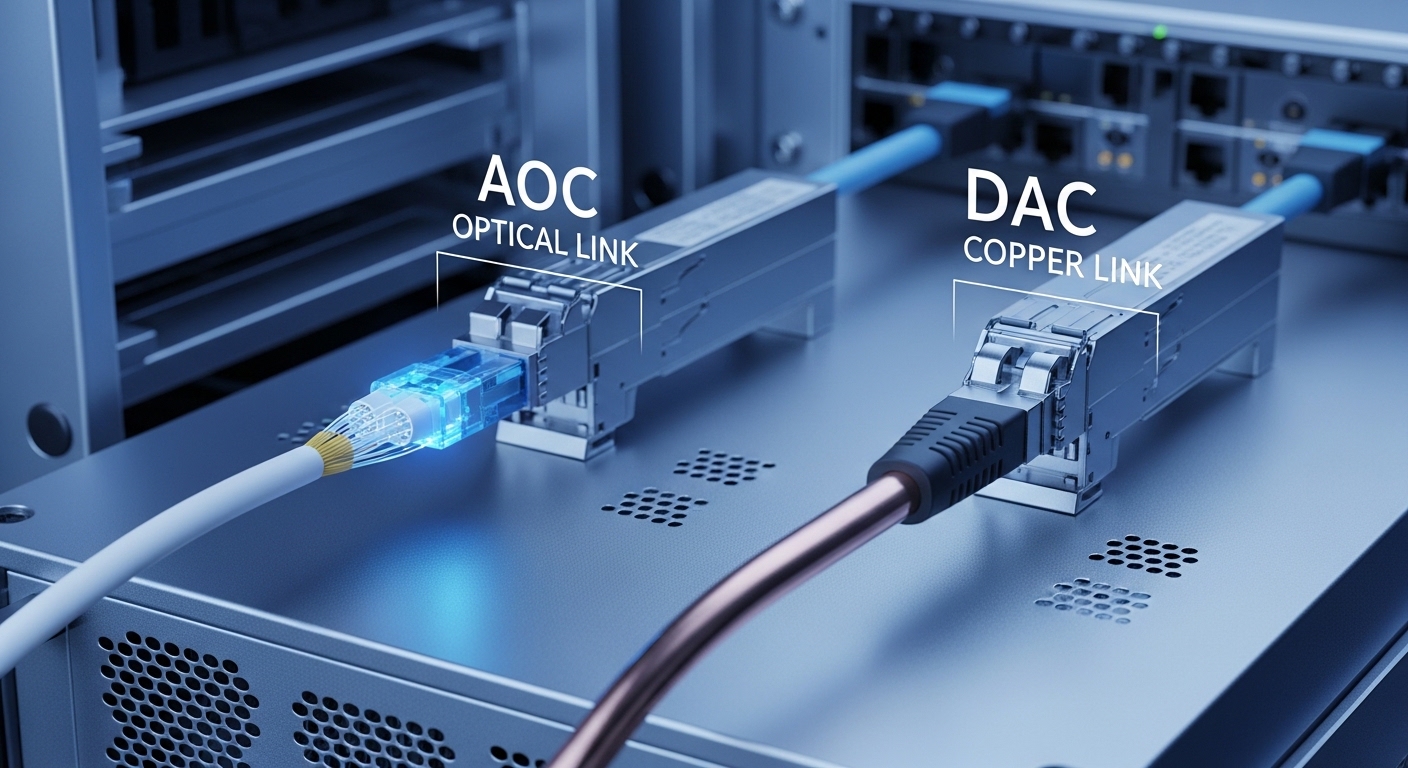

- Optics (QSFP-DD, OSFP, or vendor-specific form factors depending on platform).

- Breakout vs native decisions: some architectures prefer native 400G links; others break a 400G port out into lower-speed links (for example, 4x100G).

- Fiber plant readiness: patch panels, MPO/MTP harnesses, and cleaning tools.

OPEX components

- Installation labor: rack time, labeling, and verification runs.

- Monitoring and support: transceiver telemetry ingestion and alert tuning.

- Power and cooling deltas: higher-speed PHYs can shift thermal profiles.

Risk components

- Interoperability surprises between switch vendors and third-party optics.

- Link margin erosion from dirty connectors, aging fiber, or over-aggressive reach assumptions.

- Change-control overruns when rollback plans are not exercised.
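
The three buckets above can be combined into a single multi-year figure. The sketch below is one minimal way to do that, assuming risk is modeled as an expected value (events per year times cost per event); the class name, field names, and every number are hypothetical placeholders rather than market data.

```python
from dataclasses import dataclass


@dataclass
class MigrationCost:
    """Three-bucket cost model; every figure is supplied by the caller."""
    capex: float                 # switches, line cards, optics, fiber plant work
    opex_per_year: float         # labor, monitoring, power and cooling deltas
    risk_events_per_year: float  # expected flaps, rollbacks, window overruns
    cost_per_risk_event: float   # labor plus downtime cost of one such event

    def risk_per_year(self) -> float:
        # Expected-value treatment: frequency times impact.
        return self.risk_events_per_year * self.cost_per_risk_event

    def total(self, years: int) -> float:
        # CAPEX is paid once; OPEX and risk recur every year.
        return self.capex + years * (self.opex_per_year + self.risk_per_year())


# Placeholder inputs for illustration only.
plan = MigrationCost(capex=500_000.0, opex_per_year=40_000.0,
                     risk_events_per_year=2.0, cost_per_risk_event=15_000.0)
print(f"Risk per year: {plan.risk_per_year():,.0f}")
print(f"3-year total:  {plan.total(3):,.0f}")
```

Keeping risk as its own term makes it visible in the model even though it never appears on an invoice.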

Pro Tip: In field audits, the most common “mystery cost” is not the transceiver price; it is the time spent chasing intermittent link flaps caused by inadequate connector cleaning and inspection. If you standardize MPO/MTP cleaning (with inspection scopes) before the first 400G patch, you often prevent weeks of rework later.

Technical deep dive: optics reach, power, and interoperability

The 400G migration decision hinges on link type: short-reach for data center fabric, or longer-reach for campus and metro. In enterprise IT, the most common short-reach paths use multimode SR4-style optics (four parallel lanes over MPO/MTP), while longer-reach links use single-mode modules (DR4, FR4, or LR4 class) or, at metro distances, coherent optics. The operational truth is that reach is bounded not only by the module spec, but by the full link budget: fiber attenuation, connector loss, patch cord length, and polarity/mating discipline.
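
The link budget idea can be made concrete with a small calculation. This is a sketch under stated assumptions: the attenuation figure (3.0 dB/km), the per-connector loss (0.5 dB per mated pair), and the 1.9 dB channel budget are illustrative placeholders, and the real values should come from the module datasheet and measured plant data.

```python
def link_loss_db(fiber_m: float, atten_db_per_km: float,
                 mated_pairs: int, loss_per_pair_db: float) -> float:
    """Passive channel loss: fiber attenuation plus connector losses."""
    return (fiber_m / 1000.0) * atten_db_per_km + mated_pairs * loss_per_pair_db


def link_margin_db(budget_db: float, loss_db: float) -> float:
    """Positive margin means the channel fits inside the module's budget."""
    return budget_db - loss_db


BUDGET_DB = 1.9  # assumed channel insertion loss budget; check the datasheet

# Same 90 m run, first with two mated connector pairs, then with four.
two_pairs = link_loss_db(fiber_m=90, atten_db_per_km=3.0,
                         mated_pairs=2, loss_per_pair_db=0.5)
four_pairs = link_loss_db(fiber_m=90, atten_db_per_km=3.0,
                          mated_pairs=4, loss_per_pair_db=0.5)

for label, loss in (("2 mated pairs", two_pairs), ("4 mated pairs", four_pairs)):
    print(f"{label}: loss {loss:.2f} dB, margin {link_margin_db(BUDGET_DB, loss):+.2f} dB")
```

With these placeholder numbers the extra patch points, not the fiber length, push the channel over budget, which is why connector count belongs in the reach discussion.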

Below is a simplified comparison of representative modules that teams often evaluate during 400G migrations. Exact compatibility still depends on the specific switch model and its transceiver support list, but these specs illustrate the trade space.

| Module example | Form factor | Wavelength | Target reach | Data rate / lanes | Connector | Typical diagnostics | Operating temperature |
|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR (legacy 10G reference) | SFP+ | 850 nm | ~300 m on OM3 (varies by fiber grade) | 10G (single lane) | LC | DOM / digital monitoring | Commercial |
| Finisar FTLX8571D3BCL (legacy 10G SR reference) | SFP+ | 850 nm | ~300 m on OM3 (varies by fiber grade) | 10G (single lane) | LC | DOM via I2C | Commercial |
| FS.com SFP-10GSR-85 (legacy 10G SR reference) | SFP+ | 850 nm | ~300 m on OM3 (varies by fiber grade) | 10G (single lane) | LC | DOM via I2C | Commercial |
| Vendor 400G SR4 example (part numbers vary by vendor) | QSFP-DD or OSFP | 850 nm | ~100 m on OM4 (typical) | 400G aggregate (4 lanes) | MPO/MTP | DOM via I2C | Commercial/industrial variants |
| Vendor 400G FR4/LR4 example (varies by vendor) | QSFP-DD or OSFP | ~1310 nm band | ~2 km (FR4) up to ~10 km (LR4) | 400G aggregate (4 lanes) | Duplex LC | DOM via I2C | Commercial/industrial variants |

Note the table’s intent: it frames the engineering variables (wavelength, connector type, reach class, and diagnostics) without pretending that every module is drop-in identical. For enterprise IT, always verify the switch’s transceiver compatibility matrix and confirm the module supports the DOM telemetry fields your network controller expects (temperature, voltage, bias current, received optical power, and alarm thresholds).
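
A DOM acceptance check along these lines can be scripted. The sketch below assumes readings arrive as a plain dictionary; the key names and the threshold values are hypothetical, and real thresholds should be read from the module or its datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    low: float
    high: float


# Fields named in the text; the key names themselves are hypothetical.
REQUIRED_FIELDS = ("temperature_c", "voltage_v", "bias_ma", "rx_power_dbm")

# Placeholder alarm thresholds for illustration only.
THRESHOLDS = {
    "temperature_c": Threshold(0.0, 70.0),
    "voltage_v": Threshold(3.1, 3.5),
    "bias_ma": Threshold(2.0, 12.0),
    "rx_power_dbm": Threshold(-8.0, 4.0),
}


def check_dom(reading, thresholds=THRESHOLDS):
    """Return a list of problems; an empty list means the reading passes."""
    problems = []
    for name in REQUIRED_FIELDS:
        value = reading.get(name)
        if value is None:
            # A missing field is a management blind spot, not a pass.
            problems.append(f"{name}: missing")
            continue
        limit = thresholds[name]
        if not limit.low <= value <= limit.high:
            problems.append(f"{name}: {value} outside [{limit.low}, {limit.high}]")
    return problems


healthy = {"temperature_c": 45.0, "voltage_v": 3.3, "bias_ma": 7.0, "rx_power_dbm": -2.5}
blind = {"temperature_c": 45.0, "voltage_v": 3.3, "bias_ma": 7.0}  # rx power not reported
print(check_dom(healthy))
print(check_dom(blind))
```

Treating a missing field as a failure is a deliberate choice here: a module that links up but does not report received power would otherwise pass unnoticed.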

Real-world deployment scenario: 400G in a leaf-spine enterprise IT fabric

Consider a two-tier leaf-spine data center topology inside a mid-sized enterprise, with 48-port 10G ToR switches at the access layer and spine pairs providing east-west aggregation. The team plans to migrate a subset of leaf uplinks to 400G to relieve congestion during peak backup windows and AI training bursts.

They target 24 leaf switches, each with 2 uplinks upgraded from 100G to 400G. That is 48 links, or 96 upgraded ports in total once both the leaf and spine ends are counted. The optics plan selects short-reach 400G SR4 modules for ≤100 m paths across the row, using pre-terminated MPO/MTP harnesses and verified polarity. During cutover, the engineer runs a staged validation: optics insertion with DOM checks, link training, and then a traffic soak test with packet-per-second rates matching the measured peak baseline.
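
The port arithmetic for this scenario is easy to check in a few lines. The sketch assumes every upgraded uplink terminates on a spine port that is upgraded too, and, for the oversubscription ratio only, that the two upgraded uplinks are the leaf's only uplinks; neither assumption is stated in the scenario.

```python
LEAVES = 24
UPLINKS_PER_LEAF = 2

links = LEAVES * UPLINKS_PER_LEAF  # one link per upgraded leaf uplink
ports = links * 2                  # each link has a leaf end and a spine end


def oversubscription(down_ports: int, down_gbps: int,
                     up_ports: int, up_gbps: int) -> float:
    """Ratio of access-facing capacity to uplink capacity on one leaf."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


# 48 x 10G access ports per ToR, as in the scenario.
before = oversubscription(48, 10, UPLINKS_PER_LEAF, 100)
after = oversubscription(48, 10, UPLINKS_PER_LEAF, 400)

print(f"links={links}, ports={ports}")
print(f"oversubscription before={before:.1f}:1, after={after:.1f}:1")
```

If the leaves carry additional uplinks that stay at 100G, the real ratios will differ, so the inputs should be taken from the actual port inventory.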

In practice, the first week focuses on operational stability: monitoring for link flaps, checking received optical power drift, and confirming that the network controller correctly reads alarms from the transceivers. Only after the telemetry pipeline is stable do they expand the rollout to the next batch of leaves, reducing the odds that a single incompatible module batch triggers broad rollback.
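
One way to turn the first-week watch list into a repeatable check is to compare recent received-power samples against the post-install baseline. This is a minimal sketch: the function names, the 1.0 dB drift limit, and the zero-flap limit are assumptions, and averaging dBm values directly is a simplification that is adequate only for small drifts.

```python
from statistics import mean


def rx_power_drift_db(baseline_dbm, recent_dbm):
    """Difference between recent and baseline average received power."""
    return mean(recent_dbm) - mean(baseline_dbm)


def needs_attention(drift_db, flaps, max_drift_db=1.0, max_flaps=0):
    """Flag a link whose power moved too far or that flapped at all."""
    return abs(drift_db) > max_drift_db or flaps > max_flaps


# Placeholder samples: baseline taken right after install, recent from day 5.
baseline = [-2.0, -2.0, -2.0]
recent = [-3.5, -3.5, -3.5]

drift = rx_power_drift_db(baseline, recent)
print(f"drift {drift:+.1f} dB, attention needed: {needs_attention(drift, flaps=0)}")
```

Gating the next batch of leaves on this kind of check keeps the rollout decision tied to telemetry rather than to elapsed time.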

Selection criteria: the ordered checklist engineers use

When enterprise IT teams choose 400G optics and migration paths, they tend to follow an instinctive checklist that can be formalized. Use this ordered set to reduce surprises and procurement churn.

- Distance and fiber type: confirm OM4/OM5 grading, patch cord lengths, connector counts, and end-to-end loss assumptions.

- Switch compatibility: consult the platform’s transceiver support list and confirm the required form factor (QSFP-DD vs OSFP) and lane mapping.

- DOM and management integration: verify that the module’s digital diagnostics (read over the module management interface, typically CMIS on I2C for QSFP-DD and OSFP) populate the telemetry fields your monitoring expects.

- Operating temperature: match module temperature class to the actual environment near the line card; do not assume “data center typical” equals safe.

- Budget and TCO: compare OEM and third-party total cost, including failure rates, warranty terms, and downtime cost.

- Vendor lock-in risk: assess whether future migrations will require the same vendor optics ecosystem.
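
Because the checklist is ordered, it can be formalized as a gate that stops at the first unmet criterion. The sketch below is one hypothetical encoding; the item keys are invented labels for the six criteria above, and how each criterion is actually assessed is left to the team.

```python
from typing import Optional

# Same order as the checklist in the text; key names are hypothetical.
CHECKLIST = (
    "distance_and_fiber_type",
    "switch_compatibility",
    "dom_integration",
    "operating_temperature",
    "budget_and_tco",
    "vendor_lock_in",
)


def first_blocker(results: dict) -> Optional[str]:
    """Return the first criterion that is failed or not yet assessed."""
    for item in CHECKLIST:
        if not results.get(item, False):
            return item
    return None


# Example: fiber and compatibility are confirmed, DOM integration is not.
candidate = {"distance_and_fiber_type": True, "switch_compatibility": True}
print(first_blocker(candidate))
```

Treating an unassessed item as a blocker keeps later criteria, such as price, from being evaluated before the physical and compatibility questions are settled.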

Common pitfalls and troubleshooting tips during 400G migration

Even disciplined teams encounter failure modes that look mysterious until you trace them to physics and process. Below are common patterns, with root causes and fixes that field engineers can execute quickly.

Link flaps after optics insertion

Root cause: dirty MPO/MTP end faces or mis-seated ferrules causing intermittent reflections and unstable received power. Solution: inspect with a fiber microscope, clean with validated procedures, re-seat each connector until it latches positively, then re-check received optical power against the DOM thresholds.

“Incompatible transceiver” alarms despite matching speed

Root cause: switch-specific firmware expectations for lane mapping, vendor-defined EEPROM fields, or unsupported form factor variants. Solution: verify the exact transceiver part number against the switch’s compatibility matrix; if using third-party optics, purchase from a vendor that provides tested interoperability documentation for your exact switch model.

Persistent CRC errors and rising BER under load

Root cause: marginal optical budget from excessive patch cord length, too many connectors, or wrong fiber grade assumptions (for example, OM3 treated as OM4). Solution: measure and document end-to-end loss with a test kit, reduce patch length, simplify the harness path, or choose a higher-reach module class within the platform’s constraints.
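
To tell a marginal link from a one-off burst, it helps to track the error ratio between two counter snapshots instead of the raw error count. The sketch below assumes the counters are exported as simple dictionaries with the hypothetical keys `rx_frames` and `rx_crc_errors`; real counter names depend on the platform.

```python
def crc_error_ratio(prev: dict, curr: dict) -> float:
    """CRC errors per received frame between two counter snapshots."""
    frames = curr["rx_frames"] - prev["rx_frames"]
    errors = curr["rx_crc_errors"] - prev["rx_crc_errors"]
    if frames <= 0:
        # No traffic (or a counter reset): nothing meaningful to report.
        return 0.0
    return errors / frames


# Placeholder snapshots taken before and after a soak interval.
before_soak = {"rx_frames": 1_000_000, "rx_crc_errors": 10}
after_soak = {"rx_frames": 3_000_000, "rx_crc_errors": 30}

print(f"CRC error ratio: {crc_error_ratio(before_soak, after_soak):.1e}")
```

A ratio that rises with offered load, while received optical power sits near the low threshold, points toward the optical budget rather than the switch.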

Thermal throttling or higher-than-expected DOM temperature

Root cause: airflow obstruction, blocked intake vents, or modules operating near their temperature ceiling. Solution: validate airflow paths, confirm fan profiles, and ensure correct cable management around line cards; then compare DOM temperature to the module’s stated operating range.

Cost and ROI note: pricing reality and lifecycle math

In enterprise IT, 400G optics pricing varies widely by reach class and vendor. As a pragmatic range, short-reach 400G SR4 modules often land in the hundreds of dollars per module at volume, while longer-reach options can be significantly higher, especially when coherent or specialized optics are involved. OEM modules may cost more upfront, but they can reduce compatibility risk and warranty friction; third-party optics can be cost-effective if interoperability is proven and DOM behavior is verified.

For ROI, model not just bandwidth gained, but risk reduced: fewer congestion incidents, faster time-to-recovery during peak traffic, and lower probability of emergency rollbacks. A credible TCO model includes labor hours for installation and verification, spares inventory holding cost, and the expected failure rate under your temperature and cleaning practices. If your change windows are expensive, the “ROI” can come from fewer incidents rather than pure hardware savings.
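
The incident-driven view of ROI can be expressed as a simple payback calculation. This is a sketch with hypothetical inputs; the migration cost, the number of incidents avoided, and the cost per incident are placeholders to be replaced with figures from your own change and incident records.

```python
def annual_benefit(incidents_avoided_per_year: float, cost_per_incident: float) -> float:
    """Yearly value of congestion incidents and rollbacks that no longer occur."""
    return incidents_avoided_per_year * cost_per_incident


def payback_years(migration_cost: float, benefit_per_year: float) -> float:
    """Years until avoided-incident savings cover the migration cost."""
    if benefit_per_year <= 0:
        return float("inf")  # no measurable benefit means no payback
    return migration_cost / benefit_per_year


# Placeholder figures for illustration only.
benefit = annual_benefit(incidents_avoided_per_year=6, cost_per_incident=50_000.0)
years = payback_years(migration_cost=600_000.0, benefit_per_year=benefit)
print(f"annual benefit {benefit:,.0f}, payback {years:.1f} years")
```

The result is only as good as the incident cost estimate, so it is worth agreeing on that figure with finance before presenting the payback period.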

FAQ

How do I estimate enterprise IT bandwidth savings from 400G?

Start with measured utilization curves (peak and sustained), then quantify the congestion window duration before and after migration. If your oversubscription model changes, validate it with traffic engineering counters and queue depth telemetry, not just link speed.
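
Congestion window duration can be estimated directly from utilization samples. The sketch below assumes 5-minute samples, an 80% utilization threshold, and an unchanged offered load after migration; all three are assumptions, and the last one is optimistic because traffic often grows to fill new capacity.

```python
def congested_minutes(utilization, threshold=0.8, interval_min=5):
    """Total minutes in which link utilization met or exceeded the threshold."""
    return sum(interval_min for u in utilization if u >= threshold)


# Placeholder utilization samples on a 100G uplink during a backup window.
before_100g = [0.50, 0.85, 0.95, 0.90, 0.60]

# Same offered load carried on a 400G link: utilization scales by 100/400.
after_400g = [u * 100 / 400 for u in before_100g]

print(f"before: {congested_minutes(before_100g)} min congested")
print(f"after:  {congested_minutes(after_400g)} min congested")
```

Comparing the two durations gives a before-and-after figure that can be validated later against queue depth telemetry.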

Are third-party 400G optics safe for enterprise IT?

They can be, but only when tested against your exact switch model and firmware. Verify DOM telemetry compatibility, alarms, and transceiver support lists; otherwise you may get management blind spots or intermittent link issues.

What fiber reach class should I plan for on 400G SR links?

Use the strictest interpretation of your fiber type, connector count, and patch cord lengths. If you assume OM4 but parts of the plant are OM3 or have degraded connectors, your link margin can disappear, and the shortfall typically surfaces as errors once the link carries real load.

What is the fastest way to reduce 400G troubleshooting time?

Standardize MPO/MTP cleaning and inspection before the first cutover, and pre-validate optics with a controlled acceptance test. Then build a telemetry baseline: received optical power, temperature, and error counters immediately after installation.

Should I use native 400G links or breakout designs?

It depends on your forwarding and traffic engineering model. Native 400G can reduce port count and simplify cabling, while breakout may map better to existing 100G policies, at the cost of additional complexity in lane handling.

How do I present the 400G business case to finance?

Translate migration into measurable outcomes: reduced congestion incidents, shorter change rollback cycles, and predictable capacity growth. Pair that with a TCO table that includes labor, spares, and risk mitigation, not just optics line items.

400G migration in enterprise IT succeeds when the math matches the physics: link budgets, thermal realities, and interoperability constraints all feed the cost-benefit outcome. If you want a companion view on planning the next step after optics selection, see enterprise IT network capacity planning for capacity modeling tactics that keep migration decisions grounded.

Author bio: I have deployed Ethernet trans