You are planning a data center upgrade and the optics aisle suddenly looks like a maze: 400G modules promise headroom, while 800G can collapse port counts but demands tighter power, optics, and firmware alignment. This article helps network engineers and field technicians choose between 400G and 800G transceivers for a migration with defined, measurable constraints. You will get a step-by-step implementation path, a spec comparison table, and a troubleshooting checklist grounded in real deployments.

Prerequisites: define the migration constraints before you touch optics

Start by treating transceiver choice as a system decision: switching ASIC behavior, optics compliance, fiber plant quality, and operating temperature margins all interact. Vendors may label modules “compatible,” yet mismatched firmware, DOM formats, or power class can still cause flaps during bring-up.

Step-by-step implementation prerequisites

- Inventory ports and uplink topology. Record current port speed, breakout mode, and oversubscription ratio. For example: leaf-spine with 48x400G uplinks per leaf, or 96x200G-to-spine depending on stage. Capture switch model numbers and transceiver cages (QSFP-DD, OSFP, or similar).

Expected outcome: A port map that tells you exactly where 400G or 800G optics will land.

- Measure fiber plant health. Pull OTDR reports and record end-to-end link loss, PMD, and connector inspection results. For short-reach migration, also confirm patch panel cleanliness and polarity labeling. Establish the worst-case link loss budget before ordering optics.

Expected outcome: A distance and loss envelope you can compare to vendor link specs.

-

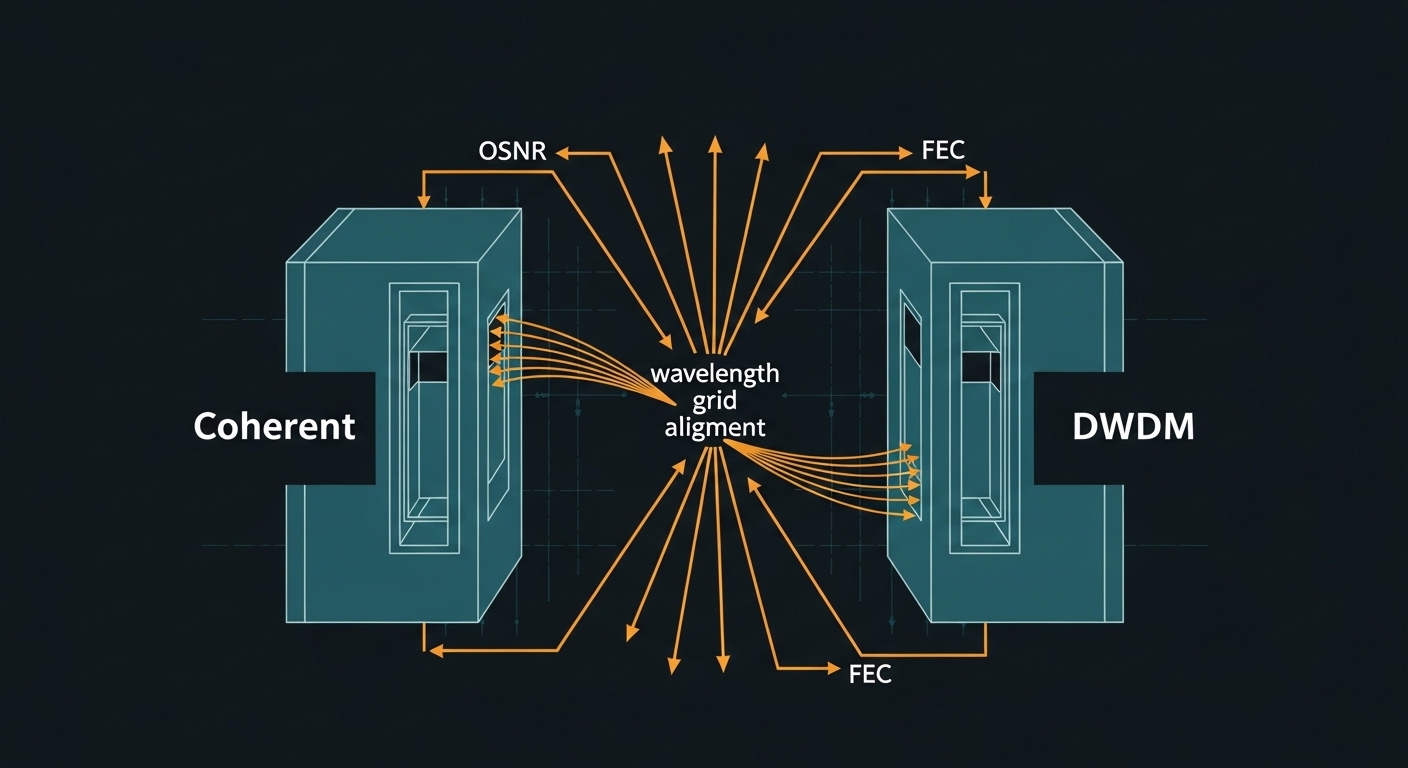

Confirm standards and electrical interface expectations. Verify the switch supports the module type and signaling (for example, 400G/800G Ethernet over coherent or direct-detect depending on your architecture). Align with IEEE 802.3 clauses for the relevant PHY speeds and vendor implementation notes. Use vendor datasheets for the exact optics form factor and DOM behavior.

Expected outcome: No surprises at link training time.

- Plan power and thermal budgets. In dense racks, airflow paths matter. Confirm transceiver power draw and the switch’s max ambient operating range, then compare with your row-level cooling profile.

Expected outcome: Reduced risk of thermal throttling during peak traffic.
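The power and thermal step above reduces to simple margin arithmetic. The following is a minimal sketch, not a vendor tool: `OpticsPlan`, its field names, and the idea of a per-switch optics power allowance are assumptions made for illustration, and every number must come from your own switch and module datasheets.

```python
from dataclasses import dataclass


@dataclass
class OpticsPlan:
    """Hypothetical planning record; all values come from your datasheets."""
    module_count: int            # populated cages on one switch
    watts_per_module: float      # worst-case module power from the datasheet
    optics_budget_watts: float   # power the platform allots to optics (assumed known)


def optics_power_margin(plan: OpticsPlan) -> float:
    """Return remaining optics power headroom in watts (negative means over budget)."""
    return plan.optics_budget_watts - plan.module_count * plan.watts_per_module


def ambient_margin_c(max_ambient_c: float, measured_inlet_c: float) -> float:
    """Return degrees C between the measured inlet temperature and the platform maximum."""
    return max_ambient_c - measured_inlet_c
```

A negative result from `optics_power_margin`, or a small result from `ambient_margin_c` at peak load, is a signal to revisit the rack layout before ordering optics.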

400G vs 800G: how the practical differences show up in the field

On paper, 800G can cut the number of optics by half for the same aggregate bandwidth. In practice, you trade that for stricter power/thermal headroom, higher sensitivity to module firmware and switch compatibility, and sometimes more demanding optical budgets depending on whether the link is direct-detect or coherent. The goal is to choose the module speed that matches your migration stage without creating an operational tax.
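The "half the optics" claim is easy to check for a given aggregate bandwidth. This is a small sketch; the function name `modules_needed` and the 12.8 Tbps figure are illustrative assumptions, not values from a specific platform.

```python
import math


def modules_needed(aggregate_gbps: float, module_gbps: int) -> int:
    """Return the number of modules needed at one end to carry the aggregate bandwidth."""
    return math.ceil(aggregate_gbps / module_gbps)


# Illustrative aggregate of 12.8 Tbps of uplink capacity on one switch.
print(modules_needed(12_800, 400))  # 32
print(modules_needed(12_800, 800))  # 16
```

Each link needs a module at both ends, so the fleet-wide count is double these figures in either case. The halving only holds when the aggregate divides evenly into 800G ports.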

Key spec comparison you should actually use

Use this table as a starting point; always confirm exact reach and power class in the specific vendor datasheet. Many 400G and 800G options exist across different form factors and PHY types.

| Parameter | Typical 400G Module | Typical 800G Module |
|---|---|---|
| Data rate | 400 Gbps (e.g., 4x100G lanes or equivalent) | 800 Gbps (e.g., 8x100G lanes or equivalent) |
| Form factor | QSFP-DD / OSFP variants depending on vendor | OSFP / QSFP-DD high-density variants (platform-specific) |
| Wavelength | Depends on PHY: SR/DR variants or coherent bands | Depends on PHY: SR/DR variants or coherent bands |
| Reach (example ranges) | Short reach often tens of meters; longer reach varies by type | Short reach often tens of meters; longer reach varies by type |
| Connector | Typically MPO for parallel SR/DR optics and duplex LC for FR/LR types; confirm for coherent | Typically MPO for parallel SR/DR optics and LC-type for FR/LR types; confirm for coherent |
| Power (order-of-magnitude) | Often ~6–15 W for common direct-detect classes | Often ~10–25 W depending on PHY and vendor |
| Operating temperature | Commercial and industrial grades exist; confirm exact range | Confirm grade; dense racks need margin vs max ambient |
| DOM / monitoring | Commonly supports digital monitoring; DOM behavior varies | Same principle, but firmware parsing can differ by platform |

Pro Tip: In migrations, the biggest “gotcha” is often not reach but DOM interpretation and link training behavior. Even when a module is electrically compatible, a switch may reject optics if vendor-specific DOM fields or power class identifiers do not match the expected profile. Validate with a single test port during a change window before rolling across all uplinks.

Implementation guide: choose 400G or 800G in a staged data center upgrade

Your decision should follow a migration rhythm: validate the platform with one optics type, measure optics error counters, then scale. This reduces the blast radius when something subtle breaks, like firmware version gating or a thermal edge.

Map your migration stage to the right speed

- If you need fast rollout with minimal change risk, start with 400G. Use 400G where your switch platform and optics ecosystem are already proven. This is common in leaf-spine upgrades where you want predictable bring-up and stable counters.

Expected outcome: A lower probability of link instability during the first maintenance window.

- If you are consolidating port counts or reducing oversubscription, consider 800G. Choose 800G when your hardware supports it cleanly and when you can guarantee adequate thermal and power margins in every row. This often shows up in new builds or near-final stages of a migration.

Expected outcome: Fewer uplink ports, less cabling density, and simplified optics inventory, provided compatibility holds.

Validate compatibility with one controlled pilot

- Select a representative switch and cage type. Use the same switch model and same cage numbering pattern you will scale to. Confirm you have the correct firmware baseline for the transceiver class.

Expected outcome: A pilot that mirrors production behavior.

- Install optics and run link bring-up checks. Bring up one link at a time, then inspect interface state, optics diagnostics, and error counters. Watch for rising FEC corrections, CRC spikes, or flapping.

Expected outcome: A stable link with acceptable error rates and normal DOM readings.
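The bring-up checks above amount to comparing consecutive polls of cumulative counters. Here is a minimal sketch under stated assumptions: `CounterSample` and its field names are hypothetical (map them to whatever your platform exports), and `max_fec_corrected_per_poll` is a site-specific limit you choose, since some corrected FEC codewords are normal on PAM4 links.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CounterSample:
    """One poll of cumulative interface counters (field names are hypothetical)."""
    crc_errors: int
    fec_corrected: int
    fec_uncorrectable: int
    link_flaps: int


def pilot_findings(samples: List[CounterSample],
                   max_fec_corrected_per_poll: int) -> List[str]:
    """Compare consecutive polls and return findings; an empty list means clean."""
    findings = []
    for i in range(1, len(samples)):
        prev, cur = samples[i - 1], samples[i]
        if cur.crc_errors > prev.crc_errors:
            findings.append(f"poll {i}: CRC errors rose by {cur.crc_errors - prev.crc_errors}")
        if cur.fec_uncorrectable > prev.fec_uncorrectable:
            findings.append(f"poll {i}: uncorrectable FEC codewords seen")
        if cur.fec_corrected - prev.fec_corrected > max_fec_corrected_per_poll:
            findings.append(f"poll {i}: FEC corrections above limit")
        if cur.link_flaps > prev.link_flaps:
            findings.append(f"poll {i}: link flapped")
    return findings
```

Returning a list of findings rather than a single boolean keeps the pilot log useful for root cause work: the same run shows whether CRC, FEC, or flapping moved first.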

Confirm optical budget and connector hygiene

- Compare measured loss to vendor reach. For direct-detect SR/DR optics, ensure your worst-case link loss including patch cords and connectors stays within the module budget. If you are close to the edge, cleaning and re-termination often beats changing module speed.

Expected outcome: No “it works on the bench” surprise.

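- Inspect and standardize polarity. For MPO/LC structures, a polarity error usually keeps the link or individual lanes from coming up, while marginal mating or contamination found during the same inspection can produce low-level errors that look like congestion rather than a hard down link. Standardize labeling before rollout.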

Expected outcome: Fewer intermittent failures during peak traffic.
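The loss comparison above can be sketched as a worst-case budget calculation. This is an illustrative sketch only: the function names are hypothetical, and the per-kilometer, per-connector, and module budget values must come from your OTDR results and the vendor datasheet, not from this example.

```python
def worst_case_loss_db(fiber_km: float, fiber_db_per_km: float,
                       connectors: int, db_per_connector: float,
                       splices: int = 0, db_per_splice: float = 0.0) -> float:
    """Estimate end-to-end loss from fiber length, mated connector pairs, and splices."""
    return (fiber_km * fiber_db_per_km
            + connectors * db_per_connector
            + splices * db_per_splice)


def loss_margin_db(module_budget_db: float, link_loss_db: float,
                   safety_margin_db: float = 0.0) -> float:
    """Return remaining margin in dB (negative means the link exceeds the budget)."""
    return module_budget_db - link_loss_db - safety_margin_db


# Placeholder inputs for illustration; substitute measured and datasheet values.
loss = worst_case_loss_db(fiber_km=0.5, fiber_db_per_km=0.4,
                          connectors=4, db_per_connector=0.5)
print(round(loss, 2), round(loss_margin_db(3.0, loss, safety_margin_db=0.5), 2))
```

Where a measured OTDR loss is available, pass it directly to `loss_margin_db` instead of the estimate. A margin near zero points to cleaning and re-termination before any change of module speed.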

Operationalize monitoring and rollback criteria

- Define pass/fail thresholds. Use optics DOM fields like received power, temperature, and supply voltage where available. Also record interface counters such as CRC errors, FEC corrected and uncorrectable codewords, and link flaps per hour.

Expected outcome: A deterministic decision to scale or revert.

- Set rollback triggers. For example: if CRC errors exceed a small threshold for consecutive intervals, or if DOM indicates out-of-range temperature during normal load, pause rollout and isolate the failing links.

Expected outcome: Reduced downtime and faster root cause isolation.
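The thresholds and rollback triggers above can be written down as two small checks. This is a minimal sketch, assuming DOM readings arrive as a name-to-value mapping; the field names (`rx_power_dbm`, `temperature_c`), the limits, and the function names are hypothetical and should be replaced with values from your module datasheet and change-control policy.

```python
from typing import Dict, List, Tuple

Range = Tuple[float, float]


def dom_out_of_range(reading: Dict[str, float],
                     limits: Dict[str, Range]) -> List[str]:
    """Return names of DOM fields that are present and outside their (low, high) limits."""
    return [
        name for name, (low, high) in limits.items()
        if name in reading and not (low <= reading[name] <= high)
    ]


def should_roll_back(crc_per_interval: List[int], crc_limit: int,
                     consecutive: int) -> bool:
    """True if CRC errors exceed crc_limit for `consecutive` intervals in a row."""
    run = 0
    for count in crc_per_interval:
        run = run + 1 if count > crc_limit else 0
        if run >= consecutive:
            return True
    return False
```

Requiring consecutive intervals rather than a single spike avoids reverting on a one-off burst, which matches the "consecutive intervals" wording of the trigger.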

Selection criteria checklist for a data center upgrade

When you weigh 400G vs 800G, you are balancing bandwidth density against operational certainty. Use this ordered checklist the way field teams actually prioritize it during procurement and change control.

- Distance and link budget: Use OTDR results and vendor reach specs for your exact PHY type and connector path.

- Switch compatibility: Confirm the switch model supports the exact form factor and speed (and that firmware is aligned). Check vendor interoperability lists and known caveats.

- DOM and telemetry behavior: Ensure the platform accepts DOM fields and power class identifiers; validate during pilot.

- Operating temperature and airflow: Compare transceiver power draw to rack cooling conditions; keep margin vs maximum ambient.

- Budget and total cost of ownership: Consider acquisition cost, expected failure rate, and labor hours for rework.

- Vendor lock-in risk: Decide whether you will standardize on OEM optics or allow third-party modules with proven interoperability.

- Migration sequencing: If you need a quick, low-risk phase, choose 400G first, then consolidate with 800G when the platform matures in your environment.

Common pitfalls and troubleshooting tips

Even seasoned teams can trip over the same failure modes during a data center upgrade. Below are three high-frequency problems, with root causes and practical solutions.

Pitfall 1: “Link comes up, then errors climb”

Root cause: Marginal optical budget, dirty connectors, or slight fiber misalignment. In higher-speed lanes, small penalties show up as CRC and excessive FEC corrections.

Solution: Clean connectors with approved procedures, verify polarity, and re-check OTDR loss. If you are near the vendor threshold, fix the fiber plant before changing optics speed.

Pitfall 2: “Module recognized but interface flaps”

Root cause: DOM/power class mismatch, firmware gating, or cage compatibility differences across switch revisions. Some platforms treat certain module profiles as unsupported even if the module is physically seated.

Solution: Confirm switch firmware version and module compatibility guidance. Perform a one-port pilot, compare DOM readings, and isolate to a specific cage type or firmware baseline.

Pitfall 3: “Thermal alarms under peak load”

Root cause: Insufficient airflow at the rack row, blocked vents, or higher-than-assumed module power in 800G configurations. Temperature rise can be slow, appearing only after traffic ramps.

Solution: Measure ambient and exhaust temperatures during peak. Improve airflow (baffle alignment, cable management), and ensure you have margin against the switch and module operating ranges.

Cost and ROI note: what to budget for 400G vs 800G

Purchase pricing varies widely by PHY type, vendor, and volume, and OEM optics are usually priced at a premium over third-party modules. In many deployments, 400G modules tend to cost less per unit than 800G, while 800G can reduce the number of ports and cabling operations. The ROI often comes from labor savings and reduced operational complexity, but only if compatibility is smooth.

For TCO, include not just module cost, but also spares strategy, change-control labor, and downtime risk. If you adopt third-party optics, plan for validation time and a narrower supported list to avoid repeated truck rolls. For reference on Ethernet PHY expectations, review the relevant IEEE 802.3 clauses as implemented by vendors: [Source: IEEE 802.3]. For vendor-specific compatibility and power/DOM behavior, rely on the specific datasheets for your switch and optics models: [Source: Cisco transceiver documentation], [Source: Finisar/Foster datasheets], [Source: FS.com product pages].
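The TCO components listed above can be combined in a rough comparison. This sketch is an assumption-laden illustration: the function name `optics_tco`, its parameters, and the way spares and labor are modeled are hypothetical, it leaves out downtime risk, and it contains no real prices.

```python
import math


def optics_tco(module_count: int, unit_price: float, spare_ratio: float,
               labor_hours_per_module: float, labor_rate: float,
               validation_hours: float = 0.0) -> float:
    """Rough optics TCO: modules plus spares, install labor, and validation labor."""
    spares = math.ceil(module_count * spare_ratio)  # round spares up to whole modules
    hardware = (module_count + spares) * unit_price
    labor = (module_count * labor_hours_per_module + validation_hours) * labor_rate
    return hardware + labor
```

Run it once per option with your quoted prices and your own labor figures. The `validation_hours` term is where third-party optics or a less proven 800G platform tends to show up in the total.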

FAQ

Which is safer for the first phase of a data center upgrade, 400G or 800G?

Most teams choose 400G for the first phase when the switch platform and optics