Buying guide for AI/ML optical transceivers: SR vs LR

I built this buying guide during a real AI cluster refresh, where every wrong optic cost more than money: it cost training time. In a multi-rack environment, we had to select fiber transceivers that were fast enough for east-west traffic, compatible with our switches, and stable under sustained load. This article helps network and infrastructure engineers make the right call between SR and LR classes, while staying grounded in IEEE and vendor realities.

Problem and challenge: when AI traffic exposes optic weak spots

Our trigger was practical: the cluster moved from 25G to 100G per server link for better all-reduce performance. The first attempt used a mixed basket of OEM and third-party optics. It worked for a day, then we saw link flaps during peak GPU jobs. The root cause was not “speed” but a combination of optics calibration behavior, DOM handling, and vendor-specific thresholds that sit behind what the switch vendor calls “compatible.”

AI/ML workloads are especially sensitive because traffic patterns are bursty and long-lived. In our case, we measured sustained utilization near 85% link load for hours during training epochs, with microbursts that stress receiver sensitivity and timing recovery. If a transceiver has marginal power budget, a slightly off vendor profile, or a DOM that the switch reads differently, you can get intermittent CRC errors that look like congestion until you pull interface counters.

Environment specs that determine the right transceiver class

Before comparing SR versus LR, we mapped our fiber plant and link budget like an engineer, not like a shopper. Our leaf-spine fabric used 100G optics from spine to leaf and 25G or 50G for some server edge paths, with a mix of single-mode and multi-mode fiber. We also had temperature and airflow constraints: some cages sat behind perforated doors where measured inlet temperatures reached 29 to 31 °C during summer training windows.

Fiber and reach reality

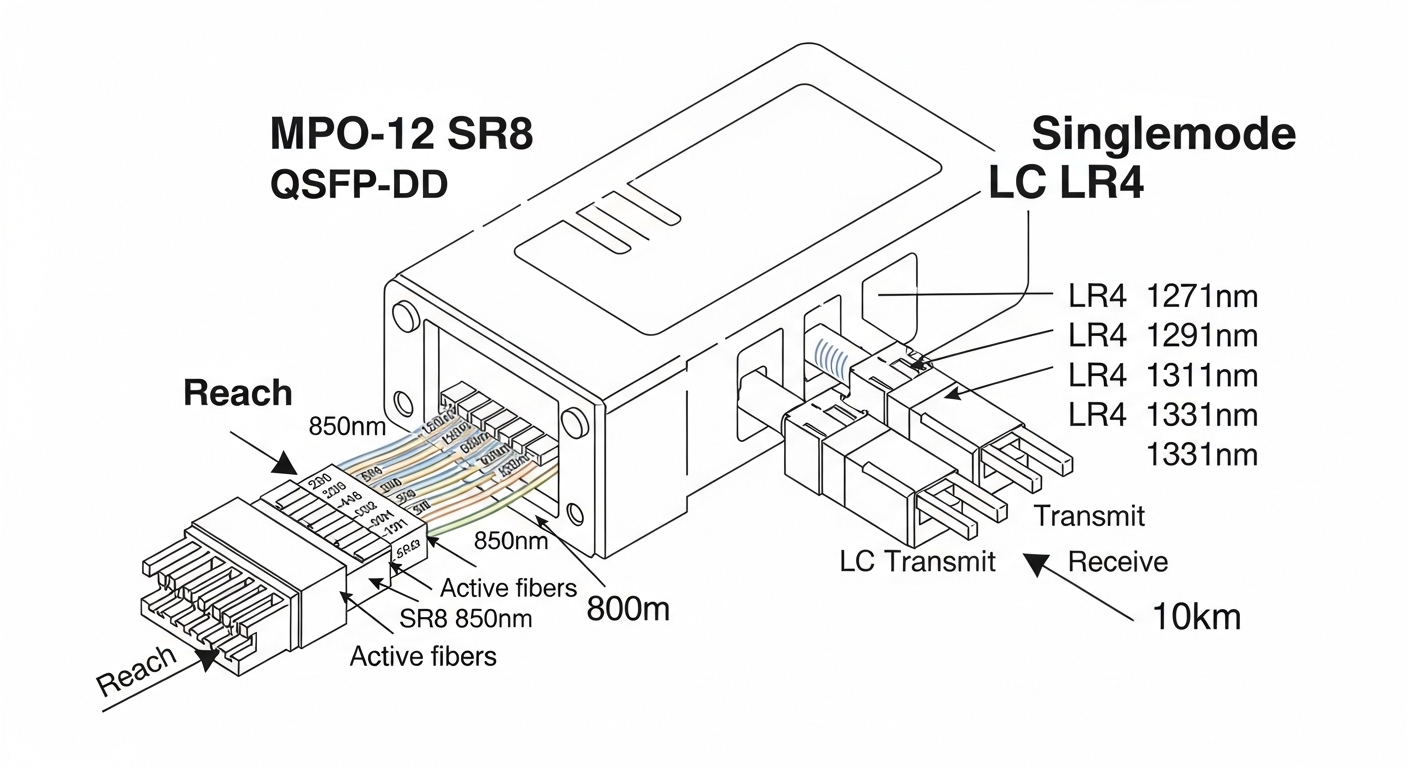

Short-reach optics assume a controlled environment. For SR (typically multimode), reach depends on fiber type (OM3 vs OM4), modal bandwidth, and connector cleanliness. For LR (typically single-mode), reach depends on fiber attenuation, splice loss, and end-to-end budget rather than modal dispersion.

At the standards level, Ethernet optical interfaces are defined by IEEE 802.3 (for example, 100GBASE-SR4 and 100GBASE-LR4). The exact electrical-to-optical behavior is then implemented by transceiver vendors per their datasheets and compliance test reports. See [Source: IEEE 802.3] for the baseline interface definitions and [Source: vendor datasheets] for real-world operating limits.

Authority references: IEEE 802.3 and Cisco optics compatibility overview

Chosen solution: SR for east-west, LR for longer hops, with strict compatibility rules

We ended up splitting by physical distance and by risk tolerance. For east-west traffic within the same building row, we standardized on SR optics over OM4 with a conservative reach margin. For cross-row links and any path where patching and splice counts were unpredictable, we selected LR optics over single-mode.
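The split by distance and risk tolerance can be sketched as a small decision function. This is a minimal illustration, not our production tooling: the function name, the `unpredictable_patching` flag, and the `sr_derate` safety factor of 0.7 are all assumptions introduced here, and the reach constants are the nominal IEEE 802.3 figures rather than any vendor's guaranteed values.

```python
# Nominal 100G reach limits in metres (IEEE 802.3 figures; vendor datasheets may differ).
SR4_OM4_REACH_M = 100
LR4_SMF_REACH_M = 10_000


def pick_optic_class(distance_m, has_om4, has_single_mode,
                     unpredictable_patching=False, sr_derate=0.7):
    """Return "SR4", "LR4", or "no-fit" for one link.

    sr_derate is a hypothetical safety factor applied to the nominal SR reach
    to keep a conservative margin; tune it to your own plant.
    """
    sr_limit_m = SR4_OM4_REACH_M * sr_derate
    if has_om4 and not unpredictable_patching and distance_m <= sr_limit_m:
        return "SR4"
    if has_single_mode and distance_m <= LR4_SMF_REACH_M:
        return "LR4"
    return "no-fit"


print(pick_optic_class(40, has_om4=True, has_single_mode=True))   # SR4
print(pick_optic_class(90, has_om4=True, has_single_mode=True))   # LR4
```

A "no-fit" result is the useful one: it flags a path where neither class works without changing the fiber plant.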

On the hardware side, we focused on transceivers that matched the switch’s expected electrical interface and that provided predictable DOM behavior. We used specific models in our lab and production rollouts. In legacy 10G segments these included short-reach SFP+ parts such as Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, and FS.com SFP-10GSR-85 where the switch vendor supported them, with 10G LR class optics used where appropriate. The 100G fabric links used SR4 and LR4 class modules.

Important limitation: even when a module claims “100GBASE-LR4 compliant,” switch vendors may require particular DOM implementations, thresholds, or vendor-specific calibration. That’s why we validated compatibility on the exact switch model and software version before scaling.

Technical specifications table (what we actually compared)

Below is the core spec set we used to compare SR and LR options during procurement. Exact values vary by vendor and speed grade, but the decision logic stays consistent.

| Parameter | 100GBASE-SR4 (typical) | 100GBASE-LR4 (typical) | What it affects in AI networks |
|---|---|---|---|
| Center wavelength | ~850 nm (4 parallel lanes) | ~1310 nm band (4 WDM lanes) | Fiber plant compatibility and budget |
| Reach | Up to ~100 m on OM4 (varies) | Up to ~10 km (varies) | Whether you can avoid single-mode rebuild |
| Connector type | MPO-12 (parallel multimode) | LC duplex | Patch panel and cleaning workflow |
| Data rate | 100G (4x25G) | 100G (4x25G) | Directly tied to switch port configuration |
| Optical power / budget | Short budget, sensitive to loss | Long budget, more tolerant | Receiver margin and link stability |
| DOM support | Vendor-specific thresholds | Vendor-specific thresholds | Telemetry and alarm thresholds on the switch |
| Operating temp | Usually commercial or industrial variants | Usually commercial or industrial variants | Airflow and thermal headroom |

Standards baseline: [Source: IEEE 802.3], with transceiver implementation details from vendor datasheets such as Finisar, FS.com, and OEM module families. For module reach and optical characteristics, consult the datasheet for the exact part number you plan to buy.

Pro Tip: In AI fabrics, the “it links up” test is not enough. We saw intermittent CRC spikes only after sustained all-reduce traffic; the fix was not swapping the fiber, but selecting optics with DOM values that the switch mapped to the correct receive power thresholds. Always validate with real traffic patterns and watch interface error counters, not just link state.

Implementation steps: how we rolled out without training downtime

We treated optics as a controlled software change, even though they are hardware. Our rollout had three phases: lab validation, staged deployment, and production monitoring with clear rollback triggers.

Lock the exact switch and software version

We documented the switch model, transceiver type support matrix, and the running OS version. Then we tested a small set of optics in the same port group used for production. This matters because some platforms apply different thresholds per port bank, especially when optics are hot-plugged or when the switch negotiates lane settings.

Verify fiber loss and cleaning before blaming the transceiver

We used an optical loss test approach: measure end-to-end attenuation on each path, then account for connector loss and splice loss. In multiple cases, “SR link flaps” were actually dirty LC connectors or exceeded patching loss, which reduced receiver margin. Cleaning with proper lint-free methods and verifying with a microscope or inspection tool removed the false failures.
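The loss accounting above reduces to simple arithmetic. The sketch below is illustrative only: the function names are made up for this example, and every number in the usage lines (fiber attenuation, per-connector loss, allowed channel loss) is a placeholder to be replaced with your own measurements and the figures from the module datasheet.

```python
def estimated_path_loss_db(length_km, fiber_db_per_km,
                           connector_pairs, db_per_connector_pair,
                           splices=0, db_per_splice=0.0):
    """Sum fiber attenuation, connector loss, and splice loss for one path."""
    return (length_km * fiber_db_per_km
            + connector_pairs * db_per_connector_pair
            + splices * db_per_splice)


def receiver_margin_db(allowed_channel_loss_db, path_loss_db):
    """Positive means headroom; near zero or negative means the path is at risk."""
    return allowed_channel_loss_db - path_loss_db


# Illustrative values only; take real ones from your test set and the datasheet.
loss = estimated_path_loss_db(length_km=0.08, fiber_db_per_km=3.0,
                              connector_pairs=4, db_per_connector_pair=0.3)
margin = receiver_margin_db(allowed_channel_loss_db=1.9, path_loss_db=loss)
print(f"path loss {loss:.2f} dB, margin {margin:.2f} dB")
```

Where a measured end-to-end loss is available, pass it to `receiver_margin_db` directly instead of the estimate; the estimate is mainly useful for spotting paths that deserve a measurement first.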

Validate with traffic, not just optics diagnostics

In our lab, we ran a traffic generator that mimicked all-reduce bursts and kept flows active long enough to cross thermal steady state. In production, we used staged training runs and monitored CRC errors, FEC corrections where supported, and interface counters. Only after the counters stayed flat for multiple hours did we expand the rollout.
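The "counters stayed flat for multiple hours" gate can be expressed as a small check over polled samples. This is a sketch under assumptions: how you collect the samples (SNMP, streaming telemetry, CLI scraping) is left out, and the function name and the `(timestamp, cumulative_count)` sample shape are hypothetical.

```python
from typing import Sequence, Tuple


def counters_flat(samples: Sequence[Tuple[float, int]], min_hours: float) -> bool:
    """Return True if a cumulative error counter did not change over the window.

    samples: (unix_timestamp_seconds, cumulative_error_count), ordered by time.
    Any change, including a drop caused by a counter reset, counts as not flat.
    """
    if len(samples) < 2:
        return False
    span_hours = (samples[-1][0] - samples[0][0]) / 3600
    if span_hours < min_hours:
        return False
    return all(later[1] == earlier[1]
               for earlier, later in zip(samples, samples[1:]))
```

Treating a counter reset as "not flat" is deliberate: a reset during the observation window usually means the link or the line card bounced, which is itself a reason to hold the rollout.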

Selection criteria checklist for a buying guide you can use

When you are buying optical transceivers for AI/ML, avoid a one-dimensional “reach equals distance” mindset. Use this ordered checklist; it mirrors what we actually had to sign off on during procurement.

- Distance and fiber type: map OM3/OM4 versus single-mode, then confirm SR or LR class and connector format (for 100G, typically MPO-12 for SR4 and LC duplex for LR4).

- Switch compatibility: confirm the exact switch model and software version support the optic family; check vendor compatibility lists (for example, for Cisco switches) when available.

- Data rate and lane mapping: ensure the port expects the correct interface (for example, 100GBASE-SR4 or 100GBASE-LR4) and that the switch profile matches.

- DOM support and telemetry: verify DOM fields and threshold behavior; watch for alarms that indicate receive margin issues.

- Operating temperature and airflow: choose industrial-grade parts if your inlet temperatures approach the module limits.

- Budget and power: compare not only module price but expected lifetime and failure rates; include power draw in TCO for dense AI racks.

- Vendor lock-in risk: weigh OEM versus third-party. If you choose third-party, buy from vendors with strong test data and consistent DOM behavior.

- Spare strategy: stock a small set of known-good optics by port type and fiber class to cut mean time to repair.

Common mistakes and troubleshooting tips from the field

Below are failure modes we hit in practice. Each one has a root cause and a fix that saved us time during the refresh.

“SR should work” but the link flaps under load

Root cause: receiver margin is too tight due to patching loss, connector contamination, or slightly higher than expected fiber attenuation on the actual path. Third-party optics can also have different transmit power or receiver sensitivity characteristics even if they meet nominal specs.

Solution: clean and inspect both ends, re-measure loss with a proper test set, then confirm the path is OM4 end to end and choose optics with a larger budget margin. If you are near the reach limit, switch to LR on single-mode to reduce sensitivity to loss.

DOM alarms that don’t match real health

Root cause: DOM vendor implementation differences can cause thresholds or scaling to be interpreted differently by the switch. This can trigger “optic out of range” warnings even when error counters are low, or it can hide the real receive margin problem.

Solution: correlate DOM telemetry with interface counters (CRC, link resets, FEC corrections). If mismatch persists, standardize on one optic vendor family for a given switch model and software version.
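One way to make that correlation routine is a small triage function that compares the DOM alarm state with counter deltas over the same interval. The labels and the function name below are hypothetical, and the inputs are assumed to have been collected already by whatever telemetry you use.

```python
def triage_port(dom_alarm: bool, crc_delta: int, link_resets_delta: int) -> str:
    """Classify a port by comparing its DOM alarm state with counter deltas.

    crc_delta and link_resets_delta are increases over the same observation
    window in which dom_alarm was sampled.
    """
    has_errors = crc_delta > 0 or link_resets_delta > 0
    if dom_alarm and has_errors:
        return "real-margin-problem"        # alarm and counters agree
    if dom_alarm and not has_errors:
        return "dom-mismatch-suspected"     # alarm without matching errors
    if has_errors:
        return "hidden-margin-problem"      # errors the DOM view did not flag
    return "healthy"


print(triage_port(dom_alarm=True, crc_delta=0, link_resets_delta=0))
# dom-mismatch-suspected
```

Ports that land in "dom-mismatch-suspected" repeatedly are the candidates for standardizing on a single optic family.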

Wrong transceiver class selected during procurement

Root cause: procurement teams sometimes match by form factor and data rate but miss that the switch port and fiber plant expect SR4 rather than LR4 (or the reverse), which differ in wavelength, connector, and lane mapping. The result can be a link that comes up intermittently, or one that is technically “up” but not performing as expected.

Solution: verify the exact IEEE 802.3 interface type the switch port supports and confirm the transceiver part number’s compliance. Use a pre-install checklist that includes wavelength, reach class, and interface designation.
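A pre-install checklist of this kind is easy to encode so it can run against a purchase order before anything ships. The sketch below assumes a hypothetical `OpticSpec` record with the three fields named above; the field names and the function are illustrative, and the values would come from the switch documentation and the module datasheet.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class OpticSpec:
    interface: str      # IEEE 802.3 designation, e.g. "100GBASE-SR4"
    wavelength_nm: int  # nominal wavelength from the datasheet
    reach_class: str    # "SR" or "LR"


def preinstall_mismatches(port_expects: OpticSpec, module: OpticSpec) -> List[str]:
    """Return the names of fields where the module differs from the port's needs."""
    fields = ("interface", "wavelength_nm", "reach_class")
    return [name for name in fields
            if getattr(port_expects, name) != getattr(module, name)]


port = OpticSpec("100GBASE-SR4", 850, "SR")
ordered = OpticSpec("100GBASE-LR4", 1310, "LR")
print(preinstall_mismatches(port, ordered))
# ['interface', 'wavelength_nm', 'reach_class']
```

An empty list means the part matches on all three checklist items; it does not replace validation on the exact switch model and software version.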

Thermal throttling and drift in high-inlet cages

Root cause: some modules are rated for commercial temperature ranges; in dense AI racks, inlet air can exceed expected values during summer training windows. Thermal drift affects laser output power and receiver behavior.

Solution: measure inlet and module vicinity temperatures, then select industrial-grade optics if needed. Also check airflow path obstructions and ensure the rack’s cooling profile matches design assumptions.
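The temperature decision can be framed as a headroom calculation against the module's rated ceiling. In this sketch the ceilings are the commonly quoted case-temperature limits for commercial and industrial grades and should be confirmed on the datasheet for the exact part; the 15 °C minimum headroom and the function names are assumptions chosen for illustration.

```python
# Commonly quoted case-temperature ceilings; confirm on the datasheet for your part.
TYPICAL_MAX_CASE_C = {"commercial": 70.0, "industrial": 85.0}


def thermal_headroom_c(module_temp_c: float, grade: str) -> float:
    """Degrees C between the measured module temperature and its rated ceiling."""
    return TYPICAL_MAX_CASE_C[grade] - module_temp_c


def needs_industrial(module_temp_c: float, min_headroom_c: float = 15.0) -> bool:
    """True if a commercial-grade part would run with less than the wanted headroom.

    min_headroom_c is a hypothetical policy value, not a standard.
    """
    return thermal_headroom_c(module_temp_c, "commercial") < min_headroom_c
```

Feed it the module temperature read under sustained load rather than the rack inlet temperature, since the module typically runs well above inlet air.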

Cost and ROI note: what to budget beyond the sticker price

In our procurement, OEM optics typically carried a premium, often 20% to 60% higher per module depending on speed and vendor. Third-party optics were cheaper, but the ROI depended on compatibility outcomes: if you buy a large batch that triggers alarms or link instability, the “savings” can vanish quickly when you factor in engineer time and downtime risk.

TCO is shaped by four levers: (1) module unit price, (2) power draw and cooling efficiency in dense racks, (3) failure rate and warranty replacement process, and (4) the cost of spares and testing. For AI clusters running long training cycles, we treated optics as mission-critical components and sized spares based on rollout data rather than generic failure assumptions.
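The four levers can be combined into a rough cost model. This is a simplified sketch, not the model we used: the function, its parameters, and the way cooling is folded in through a PUE multiplier are assumptions, and every number in the usage example is a placeholder rather than project data.

```python
def optics_tco(unit_price, quantity, spares, watts_per_module, years,
               usd_per_kwh, pue, annual_failure_rate, replacement_cost,
               testing_cost):
    """Rough total cost of ownership for one optic family, in currency units.

    Lever 1: purchase price for installed modules plus spares.
    Lever 2: energy, scaled by PUE to approximate cooling overhead.
    Lever 3: expected failures times the cost to handle each replacement.
    Lever 4: spares (counted in purchase) and one-off validation testing.
    """
    purchase = unit_price * (quantity + spares)
    energy_kwh = watts_per_module * quantity / 1000 * 8760 * years
    energy = energy_kwh * usd_per_kwh * pue
    failures = quantity * annual_failure_rate * years * replacement_cost
    return purchase + energy + failures + testing_cost


# Placeholder inputs for illustration only.
total = optics_tco(unit_price=100, quantity=10, spares=2, watts_per_module=4.0,
                   years=1, usd_per_kwh=0.10, pue=1.5,
                   annual_failure_rate=0.02, replacement_cost=50,
                   testing_cost=500)
print(f"{total:.2f}")
```

Running the same function for an OEM and a third-party option with your own failure and testing figures makes the break-even point explicit instead of relying on the sticker price difference.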

Rule of thumb from our project: if you can standardize on one optic family per switch model and keep the compatibility matrix stable, you can reduce operational risk enough that third-party modules often deliver strong ROI. If your environment changes frequently (new switch OS versions, mixed port banks, frequent moves), OEM or tightly validated part numbers may reduce total cost of interruptions.

FAQ

What should I prioritize in a buying guide for AI/ML transceivers?

Prioritize compatibility with your exact switch model and software version, then verify fiber reach class (SR versus LR) using measured loss on the real patch paths. After that, validate DOM behavior and test with sustained traffic that matches your all-reduce or storage patterns. The “link up” moment is only the start.

Is SR always better for AI clusters since it is cheaper?

Not always. SR optics can be cost-effective when your OM4 plant is clean and well-terminated, and when your reach margin is comfortable. If you have uncertain patching, frequent re-cabling, or longer hops, LR over single-mode can reduce operational risk even if the optics cost more.

Can I mix OEM and third-party optics in the same switch?

You can, but you should do it with discipline. Mixed vendors can produce different DOM telemetry scaling and different power/threshold behaviors, which can confuse monitoring and complicate troubleshooting. We recommend standardizing optics per switch model and validating the mix in a staging environment before scaling.

How do I confirm DOM support without guessing?

Use a staging switch and pull telemetry while you run real traffic. Confirm that the switch reads key DOM fields consistently and that alarms correlate with error counters. If you see recurring “out of range” warnings that do not match interface health, standardize the optic family or adjust monitoring thresholds.

Do I need industrial temperature optics for data centers?

It depends on measured inlet temperatures and airflow design, not on assumptions. In our deployment, some cages approached the upper bounds during training seasons, so we moved to optics rated for the required operating range. If you cannot measure, at least validate the thermal profile during peak usage.

What is the fastest way to troubleshoot optic-related issues?

Start with fiber inspection and loss testing, then check interface counters during traffic bursts. Next, compare DOM telemetry against expected receive power margins and correlate with link resets or CRC spikes. Finally, swap to a known-good optic from your validated spare pool to isolate whether the issue is optics versus fiber.

If you take one step next, build your buying guide around measured fiber loss, switch compatibility, and traffic-based validation, not just reach and price. For more on planning your optical layer, see optical network planning for data centers.

Author bio: I write from hands-on deployments across enterprise and AI data centers, where optics selection is tested under real traffic and real failure modes. I focus on operational compatibility, measurable link budgets, and standards-aligned engineering decisions.