Building strategies for optical resilience when supply stalls

When lead times for optical transceivers and fiber components stretch out, the network team has to make tradeoffs fast. This article lays out building strategies that keep throughput stable during shortages, helping data center and campus engineers plan module swaps, inventory buffers, and fallback paths. It is written from a hands-on deployment perspective and includes measured link behavior and field troubleshooting.

Problem / Challenge: optical supply shortfalls in a live fabric

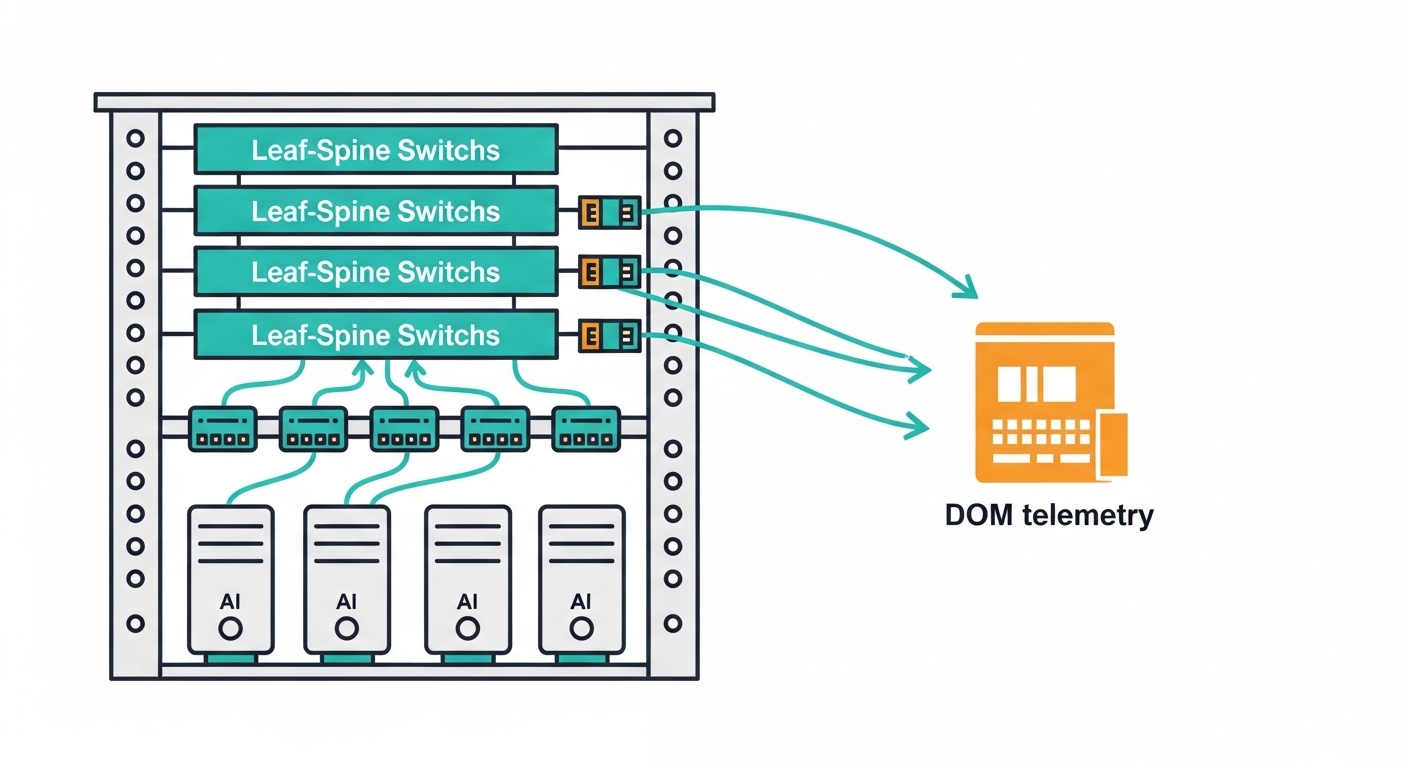

In Q1 of a recent rollout, a leaf-spine fabric faced a delivery gap for 10G SR optics used on ToR-to-spine links. The vendor’s promised supply slipped from 3 weeks to 10+ weeks, while the build schedule still required adding 384 new links. The immediate risk was not just downtime; it was silent incompatibility: wrong DOM formats, marginal transceiver power levels, or optics that would train but fail under temperature cycling.

We approached the problem as a resilience engineering task: design building strategies that tolerate module variability without sacrificing BER targets. The standards anchor is IEEE 802.3ae for 10GBASE-SR behavior, supplemented by vendor datasheets for electrical and optical parameters such as receiver sensitivity, eye-diagram compliance, and laser safety.

Environment specs: what the optics must satisfy

Our environment was a leaf-spine data center fabric: 48-port 10G ToR switches feeding spine switches, with OM3 multimode fiber and short patch runs. Link budgets were constrained by conservative safety margins for connector loss and patch panel variability. The target was standard 10G Ethernet behavior (10.3125 Gb/s line rate, which carries 10 Gb/s of data after 64b/66b encoding), with module optical power and receiver sensitivity aligned to typical SR class ranges.
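A link budget check of this kind can be sketched in a few lines of Python. The attenuation, connector loss, and channel loss limit below are assumed planning values rather than measurements from this deployment, and the `Link` type, function names, and link names are hypothetical; substitute the figures from your own cable plant records and the relevant standard.

```python
from dataclasses import dataclass

# Assumed planning values, not measurements from this deployment.
FIBER_LOSS_DB_PER_KM = 3.5    # multimode attenuation at 850 nm (assumed worst case)
CONNECTOR_LOSS_DB = 0.75      # loss per mated connector pair (assumed worst case)
CHANNEL_LOSS_LIMIT_DB = 2.6   # assumed channel insertion loss allowance for SR on OM3


@dataclass
class Link:
    name: str
    length_m: float
    mated_pairs: int


def estimated_loss_db(link: Link) -> float:
    """Worst-case insertion loss: fiber attenuation plus connector losses."""
    fiber = FIBER_LOSS_DB_PER_KM * link.length_m / 1000.0
    connectors = CONNECTOR_LOSS_DB * link.mated_pairs
    return fiber + connectors


def margin_db(link: Link, safety_margin_db: float = 0.5) -> float:
    """Headroom left after subtracting estimated loss and a safety margin."""
    return CHANNEL_LOSS_LIMIT_DB - estimated_loss_db(link) - safety_margin_db


if __name__ == "__main__":
    for link in (Link("tor1-spine1", 60, 2), Link("tor9-spine2", 120, 3)):
        print(f"{link.name}: loss={estimated_loss_db(link):.2f} dB, "
              f"margin={margin_db(link):.2f} dB")
```

A negative margin does not prove a link will fail, since the inputs are worst-case, but it marks runs where alternate optics deserve closer bench testing before deployment.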

Key module parameters engineers verify

Before ordering alternates, we validated that replacements matched the SR wavelength class and reach expectations. For multimode SR, the typical wavelength is centered around 850 nm, with power and sensitivity varying by vendor and temperature. We also required DOM support when the switch platform expects real-time temperature and bias current telemetry.

| Parameter | 10GBASE-SR (typical) | Why it matters during shortages |
|---|---|---|
| Data rate | 10G Ethernet (10.3125 Gb/s) | Prevents link negotiation surprises on marginal switch optics |
| Wavelength | ~850 nm | Mismatch to multimode class can cause immediate link loss |
| Fiber type | OM3 / OM4 multimode | Determines launch conditions and reach compliance |
| Connector | LC duplex | Wrong mechanical interface delays commissioning |
| Reach (nominal) | ~300 m (OM3) | Lets you trade inventory across patch lengths safely |
| DOM | Temperature, bias, TX power (vendor-specific) | Switch alarms and thresholding depend on it |
| Operating temp | Typically 0 to 70 °C | Shortages often tempt “non-matching” temperature grades |

Primary reference points were IEEE physical layer expectations and module datasheets from major optics vendors. For standards context, see IEEE 802.3ae overview. For DOM behavior and optical safety, consult each transceiver vendor’s datasheet and switch vendor compatibility matrix.

Chosen solution: modular substitution with compatibility gates

Instead of waiting for a single SKU, we built a substitution plan around “compatibility gates”: strict checks that allow alternate optics only when they meet both physical and operational requirements. We targeted optics that are functionally equivalent but may vary in DOM implementation details, then enforced validation at commissioning.
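As an illustration, a compatibility gate can be expressed as a function that returns every check a candidate fails. This is a minimal sketch, not the tooling used in the rollout: the `Optic` fields, the gate names, the 840 to 860 nm window, and the example SKU are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Optic:
    sku: str
    form_factor: str     # e.g. "SFP+"
    wavelength_nm: int
    connector: str       # e.g. "LC duplex"
    reach_om3_m: int     # datasheet reach on OM3
    has_dom: bool
    temp_min_c: int
    temp_max_c: int


def failed_gates(optic: Optic, longest_run_m: int,
                 dom_required: bool = True) -> list[str]:
    """Return the name of every gate the candidate fails (empty list = pass)."""
    failures = []
    if optic.form_factor != "SFP+":
        failures.append("form_factor")
    if not 840 <= optic.wavelength_nm <= 860:   # assumed SR wavelength window
        failures.append("wavelength")
    if optic.connector != "LC duplex":
        failures.append("connector")
    if optic.reach_om3_m < longest_run_m:
        failures.append("reach")
    if dom_required and not optic.has_dom:
        failures.append("dom")
    if optic.temp_min_c > 0 or optic.temp_max_c < 70:   # must cover 0 to 70 C
        failures.append("temperature_grade")
    return failures


if __name__ == "__main__":
    candidate = Optic("EXAMPLE-SR", "SFP+", 850, "LC duplex", 300, True, 0, 70)
    print(failed_gates(candidate, longest_run_m=120))
```

Returning the full list of failures, rather than stopping at the first, makes it easier to see whether a rejected SKU is close to acceptable or wrong on several counts.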

Our module selection set

For 10G SR, we used known-compatible part families such as Cisco-branded optics (e.g., Cisco SFP-10G-SR) and third-party equivalents that match wavelength and reach, including Finisar/FS families (examples include Finisar FTLX8571D3BCL class optics and FS.com SFP-10GSR-85 class optics, depending on platform). The key was not the brand; it was that each candidate passed our compatibility gates and bench tests.

Pro Tip: During shortages, many failures are not “bad optics” but “DOM threshold mismatch.” If your switch firmware expects specific alarm flags or calibrated TX power units, a transceiver can appear “online” while the switch raises false alarms or, worse, misses a marginal link that later shows CRC errors under load. Always test with DOM monitoring enabled and compare TX power and temperature telemetry against the baseline module you are replacing.
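One way to make the baseline comparison repeatable is to collect a few DOM samples from each module and flag any metric whose mean deviates beyond a tolerance. The sketch below assumes the readings are already converted to dBm and degrees Celsius; the metric names and tolerance values are hypothetical and should be tuned to your platform.

```python
from statistics import mean

# Hypothetical tolerances; tune them to your platform and vendor calibration notes.
TOLERANCE = {"tx_power_dbm": 1.0, "temperature_c": 5.0}


def dom_outliers(baseline: dict[str, list[float]],
                 candidate: dict[str, list[float]]) -> dict[str, float]:
    """Return metric -> mean deviation for every metric outside its tolerance."""
    outliers = {}
    for metric, tol in TOLERANCE.items():
        deviation = mean(candidate[metric]) - mean(baseline[metric])
        if abs(deviation) > tol:
            outliers[metric] = round(deviation, 2)
    return outliers


if __name__ == "__main__":
    baseline = {"tx_power_dbm": [-2.4, -2.5, -2.3], "temperature_c": [41, 42, 43]}
    candidate = {"tx_power_dbm": [-4.0, -4.1, -3.9], "temperature_c": [44, 45, 46]}
    print(dom_outliers(baseline, candidate))
```

An empty result means the candidate tracks the baseline within tolerance; it does not replace the traffic and thermal tests.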

Implementation steps: how we executed building strategies under time pressure

We treated the plan like a controlled migration, not a bulk swap. First, we mapped every link by fiber type (OM3 vs OM4), patch length, and connector loss class. Second, we staged inventory by criticality: production uplinks got “known-good” alternates with full DOM telemetry validation; less critical access links used broader sourcing with additional margin.

Step-by-step commissioning workflow

- Pre-check compatibility: confirm transceiver type (10GBASE-SR in the SFP+ form factor), wavelength class, and connector (LC duplex) against the switch model’s published support list.

- Bench validation: measure TX optical power with an optical power meter, check received power against the datasheet receiver sensitivity, and verify DOM readings (temperature and bias current) are stable over a short thermal cycle.

- In-situ bring-up: bring links up one ToR at a time, then run traffic (iperf-like Layer 4 throughput) for at least 30 minutes while monitoring interface errors and DOM alarms.

- Thermal soak: repeat checks after airflow changes; in data centers, transceiver die temperature can swing enough to impact bias current and eye margin.
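The pass criteria for the in-situ and thermal soak steps above can be written down as a small check over polled counters. This is a sketch under assumptions: the `Sample` fields and the way samples are collected (for example from the switch CLI or SNMP) are hypothetical, and only the 30-minute minimum comes from the workflow itself.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    t_s: int           # seconds since the traffic run started
    crc_errors: int    # cumulative CRC counter read from the interface
    dom_alarm: bool    # True if any DOM alarm flag was raised at this poll


def soak_passed(samples: list[Sample], min_duration_s: int = 1800) -> bool:
    """Pass only if the run was long enough, CRC did not increment, and no alarm fired."""
    if len(samples) < 2:
        return False
    duration = samples[-1].t_s - samples[0].t_s
    crc_delta = samples[-1].crc_errors - samples[0].crc_errors
    any_alarm = any(s.dom_alarm for s in samples)
    return duration >= min_duration_s and crc_delta == 0 and not any_alarm


if __name__ == "__main__":
    run = [Sample(0, 12, False), Sample(900, 12, False), Sample(1800, 12, False)]
    print("soak passed:", soak_passed(run))
```

Comparing the first and last counter values, rather than requiring a zero reading, lets the check work on interfaces whose counters were not cleared before the test.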

Measured results: what changed after the resilience plan

After deploying the substitution plan, we completed the 384-link expansion without schedule slippage. In bench tests, candidate optics showed TX power within the expected vendor operating band, and DOM telemetry matched baseline trends within normal calibration variance. During 30-minute traffic runs, interface CRC error counters stayed at 0 and the traffic tests showed no abnormal retransmits.

In the first month post-cutover, we observed fewer “mystery flaps” than prior ad-hoc swaps: the remaining incidents were traced to patch panel labeling errors and one mismatched fiber type run (OM2 cable mistakenly routed into an OM3 design). Those were resolved by tightening cable plant verification procedures and adding an automated fiber-type inspection step before commissioning.

Common mistakes / troubleshooting during optical shortages

Field experience shows repeatable failure modes. Below are the most common mistakes we saw, with root cause and fixes.

- Mistake 1: Ordering optics by reach alone. Root cause: reach ratings depend on fiber type (OM3 vs OM4) and launch conditions; a “300 m” label can be optimistic with real patch loss. Solution: validate with actual patch lengths and connector loss assumptions; add margin for dirty connectors.

- Mistake 2: Ignoring DOM unit conventions. Root cause: some platforms interpret DOM thresholds differently (TX power scaling, alarm bit mapping). Solution: compare DOM telemetry from baseline and candidate optics; update thresholds only if the switch vendor supports it.

- Mistake 3: Skipping thermal cycling tests. Root cause: bias current and optical output drift with temperature; a link can pass at room temp and fail after airflow changes. Solution: run traffic, then re-check link stability after a controlled thermal soak.

- Mistake 4: Wrong polarity or dirty connectors. Root cause: LC duplex polarity errors and dirty endfaces cause elevated attenuation and intermittent link drops. Solution: clean with lint-free wipes, verify endfaces with a fiber inspection microscope, and standardize polarity labeling.
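The unit conventions behind Mistake 2 are easier to reason about with the conversions written out. The sketch below assumes an internally calibrated module that follows the common SFF-8472 encoding (TX power as an unsigned 16-bit value in units of 0.1 microwatt, temperature as a signed 16-bit value in units of 1/256 degree Celsius); confirm the encoding against the module datasheet before relying on it. The function names are hypothetical.

```python
import math


def tx_power_dbm(raw: int) -> float:
    """Convert a raw TX power word (assumed LSB = 0.1 microwatt) to dBm."""
    if raw <= 0:
        return float("-inf")       # no light reported
    milliwatts = raw / 10_000.0    # 10,000 units of 0.1 microwatt = 1 mW
    return 10.0 * math.log10(milliwatts)


def temperature_c(raw: int) -> float:
    """Convert a raw temperature word (assumed 16-bit two's complement, LSB = 1/256 C)."""
    if raw >= 0x8000:
        raw -= 0x10000             # interpret as negative
    return raw / 256.0


if __name__ == "__main__":
    print(f"{tx_power_dbm(5000):.2f} dBm")
    print(f"{temperature_c(0x1900):.1f} C")
```

If a switch reports TX power in milliwatts for one module and dBm for another, converting both to the same unit before comparing avoids chasing a mismatch that is only a display difference.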

Cost and ROI note: balancing OEM vs third-party under lead-time risk

In practice, OEM optics often cost more but reduce compatibility risk with specific switch platforms. Third-party modules can be 20% to 50% cheaper, but the ROI depends on your validation capacity: if you cannot bench-test and monitor DOM, the “savings” can be wiped out by troubleshooting labor and RMA cycles. Total cost of ownership includes failure rates, downtime impact, and the engineering time needed for compatibility gating.
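The tradeoff can be made concrete with simple arithmetic. In the sketch below, only the 20% to 50% discount range comes from the discussion above; the prices, labor hours, rates, and failure counts are hypothetical placeholders, and `net_savings` is an illustrative helper name.

```python
def net_savings(oem_unit_price: float, discount: float, quantity: int,
                validation_hours: float, hourly_rate: float,
                extra_failures: int, cost_per_failure: float) -> float:
    """Purchase savings minus validation labor and expected failure handling."""
    purchase_savings = oem_unit_price * discount * quantity
    validation_cost = validation_hours * hourly_rate
    failure_cost = extra_failures * cost_per_failure
    return purchase_savings - validation_cost - failure_cost


if __name__ == "__main__":
    # All inputs other than the discount range are hypothetical placeholders.
    for discount in (0.20, 0.50):
        result = net_savings(oem_unit_price=100.0, discount=discount, quantity=100,
                             validation_hours=10, hourly_rate=50.0,
                             extra_failures=2, cost_per_failure=200.0)
        print(f"discount {discount:.0%}: net savings {result:.2f}")
```

For small orders the fixed validation cost dominates, so a negative result is a signal that buying OEM or drawing on an already validated alternate is the cheaper path.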

For resilience planning, it is often cheaper to keep a small pool of validated spares (including at least one alternate vendor SKU per optics class) than to maintain full-batch procurement during shortages. That aligns directly with building strategies that reduce mean time to repair (MTTR).

FAQ

What building strategies work best for multimode SR optics during shortages?

Use substitution with compatibility gates: verify wavelength class (~850 nm), reach for your fiber type (OM3/OM4), connector style (LC duplex), and DOM telemetry expectations. Then enforce bench and in-situ tests before scaling across the fabric.

Can I mix optics vendors in the same switch fabric?

Yes, if the switch supports the transceiver class and your platform does not enforce vendor-specific checks on the module or its DOM behavior. Validate DOM alarm mappings and optical power ranges to prevent false alarms or undetected degradation.

How do I confirm DOM support when documentation is incomplete?

Bring up a single link with the candidate module, then read DOM values (temperature, bias current, TX power) via the switch CLI or management plane. Compare stability trends against a known-good baseline under traffic and after thermal changes.