AI infrastructure demands fast, reliable connectivity between GPUs, storage, and high-speed switches. The wrong transceiver can cause link instability, higher latency, or costly RMA cycles. This buying guide helps network and field engineers choose the right optics by matching data rate, reach, connector type, DOM support, and vendor compatibility to real deployment constraints. You will leave with a practical checklist and troubleshooting playbook.

Start with link math: map AI infrastructure traffic to optical reach

Before you compare part numbers, translate your topology into link requirements. Typical AI clusters use leaf-spine fabrics in which ToR (leaf) switches connect to spine switches, with additional links to storage and out-of-band management. For each hop, determine the target line rate (for example 100G or 400G), the required reach (in meters), and the fiber type (OM3, OM4, OM5, or single-mode).

Measure the actual fiber run, not the spreadsheet

In the field, fiber runs rarely match planning docs. Measure end-to-end distance including patch panels, slack loops, and trunk routing. Add margin for connector losses and aging; a common operational approach is to keep links within 80 to 90 percent of the transceiver’s specified reach when you expect frequent moves or cleaning variability.
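The margin rule above can be sketched as a small check. This is a minimal illustration, not a standard formula: the function names and the default 0.85 utilization (the midpoint of the 80 to 90 percent range) are assumptions, and the rated reach should come from the module datasheet for your fiber type.

```python
def max_planned_run_m(rated_reach_m: float, utilization: float = 0.85) -> float:
    """Longest measured run to accept for a given rated reach.

    utilization is the fraction of rated reach you allow yourself to use
    (0.80 to 0.90 in the guidance above; 0.85 is an assumed default).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return rated_reach_m * utilization


def run_fits(measured_m: float, rated_reach_m: float, utilization: float = 0.85) -> bool:
    """True if the measured end-to-end run stays inside the derated reach."""
    return measured_m <= max_planned_run_m(rated_reach_m, utilization)


if __name__ == "__main__":
    # Assumed example: optics rated for 100 m on the installed fiber.
    print(run_fits(70, 100))   # True: 70 m is inside the derated limit
    print(run_fits(92, 100))   # False: 92 m exceeds the derated limit
```

Feed it the measured distance (patch panels and slack loops included), not the planned one.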

Match optics families to your switch port standard

AI infrastructure frequently uses pluggable optics such as SFP28, QSFP28, QSFP-DD, and OSFP. Ensure the switch port supports the exact optics form factor and lane configuration. For example, 100G can use 4x25G lanes in QSFP28, while 400G often uses 8x50G lanes in QSFP-DD or OSFP variants depending on vendor.
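As a rough sketch, the lane configurations mentioned above can be kept in a small lookup so a planned port speed maps to candidate form factors. The class and function names are illustrative, the SFP28 entry (a single 25G lane) is added for completeness, and the switch vendor's optics matrix remains the authority on what a port actually accepts.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LaneConfig:
    form_factor: str
    lanes: int
    gbps_per_lane: int

    @property
    def total_gbps(self) -> int:
        return self.lanes * self.gbps_per_lane


# Lane configurations from the text, plus SFP28 as a single 25G lane.
CONFIGS = [
    LaneConfig("SFP28", 1, 25),
    LaneConfig("QSFP28", 4, 25),
    LaneConfig("QSFP-DD", 8, 50),
    LaneConfig("OSFP", 8, 50),
]


def form_factors_for(rate_gbps: int) -> List[str]:
    """Form factors in CONFIGS whose lanes add up to the requested rate."""
    return [c.form_factor for c in CONFIGS if c.total_gbps == rate_gbps]


if __name__ == "__main__":
    print(form_factors_for(100))  # ['QSFP28']
    print(form_factors_for(400))  # ['QSFP-DD', 'OSFP']
```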

Specs that matter: wavelength, reach, power, DOM, and temperature

Optics are not interchangeable just because the port says “100G” or “400G.” Engineers must validate wavelength (for SR vs LR), reach class, receive sensitivity, transmit power, and thermal limits. The most operationally relevant parameters for AI infrastructure are optical interface type, maximum operating temperature, and whether digital optical monitoring (DOM) is supported.

| Transceiver example | Data rate | Optical type | Typical wavelength | Reach target | Connector | DOM | Operating temp (typ.) |
|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G | Multimode SR | 850 nm | ~300 m on OM3 | LC | Yes | 0 to 70 °C (varies by revision) |
| Finisar FTLX8571D3BCL | 10G | Multimode SR | 850 nm | ~400 m on OM4 | LC | Yes | 0 to 70 °C (vendor datasheet) |
| FS.com SFP-10GSR-85 | 10G | Multimode SR | 850 nm | ~400 m on OM4 | LC | Yes (commonly) | 0 to 70 °C (varies by grade) |

When you scale to 25G/50G/100G/400G, the same principles apply but the tolerance stack tightens. A few dB of margin matters in dense AI infrastructure because airflow and dust exposure can shift module temperature and optics cleanliness over time.

Pro Tip: In many AI racks, the biggest failure driver is not “bad optics,” but connector cleanliness plus thermal cycling. If you see intermittent link flaps after maintenance windows, re-clean LC/MPO terminations and verify transceiver DOM temperature and bias current trends before replacing modules.
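One way to look at DOM trends is to fit a straight line through periodic readings and inspect the slope. The sketch below assumes you have already exported (hours, value) samples from your switch telemetry; the function names and the drift threshold are hypothetical and should be tuned against a known-good module of the same part number.

```python
from typing import List, Tuple


def trend_per_hour(samples: List[Tuple[float, float]]) -> float:
    """Least-squares slope of (hours, value) samples, in value units per hour."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    num = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("timestamps must not all be equal")
    return num / den


def is_drifting(samples: List[Tuple[float, float]], max_slope: float) -> bool:
    """True if the fitted slope magnitude exceeds max_slope (assumed threshold)."""
    return abs(trend_per_hour(samples)) > max_slope


if __name__ == "__main__":
    # Hypothetical laser bias readings in mA, taken one hour apart.
    bias_ma = [(0, 6.0), (1, 6.5), (2, 7.0)]
    print(trend_per_hour(bias_ma))                # 0.5
    print(is_drifting(bias_ma, max_slope=0.1))    # True
```

The same helper works for module temperature or received power; only the threshold changes.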

Compatibility checklist: avoid surprises with switch firmware and optics standards

Engineers succeed by treating transceiver selection as a compatibility project, not a catalog purchase. Start with the switch vendor’s optics matrix, then confirm the module meets the correct electrical and optical standard for the port.

- Data rate and lane mapping: confirm the module supports the intended speed (for example 100G or 400G) and lane configuration.

- Optics standard and form factor: verify QSFP28 vs QSFP-DD vs OSFP and that the switch port expects that exact type.

- Fiber type and reach: OM3/OM4/OM5 for SR; single-mode for LR/ER; confirm reach at your measured distance.

- DOM and monitoring: ensure the module exposes DOM fields your network OS expects (temperature, laser bias, received power).

- Operating temperature grade: choose modules rated for the environment; many data centers run hot at the top of the rack.

- Switch firmware behavior: some platforms apply vendor-specific compatibility checks; validate with a small pilot.

- Vendor lock-in risk: OEM optics may be pricier, while third-party can be cheaper but sometimes have firmware quirks.

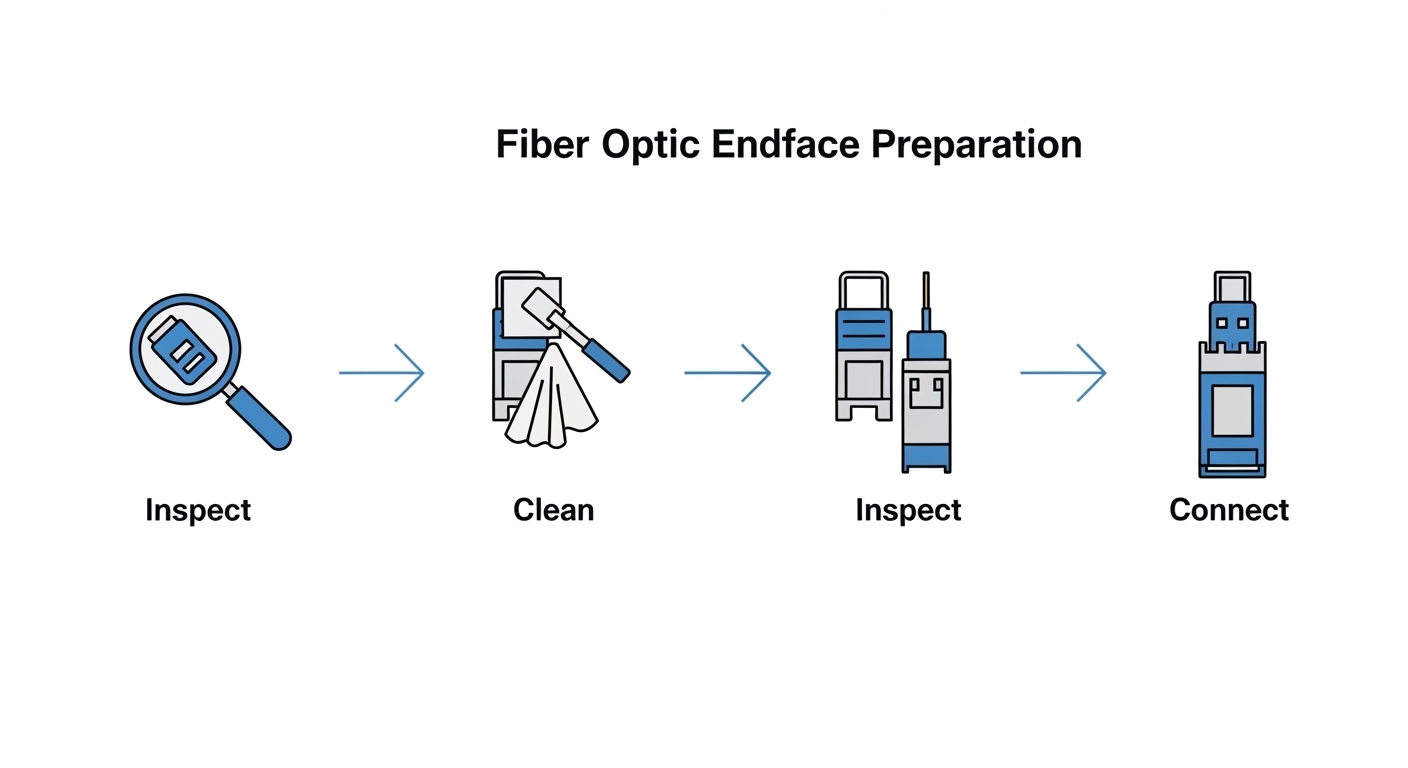

- Connector polish and cleaning process: confirm the termination type (LC or MPO) and enforce cleaning SOPs.
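The mechanical parts of the checklist above can be expressed as a pre-order check. This is a sketch under stated assumptions: the `Module` and `Port` records, their field names, and the part number are hypothetical, and the reach values in the example are illustrative of a 100G SR4-class module. Firmware behavior and vendor compatibility checks cannot be captured this way and still need a pilot.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Module:
    part_number: str
    form_factor: str                 # e.g. "QSFP28"
    rate_gbps: int
    reach_m_by_fiber: Dict[str, float]  # rated reach per supported fiber type
    has_dom: bool
    max_case_temp_c: float


@dataclass(frozen=True)
class Port:
    form_factor: str
    rate_gbps: int
    fiber: str                       # installed fiber, e.g. "OM4"
    measured_run_m: float
    worst_case_temp_c: float


def compatibility_issues(module: Module, port: Port) -> List[str]:
    """Return human-readable reasons the module does not suit the port."""
    issues = []
    if module.form_factor != port.form_factor:
        issues.append("form factor mismatch")
    if module.rate_gbps != port.rate_gbps:
        issues.append("data rate mismatch")
    reach = module.reach_m_by_fiber.get(port.fiber)
    if reach is None:
        issues.append("fiber type not supported")
    elif port.measured_run_m > reach:
        issues.append("measured run exceeds rated reach")
    if not module.has_dom:
        issues.append("no DOM support")
    if port.worst_case_temp_c > module.max_case_temp_c:
        issues.append("temperature grade too low")
    return issues


if __name__ == "__main__":
    sr4 = Module("EXAMPLE-100G-SR4", "QSFP28", 100,
                 {"OM3": 70, "OM4": 100}, True, 70)
    uplink = Port("QSFP28", 100, "OM4", 70, 55)
    print(compatibility_issues(sr4, uplink))  # []
```

An empty list means only that the module passes the paper checks; it is the entry ticket to the pilot, not a substitute for it.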

Reference points include IEEE Ethernet physical layer guidance such as IEEE 802.3 and vendor datasheets for specific transceiver families. For standards background, see [Source: IEEE 802.3]. For operational optics behavior, consult each vendor’s transceiver datasheet and compatibility notes.

Real-world deployment scenario: 48-port 100G ToR to spine in an AI cluster

In a two-tier leaf-spine data center topology, a team deploys 48-port 100G ToR switches that uplink to 12-port 400G spine switches. Each ToR has 32 active 100G server links and 16 100G uplinks, aggregated at the spine into four 400G bundles. The fiber runs from ToR to spine average 70 m through patch panels and cable trays, using OM4 multimode for SR links. Engineers standardize on QSFP28 SR optics rated for OM4 reach above the expected distance and keep a maintenance margin for re-termination events.

Operationally, they implement a rollout plan: install optics in 4 racks first, verify link stability for 72 hours, then expand. They monitor DOM received power and module temperature via the switch telemetry, and they enforce a cleaning SOP before any insertion after downtime. This reduces the probability that a marginal link becomes a recurring incident during training jobs.
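A pilot like this is easier to enforce with an explicit pass/fail gate. The sketch below assumes per-link observations have already been collected from switch telemetry; the record fields, port names, and the received-power and temperature thresholds are hypothetical placeholders, with only the 72-hour soak taken from the scenario.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class LinkObservation:
    port: str
    hours_observed: float
    flap_count: int
    min_rx_dbm: float    # lowest DOM received power seen during the soak
    max_temp_c: float    # highest DOM module temperature seen


def pilot_failures(
    links: Iterable[LinkObservation],
    soak_hours: float = 72,        # from the rollout plan above
    rx_floor_dbm: float = -8.0,    # assumed; take from the module datasheet
    temp_ceiling_c: float = 65.0,  # assumed; leave headroom below the rating
) -> Dict[str, List[str]]:
    """Map each failing port to the reasons it failed the pilot gate."""
    failures: Dict[str, List[str]] = {}
    for link in links:
        reasons = []
        if link.hours_observed < soak_hours:
            reasons.append("soak incomplete")
        if link.flap_count > 0:
            reasons.append("link flapped")
        if link.min_rx_dbm < rx_floor_dbm:
            reasons.append("rx power below floor")
        if link.max_temp_c > temp_ceiling_c:
            reasons.append("temperature above ceiling")
        if reasons:
            failures[link.port] = reasons
    return failures


if __name__ == "__main__":
    observed = [
        LinkObservation("Ethernet1/1", 72, 0, -4.2, 48.0),
        LinkObservation("Ethernet1/2", 72, 2, -9.1, 51.0),
    ]
    print(pilot_failures(observed))
    # {'Ethernet1/2': ['link flapped', 'rx power below floor']}
```

Expansion beyond the first racks proceeds only when the returned mapping is empty.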

Common mistakes and troubleshooting tips in AI infrastructure optics

Optical failures are often avoidable when you follow a consistent workflow. Below are frequent field issues, their root causes, and fast solutions.

Link flaps after “successful” insertion

Root cause: contaminated connectors or damaged ferrules leading to variable insertion loss. Even a small amount of dust can shift receive power below threshold during temperature swings. Solution: clean connectors with the correct method and inspect with a scope; verify DOM received power stability over time.

“No module detected” or port down after firmware updates

Root cause: switch firmware enforces compatibility checks or expects specific DOM behavior. Some third-party optics may pass basic electrical checks but fail platform validation. Solution: consult the switch vendor optics compatibility list and test the exact module part number against your firmware version in a pilot.

Range disappointment: SR used beyond practical loss budget

Root cause: the planned distance ignored patch cords, aging, or extra splices. SR optics have a finite power budget; budget overrun shows up as high error rates and retransmits. Solution: re-measure the full channel, calculate link loss including connectors, and move to higher-reach media or single-mode optics if needed.
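The link-loss calculation can be sketched as follows. All numeric values in the example are illustrative assumptions (3.0 dB/km fiber attenuation, 0.5 dB per mated connector pair, a 1.9 dB channel budget); substitute the figures from your cabling test reports and the transceiver datasheet.

```python
def channel_loss_db(
    length_m: float,
    fiber_db_per_km: float,
    connector_pairs: int,
    loss_per_pair_db: float,
    splices: int = 0,
    loss_per_splice_db: float = 0.0,
) -> float:
    """Estimated end-to-end channel loss: fiber + connectors + splices."""
    fiber_loss = length_m / 1000 * fiber_db_per_km
    return (fiber_loss
            + connector_pairs * loss_per_pair_db
            + splices * loss_per_splice_db)


def margin_db(budget_db: float, loss_db: float) -> float:
    """Remaining margin; a negative value means the channel is over budget."""
    return budget_db - loss_db


if __name__ == "__main__":
    # Assumed inputs: 70 m run, two mated connector pairs, no splices.
    loss = channel_loss_db(70, 3.0, connector_pairs=2, loss_per_pair_db=0.5)
    print(f"loss   = {loss:.2f} dB")                   # loss   = 1.21 dB
    print(f"margin = {margin_db(1.9, loss):.2f} dB")   # margin = 0.69 dB
```

In this example the connectors account for most of the loss, which is why each extra patch panel matters more than a few extra meters of fiber on short multimode runs.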

Thermal mismatch in high-density racks

Root cause: optics rated for a narrower temperature range placed in hot zones with insufficient airflow. Laser bias current can drift, causing higher BER. Solution: use modules with the appropriate temperature grade and validate airflow paths; check DOM temperature trends.

Cost and ROI: OEM vs third-party transceivers for AI infrastructure

Pricing varies by speed and reach, but a realistic budgeting approach helps procurement. As a rule of thumb, OEM optics often cost more but can reduce compatibility risk and shorten support cycles. Third-party modules can cut initial cost, but you should factor pilot testing time, potential warranty handling, and any firmware compatibility quirks.

- 10G SR LC: often tens of dollars to low hundreds depending on grade and brand.

- 25G/100G SR: typically higher; often in the hundreds of dollars per module depending on grade and brand.

- 400G optics: can be significantly more; TCO impact is dominated by failure rate, downtime costs, and support turnaround.

For ROI, count the indirect effects as well: stable optics reduce link retries and avoid maintenance disruptions during training windows, which also avoids spending power and cooling on stalled jobs. The cheapest module is rarely the cheapest in practice if it increases incident volume.
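A simple expected-cost model makes the OEM versus third-party trade-off concrete. This is a sketch only: the formula is an assumed simplification, and every number in the example (prices, failure rates, incident cost, validation cost) is hypothetical and should be replaced with your own quotes and incident history.

```python
def expected_cost_per_module(
    unit_price: float,
    annual_failure_rate: float,   # expected incidents per module per year
    incident_cost: float,         # downtime + labor + RMA handling per incident
    years: float,
    validation_cost: float = 0.0, # one-time pilot/validation effort
    modules: int = 1,             # modules the validation cost is spread over
) -> float:
    """Purchase price plus expected incident cost plus amortized validation."""
    if modules < 1:
        raise ValueError("modules must be at least 1")
    expected_incidents = annual_failure_rate * years
    return (unit_price
            + expected_incidents * incident_cost
            + validation_cost / modules)


if __name__ == "__main__":
    # Hypothetical figures for a 3-year horizon and a 100-module order.
    oem = expected_cost_per_module(400, 0.01, 2000, 3)
    third_party = expected_cost_per_module(150, 0.03, 2000, 3,
                                           validation_cost=5000, modules=100)
    print(f"OEM:         {oem:.0f}")
    print(f"Third-party: {third_party:.0f}")
```

The result is sensitive to the failure rate and incident cost, so those are the inputs worth measuring during the pilot rather than guessing.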

FAQ: choosing optics for AI infrastructure

Which transceiver type should I buy for AI infrastructure: SFP, QSFP28, or QSFP-DD?

Buy based on your switch port form factor and intended speed. SFP28 is common for 25G links and QSFP28 for 100G links (or 4x25G breakout), while QSFP-DD and OSFP are typical for 400G-class deployments. Always confirm with the switch vendor optics matrix.

How do I choose between OM4 multimode SR and single-mode LR for AI infrastructure?

Choose SR when your measured distance fits the OM4 channel loss budget with margin and your cabling plant is multimode-ready. Choose single-mode when you need longer reach, higher reliability over distance, or when you anticipate frequent expansions beyond multimode limits.

Do I need DOM support for monitoring in AI infrastructure?

Yes, for operational visibility. DOM enables monitoring of temperature, bias current, and received optical power, which helps you detect degradation before hard failures. Ensure your switch OS reads the DOM fields correctly for the specific module.

Are third-party transceivers safe for production?

They can be, but only after validation against your exact switch model and firmware. Run a pilot in a low-risk zone, verify link stability, and compare DOM readings and error counters with OEM optics.

What is the fastest troubleshooting step when a link comes up then drops?

Clean and inspect the connectors first, then review DOM received power and temperature trends. If received power is marginal or fluctuating, connector contamination and thermal cycling are the most common root causes.

Where can I verify standards for Ethernet optics used in AI infrastructure?

Use IEEE 802.3 for Ethernet physical layer context, then rely on vendor transceiver datasheets for exact reach, power budgets, and DOM behavior. For authoritative compatibility notes, consult your switch manufacturer documentation. [Source: IEEE 802.3]

If you want to reduce downtime during scale-out, pair this buying checklist with a disciplined optics lifecycle process: validate in a pilot, standardize part numbers, and monitor DOM. Next, explore a fiber cleaning SOP for data center optics to keep links stable through maintenance cycles.

Author bio: Field-focused network writer who has deployed and validated high-speed optics in leaf-spine and AI cluster fabrics. I translate vendor specs into practical acceptance tests, monitoring, and troubleshooting workflows.