In a live 5G rollout, the first outage is often caused not by radio but by timing. When timing optics drift, jitter rises and synchronization can collapse across gNodeBs, transport, and aggregation. This article helps network engineers, field technicians, and optical planners validate telecom timing optics on links that carry ITU-T G.8273.2 Class C timing, focusing on transceiver choices, power budgets, and operational pitfalls.

Why telecom timing optics decide whether Class C timing holds

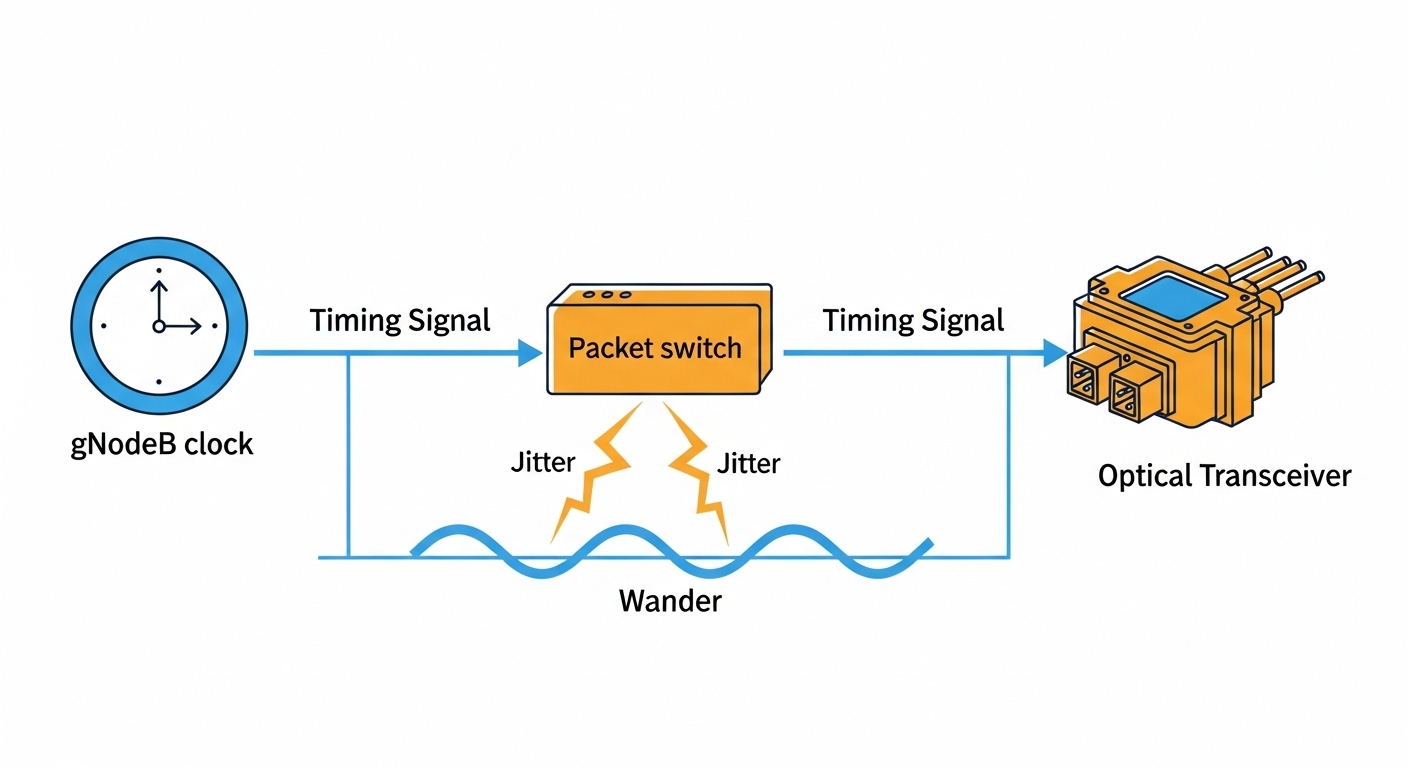

ITU-T G.8273.2 specifies the timing characteristics of telecom boundary clocks and telecom time slave clocks; Class C is one of its tighter accuracy classes and bounds the time error a clock may add under realistic noise and wander. In practice, the optical transceiver is not merely a “light source”; it is the interface that determines optical power stability, receiver sensitivity margin, and tolerance to link impairments. If a module’s temperature behavior, transmitter bias control, or DOM-reported parameters deviate from expectations, time error can accumulate downstream.

Engineers typically align optics to IEEE 802.3 physical layer constraints for the selected interface rate, then map timing expectations to the transport timing chain. For 5G, this often means SyncE and/or PTP (IEEE 1588) running alongside packet forwarding, with clock recovery dependent on clean signals. Vendor datasheets and the module’s compliance claims matter, but your real proof is how the link behaves across temperature cycles and connector states.

G.8273.2 Class C and the transceiver: the practical engineering link

G.8273.2 Class C limits the time error that a boundary clock or time slave clock may contribute to a packet timing chain. The standard does not name a specific wavelength or module vendor, but meeting its limits in the field presumes a physical layer that does not introduce avoidable wander, bursts of packet loss, or link-induced jitter that propagates into timing distribution. Engineers therefore treat optics selection as part of the timing system design, not an afterthought.
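To make the Class C target concrete, the sketch below checks a series of time-error samples against the limits commonly cited for G.8273.2 Class C (max|TE| of 30 ns and constant time error within ±10 ns). The function names are hypothetical, the cTE estimate is a plain mean over whatever window you pass in, and the limit values should be confirmed against the edition of the recommendation you are working to.

```python
from statistics import fmean

# Commonly cited Class C limits (assumption: confirm against your edition
# of ITU-T G.8273.2 and your test equipment's measurement method).
MAX_ABS_TE_NS = 30.0   # maximum absolute time error
CTE_LIMIT_NS = 10.0    # constant time error, applied as +/- limit


def summarize_time_error(te_ns):
    """Return (max|TE|, cTE estimate) in nanoseconds for a list of samples."""
    if not te_ns:
        raise ValueError("need at least one time-error sample")
    max_abs_te = max(abs(x) for x in te_ns)
    # Simplification: cTE is estimated as the mean of the supplied window.
    cte = fmean(te_ns)
    return max_abs_te, cte


def within_class_c(te_ns):
    """True if the samples stay inside both assumed Class C limits."""
    max_abs_te, cte = summarize_time_error(te_ns)
    return max_abs_te <= MAX_ABS_TE_NS and abs(cte) <= CTE_LIMIT_NS


if __name__ == "__main__":
    samples = [5.0, -3.0, 8.0, 12.0]  # illustrative values only
    print(summarize_time_error(samples), within_class_c(samples))
```

A check like this is only as good as the measurement behind it; it is meant for sanity-checking exported tester data, not as a substitute for a conformance test.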

In deployment, the optical link frequently carries SyncE or transports PTP over Ethernet. If the link intermittently degrades (because of marginal power, dirty connectors, or a transceiver with weak receiver margin), sync recovery can suffer. For field work, that means you should verify optical budget, link margin, and module health via DOM (Digital Optical Monitoring) before you declare timing readiness.

Operational parameters you should measure, not just assume

- Receive optical power (dBm) at commissioning and after maintenance windows; log it against the vendor’s recommended operating range.

- DOM readings for Tx bias current, Tx power, Rx power, and temperature; confirm they remain within tolerance across the cabinet’s thermal cycle.

- BER / link error counters on the switch or line card; correlate spikes with timing alarms.

- Connector cleanliness inspections; even “works most days” links can create timing instability under traffic bursts.
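As a minimal sketch of the DOM check in the list above, the snippet below compares one DOM reading against a table of limits and reports which fields are missing or out of range. The field names and every limit value are hypothetical placeholders; real limits should come from the module datasheet or from the alarm and warning thresholds the module itself reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical limits for illustration only; replace with datasheet values.
LIMITS = {
    "temperature_c": Limit(0.0, 70.0),
    "tx_bias_ma": Limit(2.0, 12.0),
    "tx_power_dbm": Limit(-7.3, -1.0),
    "rx_power_dbm": Limit(-9.9, -1.0),
}


def out_of_range(reading, limits=LIMITS):
    """Return sorted names of DOM fields that are missing or outside limits."""
    violations = []
    for name, limit in limits.items():
        value = reading.get(name)
        if value is None or not (limit.low <= value <= limit.high):
            violations.append(name)
    return sorted(violations)


if __name__ == "__main__":
    reading = {
        "temperature_c": 48.5,
        "tx_bias_ma": 6.8,
        "tx_power_dbm": -2.4,
        "rx_power_dbm": -10.6,  # below the assumed floor
    }
    print(out_of_range(reading))
```

Treating a missing field as a violation is a deliberate choice here: a module that stops reporting a value deserves the same attention as one that reports a bad value.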

Pro Tip: In field troubleshooting, the most convincing evidence is not a single “pass/fail” optics test, but a timeline. Record Rx power and interface error counters for at least one full diurnal temperature swing; timing problems that appear only during warmer hours often trace back to transmitter bias drift or marginal link budget rather than the clock algorithm itself.
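One way to build the timeline described in the tip is to line up interface error counter increases with timing alarm timestamps. The sketch below assumes you have already exported two series: cumulative error counter samples and alarm times. The function names and the five-minute matching window are assumptions to adapt to your own polling interval.

```python
from datetime import datetime, timedelta


def error_spikes(samples, min_delta=1):
    """Return timestamps where a cumulative error counter increased.

    samples: list of (timestamp, cumulative_count) sorted by time.
    Decreases (counter reset or wrap) are ignored rather than guessed at.
    """
    spikes = []
    for (_, prev), (ts, cur) in zip(samples, samples[1:]):
        if cur - prev >= min_delta:
            spikes.append(ts)
    return spikes


def alarms_near_spikes(alarm_times, spike_times, window=timedelta(minutes=5)):
    """Return the alarms that fall within `window` of any error spike."""
    return [
        alarm for alarm in alarm_times
        if any(abs(alarm - spike) <= window for spike in spike_times)
    ]


if __name__ == "__main__":
    t0 = datetime(2024, 7, 1, 12, 0)  # illustrative start time
    counters = [
        (t0, 0),
        (t0 + timedelta(minutes=10), 0),
        (t0 + timedelta(minutes=20), 7),
        (t0 + timedelta(minutes=30), 7),
    ]
    alarms = [t0 + timedelta(minutes=22), t0 + timedelta(minutes=50)]
    spikes = error_spikes(counters)
    print(alarms_near_spikes(alarms, spikes))
```

Alarms that never line up with a counter increase point away from the optics and toward the clock configuration or the packet path.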

Comparing timing-capable optics options for 5G transport

For 5G fronthaul and transport, you will commonly choose among the SFP/SFP+/SFP28 and QSFP/QSFP28 families depending on interface rate and switch port density. Timing optics selection should consider wavelength (for reach and fiber type), connector style (LC vs MPO), and the module’s temperature class and optical control-loop behavior. The safest path is to match the module to the switch vendor’s optics compatibility list and to confirm the module’s timing-related performance claims in its datasheet.

The table below compares typical candidates engineers evaluate for Class C timing deployments. Exact values depend on the module SKU, vendor calibration, and host design, so treat this as a planning baseline and verify with your specific part numbers and switch platform.

| Module type | Wavelength | Reach (typ.) | Data rate | Connector | Operating temp (case) | Typical power draw | Common part examples |
|---|---|---|---|---|---|---|---|
| SFP+ 10G SR | 850 nm | ~300 m (OM3), ~400 m (OM4) | 10G | LC | 0 to 70 °C (commercial, typical) | ~0.8 to 1.5 W | Cisco SFP-10G-SR; Finisar FTLX8571D3BCL; FS.com SFP-10GSR-85 |
| SFP28 25G SR | 850 nm | ~70 m (OM3), ~100 m (OM4) | 25G | LC | 0 to 70 °C (commercial, typical) | ~1.0 to 2.0 W | Vendor-specific 25G SR modules (verify DOM and compatibility) |
| QSFP28 100G SR4 | 850 nm (4 lanes) | ~70 m (OM3), ~100 m (OM4) | 100G | MPO | 0 to 70 °C (often) | ~2.5 to 3.5 W | QSFP28 SR4 equivalents from major OEMs |
| Single-mode long reach (LR/ER/ZR, if used) | 1310 nm / 1550 nm bands | 10 km to 80 km+ (varies) | 10G/25G/100G+ | Varies (fiber type depends) | Varies | Higher (system-dependent) | Platform-specific long-reach modules; coherent optics only at the higher rates and longer spans |

For Class C timing, the most important practical difference is not only reach, but margin stability and repeatability under thermal stress. A module that barely meets Rx power at commissioning can fail timing indirectly by creating sporadic link errors during temperature peaks or after connector re-mating.

Selection criteria checklist for telecom timing optics in the field

When engineers select optics for timing-sensitive 5G transport, the goal is deterministic behavior: stable optical output, stable receiver margin, and predictable DOM telemetry. Work through this checklist in order during procurement and commissioning.

- Distance and fiber grade: confirm OM3/OM4/OS2, measured attenuation, and connector loss; compute an optical power budget with safety margin.

- Switch compatibility: verify the exact transceiver SKU appears on the host switch vendor’s validated optics list; mismatched firmware or diagnostics can cause link resets.

- DOM support and thresholds: ensure you can read Tx power, Rx power, bias current, and temperature; confirm alert thresholds match your monitoring system.

- Data rate and modulation match: ensure IEEE 802.3 lane rate and optics type align with the port’s electrical interface; do not rely on “it lights up” tests.

- Operating temperature range: choose modules rated for your cabinet environment, not the mild conditions on a bench.

- Power and thermal budget: in dense deployments, transceiver heat can alter bias currents; validate with measured airflow and inlet temperatures.

- Vendor lock-in risk: balance OEM parts with third-party availability, but require the same DOM telemetry and compatibility; keep spares with known behavior.

- Timing claims vs operational reality: prefer modules with documented performance in timing-sensitive deployments; still validate with your network’s synchronization alarms.
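The first checklist item, the optical power budget, is simple arithmetic: available budget (minimum Tx power minus receiver sensitivity) less the summed link losses and a safety allowance. The sketch below shows that calculation. All input values in the example are illustrative assumptions (10G SR-style power levels, 3.0 dB/km multimode attenuation at 850 nm, 0.5 dB per mated connector), and it does not model dispersion or other power penalties.

```python
def link_loss_db(length_km, fiber_db_per_km, connectors, connector_loss_db,
                 splices=0, splice_loss_db=0.1):
    """Sum fiber attenuation, connector loss, and splice loss in dB."""
    return (length_km * fiber_db_per_km
            + connectors * connector_loss_db
            + splices * splice_loss_db)


def margin_db(tx_min_dbm, rx_sens_dbm, loss_db, safety_db=0.0):
    """Remaining margin after link loss and an optional safety allowance."""
    budget_db = tx_min_dbm - rx_sens_dbm
    return budget_db - loss_db - safety_db


if __name__ == "__main__":
    # Illustrative inputs only; use measured loss and datasheet values.
    loss = link_loss_db(length_km=0.12, fiber_db_per_km=3.0,
                        connectors=4, connector_loss_db=0.5)
    print(f"link loss: {loss:.2f} dB")
    print(f"margin, no safety allowance: {margin_db(-7.3, -9.9, loss):.2f} dB")
    print(f"margin, 1 dB safety allowance: "
          f"{margin_db(-7.3, -9.9, loss, safety_db=1.0):.2f} dB")
```

With these illustrative inputs the link loss is 2.36 dB against a 2.6 dB budget, so the margin is about 0.24 dB before any safety allowance and negative once 1 dB is reserved. A short multimode run with four connector pairs can therefore already be marginal on worst-case figures.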

Real-world deployment scenario: 5G transport with Class C timing validation

In a 3-tier data center leaf-spine topology supporting a 5G transport domain, a carrier deployed 48-port 10G ToR switches feeding aggregation, with uplinks to a regional timing and transport cluster. Each site used SyncE over Ethernet plus PTP for fine timing, with optics spanning 120 meters of OM4 fiber from edge cabinets to aggregation racks. Engineers installed SFP+ 10G SR modules in the ToR ports and commissioned the links with measured Rx power of about -2 to -6 dBm at 25 °C, leaving margin above the vendor’s minimum receiver sensitivity. During a summer ramp, they observed timing alarms only when cabinet inlet temperature rose above 45 °C. DOM logs showed Tx bias trending upward and occasional spikes in the link error counters, which correlated with elevated packet delay variation.

The fix was operational and optical: they cleaned and re-terminated two LC pairs, then improved the link margin by replacing two marginal fibers with lower-loss jumpers. After re-commissioning and a 24-hour soak, timing alarms disappeared and error counters stayed at baseline. The lesson was clear: even if a transceiver “meets spec” on paper, the timing chain is sensitive to link stability and thermal drift.

Common mistakes and troubleshooting tips for timing optics

Timing failures often masquerade as synchronization bugs, but the root cause is frequently optical. Below are concrete mistakes seen in field work, with likely causes and remedies.

“It passes link up” but timing still fails

Root cause: Receiver power is within range at room temperature, but margin collapses during warm operation, causing sporadic errors that disturb timing recovery. Solution: measure Rx power and DOM telemetry across a temperature swing; aim for a conservative optical budget that maintains margin at the warmest inlet temperature.
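To estimate whether margin survives the warmest inlet temperature, one rough approach is to fit a straight line to logged Rx power against temperature and project it forward. The sketch below does that with an ordinary least-squares fit. Treating the drift as linear is an assumption (real modules may not behave that way), and the function names and sample values are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need two or more paired samples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("temperature samples must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def projected_margin_db(temps_c, rx_dbm, warm_temp_c, rx_sens_dbm):
    """Projected Rx power at warm_temp_c minus receiver sensitivity."""
    slope, intercept = fit_line(temps_c, rx_dbm)
    return (slope * warm_temp_c + intercept) - rx_sens_dbm


if __name__ == "__main__":
    temps = [25.0, 35.0, 45.0]   # logged inlet temperatures (illustrative)
    rx = [-6.0, -6.5, -7.0]      # logged Rx power in dBm (illustrative)
    margin = projected_margin_db(temps, rx, warm_temp_c=55.0, rx_sens_dbm=-9.9)
    print(f"projected margin at 55 C: {margin:.2f} dB")
```

A projection like this is a prompt for where to measure next, not a replacement for a soak at the actual temperature.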

Dirty connectors after maintenance

Root cause: Connector contamination introduces micro-reflections and insertion loss changes, leading to intermittent BER increases during traffic bursts. Solution: enforce a cleaning and inspection workflow. Inspect with a fiber scope before re-mating, and document the cleaning method and connector type.

Incompatible transceivers with partial diagnostics

Root cause: Third-party modules may light up but report DOM values differently, or the host may throttle or reset due to unsupported behaviors. Solution: confirm DOM compatibility and thresholds on the exact switch model; validate with vendor compatibility lists and run error counter monitoring under load.

Wrong fiber type or underestimated patch loss

Root cause: OM3/OM4 assumptions differ from what is actually installed, and patch cords add unexpected attenuation. Solution: verify fiber grade and measure end-to-end loss; update the link budget and replace any high-loss segments.

Cost, ROI, and what to budget for timing optics

In many networks, OEM SFP/SFP+ and QSFP modules cost more than third-party equivalents. Typical street pricing for a 10G optics module runs roughly $60 to $250 depending on brand and spec, and higher-rate families cost more. Third-party modules can reduce upfront cost, but the total cost of ownership (TCO) depends on failure rates, swap cycles, and the engineering time spent chasing intermittent timing faults. If your maintenance windows are expensive, spending more on validated optics with verified compatibility can be cheaper than repeated truck rolls.

Also include the cost of monitoring: DOM-capable optics plus telemetry integration lets you detect bias drift before it becomes a timing incident. For ROI, quantify the avoided downtime risk and the reduced troubleshooting time, not only the per-module purchase price.
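A back-of-the-envelope model can make that comparison explicit. The sketch below adds purchase cost to the expected cost of incidents over a planning period. Every input is a hypothetical placeholder: only the $60 and $250 unit prices echo the range quoted above, while the failure rates, truck-roll cost, and labor figures are invented for illustration and should be replaced with your own data.

```python
def total_cost(unit_price, modules, annual_failure_rate, years,
               truck_roll_cost, hours_per_incident, hourly_rate):
    """Purchase cost plus expected incident cost over the period."""
    expected_incidents = modules * annual_failure_rate * years
    cost_per_incident = (unit_price                  # replacement module
                         + truck_roll_cost           # site visit
                         + hours_per_incident * hourly_rate)
    return modules * unit_price + expected_incidents * cost_per_incident


if __name__ == "__main__":
    # Hypothetical inputs; substitute measured failure rates and real costs.
    common = dict(modules=100, years=5, truck_roll_cost=800.0,
                  hours_per_incident=6.0, hourly_rate=100.0)
    low_price = total_cost(unit_price=60.0, annual_failure_rate=0.05, **common)
    high_price = total_cost(unit_price=250.0, annual_failure_rate=0.01, **common)
    print(f"lower-priced option:  {low_price:,.0f}")
    print(f"higher-priced option: {high_price:,.0f}")
```

The outcome depends entirely on the failure rates you plug in, which is the point: the per-module price alone does not decide the comparison.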

FAQ

What makes telecom timing optics different from regular data transceivers?

In timing-sensitive networks, the optics must behave predictably under thermal and operational stress. That means stable transmitter bias control, reliable receiver sensitivity margin, and trustworthy DOM telemetry so you can correlate optical health to synchronization alarms.

Do I need a specific wavelength to meet G.8273.2 Class C timing?

No. G.8273.2 Class C sets time error limits for boundary and time slave clocks; it does not mandate a wavelength. However, wavelength choice affects reach and margin; if the optical link becomes unstable, timing can degrade indirectly through increased errors and delay variation.

Are third-party modules acceptable for Class C timing deployments?

They can be, but only if they are validated for your exact host switch platform and provide consistent DOM behavior. Require documented compatibility, then validate in your environment with measured Rx power and error counters across temperature.

How should I test optics before declaring timing readiness?

Commission with measured optical power at the far end, then monitor DOM readings and interface error counters under realistic load. For timing, run a soak across at least one full thermal cycle and watch for synchronization alarms tied to link events.

What are the most common symptoms of optics-related timing problems?

You may see timing alarms, increased packet delay variation, and intermittent sync instability that correlates with temperature or link re-mating. Often the optical error counters show brief spikes that line up with timing events.

Where can I confirm compatibility and standards expectations?

Start with the IEEE 802.3 physical layer specifications for your interface rate, then consult host vendor optics compatibility lists and transceiver datasheets. For clock performance, review ITU-T G.8273.2; for network-level time error limits and the PTP telecom profile, see the related recommendations ITU-T G.8271.1 and G.8275.1.

References used: [Source: IEEE 802.3], [Source: ITU-T G.8273.2], [Source: vendor transceiver datasheets and host switch optics compatibility guides].

Next step: if you are planning a 5G timing buildout, start by mapping your fiber link budgets and DOM telemetry targets, then select telecom timing optics that you can prove stable under temperature swing. For deeper planning, see telecom timing architecture for 5G transport.

Author bio: I work as a field-deployed optical and timing engineer, documenting real commissioning results from cabinets, patch panels, and live switch telemetry. I write with a photographer’s eye for alignment and a technician’s respect for margins, because the smallest optical detail can become the largest timing story.