Spatial Division Multiplexing (SDM) is reshaping optical network capacity by multiplying parallel light paths inside fiber. This article helps data center and campus network engineers choose next-gen transceivers that match SDM realities: connector loss, lane mapping, DOM behavior, and thermal limits. You will get a practical spec comparison, a field-ready decision checklist, and troubleshooting patterns observed in deployments.

Why SDM changes what “compatible transceivers” really means

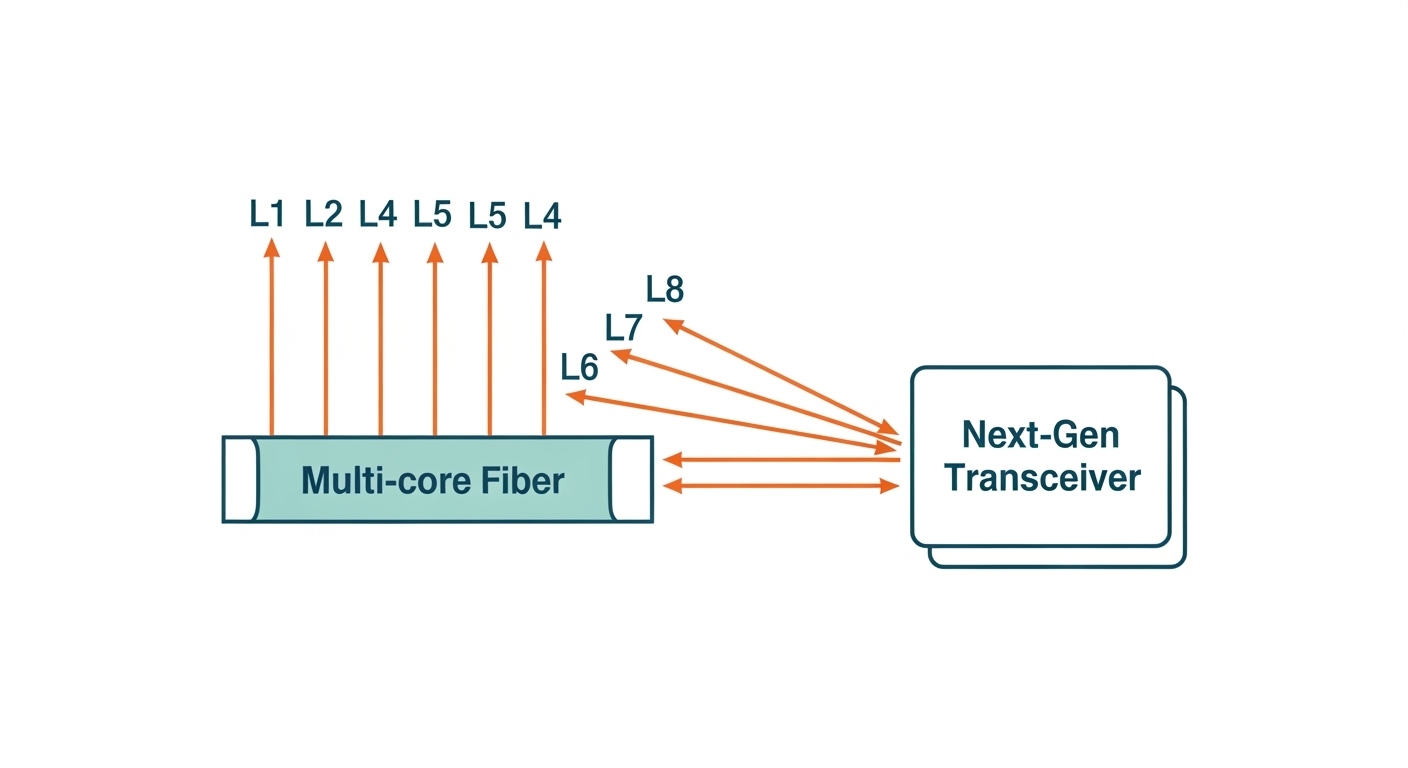

In SDM designs, capacity growth does not come only from higher baud rates; it also comes from more spatial channels (often called “lanes”) carried simultaneously. That means your transceiver optics must align not just by wavelength and interface standard, but by lane count, lane polarity conventions, and fiber type (e.g., multi-core fiber). From an engineering standpoint, the failure mode shifts: you can have “correct” wavelength yet still see elevated BER because lane mapping or differential skew is wrong.

Most SDM systems still rely on standard electrical interfaces (for example, the IEEE 802.3 clauses that define Ethernet PHY behavior) while moving optical complexity into the transceiver module. As a result, you must verify both electrical compliance and optical performance: transmitter output power, receiver sensitivity, and the system’s connector and splice budget. For authoritative physical-layer baselines, refer to [Source: IEEE 802.3] and to vendor module datasheets for the exact optical and electrical parameters.

Pro Tip: In SDM rollouts, the fastest path to stable link bring-up is not “more power.” It is validating lane mapping end-to-end with a deterministic test plan (labeling, polarity verification, and consistent patch panel routing) before you commission live traffic.
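One way to make that validation deterministic is to model each patch segment as a position map and compare the composed end-to-end map against the plan. The sketch below is illustrative only: the 12-position connector, the `straight` and `reversed_map` segment types, and the helper names `compose` and `mapping_errors` are assumptions, not taken from any vendor's lane mapping diagram.

```python
from typing import Dict, List

LaneMap = Dict[int, int]  # fiber position at near end -> position at far end


def compose(segments: List[LaneMap]) -> LaneMap:
    """Chain per-segment position maps into one end-to-end map."""
    end_to_end = {}
    for start in segments[0]:
        pos = start
        for segment in segments:
            pos = segment[pos]
        end_to_end[start] = pos
    return end_to_end


def mapping_errors(actual: LaneMap, expected: LaneMap) -> List[str]:
    """List every position whose far-end landing differs from the plan."""
    return [
        f"position {src}: expected {expected[src]}, got {actual.get(src)}"
        for src in sorted(expected)
        if actual.get(src) != expected[src]
    ]


POSITIONS = 12  # assumption: 12-position MPO-style connector
straight = {i: i for i in range(1, POSITIONS + 1)}
reversed_map = {i: POSITIONS + 1 - i for i in range(1, POSITIONS + 1)}

# Hypothetical channel: patch cord, trunk, patch cord.
channel = compose([straight, reversed_map, straight])
for line in mapping_errors(channel, expected=reversed_map):
    print(line)  # prints nothing when the channel matches the plan
```

Recording the planned map per link and rerunning this comparison after every patch change gives a paper check that can be done before any optics are inserted.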

Key specifications to match when buying next-gen transceivers

Engineers typically shortlist modules by form factor (QSFP/QSFP-DD/OSFP), data rate, and reach. For SDM, you also need to confirm lane count support and the exact fiber interface type. Below is a practical comparison template you can use to avoid mismatches during procurement and lab validation.

| Spec | What to Verify for SDM | Typical Values Engineers Check |
|---|---|---|
| Data rate | Matches switch/host PHY; confirm lane aggregation model | 25G, 50G, 100G per lane or per module depending on interface |
| Wavelength | Correct optical band; confirm per-lane wavelength plan | 850 nm (short reach) or 1310 nm/1550 nm (longer reach) |
| Reach (optical budget) | Include connector, splice, patch cords, and insertion loss | Examples: 100 m (MMF) class or 2 km+ (SMF) class depending on module |
| Fiber type | Confirm multi-core or multi-fiber strategy; ensure correct MPO/MTP mapping | Multi-core fiber variants or parallel MMF/MPO ecosystems |
| Connector | Verify polarity, keying, and lane ordering for your patch panels | MPO/MTP common in dense optics; check “polarity A/B” equivalents |
| DOM support | Check real-time temperature, bias currents, and alarm thresholds | Digital diagnostics with vendor-defined alarm levels |
| Operating temperature | Match switch room and plenum constraints | Common ranges: 0 to 70 °C or -40 to 85 °C depending on grade |
| Transmit power / Rx sensitivity | Budget must survive worst-case patching and aging | Vendor-specific; validate against your link loss model |

When you evaluate specific products, use exact model numbers from datasheets. For example, many teams compare 10G MMF optics such as Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, or FS.com SFP-10GSR-85 for baseline behavior, then move up to higher-density families that better fit SDM topologies. For SDM-focused procurement, always prioritize vendor documentation that explicitly describes lane handling, fiber interface requirements, and DOM behavior. [Source: Cisco QSFP and SFP datasheets], [Source: Finisar/II-VI optical transceiver datasheets], [Source: FS.com transceiver datasheets].

Real-world deployment scenario: leaf-spine with SDM-ready optics

Consider a data center leaf-spine topology with 48-port 400G ToR switches at the leaf and 400G uplinks to the spine. The design goal is to expand capacity without doubling footprint or power draw. The team deploys next-gen transceivers that support SDM-capable fiber pathways in a controlled patch-panel layout, targeting a practical link loss budget that includes 0.5 dB per connector pair and worst-case patch cord insertion loss. They also require that DOM alarms are readable by the switch so that replacements can be scheduled proactively.
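The loss budget in this scenario reduces to simple arithmetic. The sketch below uses the 0.5 dB per connector pair figure from the scenario; every other number (fiber attenuation, link length, splice loss, Tx power, Rx sensitivity, safety margin) is a hypothetical placeholder that should be replaced with datasheet and site values.

```python
def total_loss_db(length_km, fiber_db_per_km, connector_pairs,
                  connector_pair_db=0.5, splices=0, splice_db=0.1):
    """Sum fiber, connector, and splice loss for one link, in dB."""
    return (length_km * fiber_db_per_km
            + connector_pairs * connector_pair_db
            + splices * splice_db)


def margin_db(tx_min_dbm, rx_sensitivity_dbm, loss_db, safety_db=1.0):
    """Remaining margin after link loss and a reserved safety allowance."""
    power_budget = tx_min_dbm - rx_sensitivity_dbm
    return power_budget - loss_db - safety_db


# Hypothetical link: 100 m of fiber at 3.0 dB/km through 4 connector pairs.
loss = total_loss_db(length_km=0.1, fiber_db_per_km=3.0, connector_pairs=4)
# Hypothetical module: worst-case Tx of -6.0 dBm, Rx sensitivity of -10.0 dBm.
margin = margin_db(tx_min_dbm=-6.0, rx_sensitivity_dbm=-10.0, loss_db=loss)
print(f"loss={loss:.2f} dB, margin={margin:.2f} dB")
```

With these placeholder values the connectors account for 2.0 dB of the roughly 2.3 dB total, which is why connector count tends to dominate short-reach budgets. A negative margin means the link does not close on paper and should not go to the lab.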

In commissioning, engineers map each transceiver lane group to a specific patch-panel row and record the patch cord serial numbers. They then run a staged acceptance test: first verify link up and error counters at an idle state, then apply a controlled load profile that ramps packet sizes while monitoring BER/PCS errors. If a subset of lanes shows thin margin, the team does not immediately swap modules; they first re-check polarity and lane ordering in the patch panel routing, because SDM lane misalignment is a common root cause.
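The triage rule in that acceptance test can be sketched as a per-lane error-ratio check. The counter values, the observation window, and the `ber_limit` below are hypothetical; the action for the case where every lane fails is an assumption added for completeness, not something the scenario specifies.

```python
from typing import Dict, List


def failing_lanes(errors_by_lane: Dict[int, int], bits_per_lane: int,
                  ber_limit: float) -> List[int]:
    """Return lanes whose observed error ratio exceeds the limit."""
    return sorted(
        lane for lane, errors in errors_by_lane.items()
        if errors / bits_per_lane > ber_limit
    )


def next_action(failing: List[int], lane_count: int) -> str:
    """Suggest the next commissioning step from the failure pattern."""
    if not failing:
        return "pass: proceed to the next load stage"
    if len(failing) < lane_count:
        return "re-check polarity and lane ordering before swapping modules"
    # Assumption: not covered by the scenario above.
    return "all lanes failing: review the whole link"


# Hypothetical counters collected over 1e13 bits per lane.
counts = {0: 0, 1: 3, 2: 0, 3: 50_000}
bad = failing_lanes(counts, bits_per_lane=10**13, ber_limit=1e-12)
print(bad, "->", next_action(bad, lane_count=len(counts)))
```

Keeping the decision in code, rather than in an engineer's head, makes the "do not swap modules first" rule repeatable across shifts.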

Selection criteria checklist for SDM and next-gen transceivers

Use this ordered checklist to reduce commissioning churn and avoid “compatible on paper” failures.

- Distance and loss budget: Confirm reach class and compute total loss including connectors, splices, patch cords, and safety margin.

- Fiber interface and lane mapping: Verify multi-core or parallel-fiber strategy, MPO/MTP polarity conventions, and lane ordering assumptions.

- Switch compatibility: Confirm the host vendor’s supported transceiver list or at least PHY compliance expectations for your interface type.

- DOM and monitoring model: Ensure temperature, bias, and optical power telemetry are exposed and alarm thresholds match your NMS tooling.

- Operating temperature and airflow: Match module grade to actual cabinet inlet temperature; validate that thermal throttling is not triggered.

- Vendor lock-in risk: Compare OEM pricing vs third-party, but validate interoperability with your exact switch firmware train.

- Manufacturing and lifecycle: Prefer vendors with published change-notification processes and consistent part numbers across revisions.
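Several of these checks can be screened mechanically before a module reaches the lab. The sketch below is a simplified illustration: the `Module` and `Requirement` fields, the example part names, and the direct comparison of module temperature grade against cabinet inlet temperature are all assumptions, and it does not cover switch compatibility, vendor lock-in, or lifecycle criteria.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Module:
    name: str
    reach_m: float
    lanes: int
    connector: str
    temp_min_c: float
    temp_max_c: float
    has_dom: bool


@dataclass
class Requirement:
    link_length_m: float
    lanes: int
    connector: str
    inlet_min_c: float
    inlet_max_c: float
    needs_dom: bool = True


def failed_checks(m: Module, r: Requirement) -> List[str]:
    """Return the names of checklist items the module does not satisfy."""
    fails = []
    if m.reach_m < r.link_length_m:
        fails.append("reach")
    if m.lanes != r.lanes:
        fails.append("lane count")
    if m.connector != r.connector:
        fails.append("connector")
    if m.temp_min_c > r.inlet_min_c or m.temp_max_c < r.inlet_max_c:
        fails.append("temperature grade")
    if r.needs_dom and not m.has_dom:
        fails.append("DOM")
    return fails


# Hypothetical requirement and candidates.
req = Requirement(link_length_m=80, lanes=8, connector="MPO-16",
                  inlet_min_c=5, inlet_max_c=75)
commercial = Module("vendor-a-commercial", 100, 8, "MPO-16", 0, 70, True)
extended = Module("vendor-b-extended", 100, 8, "MPO-16", -40, 85, True)
print(failed_checks(commercial, req), failed_checks(extended, req))
```

A module that passes this screen still needs the loss-budget, firmware-interoperability, and thermal validation described in the checklist; the screen only removes obvious mismatches early.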

Common pitfalls and troubleshooting patterns

SDM deployments can fail in ways that are subtle if you only check wavelength and reach. Here are field-tested mistakes, with root causes and fixes.

- Pitfall 1: Link comes up but error counters climb quickly

  Root cause: Lane mapping mismatch (polarity or lane ordering) between transceiver optics and patch panel routing.

  Solution: Verify polarity convention, re-terminate or re-route patch cords to match the vendor’s lane mapping diagram, then rerun BER/PCS checks.

- Pitfall 2: Intermittent link flaps under temperature swings

  Root cause: Module operating outside validated thermal envelope or insufficient airflow causing transmitter bias drift.

  Solution: Measure cabinet inlet and module case temperature; ensure airflow baffling and fan curves are correct; deploy the appropriate temperature-grade module.

- Pitfall 3: Works with one vendor’s transceiver but not another

  Root cause: Electrical parameter differences (Tx/Rx equalization tuning) and DOM alarm threshold behavior that interacts with switch firmware.

  Solution: Validate with your switch firmware version; prefer modules with documented compatibility; update firmware only after capturing baseline telemetry.

- Pitfall 4: Apparent “dead” receiver

  Root cause: Connector end-face contamination or improper MPO/MTP seating leading to high insertion loss on specific lanes.

  Solution: Inspect and clean with approved procedures, confirm keying and full insertion depth, and test with a known-good patch cord.

For optical cleaning and connector handling, follow your connector manufacturer’s best practices and safety guidance. For network-layer verification and PHY error interpretation, align with IEEE 802.3 performance monitoring expectations where applicable. [Source: IEEE 802.3], [Source: MPO/MTP connector manufacturer application notes].
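For the lane-specific loss described in Pitfall 4, comparing per-lane received power against the other lanes of the same module can point to which connector positions to inspect first. This is a minimal sketch: it assumes per-lane Rx power is already available in milliwatts from DOM telemetry, and the 2 dB outlier threshold and sample readings are hypothetical.

```python
import math
from statistics import median
from typing import Dict, List


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    return 10 * math.log10(power_mw)


def suspect_lanes(rx_mw_by_lane: Dict[int, float],
                  max_delta_db: float = 2.0) -> List[int]:
    """Flag dark lanes and lanes well below the module's median Rx power."""
    dark = [lane for lane, p in rx_mw_by_lane.items() if p <= 0]
    dbm = {lane: mw_to_dbm(p) for lane, p in rx_mw_by_lane.items() if p > 0}
    if not dbm:
        return sorted(dark)  # no light on any lane
    reference = median(dbm.values())
    low = [lane for lane, v in dbm.items() if reference - v > max_delta_db]
    return sorted(dark + low)


# Hypothetical DOM readings in mW for a 4-lane module.
readings = {1: 0.50, 2: 0.48, 3: 0.20, 4: 0.52}
print(suspect_lanes(readings))
```

Using the median of the module's own lanes as the reference avoids hard-coding an absolute power level, which varies by module type and link length.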

Cost and ROI: what engineers should budget for

Pricing varies widely by speed, fiber type, and whether SDM-specific optics are involved. In many deployments, next-gen transceivers can cost from roughly $300 to $2,000 per module at scale for mainstream categories, while SDM-capable or higher-density variants may be higher depending on vendor and supply conditions. TCO is dominated by failure rates, spares strategy, and labor time during commissioning and troubleshooting.

ROI improves when you reduce rework and downtime: lane mapping errors and thermal issues can multiply labor hours. Third-party modules may reduce purchase price, but the ROI only holds if DOM telemetry, alarm thresholds, and switch compatibility remain stable across firmware updates. A practical approach is to pilot with a small tranche, capture telemetry baselines, and confirm that optical power and temperature drift remain within vendor-defined limits over your acceptance window.
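The baseline comparison in that pilot can be automated with a small drift check. The field names, baseline values, and drift limits below are hypothetical; real limits should come from the vendor's datasheet and your acceptance criteria.

```python
from typing import Dict


def drift_violations(baseline: Dict[str, float], current: Dict[str, float],
                     limits: Dict[str, float]) -> Dict[str, float]:
    """Return the signed drift for every field that exceeds its limit."""
    violations = {}
    for field, limit in limits.items():
        drift = current[field] - baseline[field]
        if abs(drift) > limit:
            violations[field] = drift
    return violations


# Hypothetical telemetry captured at pilot start and at end of the window.
baseline = {"temp_c": 45.0, "tx_dbm": -1.0, "rx_dbm": -3.0}
current = {"temp_c": 52.0, "tx_dbm": -1.2, "rx_dbm": -4.5}
limits = {"temp_c": 10.0, "tx_dbm": 1.0, "rx_dbm": 1.0}

for field, drift in drift_violations(baseline, current, limits).items():
    print(f"{field} drifted by {drift:+.1f}")
```

Here only the receive power would be flagged, which in a pilot would prompt a connector inspection before the module itself is blamed.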

FAQ

What makes next-gen transceivers “next-gen” for SDM networks?

They handle higher-density optics and SDM-specific lane behavior, not just higher baud rates. Practically, you must confirm lane mapping, fiber interface requirements, and DOM telemetry consistency with your switch platform.

Do I only need to match wavelength and reach?

No. SDM requires matching the fiber strategy and connector polarity/lane ordering, plus ensuring your system loss budget survives connectors, splices, and patch cord variations.

How can I validate lane mapping before production traffic?

Use a deterministic labeling and routing plan, then run link bring-up and controlled BER/PCS checks with a known test pattern. If errors concentrate on specific lanes, re-check patch panel routing and polarity rather than immediately swapping modules.

Are third-party transceivers safe for next-gen deployments?

They can be, but only after compatibility validation with your exact switch models and firmware versions. Demand datasheets that clearly describe DOM behavior, optical parameters, and connector/polarity conventions.

What DOM alarms matter most during commissioning?

Track optical power, bias current trends, and temperature-related warnings. If your switch can surface these fields, set thresholds aligned to your operational envelope so you catch drift before it becomes link instability.

Where do most SDM transceiver failures originate?

Common causes include polarity/lane mapping mistakes, connector contamination, and thermal margin shortfalls. Address these first because they account for the largest share of “works sometimes” behavior.

If you are planning capacity growth, pair this SDM transceiver selection workflow with a link-loss and optics budgeting method tailored to your patch-panel design. Next step: How to calculate optical link loss for high-density transceiver deployments.

Author bio: I have deployed high-density Ethernet optical networks in data centers, validating optical budgets, DOM telemetry, and commissioning test plans under real rack thermal constraints. I write from an operator’s perspective, focusing on compatibility evidence, measurable margins, and failure-mode prevention for next-gen transceivers.