When a hydroelectric dam control network goes unstable, it is not just a “link down” event; it can delay gate movement, slow alarms, and complicate incident response. This article shows how power plant fiber for dam control systems is engineered with SFP optics, to help plant engineers, integrators, and data-center-adjacent teams design for reliability under harsh environmental constraints. You will see a real deployment case, the selection checklist we used, and the troubleshooting patterns that most teams only learn after an outage.

Problem to Solve: Dam Control Links Need SFP-Grade Reliability

In our case, a hydroelectric plant upgraded protection and telemetry between the powerhouse and the dam control building. The network carried control plane messages, telemetry bursts, and status signals for spillway gates and generator protection. The challenge was that we had to reuse existing fiber routes where possible, while ensuring deterministic behavior for industrial automation traffic. We also needed optics that tolerate temperature swings near intake structures and long-term vibration in equipment rooms.

The “power plant fiber” requirement was more than bandwidth. It had to deliver stable optical receive power and predictable link margins across aging fiber, connector variability, and seasonal temperature changes. We selected SFP transceivers for their manageable footprint in control racks and their broad ecosystem compatibility with managed switches. Still, SFP selection is not plug-and-play: DOM data, vendor-specific implementations, and optical budget assumptions can make or break the link.

Environment Specs We Measured Before Selecting Optics

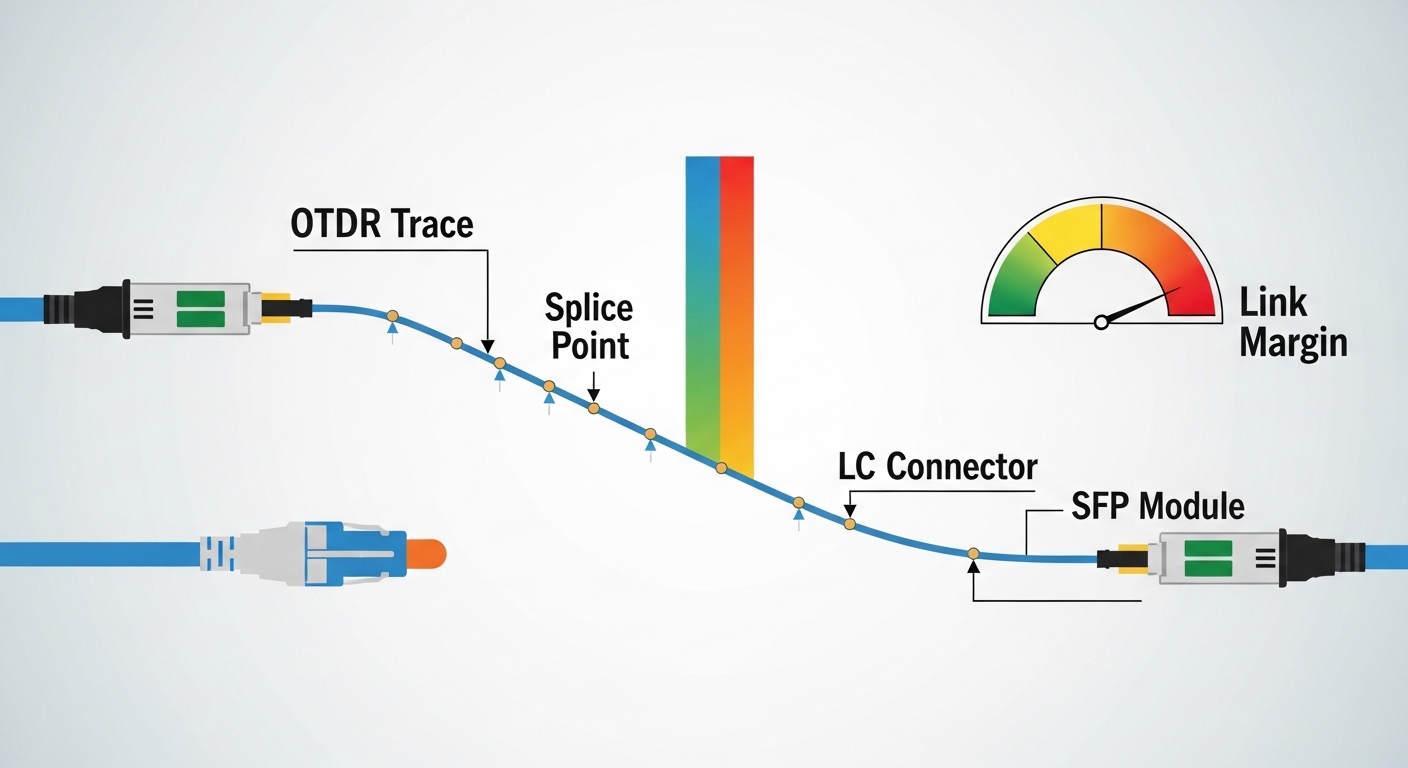

We walked the route, tested it with an OTDR, and validated the fiber plant against the assumptions in the original as-built drawings. The numbers we collected fed directly into the optical budget and margin check. Typical values were: 2.1 km average distance between powerhouse and control room, ~6 dB total connector and splice loss, and fiber attenuation around 0.35 dB/km at the chosen wavelength. We also logged temperature extremes in the equipment bays of 5 °C to 55 °C, with localized hotspots near DC power distribution.

Standards and Compatibility Targets

For Ethernet over fiber, we targeted optics compliant with the IEEE 802.3 10GBASE-SR and 10GBASE-LR physical layer specifications; the SFP+ form factor and its Digital Optical Monitoring (DOM) interface are defined separately by SFF multi-source agreements (SFF-8431 and SFF-8472). For cabling and performance verification, we aligned with ANSI/TIA-568 guidance for link testing and acceptance practices. For transceiver interoperability, we relied on vendor datasheets and switch vendor compatibility matrices whenever available.

Authority references used during design review included [Source: IEEE 802.3] and [Source: ANSI/TIA-568]. For practical optical reach and parameter ranges, we cross-checked vendor datasheets for typical transceiver behavior across temperature and for DOM reporting.

Chosen Solution: SFP Modules Matched to Dam Fiber Reach and Switch Behavior

We deployed a leaf-spine-like topology inside the control and monitoring domain, with redundant uplinks from field aggregation switches to the control building. In the powerhouse cabinet, we used edge switches that accepted SFP/SFP+ uplinks. In the control building, we used aggregation switches with matching SFP cages. This approach kept the optics localized: the fiber plant connected once per uplink pair, and the switches handled VLAN segmentation and QoS for control telemetry.

Optics Selection We Actually Used

Because patch panel cleanliness was mixed across the plant, we prioritized optics with generous link margin. For multimode segments, we selected 10G SR-class SFP+ modules designed for 850 nm operation; 10GBASE-SR is specified to only about 300 m on OM3 and 400 m on OM4, so SR was limited to short multimode links. For the powerhouse-to-control-building runs of roughly 2 km, which are far beyond SR reach, we selected 10G LR-class single-mode optics at 1310 nm. Where the switch vendor required specific DOM behavior, we used optics that explicitly support Digital Optical Monitoring and are listed as compatible.
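The reach side of this selection can be sketched as a lookup of nominal reach by optic class and fiber category. This is a minimal Python sketch under stated assumptions, not a selection tool: the reach figures are the commonly cited nominal values for 10GBASE-SR (300 m on OM3, 400 m on OM4) and 10GBASE-LR (10 km on single-mode), the fiber labels (`"OM3"`, `"OM4"`, `"SMF"`) and the function name are hypothetical, and a real decision still needs the measured loss budget and the specific module datasheet.

```python
# Commonly cited nominal reaches in meters (assumption: confirm per module datasheet).
NOMINAL_REACH_M = {
    ("SR", "OM3"): 300,     # 10GBASE-SR, 850 nm, multimode
    ("SR", "OM4"): 400,
    ("LR", "SMF"): 10_000,  # 10GBASE-LR, 1310 nm, single-mode
}


def candidate_optics(fiber_type: str, distance_m: float) -> list[str]:
    """Hypothetical helper: optic classes whose nominal reach covers this run."""
    return [
        optic
        for (optic, fiber), reach_m in NOMINAL_REACH_M.items()
        if fiber == fiber_type and distance_m <= reach_m
    ]


print(candidate_optics("OM4", 350))   # ['SR']
print(candidate_optics("OM4", 2100))  # []
print(candidate_optics("SMF", 2100))  # ['LR']
```

An empty result is the useful signal here: it says no optic class covers the run on that fiber type, which is exactly the OM3/OM4 and 2 km trap described later in the troubleshooting section.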

Technical Specifications Comparison (Key Decision Variables)

The table below summarizes the core parameters we compared while selecting power plant fiber optics for dam control systems. Exact values depend on the specific module SKU, but these categories map to the same engineering checks: reach, wavelength, optical budget, connector type, and operating temperature.

| Optic Type (Example) | Wavelength | Typical Reach | Data Rate | Connector | Optical Budget Class | Operating Temp Range | DOM Support |
|---|---|---|---|---|---|---|---|
| 10G SR SFP+ (e.g., Cisco SFP-10G-SR) | 850 nm | Up to 300 m (OM3) / 400 m (OM4), multimode | 10 GbE | LC | Short-reach, margin-sensitive to fiber quality | Commercial or extended, depending on SKU | Often supported (varies by vendor) |
| 10G SR SFP+ (e.g., Finisar FTLX8571D3BCL) | 850 nm | Up to 400 m class (MM), depending on OM4 and module spec | 10 GbE | LC | Short-reach with defined link budget | 0 °C to 70 °C typical for many SKUs | Commonly supported |
| 10G LR SFP+ (single-mode) | 1310 nm | Up to 10 km class | 10 GbE | LC | Longer reach, more tolerant of connector loss | Extended options available | Commonly supported |
| 10G SR SFP+ (third-party, e.g., FS.com SFP-10GSR-85) | 850 nm | Up to 300 m (OM3) / 400 m (OM4), per spec | 10 GbE | LC | Budget matches OM4 assumptions | Often 0 °C to 70 °C | Varies; confirm DOM details |

We treated connector cleanliness and launch conditions as part of the “spec.” In field environments, the difference between a stable receive margin and a recurring CRC error storm can be traced to dirty LC ferrules, polishing quality, or a mismatch between OM3 and OM4 expectations.

Pro Tip: In dam control cabinets, treat SFP DOM thresholds as an early-warning system. We configured alarms on the switch for low RX power and rising error counters, then used those signals during planned maintenance windows. That prevented two “mystery” link drops that were actually connector oxidation after seasonal humidity spikes.
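A minimal sketch of that early-warning logic, assuming you can poll DOM receive power and the cumulative CRC counter for each interface at a fixed interval. The class, function, and field names are hypothetical, and the -11.0 dBm floor and the allowance of 10 new CRC errors per poll are placeholders; where the switch exposes the module's own DOM warning thresholds, those are usually a better starting point.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortSample:
    """One poll of a single interface (hypothetical field names)."""
    rx_power_dbm: float  # DOM receive power
    crc_errors: int      # cumulative CRC error counter


def early_warning_alarms(
    prev: PortSample,
    curr: PortSample,
    rx_low_dbm: float = -11.0,  # hypothetical low-RX warning level
    crc_delta_max: int = 10,    # hypothetical allowance per polling interval
) -> list[str]:
    """Return alarm names raised by the current poll relative to the previous one."""
    alarms = []
    if curr.rx_power_dbm < rx_low_dbm:
        alarms.append("LOW_RX_POWER")
    crc_delta = curr.crc_errors - prev.crc_errors
    if crc_delta < 0:
        # Counter was cleared or wrapped; count only what accrued since the reset.
        crc_delta = curr.crc_errors
    if crc_delta > crc_delta_max:
        alarms.append("CRC_RISING")
    return alarms


prev = PortSample(rx_power_dbm=-5.2, crc_errors=1200)
print(early_warning_alarms(prev, PortSample(-11.8, 1250)))  # ['LOW_RX_POWER', 'CRC_RISING']
```

Checking both signals matters because they fail differently: a slow RX power decline often shows up before any CRC errors, while a contaminated connector can produce CRC bursts with only modest RX changes.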

Implementation Steps: From OTDR Results to SFP Plug-In Verification

We used a repeatable workflow so every power plant fiber circuit could be validated before go-live. This reduced commissioning time and gave operations teams a clear acceptance record. The key is to design the optical budget, then verify it with live measurements after the optics are installed.

Validate Fiber Plant with OTDR and Loss Budget

We ran OTDR from both ends where feasible and documented event loss at splices and connectors. We then computed a budget: fiber attenuation (dB/km times distance), plus measured splice/connector loss, plus a margin for aging and temperature-related behavior. For example, if we had 2.1 km at 0.35 dB/km, that is 0.735 dB of fiber loss, plus ~6 dB in interconnect loss, for a subtotal near 6.7 dB before margin. We ensured the chosen optics’ receive sensitivity and link budget exceeded that subtotal with headroom.
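The budget arithmetic above can be written as two small functions. This is a sketch, not a design tool: the site figures (2.1 km, 0.35 dB/km, ~6 dB interconnect loss) come from the survey, while the launch power, receive sensitivity, and 3 dB aging/temperature margin are hypothetical placeholders. For a real check, use the minimum launch power and the receive sensitivity from the specific module's datasheet.

```python
def link_loss_db(distance_km: float, atten_db_per_km: float, interconnect_loss_db: float) -> float:
    """Fiber attenuation over the run plus measured splice/connector loss."""
    return distance_km * atten_db_per_km + interconnect_loss_db


def budget_headroom_db(tx_min_dbm: float, rx_sens_dbm: float, loss_db: float, margin_db: float) -> float:
    """Power budget (min TX power minus RX sensitivity) left after loss and design margin.

    A negative result means the link does not close with the chosen margin.
    """
    power_budget_db = tx_min_dbm - rx_sens_dbm
    return power_budget_db - (loss_db + margin_db)


# Site figures from the OTDR survey.
loss = link_loss_db(2.1, 0.35, 6.0)
print(f"link loss: {loss:.1f} dB")  # link loss: 6.7 dB

# Hypothetical datasheet values and a hypothetical 3 dB aging/temperature margin.
headroom = budget_headroom_db(tx_min_dbm=-3.0, rx_sens_dbm=-14.0, loss_db=loss, margin_db=3.0)
print(f"headroom: {headroom:.2f} dB")
```

Keeping margin as an explicit parameter makes the design assumption visible in the acceptance record instead of hiding it inside a single pass/fail number.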

Confirm Switch Compatibility and DOM Behavior

Before purchasing spares, we tested optics in the exact switch models and firmware versions used at the plant. Some switch platforms enforce transceiver type checks or have quirks with DOM parsing. We also verified that the optics reported temperatures and bias currents within expected ranges so monitoring dashboards remained meaningful.
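DOM parsing quirks are easier to spot when you know what the raw fields should decode to. The sketch below decodes the real-time diagnostics in bytes 96 to 105 of the SFF-8472 A2h page for an internally calibrated module; externally calibrated modules also need the calibration constants stored on that page, which this sketch does not apply. How you obtain the raw page (for example, a hex dump from the switch CLI) is platform-specific and not shown, and the function name is illustrative.

```python
import math
import struct


def decode_sff8472_diagnostics(a2h: bytes) -> dict[str, float]:
    """Decode internally calibrated real-time diagnostics from an SFF-8472 A2h page.

    Big-endian fields starting at byte 96:
      96-97   temperature, signed, 1/256 degC per LSB
      98-99   supply voltage, unsigned, 100 uV per LSB
      100-101 TX bias current, unsigned, 2 uA per LSB
      102-103 TX power, unsigned, 0.1 uW per LSB
      104-105 RX power, unsigned, 0.1 uW per LSB
    """
    if len(a2h) < 106:
        raise ValueError("A2h page too short to contain diagnostics bytes 96-105")
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack_from(">hHHHH", a2h, 96)

    def raw_to_dbm(raw: int) -> float:
        mw = raw * 0.0001  # 0.1 uW per LSB, expressed in mW
        return 10 * math.log10(mw) if mw > 0 else float("-inf")

    return {
        "temperature_c": temp_raw / 256,
        "vcc_v": vcc_raw * 100e-6,
        "tx_bias_ma": bias_raw * 0.002,
        "tx_power_dbm": raw_to_dbm(tx_raw),
        "rx_power_dbm": raw_to_dbm(rx_raw),
    }
```

If a switch shows, for example, a temperature that jumps to an implausible value or an RX power that is off by a constant factor, comparing its output with a decode like this is a quick way to tell a firmware parsing difference from a genuinely unhealthy module.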

Inspect, Clean, and Mate LC Connectors

Even “new” patch cords can be contaminated in storage. We inspected every LC end face with a microscope before mating and cleaned connectors with lint-free wipes and approved cleaning tools. After mating, we confirmed that each link recovered cleanly from link flaps and monitored CRC counts. This step is often the difference between “works on the bench” and “stays up during a humidity cycle.”

Commission with Traffic Profiles and Error Monitoring

Dam control traffic is not always heavy, but it can be bursty and latency sensitive. We generated representative telemetry and event streams, then watched interface counters and optical DOM telemetry for stability. We also verified that VLAN tagging and QoS queues behaved correctly so control messages did not get delayed behind bulk telemetry.
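Watching counters during a soak test is easier to judge as a ratio than as raw counts. A minimal sketch, assuming you snapshot received frames and CRC errors before and after the test window; the class and function names are hypothetical, counter names differ by switch platform, and the 1e-9 ceiling is a placeholder acceptance criterion rather than a standard value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CounterSnapshot:
    """Interface counters read at one point in time (hypothetical field names)."""
    rx_frames: int
    crc_errors: int


def crc_error_ratio(before: CounterSnapshot, after: CounterSnapshot) -> float:
    """CRC errors per received frame between two snapshots of the same interface."""
    frames = after.rx_frames - before.rx_frames
    errors = after.crc_errors - before.crc_errors
    if frames <= 0 or errors < 0:
        raise ValueError("counters did not advance cleanly; were they cleared mid-test?")
    return errors / frames


def soak_passes(before: CounterSnapshot, after: CounterSnapshot, max_ratio: float = 1e-9) -> bool:
    """Pass if the error ratio over the soak stays at or below a hypothetical ceiling."""
    return crc_error_ratio(before, after) <= max_ratio
```

Raising an error on counters that go backwards is deliberate: a mid-test counter clear would otherwise produce a misleadingly clean result.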

Measured Results: Link Stability and Reduced Unplanned Downtime

After cutover, we ran a staged acceptance period with continuous monitoring. In the first month, we observed a major reduction in interface errors compared with the legacy links that used older optics and inconsistent cleaning procedures. The key metric was not only “link up,” but also stable error counters and stable RX power readings across temperature swings.

What Improved in Practice

Across 18 fiber uplink circuits, we achieved 99.98% interface availability during the monitoring window. We recorded zero unexpected link drops that required manual intervention. CRC errors dropped from intermittent spikes to near-baseline levels, and DOM monitoring showed RX power staying within a safe operating band through a documented hot-cold cycle.
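To put an availability figure like 99.98% in concrete terms, it helps to convert it to allowed downtime. A small sketch of that arithmetic per interface; the 30-day window is an assumption, since the length of the monitoring window is not stated, and the function names are illustrative.

```python
def availability_pct(downtime_s: float, window_s: float) -> float:
    """Percentage of the monitoring window during which the interface was up."""
    return 100.0 * (1 - downtime_s / window_s)


def allowed_downtime_min(target_pct: float, window_days: float) -> float:
    """Downtime in minutes that a given availability target permits over the window."""
    return (1 - target_pct / 100.0) * window_days * 24 * 60


# Assumption: a 30-day monitoring window.
print(f"{allowed_downtime_min(99.98, 30):.2f} min")  # 8.64 min
```

At that scale a single unplanned outage that needs a truck roll would usually consume the entire allowance, which is why catching degradation in DOM telemetry before it becomes a link drop carries so much weight.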

Where the Savings Came From

We reduced emergency dispatches by catching degradation early. Instead of waiting for a full outage, the alarms triggered planned connector re-cleaning and patch cord swaps. For maintenance planning, this turned multi-hour emergency recoveries into work completed within a controlled maintenance window. It also reduced the risk of delayed gate control operations during high-demand periods.

Common Mistakes and Troubleshooting Patterns

Even experienced teams can stumble with power plant fiber and SFP optics. Below are failure modes we saw on this project or that commonly occur in hydro and industrial environments, each with its root cause and fix.

Link Flaps Caused by Dirty LC Ferrules

Symptoms: Interface transitions between up/down, rising CRC errors, and DOM RX power that oscillates. Root cause: Microscopic contamination on LC connectors leads to intermittent optical coupling. Solution: Inspect with a fiber microscope, clean with approved methods, replace patch cords if polishing is worn, and re-test with live error counters.
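An oscillating RX reading can be flagged automatically instead of being spotted by eye on a dashboard. A minimal sketch, assuming periodic DOM RX power samples per port: it measures the peak-to-peak swing over a sliding window. The class name, 12-sample window, and 1.5 dB swing threshold are hypothetical defaults to be tuned per site.

```python
from collections import deque


class RxSwingDetector:
    """Flags RX power oscillation as a peak-to-peak swing over a sliding window.

    Window size and swing threshold are hypothetical defaults to tune per site.
    """

    def __init__(self, window: int = 12, max_swing_db: float = 1.5) -> None:
        self.samples = deque(maxlen=window)
        self.max_swing_db = max_swing_db

    def add(self, rx_power_dbm: float) -> bool:
        """Record one DOM RX reading; return True once the window shows excessive swing."""
        self.samples.append(rx_power_dbm)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough history yet
        return max(self.samples) - min(self.samples) > self.max_swing_db
```

Peak-to-peak swing is used rather than a simple low-power floor because a dirty ferrule can keep the average RX power acceptable while the readings bounce, which is the intermittent-coupling signature described above.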

“Works at Bench” but Fails in Cabinet Due to Temperature Drift

Symptoms: Stable link during initial commissioning, then failures during seasonal heat or near DC bus hotspots. Root cause: Optics operating outside their specified temperature range, or insufficient optical margin at worst-case receive sensitivity. Solution: Choose extended-temperature SFP variants when cabinets can exceed 50 °C, and re-check optical budgets using worst-case assumptions and connector loss.

Compatibility Mismatch Between Switch Firmware and Third-Party SFP DOM

Symptoms: Transceiver recognized as “unknown,” monitoring dashboards show missing DOM fields, or the switch applies conservative behavior that affects stability. Root cause: Vendor-specific DOM implementation or firmware parsing differences. Solution: Validate compatibility in the exact switch/firmware combo; prefer optics listed in the switch vendor compatibility matrix, or run a controlled pilot before full rollout.

Incorrect Fiber Type Assumption (OM3 vs OM4)

Symptoms: SR links exhibit higher error rates than expected even though distance is “within spec.” Root cause: Cabling labeled incorrectly during renovations, or legacy OM3 used where OM4 was assumed for reach calculations. Solution: Confirm fiber type with documentation and test methods, then select SR optics based on the real fiber category and measured link loss.

Cost and ROI: Budget Optics vs Lifecycle Reliability

For power plant fiber projects, the optics line item looks small compared with trenching and rack work, but it drives lifecycle costs. Typical pricing varies by OEM versus third-party and by temperature grade and DOM features. In many deployments, OEM 10G SR SFPs can run roughly $80 to $200 per unit, while third-party equivalents may be $35 to $120, depending on brand and warranty terms.

TCO should include downtime risk, labor time for troubleshooting, and spares strategy. If a single transceiver failure triggers a high-priority dispatch, the labor cost can exceed the price difference quickly. We built a spare plan with a mix of optics types and used monitoring alarms to extend service intervals. The ROI came from fewer unplanned interventions and faster restoration when issues did occur.
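The trade-off in this paragraph can be framed as a break-even count: how many extra emergency dispatches would cancel the up-front savings from cheaper optics. This is a deliberately simple sketch that ignores failure rates, spares holding costs, and warranty terms. The unit prices are midpoints of the ranges quoted above, the count assumes two optics per uplink across the 18 circuits, and the $1,500 dispatch cost is a hypothetical placeholder.

```python
def breakeven_dispatches(
    oem_unit_usd: float,
    third_party_unit_usd: float,
    units: int,
    dispatch_usd: float,
) -> float:
    """Extra emergency dispatches that would cancel the up-front optics savings."""
    savings_usd = (oem_unit_usd - third_party_unit_usd) * units
    return savings_usd / dispatch_usd


# Midpoint prices from the quoted ranges, 36 optics (assumed 2 per circuit x 18),
# and a hypothetical $1,500 cost per emergency dispatch.
print(breakeven_dispatches(140.0, 77.5, 36, 1500.0))  # 1.5
```

Under these assumptions, fewer than two additional high-priority dispatches over the life of the deployment would erase the savings, which is why validation and monitoring effort belongs in the optics budget.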

FAQ

What does “power plant fiber” mean in dam control design?

It refers to the installed fiber network that carries control telemetry, protection-related status, and monitoring traffic between plant zones. The design focus is reliability under temperature, humidity, and vibration, not just raw throughput.

Which SFP type should I choose for 2 km dam links?

At around 2 km, use single-mode optics such as 10G LR (1310 nm, 10 km class). 10G SR over multimode is specified to only about 300 m on OM3 and 400 m on OM4, so it cannot reach 2 km even on verified OM4 fiber; reserve SR for short multimode runs where the optical budget has margin.

Do I need DOM support for operations monitoring?

DOM is strongly recommended because it enables early-warning alarms on RX power, temperature, and bias currents. Without DOM, you often detect problems only after errors or link drops occur.

Can third-party SFPs work with OEM switches?

Yes, but compatibility is not guaranteed across firmware versions and switch platforms. Always validate in the target switch model, verify DOM parsing, and keep an approved spares list to reduce commissioning risk.

How do I prevent SFP-related outages during seasonal humidity?

Implement a hygiene routine: connector inspection, cleaning before mating, and protective patching practices. Also configure interface error monitoring and optical alarm thresholds so you catch degradation early.

What testing should I require before go-live?

At minimum: OTDR-based loss verification, live link tests with representative traffic, and monitoring for CRC and error counters. If your operations depend on deterministic behavior, also validate VLAN and QoS mappings end-to-end.

If you are planning power plant fiber for hydroelectric dam control systems, start with measured optical budgets and validated switch compatibility, then treat connector cleanliness and DOM telemetry as first-class engineering tasks. Next, review power and cooling planning for telecom racks to align optics placement, cabinet airflow, and power stability with your availability targets.

Author bio: I have designed and commissioned fiber and rack-based network infrastructure for industrial environments, including OTDR-driven acceptance and SFP interoperability testing. I focus on practical reliability: optical margins, connector hygiene, and monitoring that catches failures before operations feel them.