AI workloads in the data center create bursty, east-west traffic that is sensitive to latency, jitter, and oversubscription. This article helps network and infrastructure engineers evaluate optical networking options for AI clusters, including 400G-class transceivers, fiber reach planning, and compatibility risks between switches and optics. You will get practical selection criteria, a head-to-head comparison, and field troubleshooting patterns that reduce downtime during cutovers.

Latency and reach: 400G optical networking options for AI traffic

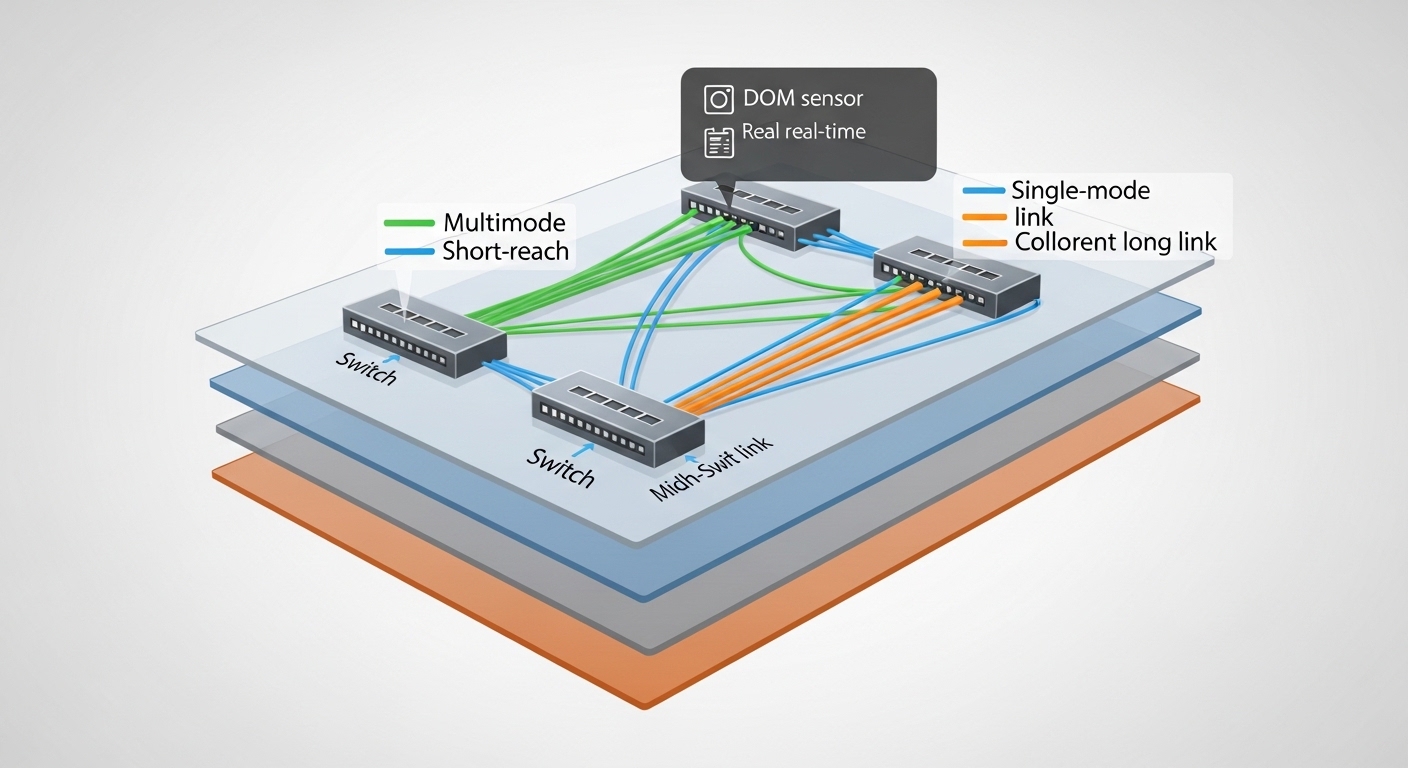

In AI training and inference, the “effective” distance is often the number of hops through leaf-spine layers, not just fiber meters. Engineers typically use short-reach optics between top-of-rack (ToR) and aggregation, then move to longer-reach optics toward the spine when needed. Inside a data hall, most 400G links use direct-detect multimode or single-mode optics; coherent optics are reserved for longer spans, depending on the data hall architecture. Your choice should align with the switch front-panel port type, the fiber plant (MMF vs SMF), and the operating temperature profile in the cage.

Quick spec reality check (what matters in the field)

For AI leaf-spine fabrics, the most common practical constraints are: optical budget margins, connector cleanliness, transceiver DOM behavior, and switch lane mapping. Even if a transceiver advertises the correct reach, a tight budget can fail under high insertion loss or aging. As a rule, plan margin for dust, patch panel loss, and future re-termination. For authoritative background on Ethernet PHY behavior, see [Source: IEEE 802.3].
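
The margin planning described above can be sketched as a simple power-budget calculation. This is a minimal illustration, not a standards-grade link model: the `LinkPlan` fields and the `link_margin_db` helper are hypothetical names, and every number you feed in (transmit power, receiver sensitivity, per-connector and per-splice loss) is an assumption that should come from the transceiver datasheet and your own loss measurements.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    tx_power_dbm: float           # minimum transmit power from the datasheet
    rx_sensitivity_dbm: float     # worst-case receiver sensitivity from the datasheet
    fiber_km: float               # installed fiber length
    fiber_loss_db_per_km: float   # attenuation at the operating wavelength
    connector_pairs: int          # mated connector pairs, including patch panels
    loss_per_connector_db: float  # assumed loss per mated pair
    splices: int                  # number of splices in the path
    loss_per_splice_db: float     # assumed loss per splice


def link_margin_db(plan: LinkPlan) -> float:
    """Return the power budget minus the estimated path loss, in dB."""
    budget = plan.tx_power_dbm - plan.rx_sensitivity_dbm
    path_loss = (
        plan.fiber_km * plan.fiber_loss_db_per_km
        + plan.connector_pairs * plan.loss_per_connector_db
        + plan.splices * plan.loss_per_splice_db
    )
    return budget - path_loss


# Placeholder values for illustration only; substitute datasheet and measured figures.
example = LinkPlan(-3.0, -7.0, 0.4, 0.5, 4, 0.5, 2, 0.1)
print(f"estimated margin: {link_margin_db(example):.2f} dB")
```

A small or negative result means the link has no room for dust, aging, or re-termination, even if the nominal reach looks fine.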

Head-to-head: direct-detect SR vs coherent LR/ZR for AI tiers

Below is a practical comparison for common 400G-class choices. Actual part numbers vary by vendor, but the engineering trade-offs remain consistent.

| Optical networking option | Typical data rate | Wavelength | Reach class | Fiber type | Connector | Power class (typ.) | Operating temp (typ.) |
|---|---|---|---|---|---|---|---|
| 400G direct-detect SR4 (multimode) | 400G (4x100G) | 850 nm | ~100 m class | OM4/OM5 MMF (parallel) | MPO-12 | ~7–10 W | 0 to 70 °C |
| 400G direct-detect DR4/FR4 (single-mode) | 400G (4x100G) | 1310 nm (DR4); CWDM around 1310 nm (FR4) | ~500 m (DR4); ~2 km (FR4) | SMF | MPO-12 (DR4); duplex LC (FR4) | ~8–12 W | -5 to 70 °C |
| 400G coherent (long-reach) | 400G (coherent) | C-band (varies) | ~40–80 km and beyond | SMF | LC/SC (varies) | ~15–25 W | -5 to 70 °C |

Use direct-detect optics when the fiber plant supports them; use coherent when you must push distance or consolidate longer runs with fewer intermediate hops. For AI fabrics, coherent is uncommon within a single hall, but it becomes relevant when you connect multiple halls or training clusters that are built out in stages.

Cost and power: OEM vs third-party optics for AI clusters

Optical networking cost is not just the transceiver unit price; it is also power draw, spares strategy, and failure impact. In a modern AI cluster, a single failed link can stall distributed training, so availability matters. OEM optics often command higher prices but reduce compatibility friction with switch firmware and optics validation routines. Third-party optics can lower capex, but you must validate DOM support, lane mapping, and vendor-specific diagnostics behavior before scaling.

What engineers measure during rollout

During acceptance testing, measure link bring-up time, error counters under load, and DOM readings consistency. For power, record transceiver module temperature and total rack thermal headroom, especially when you upgrade from 100G to 400G. A typical operational approach is to stage a pilot with 20–40 links per switch model, then run traffic at line rate for 24–72 hours while monitoring CRC/BER and optical receive power thresholds.
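
As a rough sketch of how a soak-test result might be evaluated, the snippet below compares interface counters from the start and end of the test window and checks the lowest receive power seen through DOM. The `Counters` class, the `soak_passes` function, and the default of zero tolerated CRC errors are assumptions for illustration; your platform's counter names and acceptance thresholds will differ.

```python
from dataclasses import dataclass


@dataclass
class Counters:
    rx_frames: int   # frames received on the interface
    crc_errors: int  # frames received with CRC errors


def soak_passes(
    start: Counters,
    end: Counters,
    min_rx_power_dbm: float,       # lowest DOM receive power seen during the soak
    rx_low_warn_dbm: float,        # low-warning threshold reported by the module
    max_error_ratio: float = 0.0,  # assumed acceptance limit for errored frames
) -> bool:
    """Return True if the link stayed clean and above the receive power threshold."""
    frames = end.rx_frames - start.rx_frames
    errors = end.crc_errors - start.crc_errors
    if frames <= 0:
        return False  # no traffic observed, so the soak proves nothing
    error_ratio = errors / frames
    return error_ratio <= max_error_ratio and min_rx_power_dbm > rx_low_warn_dbm
```

Using counter deltas rather than absolute values keeps errors from earlier bring-up attempts from failing a link that is now clean.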

Pro Tip: In many AI data centers, the biggest “hidden cost” is not the optics price but the time lost to repeated link flaps caused by marginal fiber cleaning. Standardize a cleaning workflow (lint-free wipes plus validated connector inspection) and enforce it at every swap; it often prevents weeks of intermittent training instability.

Compatibility and DOM: how to avoid switch-port surprises

AI fabrics often run with strict optics validation, and optical networking modules must match the switch’s expected electrical interface and supported DOM features. Before ordering at scale, confirm the switch model’s transceiver interoperability list, then verify DOM fields such as temperature, bias current, laser output, and received power. If a transceiver reports DOM values but the switch rejects it, you can end up with “link down” states that look like cabling issues.
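
One way to make the DOM check repeatable is to compare each reading against explicit limits. The sketch below assumes you have already collected the readings into a dictionary by whatever means your switch provides; the field names, the `check_dom` helper, and the limit values in the example are hypothetical and do not correspond to any specific vendor's output.

```python
# Field names are illustrative; map them to what your switch actually reports.
REQUIRED_FIELDS = ("temperature_c", "bias_current_ma", "tx_power_dbm", "rx_power_dbm")


def check_dom(
    readings: dict[str, float],
    limits: dict[str, tuple[float, float]],
) -> list[str]:
    """Return a list of problems; an empty list means every field is present and in range."""
    problems = []
    for field in REQUIRED_FIELDS:
        if field not in readings:
            problems.append(f"{field}: missing")
            continue
        low, high = limits[field]  # limits must cover every required field
        value = readings[field]
        if not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


# Placeholder limits for illustration; take real thresholds from the module itself.
example_limits = {
    "temperature_c": (0.0, 70.0),
    "bias_current_ma": (1.0, 15.0),
    "tx_power_dbm": (-6.0, 4.0),
    "rx_power_dbm": (-8.0, 4.0),
}
print(check_dom({"temperature_c": 45.0, "bias_current_ma": 7.0, "tx_power_dbm": 0.5}, example_limits))
```

A missing field is reported separately from an out-of-range one, since a module that does not expose a value is a compatibility problem rather than an optical one.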

Selection criteria: decision checklist you can use immediately

- Distance and fiber type: confirm OM4/OM5 vs SMF, and validate patch panel loss and splices.

- Switch compatibility: check the vendor interoperability guide for the exact switch SKU and transceiver speed/format.

- DOM support and behavior: ensure the module exposes required diagnostic fields and thresholds.

- Operating temperature: verify the transceiver spec matches the cage airflow and worst-case inlet temperatures.

- Budget and TCO: compare OEM vs third-party including spares, power, and labor for validation.

- Vendor lock-in risk: assess whether the switch firmware enforces strict optics whitelisting.

- Migration path: plan for future 800G/1.6T upgrades by ensuring optics form factors and lanes are compatible.

For optical link engineering fundamentals, IEEE Ethernet standards provide the baseline for link behavior, while vendor datasheets define module electrical and optical limits. See [Source: IEEE 802.3] and consult the specific transceiver datasheet for the module you plan to deploy.

Deployment scenario: upgrading an AI leaf-spine fabric without downtime

Consider a three-tier data center fabric (ToR, aggregation, spine) in which 48-port 10G/25G ToR switches are upgraded to a mix of 100G and 400G uplinks for an AI training cluster. The rack group holds 120 servers, each using GPU accelerators that generate east-west traffic, and you must reduce oversubscription from 3:1 to 2:1. The fiber plant includes OM4 within the ToR rows and SMF from ToR to spines. The migration plan uses direct-detect 400G SR4 within rows (short reach) and direct-detect 400G DR4 for ToR-to-spine runs that fit the measured loss budget, reserving coherent for cross-hall links that exceed direct-detect reach.
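
To make the oversubscription target concrete, here is a short worked calculation. It assumes all 48 downlink ports run at 25G and picks two illustrative uplink mixes; the scenario does not state the exact uplink counts, so treat those as assumptions.

```python
def oversubscription(downlink_gbps: float, uplink_gbps: float) -> float:
    """Ratio of server-facing capacity to fabric-facing capacity on one ToR."""
    return downlink_gbps / uplink_gbps


# Assumption: every one of the 48 ports is populated at 25G.
downlink = 48 * 25  # 1200 Gb/s of server-facing capacity

# Assumed starting point: four 100G uplinks.
before = oversubscription(downlink, 4 * 100)

# Assumed target: one 400G uplink plus two 100G uplinks.
after = oversubscription(downlink, 400 + 2 * 100)

print(f"before: {before:.0f}:1, after: {after:.0f}:1")
```

Under these assumptions, moving from 400 Gb/s to 600 Gb/s of uplink capacity per ToR is what takes the ratio from 3:1 to 2:1.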

During the change window, you pre-stage transceivers, verify DOM readings after insertion, and run a traffic generator at full line rate for each uplink set. Engineers also monitor optical receive power and interface error counters, then only move to the next batch after the counters remain stable. This staged approach prevents one misfit optic batch from halting the entire training job.
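
The batch gating described above can be sketched as a loop that stops at the first batch containing an unstable link. The `staged_rollout` function and the `is_stable` callback are hypothetical; in practice the callback would wrap your own checks on DOM readings and error counters.

```python
from typing import Callable, Sequence


def staged_rollout(
    batches: Sequence[Sequence[str]],
    is_stable: Callable[[str], bool],
) -> tuple[list[str], list[str]]:
    """Return (accepted_links, failed_links), halting at the first batch with failures."""
    accepted: list[str] = []
    for batch in batches:
        failed = [link for link in batch if not is_stable(link)]
        if failed:
            # Do not touch later batches until the failures are understood.
            return accepted, failed
        accepted.extend(batch)
    return accepted, []


# Illustrative run: link names and the unstable set are made up.
unstable = {"uplink-4"}
done, bad = staged_rollout(
    [["uplink-1", "uplink-2"], ["uplink-3", "uplink-4"], ["uplink-5"]],
    lambda link: link not in unstable,
)
print(done, bad)
```

Halting on the first failing batch limits the blast radius: later batches stay on their known-good optics until the cause is found.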

Common pitfalls and troubleshooting: what causes optical networking failures

Even with correct specs, optical networking deployments fail in recognizable patterns. Below are concrete issues I have seen during field bring-ups, with root causes and solutions.

Intermittent link flaps after a “successful” insertion

Root cause: connector contamination (dust or film) or a partially seated LC connector. High-speed optics can be sensitive to micro-misalignment, causing receive power to oscillate.

Solution: clean connectors using a validated procedure, inspect under magnification, and re-seat while checking for latch engagement. Then re-check receive power thresholds via DOM.
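
A simple way to spot oscillating receive power is to look at the peak-to-peak swing across a series of DOM samples. The sketch below is only a heuristic: the function names and the 1.0 dB default threshold are assumptions, and a sensible threshold depends on the module and polling interval.

```python
def rx_power_swing_db(samples_dbm: list[float]) -> float:
    """Peak-to-peak variation across DOM receive power samples, in dB."""
    if len(samples_dbm) < 2:
        raise ValueError("need at least two samples to measure a swing")
    return max(samples_dbm) - min(samples_dbm)


def looks_unstable(samples_dbm: list[float], max_swing_db: float = 1.0) -> bool:
    """Flag a link whose receive power moves more than the assumed threshold."""
    return rx_power_swing_db(samples_dbm) > max_swing_db
```

Comparing the swing before and after cleaning and re-seating gives a quick indication of whether the fix held.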

Switch reports unsupported transceiver or link stays down

Root cause: DOM fields not matching what the switch expects, or optics format mismatch (for example, speed/encoding variant). Some platforms also enforce stricter whitelisting than others.

Solution: confirm the transceiver part number against the switch’s interoperability matrix, update switch firmware if the vendor recommends it, and test one module per batch before scaling.

“Too much margin” that still fails under load

Root cause: optical budget calculations ignored patch panel aging, temperature effects, or connector rework loss. Engineers sometimes validate with a handheld meter under benign conditions (cool room, light load), then see failures at thermal steady-state.

Solution: measure end-to-end insertion loss with a calibrated optical loss test set, use an OTDR to locate high-loss connectors or splices, add margin for worst-case conditions, and perform a soak test at target airflow temperatures.

BER/CRC errors increase only after a transceiver swap

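Root cause: wrong fiber polarity or swapped transmit/receive fibers in duplex LC patching, especially when labeling was inconsistent during earlier upgrades. A full transmit/receive swap usually keeps the link down outright, so if the link comes up but shows errors, also suspect a connector that was contaminated or poorly seated during the swap.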

Solution: verify polarity with a structured labeling plan; correct patching and validate the link with traffic at line rate while watching error counters.

Cost and ROI note: building a resilient optics spares strategy

In practice, OEM optics for 400G-class links often cost more upfront than third-party modules, but they reduce validation labor and compatibility risk. Pricing for a 400G direct-detect module varies widely by vendor and reach class, so treat it as a procurement exercise with quotes, not a fixed number. TCO should include power consumption (which affects cooling and rack budgets), spares inventory (how many modules per switch), and expected failure rates in your environment. For AI clusters, the ROI of getting this right is measured in training job continuity and faster recovery during incidents, not only in unit price.
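
The TCO components listed above can be combined in a back-of-the-envelope model. This is a deliberately simple sketch: the `optics_tco` function is hypothetical, it ignores cooling overhead and failure replacement, and every input in the example is a placeholder rather than a real price or power figure.

```python
HOURS_PER_YEAR = 8760


def optics_tco(
    modules_in_service: int,
    spare_modules: int,
    unit_price: float,
    watts_per_module: float,
    price_per_kwh: float,
    years: float,
    validation_labor_cost: float = 0.0,
) -> float:
    """Purchase cost plus module energy cost plus one-off validation labor."""
    capex = (modules_in_service + spare_modules) * unit_price
    # Only modules in service draw power; spares sit on the shelf.
    energy_kwh = modules_in_service * watts_per_module / 1000 * HOURS_PER_YEAR * years
    return capex + energy_kwh * price_per_kwh + validation_labor_cost


# Placeholder inputs for illustration only; use quotes and measured power instead.
print(optics_tco(8, 2, 100.0, 10.0, 0.5, 1))
```

Running the same function with OEM and third-party quotes, and a realistic labor figure for validating the third-party option, makes the comparison explicit instead of anecdotal.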

Decision matrix: which optical networking option fits your constraints?

Use this matrix to choose between direct-detect SR/DR and coherent long-reach optics, based on the constraints that typically drive procurement and design reviews.

| Decision factor | Direct-detect SR (multimode) | Direct-detect DR (single-mode) | Coherent long-reach |
|---|---|---|---|
| Typical AI intra-row reach | Best fit | Good when SMF is available | Usually overkill |
| Cross-hall or long runs | Limited by reach | May fit mid distances | Best fit |
| Power and thermal load | Lower | Moderate | Higher |
| Compatibility friction | Moderate to low with correct part numbers | Moderate | Moderate; often platform-specific |
| Procurement simplicity | High | Medium | Lower due to coherent ecosystem |

Which Option Should You Choose?

If you are building or upgrading an AI leaf-spine fabric inside a single data hall, choose direct-detect SR when your fiber plant supports OM4/OM5 and the measured loss fits the optical budget. If you need a longer but still “data center distance” uplink and you have SMF, pick direct-detect DR and validate polarity, connector cleanliness, and DOM behavior. Choose coherent when you must span long distances between halls or consolidation sites, and you can justify higher power and platform-specific requirements.
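
As a first-pass filter, the guidance above can be expressed as a small function. The reach limits here are nominal assumptions (100 m for SR over OM4/OM5, 500 m for DR over SMF), and the `choose_optic` helper is hypothetical; measured loss, connector count, and switch support still decide whether a given link actually works.

```python
SR_MAX_M = 100   # assumed nominal reach for 400G SR over OM4/OM5
DR_MAX_M = 500   # assumed nominal reach for 400G DR over SMF


def choose_optic(distance_m: float, fiber: str) -> str:
    """Suggest an optics class from link distance and installed fiber type."""
    fiber = fiber.upper()
    if fiber in ("OM4", "OM5") and distance_m <= SR_MAX_M:
        return "direct-detect SR"
    if fiber == "SMF" and distance_m <= DR_MAX_M:
        return "direct-detect DR"
    if fiber == "SMF":
        return "coherent"
    # Multimode fiber beyond SR reach: the fiber plant, not the optic, is the constraint.
    return "no fit"


print(choose_optic(60, "OM4"), choose_optic(300, "SMF"), choose_optic(40_000, "SMF"))
```

A "no fit" result signals that the fiber plant needs attention before any transceiver choice will help.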

Next step: map your fiber plant loss and switch port requirements, then run a small optics pilot with defined acceptance tests before scaling.

FAQ

Q: What optical networking type is most common for AI clusters?

A: Most AI intra-hall designs favor direct-detect multimode (SR) or single-mode (DR) based on reach and fiber availability. Coherent is more common for long cross-hall links or where you must reduce intermediate equipment.

Q: How do I verify DOM support before ordering 400G optics at scale?

A: Insert a single module into a test switch of the same model and firmware revision, then read the transceiver diagnostic fields and ensure the switch accepts the module. Run traffic briefly and confirm no unexpected interface resets or error spikes.

Q: Are third-party optical networking modules safe for production?

A: They can be,