When a 10G or 100G link suddenly fails acceptance, the root cause is often not the optics themselves but the way the optical BER measurement was performed and judged. This article helps network and transport engineers define defensible pass/fail criteria for optical transceivers, from QSFP/SFP modules to DWDM and PON optics. You will get field-usable thresholds, a step-by-step test workflow, and troubleshooting patterns that match what I see during deployments. It is written for teams validating links for data centers, fronthaul/backhaul, and metro transport where repeatable results matter.

Why optical BER measurement pass/fail criteria vary by standard

Bit error rate (BER), more precisely the bit error ratio, is the number of errored bits divided by the total number of bits received during a test interval. The acceptance threshold depends on the line rate, modulation, coding, and receiver sensitivity defined by the relevant standard or vendor compliance plan. In practice, two labs can measure the same transceiver and report different BER numbers if the test conditions differ: pattern type, PRBS length, optical launch power, receiver overload margin, or whether forward error correction (FEC) is in use. For Ethernet optics, IEEE 802.3 specifies optical link performance and test methodology, but vendors may still provide additional recommended test conditions in datasheets. For transport systems, ITU-T and internal operator specs can shift the “acceptable” error rate target.

From field work, the most reliable approach is to treat pass/fail as a combination of measured BER (or post-FEC error count) plus optical power and eye quality checks. For example, on a 100G QSFP28 link, I typically verify optical power in dBm, confirm the module DOM telemetry (temperature, bias current, laser power), then run BER with a PRBS pattern aligned to the transceiver’s compliance mode. If you only chase BER without checking optical level and receiver operating region, you can pass a link that will later fail under temperature swings or aging.

Common acceptance philosophies you can operationalize

- BER-threshold acceptance: declare pass when BER is below a specified target at a defined test interval and pattern.

- Error-count acceptance: declare pass when total errors remain below a count within a time window (especially when BER is extremely low).

- FEC-aware acceptance: declare pass based on corrected and uncorrectable error metrics after FEC, plus optical margin constraints.

In many real acceptance test plans, you will see both BER and optical budget requirements combined. That is because BER alone can be misleading if you are not operating near the intended launch power and receiver gain settings.
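To make the relationship between a BER threshold and an error-count threshold concrete, here is a minimal Python sketch. The function names and the example numbers are my own illustration, not taken from any standard; the only fixed fact used is the 10.3125 Gb/s line rate of 10GBASE-R. Whether a result exactly at the target counts as a pass is a choice your test plan has to make.

```python
import math


def measured_ber(error_count: int, line_rate_bps: float, seconds: float) -> float:
    """Ratio of errored bits to total bits received in the test window."""
    total_bits = line_rate_bps * seconds
    if total_bits <= 0:
        raise ValueError("line rate and duration must be positive")
    return error_count / total_bits


def max_allowed_errors(ber_target: float, line_rate_bps: float, seconds: float) -> int:
    """Largest error count whose measured BER does not exceed the target."""
    return math.floor(ber_target * line_rate_bps * seconds)


# Hypothetical acceptance window: 10GBASE-R line rate, 120 s, target 1e-12.
RATE_BPS = 10.3125e9
WINDOW_S = 120
print("error budget:", max_allowed_errors(1e-12, RATE_BPS, WINDOW_S))
print("BER with 1 error:", measured_ber(1, RATE_BPS, WINDOW_S))
```

With a very low target and a short window the error budget collapses to a handful of errors or fewer, which is why an explicit count is often easier to judge than a computed BER.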

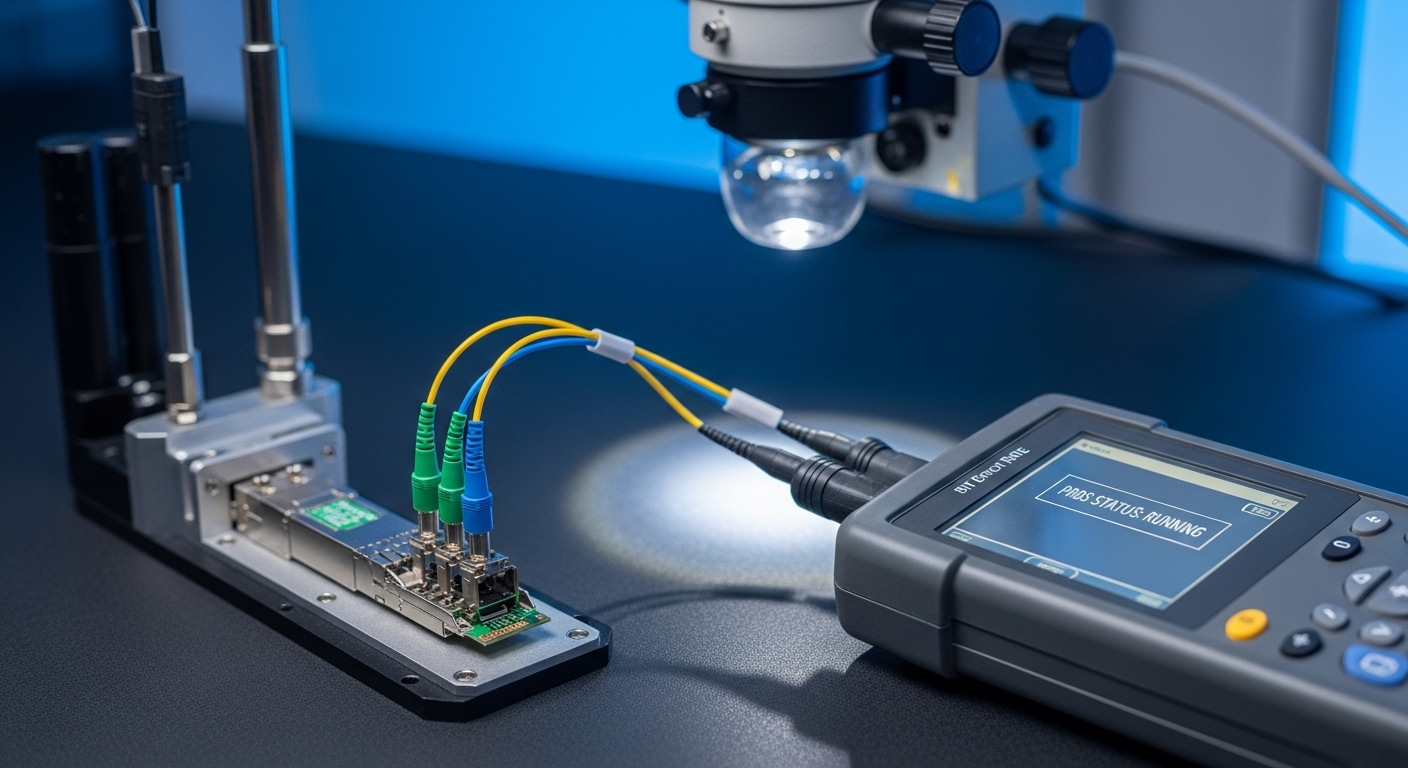

Build a defensible BER test setup: patterns, windows, and optical levels

To make your optical BER measurement defensible, standardize the test bench: PRBS generator/synchronization, test interval, signal levels, fiber type, and connector cleanliness. I have walked into sites where “BER failure” was actually a dirty MPO cassette; the fix was inspection, re-termination, and re-cleaning, not swapping optics. The acceptance test should also record the optical path loss and the actual measured receive power at the receiver input, not just the nominal link budget.
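One way to enforce that discipline is to store every run as a structured record. The sketch below is only an illustration: the `BerTestRecord` class, its field names, and the choice of which fields must match are my assumptions, so adapt them to your own procedure.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class BerTestRecord:
    """One BER run, with the conditions needed to defend the result later."""
    prbs_pattern: str
    fec_mode: str
    line_rate_gbps: float
    test_seconds: int
    fiber_type: str
    path_loss_db: float        # measured, not the nominal link budget
    rx_power_dbm: float        # measured at the receiver input
    connector_inspected: bool


# Assumed rule: results are comparable only if these conditions match.
COMPARABLE_FIELDS = ("prbs_pattern", "fec_mode", "line_rate_gbps", "test_seconds")


def condition_mismatches(a: BerTestRecord, b: BerTestRecord) -> list:
    """Return the names of test conditions that differ between two runs."""
    da, db = asdict(a), asdict(b)
    return [name for name in COMPARABLE_FIELDS if da[name] != db[name]]


# Hypothetical records from two sites testing the same module type.
site_a = BerTestRecord("PRBS-31", "none", 25.78125, 120, "OM4", 1.8, -3.2, True)
site_b = replace(site_a, prbs_pattern="PRBS-9", test_seconds=60, rx_power_dbm=-3.4)
print(condition_mismatches(site_a, site_b))
```

An empty list means the two BER numbers were taken under the same declared conditions; anything else should be resolved before the results are compared.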

For Ethernet optics, PRBS-31 is a common stress pattern, and shorter patterns such as PRBS-9 may appear depending on the equipment and standard. For 25G/50G/100G, PRBS selection and test mode must match the transceiver’s supported compliance mode. If the transceiver supports FEC and your link uses it, align the BER measurement tool to the same FEC mode; otherwise, you may be judging pre-FEC errors that are not the operator’s real performance metric.

Technical specifications table: typical BER test parameters you will standardize

| Parameter | Typical field value | Why it matters for pass/fail |

|---|---|---|

| Data rate | 10G / 25G / 50G / 100G | BER targets and receiver sensitivity differ by rate and coding. |

| PRBS pattern | PRBS-31 (or vendor-specified) | Pattern affects error distribution and jitter tolerance tests. |

| Test interval | 60 s to 300 s per power point | Short windows can hide intermittent errors; long windows reveal instability. |

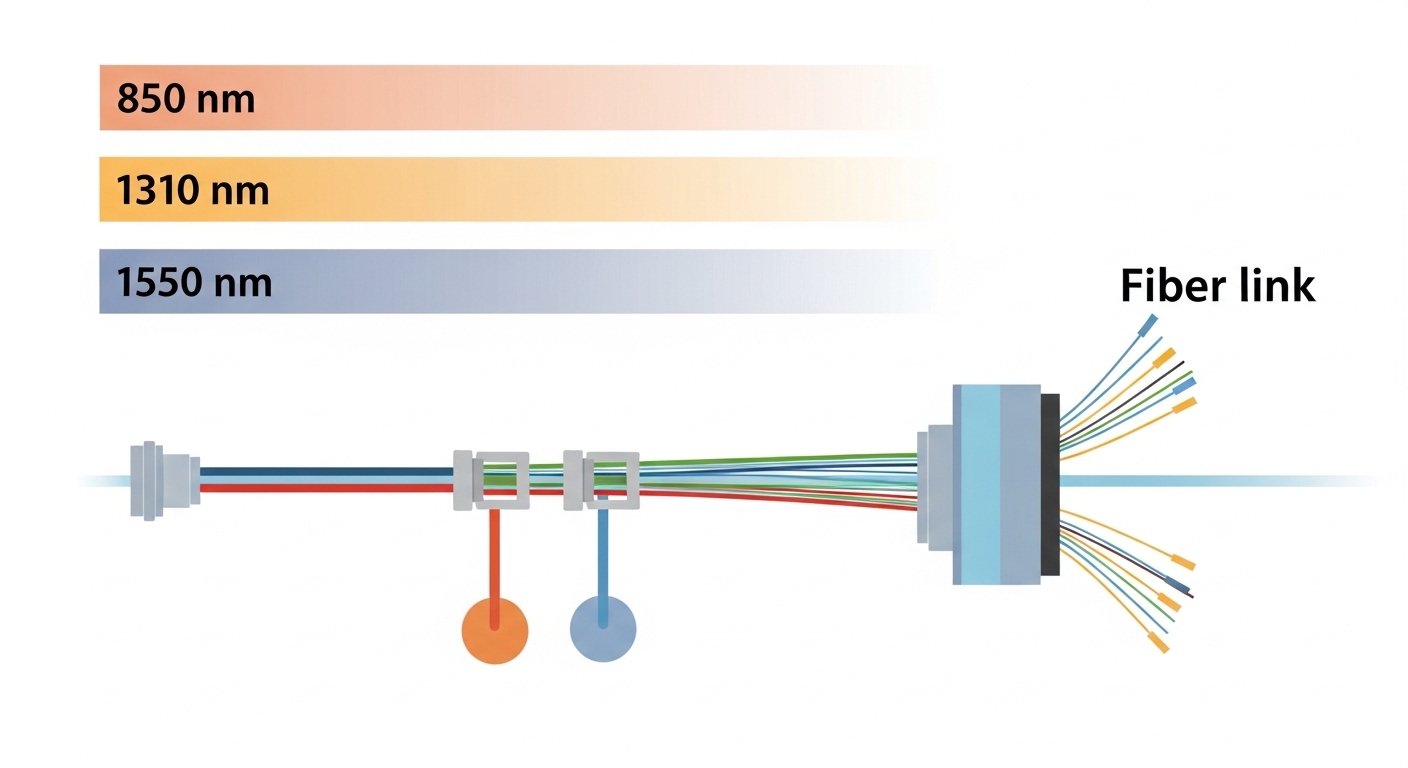

| Optical wavelength (example) | 850 nm (SR) or 1310 nm/1550 nm | Fiber and connector loss profiles depend on wavelength. |

| Connector type | MPO/MTP or LC | Cleaning and polarity handling impact real BER outcomes. |

| Operating temperature range | 0 to 70 C for many enterprise optics; extended ranges for transport | DOM data and margin must match the deployment environment. |

| DOM support | Yes (per SFP/QSFP MSA) | Lets you correlate BER events with bias current and laser temperature. |

For standards references, IEEE 802.3 provides the framework for link performance and test practices for Ethernet physical layers. For the expected electrical and optical behavior of the modules themselves, consult vendor datasheets and the relevant MSA documents; in the field, these often define the practical “how to test” boundaries. [Source: IEEE 802.3](https://standards.ieee.org/standard/802_3)

Pass/Fail criteria: practical thresholds you can adopt without guesswork

There is no single universal BER target that applies to every link, because “pass” is tied to the system’s coding and performance model. In many Ethernet deployments, the acceptance target is expressed as a BER threshold or an equivalent error count over a fixed time, sometimes with additional requirements like “no loss of sync” and “no uncorrectable FEC events.” When FEC is used, post-FEC metrics are often the acceptance decision driver, because the uncorrectable error rate is what affects user traffic.

Here is a field-friendly way to set criteria that are consistent across sites: define your acceptance as a two-stage gate. First, require optical health and link alignment (receive power within your allowed window, stable DOM telemetry, clean connectors, correct polarity). Second, require BER performance at one or more optical power points, using an agreed PRBS pattern and a minimum test time. This structure prevents “false passes” caused by overdriving or by measuring only at one convenient power level.
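The two-stage gate can be sketched in a few lines of Python. Everything named here (`OpticalHealth`, `BerPoint`, the failure messages, and the window and threshold values in the example) is hypothetical and stands in for whatever your operator spec defines.

```python
from dataclasses import dataclass


@dataclass
class OpticalHealth:
    rx_power_dbm: float      # measured at the receiver input
    dom_stable: bool         # DOM telemetry stayed within the expected range
    connectors_clean: bool   # inspection passed before the run
    polarity_ok: bool


@dataclass
class BerPoint:
    rx_power_dbm: float
    ber: float
    seconds: float
    lost_sync: bool = False


def stage1_failures(h, rx_min_dbm, rx_max_dbm):
    """Stage 1: optical health and link alignment."""
    failures = []
    if not rx_min_dbm <= h.rx_power_dbm <= rx_max_dbm:
        failures.append("rx power outside allowed window")
    if not h.dom_stable:
        failures.append("DOM telemetry unstable")
    if not h.connectors_clean:
        failures.append("connectors not inspected clean")
    if not h.polarity_ok:
        failures.append("polarity not verified")
    return failures


def stage2_failures(points, ber_threshold, min_seconds):
    """Stage 2: BER at each agreed optical power point."""
    failures = [] if points else ["no BER points recorded"]
    for p in points:
        label = f"{p.rx_power_dbm:+.1f} dBm"
        if p.seconds < min_seconds:
            failures.append(f"{label}: test shorter than minimum")
        if p.lost_sync:
            failures.append(f"{label}: loss of sync")
        if p.ber >= ber_threshold:
            failures.append(f"{label}: BER not below threshold")
    return failures


def two_stage_gate(h, points, rx_min_dbm, rx_max_dbm, ber_threshold, min_seconds):
    """Judge BER only after the optical path is confirmed healthy."""
    failures = stage1_failures(h, rx_min_dbm, rx_max_dbm)
    if not failures:
        failures = stage2_failures(points, ber_threshold, min_seconds)
    return (not failures, failures)


# Hypothetical run: nominal point plus one reduced-power margin point.
health = OpticalHealth(-4.0, True, True, True)
points = [BerPoint(-4.0, 1e-13, 120), BerPoint(-7.0, 5e-13, 120)]
print(two_stage_gate(health, points, -10.0, 0.0, 1e-12, 60))
```

Stopping at stage 1 is deliberate: a BER number taken over an unhealthy optical path tells you little, so the sketch reports the path problem instead.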

Example acceptance templates (adjust to your operator spec)

- Pre-FEC BER gate: pass when BER is below the project threshold during at least 60 s at the nominal receive power.

- Error-count gate: pass when errors remain below a defined count over 120 s (useful when BER targets are extremely low).

- Post-FEC gate: pass when uncorrectable error counters remain at zero during the test interval, and corrected error counters stay within the expected range.

Because thresholds vary by coding and equipment, I recommend capturing the acceptance logic directly in your test procedure and mapping it to the exact transceiver family and test instrument. If your vendor provides a compliance test report, align your pass/fail targets with the same test conditions in that report to reduce disputes during commissioning.
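As one example of capturing that logic, the post-FEC gate from the templates above might look like the following sketch. The `FecCounters` class and the `max_corrected` limit are assumptions for illustration; real counter names and expected ranges come from your equipment and vendor documentation.

```python
from dataclasses import dataclass


@dataclass
class FecCounters:
    corrected: int       # codewords or symbols corrected, as your platform reports them
    uncorrectable: int   # codewords FEC could not repair


def post_fec_gate(start: FecCounters, end: FecCounters, max_corrected: int) -> bool:
    """Pass when no uncorrectable events occurred during the interval and
    the corrected count stayed within the expected range."""
    d_corrected = end.corrected - start.corrected
    d_uncorrectable = end.uncorrectable - start.uncorrectable
    if d_corrected < 0 or d_uncorrectable < 0:
        # Counters went backwards: they were cleared or wrapped mid-test.
        raise ValueError("counter reset during test interval; rerun the test")
    return d_uncorrectable == 0 and d_corrected <= max_corrected


# Hypothetical snapshots read before and after the test interval.
before = FecCounters(corrected=1200, uncorrectable=0)
after = FecCounters(corrected=1950, uncorrectable=0)
print(post_fec_gate(before, after, max_corrected=5000))
```

Working on counter deltas rather than absolute values keeps errors from earlier link flaps or previous tests out of the decision.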

Pro Tip: In many field failures, the BER number looks “close enough” while the link becomes unstable after thermal drift. Always run at least two temperature points (or two time-separated runs) and log DOM values like laser bias current and module temperature alongside BER. The correlation often reveals marginal operation well before it becomes a hard failure.
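A simple way to check for that correlation is a Pearson coefficient between a DOM reading and the error count per logging interval. This is a rough sketch using only the standard library; the sample values are invented, and a coefficient near 1 is a hint to investigate, not proof of a thermal problem.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 samples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0  # a flat series carries no correlation information
    return sxy / math.sqrt(sxx * syy)


# Hypothetical log: module temperature (deg C) and errors per interval.
module_temp_c = [35.0, 41.0, 48.0, 55.0]
errors_per_interval = [0, 0, 3, 11]
print(round(pearson(module_temp_c, errors_per_interval), 2))
```

The same function works for laser bias current or receive power against error count, as long as the samples are taken at the same timestamps.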

Distance, fiber type, and wavelength: choosing the right test points

Acceptance test points should reflect the true operating region, not just the shortest path. For example, if your deployment uses OM4 or single-mode fiber with patch cords and splitters, you need to measure and test at the actual expected receive power after all components. For DWDM optics, wavelength accuracy and channel spacing affect receiver performance; for PON, optical power budgets and splitter loss dominate, so you should verify the same launch conditions used by the PON OLT/ONU.
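Before measuring, it helps to estimate the receive power you should expect so that a large gap between estimate and measurement stands out. The sketch below is plain link-budget arithmetic; the function names and every loss figure in the example are placeholder assumptions, so substitute measured or datasheet values.

```python
def path_loss_db(fiber_km, fiber_db_per_km, connectors, connector_db,
                 splices, splice_db, splitter_db=0.0):
    """Sum the loss contributions along the optical path, in dB."""
    return (fiber_km * fiber_db_per_km
            + connectors * connector_db
            + splices * splice_db
            + splitter_db)


def expected_rx_power_dbm(launch_dbm, loss_db):
    """Receive power is launch power minus total path loss."""
    return launch_dbm - loss_db


# Hypothetical single-mode path: 10 km, 4 connectors, 2 splices, no splitter.
loss = path_loss_db(fiber_km=10, fiber_db_per_km=0.35,
                    connectors=4, connector_db=0.5,
                    splices=2, splice_db=0.1)
print(f"path loss: {loss:.1f} dB")
print(f"expected rx: {expected_rx_power_dbm(0.0, loss):.1f} dBm")
```

For a PON path, the `splitter_db` term usually dominates, which is why it is kept as a separate input here.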

In practice, I set up BER testing at the nominal receive power, then step the receive power down in controlled increments with a variable optical attenuator to confirm margin. If your tool supports it, also test at the worst-case expected temperature and connector condition. This is how you catch links that pass at the bench but fail after field re-termination or when a connector is slightly misaligned.
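The power-stepping procedure reduces to two small helpers: one to generate the test points and one to read the margin off the results. This is a sketch under my own conventions (points ordered from nominal downward, pass means BER strictly below the threshold); the numbers in the example are invented.

```python
def sweep_points(nominal_dbm, step_db, steps):
    """Receive-power test points from nominal downward."""
    return [nominal_dbm - i * step_db for i in range(steps + 1)]


def margin_db(results, ber_threshold):
    """Margin between nominal and the lowest consecutive passing point.

    results: list of (rx_power_dbm, ber), ordered from nominal downward.
    Returns None when the nominal point itself fails.
    """
    if not results:
        raise ValueError("no sweep results")
    nominal = results[0][0]
    lowest_pass = None
    for power, ber in results:
        if ber >= ber_threshold:
            break  # stop at the first failing point
        lowest_pass = power
    if lowest_pass is None:
        return None
    return nominal - lowest_pass


# Hypothetical sweep: 1 dB steps below a -5 dBm nominal point.
print(sweep_points(-5.0, 1.0, 3))
results = [(-5.0, 1e-13), (-6.0, 1e-13), (-7.0, 5e-12), (-8.0, 1e-9)]
print(margin_db(results, ber_threshold=1e-12))
```

The margin reported is only as fine as the step size, so a link that passes at 1 dB below nominal and fails at 2 dB has somewhere between 1 and 2 dB of demonstrated margin.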

Selection criteria / decision checklist (ordered like a field worksheet)

- Distance and link budget: confirm actual measured attenuation and connector/splice loss.

- Data rate and coding/FEC mode: ensure BER test matches the link’s real operating mode.

- Switch and transceiver compatibility: verify transceiver type is supported by the switch firmware and optics qualification list.

- DOM support and logging: require temperature/bias/laser power telemetry to correlate errors with optical health.

- Operating temperature range: pick acceptance windows that cover the deployment environment (especially for extended reach optics).

- Vendor lock-in risk: avoid acceptance procedures that only work with one vendor’s tool or only accept one optical power profile.

- Connector and cleaning strategy: standardize MPO/MTP polarity handling, LC cleaning method, and inspection before every BER run.

Real-world deployment scenario: acceptance testing in a metro transport handoff

In a metro transport project, we commissioned a 3-site chain connecting data center aggregation to a regional router core using 100G optics over single-mode fiber with patch panels and controlled slack. Each site had roughly 18 dB total loss including connectors and splices, and the transceivers were QSFP28 LR-type modules operating around 1310 nm with vendor-specified launch power targets. During acceptance, we measured receive power at the far end and set BER testing at the nominal point and at a reduced power point to verify margin. The pass/fail decision required (1) no link resets, (2) stable DOM telemetry within the expected range, and (3) BER below the agreed threshold for 120 s using the configured PRBS pattern. One batch initially “failed BER” until we discovered a contaminated connector face; after cleaning and re-inspection, the same modules passed without replacement.

Common pitfalls and troubleshooting tips for optical BER measurement

Even experienced teams can misinterpret results. Below are real failure modes I have seen, including the root cause and the corrective action that usually fixes the issue.

Pitfall 1: Testing at the wrong optical power point

Root cause: The receive power is outside the transceiver’s intended operating region, causing receiver overload or insufficient sensitivity. BER can look “bad” or “random” due to clipping or excessive noise.

Solution: Measure receive power at the receiver input, then repeat BER at the nominal point and one margin point. Use the same attenuator settings and document them in the acceptance record.

Pitfall 2: PRBS pattern mismatch or loss of pattern lock

Root cause: The BER tester and the link are not using the same PRBS mode, or the test fails pattern synchronization. Some instruments still show a number even when lock is unstable.

Solution: Verify PRBS lock status before starting the error measurement, and confirm the test configuration matches the transceiver compliance mode.
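If you post-process instrument logs yourself, you can build the same safeguard into the calculation. The sketch below assumes you have a list of lock-status samples taken during the window; `ber_if_locked` is a hypothetical helper, not an instrument API.

```python
from typing import Optional, Sequence


def ber_if_locked(lock_samples: Sequence[bool], error_count: int,
                  total_bits: float) -> Optional[float]:
    """Return a BER only when pattern lock held for every sample.

    Returns None when lock was never sampled or dropped at any point,
    so an unlocked run cannot produce a plausible-looking number.
    """
    if not lock_samples or not all(lock_samples):
        return None
    if total_bits <= 0:
        raise ValueError("total_bits must be positive")
    return error_count / total_bits


# Hypothetical runs: one with stable lock, one with a dropout.
print(ber_if_locked([True, True, True], 2, 1.2e12))
print(ber_if_locked([True, False, True], 2, 1.2e12))
```

Returning `None` instead of a number forces the acceptance record to show "no valid measurement" rather than a BER that was never really measured.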

Pitfall 3: Dirty MPO/MTP or wrong polarity handling

Root cause: Connector contamination or polarity reversal can reduce optical coupling dramatically, producing BER failures that look like “bad optics.”

Solution: Inspect with a fiber microscope, clean with approved methods, and re-run BER. For MPO/MTP, confirm the polarity method (Method A, B, or C per TIA-568) and match it to the patch hardware.

Pitfall 4: Ignoring FEC mode when the link uses it

Root cause: BER measured pre-FEC may not reflect post-FEC performance, leading to overly strict or misleading pass/fail outcomes.

Solution: Align the BER test to the same FEC mode used by the transceiver and link partner, and base acceptance on the correct metric (post-FEC uncorrectable errors where applicable).

Cost and ROI note: what acceptance testing changes in total cost

In typical enterprise and metro builds, third-party optics may cost less upfront than OEM modules, but the total cost of ownership depends on failure rate, rework time, and acceptance disputes. As a rough planning range, 10G SR and 25G SR modules are often priced in the tens to low hundreds of dollars per unit, while 100G optics can be several times higher depending on reach and brand. OEM modules frequently come with tighter documentation and easier compliance handling, which reduces commissioning time. Third-party modules can be cost-effective when paired with a strong incoming quality process: optical inspection, DOM checks, and standardized optical BER measurement at defined power points.

ROI improves when acceptance testing prevents field truck rolls. A single avoided re-termination or swapped transceiver can outweigh the cost of a few hours of lab time and a microscope-assisted cleaning process. However, if your acceptance criteria are not aligned to the real test conditions and FEC mode, you risk both false rejects and false accepts, which hurts ROI.

FAQ

What BER target should we use for optical transceiver acceptance?

It depends on the line rate, coding, and whether you use FEC. Use your operator or vendor compliance reference and define pass/fail by either BER threshold or error-count over a fixed interval at agreed optical power points.

Is optical BER measurement enough to qualify a module?

No. I recommend combining BER with optical receive power verification, DOM telemetry sanity checks, and a connector cleanliness inspection. BER without optical health checks can hide marginal operation that fails under temperature drift.

How long should the BER test run to be meaningful?

Common practice is 60 s to 300 s per power point, with 120 s a solid baseline for acceptance documentation. Longer runs reveal intermittent errors that short tests can miss.
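It is worth checking what a given run length can actually demonstrate. A standard statistical shortcut, assuming errors are random and independent, is that an error-free run of N bits supports the claim "BER below p" at confidence level CL when N = -ln(1 - CL) / p. The sketch below applies that relation; the function names and the 1e-12 target are illustrative assumptions.

```python
import math


def bits_for_confidence(ber_target: float, confidence: float = 0.95) -> float:
    """Error-free bits needed to claim BER < ber_target at the given confidence.

    Assumes random, independent bit errors.
    """
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    return -math.log(1.0 - confidence) / ber_target


def seconds_for_confidence(ber_target: float, line_rate_bps: float,
                           confidence: float = 0.95) -> float:
    """Error-free test time needed at a given line rate."""
    return bits_for_confidence(ber_target, confidence) / line_rate_bps


# Line rates of 10GBASE-R and 100GBASE-R; target BER is an example value.
for name, rate in (("10G", 10.3125e9), ("100G", 103.125e9)):
    t = seconds_for_confidence(1e-12, rate)
    print(f"{name}: {t:.1f} s error-free for BER < 1e-12 at 95% confidence")
```

Under these assumptions, a 1e-12 target needs roughly 290 s of error-free traffic at 10G but only about a tenth of that at 100G, so the same 120 s window proves more at higher line rates. Bursty or intermittent errors violate the independence assumption, which is another reason to favor longer runs.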

Can we compare BER results across different test instruments?

You can only compare them if the instruments use the same PRBS mode, FEC mode, test interval, and measurement method. Otherwise, interpret results as “pass under our conditions” rather than a universal BER number.

What usually causes “BER fail” right after installation?

The most common causes are dirty connectors (especially MPO/MTP), polarity mismatch, and incorrect optical power resulting from wrong attenuation or patch selection. Less frequently, PRBS lock issues or FEC mode mismatch are the culprits.

How do we handle links that use FEC during BER testing?

Base acceptance on post-FEC uncorrectable error metrics where available. Ensure your BER tester and link partner are configured consistently, then record both corrected and uncorrectable counters along with receive power and DOM data.

If you want repeatable commissioning results, standardize your BER test conditions, align pass/fail logic to the real coding mode, and always correlate errors with optical health data. Next, review optical transceiver DOM telemetry best practices to make your acceptance records faster to troubleshoot and easier to defend.

Author bio: I am a telecom engineer who has commissioned 5G fronthaul/backhaul and metro transport links using DWDM, SDH/OTN, and PON optics. I write field-focused acceptance procedures that turn optical BER measurement into a reliable pass/fail gate.