OpenConfig Optical Network challenges: 8 field-tested picks

In a modern transport fabric, OpenConfig promises consistent automation across vendors, but the challenges show up fast when you touch optics, transceivers, and coherent link behavior. This article helps network engineers and field operators understand where OpenConfig mappings break down in optical networks, and how to choose practical workarounds. You will get a top list of the most common friction points, plus a selection checklist you can apply during rollout and troubleshooting.

Top 8 challenges of OpenConfig in optical networks: where reality bites

Think of OpenConfig as a universal set of wiring labels. It can standardize what you call a port, a channel, or a service, but fiber links are not just “wires,” they are analog systems with optics, power budgets, and vendor-specific telemetry quirks. In the field, the hard part is aligning data models, operational semantics, and timing across controllers, transceivers, and line systems. Below are the eight most frequent challenges I have seen during deployments involving 10G, 25G, and coherent optics.

Model-to-hardware gaps: the same “interface” means different physics

OpenConfig can describe an interface generically, but optical gear often exposes additional state that does not map cleanly. For example, coherent transceivers and line cards may report received optical power, laser bias current, chromatic dispersion (CD), OSNR, and alarm thresholds with vendor-specific scaling. When the controller expects standardized leaf names and units, you can end up with automation that reports a successful “set” for a value the line system never actually applies.

Best-fit scenario: Use OpenConfig when your optical layer has a well-supported telemetry and configuration story from the same vendor family, and you have a validated unit mapping for key measurements.

- Pros: Faster normalization of port/service objects.

- Cons: Risk of silent mismatches for optical-specific parameters.

Optical telemetry semantics: alarms are not events everywhere

Optical networks generate rich alarm streams, but OpenConfig operational data models may treat them as simple status. In practice, some devices expose alarms as latched conditions with severity and time stamps, while others provide only “current state” without history. That difference breaks alerting pipelines that rely on edge-triggered events, such as correlating a power dip to a specific maintenance window.

Best-fit scenario: If your NMS expects event semantics, validate whether the OpenConfig operational data includes time stamps, severity, and clearing behavior. Otherwise, plan for a translation layer that converts raw alarms into your event schema.

- Pros: Consistent baseline for dashboards if semantics match.

- Cons: Alerting logic can drift across vendors.
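The translation layer mentioned above can be sketched as a small edge detector that turns polled "current state" alarm sets into raise and clear events. This is a minimal sketch, not a vendor API: the class name `AlarmEdgeDetector`, the alarm label `RX_POWER_LOW`, and the port name are hypothetical, and the timestamp is the collector's poll time because the device in this scenario provides none.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class AlarmEvent:
    port: str
    alarm: str
    kind: str           # "raise" or "clear"
    observed_at: float  # collector poll time, not a device timestamp


class AlarmEdgeDetector:
    """Converts level-style alarm state into edge-triggered events."""

    def __init__(self) -> None:
        # Last known set of active alarms per port.
        self._active: Dict[str, Set[str]] = {}

    def update(self, port: str, current: Set[str], now: float) -> List[AlarmEvent]:
        previous = self._active.get(port, set())
        # Alarms that appeared since the last poll are "raise" events.
        events = [AlarmEvent(port, a, "raise", now) for a in sorted(current - previous)]
        # Alarms that disappeared since the last poll are "clear" events.
        events += [AlarmEvent(port, a, "clear", now) for a in sorted(previous - current)]
        self._active[port] = set(current)
        return events


detector = AlarmEdgeDetector()
for event in detector.update("port-1", {"RX_POWER_LOW"}, now=100.0):
    print(event.kind, event.alarm)
```

Note that an alarm which raises and clears between two polls is invisible to this approach, so the poll interval bounds the shortest event you can detect.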

Transceiver capability discovery: DOM is helpful, but not uniform

OpenConfig can drive interface configuration, but optical transceivers bring their own constraints via Digital Optical Monitoring (DOM), which for SFP and SFP+ modules is specified in SFF-8472. DOM values such as Tx bias current, Tx power, Rx power, and temperature may be surfaced by the host platform with different scaling and refresh rates. Even when two vendors both support “DOM,” the data cadence might be 1 second on one platform and 30 seconds on another, which changes how quickly you can react to link degradation.

Best-fit scenario: For mixed fleets, require a DOM normalization step and verify that the refresh period and unit scaling are documented in vendor datasheets.

- Pros: Better closed-loop monitoring when normalization is done.

- Cons: Mixed vendor fleets can create confusing thresholds.
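A DOM normalization step can be as small as a set of decoders that turn raw register values into engineering units. The sketch below assumes you have access to the raw 16-bit SFF-8472 diagnostic words of an internally calibrated module (temperature in 1/256 °C, optical power in 0.1 µW, bias current in 2 µA); many platforms already convert these for you, in which case the point is to confirm which unit they chose. The function names are illustrative.

```python
import math
import struct


def decode_temperature_c(raw: bytes) -> float:
    """Signed 16-bit big-endian value, 1/256 degree Celsius per count."""
    (value,) = struct.unpack(">h", raw)
    return value / 256.0


def decode_power_dbm(raw: bytes) -> float:
    """Unsigned 16-bit big-endian value, 0.1 microwatt per count, returned in dBm."""
    (value,) = struct.unpack(">H", raw)
    milliwatts = value / 10000.0  # 0.1 uW per count = 1e-4 mW per count
    if milliwatts <= 0:
        return float("-inf")      # no light detected
    return 10.0 * math.log10(milliwatts)


def decode_bias_ma(raw: bytes) -> float:
    """Unsigned 16-bit big-endian value, 2 microamps per count, returned in mA."""
    (value,) = struct.unpack(">H", raw)
    return value * 0.002


print(decode_temperature_c(b"\x19\x00"))           # raw 0x1900 counts
print(decode_power_dbm(struct.pack(">H", 10000)))  # 10000 counts of 0.1 uW
```

Keeping the decoders separate from the thresholds makes it easier to swap in a different scaling for a platform that reports, say, milliwatts instead of raw counts.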

Coherent optics tuning loops: timing and idempotency issues

With coherent systems, automation often needs to coordinate multiple parameters: laser settings, dispersion-related tuning, and adaptive equalization. OpenConfig pushes configuration as desired state, but coherent tuning can be non-idempotent: applying the same settings again may cause a retrain cycle or momentary traffic hit. If your controller retries aggressively after timeouts, you can trigger repeated tuning events.

Best-fit scenario: Pair OpenConfig with explicit “operation windows” and lock mechanisms. Use device-specific capabilities to confirm whether configuration changes are transactional or staged.

- Pros: Repeatable provisioning when tuning is managed.

- Cons: Retries can cause instability and service disruption.
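One way to keep retries from re-triggering tuning is to push a change at most once and then poll for convergence with backoff instead of re-pushing. The sketch below assumes two hypothetical callables supplied by your integration, `read_state` and `push_config`; the leaf names in the usage example are illustrative and should be checked against the model version your platform implements.

```python
import time
from typing import Callable, Dict


def push_once_and_wait(
    desired: Dict[str, float],
    read_state: Callable[[], Dict[str, float]],
    push_config: Callable[[Dict[str, float]], None],
    max_polls: int = 4,
    base_delay_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Push only the leaves that differ, once, then wait for convergence.

    Returns False if nothing needed pushing, True if a push converged.
    Raises TimeoutError instead of re-pushing when the device does not converge.
    """

    def pending() -> Dict[str, float]:
        current = read_state()
        return {k: v for k, v in desired.items() if current.get(k) != v}

    diff = pending()
    if not diff:
        return False  # already in the desired state: do not touch the optics
    push_config(diff)
    for attempt in range(max_polls):
        # Exponential backoff gives the tuning loop time to settle.
        sleep(base_delay_s * (2 ** attempt))
        if not pending():
            return True
    raise TimeoutError("device did not converge; escalate instead of re-pushing")


# Usage with an in-memory stand-in for a device.
device = {"target-output-power": -1.0}
changed = push_once_and_wait(
    {"target-output-power": 0.0},
    read_state=lambda: dict(device),
    push_config=device.update,
    sleep=lambda seconds: None,  # skip real waiting in this demo
)
print(changed)
```

Raising on timeout hands the decision to an operator or a slower workflow, which is usually safer for coherent links than another automatic push.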

Vendor implementation maturity: “supports OpenConfig” is not enough

Vendors may claim OpenConfig support, but the implementation depth varies. Some platforms map only a subset of the YANG models, while others lag on newer leaves related to optical telemetry. The result is a partial automation surface: your controller can set interface admin state but cannot read key optical health metrics.

Best-fit scenario: Require a conformance test plan with a list of required leaves (for example, transceiver temperature and optical power). Validate both configuration and operational reads before scaling.

- Pros: Clear path to automation when coverage is verified.

- Cons: Partial coverage leads to brittle scripts and hidden gaps.
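A conformance test plan can start as a simple coverage check: compare the leaves you require against the paths a device actually returns. The sketch below assumes you have already collected the observed paths as strings (for example from a gNMI Get or Subscribe dump); the paths in `REQUIRED_LEAVES` are examples and should be verified against the OpenConfig model revision your platform claims to support.

```python
import re
from typing import Iterable, List

# Example required leaves; adjust to your own acceptance criteria.
REQUIRED_LEAVES = [
    "/interfaces/interface/state/oper-status",
    "/components/component/state/temperature/instant",
    "/components/component/transceiver/physical-channels/channel/state/input-power/instant",
    "/components/component/transceiver/physical-channels/channel/state/output-power/instant",
]


def strip_keys(path: str) -> str:
    """Remove list keys such as [name=Ethernet1] so paths compare by schema."""
    return re.sub(r"\[[^\]]*\]", "", path)


def missing_leaves(required: Iterable[str], observed: Iterable[str]) -> List[str]:
    """Return the required schema paths that never appeared in the observed data."""
    seen = {strip_keys(p) for p in observed}
    return [p for p in required if p not in seen]


observed_paths = [
    "/interfaces/interface[name=Ethernet1]/state/oper-status",
    "/components/component[name=Ethernet1-transceiver]/state/temperature/instant",
]
for path in missing_leaves(REQUIRED_LEAVES, observed_paths):
    print("MISSING:", path)
```

Running the same check against every platform and software version in the pilot gives you a coverage matrix before you write any automation that depends on those leaves.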

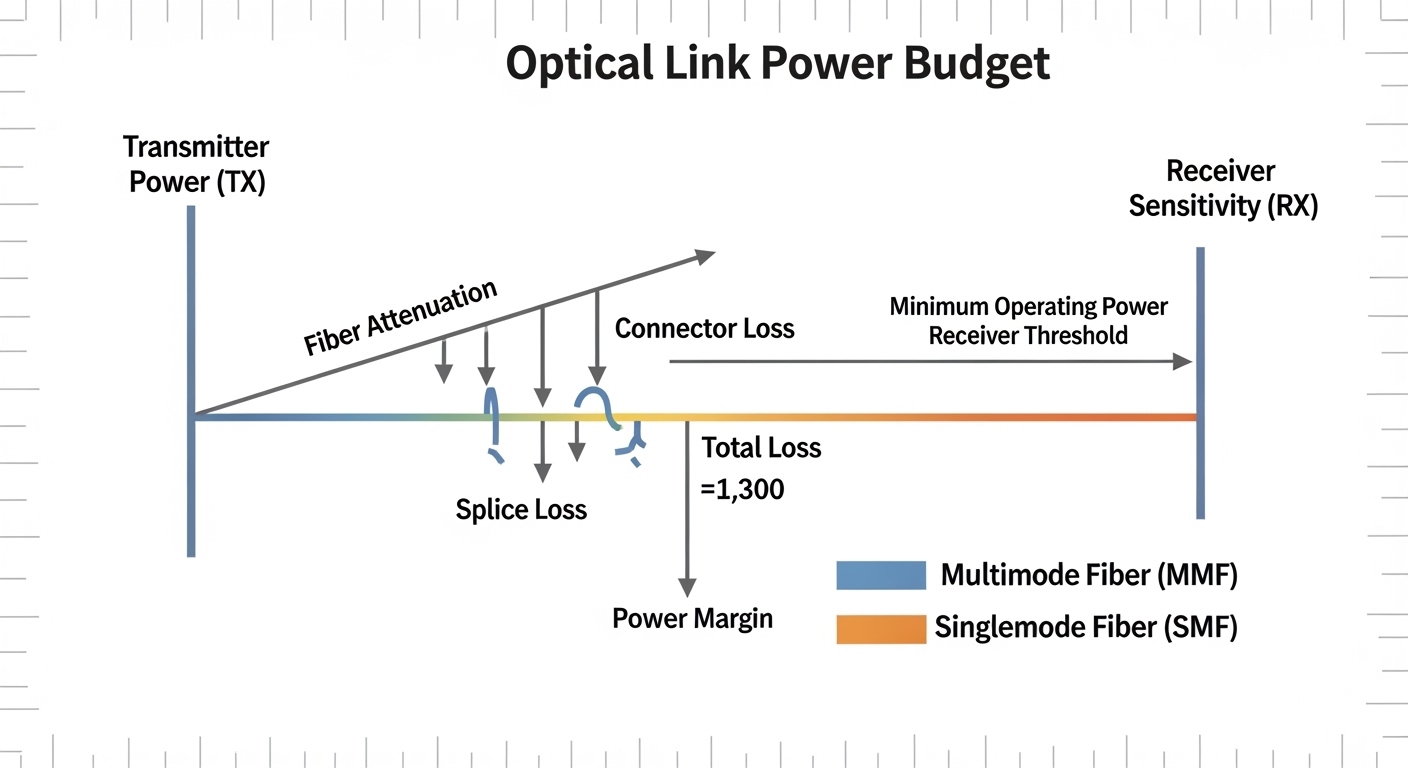

Fiber reach and power budgets: automation cannot ignore the link equation

OpenConfig can automate “what should be configured,” but optical reach depends on real power budgets and link attenuation. For example, a 10G SR module operates at 850 nm over multimode fiber and is designed for short reach (up to about 300 m on OM3), while long-haul systems run over single-mode fiber at longer wavelengths and depend on much tighter receiver sensitivity and loss budgets. If your orchestration assumes “port up means link healthy,” you may miss marginal power conditions that only show up as rising error rates.

Best-fit scenario: Add pre-checks based on expected link budgets and module specs, then use optical telemetry to confirm that the received power stays within tolerance.

- Pros: Lower operational surprises with budget-aware workflows.

- Cons: Requires maintaining accurate physical-layer data.
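A budget-aware pre-check reduces to simple arithmetic: expected receive power is minimum transmit power minus path loss, and margin is expected receive power minus receiver sensitivity. The sketch below uses hypothetical numbers throughout (they are not taken from any datasheet), and the 3 dB default margin is an assumption you should replace with your own engineering rule.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    tx_power_min_dbm: float       # worst-case transmit power from the datasheet
    rx_sensitivity_dbm: float     # receiver sensitivity from the datasheet
    fiber_km: float
    fiber_loss_db_per_km: float
    connectors: int
    loss_per_connector_db: float

    def path_loss_db(self) -> float:
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.loss_per_connector_db)

    def expected_rx_dbm(self) -> float:
        return self.tx_power_min_dbm - self.path_loss_db()

    def margin_db(self) -> float:
        return self.expected_rx_dbm() - self.rx_sensitivity_dbm


def precheck(budget: LinkBudget, required_margin_db: float = 3.0) -> bool:
    """True when the planned link keeps at least the required margin."""
    return budget.margin_db() >= required_margin_db


# Hypothetical short multimode link: 200 m of fiber and two connectors.
plan = LinkBudget(-5.0, -11.0, fiber_km=0.2, fiber_loss_db_per_km=3.0,
                  connectors=2, loss_per_connector_db=0.5)
print(round(plan.expected_rx_dbm(), 2), round(plan.margin_db(), 2), precheck(plan))
```

The value from `expected_rx_dbm()` is also a useful reference for telemetry: a measured receive power far below it points at the physical layer rather than at the configuration.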

Mixed media and connector realities: LC vs MPO, MMF vs SMF

Optical networks are full of “almost the same” variants: MMF vs SMF, LC vs MPO, and different transceiver families. OpenConfig abstracts interfaces, but your physical deployment still depends on correct media type and connector mating. Automation may succeed at the configuration layer while the link fails due to fiber mismatch, polarity errors, or incorrect patching.

Best-fit scenario: Treat media metadata as part of your service model. Validate polarity and connector mapping with a commissioning checklist, not only with software state.

- Pros: Fewer “mystery link down” incidents.

- Cons: Demands disciplined inventory and documentation.

Standards and interoperability: OpenConfig vs IEEE and vendor management planes

OpenConfig is a data modeling and automation approach, but optical behavior still intersects with Ethernet standards and management protocols. IEEE 802.3 specifies the physical-layer behavior of Ethernet optical interfaces, but it leaves most module diagnostics and alarm handling to multi-source agreements and vendor extensions, so transceivers and line systems often add proprietary management features for alarms and diagnostics. If your automation assumes IEEE-only semantics, you can miss critical optical-specific management features that live outside the base model.

Best-fit scenario: Use IEEE 802.3 for baseline interface expectations, and rely on vendor datasheets for optical diagnostics and alarm thresholds. Keep your runbooks aligned with the device’s management plane behavior.

- Pros: More reliable interoperability planning.

- Cons: More integration work across management planes.

Spec comparison: mapping optics realities to OpenConfig-friendly expectations

Before you automate, anchor your expectations in module specifications. Below is an example comparison of common transceiver types used in enterprise and data center optical networks. The key point is that even when both ends are “10G,” reach class, wavelength, and DOM behavior differ, and those differences become challenges when your controller expects uniform telemetry.

| Transceiver example | Data rate | Wavelength | Reach (typical) | Connector | DOM / diagnostics | Operating temp |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G | 850 nm | Up to 300 m on OM3 | LC | Supported via SFP DOM | 0 to 70 °C (typical) |
| Finisar FTLX8571D3BCL | 10G | 850 nm | Up to 300 m on OM3 | LC | Supported via SFP DOM | -5 to 70 °C (typical) |
| FS.com SFP-10GSR-85 | 10G | 850 nm | Up to 300 m on OM3 | LC | Supported via SFP DOM | -5 to 70 °C (typical) |
| Example coherent module (vendor-specific) | 100G/200G/400G class | Varies | Tens to thousands of km | Varies | Enhanced diagnostics, often vendor-specific | Varies |

Reference anchors: IEEE 802.3 for Ethernet optical interfaces and vendor datasheets for module reach, wavelength, and DOM behavior. Use [Source: IEEE 802.3] for baseline interface classes and [Source: Vendor transceiver datasheets] for operational diagnostics.

Decision checklist: selecting an OpenConfig approach for optical links

When teams face OpenConfig challenges, they often jump to tooling before validating assumptions. Use this ordered checklist during design and acceptance testing. It is practical enough to run during a pilot in a live rack with a staged rollout.

- Distance and fiber class: confirm whether the link is MMF (OM3/OM4) or SMF; map to module reach classes and planned attenuation.

- Wavelength and transceiver family: verify compatibility across both ends and ensure consistent transceiver types or validated alternatives.

- Switch and line system compatibility: check vendor OpenConfig coverage for the specific platforms and software versions.

- DOM and diagnostics support: confirm which telemetry leaves are exposed and whether units and scaling match your alerting thresholds.

- Operating temperature and power budget margins: validate that your environment stays within the module’s operating range, and that your link budget has headroom.

- Vendor lock-in risk: assess whether third-party optics are supported for diagnostics and alarms, not only for link bring-up.

- Rollback and idempotency: test configuration retries and confirm whether optical tuning operations are safe under automation loops.

Pro Tip: In optical deployments, the fastest way to uncover OpenConfig mapping gaps is to compare three timelines side by side: controller desired-state change, device operational leaf updates, and transceiver DOM refresh. If the DOM refresh is slower than your automation retry interval, you will misdiagnose “stuck” links and trigger unnecessary remediation.
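The timeline comparison in the tip can be reduced to two small checks: how far each data source lags the desired-state change, and whether the retry interval leaves room for at least one fresh DOM sample. This is a sketch under assumptions: the function names are hypothetical, timestamps are seconds from a common clock, and the safety factor of 2 is a rule of thumb rather than a standard.

```python
from typing import Dict


def timeline_lags(desired_change_ts: float, oper_update_ts: float,
                  dom_refresh_ts: float) -> Dict[str, float]:
    """Seconds between the desired-state change and each later observation."""
    return {
        "oper_lag_s": oper_update_ts - desired_change_ts,
        "dom_lag_s": dom_refresh_ts - desired_change_ts,
    }


def min_safe_retry_s(dom_refresh_s: float, oper_update_s: float,
                     safety_factor: float = 2.0) -> float:
    """Shortest retry interval that still waits for the slowest data source."""
    return safety_factor * max(dom_refresh_s, oper_update_s)


def retry_too_aggressive(retry_interval_s: float, dom_refresh_s: float,
                         oper_update_s: float, safety_factor: float = 2.0) -> bool:
    return retry_interval_s < min_safe_retry_s(dom_refresh_s, oper_update_s, safety_factor)


print(timeline_lags(1000.0, 1002.0, 1030.0))
print(retry_too_aggressive(retry_interval_s=10.0, dom_refresh_s=30.0, oper_update_s=2.0))
```

If the second check reports an aggressive retry, lengthening the retry interval is usually cheaper than trying to speed up the DOM refresh.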

Common mistakes and troubleshooting tips for OpenConfig optical challenges

Below are concrete failure modes that cause the most pain. Each includes a root cause and a practical fix you can apply during troubleshooting and incident response.

“Port is up” but link quality is silently degrading

Root cause: Automation checks only admin/oper status, while optical health depends on received power and error metrics that may not be mapped into the same operational model. DOM refresh delay can also hide degradation long enough to trigger retries.

Solution: Add telemetry gating: require received optical power to stay within a defined window and correlate it with interface error counters before declaring the service healthy.
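Telemetry gating can be sketched as a single predicate over two consecutive samples. The names `LinkSample` and `service_healthy` are hypothetical, the power window in the usage example is illustrative, and the sketch assumes the receive power has already been normalized to dBm and that the error counter is cumulative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkSample:
    oper_up: bool
    rx_power_dbm: float
    in_errors: int  # cumulative interface error counter


def service_healthy(previous: LinkSample, current: LinkSample,
                    rx_min_dbm: float, rx_max_dbm: float,
                    max_new_errors: int = 0) -> bool:
    """Healthy only if the port is up, power is in window, and errors are not growing."""
    if not current.oper_up:
        return False
    if not (rx_min_dbm <= current.rx_power_dbm <= rx_max_dbm):
        return False
    new_errors = current.in_errors - previous.in_errors
    if new_errors < 0:
        # Counter went backwards (reset or rollover): treat as unknown, not healthy.
        return False
    return new_errors <= max_new_errors


before = LinkSample(oper_up=True, rx_power_dbm=-6.5, in_errors=120)
after = LinkSample(oper_up=True, rx_power_dbm=-10.8, in_errors=180)
print(service_healthy(before, after, rx_min_dbm=-9.0, rx_max_dbm=0.0))
```

Because DOM values can lag, it helps to take the two samples at least one DOM refresh period apart so the gate does not pass on stale power readings.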

Unit mismatch for DOM thresholds leads to false alarms

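Root cause: Two platforms report the same DOM quantity with different units, scaling, or rounding. For example, one may expose temperature in whole degrees Celsius while another uses finer fractional steps, or one may report optical power in dBm while another reports milliwatts. Your controller then applies thresholds that do not match the device’s semantics.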

Solution: During acceptance tests, read raw DOM values and confirm scaling. Normalize units in the integration layer, then set thresholds based on validated vendor behavior.

Automation retries cause coherent retrain cycles or service hits

Root cause: Idempotency assumptions fail for optical tuning operations. Timeouts at the controller lead to repeated configuration pushes that trigger retraining or optical parameter recalculation.

Solution: Implement change tracking: treat optical tuning as a staged operation with explicit completion signals. Reduce retry aggressiveness and use device acknowledgements where available.

Fiber polarity or connector mismatch masquerades as software failure

Root cause: LC vs MPO wiring mistakes, polarity swaps, or incorrect patching can prevent link bring-up even when OpenConfig configuration succeeds. The device reports link down, but engineers chase model leaves instead of physical reality.

Solution: Use a commissioning checklist with polarity verification and a patch map. In incidents, perform quick optical layer checks before deep software debugging.

Cost and ROI note: what OpenConfig changes in the budget

The ROI of solving OpenConfig challenges comes from reducing manual provisioning time and lowering operational incident volume, but only if the integration is engineered carefully. In many real deployments, engineers budget between $50k and $250k for integration testing, telemetry normalization, and runbook creation across a pilot set of sites. Third-party optics can reduce per-transceiver cost, but TCO may increase if you lose diagnostic parity or face higher failure rates due to compatibility constraints.

As a rough market reference, enterprise 10G SR optics often land in the $30 to $150 range depending on brand and warranty, while coherent optics are typically far higher and require vendor support for diagnostics. If your organization already standardizes on one vendor’s transceivers and line cards, OpenConfig adoption can be smoother; if you run mixed fleets, expect additional engineering time for unit normalization and alarm translation. For ROI, track both mean time to recovery and the number of “false-positive” alarms caused by semantic mismatches.

Summary ranking: which OpenConfig challenges matter most first

Use this ranking table to prioritize your pilot work. It is ordered by frequency in field incidents and the potential blast radius in optical environments.

| Rank | Challenge | Impact | Likelihood | First action |
|---|---|---|---|---|
| 1 | Telemetry semantics and alarm/event mismatch | High | High | Validate event clearing and time stamps |
| 2 | DOM unit scaling and refresh cadence differences | High | High | Normalize DOM values and set thresholds after validation |
| 3 | Model-to-hardware gaps for optical-specific parameters | High | Medium | Confirm required leaves are supported in OpenConfig |
| 4 | Coherent tuning idempotency and retry safety | High | Medium | Test retries and completion signaling |
| 5 | Fiber reach and power budget automation assumptions | Medium | Medium | Pre-check link budgets and add telemetry gating |