When an Open RAN build starts changing in week three, the optics choices you made in week one can suddenly become a bottleneck. This article follows a real rollout where we needed 10G and 25G fiber transceivers across fronthaul and midhaul segments, while keeping the network upgrade path open. You will get practical selection steps, compatibility checks, and troubleshooting notes that field engineers can apply immediately.

Problem: Open RAN fronthaul kept shifting, and optics became the risk

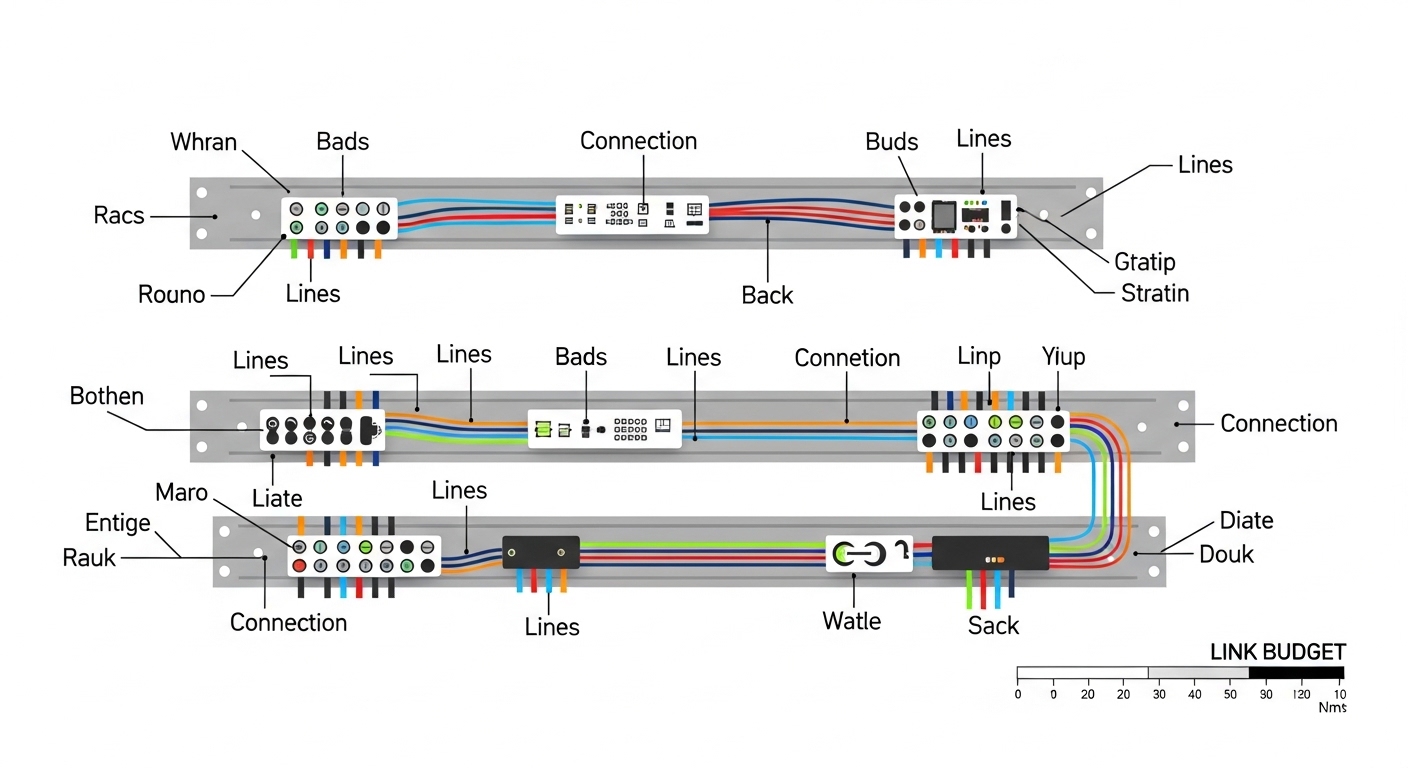

Our challenge was simple to describe but hard to execute: the radio units and distributed units were being staged in phases, and the fiber routes were not final on day one. In an Open RAN environment, fronthaul links often demand strict link budgets, while midhaul can be more forgiving but still needs deterministic latency and clean optics. We were also dealing with mixed vendor hardware for aggregation and timing distribution, so transceiver compatibility and DOM behavior mattered as much as raw reach.

The immediate failure mode we wanted to avoid was “it lights up today, then degrades tomorrow.” In practice, that showed up as intermittent link flaps during temperature cycling and a few unexpected alarms when optics were swapped between switch families. In the end, the right fiber transceivers were not just the ones that met reach; they were the ones that stayed stable under real operating conditions.

Environment specs: what we actually measured and where the links ran

Our deployment was a three-zone topology supporting Open RAN equipment with centralized aggregation. We had two main link classes: short-reach within zones and longer-reach between zones. To keep the math honest, we recorded patch panel loss, measured fiber attenuation, and logged switch temperatures during burn-in.

Network layout and physical constraints

- Fronthaul (10G): CU/DU aggregation to RU aggregation over OM4 multimode in the same building zone, with patch panels and short jumpers.

- Midhaul (25G): between equipment rooms over OS2 single-mode backbone with controlled routing paths.

- Connector types: LC for both MM and SM optics.

- Operating temperature: racks in hot aisles, measured at up to 55C near the aggregation stack during peak load.

Example link budget inputs (real numbers)

- Patch panel + jumper loss: 0.6 dB per LC path (average).

- Measured fiber attenuation: 2.3 dB/km for SM OS2 segments; ~3.2 dB/km equivalent for MM OM4 runs.

- Conservative margin reserved for aging and cleaning: 3 dB.

Chosen solution: specific fiber transceivers that matched both optics and switch behavior

We selected modules based on IEEE 802.3 optical specifications and vendor switch compatibility patterns, then validated them during a staged rollout. For multimode fronthaul, we used 10G SR optics designed for OM4. For single-mode midhaul, we used 25G modules with explicit OS2 reach.

In addition to optical specs, we checked that the optics supported digital diagnostics monitoring (DOM) so we could observe temperature, supply voltage, and received power in the field. That mattered because Open RAN gear tends to be serviced on a schedule that assumes you can detect failing optics early rather than after a full outage.
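DOM values are easier to compare across vendors when you decode them yourself instead of relying only on each switch's display. The sketch below is a minimal decoder. It assumes an SFF-8472 module, internal calibration, and a raw dump of the A2h diagnostics page. How you obtain that dump depends on your platform and is not shown. The decoder converts the live temperature, supply voltage, Tx bias, and Tx/Rx power fields at bytes 96 to 105 into engineering units. The function names are illustrative.

```python
import math
import struct

def mw_to_dbm(mw: float) -> float:
    """Convert optical power in mW to dBm; zero power maps to -inf (no light)."""
    return 10 * math.log10(mw) if mw > 0 else float("-inf")

def decode_dom(a2h: bytes) -> dict:
    """Decode live DOM values from an SFF-8472 A2h page dump.

    Assumes internal calibration; externally calibrated modules need the
    slope/offset constants stored on the same page applied first.
    """
    if len(a2h) < 106:
        raise ValueError("need at least bytes 0..105 of the A2h page")
    # Bytes 96-105, big-endian: temperature (signed 16-bit), then Vcc,
    # Tx bias, Tx power, Rx power (unsigned 16-bit each).
    temp, vcc, bias, tx_pwr, rx_pwr = struct.unpack(">hHHHH", a2h[96:106])
    return {
        "temp_c": temp / 256.0,                      # 1/256 degC per LSB
        "vcc_v": vcc * 100e-6,                       # 100 uV per LSB
        "tx_bias_ma": bias * 0.002,                  # 2 uA per LSB
        "tx_power_dbm": mw_to_dbm(tx_pwr * 0.0001),  # 0.1 uW per LSB
        "rx_power_dbm": mw_to_dbm(rx_pwr * 0.0001),
    }

# Synthetic page: 35.5 degC, 3.3 V, 6 mA bias, 0.5 mW Tx, 0.25 mW Rx.
page = bytes(96) + struct.pack(">hHHHH", 9088, 33000, 3000, 5000, 2500)
print(decode_dom(page))
```

Decoding to dBm in one place gives you a single unit to compare against thresholds, regardless of how each switch family renders the fields.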

Transceiver part numbers we used

- 10G SR over OM4: Cisco SFP-10G-SR, plus compatible third-party optics such as Finisar FTLX8571D3BCL (validated in our switch lab).

- 25G SR over short MM (where applicable): FS.com SFP-25G-SR (OM4 validated).

- 25G LR over OS2: FS.com SFP-25G-LR or equivalent OS2 long-reach modules validated for our switch platform.

Pro Tip: In Open RAN deployments, the “gotcha” is often not the optical reach spec on the datasheet, but the switch’s transceiver compatibility and DOM thresholds. During our lab tests, two optics with identical nominal reach behaved differently because one vendor’s DOM scaling triggered early warnings at the same received power level. If your monitoring system treats warnings as ticket-worthy events, that difference changes your operational cost immediately.
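A minimal sketch of the effect described in the Pro Tip follows. The same received power is evaluated against two different sets of low-Rx thresholds. The threshold values are hypothetical placeholders, not figures from any vendor's datasheet.

```python
from dataclasses import dataclass

@dataclass
class RxThresholds:
    """Low-side Rx power thresholds as programmed by a module vendor (dBm)."""
    low_warning_dbm: float
    low_alarm_dbm: float

def rx_status(rx_dbm: float, th: RxThresholds) -> str:
    # Alarm takes precedence over warning; both trigger when Rx drops below the threshold.
    if rx_dbm < th.low_alarm_dbm:
        return "alarm"
    if rx_dbm < th.low_warning_dbm:
        return "warning"
    return "ok"

# Hypothetical thresholds for two modules with the same nominal reach.
vendor_a = RxThresholds(low_warning_dbm=-9.9, low_alarm_dbm=-13.9)
vendor_b = RxThresholds(low_warning_dbm=-7.5, low_alarm_dbm=-11.0)

rx_reading_dbm = -8.0  # identical received power on both links
print(rx_status(rx_reading_dbm, vendor_a))  # ok
print(rx_status(rx_reading_dbm, vendor_b))  # warning
```

If every "warning" opens a ticket, the second module generates work on a link that is physically identical to the first.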

Specs comparison: how we mapped reach, wavelength, and monitoring to use cases

Below is a simplified comparison of the core module classes we used. Exact values vary by vendor and revision, so always confirm against the specific datasheet for your ordering part number.

| Module class | Data rate | Wavelength | Fiber type | Typical reach | Connector | DOM support | Operating temp (typ.) |
|---|---|---|---|---|---|---|---|
| 10G SR | 10G | 850 nm | OM4 multimode | 300 m (OM4 typical) | LC | Yes (common) | 0C to 70C (varies) |
| 25G SR | 25G | 850 nm | OM4 multimode | 100 m (70 m on OM3) | LC | Yes (common) | -5C to 70C (varies) |
| 25G LR | 25G | 1310 nm | OS2 single-mode | 10 km (typical for LR) | LC | Yes (common) | -5C to 70C (varies) |
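The table can also serve as a first-pass filter. Given a port speed, fiber type, and run length, you list the module classes whose nominal reach covers the run. The reach values below are copied from the table and are only a starting point, because the link budget and your ordering part's datasheet still decide. The function and data layout are illustrative, not part of any tool we used.

```python
# Nominal reach values taken from the comparison table (conservative ends).
MODULE_CLASSES = [
    # (name, rate_gbps, fiber_type, nominal_reach_m)
    ("10G SR", 10, "OM4", 300),
    ("25G SR", 25, "OM4", 100),
    ("25G LR", 25, "OS2", 10_000),
]

def candidate_classes(rate_gbps: int, fiber_type: str, distance_m: float) -> list:
    """Return module classes whose nominal reach covers this run."""
    return [name for name, rate, fiber, reach in MODULE_CLASSES
            if rate == rate_gbps and fiber == fiber_type and distance_m <= reach]

print(candidate_classes(10, "OM4", 150))   # ['10G SR']
print(candidate_classes(25, "OM4", 150))   # [] -> too long for 25G SR on OM4
print(candidate_classes(25, "OS2", 2000))  # ['25G LR']
```

An empty result is the useful signal: it flags runs where the planned fiber type and speed do not fit before anyone orders optics.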

Implementation steps: deploying optics without painting yourself into a corner

We treated optics selection as a controlled engineering change, not a procurement sprint. The goal was to keep the network flexible for future Open RAN expansion while minimizing the chance that a later swap would break link stability or monitoring.

Step 1: Do the distance and loss math, then add margin

For each intended link, we calculated worst-case loss as fiber attenuation + patch/jumper loss + connector loss + margin. We then compared that total to the module's power budget from the datasheet: minimum launch power minus receiver sensitivity. For OM4 SR, we deliberately kept runs well below the typical reach, because small contamination issues can eat margin quickly.
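The loss calculation can be sketched as a small helper. The defaults use our measured inputs: 0.6 dB per LC path, a 3 dB margin, and the per-km attenuation you measured. The minimum Tx launch power and Rx sensitivity in the example are hypothetical placeholders, so substitute the values from your ordering part's datasheet. The names are illustrative.

```python
from dataclasses import dataclass

@dataclass
class LinkPlan:
    length_km: float
    attenuation_db_per_km: float
    patch_paths: int                # LC patch panel + jumper paths on the route
    patch_loss_db: float = 0.6      # our measured average per LC path
    connector_loss_db: float = 0.0  # extra mated pairs not already in patch_loss_db
    margin_db: float = 3.0          # reserve for aging and cleaning

    def worst_case_loss_db(self) -> float:
        return (self.length_km * self.attenuation_db_per_km
                + self.patch_paths * self.patch_loss_db
                + self.connector_loss_db
                + self.margin_db)

def power_budget_db(tx_min_dbm: float, rx_sensitivity_dbm: float) -> float:
    # Weakest guaranteed launch power minus the receiver sensitivity floor.
    return tx_min_dbm - rx_sensitivity_dbm

def headroom_db(plan: LinkPlan, tx_min_dbm: float, rx_sensitivity_dbm: float) -> float:
    # Positive headroom means the planned loss fits inside the module's budget.
    return power_budget_db(tx_min_dbm, rx_sensitivity_dbm) - plan.worst_case_loss_db()

# Hypothetical 2 km OS2 run with two patch paths, using the measured 2.3 dB/km.
plan = LinkPlan(length_km=2.0, attenuation_db_per_km=2.3, patch_paths=2)
print(round(plan.worst_case_loss_db(), 2))       # 8.8
print(round(headroom_db(plan, -5.0, -14.4), 2))  # 0.6
```

Because the margin is already inside `worst_case_loss_db`, any positive headroom is extra budget beyond the 3 dB reserve. A negative result means the link needs a different module class or cabling change.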

Step 2: Validate switch compatibility and DOM behavior

We used a staging switch that matched the production platform family, then verified link bring-up and monitoring fields. Specifically, we checked that DOM reported stable Tx bias, Tx power, and Rx power without frequent threshold alarms. If a module produced persistent “low Rx” warnings at normal operating power, we either cleaned the link or replaced the optics with a module known to behave properly on that switch vendor line.
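Separating "persistent" low-Rx warnings from one-off dips during staging can be as simple as counting consecutive samples below the warning level. This is a hypothetical sketch: the polling data, the -9.5 dBm warning level, and the five-sample window are placeholders, not values from our platform.

```python
from typing import Iterable

def persistent_low_rx(samples_dbm: Iterable[float], low_warning_dbm: float,
                      min_consecutive: int = 5) -> bool:
    """Return True if Rx power stays below the warning level for
    min_consecutive samples in a row (a one-off dip is ignored)."""
    run = 0
    for value in samples_dbm:
        run = run + 1 if value < low_warning_dbm else 0
        if run >= min_consecutive:
            return True
    return False

# Hypothetical polling data from a staging switch, one sample per interval.
burn_in = [-6.2, -6.3, -9.8, -6.1, -6.2, -6.4]  # single dip
suspect = [-6.2, -9.6, -9.7, -9.9, -9.8, -9.7]  # sustained low Rx
print(persistent_low_rx(burn_in, low_warning_dbm=-9.5))  # False
print(persistent_low_rx(suspect, low_warning_dbm=-9.5))  # True
```

A sustained run is the cue to clean the link first and, if it persists, to swap the module.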

Step 3: Enforce cleaning and dust controls

For LC connections, we used end-face inspection and standardized cleaning steps. Dust on the ferrule can create intermittent reflections that only show up under temperature and vibration. We also standardized patch cord handling so that connectors were capped until insertion.

Step 4: Plan for phased Open RAN growth

We built an optics plan that supported the most likely “next” topology changes: additional RU sites in the same building and expansion to a second equipment room. That meant we stocked both OM4 SR and OS2 LR classes and kept the fiber type aligned with the expected distance and upgrade path.

Measured results: what improved after we standardized fiber transceivers

After rollout, we tracked link stability and optics health using DOM telemetry and switch interface counters. The biggest win was reducing the “unknown unknowns” during expansions and swaps.

Operational metrics after stabilization

- Link flap reduction: from intermittent daily events during early staging to near zero after cleaning standardization and optics compatibility checks.

- Mean time to detect: DOM alarms allowed us to catch degrading optics at the “soft failure” stage, reducing detection time from hours to minutes during routine monitoring windows.

- Optics replacement rate: in the first quarter, replacement dropped by about 35% compared to the initial pilot batch where compatibility and monitoring thresholds were not fully aligned.

Note: Your results will vary based on fiber cleanliness, patch panel quality, switch firmware, and the specific optics vendor and revision.

Cost and ROI note

Third-party optics can reduce upfront module costs, but the total cost depends on rework and operational friction. In our purchasing window, OEM modules typically cost about 1.2x to 2.0x the third-party price for comparable reach classes, and the compatible optics we qualified usually fell toward the low-to-mid end of that spread. The ROI came from fewer truck rolls and fewer "swap-and-pray" troubleshooting sessions, because DOM visibility and switch compatibility were verified before scaling.

We also modeled a simple TCO view: module price + expected labor hours for swaps + risk cost for downtime. Even when third-party units were cheaper per module, the “wrong optics” scenario drove the labor component up fast.
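The TCO view can be written as a few lines of arithmetic. Every figure in the example below is hypothetical and illustrative only, including prices, swap counts, labor rate, and downtime cost. These are not numbers from our rollout. The names are illustrative as well.

```python
from dataclasses import dataclass

@dataclass
class OpticsScenario:
    unit_price: float              # per-module price
    modules: int
    expected_swaps: int            # rework/swap events expected over the period
    hours_per_swap: float
    labor_rate: float              # cost per labor hour
    downtime_hours: float
    downtime_cost_per_hour: float

    def tco(self) -> float:
        hardware = self.unit_price * self.modules
        labor = self.expected_swaps * self.hours_per_swap * self.labor_rate
        risk = self.downtime_hours * self.downtime_cost_per_hour
        return hardware + labor + risk

# Same (hypothetical) unit price; the difference is whether optics were validated first.
validated = OpticsScenario(100, 40, 2, 3, 120, 1, 500)
unvalidated = OpticsScenario(100, 40, 12, 3, 120, 6, 500)
print(validated.tco())    # 5220
print(unvalidated.tco())  # 11320
```

The shape of the model matters more than the numbers. Once expected swaps and downtime are in the formula, a lower unit price only wins if validation keeps those terms small.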

Selection criteria checklist: the ordered questions engineers should answer

When you choose fiber transceivers for Open RAN, use this checklist in order. It prevents late-stage surprises when you are already committed to a rack layout and cabling plan.

- Distance and fiber type: confirm OM4 vs OS2 and compute worst-case loss with margin.

- Data rate and interface standard: ensure the transceiver matches the switch port expectation (10G vs 25G) and physical layer requirements aligned with IEEE 802.3 families.

- Connector and patch ecosystem: LC vs MPO, patch panel behavior, and whether your cabling plant is standardized.

- DOM and monitoring needs: confirm digital diagnostics support and verify alarm thresholds on your switch platform.

- Operating temperature: validate the module’s temperature range against your hottest rack location, not the average.

- Switch compatibility and firmware effects: test representative optics with your switch firmware; some platforms are stricter than others.

- Vendor lock-in risk: if you need multi-vendor optics, require documented compatibility evidence and test in staging.

- Maintenance and cleaning workflow: plan how optics will be handled during field swaps to avoid contamination-driven failures.
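The "answer in order" rule can be encoded as a gate that reports the first failing item. The sketch below is hypothetical: the field names are invented for illustration, and it covers only the checklist items that reduce to a yes/no fact. Vendor lock-in risk and cleaning workflow are process decisions and are left out.

```python
from typing import Callable, Optional

# Ordered to match the checklist; each check reads a field from a candidate record.
CHECKS: list = [
    ("distance and fiber type", lambda c: c["headroom_db"] >= 0),
    ("data rate and interface", lambda c: c["module_rate_gbps"] == c["port_rate_gbps"]),
    ("connector", lambda c: c["module_connector"] == c["plant_connector"]),
    ("DOM", lambda c: c["dom_supported"]),
    # Hottest measured rack temperature; the module case runs hotter, so this is a minimum bar.
    ("operating temperature", lambda c: c["module_max_temp_c"] >= c["hottest_rack_c"]),
    ("switch compatibility", lambda c: c["passed_staging"]),
]

def first_failure(candidate: dict) -> Optional[str]:
    """Return the first checklist item the candidate fails, or None if all pass."""
    for name, check in CHECKS:
        if not check(candidate):
            return name
    return None

candidate = {
    "headroom_db": 1.2,
    "module_rate_gbps": 25, "port_rate_gbps": 25,
    "module_connector": "LC", "plant_connector": "LC",
    "dom_supported": True,
    "module_max_temp_c": 70, "hottest_rack_c": 55,
    "passed_staging": False,
}
print(first_failure(candidate))  # switch compatibility
```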

Common mistakes and troubleshooting tips from the field

Open RAN optics problems tend to look similar on the surface: link comes up then drops, or it stays up but performance degrades. The root causes are usually specific. Here are the failures we saw most often and what fixed them.

“It meets reach on paper” but link flaps after a day

Root cause: insufficient link margin combined with patch panel variability or connector contamination. In OM4, small losses can push the receiver near sensitivity limits, especially after thermal changes.

Solution: re-measure fiber attenuation end-to-end, inspect and clean LC ferrules, and add a strict margin policy (we used 3 dB reserve). If needed, move from OM4 SR to a longer-reach class or adjust cabling.

DOM shows “low Rx power” warnings, but traffic still seems fine

Root cause: DOM threshold behavior differs between vendors and sometimes between switch firmware versions. That can cause early warnings even when the link is technically stable.

Solution: compare DOM readings across known-good optics on the same switch, then align your monitoring thresholds and alert logic. If warnings persist at normal Rx levels, swap to a module that matches the platform’s expected DOM scaling.
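Comparing against known-good optics can be reduced to one number: how far this port's Rx power sits from the median of known-good modules on the same switch. This is a sketch under stated assumptions. The readings are hypothetical, and the 1 dB tolerance is a placeholder you would set from your own baseline.

```python
import statistics

def rx_deviation_db(port_rx_dbm: float, known_good_rx_dbm: list) -> float:
    """Difference between this port's Rx power and the median of known-good
    optics measured on the same switch (negative means weaker)."""
    return port_rx_dbm - statistics.median(known_good_rx_dbm)

def looks_like_threshold_issue(port_rx_dbm: float, known_good_rx_dbm: list,
                               tolerance_db: float = 1.0) -> bool:
    # Within tolerance of known-good optics: the warning is more likely a DOM
    # threshold/scaling mismatch than a weak link.
    return abs(rx_deviation_db(port_rx_dbm, known_good_rx_dbm)) <= tolerance_db

known_good = [-6.1, -6.4, -5.9]  # hypothetical readings from known-good optics
print(looks_like_threshold_issue(-6.5, known_good))  # True: review thresholds
print(looks_like_threshold_issue(-9.0, known_good))  # False: investigate the link
```

The median keeps one odd known-good reading from skewing the baseline.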

Swapping optics between switch models breaks bring-up

Root cause: transceiver compatibility checks and vendor-specific implementation differences. Some platforms enforce stricter compliance behavior during optical initialization.

Solution: validate in staging with the exact switch model and firmware you deploy. Keep a compatibility matrix internally and avoid “it worked on the other stack” assumptions.
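An internal compatibility matrix works best when it is keyed by switch model, firmware, and optic part number together, with "untested" as the default. In the sketch below, every switch model, firmware version, and part number is a hypothetical placeholder.

```python
from enum import Enum

class Status(Enum):
    VALIDATED = "validated"
    DOM_WARNINGS = "validated, DOM thresholds need tuning"
    FAILED = "failed bring-up"
    UNTESTED = "untested"

# Keyed by (switch model, firmware, optic part number). All entries are placeholders.
MATRIX = {
    ("switch-A", "fw-1.2", "optic-10G-SR-X"): Status.VALIDATED,
    ("switch-A", "fw-1.3", "optic-10G-SR-X"): Status.DOM_WARNINGS,
    ("switch-B", "fw-7.0", "optic-25G-LR-Y"): Status.VALIDATED,
}

def lookup(switch_model: str, firmware: str, part_number: str) -> Status:
    # Anything not tested on this exact model + firmware is untested,
    # even if the same optic passed on another stack.
    return MATRIX.get((switch_model, firmware, part_number), Status.UNTESTED)

print(lookup("switch-A", "fw-1.2", "optic-10G-SR-X"))  # Status.VALIDATED
print(lookup("switch-B", "fw-7.0", "optic-10G-SR-X"))  # Status.UNTESTED
```

Including firmware in the key means a firmware upgrade automatically returns every optic to "untested" until it is re-validated.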

Intermittent errors after maintenance on patch panels

Root cause: damaged ferrules, micro-scratches, or dust introduced during re-cabling. These problems often appear after the first disturbance.

Solution: inspect ferrules under magnification before reconnecting, replace damaged patch cords, and enforce capped storage for unused connectors.

FAQ

What fiber transceivers work best for Open RAN fronthaul?

For fronthaul within a building, 10G SR over OM4 is a common starting point when distances fit and your cabling plant is clean. For longer runs between rooms, 25G LR over OS2 is often the safer choice because it provides more budget for variability. Always confirm with a link budget and test with your exact switch platform.

Do I need DOM support on every fiber transceiver?

If you are running automated monitoring and want early warning before failures, DOM is strongly recommended. DOM lets you track Rx power, temperature, and bias values, which can reveal degradation trends. Without DOM, you may only find issues after a user-impacting event.

Are third-party fiber transceivers safe for production?

They can be safe, but only after you validate compatibility and monitoring behavior on your specific switches and firmware. In our case, some compatible optics worked flawlessly, while others produced early warnings due to DOM threshold behavior. Treat optics selection as an engineering validation step, not just a price comparison.

How do I choose between OM4 and OS2?

Choose OM4 for shorter distances within a zone where multimode cabling already exists or is planned. Choose OS2 when you need longer reach, more predictable link budgets, or future flexibility for inter-room expansion. The real deciding factor is worst-case loss with margin plus your cabling plant constraints.

What is the fastest way to troubleshoot link issues?

Start with physical inspection: clean and inspect LC ends, then check link counters and interface state. Next, compare DOM values against a known-good transceiver on the same port. If DOM and optics health look normal but errors persist, validate fiber mapping and ensure the correct transceiver class is installed for that port.
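For runbooks, this troubleshooting order can be written as a small decision helper. This is a hypothetical sketch. The inputs are simple observations a field engineer records, and the step wording paraphrases the answer above.

```python
from typing import Optional

def triage(clean_and_inspected: bool, errors_or_down: bool,
           dom_matches_known_good: Optional[bool]) -> str:
    """Return the next troubleshooting step for a problem link."""
    # 1. Physical layer first: inspect and clean before trusting any reading.
    if not clean_and_inspected:
        return "inspect and clean LC end faces"
    # 2. Counters and interface state decide whether a problem remains.
    if not errors_or_down:
        return "no further action; keep monitoring"
    # 3. Compare DOM against a known-good transceiver on the same port.
    if dom_matches_known_good is None:
        return "compare DOM values with a known-good transceiver on the same port"
    if not dom_matches_known_good:
        return "treat the optic as suspect and swap in a known-good module"
    # 4. Optics look healthy but errors persist: check the plant and port config.
    return "validate fiber mapping and the transceiver class for this port"

print(triage(True, True, None))
```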

Which IEEE and standards guidance should I rely on?

Your baseline should be IEEE 802.3 physical layer requirements for the relevant Ethernet speeds and optical interfaces. Vendor datasheets then provide the practical link budgets, receiver sensitivity, and DOM characteristics you need for deployment decisions. For cabling and connector handling, also align with ANSI/TIA cabling guidance where applicable.

We designed this Open RAN optics approach around measurable link budgets, DOM visibility, and switch compatibility testing, because flexibility only matters when the links stay stable. If you want the next step, explore fiber optic cabling best practices to tighten your physical layer and reduce avoidable transceiver failures.

Author bio: I have deployed fiber transceivers across enterprise and telecom networks, focusing on hands-on validation, DOM telemetry, and field troubleshooting workflows. I write from the perspective of the engineer who has to make the link stay up through temperature swings, maintenance events, and phased rollouts.