Open RAN deployments sit at the intersection of tight budgets, mixed vendors, and evolving transport requirements. This article helps network engineers and field teams choose transceivers that support future-proof networking as rates, optics, and interoperability expectations change. You will get practical selection criteria, a spec comparison table, deployment-ready troubleshooting, and a short FAQ for procurement and operations teams.

Open RAN transport reality: where transceivers make or break interoperability

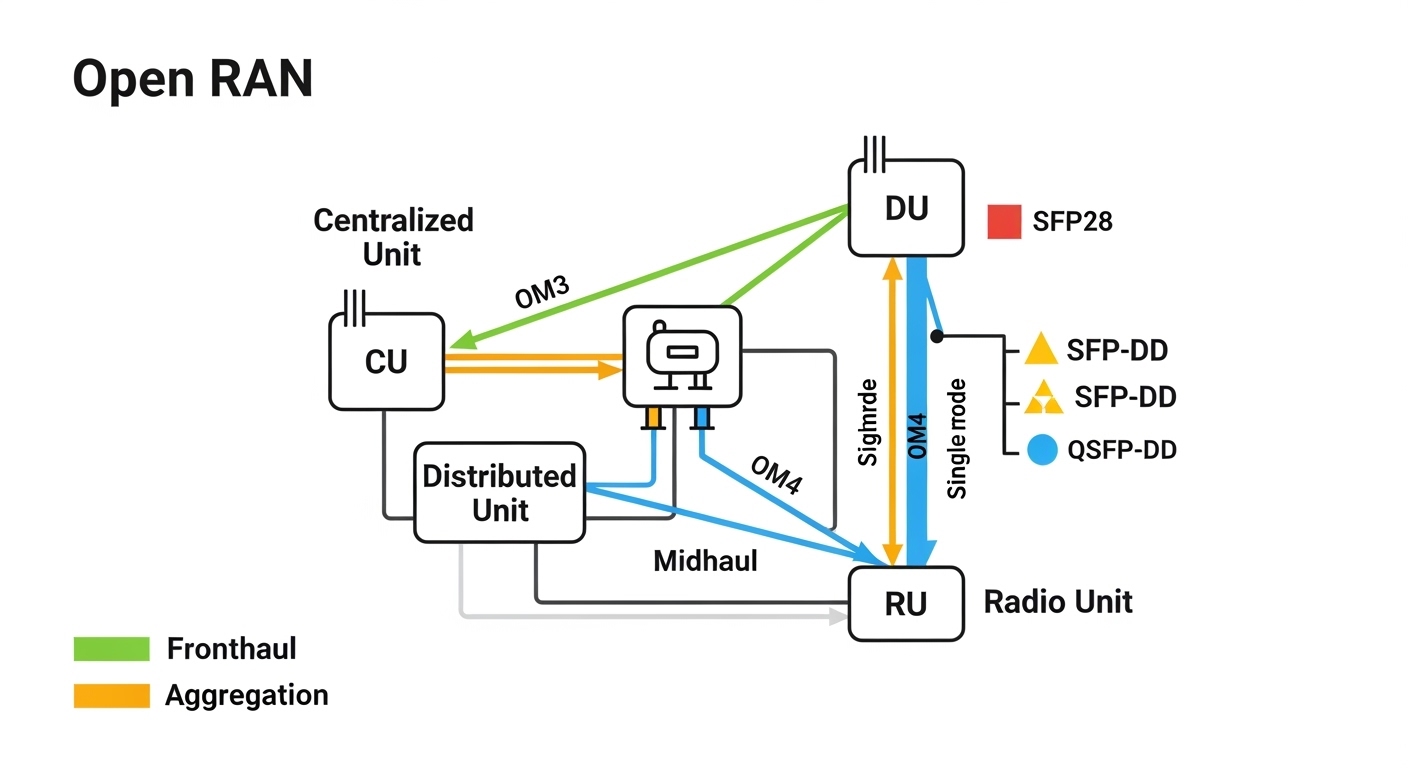

In Open RAN, fronthaul links connect radio units (RU) to distributed units (DU), midhaul links connect DUs to centralized units (CU), and backhaul carries traffic into aggregation and core. The optics decision is not just about link reach; it also affects timing, power budget margins, and compatibility with vendor optics management. For future-proof networking, the goal is to avoid “optics lock-in” while keeping deterministic behavior for latency-sensitive segments.

Most Open RAN transport designs rely on Ethernet framing and standardized optical interfaces defined by IEEE 802.3 and SFF multi-source agreements. Practically, you will be selecting among common pluggable form factors and mapping them to line rates: SFP+ for 10G, SFP28 for 25G, QSFP+ for 40G, and QSFP28 for 100G, with the double-density SFP-DD and QSFP-DD cages as the path to higher rates later. Your choice also depends on fiber type (single-mode vs multimode), connector style (LC vs MPO), and whether you need DOM (digital optical monitoring) for fleet observability.

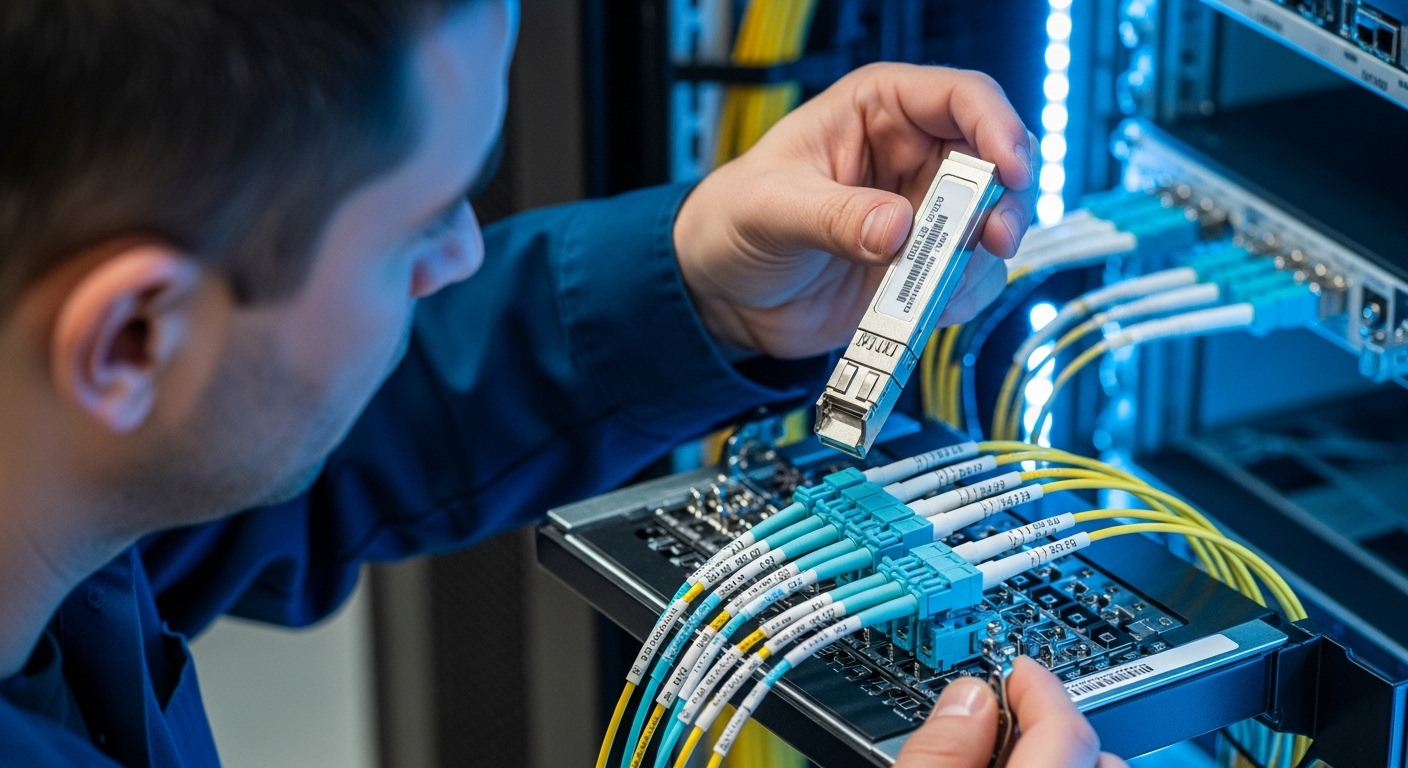

In field operations, we often see teams underestimate how optical budget interacts with aging and temperature. A transceiver that “meets reach” on paper can fail under higher connector loss, dirty fiber, or hotter-than-expected enclosures. Future-proof networking therefore means selecting optics with adequate power margin, robust diagnostics, and operational temperature headroom.

Key transceiver specs that determine future-proof networking

To evaluate transceivers for Open RAN transport, engineers typically start with wavelength, reach, and power consumption. Next, verify interface compliance (for example, the IEEE 802.3 optical specifications for 10G, 25G, 40G, or 100G Ethernet), DOM capability, and the operating temperature range for outdoor cabinets. Finally, confirm that the switch cage supports the module type and the vendor compliance mode you plan to use.

What to measure in the lab before you deploy

Before installation, validate the optics with a controlled test: clean the fiber, run a link stability test, and read DOM values (Tx bias current, received power, and temperature) under expected ambient conditions. For example, if you use a 25G SR module over OM3, you should expect received power readings to remain within the vendor’s specified “acceptable” window while Tx bias stays stable. If DOM shows frequent threshold crossings, you may have a budget issue or a connector contamination problem.
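The DOM check described above can be sketched in Python. This is a minimal sketch, not a vendor tool: the `DomLimits` class, the field names, and the numeric limits are illustrative assumptions. Take the real windows from the module datasheet and the real readings from your platform's telemetry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomLimits:
    """Acceptable (low, high) windows copied from the module datasheet."""
    rx_power_dbm: tuple[float, float]
    tx_bias_ma: tuple[float, float]
    temperature_c: tuple[float, float]


def dom_violations(reading: dict[str, float], limits: DomLimits) -> list[str]:
    """Return one message per DOM field that is missing or outside its window."""
    windows = {
        "rx_power_dbm": limits.rx_power_dbm,
        "tx_bias_ma": limits.tx_bias_ma,
        "temperature_c": limits.temperature_c,
    }
    problems = []
    for name, (low, high) in windows.items():
        value = reading.get(name)
        if value is None:
            problems.append(f"{name}: missing")
        elif not low <= value <= high:
            problems.append(f"{name}: {value} outside [{low}, {high}]")
    return problems


# Placeholder limits for illustration only; they are NOT from any datasheet.
EXAMPLE_LIMITS = DomLimits(
    rx_power_dbm=(-10.0, 2.0),
    tx_bias_ma=(2.0, 12.0),
    temperature_c=(0.0, 70.0),
)

if __name__ == "__main__":
    sample = {"rx_power_dbm": -11.2, "tx_bias_ma": 6.1, "temperature_c": 48.0}
    for problem in dom_violations(sample, EXAMPLE_LIMITS):
        print(problem)
```

Running the same check repeatedly during a soak test gives a simple count of threshold crossings per port, which is usually enough to separate a marginal budget from a one-off reading.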

Practical comparison table: common module types for Open RAN

The table below compares representative module families used in modern Ethernet-based transport. Exact values vary by vendor and part number, so always confirm with the specific datasheet you intend to purchase.

| Module type | Nominal wavelength | Typical reach | Form factor | Connector | Data rate | DOM | Operating temperature |
|---|---|---|---|---|---|---|---|
| 10G SR | 850 nm | Up to 300 m (OM3), up to 400 m (OM4) | SFP+ | Duplex LC | 10G | Yes (SFF-8472 over the 2-wire I2C interface) | Commercial: 0 to 70 °C; extended variants exist |
| 25G SR | 850 nm | Up to 70 m (OM3), up to 100 m (OM4) | SFP28 | Duplex LC | 25G | Yes (SFF-8472) | Commercial and industrial options |
| 25G LR | 1310 nm | Up to 10 km (single-mode) | SFP28 | Duplex LC | 25G | Yes | Commercial and industrial options |
| 100G LR4 | ~1310 nm band (4 LAN-WDM lanes) | Up to 10 km (single-mode) | QSFP28 (usable in backward-compatible QSFP-DD cages) | Duplex LC | 100G | Yes | Commercial and industrial options |

Open RAN tip: for future-proof networking, consider whether your DU or aggregation site might later require higher aggregate throughput. If you expect growth, planning for 25G or 100G-capable uplinks early can reduce forklift upgrades later.

Matching transceivers to Open RAN segments: fronthaul, midhaul, and aggregation

Open RAN networks are often segmented by performance requirements. Fronthaul can be highly latency-sensitive and may use specialized transport profiles, while midhaul and aggregation are more tolerant but still require reliability and observability. This means you should treat each segment as a separate optics budget and compatibility exercise.

Distance and fiber type: the first constraint

For short in-building runs (for example, from a DU cabinet to an edge aggregation switch), multimode optics such as 10G SR or 25G SR can be cost-effective. If you need longer distances between sites or through outdoor runs, single-mode modules like 25G LR or 100G LR4 become the practical choice. In future-proof networking, single-mode typically provides more upgrade flexibility because you can scale reach without changing the fiber plant.

Interface and switch compatibility: the second constraint

Not every transceiver works in every cage, even when the connector and speed match. Some switches enforce vendor-specific qualification lists or require specific DOM behavior. For field deployments, verify compatibility using the switch vendor’s optics matrix or run a controlled acceptance test with the exact module part numbers.

DOM and monitoring: operational resilience

DOM support helps you detect early failures: rising Tx bias, falling received power, or temperature excursions. In Open RAN operations, you want alarms that map cleanly to your monitoring stack so you can correlate optical degradation with environmental factors. While DOM is common, the exact thresholds and how they surface in telemetry can differ by vendor.

Pro Tip: In live sites, the most frequent “mystery outages” are not dead optics; they are dirty or mismatched connectors that shift received power just enough to cross vendor thresholds. Always schedule fiber cleaning and inspection as a first-line troubleshooting step before replacing transceivers, and use DOM trend graphs to confirm whether degradation is gradual or sudden.
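One way to make the "gradual or sudden" question concrete is to compare the largest single drop between consecutive Rx power samples with the total drop over the window. The sketch below is a heuristic under stated assumptions: the function name, the 1 dB minimum drop, and the 60% share are hypothetical defaults, not vendor thresholds.

```python
def classify_rx_trend(
    samples_dbm: list[float],
    min_drop_db: float = 1.0,   # assumed: smaller total drops are treated as noise
    sudden_share: float = 0.6,  # assumed: one step carrying 60% of the drop is "sudden"
) -> str:
    """Classify a time-ordered series of Rx power readings.

    Returns "stable", "gradual", or "sudden".
    """
    if len(samples_dbm) < 2:
        raise ValueError("need at least two samples")

    total_drop = samples_dbm[0] - samples_dbm[-1]
    if total_drop < min_drop_db:
        return "stable"

    # Largest fall between two consecutive samples.
    largest_step = max(a - b for a, b in zip(samples_dbm, samples_dbm[1:]))
    return "sudden" if largest_step >= sudden_share * total_drop else "gradual"


if __name__ == "__main__":
    print(classify_rx_trend([-3.0, -3.4, -3.8, -4.2, -4.6]))
    print(classify_rx_trend([-3.0, -3.1, -5.5, -5.6]))
```

A sudden step tends to point at a handling event such as a re-patch or a contaminated connector, while a slow slide is more consistent with aging or a thermal problem, so the label helps decide whether to reach for the fiber scope or the spares shelf first.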

Selection criteria checklist for future-proof networking (engineer ordering logic)

When choosing optics for an Open RAN program, engineers typically follow an ordered checklist to prevent rework. Use this sequence as a procurement-to-commissioning workflow.

- Distance and fiber plant reality: measure end-to-end loss, including patch panels, splices, and connector pairs. Confirm whether your link budget includes future connector aging and remakes.

- Target data rate and upgrade path: decide whether you need 10G, 25G, 40G, or 100G today, and whether you want spare capacity for future-proof networking.

- Transceiver form factor and cage support: match SFP, SFP28, SFP-DD, QSFP28, or QSFP-DD to the switch and optical module cage. Ensure the cage supports the speed and lane mapping.

- DOM support and telemetry behavior: confirm DOM is enabled and that your monitoring system can ingest key metrics. For example, ensure Rx power and Tx bias are readable through your platform.

- Operating temperature and enclosure constraints: outdoor cabinets and near-radio equipment can exceed standard commercial ranges. Choose industrial or extended temperature modules when ambient conditions demand it.

- Compatibility and vendor lock-in risk: evaluate whether the switch enforces an optics brand list. If possible, test at least two sources and keep a qualification record for each.

- Power, thermal, and reliability targets: compare typical power draw and thermal design. Field failures often correlate with heat and repeated thermal cycling.

- Connector cleanliness workflow: define cleaning tools, inspection method, and acceptance criteria at installation time. This is part of future-proof networking because it reduces avoidable failures.

Example deployment scenario with measured operational details

Consider a 3-tier Open RAN transport design in a regional network: 48-port 25G leaf switches at the DU aggregation sites, uplinked to a 100G spine pair in the metro core. Each DU-to-leaf link uses 25G SR over OM4 for intra-building runs of 70 to 90 m, while leaf-to-core uses 100G LR4 over single-mode fiber for spans of 6 to 12 km. Because LR4 is specified for a nominal 10 km, the spans beyond 10 km need either a longer-reach module such as 100G ER4 or a measured loss budget that the module vendor confirms. During commissioning, engineers read DOM values and set alerts for Rx power drift; for stable links, Rx power remained within the module vendor’s typical “acceptable” range and Tx bias did not trend upward beyond the threshold over 30 days. When one site experienced intermittent link flaps, the root cause was connector contamination at a patch panel; after cleaning and re-termination, DOM showed Rx power recovery and link stability returned without changing modules.

Common mistakes and troubleshooting that protect future-proof networking

Even high-quality optics can fail if teams skip operational discipline. Below are frequent pitfalls seen in the field, with root causes and corrective actions.

“Meets reach on paper” but fails in real life

Root cause: link budget miscalculation due to extra patch cords, dirty connectors, unaccounted splice loss, or aging. Solution: re-measure end-to-end loss with an insertion loss test (light source and power meter), use an OTDR to locate individual lossy events, then add margin for connector aging and re-mating. Prefer modules with extra optical budget headroom when possible, especially for outdoor runs.
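The budget arithmetic behind this check is simple enough to script. The following is a minimal sketch: the function names and every numeric value in the example are placeholders chosen for illustration, so substitute the minimum Tx power and Rx sensitivity from the module datasheet and the losses you actually measured.

```python
def link_margin_db(
    tx_power_min_dbm: float,
    rx_sensitivity_dbm: float,
    fiber_km: float,
    fiber_loss_db_per_km: float,
    connector_pairs: int,
    loss_per_connector_db: float,
    splices: int,
    loss_per_splice_db: float,
    penalties_db: float = 0.0,
) -> float:
    """Return the remaining optical margin in dB (negative means the link is over budget)."""
    budget = tx_power_min_dbm - rx_sensitivity_dbm
    loss = (
        fiber_km * fiber_loss_db_per_km
        + connector_pairs * loss_per_connector_db
        + splices * loss_per_splice_db
        + penalties_db
    )
    return budget - loss


def has_headroom(margin_db: float, required_margin_db: float) -> bool:
    """True if the margin covers the reserve kept for aging and re-mating."""
    return margin_db >= required_margin_db


if __name__ == "__main__":
    # Placeholder values, not taken from any datasheet or measurement.
    margin = link_margin_db(
        tx_power_min_dbm=-5.0,
        rx_sensitivity_dbm=-12.0,
        fiber_km=8.0,
        fiber_loss_db_per_km=0.4,
        connector_pairs=4,
        loss_per_connector_db=0.5,
        splices=2,
        loss_per_splice_db=0.1,
    )
    print(f"margin: {margin:.1f} dB, enough headroom: {has_headroom(margin, 3.0)}")
```

With these placeholder inputs the link closes with roughly 1.6 dB to spare, which would fail a 3 dB reserve. That is the "meets reach on paper" case: the link comes up on day one but has little room for an extra patch cord or a slightly dirty connector.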

DOM mismatch or disabled monitoring leads to delayed detection

Root cause: monitoring assumes DOM metrics exist, but the platform does not expose them consistently for certain third-party modules or certain firmware levels. Solution: validate telemetry visibility during acceptance testing: confirm Rx power and temperature are present in your telemetry pipeline before mass deployment.
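As an acceptance-test aid, a short script can flag ports whose telemetry lacks the metrics your alarms depend on. This sketch assumes you have already exported telemetry into a dictionary keyed by port name; the metric names and the `missing_metrics` helper are hypothetical and need to be mapped onto whatever your platform actually exposes.

```python
from typing import Optional

# Assumed metric names; rename to match your telemetry pipeline.
REQUIRED_METRICS = frozenset({"rx_power_dbm", "tx_bias_ma", "temperature_c"})


def missing_metrics(
    telemetry: dict[str, dict[str, Optional[float]]],
    required: frozenset[str] = REQUIRED_METRICS,
) -> dict[str, list[str]]:
    """Map each port to the required metrics that are absent or reported as None."""
    gaps = {}
    for port, metrics in telemetry.items():
        absent = sorted(name for name in required if metrics.get(name) is None)
        if absent:
            gaps[port] = absent
    return gaps


if __name__ == "__main__":
    # Hypothetical export from a monitoring system.
    export = {
        "eth1": {"rx_power_dbm": -3.2, "tx_bias_ma": 6.0, "temperature_c": 41.0},
        "eth2": {"rx_power_dbm": -4.0, "tx_bias_ma": None},
    }
    for port, names in missing_metrics(export).items():
        print(f"{port}: missing {', '.join(names)}")
```

An empty result across every qualified module and firmware combination is a reasonable gate before mass deployment.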

Switch cage incompatibility causes intermittent link or “unsupported transceiver” alerts

Root cause: the module is electrically compatible in theory but fails vendor-specific compliance checks, lane mapping expectations, or firmware constraints. Solution: use the switch vendor’s optics compatibility matrix and test exact part numbers. Maintain a qualification spreadsheet tying module part numbers to switch firmware versions.
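The qualification spreadsheet can also live as structured data, so that deployment tooling refuses combinations nobody has tested. This is a sketch of one possible record layout; the `Qualification` class, its fields, and the part numbers, switch model, and firmware strings in the example are made up for illustration.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Qualification:
    """One acceptance-test result for an exact module, switch, and firmware combination."""
    module_part: str
    switch_model: str
    firmware: str
    passed: bool
    notes: str = ""


def is_qualified(
    records: Iterable[Qualification],
    module_part: str,
    switch_model: str,
    firmware: str,
) -> bool:
    """True only if a passing record exists for this exact combination.

    Untested combinations return False, so a firmware upgrade forces a retest.
    """
    wanted = (module_part, switch_model, firmware)
    return any(
        r.passed and (r.module_part, r.switch_model, r.firmware) == wanted
        for r in records
    )


if __name__ == "__main__":
    # Hypothetical identifiers, not real products.
    records = [
        Qualification("MOD-25G-SR-A", "LEAF-48X", "1.2.3", True),
        Qualification("MOD-25G-SR-B", "LEAF-48X", "1.2.3", False, "unsupported transceiver alert"),
    ]
    print(is_qualified(records, "MOD-25G-SR-A", "LEAF-48X", "1.2.3"))
    print(is_qualified(records, "MOD-25G-SR-A", "LEAF-48X", "1.3.0"))
```

Treating "no record" the same as "failed" is a deliberate choice here: it keeps a new firmware release from silently inheriting an old qualification.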

Cleaning and inspection skipped during maintenance windows

Root cause: contaminated fiber endfaces degrade performance quickly; even small amounts of contamination can increase insertion loss enough to trigger alarms. Solution: institute a standard cleaning SOP with inspection before every reconnection. Use a fiber scope to verify ferrule endface quality, and fit dust caps any time fibers are disconnected.

Cost and ROI note: balancing OEM optics, third-party options, and total risk

Costs vary widely by rate and reach. As a practical budgeting reference, 10G SR SFP+ modules often land in the low tens of dollars per unit, while 25G SR and 25G LR SFP28 modules are commonly higher; 100G LR4 QSFP class modules typically cost substantially more. OEM-branded optics can carry a premium, but they may reduce compatibility risk and simplify warranty workflows.

ROI should include total cost of ownership: spares availability, mean time to repair, and the operational cost of troubleshooting. In many deployments, third-party optics are viable for future-proof networking if you enforce rigorous acceptance testing and maintain strict DOM and compatibility validation. However, warranty terms and telemetry differences can increase labor cost if issues emerge later, so treat optics procurement as an engineering risk decision, not only a line-item purchase.
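To compare sourcing options on more than unit price, a rough total-cost model can help. The sketch below is deliberately simple and every input in the example is a placeholder: the function name, the cost categories, and the figures are assumptions for illustration, not market data.

```python
def optics_tco(
    unit_price: float,
    units: int,
    spare_units: int,
    expected_incidents: int,
    hours_per_incident: float,
    labor_rate_per_hour: float,
    qualification_cost: float = 0.0,
) -> float:
    """Rough total cost of ownership for one optics sourcing option.

    Hardware covers installed units plus spares; labor covers the expected
    troubleshooting effort; qualification_cost covers acceptance testing.
    """
    hardware = (units + spare_units) * unit_price
    labor = expected_incidents * hours_per_incident * labor_rate_per_hour
    return hardware + labor + qualification_cost


if __name__ == "__main__":
    # Placeholder inputs: a cheaper module that needs qualification and is
    # assumed to cause more troubleshooting, versus a pricier pre-qualified one.
    third_party = optics_tco(40.0, 100, 10, 4, 3.0, 80.0, qualification_cost=500.0)
    oem = optics_tco(90.0, 100, 10, 1, 3.0, 80.0)
    print(f"third-party: {third_party:.0f}, OEM: {oem:.0f}")
```

The result is only as good as the incident and labor estimates you feed it, so revisit those inputs once you have a few months of field data.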

FAQ

What optics types are most common for Open RAN Ethernet transport?

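Engineers commonly use SFP28 modules for 25G over multimode or single-mode, and QSFP28 modules for 100G uplinks; SFP-DD and QSFP-DD cages accept these modules where the platform supports backward compatibility and leave room for higher rates later. The exact choice depends on distance, fiber type, and switch cage support.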

How do I ensure future-proof networking when the DU or aggregation capacity may grow?

Plan for higher-rate uplinks and select optics that match the switch’s upgrade ceiling. For example, designing with 25G or 100G-ready aggregation paths reduces future forklift upgrades.

Are third-party transceivers safe to use in production?

They can be, but only after compatibility testing with your specific switch models and firmware versions. Validate DOM telemetry and run stability tests under realistic link loss and temperature conditions.

What is the fastest troubleshooting path for link flaps?

First check DOM trends for received power and temperature drift, then inspect and clean connectors. If the issue persists, confirm switch events for “unsupported transceiver” and verify optics compatibility against the platform matrix.

Should I choose multimode or single-mode for long-term flexibility?

Single-mode usually offers more upgrade flexibility because it supports longer reaches without replacing the fiber plant. Multimode can still work well for short intra-building segments where cost matters.

Which standards and references should I rely on for design confidence?

Base your physical-layer assumptions on IEEE 802.3 for Ethernet optical interfaces and on SFF specifications for module behavior. Also rely on vendor datasheets for exact optical budget and DOM threshold details, and verify against your switch vendor’s optics qualification guidance.

For a deeper validation workflow, see future-proof networking fiber budget methodology.

Author bio: I am a field-focused network scientist who designs and validates optical transport for production systems, including DOM telemetry and acceptance testing. I routinely collaborate with fiber and radio teams to reduce link outages and keep future-proof networking stable under real environmental conditions.

Update: Article updated on 2026-05-01.