In a busy data center, link flaps and “mystery” CRC errors often trace back to fiber loss you can measure but not always interpret. This article helps network and field engineers prevent transceiver underperformance by turning optical loss into actionable budgets, test plans, and procurement rules. You will get practical reach math, compatibility caveats, and troubleshooting patterns drawn from real deployments.

Why fiber loss becomes a reliability issue in a data center

Fiber loss is not just an optics spec line; it is the cumulative attenuation across the fiber itself, connectors, splices, and patch panels, plus excess loss from routing bends. Ethernet optical interfaces, specified in IEEE 802.3, typically assume a total optical budget that covers both intrinsic fiber attenuation and “system” penalties. When the real plant exceeds that budget, the receiver operates with less optical power margin, which can manifest as bit errors, increased FEC corrections, and eventual link instability.

In day-to-day operations, loss problems show up as intermittent issues during moves, adds, and changes (MACs), or after cleaning/patching events. A single dirty connector can add several dB of excess loss; similarly, a damaged patch cable can silently reduce the optical power that reaches the receiver. The key operational mindset is to treat the fiber plant as an optical system with measurable margins, not as “just cabling.”

Optical budgets that actually match your data center links

To manage fiber loss effectively, you need a consistent way to compute a “worst-case” budget for every link type: wavelength, reach target, connector count, splice count, and expected excess loss. The relevant numbers usually come from the transceiver datasheet (Tx power, Rx sensitivity, and sometimes allowable receiver overload) and from fiber plant documentation (fiber type, measured attenuation, connector loss, and bend policy).

Below is a practical comparison for common Ethernet optics. Values are representative of typical vendor modules; always confirm with the exact part number and datasheet revision you buy. For standards context, IEEE 802.3 specifies the optical interface characteristics and reach targets for Ethernet links, while detailed optics parameters are vendor-specific.

| Transceiver / Interface | Typical Wavelength | Target Reach | Fiber Type | Connector | Operating Temp (typ.) | How to manage loss |
|---|---|---|---|---|---|---|
| SFP-10G-SR class (10GBASE-SR) | ~850 nm | Up to 300 m on OM3 / 400 m on OM4 | OM3/OM4 multimode | LC | ~0 to 70 °C | Budget connectors/splices and patch cords; watch aging and contamination |
| SFP+ 10G-LR / SFP28 25G-LR class (10GBASE-LR / 25GBASE-LR) | ~1310 nm | Up to 10 km | Single-mode (OS2) | LC | ~0 to 70 °C | Track dB per km and ensure patch/splice losses stay inside margin |
| QSFP28-100G-SR4 class (100GBASE-SR4) | ~850 nm (4 lanes) | Up to 70 m on OM3 / 100 m on OM4 | OM3/OM4 multimode | MPO/MTP | ~0 to 70 °C | Account for MPO insertion loss and lane-to-lane imbalance |
| QSFP28-100G-LR4 class (100GBASE-LR4) | ~1310 nm (4 wavelengths) | Up to 10 km | Single-mode (OS2) | LC | ~0 to 70 °C | Guard against receiver overload on short links and verify dispersion assumptions |

In field terms, you typically compute:

- Fiber attenuation (dB/km) times route length

- Connector insertion loss (LC vs MPO can differ)

- Splice loss (fusion vs mechanical)

- Excess loss from patch panels, shelves, and bend radius deviations

Then compare the total to the transceiver’s allowable optical budget: minimum Tx power minus Rx sensitivity, minus any additional system penalties the vendor specifies. If you do this consistently, “it should work” becomes “it will work with margin.”
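The budget arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor tool: the `LinkPlan` fields, the function names, and every numeric value are assumptions chosen for the example, and real inputs should come from your datasheet and measured plant data.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    """Hypothetical description of one link's passive plant."""
    length_km: float             # measured route length
    fiber_db_per_km: float       # attenuation at the operating wavelength
    connector_pairs: int         # mated connector pairs in the channel
    connector_loss_db: float     # worst-case loss per mated pair
    splices: int                 # number of splices in the channel
    splice_loss_db: float        # worst-case loss per splice
    excess_loss_db: float = 0.0  # allowance for bends, panels, shelves


def plant_loss_db(plan: LinkPlan) -> float:
    """Worst-case passive loss of the fiber plant in dB."""
    return (
        plan.length_km * plan.fiber_db_per_km
        + plan.connector_pairs * plan.connector_loss_db
        + plan.splices * plan.splice_loss_db
        + plan.excess_loss_db
    )


def margin_db(tx_min_dbm: float, rx_sens_dbm: float,
              penalties_db: float, plan: LinkPlan) -> float:
    """Remaining margin: (min Tx - Rx sensitivity - penalties) - plant loss."""
    budget = tx_min_dbm - rx_sens_dbm - penalties_db
    return budget - plant_loss_db(plan)


# Illustrative numbers only; take real values from the datasheet and test records.
plan = LinkPlan(length_km=2.0, fiber_db_per_km=0.4,
                connector_pairs=4, connector_loss_db=0.5,
                splices=2, splice_loss_db=0.1, excess_loss_db=0.5)

print(f"plant loss: {plant_loss_db(plan):.2f} dB")                   # 3.50 dB
print(f"margin:     {margin_db(-8.2, -14.4, 1.0, plan):.2f} dB")     # 1.70 dB
```

A negative result from `margin_db` means the plant exceeds the budget on paper, before any contamination or aging is considered.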

Pro Tip: When troubleshooting a marginal link, do not rely on a single “end-to-end” continuity check. Instead, measure with an OTDR or optical power meter at the patch level and verify each connector group. Excess loss often clusters at patch panels and remated connectors, so fixing the worst 1-2 locations can restore margin faster than recabling the entire route.
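To find the worst one or two locations mentioned in the tip, you can rank per-segment measurements by how far they exceed the expected loss. The sketch below assumes you already have measured and expected loss per segment; the segment names, values, and the `worst_segments` helper are hypothetical.

```python
def worst_segments(measured_db, expected_db, top_n=2):
    """Rank segments by excess loss (measured minus expected), highest first."""
    excess = {
        segment: measured_db[segment] - expected_db.get(segment, 0.0)
        for segment in measured_db
    }
    ranked = sorted(excess.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


# Illustrative per-segment readings in dB.
measured = {"panel-A": 0.4, "panel-B": 1.9, "trunk": 0.6, "panel-C": 1.1}
expected = {"panel-A": 0.5, "panel-B": 0.5, "trunk": 0.5, "panel-C": 0.5}

for segment, excess in worst_segments(measured, expected):
    print(f"{segment}: {excess:+.1f} dB over expected")
```

With these sample readings, panel-B and panel-C rank first, so those are the connectors to inspect and clean before considering a recable.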

Operational workflow to manage loss in the data center

Effective loss management is a workflow, not a one-time calculation. Start by standardizing how you document links: every link should have a fiber ID, route length, connector and splice counts, and measured attenuation. Many teams already store this in a cabling database; the improvement is to tie it to transceiver selection and acceptance tests.

Align transceiver choice with the installed fiber plant

For multimode, confirm the fiber grade (OM3 vs OM4) and ensure patch cord types match the optics assumptions. For example, 100G SR4 systems using MPO/MTP connectors are sensitive to insertion loss and lane balance. If your plant includes a mix of patch cord qualities or unknown patch history, you may need to tighten acceptance thresholds or select optics with better receiver sensitivity.

Use acceptance testing with realistic thresholds

During installation, measure optical loss in the same direction every time and record results by patch segment. For field teams, a common operational approach is to set a “pass” threshold that includes a safety margin (for instance, allowing only a fraction of the vendor budget before the link is considered stable). This is especially important for high-speed optics where FEC and receiver thresholds can leave less headroom.
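One way to express such a threshold is as a fixed fraction of the vendor budget. The sketch below assumes a 70% fraction purely for illustration; the fraction, the function names, and the sample values are assumptions, not figures from any standard or datasheet.

```python
def acceptance_limit_db(budget_db: float, fraction: float = 0.7) -> float:
    """Maximum measured loss allowed at acceptance, as a fraction of the budget."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    return budget_db * fraction


def passes_acceptance(measured_loss_db: float, budget_db: float,
                      fraction: float = 0.7) -> bool:
    """True if the measured loss leaves the required headroom."""
    return measured_loss_db <= acceptance_limit_db(budget_db, fraction)


# Illustrative: a 2.0 dB budget with a 70% rule gives a 1.4 dB pass limit.
print(passes_acceptance(1.2, 2.0))  # True
print(passes_acceptance(1.6, 2.0))  # False
```

The second link would work against the raw 2.0 dB budget but fails acceptance because it leaves too little headroom for later remating and contamination.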

Monitor and respond to warning signs

Many transceivers support digital optical monitoring (DOM) over the module’s I2C management interface, as defined in SFF-8472 for SFP-class modules and SFF-8636 for QSFP-class modules. Typical fields include Tx bias current, Tx and Rx optical power, temperature, and supply voltage. If your switch or optical management platform exposes DOM telemetry, alert on trends rather than only on link-down events. A slow decline in Tx or Rx power or rising error counters can indicate contamination, connector wear, or fiber damage from repeated remating.
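Trend alerting can be as simple as fitting a straight line to recent power readings. The sketch below assumes you have already collected (day, dBm) samples from your monitoring stack; how you collect them is platform-specific and not shown. The function names and the -0.05 dB/day limit are assumptions for illustration.

```python
def slope_db_per_day(samples):
    """Least-squares slope of (day, power_dbm) samples, in dB per day."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_p = sum(p for _, p in samples) / n
    num = sum((t - mean_t) * (p - mean_p) for t, p in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("samples must span more than one point in time")
    return num / den


def is_degrading(samples, limit_db_per_day=-0.05):
    """True if received power is falling at least as fast as the limit."""
    return slope_db_per_day(samples) <= limit_db_per_day


# Illustrative Rx power readings: losing 0.1 dB per day.
rx_power = [(0, -3.0), (1, -3.1), (2, -3.2), (3, -3.3)]
print(is_degrading(rx_power))  # True
```

A slope-based check catches slow erosion that a static low-power alarm would miss until the link is already marginal.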

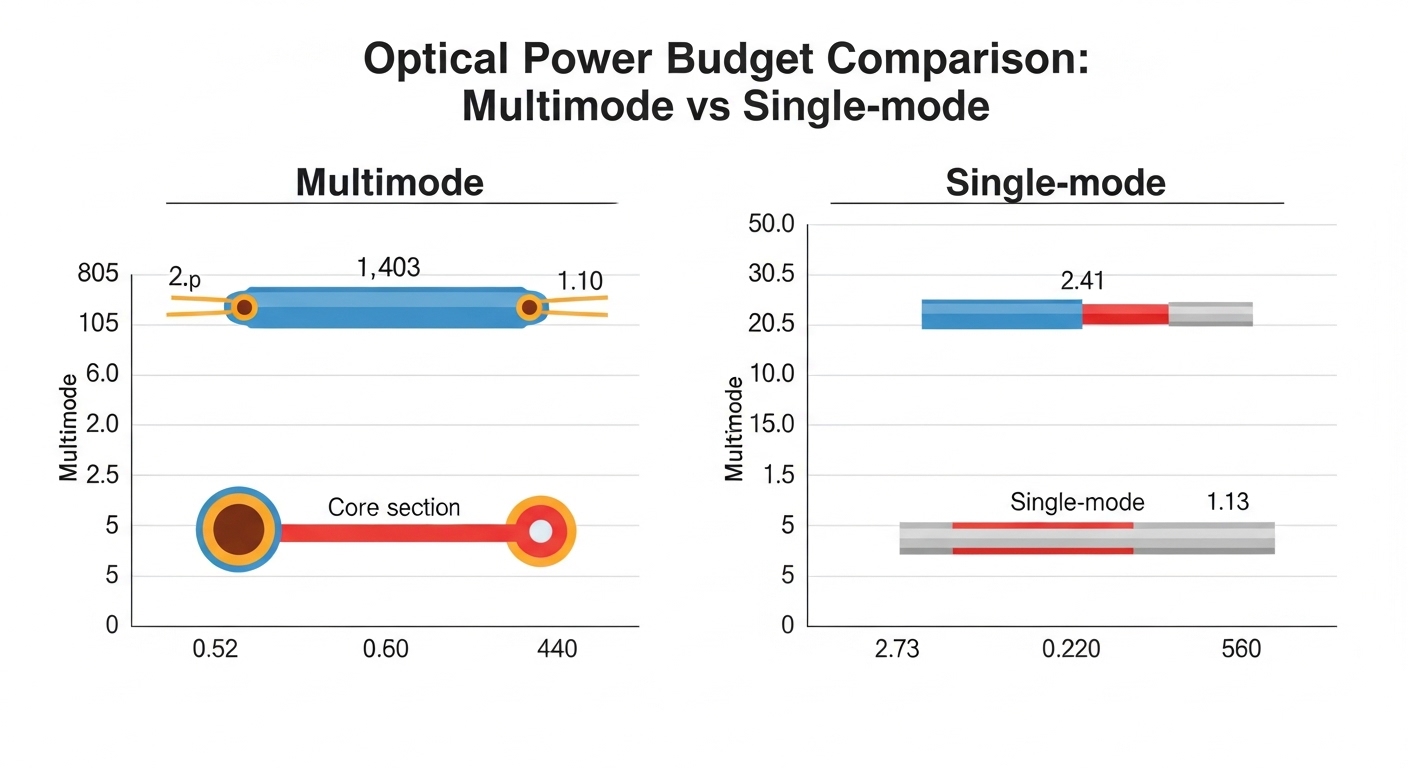

Comparison: multimode vs single-mode loss behavior for data center optics

In a data center, both multimode and single-mode are common, but their loss behavior and operational risks differ. Multimode optics at ~850 nm can be more cost-effective over shorter distances, yet they are sensitive to connector cleanliness and MPO insertion loss. Single-mode optics at ~1310 nm can support longer reach, but the operational risks shift toward connector quality at scale and correct end-to-end planning across patching infrastructure.

Key differences engineers should account for

- Connector style: MPO/MTP for many high-speed multimode links increases the importance of insertion loss control and polarity/lane management.

- Excess loss sensitivity: Contamination can create large dB penalties; multimode budgets often have less margin at higher speeds.

- Plant documentation: Single-mode deployments often span more infrastructure layers, so route documentation errors become a major loss driver.

- Temperature and aging: Higher temperatures can affect transceiver output power stability; aging and remating can degrade connector performance.

When you standardize your approach, you reduce the “it depends” factor. The goal is to make fiber loss management repeatable across racks, rows, and pods.

Selection criteria checklist for data center fiber loss control

When choosing transceivers for a data center, engineers should evaluate optical performance in the context of the installed plant and expected operational events. Use this ordered checklist during design, procurement, and acceptance testing.

- Distance and route loss: Confirm planned fiber length and measured attenuation (not only labeled lengths).

- Connector and splice counts: Count every patch panel interface and splice; MPO links require extra attention.

- Transceiver budget fit: Verify vendor optical budget with your measured losses; require margin for future MAC activity.

- Switch compatibility: Ensure the transceiver type is supported by the exact switch model and software version (and confirm whether optics vendor restrictions apply).

- DOM support and telemetry: Prefer modules that expose Tx/Rx power and diagnostic flags to your monitoring stack.

- Operating temperature and airflow: Verify transceiver temperature range and confirm rack cooling and inlet temps.

- Vendor lock-in and firmware risk: Consider vendor lock-in risk and whether third-party optics are validated for your specific platform.

- Cleaning and maintenance plan: Plan connector cleaning tooling and remating procedures before rollout.

For concrete part examples, teams commonly compare OEM and third-party optics such as Cisco-branded modules and compatible equivalents like Finisar and FS.com families (exact models vary by interface speed and reach). Always validate against your switch’s supported optics matrix, as published in the switch platform documentation, and the optics datasheet for DOM behavior.

Common mistakes and troubleshooting tips for fiber loss

Even experienced teams run into predictable failure modes. Below are common pitfalls with root causes and solutions that field engineers can apply immediately.

Mistake: “It passes continuity, so the link is fine”

Root cause: Continuity checks do not measure optical power or connector insertion loss. A link can pass continuity while still exceeding the transceiver optical budget due to excess loss.

Solution: Measure end-to-end optical loss with a calibrated source and meter (or OTDR for pinpointing). Record results per patch segment and compare to the transceiver budget.

Mistake: Mixing patch cords and connectors without tracking loss

Root cause: Teams may substitute patch cords during maintenance. Different cable constructions and connector types (especially MPO/MTP) can change insertion loss and lane balance, pushing the link into a marginal operating region.

Solution: Enforce patch cord standards by speed and connector type. During change control, require proof of patch cord type and measured insertion loss for high-speed links.

Mistake: Ignoring connector cleanliness during remates

Root cause: Remating without proper cleaning can add several dB of excess loss, especially on MPO/MTP ferrules and LC end faces. Optical power degradation may be intermittent, leading to confusing “works after reboot” behavior.

Solution: Implement strict cleaning SOPs: inspect end faces, clean with validated methods, and only then remate. After cleaning, re-measure loss on the affected link group.

Mistake: Assuming labeled fiber length equals optical path length

Root cause: In many data center builds, labeled lengths exclude patch panel slack loops, reroutes, and additional jumpers added during staging. That hidden extra length can consume your margin.

Solution: Use as-built documentation and validate with measured OTDR distance when margins are tight. Require engineering sign-off when a route changes after initial cabling acceptance.
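A quick check of labeled length against OTDR distance can flag routes that need review. This is a sketch under stated assumptions: the 5 m tolerance, the attenuation figure, the optional reach limit, and the `length_discrepancy` helper are all hypothetical.

```python
def length_discrepancy(labeled_m, otdr_m, fiber_db_per_km,
                       tolerance_m=5.0, max_reach_m=None):
    """Compare labeled length with OTDR-measured length for one route."""
    extra_m = otdr_m - labeled_m
    # Only extra length adds attenuation; a shorter route adds none.
    extra_loss_db = max(extra_m, 0.0) / 1000.0 * fiber_db_per_km
    return {
        "extra_m": extra_m,
        "extra_loss_db": extra_loss_db,
        "needs_review": abs(extra_m) > tolerance_m,
        "over_reach": max_reach_m is not None and otdr_m > max_reach_m,
    }


# Illustrative: labeled 80 m, OTDR shows 112 m, 3.0 dB/km multimode at 850 nm.
result = length_discrepancy(80.0, 112.0, 3.0, max_reach_m=100.0)
print(result["needs_review"], result["over_reach"])  # True True
```

In this example the added attenuation is small (about 0.1 dB), but the route now exceeds the assumed 100 m reach limit, which is often the more serious consequence on short multimode links.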

Mistake: Overlooking temperature and airflow effects on receiver margin

Root cause: Transceiver output power and bias can drift with temperature. In hot aisles or constrained airflow, the link can become marginal even if the optical loss is within budget.

Solution: Validate transceiver operating temperature, inlet temps, and airflow paths. Use DOM telemetry to correlate error counters with thermal conditions.
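To correlate errors with thermal conditions, a plain Pearson coefficient over paired samples is often enough for a first look. The sketch below assumes you have aligned module temperature readings with per-interval error counts; the sample data and the `pearson` helper are illustrative, and a high coefficient suggests a relationship rather than proving a cause.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant series carries no correlation information
    return cov / math.sqrt(var_x * var_y)


# Illustrative samples: module temperature (°C) and errors per interval.
temps_c = [45, 50, 55, 60, 65]
errors = [0, 2, 5, 9, 14]
print(f"r = {pearson(temps_c, errors):.2f}")
```

A coefficient close to 1 on a link whose measured loss is within budget points toward airflow or thermal causes rather than the fiber plant.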

Cost and ROI: what fiber loss management is really worth

Optics and cabling are only part of the total cost. In most data center environments, the ROI comes from reducing downtime, avoiding expensive emergency cabling work, and preventing repeat failures. Price varies by vendor and speed, but a practical budgeting pattern is: OEM optics can cost more upfront than third-party compatible modules, while third-party options can reduce capex if they are validated and supported by your platform.

For example, a typical 10G SFP+ SR module might range from tens to low hundreds of dollars depending on brand and spec; 25G and 100G optics can cost substantially more, especially for vendor-validated parts. The TCO improves when you include testing labor, cleaning supplies, and spares planning. A field team that can identify excess loss locations quickly often prevents multiple truck rolls and prolonged incident timelines.

If you want a measurable improvement, treat loss management as an operational program: enforce acceptance thresholds, standardize patch cords, and use telemetry to catch margin erosion early.

FAQ

How much fiber loss margin should we keep for data center optics?

Keep margin based on the vendor optical budget and your measured plant losses, then add operational slack for MAC events and connector wear. Practically, teams often set acceptance thresholds that leave headroom beyond the minimum budget, especially for 25G and 100G optics where receiver thresholds are tighter.

Does cleaning matter if the link is already “working”?

Yes. A dirty connector can still allow a link to come up while operating with reduced margin, leading to intermittent errors under temperature changes or after small power drift. Clean and re-measure loss when you see rising error counters or telemetry trends.

What is the fastest way to pinpoint excess loss in a data center patch panel?

Use an OTDR for fiber-level location and then optical power measurements per patch segment to isolate connector groups. This combination helps you avoid blanket recabling and focuses work on the highest-loss interfaces.

Are third-party transceivers safe for a data center?

They can be safe if they are validated for your exact switch model and software release, and if DOM behavior matches what your monitoring expects. The risk is not just optics performance; it is also compatibility, management quirks, and return policy constraints.

Which connector type causes more data center optical loss problems: LC or MPO/MTP?

MPO/MTP often causes more issues in high-speed multimode links because insertion loss and lane balance effects are amplified across multiple lanes. LC connectors can also fail, but the operational failure pattern is frequently more visible and easier to isolate per individual connector.

What telemetry signals indicate fiber loss is getting worse?

Look for rising error counters, changing FEC correction rates, and drifting Tx/Rx optical power trends from DOM telemetry. When these correlate with a specific link group or route, it strongly suggests a physical plant change such as contamination or connector damage.

Fiber loss management in a data center succeeds when you connect optical budgets to measured reality, enforce connector cleanliness, and use telemetry to detect margin erosion early. Next, review your transceiver and cabling standards to align procurement, acceptance testing, and operational change control.

Expert bio: I lead field-ready optical and network reliability programs, translating vendor datasheets into measurable acceptance tests and runbooks for live data center deployments. My focus is reducing outage risk through margin-based design, disciplined change control, and practical troubleshooting at the patch level.