When a fiber link starts degrading, outages often look random until you correlate optics behavior with traffic, temperature, and error counters. This article explains how a digital twin SFP models transceiver health so teams can predict failures and schedule maintenance before link loss. It helps network engineers, optical field technicians, and reliability teams who manage 1G to 10G SFP/SFP+ deployments in data centers and industrial sites.

Digital twin SFP vs “read-only optics telemetry”: what changes?

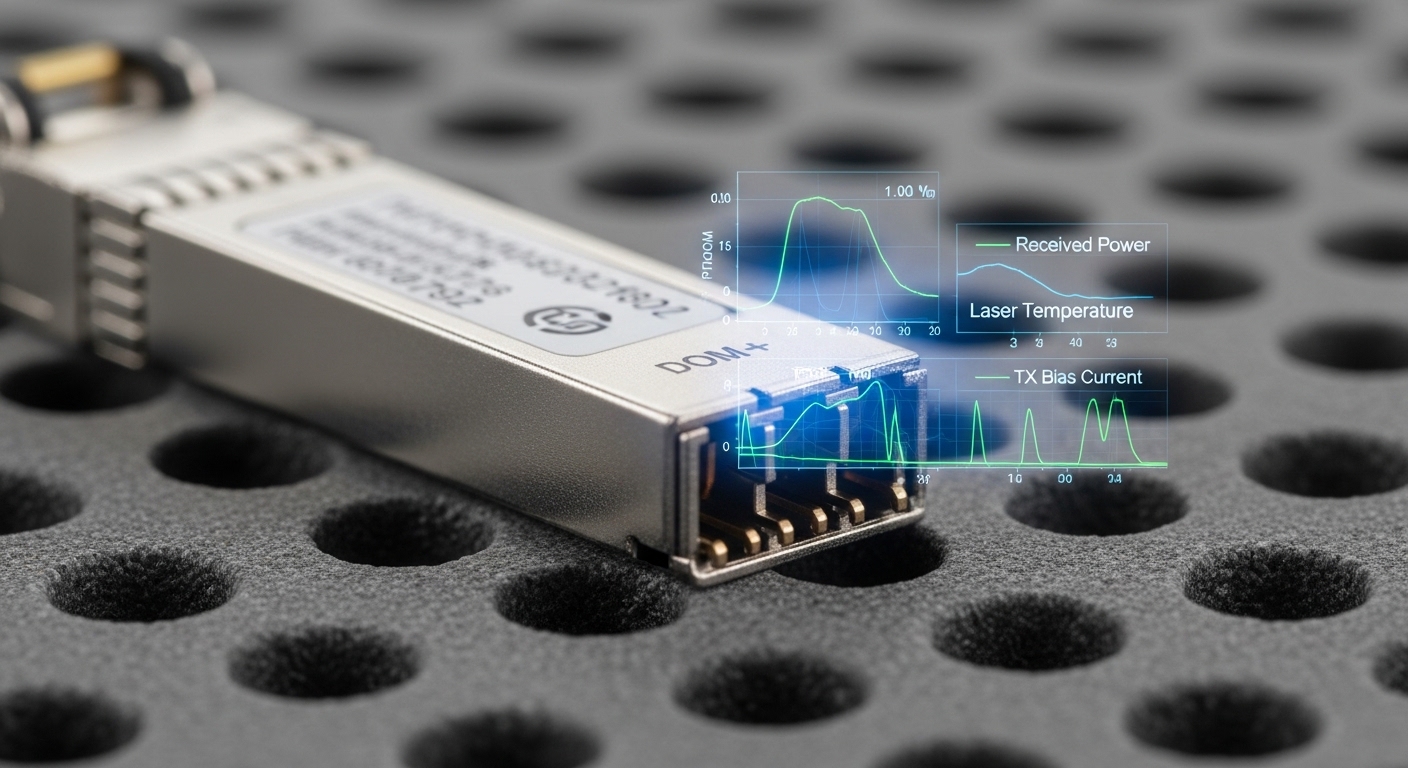

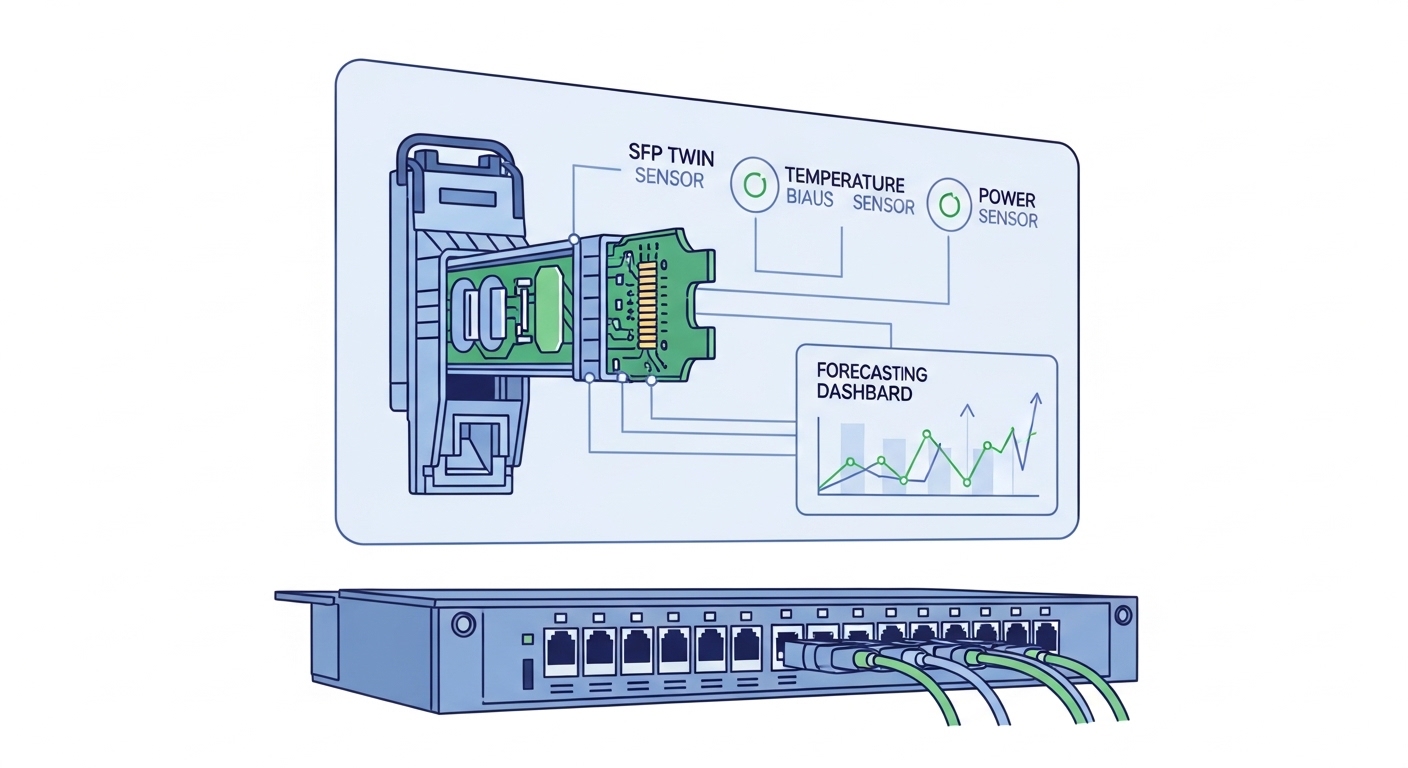

A classic monitoring setup polls DOM (Digital Optical Monitoring) values and raises alerts after thresholds are crossed. A digital twin SFP goes further by building a physics-informed and metrics-driven model of the optical path and electronics so you can forecast drift. In practice, the twin fuses DOM trends (TX bias current, laser temperature, received power, module voltage) with link-layer error signals (LOS/LOF, FEC counters where applicable, and CRC/Symbol errors). The result is earlier diagnosis of mechanisms such as laser aging, connector contamination, and thermal overstress.

For teams using IEEE 802.3 Ethernet PHYs, the key is that the optics are part of the physical layer performance envelope. IEEE 802.3 defines electrical/optical behavior and link integrity expectations, while vendor datasheets define DOM registers and absolute maximum ratings. A predictive model helps you act before the system transitions from “degraded but stable” to “link down.” Source: IEEE 802.3

What the digital twin actually models

Field deployments typically see three dominant failure precursors. First, laser output power decay (TX power drops for a given bias current) due to aging and packaging stress. Second, photodiode responsivity drift and receiver sensitivity changes that show up as received power trending downward. Third, thermal and mechanical stress driven by airflow changes, blocked vents, or higher ambient temperatures near the cage.

A good twin also models environment and installation variables: fiber type (OM3/OM4 multimode vs single-mode), connector grade, patch-panel cleanliness, and link budget margins. In real networks, these are not static; they change after moves/adds/changes or when racks are reconfigured. The predictive layer uses time-series forecasting and anomaly detection to estimate remaining useful life (RUL) for each module and fiber hop.
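As a rough illustration of the forecasting step, the sketch below fits a straight line to received-power samples and extrapolates to the day the trend would reach a floor value. It assumes drift is approximately linear over the observation window, which real modules may not follow; `estimate_rul_days`, `floor_dbm`, and the idea of a single floor value are hypothetical choices made for this example.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def estimate_rul_days(days, rx_power_dbm, floor_dbm):
    """Extrapolate the RX power trend to the day it reaches floor_dbm.

    Returns the remaining days counted from the last sample, 0.0 if the
    trend line is already at or below the floor, or None when the trend
    is flat or improving (no crossing can be forecast).
    """
    slope, intercept = fit_line(days, rx_power_dbm)
    if slope >= 0:
        return None
    crossing_day = (floor_dbm - intercept) / slope
    return max(0.0, crossing_day - days[-1])
```

In practice the floor would likely come from the datasheet receiver sensitivity plus a safety margin, and a production twin would usually replace the straight line with a model that handles seasonality and step changes.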

Specs and compatibility: which SFP types can be “twin-ready”?

Not every SFP is equally suited for digital-twin predictive maintenance. The twin needs reliable telemetry, stable register access, and predictable optical behavior over temperature. Most modern SFP/SFP+ modules implement the SFF-8472 DOM interface, but the optional fields, calibration mode, and update behavior vary by vendor and switch platform. Validate DOM polling stability and confirm that the switch exposes module health counters consistently.
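One way to check polling stability is to scan a port's sample history for gaps and for long runs of identical readings. The sketch below is a minimal example under stated assumptions: samples arrive as `(timestamp_s, value)` pairs sorted by time, and the function name `telemetry_health` and the `max_repeat` cutoff are hypothetical. DOM values are quantized, so short runs of identical readings can be legitimate; the cutoff needs tuning per platform.

```python
def telemetry_health(samples, poll_interval_s, max_repeat=5):
    """Summarize gaps and stale runs in one DOM metric's history.

    samples: list of (timestamp_s, value) tuples sorted by timestamp.
    """
    missed = 0
    run = 1
    longest_repeat = 1
    for (t_prev, v_prev), (t_cur, v_cur) in zip(samples, samples[1:]):
        # A gap spanning k polling intervals implies k - 1 missed polls.
        missed += max(0, round((t_cur - t_prev) / poll_interval_s) - 1)
        # Track the longest run of identical consecutive values.
        run = run + 1 if v_cur == v_prev else 1
        longest_repeat = max(longest_repeat, run)
    expected = len(samples) + missed
    return {
        "missed_polls": missed,
        "miss_ratio": missed / expected if expected else 0.0,
        "stale": longest_repeat >= max_repeat,
    }
```

Windows flagged as stale or gap-heavy can then be excluded from training rather than treated as real anomalies.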

Below is a practical comparison of common SFP optical categories for data center and enterprise fiber. Note that “reach” is only one axis; the twin also depends on whether the link budget has headroom to accommodate drift before BER/CRC counters spike.

| Module class | Typical wavelength | Reach (typ.) | Connector | DOM support | Operating temp | Common twin failure signals |
|---|---|---|---|---|---|---|
| 10G SFP+ SR | 850 nm | 300 m (OM3), 400 m (OM4) | LC | Yes (SFF-8472; optional fields vary by vendor) | 0 to 70 °C (often) | TX bias drift, received power decay, increased error rate |
| 10G SFP+ LR | 1310 nm | 10 km (SMF) | LC | Yes | -5 to 70 °C (often) | RX sensitivity margin loss, connector contamination patterns |
| 1G SFP SX/LX | 850/1310 nm | 550 m (OM2) to 10 km (SMF) | LC | Yes | -5 to 70 °C (often) | Gradual power drop, temperature coefficient changes |

Example parts used in field audits include Cisco SFP-10G-SR, Finisar/II-VI family modules such as FTLX8571D3BCL, and third-party equivalents like FS.com SFP-10GSR-85. Always verify that your switch platform supports DOM reads for these modules without excessive timeouts or “ghost” alarms. Source: Cisco SFP compatibility guidance

Pro Tip: In pilot deployments, the earliest predictive signal is often not received power alone. Engineers who succeed usually model relationships between TX bias current, laser temperature, and slope efficiency. When that relationship shifts, you can forecast degradation even before absolute power crosses a threshold.
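The relationship described in the tip can be approximated with a simple ratio of optical output to bias current. The sketch below is a hedged example, not a calibrated laser model: it uses optical milliwatts per milliamp of bias as a crude stand-in for slope efficiency (it ignores threshold current), and the names `efficiency_proxy` and `efficiency_drop` are hypothetical.

```python
def dbm_to_mw(dbm):
    """Convert optical power from dBm to milliwatts."""
    return 10 ** (dbm / 10)


def efficiency_proxy(tx_power_dbm, bias_ma):
    """Optical mW per mA of bias: a crude stand-in for slope efficiency."""
    return dbm_to_mw(tx_power_dbm) / bias_ma


def efficiency_drop(baseline, recent):
    """Fractional drop in mean efficiency proxy between two windows.

    baseline, recent: lists of (tx_power_dbm, bias_ma) samples.
    A positive result means the laser now needs more bias per mW.
    """
    base = sum(efficiency_proxy(p, i) for p, i in baseline) / len(baseline)
    now = sum(efficiency_proxy(p, i) for p, i in recent) / len(recent)
    return (base - now) / base
```

For example, a module that still reports 0 dBm TX power but has moved from 5.0 mA to 6.25 mA of bias shows a 20% drop in this proxy, even though a power threshold alarm would stay silent.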

Decision matrix for twin readiness

Use this matrix to judge whether a given module family and switch combination will produce stable twin inputs. It is intentionally practical: it reflects what breaks in the lab and in the rack.

| Criteria | Best case | Acceptable | Risky |
|---|---|---|---|
| DOM polling reliability | Consistent register reads, no timeouts | Occasional missed samples | Frequent DOM failures or stale values |
| Telemetry granularity | Frequent updates (e.g., sub-minute) | Minute-level trends | Only event-based telemetry |
| Switch error counters availability | Per-port CRC and error counters accessible | Aggregated interface stats | No reliable link-layer counters |
| Link budget margin | Operational headroom for drift | Moderate margin | Near-threshold links |
| Operating temperature stability | Documented airflow and ambient sensors | Limited ambient data | Unknown thermal hotspots |

Head-to-head: predictive maintenance value vs cost and operational change

Compared to “alert on threshold,” a digital twin SFP requires more integration work: telemetry collection, time-series storage, and a forecasting model. The payoff is fewer surprise outages and more targeted maintenance. In a typical environment, that means cleaning a specific connector pair or reseating a single module rather than replacing optics in bulk.

Cost depends on whether you use an OEM approach (vendor tooling, tighter support, higher unit cost) or a third-party optics + monitoring approach (lower unit cost, more integration effort). For many teams, the largest TCO driver is not the transceiver price; it is downtime risk, labor hours, and the cost of escalations. A twin can also reduce truck rolls by prioritizing the links with the shortest predicted RUL.

Real-world deployment scenario (what it looks like)

In a 3-tier data center leaf-spine topology, a team runs 48-port 10G ToR switches with 8 active uplinks per leaf, using 10G SFP+ SR optics to a structured cabling plant. Over a 6-month period, they observed that two out of twelve affected uplinks developed rising interface CRC errors during hot months. After deploying a digital twin SFP model, the system flagged early drift: TX bias current increased while received power failed to recover after a routine reseat. Maintenance targeted the patch panel for those specific LC pairs, cleaned connectors, and verified margin; link stability returned without replacing the entire transceiver pool.

Operationally, this reduced “unknown cause” escalations and allowed planned maintenance windows. Importantly, they still monitored link-layer counters because the twin is a forecast tool, not a substitute for protocol-level health checks.

Selection criteria checklist: how engineers choose the right twin approach

Before you invest in a digital twin SFP program, engineers typically run a structured evaluation. The list below reflects what works across both OEM and third-party optics ecosystems.

- Distance and optical budget: confirm link budget headroom using module specs and measured fiber loss, not only “rated reach.”

- Switch compatibility: validate DOM reads and error counter access on your exact switch model and firmware.

- DOM and telemetry model fit: ensure the twin can ingest stable registers for temperature, bias, and received power.

- Forecasting strategy: decide whether you need RUL scoring per module or anomaly detection per port.

- Operating temperature range: align module grade (industrial vs commercial) with rack airflow realities.

- Connector and cabling maturity: if patch panels are frequently reworked, prioritize twin predictions that separate contamination vs aging.

- Vendor lock-in risk: evaluate whether your monitoring stack can support multiple transceiver vendors and firmware revisions.
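For the first checklist item, link budget headroom is simple arithmetic in dB. The sketch below shows one way to compute it, either from datasheet and measured loss figures or directly from a measured receive power; the function names are hypothetical and the numbers in the usage note are illustrative only, not taken from any specific datasheet.

```python
def link_margin_db(tx_power_dbm, rx_sensitivity_dbm, fiber_loss_db,
                   connector_loss_db, penalties_db=0.0):
    """Margin from a budget: expected RX power minus receiver sensitivity."""
    rx_expected_dbm = (tx_power_dbm - fiber_loss_db
                       - connector_loss_db - penalties_db)
    return rx_expected_dbm - rx_sensitivity_dbm


def measured_margin_db(rx_power_dbm, rx_sensitivity_dbm):
    """Margin from a measured (DOM or power meter) RX reading."""
    return rx_power_dbm - rx_sensitivity_dbm
```

With an assumed -2.0 dBm launch power, -11.0 dBm sensitivity, 1.0 dB of fiber loss, and 1.5 dB of connector loss, `link_margin_db` returns 6.5 dB. Comparing that figure with `measured_margin_db` at install time gives a quick check on whether the cabling plant matches its paperwork.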

Common pitfalls and troubleshooting tips (with root cause)

Even well-designed digital twin SFP systems fail when telemetry quality or assumptions drift. Here are field-tested pitfalls and how to correct them.

- Pitfall 1: Twin flags “laser aging” but the real cause is connector contamination.

  Root cause: received power drops can be caused by dirt or micro-bends, not only aging.

  Solution: correlate twin predictions with physical maintenance logs and inspect LC endfaces; verify by cleaning and re-measuring optical power. If error counters drop after cleaning, update the model features to include maintenance events as labels.

- Pitfall 2: DOM telemetry is intermittent, creating false anomalies.

  Root cause: switch firmware or incompatible transceiver DOM implementations can cause stale reads or timeouts.

  Solution: run a telemetry health check: validate sample timestamps, detect stale values, and exclude missing windows from model training.

- Pitfall 3: Model predicts failure but the link stays up because BER margin was larger than expected.

  Root cause: using “catalog reach” instead of measured loss leads to optimistic drift tolerance.

  Solution: calibrate the twin using measured optical power at install time and include the actual link budget margin in the model.

- Pitfall 4: Temperature effects are misattributed to aging.

  Root cause: rack airflow changes shift laser temperature and bias behavior, mimicking long-term drift.

  Solution: feed ambient temperature and airflow indicators into the twin; normalize telemetry by temperature and compare residuals rather than raw values.
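The temperature normalization suggested for Pitfall 4 can be sketched as a baseline fit followed by a residual calculation. This is a minimal example that assumes bias current varies roughly linearly with temperature over the operating range, which may not hold for every laser type; `temperature_residuals` and the sample layout are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def temperature_residuals(baseline, recent):
    """Bias current left unexplained by temperature.

    baseline, recent: lists of (temp_c, bias_ma) samples. The baseline
    window defines the expected bias at each temperature; the result is
    recent bias minus that expectation, one value per recent sample.
    """
    slope, intercept = fit_line([t for t, _ in baseline],
                                [b for _, b in baseline])
    return [b - (slope * t + intercept) for t, b in recent]
```

A residual that stays near zero while raw bias rises points to a thermal cause; a residual that grows at constant temperature is more consistent with aging.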

Cost and ROI note: budgeting for a digital twin SFP program

Unit pricing for SFP/SFP+ optics varies widely by vendor and grade, but in many enterprise purchases, optics cost is only a fraction of the labor and downtime risk. A realistic ROI model treats the twin as a reliability investment: it reduces unplanned outages and cuts replacement churn. OEM optics may cost more but can reduce compatibility friction and provide stronger support for DOM behaviors; third-party modules often reduce purchase cost but can increase integration and validation time.

From a TCO perspective, account for telemetry infrastructure (switch polling, time-series storage, and dashboards) and the engineering time to build and validate twin models. In pilot programs, teams often see the break-even point after reducing even one high-severity incident and avoiding several “shotgun” maintenance actions.

Which option should you choose?

If you manage critical uplinks with strict uptime targets and you already collect DOM and interface error counters, a digital twin SFP is the most direct path to predictive maintenance. If you are still using only basic threshold alarms and your telemetry quality is inconsistent, start with a telemetry hardening phase: stabilize DOM reads, standardize polling intervals, and validate error counter visibility on each switch model. For smaller environments with low optical counts, a lightweight anomaly detection approach may deliver value faster than full RUL modeling.

Choose based on your constraints:

- Reliability-focused data center ops: digital twin SFP with RUL scoring per port/module.

- Industrial/OT networks with harsh temperature swings: twin plus temperature-normalized features.

- Teams early in monitoring maturity: start with telemetry validation and measured optical budget calibration.

- Budget-constrained but high volume: third-party optics can work, but only after compatibility and DOM validation per switch firmware.

Next step: review optical transceiver monitoring best practices to structure your optics monitoring pipeline and labeling so the twin remains accurate over time.

FAQ

Q: Do I need a specific switch model to use a digital twin SFP?

A: Yes, compatibility matters. The twin depends on consistent DOM reads and access to per-port error counters; validate this on your exact switch model and firmware before scaling.

Q: Will a digital twin work with third-party SFP modules?

A: Often yes, but not automatically. You must verify DOM register behavior, update cadence, and that the switch does not mark modules as unsupported or intermittently unreadable.

Q: What optical failure modes can a twin predict reliably?

A: Most reliably, it predicts drift patterns tied to aging and thermal stress, and it can separate some contamination patterns when correlated with maintenance events. However, it still requires physical inspection for confirmation when uncertainty is high.

Q: How do I set thresholds if the twin forecasts failures?

A: Use the twin’s RUL score or anomaly severity to drive maintenance windows, then keep conservative hard thresholds as safety nets. The forecasting model should complement, not replace, immediate alarms.
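A minimal sketch of that layering, assuming a single received-power hard threshold and an RUL estimate in days: the hard threshold is checked first so that it always wins over the forecast. The function name, the three action labels, and the 30-day planning horizon are hypothetical.

```python
def maintenance_action(rx_power_dbm, rx_low_alarm_dbm, rul_days,
                       planning_horizon_days=30):
    """Combine a hard threshold with a forecast.

    rul_days may be None when no failure is forecast.
    """
    # Safety net: the hard threshold overrides any forecast.
    if rx_power_dbm <= rx_low_alarm_dbm:
        return "alarm"
    # Forecast: schedule work if failure is predicted within the horizon.
    if rul_days is not None and rul_days <= planning_horizon_days:
        return "schedule"
    return "monitor"
```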

Q: What data should I store to train and validate the twin?

A: Store DOM telemetry time series (bias, temperature, voltage, TX power, RX power where available), interface error counters, link state transitions, and maintenance events. Include timestamps and module serial identifiers if your platform exposes them.
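One possible shape for a stored sample is shown below. Every field name here is a hypothetical choice for illustration; fields that a platform may not expose are marked optional.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass(frozen=True)
class OpticsSample:
    """One polling result for one port (hypothetical schema)."""
    timestamp_s: float
    switch: str
    port: str
    module_serial: Optional[str]    # None if the platform hides it
    bias_ma: float
    temperature_c: float
    voltage_v: float
    tx_power_dbm: Optional[float]   # None where not reported
    rx_power_dbm: Optional[float]   # None where not reported
    crc_errors: int                 # cumulative interface counter
    link_up: bool
    maintenance_event: Optional[str] = None  # e.g. "cleaned", "reseated"
```

Calling `asdict` on a sample yields a plain dictionary that can be written to most time-series or document stores.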

Q: How long should a pilot run before trusting predictions?

A: Many teams run 4 to 12 weeks to capture seasonal temperature effects and establish baseline drift rates, then extend to 6 months for more stable R