In high-power AI data centers, the cabling decision is no longer just about “distance.” It affects switch port behavior, link stability, airflow, and total cost across thousands of inter-rack connections. This article helps data center engineers and field technicians compare DAC vs AOC for GPU-heavy deployments, with practical selection steps and failure-mode troubleshooting you can apply during commissioning. Update date: 2026-05-01.

Prerequisites before you decide: inventory, standards, and link targets

Before comparing DAC vs AOC, confirm your physical layer targets and the switch optics expectations. Most issues come from mismatched speed grades, connector types, or DOM and power constraints rather than from the cable medium itself. For Ethernet, you are typically aligning to IEEE 802.3 specifications for 10G, 25G, 40G, 100G, or 200G PHY behavior, while the transceivers follow vendor datasheets and optics compliance requirements. For structured cabling and pathways, align with ANSI/TIA-568 and related rack and cabling practices.

What to gather in your site sheet

- Port speed and breakout: e.g., 100G breakout to 4x25G, or native 200G.

- Switch model and optic compatibility list: capture the exact platform part number and the vendor’s supported transceiver matrix.

- Planned distance: measure rack-to-rack and ToR-to-spine runs in meters (include slack).

- Connector ecosystem: QSFP28, SFP28, QSFP56, or similar; LC vs MPO/MTP; and whether you need active alignment.

- Environmental constraints: ambient temperature near the rack and any hot-aisle recirculation risk.

- Power and airflow assumptions: you will compare transceiver power budgets and total rack heat.

Expected outcome: you will have a concrete list of “must-match” items so your DAC or AOC selection does not fail at the first link bring-up.
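One way to keep the site sheet checkable is to hold each link as a typed record. The sketch below is only an illustration: the `LinkRecord` class, its field names, and the `missing_items` helper are hypothetical and simply mirror the bullets above, and the rule that zero means "not yet filled in" is an assumption you may want to change.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class LinkRecord:
    """One row of a hypothetical site sheet (field names are illustrative)."""
    link_id: str
    switch_model: str     # exact platform part number
    port_format: str      # e.g. "QSFP28", "SFP28", "QSFP56"
    speed_gbps: int       # configured port speed
    breakout: str         # e.g. "4x25G"; "none" for a native port
    measured_m: float     # connector face to connector face
    slack_m: float        # routing and service-loop allowance
    inlet_temp_c: float   # measured ambient temperature near the rack

    @property
    def planned_m(self) -> float:
        """Distance to buy for: measured run plus slack."""
        return self.measured_m + self.slack_m


def missing_items(rec: LinkRecord) -> list[str]:
    """Names of blank text fields and of numeric fields left at zero or below.

    slack_m is exempt because zero slack is a legitimate (if risky) entry.
    """
    missing = []
    for f in fields(rec):
        value = getattr(rec, f.name)
        if isinstance(value, str) and not value.strip():
            missing.append(f.name)
        elif isinstance(value, (int, float)) and f.name != "slack_m" and value <= 0:
            missing.append(f.name)
    return missing
```

Running `missing_items` over every record before ordering gives a quick list of rows that still need a site visit or a datasheet lookup.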

How DAC vs AOC behaves at the physical layer in AI racks

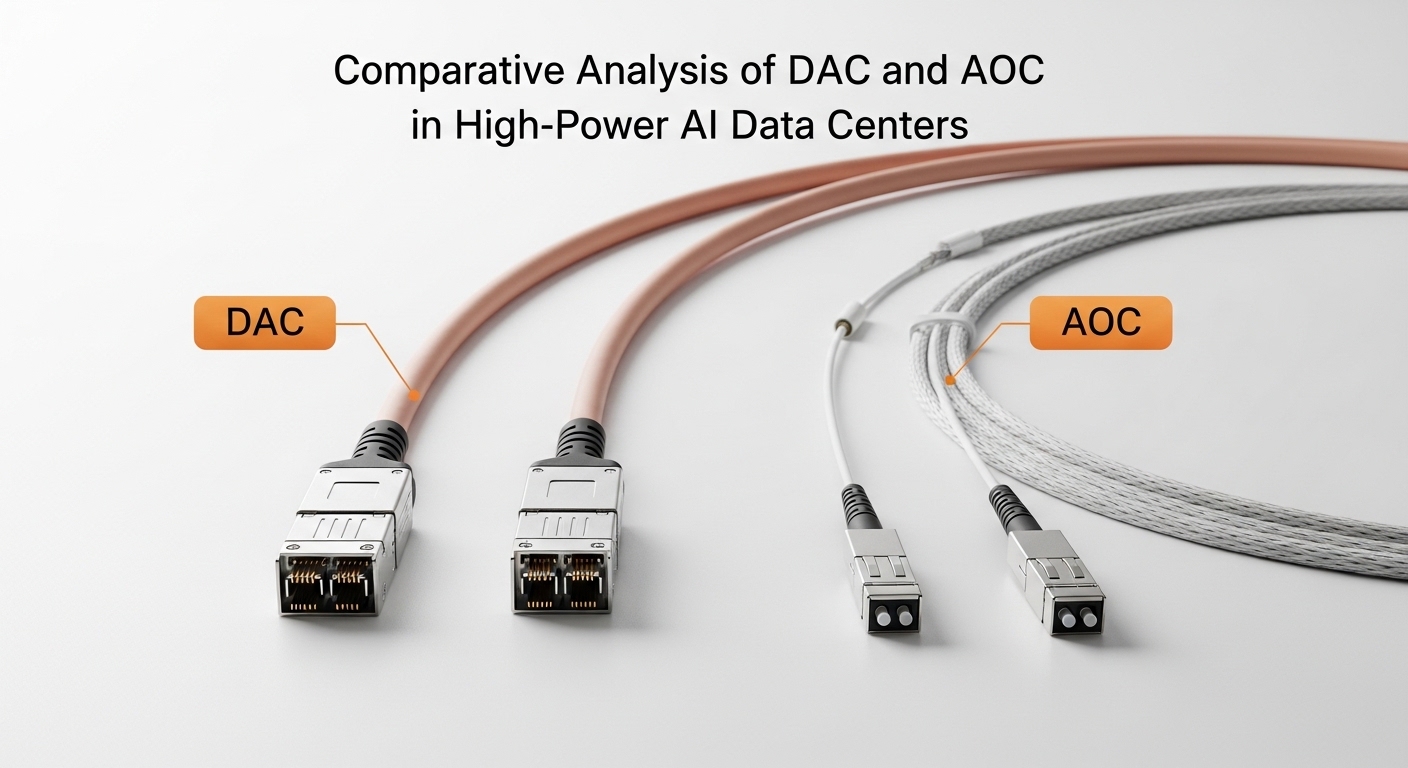

Both DAC and AOC can carry Ethernet at the required data rate, but they use different physical media and electrical/optical architectures. A DAC is a copper twinax cable assembly with fixed module-style connectors on each end. Passive DACs carry the signal without conditioning electronics (typically only an identification EEPROM), while active DACs add signal conditioning to extend reach; either way, latency is low and power draw is modest over very short runs. An AOC is an active optical cable: it converts electrical signals to optical at each end, which allows longer reach and avoids copper-related signal integrity issues along the run.

Key architectures you should expect

- DAC (direct attach copper): copper twinax within a short reach class (commonly 1 m to 7 m depending on speed), integrated connectors (often SFP+ or QSFP28 style), and passive copper transmission characteristics.

- AOC (active optical cable): optical fiber inside an active assembly, with an electrical-to-optical conversion module at each end; typically supports greater reach than DAC at the same rate class.

Why “high-power AI” changes the decision

In AI racks, you have dense airflow patterns and high heat loads. Even small differences in transceiver power can affect inlet temperature and fan duty cycle over time. Also, AI clusters often scale out quickly, so you care about spare inventory, failure replacement time, and the likelihood of “works on my switch” issues when you add new line cards.

Specs comparison: DAC vs AOC for reach, connectors, power, and operating limits

Use the table below as an engineering baseline. Exact values vary by vendor and speed grade, so confirm against datasheets for your chosen transceiver part numbers before purchasing.

| Parameter | DAC (Twinax Copper Assembly) | AOC (Active Optical Cable) |
|---|---|---|
| Typical data rates | 10G, 25G, 40G, 100G, 200G (model-dependent) | 10G, 25G, 40G, 100G, 200G (model-dependent) |
| Typical reach class | ~1 m to 7 m (common for high-rate DAC) | ~5 m to 100 m+ (common for short-reach AOC) |
| Optical wavelength | N/A (copper) | Often 850 nm for short-reach multimode |
| Connector ecosystem | Integrated to switch port format (e.g., QSFP28) | Integrated to switch port format (e.g., QSFP28) or sometimes LC-like ends depending on design |
| Power and heat | Usually lower than active optics for very short runs, but depends on speed | Active conversion increases power; still often acceptable but check rack thermal impact |
| Operating temperature | Vendor-defined range; verify with datasheet | Vendor-defined range; verify with datasheet |
| DOM support | Passive DACs typically expose identification EEPROM data with limited or no live DOM; verify what the platform actually reports | Often present; verify platform compatibility and telemetry behavior |
| EMI and grounding sensitivity | More sensitive to grounding and cable routing near high-power rails | Fiber reduces copper EMI coupling; easier to route across noisy zones |

Expected outcome: you will be able to map your rack geometry and thermal constraints to the correct reach class and connector format before you buy.

Pro Tip: During field installs, the biggest “gotcha” is not reach; it is the switch’s compatibility check (often based on vendor-specific transceiver ID/DOM behavior). Always validate the exact part number against the switch vendor’s supported optics list, then run a controlled link test (loopback or traffic) before you scale out.

Step-by-step implementation: choosing DAC vs AOC for your AI link budget

This section is written as an implementation checklist you can execute during planning and commissioning. It assumes you are selecting transceivers for Ethernet links between GPU servers, ToR switches, and aggregation/spine tiers.

Classify each link by distance and routing constraints

- For each connection, record the measured distance in meters plus slack. Use a tape measure from connector face to connector face.

- Mark links as short (typically within the DAC reach class) or long (beyond that class).

- Note any routing through EMI-heavy zones (near large power distribution, busbars, or dense motor drives).

Expected outcome: you will have a per-link decision category rather than a one-size-fits-all choice.
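The classification above can be sketched as a small function. This is a minimal illustration, not a sizing tool: `classify_link` and `LinkClass` are hypothetical names, and `dac_reach_m` is an input you would take from the datasheet of the specific DAC you are considering, not a value defined here.

```python
from typing import NamedTuple


class LinkClass(NamedTuple):
    planned_m: float   # measured distance plus slack
    category: str      # "short" (within the DAC reach class) or "long"
    emi_heavy: bool    # carried through so routing risk is not lost


def classify_link(measured_m: float, slack_m: float, dac_reach_m: float,
                  emi_heavy: bool = False) -> LinkClass:
    """Label one link as short or long against an assumed DAC reach limit."""
    planned = measured_m + slack_m
    category = "short" if planned <= dac_reach_m else "long"
    return LinkClass(planned, category, emi_heavy)
```

Keeping the EMI flag separate from the distance category means a short link that crosses a noisy corridor still stands out when you review the list.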

Match port format and optics compatibility

- Identify the switch port type (example: QSFP28 vs QSFP56 vs SFP28) and the PHY speed mode.

- Pull the vendor’s supported transceiver list and filter by required speed grade.

- If your platform enforces strict compatibility, avoid “looks similar” modules.

Expected outcome: your selection will pass initial DOM read and link negotiation.
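If you keep the vendor's support matrix as structured data, the filtering step is easy to script. The rows below are made-up placeholders (no real part numbers), and the dictionary keys are an assumed layout, not a format any vendor publishes.

```python
# Hypothetical extract of a vendor support matrix; part numbers are placeholders.
SUPPORT_MATRIX = [
    {"part_number": "EXAMPLE-DAC-100G-2M", "port_format": "QSFP28", "speed_gbps": 100},
    {"part_number": "EXAMPLE-AOC-100G-10M", "port_format": "QSFP28", "speed_gbps": 100},
    {"part_number": "EXAMPLE-DAC-25G-3M", "port_format": "SFP28", "speed_gbps": 25},
]


def supported_parts(matrix, port_format, speed_gbps):
    """Part numbers listed for this port format and speed grade."""
    return [
        row["part_number"]
        for row in matrix
        if row["port_format"] == port_format and row["speed_gbps"] == speed_gbps
    ]


def is_exact_match(matrix, part_number):
    """True only for an exact part-number match; near matches do not count."""
    return any(row["part_number"] == part_number for row in matrix)
```

The exact-match check is deliberate: a part number that differs by one suffix is treated as unsupported until the matrix says otherwise.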

Estimate thermal and power impact across the rack

In many deployments, the simplest approach is to compare vendor datasheet power per transceiver and multiply by the number of active ports. For example, if a short-reach AOC consumes meaningfully more power than a DAC at the same data rate, you may see higher rack inlet temperatures during sustained training. Validate with your facility’s airflow model or at least compare inlet temps before and after swapping a batch of optics.

Expected outcome: you will avoid “it worked in the lab” thermal surprises under full GPU utilization.
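The multiplication described above is simple enough to script per rack. In this sketch the function names are hypothetical and the wattages in the usage note are placeholders chosen for easy arithmetic, not datasheet values; use the per-port figures from your own part numbers.

```python
def rack_optics_power_w(active_ports: int, watts_per_port: float) -> float:
    """Total module power for one rack: ports multiplied by per-port power."""
    return active_ports * watts_per_port


def swap_delta_w(active_ports: int, current_w: float, candidate_w: float) -> float:
    """Extra heat (positive) or savings (negative) from swapping module types."""
    return active_ports * (candidate_w - current_w)
```

For example, with 64 active ports and placeholder figures of 0.5 W per port for the current assembly and 2.0 W for the candidate, `swap_delta_w(64, 0.5, 2.0)` gives 96.0 W of additional heat to account for in the rack's airflow budget.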

Choose reach strategy for scale-out and spares

- For links that will be reused across multiple rack installs, standardize on one DAC length class per topology segment.

- For variable distances, consider AOC reach flexibility to reduce the number of spare lengths you stock.

- Plan spares by failure rate expectations: optics are replaceable, but downtime costs usually dominate.

Expected outcome: fewer part numbers in your spares kit and faster MTTR during incidents.
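One way to see how many part numbers a standardization choice produces is to round each planned run up to the nearest length you intend to stock. The helper names and the stocked lengths in the usage note are assumptions for illustration only.

```python
import bisect
from collections import Counter


def length_class(planned_m, stocked_m):
    """Smallest stocked length that covers the planned run, or None if none does."""
    stocked = sorted(stocked_m)
    i = bisect.bisect_left(stocked, planned_m)
    return stocked[i] if i < len(stocked) else None


def length_histogram(planned_runs_m, stocked_m):
    """Count of links per stocked length; a None key marks runs nothing covers."""
    return Counter(length_class(run, stocked_m) for run in planned_runs_m)
```

With stocked lengths of 1, 3, and 5 m, runs of 0.8, 2.2, 2.9, and 4.0 m collapse to three part numbers (one 1 m, two 3 m, one 5 m). Any run that maps to `None` is a candidate for AOC or for a different topology segment.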

Commission with controlled verification

Bring up links one row at a time and verify link state, error counters, and optical/copper diagnostics. If your switch supports per-port telemetry, confirm that DOM fields populate and remain stable over time. Run a traffic profile that matches your job mix (e.g., sustained east-west flows) and confirm no CRC or alignment errors increase.

Expected outcome: you will detect marginal links early, before you fully power the training workload.
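The "no errors increase" check is a comparison of two counter snapshots taken before and after the traffic run. The sketch below assumes you have already exported counters into plain dictionaries by whatever means your platform offers; the counter names `crc_errors` and `alignment_errors` are placeholders, not any vendor's field names.

```python
def rising_error_ports(before, after, counters=("crc_errors", "alignment_errors")):
    """Return {port: {counter: increase}} for counters that grew between snapshots.

    Ports missing from `before` are compared against zero.
    """
    flagged = {}
    for port, now in after.items():
        prev = before.get(port, {})
        rising = {}
        for name in counters:
            delta = now.get(name, 0) - prev.get(name, 0)
            if delta > 0:
                rising[name] = delta
        if rising:
            flagged[port] = rising
    return flagged
```

An empty result after a sustained traffic profile is the pass condition; any port that appears in the result is worth reseating or rerouting before the row is handed over.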

Selection criteria checklist engineers actually use

When teams debate DAC vs AOC, the decision is usually constrained by compatibility and operations. Use this ordered checklist for each link group.

- Distance and reach margin: choose a reach class that includes slack and installation tolerance; avoid operating at the edge of the spec.

- Budget and TCO: include spares, replacement logistics, and downtime cost, not only module purchase price.

- Switch compatibility: confirm the exact transceiver part number is supported; verify DOM telemetry behavior.

- DOM and diagnostic visibility: ensure the platform can read module temperature, voltage, and (for optical assemblies) bias current and optical power, alongside interface error counters; prefer consistent telemetry across vendors.

- Operating temperature: check datasheet ranges and compare to measured inlet temperatures during peak training.

- Vendor lock-in risk: if you rely on a single OEM, plan for supply constraints and pricing volatility.

- Cabling and EMI environment: use AOC in noisy routing corridors where copper signal integrity is a recurring issue.

Expected outcome: a repeatable, defensible choice that survives audits and future refresh cycles.

Common pitfalls and troubleshooting tips (top failure modes)

Below are frequent real-world problems when choosing between DAC vs AOC, along with root causes and fixes. Treat these as your first-pass troubleshooting path.

Failure mode 1: Link does not come up after insertion

- Root cause: incompatible transceiver ID/DOM expectations or unsupported speed mode on the switch platform.

- Solution: verify the transceiver part number against the switch vendor’s supported optics list; reseat the module; confirm port speed configuration matches the transceiver capability.

Failure mode 2: High CRC or interface errors under load

- Root cause: marginal signal integrity due to excessive run length, improper routing/bend radius, or inadequate grounding for DAC.

- Solution: shorten the path if possible; reroute away from power cables; for DAC, ensure correct bend handling and connector seating; for AOC, confirm the assembly is not damaged and that connectors are clean if applicable.

Failure mode 3: Intermittent link drops during thermal peaks

- Root cause: temperature operating margin exceeded, especially in hot-aisle recirculation where transceiver temperature rises faster than expected.

- Solution: measure inlet and transceiver-reported temperatures (DOM if available); improve airflow management (blanking panels, fan curve verification); if needed, switch to a transceiver with a better validated temperature range for your environment.
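For modules that report temperature through DOM, the margin check in failure mode 3 can be sketched as follows. The rated maximum must come from the datasheet for your part number, and the 10 °C minimum margin used as the default here is an arbitrary assumption, not a recommendation from any standard.

```python
def thermal_margin_c(reported_temp_c: float, rated_max_c: float) -> float:
    """Headroom between the module's reported temperature and its rated maximum."""
    return rated_max_c - reported_temp_c


def ports_below_margin(readings, rated_max_c, min_margin_c=10.0):
    """Sorted port names whose headroom is smaller than the chosen minimum."""
    return sorted(
        port
        for port, temp_c in readings.items()
        if thermal_margin_c(temp_c, rated_max_c) < min_margin_c
    )
```

Sampling the readings during peak training load, not at idle, is what makes this comparison meaningful.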

Failure mode 4 (bonus): DOM fields show but telemetry is inconsistent

- Root cause: partial DOM mapping differences across vendors or firmware expectations mismatch.

- Solution: run a telemetry consistency check across a small batch; standardize on a vendor for the same transceiver class within a cluster.
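A telemetry consistency check over a small batch might look like the sketch below. The field names, the use of the batch median as a reference, and the 15 °C tolerance are all assumptions made for illustration; adapt them to what your platform actually exports.

```python
from statistics import median

# Hypothetical field names for exported DOM readings.
EXPECTED_FIELDS = ("temperature_c", "voltage_v", "tx_power_dbm", "rx_power_dbm")


def telemetry_issues(batch, expected=EXPECTED_FIELDS, temp_tolerance_c=15.0):
    """Return {port: [issues]} for missing fields and temperature outliers."""
    temps = [m["temperature_c"] for m in batch.values()
             if m.get("temperature_c") is not None]
    reference = median(temps) if temps else None
    issues = {}
    for port, module in batch.items():
        found = [f"missing {name}" for name in expected if module.get(name) is None]
        temp = module.get("temperature_c")
        if temp is not None and reference is not None:
            if abs(temp - reference) > temp_tolerance_c:
                found.append("temperature outlier")
        if found:
            issues[port] = found
    return issues
```

A module that reports 0.0 °C while its neighbors sit near 40 °C is more likely a mapping problem than a cold module, which is the kind of case the outlier test is meant to surface.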

Expected outcome: faster isolation of whether the issue is compatibility, signal integrity, thermal margin, or telemetry mapping.

Cost and ROI note: what to budget for DAC vs AOC in AI clusters

Pricing varies widely by vendor, speed grade, and volume, and copper and active optical assemblies often differ meaningfully in price in enterprise and colocation markets. As a planning assumption, third-party DAC assemblies are often cheaper per port than AOC, while AOC costs more but may reduce operational risk on longer runs and noisy routes. TCO should include expected failure replacement cycles, spare inventory complexity, and the cost of downtime when a link fails during training.

If your network is mostly within short reach and your switch has strict compatibility, DAC can deliver a strong ROI by reducing purchase price and keeping power draw lower per link. If you have a mix of longer runs, frequent rack re-mapping, or known EMI issues, AOC may lower the probability of intermittent faults, which is often worth more than the upfront price delta.
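The trade-off between purchase price and downtime can be made concrete with a rough model. Everything in this sketch is a placeholder: the prices, spare counts, failure counts, and hourly downtime costs are invented to show the arithmetic, and the assumption that the DAC group sees more failures applies only to the hypothetical noisy route in this example.

```python
def link_group_tco(unit_price, links, spare_units, expected_failures,
                   hours_per_failure, downtime_cost_per_hour):
    """Purchase cost (installed plus spares) plus expected downtime cost."""
    purchase = unit_price * (links + spare_units)
    downtime = expected_failures * hours_per_failure * downtime_cost_per_hour
    return purchase + downtime


# Placeholder inputs only; substitute quotes and incident data from your own site.
dac = dict(unit_price=50, links=100, spare_units=10,
           expected_failures=4, hours_per_failure=1)
aoc = dict(unit_price=120, links=100, spare_units=10,
           expected_failures=1, hours_per_failure=1)

for cost_per_hour in (500, 5000):
    print(cost_per_hour,
          link_group_tco(**dac, downtime_cost_per_hour=cost_per_hour),
          link_group_tco(**aoc, downtime_cost_per_hour=cost_per_hour))
```

With these made-up inputs the DAC group is cheaper at 500 per hour of downtime (7500 vs 13700) and more expensive at 5000 per hour (25500 vs 18200), which illustrates why the downtime figure deserves as much scrutiny as the unit price.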

Expected outcome: you will choose based on operational impact and lifecycle cost, not only the initial invoice.

FAQ

Which is better for AI data centers: DAC vs AOC?

For very short distances inside a row or between closely spaced racks, DAC is often the lowest-friction choice. For longer runs, variable routing, or EMI-heavy pathways, AOC can be more reliable because fiber reduces copper-related signal integrity and grounding issues. The best answer depends on your switch compatibility list and measured thermal conditions.

Do DAC and AOC support the same switch ports and speeds?

They can, but only when the transceiver form factor and speed grade match your switch ports. A DAC assembly must be compatible with the port type (for example QSFP28) and the negotiated Ethernet speed mode. Always validate the exact part number and DOM behavior against the switch vendor documentation.

What reach margin should we plan for?

A common practice is to include installation slack and keep your run comfortably inside the rated reach class. If you are near the upper edge of a DAC reach spec, you increase the chance of CRC errors under load and thermal stress. For AOC, still avoid operating at maximum distance unless the vendor datasheet and your site conditions support it.

Will third-party DAC and AOC assemblies work reliably?

Often they do, but reliability depends on strict compatibility rules, DOM telemetry mapping, and vendor quality control. In field rollouts, teams reduce risk by standardizing on one or two vetted suppliers for the same transceiver class. If your platform is strict, OEM or fully validated third-party optics reduce commissioning time.

How do we troubleshoot errors after deployment?

Start by checking port speed negotiation, then review interface counters for CRC, alignment, and symbol errors. For DAC-related issues, inspect routing, connector seating, and bend handling. For AOC, check for assembly damage and confirm any relevant diagnostics and telemetry remain stable during peak thermal load.

Where can I find authoritative compatibility and standards references?

Begin with IEEE 802.3 for Ethernet PHY behavior and the switch vendor’s optics compatibility matrix. For structured cabling practices, reference ANSI/TIA-568 and related implementation guides. For optics electrical and DOM expectations, use the specific transceiver datasheet from the manufacturer.

Expected outcome: you will be able to answer stakeholder questions consistently during procurement and commissioning.

Choosing DAC vs AOC in a high-power AI environment is a physical-layer and operations decision: match reach and compatibility first, then verify thermal and error behavior during real traffic. If you want a related next step, review “How to size a fiber optic link budget for short-reach AI networks” to confirm your optical assumptions before scaling.

Author bio: I have deployed and troubleshot high-speed DAC and AOC links in AI clusters and data center expansions, focusing on compatibility, DOM telemetry, and thermal commissioning. I write implementation-first workflows that field engineers can execute during cutover windows.