DAC vs AOC for 400G: Best practices that prevent link failures

In 400G leaf-spine and high-density server networks, the wrong cabling choice can turn into intermittent link flaps, thermal throttling, or expensive rework. This article helps data center and network engineers apply best practices when choosing Direct Attach Copper (DAC) versus Active Optical Cable (AOC) for 400G links. It covers practical selection criteria and failure-mode troubleshooting. It is written for teams planning 400GBASE-style connectivity over PAM4 electrical lanes (8 × 50G, or 4 × 100G on 112G-class SerDes) in typical QSFP-DD or OSFP form factors. Update date: 2026-05-01.

DAC vs AOC in 400G: what changes operationally

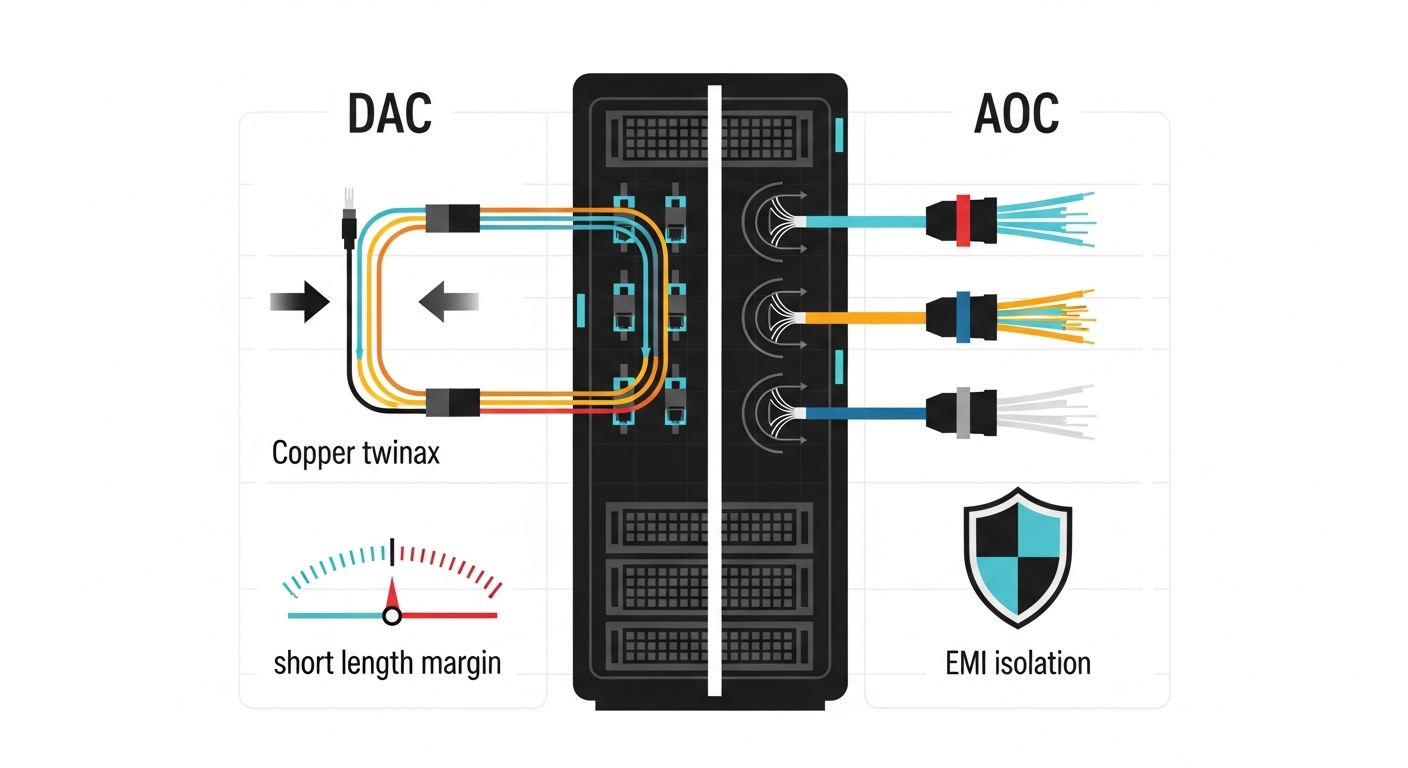

Both DAC and AOC can support short-reach 400G connectivity, but they behave differently under heat, EMI, and installation constraints. DAC is electrical all the way through the cable assembly. AOC converts electrical signals to optical at each end and uses fiber inside the cable. In practice, DAC tends to be simpler and often cheaper up front. AOC tolerates harsher electromagnetic environments, and because its fiber is thinner and lighter than twinax, it reduces cable management stress in dense racks. The IEEE Ethernet PHY behavior still matters. 400G links run either eight 50G PAM4 lanes or four 100G PAM4 lanes, with mandatory RS(544,514) FEC and strict equalization and receiver requirements, so physical-layer margin is not optional.
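To make the lane arithmetic concrete, here is a minimal Python sketch that derives per-lane rates from the 400GBASE-R overheads defined in IEEE 802.3bs: 256b/257b transcoding followed by RS(544,514) FEC. The helper name `lane_plan` is hypothetical. The model ignores every PCS detail beyond those two ratios. It only shows why a 400G link carries 425 Gb/s on the wire and what that means per lane.

```python
from fractions import Fraction

# 400GBASE-R overheads (IEEE 802.3bs): 256b/257b transcoding, then RS(544,514) FEC.
TRANSCODE = Fraction(257, 256)
RS_FEC = Fraction(544, 514)
MAC_RATE_GBPS = Fraction(400)


def lane_plan(lanes: int, bits_per_symbol: int = 2) -> tuple[Fraction, Fraction]:
    """Return (per-lane bit rate in Gb/s, per-lane symbol rate in GBd).

    bits_per_symbol=2 models PAM4 (four amplitude levels carry two bits).
    """
    aggregate = MAC_RATE_GBPS * TRANSCODE * RS_FEC  # 425 Gb/s on the wire
    per_lane = aggregate / lanes
    return per_lane, per_lane / bits_per_symbol


for lanes in (8, 4):
    rate, baud = lane_plan(lanes)
    print(f"{lanes} lanes: {float(rate)} Gb/s per lane, {float(baud)} GBd PAM4")
```

Running it prints 53.125 Gb/s (26.5625 GBd) per lane for eight lanes and 106.25 Gb/s (53.125 GBd) for four lanes. The four-lane case runs at twice the symbol rate, which is where the tighter equalization budgets come from.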

Quick spec comparison (typical 400G short reach)

Use this table as a field reference; exact values vary by vendor and transceiver type (QSFP-DD vs OSFP) and by whether the link is specified for copper twinax or AOC fiber.

| Characteristic | DAC (Direct Attach Copper) | AOC (Active Optical Cable) |
|---|---|---|
| Internal signal path | Electrical (twinax) end to end | Electrical-to-optical conversion at each end, fiber in between |
| Host interface | Plugs into a QSFP-DD or OSFP cage; vendor-specific coding | Plugs into the same QSFP-DD or OSFP electrical cage; vendor-specific coding |
| Typical reach at 400G | Often ~0.5 m to ~3 m for passive assemblies, depending on length grade | Often ~1 m to ~100 m, depending on cable class |
| Power draw (rule of thumb) | Near zero in a passive cable; equalization is done by the host SerDes | Higher; the optical engines at each end draw power from the port |
| EMI sensitivity | Higher susceptibility in noisy cable trays | Lower susceptibility due to optical isolation |
| Thermal behavior | Passive ends add little heat, but thick twinax bundles can obstruct airflow | Active ends add heat inside the port cage; thinner cable eases airflow |
| Operational limits | Length and insertion loss budgets are strict; poor routing can break margins | Fiber bend radius and handling can erode margins |
| Temperature range (typical) | 0°C to 70°C (varies by product grade) | 0°C to 70°C, or wider for extended grades (varies) |

For standards grounding, check the 400GBASE-R PCS, FEC, and link training requirements in IEEE 802.3. 400GBASE-R was introduced in IEEE 802.3bs, and 100G-per-lane copper PHYs such as 400GBASE-CR4 were added in IEEE 802.3ck. For module compliance expectations, rely on vendor datasheets and the host transceiver interface specifications for your switch model. External references: IEEE 802.3 standard and IEC general fiber handling guidance.

Selection best practices for 400G DAC vs AOC

The goal is not “which is better,” but which preserves link margin under your installation realities. Engineers should treat this as a PHY budgeting exercise plus a mechanical routing plan. Start with the distance and host compatibility, then validate DOM reporting, thermal headroom, and environmental constraints. Finally, plan for failure isolation: when a link flaps at 400G, you need to know whether the cable, the optics cage, or the port lane mapping is at fault.

Decision checklist (ordered)

- Distance vs reach grade: match the cable length to the vendor’s specified 400G reach for your host’s port type (QSFP-DD/OSFP). If you are near the limit (for example, 2.5 m when the rated reach is 3 m), assume routing bends will consume part of the remaining margin.

- Switch and host compatibility: confirm the exact transceiver type is in the switch vendor’s compatibility list, including lane mapping and supported power class.

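- DOM and telemetry support: verify whether the module exposes Digital Optical Monitoring (DOM) or equivalent telemetry, and whether the switch accepts it without warnings. AOCs typically report temperature and supply voltage, and often optical power. Passive DACs usually expose only identification data from their EEPROM.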

- Operating temperature: check the switch airflow profile and cable-end heating. In high-density racks, a module that passes at room temperature can fail once the rack reaches full-load temperature.

- Budget and TCO: compare not only purchase price but also rework costs. AOC often reduces troubleshooting time when EMI is the culprit.

- Vendor lock-in risk: assess whether third-party cables are supported and whether they keep consistent firmware and initialization behavior over time.
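The checklist above can be encoded as a pre-deployment gate. The sketch below is illustrative only. `CablePlan`, its fields, the 80% reach headroom, and the 5°C thermal margin are all assumptions to tune against your vendor datasheets; they are not values from any standard. Telemetry is optional by default because passive DACs usually lack DOM.

```python
from dataclasses import dataclass


@dataclass
class CablePlan:
    # Hypothetical record; fill it from datasheets, compatibility lists, and site surveys.
    part_number: str
    length_m: float
    rated_reach_m: float        # vendor-rated 400G reach for this host port type
    host_form_factor: str       # e.g. "QSFP-DD" or "OSFP"
    cable_form_factor: str
    has_telemetry: bool         # DOM or equivalent; passive DACs usually report none
    max_temp_c: float           # upper operating temperature of the cable ends
    expected_temp_c: float      # measured or modeled cage temperature at full load


def checklist_failures(plan: CablePlan, compat_list: set[str],
                       reach_headroom: float = 0.8, temp_margin_c: float = 5.0,
                       require_telemetry: bool = False) -> list[str]:
    """Return the checklist items the plan fails; an empty list means it passes."""
    failures = []
    if plan.length_m > plan.rated_reach_m * reach_headroom:
        failures.append("reach: length is too close to the rated reach")
    if plan.cable_form_factor != plan.host_form_factor:
        failures.append("form factor: cable does not match the host cage")
    if plan.part_number not in compat_list:
        failures.append("compatibility: part is not on the switch list")
    if require_telemetry and not plan.has_telemetry:
        failures.append("telemetry: no DOM for automated health checks")
    if plan.expected_temp_c > plan.max_temp_c - temp_margin_c:
        failures.append("thermal: less than the required temperature headroom")
    return failures


dac = CablePlan("DAC-2.5M-EXAMPLE", 2.5, 3.0, "QSFP-DD", "QSFP-DD", False, 70.0, 55.0)
print(checklist_failures(dac, {"DAC-2.5M-EXAMPLE"}))
```

With these made-up inputs, only the reach check fires, mirroring the "2.5 m against a 3 m rating" edge case. The thresholds are the part to calibrate per vendor.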

Rule of thumb for common environments

If you have short runs in cool, clean cable pathways and you need lowest latency and simplest cabling, DAC is often the pragmatic choice. If you have dense EMI sources (power distribution, high current busbars, or poorly segregated trays) or you need more reach flexibility without adding a full patch panel, AOC can be the stability play. Either way, validate with a pilot batch before scaling to all top-of-rack ports.

Pro Tip: In 400G links, link up is not the same as link margin. Run sustained traffic for 30 to 60 minutes, at line rate if your platform supports it. Watch the FEC corrected and uncorrectable codeword counts and the CRC errors. A link that is “green” at idle can still fail under equalization stress after thermal soak.
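One way to turn "link up vs link margin" into a repeatable check is to sample cumulative counters during the soak and compare the corrected-codeword rate early and late in the run. In this sketch, the `CounterSample` field names are hypothetical; map them to whatever your platform exposes. The fail and marginal rules are also assumptions, not vendor or IEEE limits. Any uncorrectable codeword or CRC error fails the run, and a late corrected-codeword rate more than 2x the early rate counts as "marginal".

```python
from dataclasses import dataclass


@dataclass
class CounterSample:
    # Cumulative counters read at time t_s (seconds); field names are hypothetical.
    t_s: float
    fec_corrected: int
    fec_uncorrectable: int
    crc_errors: int


def corrected_rate(a: CounterSample, b: CounterSample) -> float:
    """Corrected codewords per second between two samples."""
    return (b.fec_corrected - a.fec_corrected) / (b.t_s - a.t_s)


def soak_verdict(samples: list[CounterSample], max_trend_ratio: float = 2.0) -> str:
    """Return 'fail', 'marginal', or 'pass' for a sustained-traffic soak run."""
    if len(samples) < 3:
        raise ValueError("need at least three samples")
    first, mid, last = samples[0], samples[len(samples) // 2], samples[-1]
    # Any post-FEC damage during the soak means the link has no usable margin.
    if last.fec_uncorrectable > first.fec_uncorrectable or last.crc_errors > first.crc_errors:
        return "fail"
    # A corrected-codeword rate that climbs as the cable ends heat up suggests thin margin.
    early, late = corrected_rate(first, mid), corrected_rate(mid, last)
    if late > 0 and late > early * max_trend_ratio:
        return "marginal"
    return "pass"
```

Feed it samples taken every few minutes across the soak. A "marginal" result is a cue to re-route or swap before scaling out, even though the link never dropped.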

Real-world deployment scenario: 3-tier data center leaf-spine

Consider a 3-tier data center leaf-spine design with 48-port 400G ToR switches in each rack, using 400G uplinks to spine switches. Each ToR has 16 uplinks (400G each), with physical distances ranging from 1.5 m to 8 m depending on rack row geometry. The environment includes alternating power rows with high current density and shared cable trays for some management cabling. In this scenario, teams often choose DAC for the 1.5 m to 3 m segments, where routing is direct and airflow is strong. They choose AOC for the 5 m to 8 m segments, where bend radius constraints and EMI coupling become more likely. A practical best practice is to stage a pilot first. Use 4 uplinks per switch, mix DAC and AOC per distance class, and confirm sustained throughput plus stable error counters before mass deployment.
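The pilot step can be planned mechanically. This sketch assumes a 3 m passive-DAC limit, `DAC_MAX_M`, chosen to match the 1.5 m to 3 m DAC segments above. It also assumes a hypothetical per-switch map of uplink names to measured lengths. Ports flagged as EMI-exposed are forced to AOC, following the rule of thumb in this article. The helper interleaves DAC and AOC picks so every pilot switch exercises both distance classes.

```python
from itertools import chain, zip_longest

DAC_MAX_M = 3.0  # assumed passive-DAC limit; use your vendor's rated reach


def cable_for(length_m: float, emi_exposed: bool) -> str:
    """Rule of thumb: DAC for short, clean runs; AOC for longer or EMI-exposed runs."""
    return "AOC" if emi_exposed or length_m > DAC_MAX_M else "DAC"


def pilot_uplinks(lengths_m: dict[str, float], per_switch: int = 4,
                  emi_ports: frozenset[str] = frozenset()) -> list[tuple[str, str]]:
    """Pick pilot uplinks for one switch, alternating DAC and AOC assignments."""
    ordered = sorted(lengths_m.items(), key=lambda item: item[1])
    dac = [(p, "DAC") for p, m in ordered if cable_for(m, p in emi_ports) == "DAC"]
    aoc = [(p, "AOC") for p, m in ordered if cable_for(m, p in emi_ports) == "AOC"]
    # zip_longest pads the shorter list with None; drop the padding after flattening.
    interleaved = [pick for pick in chain.from_iterable(zip_longest(dac, aoc)) if pick is not None]
    return interleaved[:per_switch]


uplinks = {"up1": 1.5, "up2": 2.0, "up3": 3.0, "up4": 5.0, "up5": 6.5, "up6": 8.0}
print(pilot_uplinks(uplinks))
# [('up1', 'DAC'), ('up4', 'AOC'), ('up2', 'DAC'), ('up5', 'AOC')]
```

Picking the shortest runs of each class first is an arbitrary choice. Sorting by descending length instead would pilot the runs closest to each reach limit, which stresses margin harder.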

Common mistakes and troubleshooting tips

400G PHY failures can look identical at the CLI level but have different root causes. The most effective troubleshooting starts with narrowing the fault domain: cable vs port vs optics cage vs environmental stress. Below are frequent failure modes and what to do next.

Link flaps after thermal load

Root cause: insufficient margin due to insertion loss, tight bends, or airflow mismatch; DAC is especially sensitive to routing and insertion loss, while AOC can be sensitive to handling and bend radius. Solution: re-route to reduce sharp bends, verify the exact cable length grade, and compare module temperature telemetry (or host warnings) during sustained load. If you can, swap with a known-good module of the same part number and length.

“Unsupported transceiver” or DOM warnings

Root cause: module type or revision not supported by the host firmware, or missing expected telemetry/EEPROM fields. Solution: confirm the transceiver interface is correct for the switch (QSFP-DD vs OSFP) and validate compatibility with the switch vendor’s list. Prefer OEM or officially supported third-party parts when stability matters more than cost.

High CRC errors that increase with EMI

Root cause: DAC susceptibility in noisy trays, or cable dressing that couples into power lines. Solution: segregate data and power pathways where possible, improve grounding and tray separation, and test AOC on the same run length. If AOC improves stability immediately, you have strong evidence of EMI coupling rather than a defective port.
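The DAC-versus-AOC swap is an A/B test, so normalize error counts by test duration before comparing. The helper below is hypothetical and assumes both runs carried the same traffic load on the same path. The 10x improvement threshold is an assumed cutoff, not a standard value.

```python
def errors_per_hour(errors: int, seconds: float) -> float:
    """Normalize a raw error count by test duration."""
    return errors / (seconds / 3600)


def points_to_emi(dac_errors: int, dac_seconds: float,
                  aoc_errors: int, aoc_seconds: float,
                  min_improvement: float = 10.0) -> bool:
    """True if the AOC run on the same path is dramatically cleaner than the DAC run."""
    dac_rate = errors_per_hour(dac_errors, dac_seconds)
    aoc_rate = errors_per_hour(aoc_errors, aoc_seconds)
    if dac_rate == 0:
        return False  # nothing to explain: the DAC run was clean
    if aoc_rate == 0:
        return True  # errors vanished entirely once the copper path was removed
    return dac_rate / aoc_rate >= min_improvement
```

A `False` result with similar error rates on both runs points back toward the host port or cage rather than EMI coupling.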

One direction works, the other fails (asymmetric symptoms)

Root cause: bad seating, partial connector engagement, or damage to the cable end during installation. Solution: power down safely if required, reseat both ends, inspect connector pins for contamination or bent contacts, and run a loopback or port swap test to isolate whether the host port or the cable assembly is at fault.
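The swap test reduces to a small decision table. This sketch assumes two swaps. In the first, a known-good cable goes on the suspect port. In the second, the suspect cable goes on a known-good port. The function name and result strings are illustrative.

```python
def isolate_fault(good_cable_on_suspect_port_ok: bool,
                  suspect_cable_on_good_port_ok: bool) -> str:
    """Map the outcome of two swap tests to the most likely fault domain."""
    if good_cable_on_suspect_port_ok and not suspect_cable_on_good_port_ok:
        return "cable assembly"  # the fault follows the cable
    if not good_cable_on_suspect_port_ok and suspect_cable_on_good_port_ok:
        return "host port or cage"  # the fault stays with the port
    if not good_cable_on_suspect_port_ok and not suspect_cable_on_good_port_ok:
        return "both suspect: check lane mapping, firmware, and environment"
    return "not reproduced: likely seating; reseat and re-run a soak"
```

The last branch matters in practice. A fault that disappears after both swaps often was a partially seated connector, and the reseat itself was the fix.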

Cost and ROI note: what to budget beyond the purchase price

Field pricing varies widely by vendor and quantity, but engineers should assume DAC is usually cheaper per link than AOC at short distances. The real ROI comes from reduced downtime and fewer truck rolls: a single 400G port outage during a critical maintenance window can cost far more than the cable price difference. TCO should include expected failure rates (often driven by handling, heat, and routing), replacement logistics, and the engineering time spent on PHY troubleshooting. For high-density deployments, a mixed strategy often yields the best balance: DAC where it fits perfectly, AOC where margins are tight.
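To put the TCO argument in numbers, a minimal per-link model adds the purchase price to the expected cost of failures over the service life. The inputs are placeholders, not field pricing or measured failure rates. Substitute your own quotes, RMA history, and labor rates, and treat `LinkCostInputs` and `link_tco` as hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class LinkCostInputs:
    # All values are placeholders for illustration, not real pricing or failure data.
    unit_price: float
    annual_failure_rate: float        # expected failures per link per year
    replacement_price: float          # spare part consumed per failure
    engineer_hours_per_failure: float
    hourly_rate: float
    outage_cost_per_failure: float    # business impact of the downtime


def link_tco(c: LinkCostInputs, years: int) -> float:
    """Purchase price plus expected failure costs over the service life."""
    per_failure = (c.replacement_price
                   + c.engineer_hours_per_failure * c.hourly_rate
                   + c.outage_cost_per_failure)
    return c.unit_price + c.annual_failure_rate * years * per_failure
```

Run `link_tco` for a DAC option and an AOC option on the same run. The comparison shows when a higher purchase price is offset by fewer or cheaper failures. The result is only as good as the failure-rate estimate, which is why pilot data matters.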

FAQ

What are the best practices for choosing between DAC and AOC at 400G?

Start with distance vs reach grade, then confirm switch compatibility and telemetry support. Validate using sustained traffic and monitor errors after thermal soak. If EMI is present or routing is constrained, AOC is often the stability-first choice.

Which is safer for high EMI environments: DAC or AOC?

AOC is generally more resilient because it uses optical isolation between ends, reducing susceptibility to electromagnetic coupling. DAC can still work well, but best practices include improved cable routing separation, careful grounding, and avoiding aggressive bends.

Do I need DOM for 400G DAC vs AOC?

Not for basic link operation, and passive DACs usually have no DOM at all. Still, DOM or equivalent telemetry is valuable for rapid isolation when links degrade. If your operations team relies on automated health checks, confirm the switch reads the module’s expected fields without warnings.

What troubleshooting steps should I try first when a 400G link will not come up?

Verify connector seating and inspect for physical damage, then confirm the exact module type and length grade match the vendor’s supported list. Next, swap the cable with a known-good part and compare host port behavior. Finally, check switch logs for lane training or transceiver compatibility messages.

Can third-party DAC or AOC reduce cost without increasing risk?

Sometimes, but best practices require compatibility validation with your specific switch model and firmware revision. If you cannot test in a pilot, OEM or officially supported modules reduce the probability of subtle initialization or telemetry mismatches.

How do I measure whether I have enough margin on a 400G link?

Monitor error counters (FEC corrected and uncorrectable codewords, CRC errors, or platform equivalents) under sustained line-rate workloads, not just at link-up. Temperature soak tests help reveal marginal links that only fail after the cable ends and optics cages reach steady-state heat.

If you want the next step, review 400G cabling and airflow best practices to align port density, airflow, and cable management with stable PHY margins. For deeper standards context, cross-check IEEE 802.3 guidance and your switch vendor’s transceiver compatibility notes.

Author bio: I have deployed and troubleshot 10G through 400G Ethernet links in production data centers, including pilot rollouts and thermal margin validation. I write field-focused guidance that prioritizes measurable link health, compatibility, and operational reliability.