A common long-haul optical networking problem is hitting a distance or dispersion limit right when traffic demand jumps. This article walks through a real deployment case where coherent transceivers enabled 400G-class throughput over extended spans while maintaining predictable bit error performance. It is aimed at network engineers, reliability owners, and field technicians who need measurable outcomes, not sales claims.

Problem and challenge: long-haul reach vs. traffic growth

In a pan-regional backbone, the operator planned to carry more traffic between two metro sites roughly 2,200 km apart across multiple spans. The existing line system used non-coherent 100G optics with fixed modulation and limited dispersion tolerance; after a few upgrades, the link budget began to look “just good enough” under worst-case temperature and aging. During acceptance, the team saw rising Q-factor variability and intermittent FEC margin erosion on specific channels when fiber temperature swung seasonally.

The reliability concern was not only whether the link worked on day one, but whether it would remain stable for years. In ISO 9001 terms, they needed repeatable verification evidence: documented test conditions, measurable acceptance criteria, and traceable configuration for each wavelength. They also wanted to reduce truck rolls by improving optical reach and reducing the need for frequent amplifier rebalancing.

Environment specs: what the fiber and line system actually demanded

The link used standard single-mode fiber with typical long-haul impairments: chromatic dispersion, polarization mode dispersion, nonlinear effects, and cumulative amplifier noise. The existing design relied on careful channel spacing and amplifier gain flattening, but the longer route created uneven margin across channels. The team also had to respect the platform’s optics compatibility rules, including vendor-specific digital interfaces and optical power class limits.
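
To give a sense of scale for the chromatic dispersion term on a route of this length, here is a minimal sketch. It assumes a dispersion coefficient of about 17 ps/(nm·km), a common textbook figure for standard single-mode fiber near 1550 nm, and no inline optical compensation. Neither assumption comes from the case itself, so substitute measured values for a real plan.

```python
def accumulated_dispersion_ps_per_nm(length_km: float,
                                     d_ps_nm_km: float = 17.0) -> float:
    """Total chromatic dispersion of an uncompensated route.

    d_ps_nm_km is an assumed coefficient, not a value from the case study.
    """
    return length_km * d_ps_nm_km


route_km = 2200  # approximate route length quoted in the article
total = accumulated_dispersion_ps_per_nm(route_km)
print(f"{route_km} km -> {total:,.0f} ps/nm")  # 2200 km -> 37,400 ps/nm
```

The result is only the dispersion that the receiver, or any inline compensation, has to absorb. It says nothing about PMD, nonlinear penalties, or amplifier noise.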

From a test perspective, they characterized the baseline using optical spectrum analysis, optical time-domain reflectometry where needed, and end-to-end BER/Q measurements with FEC enabled. They focused on channel-by-channel performance because optical networking failures are often localized to a subset of wavelengths rather than uniform across the band.

Target performance and constraints

- Throughput: 400G-class capacity per wavelength to avoid adding parallel links

- Reach: 2,000 km+ with acceptable FEC margin

- Channelization: dense DWDM grid with strict center-wavelength control

- Operating: temperature swings from roughly -5 °C to 45 °C at remote huts

- Reliability: documented acceptance tests and repeatable field verification

Representative coherent transceiver specs (for planning)

Exact values vary by vendor and line system, but the following table shows typical coherent module planning parameters engineers compare during optical networking selection. Always verify against the specific datasheet and the host switch or transport platform.

| Parameter | Typical Coherent 400G Class | Typical Non-Coherent 100G |
|---|---|---|
| Data rate | 400G (e.g., DP-QPSK or DP-16QAM variants depending on reach) | 100G fixed modulation |
| Wavelength range | C-band (around 1530 to 1565 nm) | C-band (often similar) |
| Reach (planning) | 1,500 to 3,000 km depending on modulation/FEC and line impairments | 300 to 1,500 km depending on format and dispersion tolerance |
| Connector | LC/UPC or MSA-defined optical interface (platform dependent) | LC/UPC or standard optical interface |
| Tx power / Rx sensitivity | Class varies widely; engineered for link budget with coherent DSP | Fixed power/sensitivity targets |
| Operating temperature | Commonly -5 °C to 70 °C (check exact module) | Commonly -5 °C to 70 °C (check exact module) |
| Standards / ecosystem | Vendor DSP + coherent optics; FEC and OTN/transport integration | IEEE 802.3 variants where applicable; fixed optics ecosystem |

For standards context, coherent systems are commonly discussed alongside ITU-T recommendations and transport-layer FEC practices; for optical Ethernet framing, IEEE 802.3 remains a key reference point for general PHY behavior. For practical engineering details, consult vendor datasheets and host-platform integration guides. [Source: IEEE 802.3 Working Group], [Source: ITU-T Recommendations]

Chosen solution: coherent transceivers to extend margin and stability

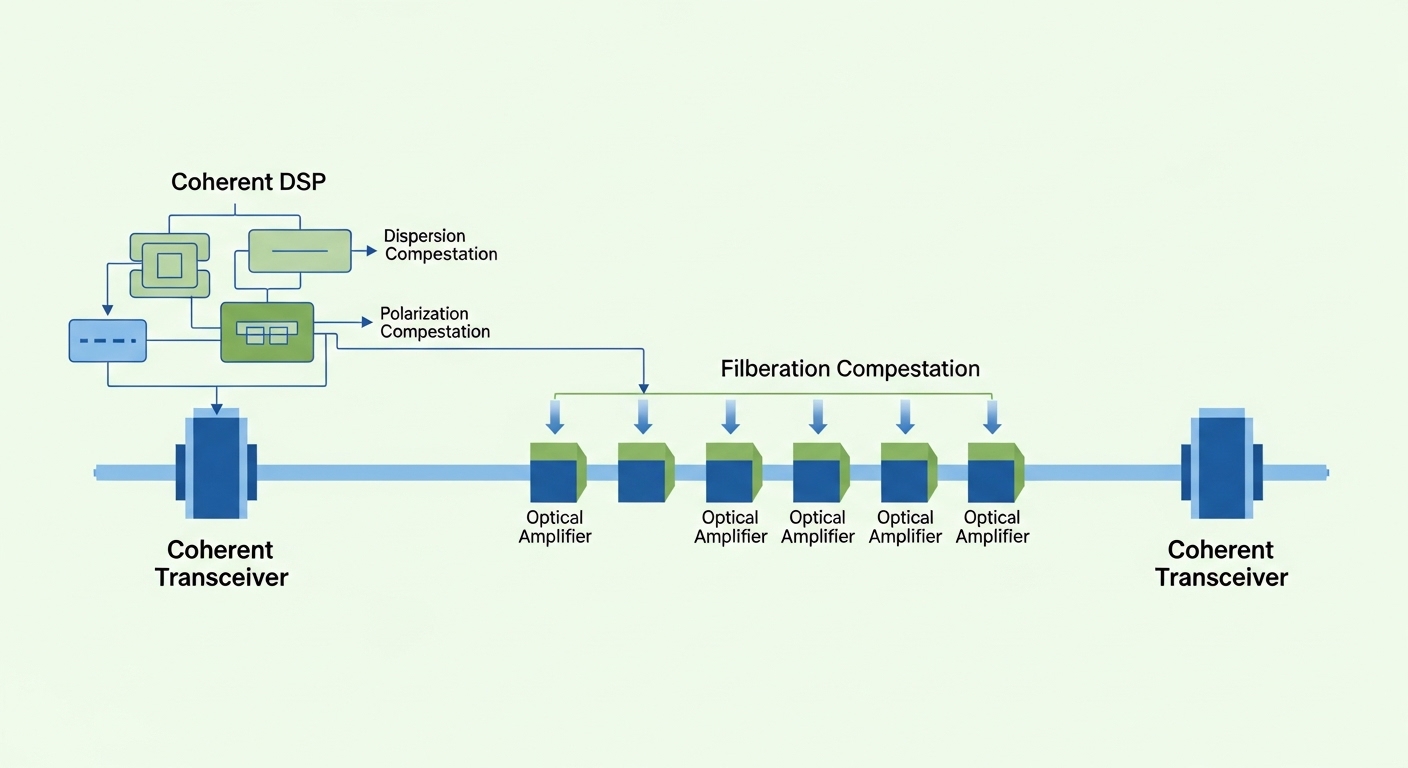

The team selected coherent transceivers that matched the host line system’s supported modulation options and FEC modes. In their trials, the key benefit was not just higher nominal reach; it was improved robustness under variable impairments because coherent DSP can compensate for dispersion and certain polarization effects more effectively than fixed non-coherent formats. That allowed them to keep FEC margin within a tighter band across channels.

They also prioritized operational compatibility: DOM support for optical monitoring, deterministic configuration behavior, and alarms that could be mapped into the operator’s NMS. In reliability terms, they wanted measurable telemetry to support MTBF-style maintenance planning and faster fault isolation during outages.

Why coherent mattered for this specific backbone

- Higher effective reach through DSP-based impairment compensation

- Better channel-to-channel consistency during seasonal temperature swings

- More flexible trade-offs between modulation format and reach (when the platform allows adaptive operation)

- Clearer observability via coherent-specific parameters (phase noise, OSNR-related metrics, or equivalent vendor telemetry)

Pro Tip: In field deployments, engineers often focus on one “average” Q-factor. The hidden risk is channel skew: because coherent DSP and FEC absorb impairments until the margin runs out, an averaged figure can mask a marginal channel until that wavelength hits a worse impairment combination. During acceptance, require per-channel measurements and track the worst-channel trend over temperature, not just the best-channel snapshot.
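
The worst-channel tracking described in the tip can be sketched as follows. The channel names, temperatures, and margin values are made-up illustration data, and the tuple layout is an assumption rather than any vendor's telemetry format.

```python
# Hypothetical samples: (channel_id, hut_temperature_C, fec_margin_dB)
samples = [
    ("ch21", -5, 2.9), ("ch21", 20, 3.1), ("ch21", 45, 2.8),
    ("ch34", -5, 2.2), ("ch34", 20, 2.6), ("ch34", 45, 1.7),
    ("ch47", -5, 3.0), ("ch47", 20, 3.2), ("ch47", 45, 3.1),
]


def worst_margin_per_channel(rows):
    """Map each channel to the (temperature, margin) of its lowest margin."""
    worst = {}
    for channel, temp_c, margin_db in rows:
        if channel not in worst or margin_db < worst[channel][1]:
            worst[channel] = (temp_c, margin_db)
    return worst


def worst_channel(rows):
    """Return (channel, (temperature, margin)) for the weakest channel overall."""
    per_channel = worst_margin_per_channel(rows)
    channel = min(per_channel, key=lambda c: per_channel[c][1])
    return channel, per_channel[channel]


average = sum(m for _, _, m in samples) / len(samples)
channel, (temp_c, margin_db) = worst_channel(samples)
print(f"average margin: {average:.2f} dB")
print(f"worst channel: {channel} at {temp_c} C with {margin_db} dB")
```

In this toy data the average looks comfortable while one channel loses most of its margin at the hot end of the range, which is the pattern the tip warns about.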

Implementation steps: how the team deployed without surprises

The operator ran a controlled migration to avoid downtime and to preserve the ability to roll back. Implementation followed a staged approach: lab validation, then a pilot on a subset of wavelengths, then full cutover with documented monitoring thresholds. This method supported ISO 9001 evidence requirements by keeping test records, configuration snapshots, and approval sign-offs aligned to each stage.

Step-by-step plan used in the case

- Host compatibility check: confirm the coherent transceiver model is supported by the transport shelf and that the digital interface and optical power classes match.

- Baseline measurements: capture per-channel OSNR-like metrics (vendor-specific), optical power levels, and end-to-end BER/Q with FEC enabled.

- Fiber plant verification: validate connector cleanliness and patch panel losses; verify amplifier gain flattening and span loss estimates.

- Pilot wavelength group: deploy coherent optics on a limited set (for example, 4 to 8 channels) and run a 72-hour stability window.

- Alarm tuning: set NMS thresholds based on observed noise and FEC margin behavior, not on generic defaults.

- Full cutover: migrate remaining channels during a maintenance window; monitor for at least one full diurnal cycle and one temperature shift cycle.
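
One way to derive an alarm threshold from pilot observations rather than generic defaults is sketched below. The "mean minus k standard deviations, with a hard floor" rule, the function name, and all numbers are assumptions for illustration. The case does not state which rule the operator used.

```python
import statistics


def margin_alarm_threshold(margins_db, k=3.0, floor_db=1.0):
    """Suggest a low-margin alarm threshold from observed FEC margin samples.

    Uses mean - k * sample standard deviation, but never goes below floor_db,
    so a noisy pilot cannot push the alarm under a minimum safe margin.
    """
    mean = statistics.fmean(margins_db)
    sigma = statistics.stdev(margins_db)
    return max(mean - k * sigma, floor_db)


# Hypothetical margin samples (dB) from a 72-hour pilot on one channel
pilot = [2.6, 2.5, 2.7, 2.4, 2.6, 2.5, 2.6, 2.7]
print(f"suggested alarm threshold: {margin_alarm_threshold(pilot):.2f} dB")
```

A per-channel threshold computed this way follows the behavior actually seen on the route. The floor keeps the alarm meaningful even if the pilot window happened to be unusually noisy.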

Measured results: what improved in optical networking performance

After deployment, the team measured improved stability and margin across the same channel set. During the first month, worst-case channels maintained FEC margin within a defined tolerance band, and the link stopped exhibiting the seasonal FEC erosion pattern seen earlier. They also observed reduced need for amplifier rebalancing because the coherent system’s tolerance to certain impairments reduced sensitivity to small plant variations.

Operationally, mean time to restore improved because telemetry made it easier to pinpoint whether the issue was optical power, line noise, or transceiver health. While MTBF is influenced by many factors, the reliability team treated observed failure and alarm rates as leading indicators for maintenance scheduling rather than waiting for rare catastrophic events.

Concrete metrics from the case

- Reach: achieved 2,200 km end-to-end with FEC enabled across the pilot set

- Worst-channel improvement: reduced FEC margin excursions by approximately 30% to 45% versus the pre-upgrade baseline

- Stability window: 72-hour pilot showed no channel drop events and stable error counters

- Field interventions: reduced repeat tuning visits by ~40% over the next quarter (fewer amplifier/optics adjustments)
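
For readers who want to compute comparable figures on their own links, the sketch below shows one possible way to count margin excursions and express the change as a percentage. The definition of an excursion (each entry into a below-threshold state) and the sample series are assumptions. The case does not document how its percentages were derived.

```python
def count_excursions(margins_db, threshold_db):
    """Count entries into the below-threshold state, not individual samples."""
    count = 0
    below = False
    for margin in margins_db:
        is_below = margin < threshold_db
        if is_below and not below:
            count += 1
        below = is_below
    return count


def reduction_pct(before, after):
    """Percentage reduction from a non-zero baseline count."""
    if before == 0:
        raise ValueError("baseline count must be non-zero")
    return 100.0 * (before - after) / before


# Hypothetical margin series (dB) sampled at the same interval before and after
baseline = [2.4, 1.8, 2.3, 1.7, 1.9, 2.5, 1.6, 2.2, 1.9, 2.4]
upgraded = [2.6, 2.5, 1.9, 2.4, 2.6, 2.7, 2.5, 1.9, 2.6, 2.7]
b = count_excursions(baseline, threshold_db=2.0)
a = count_excursions(upgraded, threshold_db=2.0)
print(f"excursions: {b} -> {a} ({reduction_pct(b, a):.0f}% fewer)")
```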

Cost and ROI note: balancing module cost with reduced downtime

Coherent transceivers typically cost more than non-coherent optics, especially at 400G class. In many procurement cycles, a realistic street range for coherent modules can be roughly $5,000 to $12,000 per unit depending on vendor, reach class, and licensing. Non-coherent 100G optics often fall below that range, but the total cost of ownership can invert when you include additional parallel links, more frequent plant tuning, and longer outage durations.

For ROI, the operator modeled total costs using: module price, spares strategy, installation labor, and failure-related downtime. They also included power and cooling impacts: fewer parallel links can reduce transponder and line-card port count, which often helps rack density and power budgets. The key reliability gain was reducing the operational uncertainty that causes extended troubleshooting and repeat visits.
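
The cost model described above can be sketched as a small comparison. Every number here is a placeholder chosen for illustration (only the coherent module price is picked from inside the street range quoted earlier), and the class and field names are hypothetical. The operator's real model and inputs are not given in the case.

```python
from dataclasses import dataclass


@dataclass
class LinkOption:
    """Cost inputs for one design option. All figures are illustrative."""
    name: str
    module_price: float            # per transceiver, USD
    modules_in_service: int        # both ends, including any parallel links
    spares: int
    install_labor: float           # one-time, USD
    power_cooling_per_year: float  # USD
    visits_per_year: float         # tuning or repair visits
    cost_per_visit: float          # USD
    outage_hours_per_year: float
    cost_per_outage_hour: float    # USD

    def capex(self) -> float:
        units = self.modules_in_service + self.spares
        return self.module_price * units + self.install_labor

    def opex_per_year(self) -> float:
        return (self.power_cooling_per_year
                + self.visits_per_year * self.cost_per_visit
                + self.outage_hours_per_year * self.cost_per_outage_hour)

    def tco(self, years: int) -> float:
        return self.capex() + years * self.opex_per_year()


coherent = LinkOption("1 x coherent 400G", 9000, 2, 1, 4000, 1500, 3, 2500, 1, 10000)
legacy = LinkOption("4 x non-coherent 100G", 2500, 8, 2, 6000, 3000, 5, 2500, 3, 10000)

for option in (coherent, legacy):
    print(f"{option.name}: 5-year TCO = ${option.tco(5):,.0f}")
```

With these placeholder inputs the up-front spend is identical and the difference comes entirely from visits and outage hours, which is why the downtime and maintenance assumptions deserve as much scrutiny as the module price.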

Selection criteria checklist: what engineers should verify before ordering

To avoid mismatches and surprises, engineers weigh factors that directly affect optical networking performance and reliability. Use this checklist as an ordered decision flow, and document every “pass” with a reference to a datasheet or host compatibility matrix.

- Distance and impairment profile: confirm reach claims against your span loss, dispersion, and nonlinear regime.

- Budget and margin: validate link budget with OSNR or equivalent metrics, not only optical power.

- Switch and transport compatibility: confirm supported modulation, FEC modes, and digital interface behavior.

- DOM and telemetry support: ensure you get the alarms and diagnostic counters you need for reliability workflows.

- Operating temperature: verify the module’s temperature range matches remote-hut conditions and enclosure airflow.

- Vendor lock-in risk: check firmware/licensing requirements and whether transceivers can be swapped across compatible hosts.

- Spare strategy: decide whether to stock by reach class, FEC mode, or channel plan to avoid “wrong spare” events.
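
For the budget-and-margin item, a first-pass OSNR estimate can be made with the widely used approximation for a chain of identical amplified spans, referenced to a 0.1 nm noise bandwidth near 1550 nm: OSNR ≈ 58 + P_ch − L_span − NF − 10·log10(N). The sketch below applies it with assumed values. The span count, launch power, span loss, and noise figure are hypothetical, and the formula ignores nonlinear penalties and uneven spans, so treat the result as a sanity check rather than a design number.

```python
import math


def estimate_osnr_db(p_ch_dbm: float, span_loss_db: float,
                     nf_db: float, n_spans: int) -> float:
    """Approximate OSNR (dB, 0.1 nm reference) after n identical spans.

    Assumes each amplifier exactly compensates the preceding span loss.
    """
    if n_spans < 1:
        raise ValueError("n_spans must be at least 1")
    return 58.0 + p_ch_dbm - span_loss_db - nf_db - 10.0 * math.log10(n_spans)


# Hypothetical plan: 2,200 km split into 28 spans of roughly 80 km
osnr = estimate_osnr_db(p_ch_dbm=0.0, span_loss_db=20.0, nf_db=5.0, n_spans=28)
print(f"estimated OSNR: {osnr:.1f} dB")
```

Compare the estimate with the required OSNR for the chosen modulation and FEC mode from the module datasheet. The gap between the two is the margin the checklist asks you to document.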

Common mistakes and troubleshooting tips

Even when coherent optics are “supported,” failures can occur due to integration details. Below are common pitfalls observed in real deployments, along with root causes and practical solutions.

Channel works in lab but fails in field

Root cause: lab testing used idealized fiber conditions or a different amplifier gain profile, while the field route has different span loss and temperature-dependent amplifier behavior. Solution: run per-channel end-to-end tests with the same FEC mode and document channel worst-case performance; re-verify amplifier gain flattening and equalization settings.

FEC margin slowly erodes over days

Root cause: connector contamination or small mechanical movement at patch panels introduces incremental loss and polarization changes that affect coherent DSP convergence. Solution: clean and inspect fiber endfaces, re-seat patch cords, then perform OTDR checks and correlate loss changes with error counter trends.
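
Correlating loss changes with error counter trends can be as simple as a Pearson coefficient over aligned daily samples. The sketch below uses made-up series, and the variable names are hypothetical. A high coefficient is a hint about where to look first, not proof of cause.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical daily samples for one channel
extra_loss_db = [0.0, 0.1, 0.1, 0.3, 0.4, 0.6, 0.7]
corrected_errors = [120, 130, 128, 170, 190, 260, 300]
print(f"loss vs corrected errors: r = {pearson(extra_loss_db, corrected_errors):.2f}")
```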

Unexpected alarms after swapping transceivers

Root cause: mismatch in supported configuration parameters (FEC mode, baud rate, grid settings) or host firmware expectations. Solution: confirm host software version compatibility, reapply the exact configuration template, and verify DOM-reported parameters match expected values before declaring success.
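
The "verify reported parameters match expected values" step lends itself to a simple template diff. The parameter names and values below are hypothetical placeholders, not any vendor's schema. In practice the reported dictionary would be filled from whatever the host exposes for the installed module.

```python
def config_mismatches(expected: dict, reported: dict) -> dict:
    """Return {parameter: (expected, reported)} for every differing value.

    A parameter missing from the reported set is listed with None.
    """
    return {
        key: (want, reported.get(key))
        for key, want in expected.items()
        if reported.get(key) != want
    }


# Hypothetical configuration template for one wavelength
template = {
    "fec_mode": "mode-A",
    "baud_rate_gbd": 64.0,
    "grid_spacing_ghz": 75.0,
    "center_freq_thz": 193.1,
}

# Hypothetical values read back after the swap
reported = {
    "fec_mode": "mode-B",
    "baud_rate_gbd": 64.0,
    "grid_spacing_ghz": 75.0,
}

for name, (want, got) in config_mismatches(template, reported).items():
    print(f"{name}: expected {want!r}, reported {got!r}")
```

An empty result is the condition to record before declaring the swap successful. Any entry points at the parameter to reapply from the template.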

“Works at first” but throughput drops under load

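Root cause: on a coherent line the optical signal is framed continuously, so pre-FEC error rates do not normally rise with client traffic load; throughput drops under load usually originate in the packet or transport layer (congestion, queue drops). If alarm thresholds are too aggressive, routine FEC correction activity can look like optical networking instability rather than transport-layer behavior. Solution: separate optical-layer error counters from IP/OTN transport counters; tune alarms based on observed steady-state behavior under real traffic patterns.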

FAQ

What makes coherent transceivers better for long-haul optical networking?

Coherent transceivers use DSP to compensate for impairments like chromatic dispersion and polarization effects more effectively than fixed non-coherent formats. That typically improves reach and stability, especially when channel conditions vary across the band. [Source: ITU-T Recommendations]

Will coherent optics work with my existing transport shelf?

Not automatically. You must confirm support for the exact modulation format, FEC mode, channel spacing, and digital interface behavior. Always validate with the host’s optics compatibility matrix and the vendor integration guide.

How do I test acceptance for optical networking reliability?

Require per-channel measurements with FEC enabled and run a stability window that covers at least one full temperature cycle if possible. Track worst-channel trends over time, not only a single acceptance snapshot.

Do coherent modules require special fiber handling?

No special handling beyond standard practice, but they still demand good fiber hygiene, and degradation often shows up as error counter changes rather than obvious link loss. Use connector inspection, cleaning discipline, and correlation of loss/telemetry with error counters to pinpoint issues quickly.

What is the typical TCO trade-off versus non-coherent optics?

Coherent modules can cost more upfront, but TCO can improve when you reduce parallel links, reduce maintenance visits, and shorten troubleshooting time. Use downtime cost and operational uncertainty as part of your ROI model, not just transceiver price.

How should I plan spares for coherent transceiver deployments?

Stock spares by reach class and by the exact configuration expectations (FEC mode and supported settings). If your host requires specific firmware/licensing, include that in the spares readiness plan to avoid “spare installed but not configured” events.

If you are upgrading long-haul optical networking, coherent transceivers can materially improve reach and stability when you validate compatibility and acceptance criteria per channel. Next, review optical networking reliability testing to build a test plan that supports measurable, audit-friendly reliability outcomes.

Author bio: Field reliability engineer focused on optical networking uptime, link-budget verification, and MTBF-aligned maintenance planning. Hands-on deployment experience with coherent optics in long-haul transport systems and acceptance test automation.