Step-by-step: prerequisites for a 400G vs 800G decision

If you are planning a leaf-spine refresh, the transceiver choice can swing both capex and ongoing power. This article helps data center engineers compare 400G optics and switching options against 800G from a cost and performance standpoint, including how to validate compatibility before you order. You will get an implementation-style checklist, plus troubleshooting for the most common optics and link issues.

Update date: 2026-05-01. Primary references include IEEE Ethernet specifications, ANSI/TIA fiber standards, and vendor transceiver datasheets; see the IEEE 802.3 standards page and the ANSI/TIA standards portal.

Prerequisites (what you should have before comparing)

- Switch model numbers for both the current and target platforms (for example, a leaf switch with 400G ports vs an 800G line card), plus the vendor optics compatibility matrix.

- Fiber plant inventory: MPO/MTP counts, fiber type (OM4 vs OS2), patch panel loss budget, and measured link attenuation.

- Power and cooling baselines: rack power draw, inlet temperature, and expected IT load increase over 12 to 36 months.

- Traffic model: average and peak utilization, oversubscription ratio, and expected growth cadence.

Map your application to the throughput you actually need

Engineers often jump to port speeds, but the real requirement is workload throughput and congestion behavior. In many modern leaf-spine fabrics, the bottleneck is oversubscription at aggregation or the fan-in of spine uplinks, not the raw port rate alone. Start by measuring current utilization per ToR uplink and per spine link, then project growth.

For example, in a leaf-spine fabric with ToR switches feeding spines, you may see 55% average utilization with 85% peaks during batch windows. If the upgrade goal is to reduce packet loss and shorten queueing delay, you may get most of the benefit by moving to 400G uplinks while keeping the same port count and line card density. Only consider 800G when your traffic profile or port-count constraints make doubled per-port capacity necessary.

Expected outcome: a quantified “capacity gap” in Gb/s at each tier, plus a decision whether you need more ports or higher per-port bandwidth.
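One way to turn the measurements above into a capacity gap is sketched below. This is a minimal illustration, not a sizing tool: the `TierLoad` structure, the 70% target peak utilization, and the growth figures in the usage lines are all assumptions you would replace with your own measurements.

```python
from dataclasses import dataclass


@dataclass
class TierLoad:
    """Measured load for one tier, for example all uplinks on one leaf."""
    name: str
    link_count: int          # number of parallel links in the tier
    link_speed_gbps: float   # current per-link speed
    peak_utilization: float  # measured peak, 0.0 to 1.0


def capacity_gap_gbps(tier: TierLoad, annual_growth: float, years: float,
                      target_peak_utilization: float = 0.7) -> float:
    """Extra Gb/s needed so the projected peak stays under the target.

    Returns 0.0 when the current capacity is already sufficient.
    The 0.7 default is an assumed design target, not a standard.
    """
    current_capacity = tier.link_count * tier.link_speed_gbps
    peak_now = current_capacity * tier.peak_utilization
    projected_peak = peak_now * (1.0 + annual_growth) ** years
    required_capacity = projected_peak / target_peak_utilization
    return max(0.0, required_capacity - current_capacity)


def oversubscription_ratio(downlink_gbps: float, uplink_gbps: float) -> float:
    """Ratio of server-facing bandwidth to fabric-facing bandwidth."""
    return downlink_gbps / uplink_gbps


if __name__ == "__main__":
    # Hypothetical leaf: 8 x 100G uplinks peaking at 85%, 30% yearly growth.
    leaf = TierLoad("leaf-uplinks", link_count=8, link_speed_gbps=100.0,
                    peak_utilization=0.85)
    gap = capacity_gap_gbps(leaf, annual_growth=0.30, years=2)
    print(f"{leaf.name}: capacity gap {gap:.0f} Gb/s")
    print(f"oversubscription {oversubscription_ratio(4800.0, 800.0):.1f}:1")
```

Running the same function per tier shows whether the gap is best closed with more ports or with higher per-port bandwidth.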

Compare transceiver and link-layer performance constraints

Both 400G and 800G links are built from multiple parallel lanes rather than a single serial channel, so lane count and per-lane rate determine the optics, connectors, and fiber you need. The key performance question is whether your optics and fiber plant can sustain the required reach with acceptable margin for temperature, aging, and connector loss. For Ethernet, confirm that the transceiver’s electrical interface matches the switch port (lane count and per-lane rate; these speeds use PAM4 signaling) and that the switch firmware enables the port mode you plan to use.

Practically, mature short-reach 400G optics are widely available in QSFP-DD or OSFP form factors, depending on vendor. 800G options exist, but they can draw more power per port and may require specific line card generations. Your goal is to confirm that the link budget and vendor interoperability are proven for your exact switch model.

Technical specifications comparison (representative optics)

The table below illustrates typical short-reach and long-reach optical characteristics you might compare when planning 400G and 800G deployments. Always confirm exact values, including lane count and the fiber count behind each MPO/MTP connector, in the vendor datasheet for the specific part number and temperature grade.

| Parameter | 400G SR8 (example) | 800G SR16 (example) | 400G LR4 (example) | 800G LR8 (example) |
|---|---|---|---|---|
| Data rate | 400G Ethernet (8 lanes) | 800G Ethernet (16 lanes) | 400G Ethernet (4 lanes) | 800G Ethernet (8 lanes) |
| Wavelength | 850 nm nominal | 850 nm nominal | ~1310 nm nominal | ~1310 nm nominal |
| Typical reach | Up to 100 m on OM4 with MPO | Up to 100 m on OM4 with MPO | Up to 10 km on OS2 | Up to 10 km on OS2 (varies) |
| Connector type | MPO/MTP (8-fiber) | MPO/MTP (16-fiber) | LC duplex | LC duplex (varies by implementation) |
| Operating temp | 0 to 70 °C commonly (check datasheet) | 0 to 70 °C commonly (check datasheet) | 0 to 70 °C commonly (check datasheet) | 0 to 70 °C commonly (check datasheet) |
| Typical power | Often ~6 to 12 W class (depends on vendor) | Often higher per port; check line card budget | Often ~5 to 10 W class | Often higher per port; check line card budget |

During qualification you may still encounter legacy part numbers such as Cisco SFP-10G-SR, but for high-speed Ethernet you will typically use vendor-supported 400G SR8 and 800G SR16 transceivers rather than mixing unrelated families. In third-party optics ecosystems, you will commonly see FS.com short-reach families (verify the exact 400G/800G designation) and Finisar part families for multi-lane optics, but compatibility is still switch-specific. When evaluating, verify the exact short-reach type, lane count, and DOM support.

For part research, see the FS.com transceiver catalog and the Lumentum and former Finisar optics documentation.

Pro Tip: In dense MPO/MTP environments, the limiting factor is often not the nominal reach on paper but connector cleanliness and patch panel insertion loss. Before you decide on 400G vs 800G, have your contractor re-measure end-to-end loss with a calibrated OLTS, using an OTDR to locate any high-loss events, and document the margin at the highest expected temperature.

Run a cost model that includes optics, line cards, and power

A fair comparison between 400G and 800G must include more than transceiver unit price. 800G upgrades can require line card changes, different optics types (for example, higher-fiber-count MPO), and sometimes different cooling headroom due to higher per-port power. Meanwhile, 400G may allow you to reuse more of your existing optics inventory and patching strategy, especially if your current plant is already built around MPO trunks for 100G/200G and then scaled to 400G.

In typical enterprise and mid-market builds, 400G SR8 optics are usually priced in the hundreds of dollars per module, with third-party parts at the lower end and OEM-branded parts higher, while 800G SR16 can be materially more expensive. Treat these as rough orders of magnitude and use current quotes for your model. In larger hyperscale procurement, contract pricing can reduce the spread, but the line card and integration costs often dominate. For TCO, include failure rates and RMA logistics: third-party modules can be cost-effective, but only if you have validated switch compatibility and a clear warranty path.

Expected outcome: a 3-year and 5-year TCO estimate with power cost, optical spares, and refresh cycles.
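A TCO estimate of this kind reduces to a small amount of arithmetic. The sketch below is an illustration under stated assumptions: the function names, the 10% spare ratio, the PUE of 1.5, and every figure in the usage lines are placeholders, not market prices or measured values.

```python
def annual_power_cost(watts: float, usd_per_kwh: float, pue: float = 1.5) -> float:
    """Yearly electricity cost for a constant IT load, scaled by facility PUE."""
    hours_per_year = 24 * 365
    return watts / 1000.0 * hours_per_year * usd_per_kwh * pue


def tco(optics_count: int, optic_unit_usd: float, line_card_usd: float,
        cabling_retrofit_usd: float, watts_total: float, usd_per_kwh: float,
        years: int, spare_ratio: float = 0.1, pue: float = 1.5) -> float:
    """Capex (optics plus spares, line cards, cabling) plus power opex."""
    optics_capex = optics_count * (1.0 + spare_ratio) * optic_unit_usd
    capex = optics_capex + line_card_usd + cabling_retrofit_usd
    opex = annual_power_cost(watts_total, usd_per_kwh, pue) * years
    return capex + opex


if __name__ == "__main__":
    # Placeholder inputs only; substitute quotes and measured power draw.
    for years in (3, 5):
        option_a = tco(optics_count=64, optic_unit_usd=500.0, line_card_usd=0.0,
                       cabling_retrofit_usd=0.0, watts_total=800.0,
                       usd_per_kwh=0.12, years=years)
        option_b = tco(optics_count=32, optic_unit_usd=1500.0, line_card_usd=40000.0,
                       cabling_retrofit_usd=10000.0, watts_total=1100.0,
                       usd_per_kwh=0.12, years=years)
        print(f"{years}-year TCO: option A {option_a:,.0f} USD, option B {option_b:,.0f} USD")
```

Keeping power as a separate function makes it easy to see how much of the difference between options comes from opex rather than from module price.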

Cost and ROI note (realistic ranges and drivers)

- Optics capex: OEM optics typically cost more than third-party, but they reduce compatibility risk and downtime during deployments.

- Integration capex: 800G can trigger additional line card and backplane changes; that cost can exceed optics savings.

- Power and cooling: If 800G increases per-rack power by even a few hundred watts, the operational cost can outweigh the transceiver premium difference over time.

- Spare strategy: Higher-speed optics may have longer lead times; stock levels should match your deployment timeline.

For authority on fiber loss measurement practices and test methods, see the ANSI/TIA standards portal, vendor OLTS/OTDR guidance in datasheets and application notes, and the IEEE 802 working group resources.

Validate fiber plant reach using link budgets and connector loss

Reach decisions should be grounded in measured loss, not only module “up to” claims. For short-reach, test your MPO/MTP trunks end-to-end and confirm that insertion loss remains below the transceiver’s supported budget with margin. For long-reach, verify OS2 path loss, splice quality, and connector cleanliness.

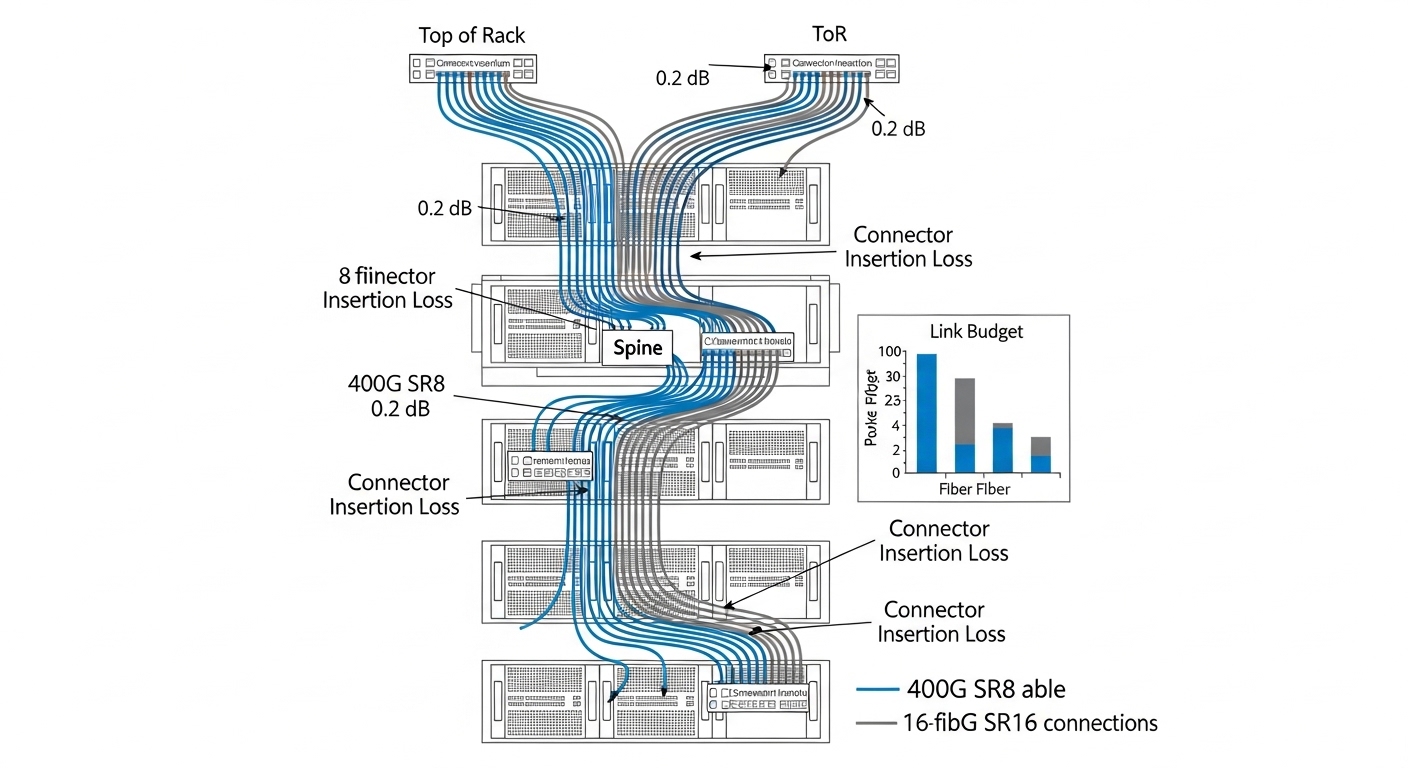

In many leaf-spine designs, your most common optics are short-reach SR types between ToR and spine within the same row or suite. If you are using 400G, you may connect multiple 8-fiber MPO links that match your existing trunking approach. 800G SR solutions may require 16-fiber MPO/MTP cabling or different breakout rules, which can increase retrofit labor and risk if your patch layout was not designed for it.

Expected outcome: confirmed that both 400G and 800G options can meet your actual distances with margin, or an explicit reason to avoid one.
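The margin check described in this step can be written down as a short calculation. The following sketch assumes hypothetical helper names and a 0.5 dB reserve for aging and temperature; the module budget in the usage lines is a placeholder, so take the real channel insertion loss limit from the transceiver datasheet.

```python
def estimated_loss_db(length_m: float, fiber_db_per_km: float,
                      connector_pairs: int, db_per_connector_pair: float,
                      splices: int = 0, db_per_splice: float = 0.0) -> float:
    """Paper estimate of channel loss; measured loss should replace it when available."""
    fiber_loss = (length_m / 1000.0) * fiber_db_per_km
    return fiber_loss + connector_pairs * db_per_connector_pair + splices * db_per_splice


def link_margin_db(loss_db: float, module_budget_db: float,
                   reserve_db: float = 0.5) -> float:
    """Remaining margin after loss and an assumed reserve for aging and temperature."""
    return module_budget_db - loss_db - reserve_db


def verdict(margin_db: float) -> str:
    """A negative margin means the link does not meet the budget with reserve."""
    return "pass" if margin_db >= 0 else "fail"


if __name__ == "__main__":
    measured_loss_db = 2.2          # example measured value
    budget_from_datasheet_db = 3.0  # placeholder, not a real module value
    margin = link_margin_db(measured_loss_db, budget_from_datasheet_db)
    print(f"margin {margin:.2f} dB -> {verdict(margin)}")
```

Run it once per candidate module type so that each option ends with an explicit pass or a documented reason to avoid it.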

Confirm switch compatibility, DOM behavior, and firmware port modes

Even when optics are “standard,” compatibility is determined by the switch vendor’s port mode, lane mapping, and supported transceiver list. Before procurement, test with the exact part numbers you plan to deploy and confirm that DOM telemetry (temperature, laser bias/current, received power, and vendor-specific flags) works in your monitoring stack.

For example, if your monitoring expects a particular DOM threshold format, a third-party module might expose different alarm semantics even if the link comes up. Also confirm that the switch firmware supports the interface (for example, breakout modes or lane reversal behaviors) for the specific transceiver. If you plan a mixed environment, validate that optics are not inadvertently forced into an unsupported speed/encoding mode.

Expected outcome: a compatibility sign-off including “link up, stable, telemetry visible, and alarms behave as expected.”
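A sign-off like this is easier to audit when it is recorded as data. The sketch below is one possible shape, assuming a hypothetical `PilotResult` record and canonical DOM field names chosen for illustration; it does not reflect any vendor's telemetry schema.

```python
from dataclasses import dataclass
from typing import Dict, List

# Assumed canonical field names; map your platform's names onto these.
REQUIRED_DOM_FIELDS = ("temperature_c", "tx_bias_ma", "rx_power_dbm")


@dataclass
class PilotResult:
    """Outcome of one pilot test for an exact part number and firmware release."""
    part_number: str
    firmware: str
    link_up: bool
    flap_count: int
    dom: Dict[str, float]
    alarms_verified: bool


def sign_off_issues(result: PilotResult) -> List[str]:
    """Return the reasons a pilot cannot be signed off; empty means approved."""
    issues: List[str] = []
    if not result.link_up:
        issues.append("link is down")
    if result.flap_count > 0:
        issues.append(f"{result.flap_count} link flaps during soak")
    missing = [name for name in REQUIRED_DOM_FIELDS if name not in result.dom]
    if missing:
        issues.append("missing DOM fields: " + ", ".join(missing))
    if not result.alarms_verified:
        issues.append("alarm behavior not verified")
    return issues


if __name__ == "__main__":
    pilot = PilotResult(part_number="EXAMPLE-400G-SR8", firmware="x.y.z",
                        link_up=True, flap_count=0,
                        dom={"temperature_c": 42.0, "rx_power_dbm": -2.1},
                        alarms_verified=True)
    print(sign_off_issues(pilot) or "approved")
```

Storing one record per part number and firmware pair gives procurement a concrete list of what has actually been validated.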

Selection criteria / decision checklist (ordered)

- Distance and link budget: measured loss vs module reach, including connectors, splices, and patch panels.

- Switch compatibility: vendor optics matrix for the exact switch model and firmware release.

- Port density and upgrade path: whether 800G requires a new line card and how many ports you gain or lose.

- DOM and monitoring integration: telemetry fields, alarm thresholds, and SNMP/telemetry mapping.

- Operating temperature and power budget: ensure the line card and optics stay within thermal limits at your inlet temperature.

- Fiber plant readiness: MPO/MTP fan-in/out rules, 8-fiber vs 16-fiber cabling, and retrofit labor.

- Vendor lock-in risk: OEM vs third-party availability, warranty terms, and RMA turnaround.

- Spare strategy and lead times: whether you can meet deployment schedules and maintain redundancy during failures.

Common deployment scenario: when 400G wins in practice

In a data center leaf-spine topology where 48-port 10G ToR switches were replaced by 32-port 400G-capable leaf switches, the team targeted uplink congestion reduction during nightly ETL bursts. They measured 85% peak utilization on spine uplinks and planned a 24-month growth curve. The existing fiber plant used 8-fiber MPO trunks between rows, with measured end-to-end loss averaging 2.2 dB at the test points and consistent connector inspection. They chose 400G SR8 to avoid retrofitting the patch layout for 16-fiber MPO required by many 800G SR16 configurations.

The result was a faster rollout: fewer cabling changes, stable link bring-up, and clear telemetry in the network management system. The team reserved 800G for a future spine refresh where the line cards and cabling design could be standardized, rather than forcing a mixed cabling retrofit mid-project.

Implement the upgrade using a controlled rollout and acceptance tests

When you move from planning to execution, treat optics like a controlled integration project. Start with a lab or a pilot rack, then expand in waves while verifying telemetry, error counters, and thermal stability. Use the switch CLI to confirm that the port negotiated the expected speed and that interface counters remain stable under load.

Acceptance tests should include: link-up time, no recurring link flaps, stable optical receive power, and error counters that remain within vendor guidance under traffic. If you run traffic generators, validate throughput at line rate for the expected payload profile, not just link status.
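These acceptance criteria can be evaluated from two counter snapshots and a series of receive power samples. The sketch below is illustrative: the counter names, the limits, and the 0.3 dB stability threshold are assumptions, and how you collect the snapshots depends on your switch platform.

```python
from statistics import pstdev
from typing import Dict, List, Sequence


def counter_deltas(before: Dict[str, int], after: Dict[str, int]) -> Dict[str, int]:
    """Per-counter increase between snapshots taken before and after a soak test."""
    return {name: after[name] - before.get(name, 0) for name in after}


def rx_power_is_stable(samples_dbm: Sequence[float], max_stdev_db: float) -> bool:
    """True when receive power varies less than the allowed standard deviation."""
    return len(samples_dbm) >= 2 and pstdev(samples_dbm) <= max_stdev_db


def acceptance_failures(deltas: Dict[str, int], limits: Dict[str, int],
                        rx_samples_dbm: Sequence[float],
                        max_stdev_db: float = 0.3) -> List[str]:
    """List every criterion that failed; an empty list means the port passed."""
    failures: List[str] = []
    for name, limit in limits.items():
        observed = deltas.get(name, 0)
        if observed > limit:
            failures.append(f"{name} rose by {observed}, limit {limit}")
    if not rx_power_is_stable(rx_samples_dbm, max_stdev_db):
        failures.append("receive power unstable or too few samples")
    return failures


if __name__ == "__main__":
    # Hypothetical counter names; use the ones your platform exposes.
    before = {"link_flaps": 0, "crc_errors": 10}
    after = {"link_flaps": 0, "crc_errors": 25}
    limits = {"link_flaps": 0, "crc_errors": 0}
    print(acceptance_failures(counter_deltas(before, after), limits,
                              rx_samples_dbm=[-2.1, -2.0, -2.2]))
```

Comparing deltas rather than absolute values keeps counters accumulated before the test from producing false failures.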

Troubleshooting: top failure points and how to fix them

- Symptom: Link stays down or flaps during bring-up. Root cause: incorrect optics type or unsupported port mode; sometimes lane mapping mismatch after firmware updates. Solution: confirm the switch firmware and optics part number against the vendor compatibility matrix; reseat and verify DOM reads; try a known-good transceiver from the same batch.

- Symptom: High packet loss or CRC errors despite link up. Root cause: excessive insertion loss or dirty MPO/MTP endfaces causing intermittent optical power degradation. Solution: clean connectors with approved procedures, inspect with an endface microscope, and re-run OLTS/OTDR to confirm loss within budget.

- Symptom: Monitoring shows alarms or missing DOM fields. Root cause: DOM telemetry schema differences between OEM and third-party optics, or monitoring thresholds not aligned to the vendor’s definitions. Solution: map DOM fields in your telemetry pipeline, update thresholds based on vendor documentation, and verify alarms during controlled thermal cycling.
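For the DOM schema mismatch in the last item, a small normalization layer in the telemetry pipeline is one option. The sketch below uses made-up alias names purely for illustration; the actual field names exposed by OEM and third-party modules must come from your platform's documentation.

```python
from typing import Dict, List, Optional

# Hypothetical aliases: canonical name -> names seen in different sources.
DOM_ALIASES = {
    "temperature_c": ("temperature_c", "temperature", "module_temp"),
    "tx_bias_ma": ("tx_bias_ma", "laser_bias_current", "bias"),
    "rx_power_dbm": ("rx_power_dbm", "rx_power", "input_power"),
}


def normalize_dom(raw: Dict[str, float]) -> Dict[str, Optional[float]]:
    """Map vendor-specific DOM keys onto canonical names; None marks a missing field."""
    normalized: Dict[str, Optional[float]] = {}
    for canonical, aliases in DOM_ALIASES.items():
        normalized[canonical] = next(
            (raw[alias] for alias in aliases if alias in raw), None)
    return normalized


def missing_fields(normalized: Dict[str, Optional[float]]) -> List[str]:
    """Canonical fields that no alias could supply."""
    return [name for name, value in normalized.items() if value is None]


if __name__ == "__main__":
    third_party_sample = {"temperature": 41.5, "rx_power": -2.3}
    dom = normalize_dom(third_party_sample)
    print(dom)
    print("missing:", missing_fields(dom))
```

Reporting missing fields explicitly separates a schema gap from a genuine alarm condition.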

FAQ: 400G vs 800G buying questions engineers ask

Is 400G always cheaper than 800G?

Not always. Optics for 800G can be more expensive per module, but if 800G reduces the number of ports or line cards needed, the overall system capex can converge. The decision depends on your switch platform generation, required line card upgrades, and whether you must retrofit cabling for higher-fiber MPO.

What fiber type should I plan for 400G SR?

Most short-reach 400G designs use OM4 with MPO/MTP trunks for typical in-row or nearby-row distances. Confirm your measured insertion loss and connector cleanliness, because the practical margin often matters more than the “up to” reach in marketing specs.

Will third-party optics work for both 400G and 800G?

They can, but only after you validate against the exact switch model and firmware release. DOM telemetry support and alarm semantics may differ, and some platforms enforce strict transceiver qualification. Run a pilot with the exact part numbers before scaling.

How do I compare power impact between 400G and 800G?

Use vendor line card and transceiver power specs, then validate with your rack-level power measurements. Even if per-module power draw is similar, 800G line cards may have different thermal behavior and higher total consumption, affecting cooling setpoints and fan curves.

When is 800G worth the upgrade?

800G is often worth it when you have strict port-count constraints, a platform that already supports 800G natively, and a cabling design that minimizes retrofit risk. If your fiber plant is already optimized for 400G SR8, the ROI may favor 400G for the first phase.

How should I structure my acceptance tests?

Test link stability under realistic traffic, verify optical receive power and DOM alarms, and monitor CRC or FEC-related counters for sustained intervals. Also validate thermal stability by running at your expected inlet temperature, not just at room conditions.

Choosing 400G vs 800G is less about headline speed and more about measured loss, switch compatibility, thermal headroom, and a realistic TCO model. If you want to extend this into a full build plan, start with 400G cabling planning and align optics selection with your rack, PDU, and cooling constraints.

Author bio: A data center engineer with hands-on experience deploying leaf-spine fabrics, qualifying optics, and troubleshooting MPO link loss and DOM telemetry in production racks. I focus on practical power, cooling, and fiber acceptance workflows that reduce rollout risk.