In an SDN fabric, your controller does not just steer packets; it also ends up “steering” optics. If you pick the wrong transceivers, centralized optical control can be sabotaged by mismatched DOM data, surprise power budgets, or temperature drift that turns a green link into a blinking sad trombone. This article helps network engineers and field teams select optics that behave predictably under software control, with practical specs, compatibility cautions, and troubleshooting steps.

How centralized optical control changes the transceiver job

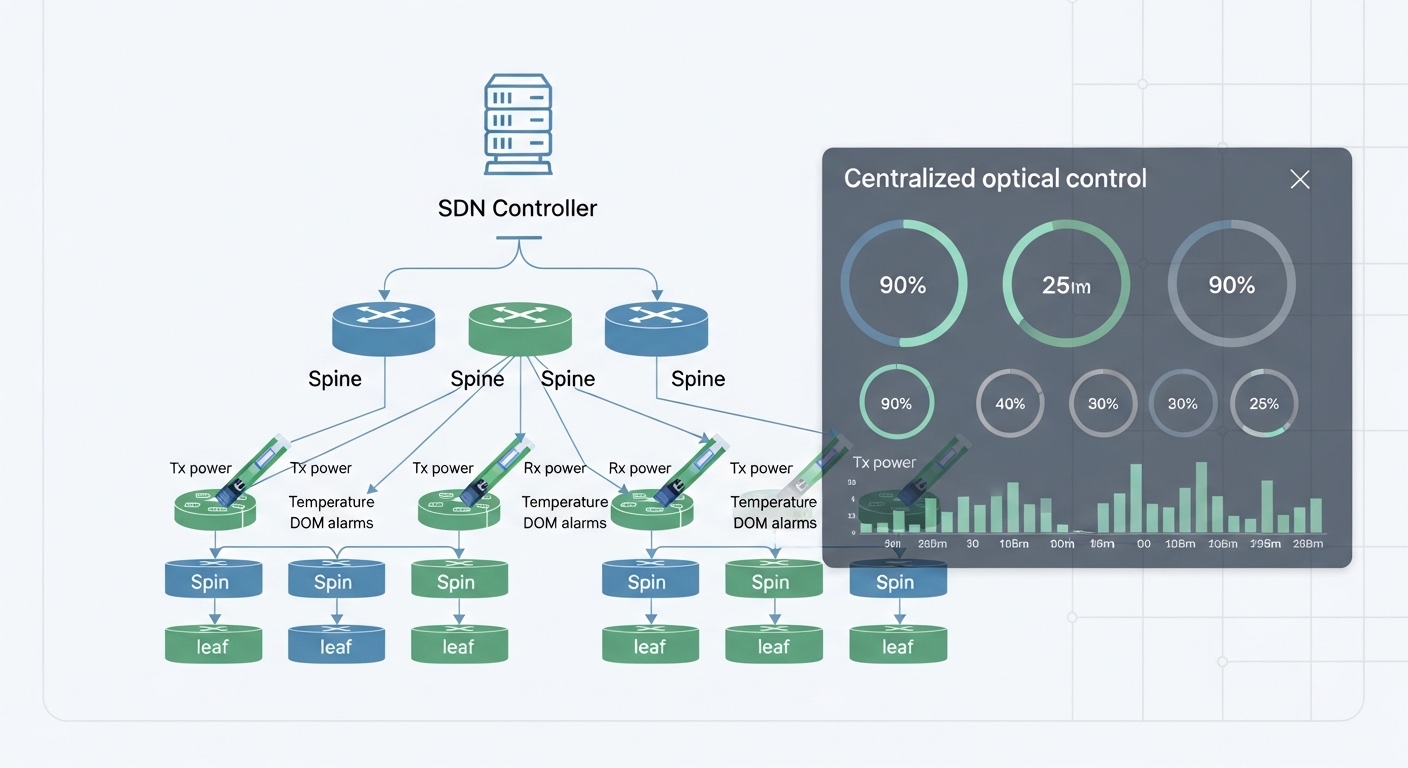

Traditional networks treat transceivers as mostly dumb endpoints: plug in, link up, move on. In a software-defined optical networking mindset, centralized optical control relies on predictable telemetry (DOM), stable optical parameters, and consistent diagnostics so the SDN controller can automate provisioning and fault isolation. In practice, that means your transceiver must support industry-standard digital diagnostics (not just “it works on my switch”), and it must match the switch vendor’s expectations for thresholds, alarms, and data reporting cadence.

At the standards level, Ethernet optics typically align with IEEE 802.3 physical-layer requirements for speed and PMD/PCS behavior, while the transceiver management interface commonly follows SFF specifications for DOM (SFF-8472 for SFP/SFP+ and SFF-8636 for QSFP+/QSFP28). Even when your optics are “standards-based,” vendors implement threshold semantics differently, so the controller may interpret “low bias current” as “service degradation” when the module vendor considers it normal. That mismatch is where centralized optical control either becomes a superpower or a chaos gremlin.

Operationally, field engineers often discover that the SDN controller’s automation logic depends on transceiver identification fields (manufacturer, part number, serial, vendor OUI) and DOM readouts (laser bias current, received power, transmit power, temperature, supply voltage). If the switch reports DOM values with different scaling or rounding, your automation rules can misfire. The controller may then quarantine ports unnecessarily or fail to detect a failing laser early enough.
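To make the scaling concern concrete, here is a minimal sketch of how raw DOM words turn into engineering units. It assumes a module using the SFF-8472 internally calibrated format: temperature as a signed 16-bit value in 1/256 °C, supply voltage in 100 µV steps, bias current in 2 µA steps, and optical power in 0.1 µW steps. The names `DomReading` and `decode_dom` are illustrative, and externally calibrated modules need an extra slope/offset correction that is not shown here.

```python
import math
from dataclasses import dataclass


@dataclass
class DomReading:
    temperature_c: float
    vcc_v: float
    tx_bias_ma: float
    tx_power_dbm: float
    rx_power_dbm: float


def to_signed16(raw: int) -> int:
    """Interpret a 16-bit word as a two's complement value."""
    return raw - 0x10000 if raw & 0x8000 else raw


def power_dbm(raw: int) -> float:
    """Convert a power word in 0.1 uW units to dBm; zero maps to -inf."""
    milliwatts = raw / 10000.0
    return 10.0 * math.log10(milliwatts) if milliwatts > 0 else float("-inf")


def decode_dom(temp_raw: int, vcc_raw: int, bias_raw: int,
               tx_raw: int, rx_raw: int) -> DomReading:
    """Scale raw 16-bit DOM words (assumed internally calibrated)."""
    return DomReading(
        temperature_c=to_signed16(temp_raw) / 256.0,  # 1/256 degC per LSB
        vcc_v=vcc_raw / 10000.0,                      # 100 uV per LSB
        tx_bias_ma=bias_raw * 2 / 1000.0,             # 2 uA per LSB
        tx_power_dbm=power_dbm(tx_raw),
        rx_power_dbm=power_dbm(rx_raw),
    )
```

If the switch CLI or API rounds these values or reports power in mW instead of dBm, comparing its output against a decode like this is a quick way to spot where the controller's assumptions diverge.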

What the controller actually needs from optics

- DOM reliability: consistent readings for temperature, bias current, and optical power.

- Alarm semantics: vendor-specific threshold mapping that the SDN controller can interpret.

- Link stability: stable BER performance within the optical budget over temperature and aging.

- Provisioning compatibility: switch firmware support for the transceiver type and speed mode.

Pro Tip: Before scaling optics across the fabric, run a “DOM sanity test” from the controller: poll DOM every 30 to 60 seconds for at least 24 hours while you vary switch fan speeds or room temperature. If your alarms trigger only under certain airflow conditions, you will catch threshold mapping problems long before production turns into an interpretive dance.
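One way to evaluate the sanity test afterwards is to summarize the collected samples per field and flag anything that moved more than you expected. The sketch below assumes the samples have already been polled and stored as dictionaries; the field names and the allowed spans are hypothetical and should come from your own validated baseline.

```python
from statistics import mean


def summarize_dom(samples, max_span):
    """Summarize polled DOM samples and flag fields with too much spread.

    samples:  list of dicts mapping field name -> reading
    max_span: dict mapping field name -> allowed (max - min) over the test
    """
    report = {}
    for field, allowed in max_span.items():
        values = [s[field] for s in samples if field in s]
        if not values:
            # The switch never reported this field during the test.
            report[field] = {"status": "missing"}
            continue
        span = max(values) - min(values)
        report[field] = {
            "min": min(values),
            "max": max(values),
            "mean": mean(values),
            "span": span,
            "status": "ok" if span <= allowed else "unstable",
        }
    return report
```

A field that comes back `missing` is as useful a finding as one that comes back `unstable`: it usually means the switch firmware is not exposing that DOM value for the module under test.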

Transceiver types for SDN fabrics: what to match first

In SDN deployments, the first matching step is not wavelength; it is data rate and electrical interface. Your controller may abstract flows at L2/L3, but the optics must still align to the switch’s transceiver cage (SFP, SFP+, QSFP+, QSFP28, QSFP56) and the host-side electrical signaling (lane count and per-lane rate). If you mismatch form factor or electrical lane mapping, centralized optical control can’t even get a clean telemetry baseline, and automation logic tends to fail early.

Next comes optical reach and link budget. Your SDN controller can reroute traffic, but it cannot repair an under-budget link where the received optical power falls below the receiver sensitivity across worst-case temperatures and aging. For short-reach links you usually select multimode fiber (MMF) at 850 nm; for longer reach you move to single-mode fiber (SMF) at 1310 nm or 1550 nm, depending on distance and architecture.
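The budget check can be sketched as simple arithmetic: available budget is minimum Tx power minus Rx sensitivity, and the margin is what remains after fiber, connector, and splice losses plus an aging allowance. The function below is a rough sketch; the default loss figures and the aging allowance are assumptions for illustration, and it ignores dispersion penalties and multimode bandwidth limits, so treat a positive margin as necessary rather than sufficient.

```python
def link_margin_db(tx_min_dbm, rx_sens_dbm, length_km, fiber_db_per_km,
                   connectors, connector_loss_db=0.5,
                   splices=0, splice_loss_db=0.1, aging_margin_db=1.0):
    """Return remaining power margin in dB (negative means under budget).

    tx_min_dbm and rx_sens_dbm should be worst-case datasheet values.
    The per-connector, per-splice, and aging defaults are assumed
    planning figures, not values from any standard.
    """
    budget = tx_min_dbm - rx_sens_dbm
    path_loss = (length_km * fiber_db_per_km
                 + connectors * connector_loss_db
                 + splices * splice_loss_db)
    return budget - path_loss - aging_margin_db


if __name__ == "__main__":
    # Placeholder numbers for a 6 km single-mode link; replace with
    # the figures from your module datasheet and fiber test results.
    margin = link_margin_db(tx_min_dbm=-8.2, rx_sens_dbm=-14.4,
                            length_km=6.0, fiber_db_per_km=0.4,
                            connectors=2)
    print(f"margin: {margin:.1f} dB")
```

With those placeholder inputs the margin works out to about 1.8 dB, which is thin enough that one dirty connector could consume it.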

Reference example optics (real part numbers to anchor the discussion)

- 10G SR (MMF, 850 nm): Cisco SFP-10G-SR (or equivalent vendor modules), Finisar FTLX8571D3BCL, FS.com SFP-10GSR-85.

- 100G SR4 (MMF, 850 nm): common QSFP28 SR4 modules from major vendors with OM3/OM4 reach specifications.

- 100G LR4 (SMF, four LAN-WDM lanes in the 1310 nm band): typical QSFP28 LR4 modules with longer reach and tighter budget management.

Because SDN centralized optical control frequently depends on consistent diagnostics, it is wise to validate DOM interoperability with your switch firmware rather than assuming “compatible” means “identical thresholds.”

Specs that matter: a comparison table for SDN-ready optics

Engineers often compare optics by reach alone, but centralized optical control cares about behavior under load, telemetry accuracy, and thermal stability. Below is a practical comparison of typical 10G SR (850 nm multimode) and 10G LR (single-mode) categories, focusing on the fields that show up in controller logic and field troubleshooting.

| Spec | 10G SR (MMF, 850 nm) | 10G LR (SMF, 1310 nm) |
|---|---|---|
| Typical data rate | 10.3125 Gbps | 10.3125 Gbps |
| Wavelength | 850 nm | 1310 nm |
| Reach (category) | ~300 m (OM3) to ~400 m (OM4), varies by module | ~10 km, varies by module |
| Fiber type | Multimode OM3/OM4 | Single-mode OS2 |
| Connector (common) | LC | LC |
| DOM support | Typically yes (temperature, bias, Tx/Rx power) | Typically yes (temperature, bias, Tx/Rx power) |
| Optical power class | Class varies by module; confirm Tx power and Rx sensitivity | Class varies by module; confirm Tx power and Rx sensitivity |
| Operating temperature | Commercial: often 0 to 70 °C; confirm exact spec | Commercial or industrial variants; confirm exact spec |

For SDN automation, validate the exact DOM thresholds and units from the vendor datasheet and compare them to what your switch reports. If the controller expects a particular “low Rx power” threshold behavior, you want optics that match that expectation.
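A small comparison helper makes that validation repeatable across module batches. This is a sketch under the assumption that you have transcribed the datasheet thresholds and pulled the switch-reported thresholds into two dictionaries with matching keys; the key names and tolerances are hypothetical.

```python
def threshold_mismatches(datasheet, reported, tolerance):
    """Return thresholds that are missing or differ beyond tolerance.

    datasheet: dict of threshold name -> value from the vendor datasheet
    reported:  dict of threshold name -> value read back from the switch
    tolerance: dict of threshold name -> allowed absolute difference
               (names without an entry must match exactly)
    Result maps name -> (datasheet_value, reported_value or None).
    """
    mismatches = {}
    for name, expected in datasheet.items():
        actual = reported.get(name)
        if actual is None or abs(actual - expected) > tolerance.get(name, 0.0):
            mismatches[name] = (expected, actual)
    return mismatches
```

An empty result means the switch reports what the datasheet promises, within your tolerance; anything else is a candidate for a controller-side threshold override or for dropping the module from the approved set.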

Real-world deployment: where transceiver choice meets automation

Imagine a data center leaf-spine topology with 48-port 10G ToR switches and 2x 100G uplinks per ToR, running an SDN controller that automates port provisioning and proactively monitors optics. The fabric uses a mix of 10G SR for server access over 120 m OM4 links and 10G LR for certain longer aggregation paths up to 6 km. The controller polls DOM every 60 seconds, and if it detects abnormal Tx/Rx drift, it triggers a workflow that moves traffic and schedules a maintenance window.

In this environment, centralized optical control becomes valuable only if the transceivers provide consistent diagnostics. During commissioning, the team swaps in a new batch of third-party optics and discovers that DOM “temperature” values are offset by about 3 to 5 °C compared to the switch vendor’s modules. The automation logic, tuned against the original modules, treats the offset as a sign of laser stress and quarantines ports prematurely. The fix is not “buy the most expensive optics”; it is aligning module selection with switch firmware expectations and calibrating controller thresholds to your validated module set.
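Calibrating against a reference can be as simple as estimating a per-model offset from paired readings. The sketch below assumes the reference and candidate modules were measured in the same port under the same conditions and that the samples are paired in time; the function names are illustrative. It uses the median so a single outlier sample does not skew the estimate.

```python
from statistics import median


def estimate_offset(reference_values, candidate_values):
    """Median of paired differences (candidate minus reference)."""
    if len(reference_values) != len(candidate_values) or not reference_values:
        raise ValueError("need equal-length, non-empty paired samples")
    return median(c - r for r, c in zip(reference_values, candidate_values))


def corrected(value, offset):
    """Map a candidate-module reading onto the reference scale."""
    return value - offset
```

Whether you apply the offset to incoming readings or shift the controller thresholds instead is a design choice; shifting thresholds per module model keeps the raw telemetry untouched for later analysis.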

Selection criteria checklist for SDN optics under centralized control

When choosing transceivers for centralized optical control in an SDN environment, engineers typically weigh the following factors in order. Yes, it is a checklist; yes, it saves weekends.

- Distance vs reach: confirm OM3/OM4 type, insertion loss, connector loss, and worst-case budget, not just “rated reach.”

- Switch compatibility: verify the switch vendor’s transceiver support list or tested optics catalog for your exact model (firmware matters).

- DOM support and semantics: confirm DOM fields, units, scaling, and alarm threshold behavior.

- Data rate and lane mapping: match SFP/QSFP form factor and speed mode (especially for multi-lane optics).

- Operating temperature: choose industrial-grade where airflow is unpredictable; confirm exact temperature range.

- Vendor lock-in risk: assess cost and availability of validated modules; plan for multi-vendor compatibility tests.

- Optical compliance: confirm IEEE 802.3 alignment and vendor datasheet parameters for Tx power and Rx sensitivity.

- Support and RMA process: centralized control increases automation-driven rollouts, so you need fast replacement workflows.
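Several items in this checklist come down to enforcing a validated set at provisioning time. As a minimal sketch, assuming the controller can read the module's vendor name and part number and knows the switch firmware version, an allowlist lookup might look like this. The vendor, part number, and firmware strings are hypothetical placeholders.

```python
from typing import NamedTuple


class ModuleId(NamedTuple):
    vendor: str
    part_number: str


# (module, switch firmware) pairs that passed lab validation.
# These entries are made-up examples, not real products.
VALIDATED = {
    (ModuleId("ExampleVendor", "EX-10G-SR"), "9.3.1"),
}


def is_validated(vendor, part_number, firmware, validated=VALIDATED):
    """Check a module against the validated set for this firmware.

    Identification strings read from module EEPROM are commonly
    space-padded, so they are stripped before comparison.
    """
    key = (ModuleId(vendor.strip(), part_number.strip()), firmware)
    return key in validated
```

Keying on firmware as well as part number reflects the point above that firmware matters: a module validated on one release is not automatically validated on the next.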

Common pitfalls and troubleshooting tips (field-tested)

Here are the failure modes that actually show up when centralized optical control tries to “help” and instead trips over optical reality.

DOM alarms that trigger too early

Root cause: DOM temperature or power scaling/offset differences between transceiver vendors, or switch firmware interpreting vendor thresholds differently. Solution: Validate with your switch model and firmware; compare DOM readings against a known-good reference module; adjust controller thresholds or constrain module sourcing to a validated set.

Link up but intermittent packet loss

Root cause: Optical budget shortfall from excessive patch cord loss, dirty connectors, or a bend radius that is too tight, causing additional attenuation and, on MMF, modal noise. Solution: Clean connectors with proper fiber cleaning tools, inspect with a microscope/inspection scope, verify bend radius, and recalculate budget including all connectors and splices.

Works at room temperature, fails in production airflow

Root cause: Transceiver operating temperature range mismatch or insufficient cooling margin; laser bias current drifts and raises BER. Solution: Use the vendor’s temperature specs and validate in situ; track DOM temperature and bias current trends during peak thermal load; consider industrial-temperature optics where the equipment room runs hot.
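Trend tracking can be sketched as a least-squares slope over the polled samples. The function below assumes timestamps in seconds and returns the rate of change per hour; what slope counts as worrying for bias current or temperature is something to establish from your own validated modules, not a value this sketch can supply.

```python
def slope_per_hour(timestamps_s, values):
    """Ordinary least-squares slope of values over time, per hour."""
    n = len(values)
    if n != len(timestamps_s) or n < 2:
        raise ValueError("need at least two paired samples")
    t_mean = sum(timestamps_s) / n
    v_mean = sum(values) / n
    denom = sum((t - t_mean) ** 2 for t in timestamps_s)
    if denom == 0:
        raise ValueError("timestamps must not all be identical")
    num = sum((t - t_mean) * (v - v_mean)
              for t, v in zip(timestamps_s, values))
    return num / denom * 3600.0  # convert per-second slope to per-hour
```

Fitting a slope across many samples is less sensitive to a single noisy reading than comparing the first and last values in the window.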

Controller reroutes traffic endlessly

Root cause: Automation policy loops: the controller reacts to transient DOM dips as “hard failure,” reroutes, then sees the link recover and flaps again. Solution: Implement hysteresis and debounce windows in the controller logic; require consecutive alarm samples before triggering a maintenance workflow.
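A debounce-plus-hysteresis rule can be sketched as a small state machine: the alarm trips only after several consecutive samples below a trip level, and clears only after several consecutive samples above a higher clear level. The class name, thresholds, and sample counts below are illustrative assumptions, not settings from any particular controller.

```python
class RxPowerAlarm:
    """Low Rx power alarm with debounce and hysteresis."""

    def __init__(self, trip_dbm, clear_dbm, trip_count=3, clear_count=3):
        if clear_dbm <= trip_dbm:
            raise ValueError("clear_dbm must be above trip_dbm for hysteresis")
        self.trip_dbm = trip_dbm
        self.clear_dbm = clear_dbm
        self.trip_count = trip_count
        self.clear_count = clear_count
        self.active = False
        self._streak = 0  # consecutive samples supporting a state change

    def update(self, rx_dbm):
        """Feed one sample; return True while the alarm is active."""
        if not self.active:
            # Count consecutive samples below the trip level.
            self._streak = self._streak + 1 if rx_dbm < self.trip_dbm else 0
            if self._streak >= self.trip_count:
                self.active, self._streak = True, 0
        else:
            # Count consecutive samples above the (higher) clear level.
            self._streak = self._streak + 1 if rx_dbm > self.clear_dbm else 0
            if self._streak >= self.clear_count:
                self.active, self._streak = False, 0
        return self.active
```

Readings that sit between the trip and clear levels never change the state, which is what stops a link hovering near the threshold from triggering reroute after reroute.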

Cost and ROI note: what you pay versus what you avoid

Pricing varies by speed and reach, but in many markets you can expect third-party 10G SR modules to cost roughly $20 to $60 each, while OEM or tightly validated modules may run $60 to $150. For 100G optics, unit costs are typically higher and can dominate transceiver spend during fabric expansions. The ROI angle is not only purchase price; it is reduced downtime from predictable telemetry and fewer “mystery link” incidents that consume engineer time.

TCO drivers include failure rate, RMA logistics, and the time you spend tuning centralized optical control thresholds. If you deploy a mixed vendor fleet without validation, you may spend weeks on controller rule tuning and port quarantine policies. If you validate a small set of optics models early and enforce that set during rollout, you usually reduce troubleshooting churn and speed up automation onboarding.

FAQ: centralized optical control and SDN transceiver decisions

What does centralized optical control require from transceivers?

It needs reliable DOM telemetry (Tx power, Rx power, temperature, bias current) and consistent alarm behavior so the controller can automate detection and remediation. You also need optics that the switch firmware recognizes cleanly.

Can I use third-party optics safely in an SDN fabric?

Often yes, but only after compatibility testing with your specific switch models and firmware. Validate DOM semantics and alarm thresholds to avoid controller misinterpretation.

How do I calculate optical budget for SDN link provisioning?

Include fiber attenuation plus connector loss, splice loss, and any patch cord overhead. Then confirm the transceiver’s Tx power and receiver sensitivity across the expected temperature range and aging margin.

What are the fastest troubleshooting steps when centralized monitoring shows Rx power drops?

First check for dirty connectors and verify with an inspection scope. Next confirm the patching and bend radius, then compare DOM readings over time to distinguish transient events from persistent degradation.
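For the last step, one rough way to separate a transient dip from persistent degradation is to compare recent samples against an earlier baseline. The sketch below assumes a time-ordered list of Rx power readings in dBm; the window sizes and the 1 dB drop threshold are arbitrary illustrative choices.

```python
from statistics import median


def classify_rx_drop(history_dbm, baseline_n, recent_n, drop_db=1.0):
    """Classify Rx power behaviour as 'persistent', 'transient', or 'stable'.

    The first baseline_n samples define the baseline (median); a sample
    counts as a drop if it is more than drop_db below that baseline.
    """
    if len(history_dbm) < baseline_n + recent_n:
        raise ValueError("not enough samples for the requested windows")
    floor = median(history_dbm[:baseline_n]) - drop_db
    recent = history_dbm[-recent_n:]
    if all(v < floor for v in recent):
        return "persistent"  # every recent sample is still low
    if any(v < floor for v in history_dbm[baseline_n:]):
        return "transient"   # a dip occurred but has not persisted
    return "stable"
```

A `transient` result points toward something that was disturbed and settled, such as patching work, while `persistent` is the cue to go clean and inspect connectors.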

Do I need industrial-temperature transceivers for data centers?

Not always, but if your racks experience high ambient temperatures or inconsistent airflow, industrial-grade optics can provide extra margin. Validate using DOM trends during peak conditions.

How should I prevent controller flapping during transient optical events?

Add debounce logic and hysteresis so the controller requires multiple consecutive samples before declaring failure. Also consider maintenance workflows that do not immediately reroute on the first alarm.

If you want centralized optical control to behave like a disciplined assistant instead of a nervous intern, pick transceivers based on DOM semantics, optical budget, and firmware compatibility, not just reach. Next, review your optical monitoring and DOM threshold settings to design monitoring rules that match real-world optical behavior.

Author bio: I have deployed Ethernet optics in production fabrics, including DOM-based alerting and SDN automation rollouts across leaf-spine topologies. I write like a field engineer: measured values, vendor datasheets, and failure modes included.