When link budgets, latency targets, and power caps collide, transceiver choice stops being a line item and becomes a data center performance lever. This article compares Active Optical Cables (AOC) and Direct Attach Copper (DAC) from a field perspective, focusing on where each technology wins in real leaf-spine and access designs. You will get engineering-grade criteria, compatibility caveats, and failure modes I have seen during rollouts of 10G, 25G, and 100G optics.

Why AOC and DAC behave differently in data center performance

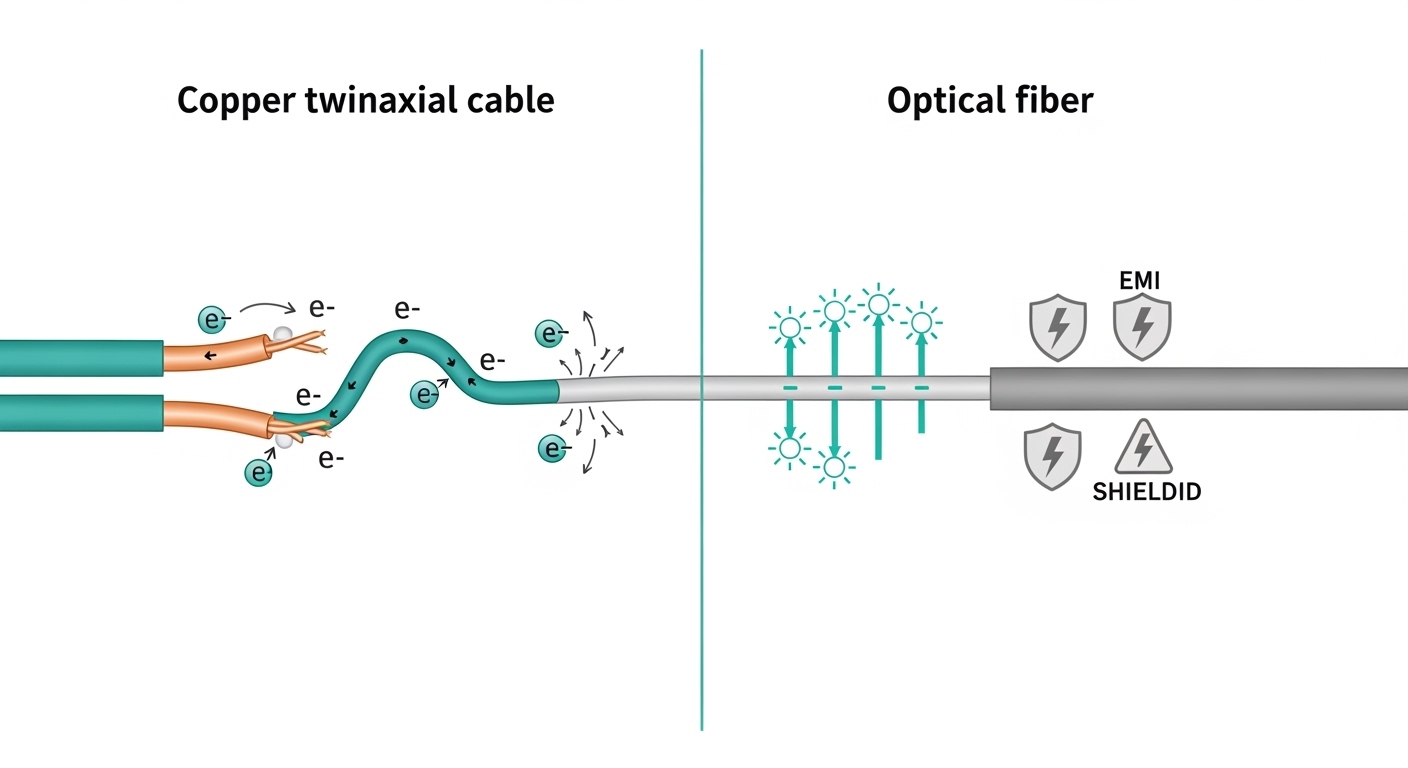

Both AOC and DAC can deliver the expected Ethernet framing defined by IEEE 802.3, but their physical-layer implementation changes electrical loading, reach constraints, and thermal behavior. DAC uses twinax copper within a fixed assembly, while AOC uses an electrical front-end that drives lasers and converts to optical fiber for the distance portion. In practice, that means DAC often excels at short reach because it has no optical components at all, while AOC tends to be more consistent when you must cross cable-management zones or avoid copper channel loss.

From an operations standpoint, data center performance is not only about link speed. It also includes error rate stability (BER), link re-training events, and how quickly you can replace a failing transceiver without pulling adjacent optics. AOC assemblies typically include active electronics plus optical conversion, so they are less sensitive to some copper channel losses, but they introduce their own thermal and aging considerations tied to laser bias currents and driver thermals.

Latency and signal integrity: the practical view

For Ethernet, the dominant latency in a leaf-spine fabric usually comes from switching silicon, not the transceiver media. Still, physical-layer signal integrity affects whether the link stays locked without flaps. DAC copper assemblies can suffer from connector wear, bend radius violations, and installation-induced reflections, which can trigger receiver equalization strain. AOC is generally more tolerant of moderate cable handling because the optical path is immune to electromagnetic interference, but you must respect bend radius and clean fiber endfaces if you use patching.

Pro Tip: If you see intermittent link drops only after cable re-routing, treat it as an installation physics problem first. DAC failures often trace back to bends tighter than the minimum supported bend radius or to contact contamination at the connector interface, while AOC issues more often tie to thermal hotspots from airflow blockage near the spine rows.

Core specs comparison: AOC vs DAC across common Ethernet rates

Below is a comparison of the key parameters engineers check when mapping transceiver options to a specific uplink distance and switch platform. Exact values vary by vendor and module family, but these ranges align with typical 10G/25G/100G deployment patterns. For Ethernet over fiber, the relevant optical behavior is governed by vendor datasheets and is consistent with the electrical/optical link concepts in IEEE 802.3, while the mechanical and connector expectations align with the transceiver form factor ecosystem used by your switch vendor.

| Spec category | DAC (Direct Attach Copper) | AOC (Active Optical Cable) |
|---|---|---|
| Typical data rates | 10G, 25G, 40G, 100G (twinax assemblies) | 10G, 25G, 40G, 100G (optical active assemblies) |
| Wavelength / medium | Electrical copper twinax (no optical wavelength) | Optical fiber with laser transmitters (vendor-specific wavelength) |
| Reach (typical) | ~1 m to ~7 m for common 25G/100G DAC lengths; depends on vendor | ~5 m to 100 m+ depending on fiber type and optical budget; often used for 10 m to 70 m in racks/corridors |
| Power profile | Often lower than optical active designs, but varies by rate and length | Typically higher than passive copper, but can be competitive with long copper runs |
| Connector / interface | Direct into switch QSFP/SFP cages; twinax integrated | Direct into switch cages; optical ends are integrated (no external pluggable optics) |
| Temperature range | Usually specified for 0 to 70 °C or extended variants depending on model | Often similar operating ranges; verify vendor datasheet for the exact assembly family |
| EMI sensitivity | More sensitive to electromagnetic noise and reflections | Lower sensitivity to EMI due to optical transport |
| Field serviceability | Replace assembly quickly; no fiber cleaning required | Replace assembly quickly; if patching is involved, fiber cleaning and inspection matter |

If you are standardizing across vendors, also confirm DOM support expectations. Many AOC assemblies expose Digital Optical Monitoring (DOM) readings such as module temperature and, on some models, optical power, while DAC assemblies, passive ones in particular, often expose only identification data with few or no live diagnostics. The exact implementation varies by platform and vendor firmware, so two switches may both show link-level status yet raise different alarms for the same assembly.

Real deployment scenario: leaf-spine with mixed short and mid reach

In one rollout I supported in a 3-tier data center, the leaf-spine fabric used 48-port 25G ToR switches with 4 uplinks per leaf. Short rack-to-rack uplinks were targeted at 3.0 m using DAC twinax assemblies to reduce optical inventory complexity. For cross-row links that had to route around cable trays, we switched to AOC assemblies for distances around 15 m, which kept each link as a single integrated assembly within the same rack-to-row operational zone instead of passing every link through a patch panel.

We tracked three metrics during commissioning: link stability over 72 hours, interface CRC/retransmit counters, and thermals at the cage area. DAC links stayed stable when we enforced a bend radius rule during cable dressing and used consistent airflow paths; however, two DAC ports developed CRC errors later, after a maintenance team re-routed bundles during a rack expansion. Replacing those DAC assemblies and re-dressing the bundles eliminated the CRC spikes. The AOC links performed consistently, but we found that one airflow obstruction near the spine row raised module temperature readings and correlated with occasional link renegotiations during peak load.
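The counter tracking described above can be sketched in a few lines. This is a minimal illustration, assuming you already export the cumulative CRC counter per interface at a fixed polling interval. `CounterSample`, `crc_deltas`, and `flag_spikes` are hypothetical names, the sample values are invented, and the zero-tolerance default is an assumption rather than a vendor recommendation.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class CounterSample:
    timestamp_s: int  # seconds since the soak test started
    crc_errors: int   # cumulative CRC counter as reported by the interface


def crc_deltas(samples: List[CounterSample]) -> List[Tuple[int, int]]:
    """Return (timestamp, new_errors) for each polling interval."""
    deltas = []
    for prev, cur in zip(samples, samples[1:]):
        delta = cur.crc_errors - prev.crc_errors
        if delta < 0:
            # Counter was cleared or wrapped; count only what is visible now.
            delta = cur.crc_errors
        deltas.append((cur.timestamp_s, delta))
    return deltas


def flag_spikes(samples: List[CounterSample], max_new_errors: int = 0):
    """Return the intervals whose new-error count exceeds the threshold."""
    return [(t, d) for t, d in crc_deltas(samples) if d > max_new_errors]


# Hypothetical hourly samples for one port (illustrative values only).
soak = [
    CounterSample(0, 0),
    CounterSample(3600, 0),
    CounterSample(7200, 12),
    CounterSample(10800, 12),
]
print(flag_spikes(soak))
```

Working on per-interval deltas rather than raw totals makes it easier to line up an error burst with a specific maintenance event, such as the bundle re-routing mentioned above.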

How to choose: a decision checklist for data center performance

A practical selection process beats vendor spec spreadsheets. Below is the ordered checklist I use when mapping AOC and DAC options onto a switch bill of materials and a fiber/copper management plan. The goal is to optimize data center performance while minimizing operational risk during swaps and future expansions.

- Distance and channel budget: Confirm the physical routing length, including slack and dressing. For DAC, stay within the vendor’s rated reach for the exact data rate; for AOC, validate the optical budget for the fiber type if applicable.

- Switch compatibility and optics profile: Ensure the transceiver is supported by your switch vendor for the specific port mode. Some platforms enforce strict identification or specific DOM behavior.

- DOM and diagnostics requirements: Decide whether you need per-lane diagnostics, temperature alarms, and power level readings. Verify how your network management tool interprets the alarms.

- Operating temperature and airflow constraints: In dense rows, measure or model cage temperatures. AOC assemblies with active electronics can be impacted by blocked intake paths.

- Budget and installed cost: Include the cost of spares, potential rework labor, and whether you need additional patching infrastructure.

- Vendor lock-in risk: Consider whether your spares strategy depends on one OEM. Third-party compatibility can be excellent, but validation testing matters.

- Operational lifecycle: Evaluate expected replacement frequency and warranty terms. For high churn environments, standardize on a smaller set of proven part families.
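The first and fourth checklist items lend themselves to a simple pre-filter before the human review of compatibility, diagnostics, and cost. The sketch below is one way to encode that, not a definitive rule set: `LinkRequirement`, `recommend_media`, and the 90% reach margin are all assumptions introduced for illustration, and the rated reach values must come from your vendor's datasheet.

```python
from dataclasses import dataclass


@dataclass
class LinkRequirement:
    routed_length_m: float    # measured route, including slack and dressing
    dac_rated_reach_m: float  # vendor-rated DAC reach at this data rate
    aoc_rated_reach_m: float  # vendor-rated AOC reach for this assembly family
    crosses_emi_zone: bool    # route passes EMI-heavy equipment
    tight_routing: bool       # route forces bends near the minimum bend radius


def recommend_media(req: LinkRequirement, reach_margin: float = 0.9) -> str:
    """Return 'DAC', 'AOC', or 'NEITHER' as a first-pass suggestion.

    reach_margin derates the rated reach (assumed value, not a standard).
    """
    dac_fits = req.routed_length_m <= req.dac_rated_reach_m * reach_margin
    aoc_fits = req.routed_length_m <= req.aoc_rated_reach_m * reach_margin

    if dac_fits and not (req.crosses_emi_zone or req.tight_routing):
        return "DAC"
    if aoc_fits:
        return "AOC"
    return "NEITHER"


# Hypothetical links loosely modeled on the rollout described earlier.
rack_to_rack = LinkRequirement(3.0, 5.0, 30.0, False, False)
cross_row = LinkRequirement(15.0, 5.0, 30.0, False, True)
print(recommend_media(rack_to_rack), recommend_media(cross_row))
```

A "NEITHER" result simply means the link falls outside both assemblies' derated reach and needs a different design conversation; the function deliberately does not score cost, DOM needs, or vendor lock-in, which remain judgment calls.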

When DAC is the better fit

DAC typically wins when you are staying within the short-reach envelope and you can control cable handling. If your rack-to-rack geometry supports stable cable routing with limited bending and consistent connector contact pressure, DAC can deliver strong data center performance with fewer variables than fiber. It also avoids fiber cleaning steps and reduces the operational burden on technicians.

When AOC is the better fit

AOC typically wins when you need mid-reach links that still benefit from integrated assemblies. If your routing requires crossing EMI-heavy zones or you want to avoid creating a patching workflow for every link, AOC can simplify operations while improving robustness. The main limitation is that AOC assemblies are still active devices, so you must respect airflow and environmental conditions and keep an eye on module replacement cycles.

Common pitfalls and troubleshooting: where performance degrades

In field troubleshooting, most “performance” complaints are really link stability or error counter problems. Here are concrete failure modes I have seen when comparing AOC and DAC deployments, along with root causes and fixes.

DAC link drops after cable re-routing

Root cause: Bending the twinax tighter than its minimum bend radius, or applying mechanical stress to the assembly, increases reflections and strains the equalization circuitry, leading to intermittent loss of signal. In one case, the re-routing happened during a patch panel expansion, and the bundle was pressed against a sharp edge.

Solution: Re-dress the cables with controlled bend radius, remove any contact with sharp metal, and replace the affected DAC assemblies if connector pins show wear. Verify link stability over a 24 to 72 hour window and monitor CRC and FEC-related counters if applicable.

AOC renegotiations tied to airflow hotspots

Root cause: Active optical cables dissipate heat from laser drivers and receiver amplifiers. If the rack’s intake airflow is blocked near the spine row, module temperature rises, operating margin shrinks, and the link may renegotiate under load.

Solution: Check airflow direction, confirm baffle placement, and measure cage temperatures with an IR thermometer or in-band telemetry where supported. If temperature margins are tight, relocate the module to a cooler port group or improve cooling before replacing hardware.
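If your platform exposes module temperature, a small margin check helps decide which ports deserve attention first. The following is a minimal sketch under stated assumptions: `classify_thermal` is a hypothetical helper, the 70 °C limit reuses the commercial range quoted in the comparison table, and the 10 °C minimum margin is an arbitrary planning value you should replace with your own policy or the assembly's alarm thresholds.

```python
from typing import Dict


def classify_thermal(
    readings_c: Dict[str, float],
    high_limit_c: float = 70.0,   # assumed upper operating limit
    min_margin_c: float = 10.0,   # assumed comfort margin below the limit
) -> Dict[str, str]:
    """Label each port 'ok', 'tight', or 'over-limit' from its temperature."""
    status = {}
    for port, temp_c in readings_c.items():
        margin = high_limit_c - temp_c
        if margin < 0:
            status[port] = "over-limit"
        elif margin < min_margin_c:
            status[port] = "tight"
        else:
            status[port] = "ok"
    return status


# Hypothetical readings; port names are illustrative only.
readings = {"uplink-1": 48.0, "uplink-2": 63.5, "uplink-3": 71.0}
print(classify_thermal(readings))
```

Ports labeled "tight" are the ones to correlate with renegotiation events before swapping any hardware.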

“Works on bench, fails in production” due to switch port compatibility

Root cause: Some switch platforms enforce specific transceiver identification and lane mapping. AOC and DAC assemblies may function in one firmware revision but fail with another due to strict compliance checks or DOM interpretation differences.

Solution: Validate against your exact switch model and firmware version in a staging lab. When possible, test with the same breakout adapters and port breakout modes you will use in production.
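One lightweight way to enforce this is to record every combination that passed staging and check the planned bill of materials against that record. The sketch below assumes a simple in-memory set; `Combo`, `unvalidated`, and every model, firmware, and part identifier shown are made-up placeholders, not real products.

```python
from typing import List, NamedTuple, Set


class Combo(NamedTuple):
    switch_model: str
    firmware: str
    part_number: str
    port_mode: str  # e.g. native port or a breakout mode


def unvalidated(planned: List[Combo], validated: Set[Combo]) -> List[Combo]:
    """Return planned combinations that never passed staging as-is."""
    return [combo for combo in planned if combo not in validated]


# Placeholder identifiers for illustration only.
validated = {
    Combo("switch-a", "fw-1.0", "dac-25g-3m", "native"),
    Combo("switch-a", "fw-1.0", "aoc-25g-15m", "native"),
}
planned = [
    Combo("switch-a", "fw-1.0", "dac-25g-3m", "native"),
    Combo("switch-a", "fw-1.1", "aoc-25g-15m", "native"),  # firmware changed
]
for combo in unvalidated(planned, validated):
    print("needs staging test:", combo)
```

Matching on the full tuple is deliberate: a firmware bump or a different breakout mode counts as a new combination, which mirrors the failure mode described above.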

Fiber cleanliness issues when AOC is used with patching

Root cause: Although many AOC assemblies are integrated, some designs still introduce patching or temporary test jumpers. Contaminated connectors can raise attenuation and push the receiver beyond margin, increasing BER and causing link flaps.

Solution: Use fiber inspection tools, clean with approved methods, and replace any questionable patch cords. Validate with optical power readings if your transceiver and switch support them.
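Where optical power readings are available, it helps to express them in dBm and compare them to the receiver's low-power warning threshold. This sketch uses the standard conversion from milliwatts to dBm; the helper names and the example threshold are assumptions, and the real threshold should be read from the assembly's diagnostics or datasheet. Not every AOC reports receive power, so treat this as applicable only where the reading exists.

```python
import math


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    if power_mw <= 0:
        raise ValueError("power must be positive to express in dBm")
    return 10.0 * math.log10(power_mw)


def rx_margin_db(rx_power_dbm: float, rx_low_warning_dbm: float) -> float:
    """Headroom between the measured receive power and the warning level."""
    return rx_power_dbm - rx_low_warning_dbm


# Hypothetical reading and threshold, for illustration only.
measured_dbm = mw_to_dbm(0.25)
margin = rx_margin_db(measured_dbm, rx_low_warning_dbm=-10.0)
print(f"rx = {measured_dbm:.2f} dBm, margin = {margin:.2f} dB")
```

A margin that shrinks after a patch cord change is a strong hint that contamination or a damaged jumper is the cause rather than the assembly itself.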

Cost and ROI: what changes in total cost of ownership

Pricing varies widely by rate, length, and vendor. In typical procurement cycles, short DAC assemblies often cost less per link than AOC, but the installed cost can invert when you factor in labor, spares strategy, and rework risk. For data center performance, the ROI question is whether the transceiver choice reduces downtime events, reduces error-driven retransmissions, and improves mean time to repair.

As a rough planning reference, third-party DAC and AOC assemblies can land in the range of tens to low hundreds of currency units per link depending on rate and length, while OEM options can be higher. The strongest TCO lever is not the purchase price alone; it is compatibility validation and the probability of a failed link that forces an emergency swap. In high-availability fabrics, even a small reduction in flap frequency can outweigh a higher per-unit cost.
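The trade-off between purchase price and failure probability can be made concrete with a simple expected-cost model. This is a rough planning sketch, not a validated TCO method: `expected_link_cost` is a hypothetical function, it assumes failures are independent with a constant annual probability, and all input figures below are invented placeholders in arbitrary currency units.

```python
def expected_link_cost(
    unit_price: float,
    spares_ratio: float,         # spares held per deployed link, e.g. 0.1
    annual_incident_prob: float, # chance per year of an emergency swap
    incident_cost: float,        # labor plus downtime impact per incident
    years: float,
) -> float:
    """Purchase cost including spares, plus expected incident cost."""
    purchase = unit_price * (1.0 + spares_ratio)
    expected_incidents = annual_incident_prob * years
    return purchase + expected_incidents * incident_cost


# Placeholder inputs: a cheaper link with more incidents versus a
# pricier link with fewer incidents, over the same five-year horizon.
cheaper = expected_link_cost(40.0, 0.1, 0.05, 500.0, 5)
pricier = expected_link_cost(90.0, 0.1, 0.01, 500.0, 5)
print(round(cheaper, 2), round(pricier, 2))
```

With these placeholder numbers the higher-priced link ends up cheaper over the horizon, which illustrates the point above; with different incident probabilities or incident costs the comparison can easily go the other way.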

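Also consider power and signal margin together. Passive DAC draws very little power at the port, whereas each AOC end powers its own driver and receiver electronics and adds to the thermal load at the cage. Against that, if your design forces you into copper runs near the rated reach limit, attenuation erodes margin and DAC can become unstable or require margin-heavy settings. In that situation AOC may cost more up front but can reduce retransmissions and keep link operation within stable equalization and optical power thresholds.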

FAQ: AOC vs DAC for data center performance planning

Is DAC always faster for data center performance at short distances?

Speed is determined by the Ethernet rate and the switch port capability, not by DAC vs AOC. For short links, both can meet the same throughput, but DAC can be more sensitive to installation and connector stress, which can harm link stability and indirectly affect performance.

Do AOC and DAC both support DOM and monitoring?

Many modern assemblies provide diagnostics, but the exact DOM fields and alarm thresholds depend on the vendor and the switch platform interpretation. If your operations team depends on specific telemetry, validate in a staging environment before scaling.

What is the biggest compatibility caveat when mixing vendors?

Switch firmware can enforce transceiver identification rules or specific lane mapping behavior. Even if a module negotiates at link-up time, subtle differences in diagnostics can cause incorrect alarm handling or conservative behaviors under load.

When should I choose AOC over DAC for uplinks?

Choose AOC when you need mid-reach links, have challenging cable routing, or want to reduce EMI and reflection issues typical of copper channels. If your airflow is well controlled and you have a solid spares and replacement plan, AOC can improve operational stability.

How do I troubleshoot rising CRC or retransmits?

Start by correlating errors with physical changes: cable moves, rack maintenance, or airflow changes. Then check transceiver diagnostics, confirm bend radius and connector integrity for DAC, and verify thermal readings for AOC.
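The correlation step can be as simple as matching error timestamps against a change log. The sketch below assumes you have both as timestamped lists; `correlate`, the 24-hour look-back window, and the example events are illustrative assumptions.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def correlate(
    error_times: List[datetime],
    changes: List[Tuple[datetime, str]],
    window: timedelta = timedelta(hours=24),
) -> List[Tuple[datetime, List[str]]]:
    """For each error, list the changes that happened within `window` before it."""
    results = []
    for err in error_times:
        preceding = [
            description
            for when, description in changes
            if timedelta(0) <= err - when <= window
        ]
        results.append((err, preceding))
    return results


# Hypothetical change log and error timestamps.
changes = [
    (datetime(2026, 4, 1, 9, 0), "re-routed DAC bundle, rack 12"),
    (datetime(2026, 4, 3, 14, 0), "installed blanking panel, spine row"),
]
errors = [datetime(2026, 4, 1, 15, 30), datetime(2026, 4, 10, 8, 0)]
for err, suspects in correlate(errors, changes):
    print(err.isoformat(), suspects or "no recent physical change logged")
```

An error with no preceding change in the window points you toward diagnostics and thermal readings instead of cable handling.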

Are there standards I can cite for Ethernet behavior?

IEEE 802.3 defines Ethernet PHY behavior and link requirements, while exact optical and electrical performance comes from vendor datasheets. For practical compliance and interoperability expectations, consult the switch vendor’s transceiver compatibility matrix and the transceiver manufacturer’s documentation.

For data center performance, the best outcome comes from matching transceiver physics to your rack geometry, airflow reality, and switch compatibility rules. Next, compare your planned link distances and monitoring needs using an optics compatibility checklist so you can standardize spares and reduce commissioning surprises.

Updated: 2026-05-01

I travel between data centers to support migrations and performance investigations, focusing on optics, cabling, and operational reliability. My goal is to translate vendor specs into field-tested decisions that protect uptime and throughput in real deployments.