AI Optical Networking: A Data Center Guide for Low-Latency Links

AI workloads punish every bit of added latency and every outage in your fabric. This data center guide shows how to build optical networking for AI clusters by selecting the right transceiver classes, fiber plant, and operational safeguards for predictable performance. It is written for network engineers, architects, and field teams who need optics that are compatible with their switches and links whose behavior under load can be measured.

Top 7 items: building an optical AI fabric that actually scales

Match AI link budget to real reach, not “spec-sheet distance”

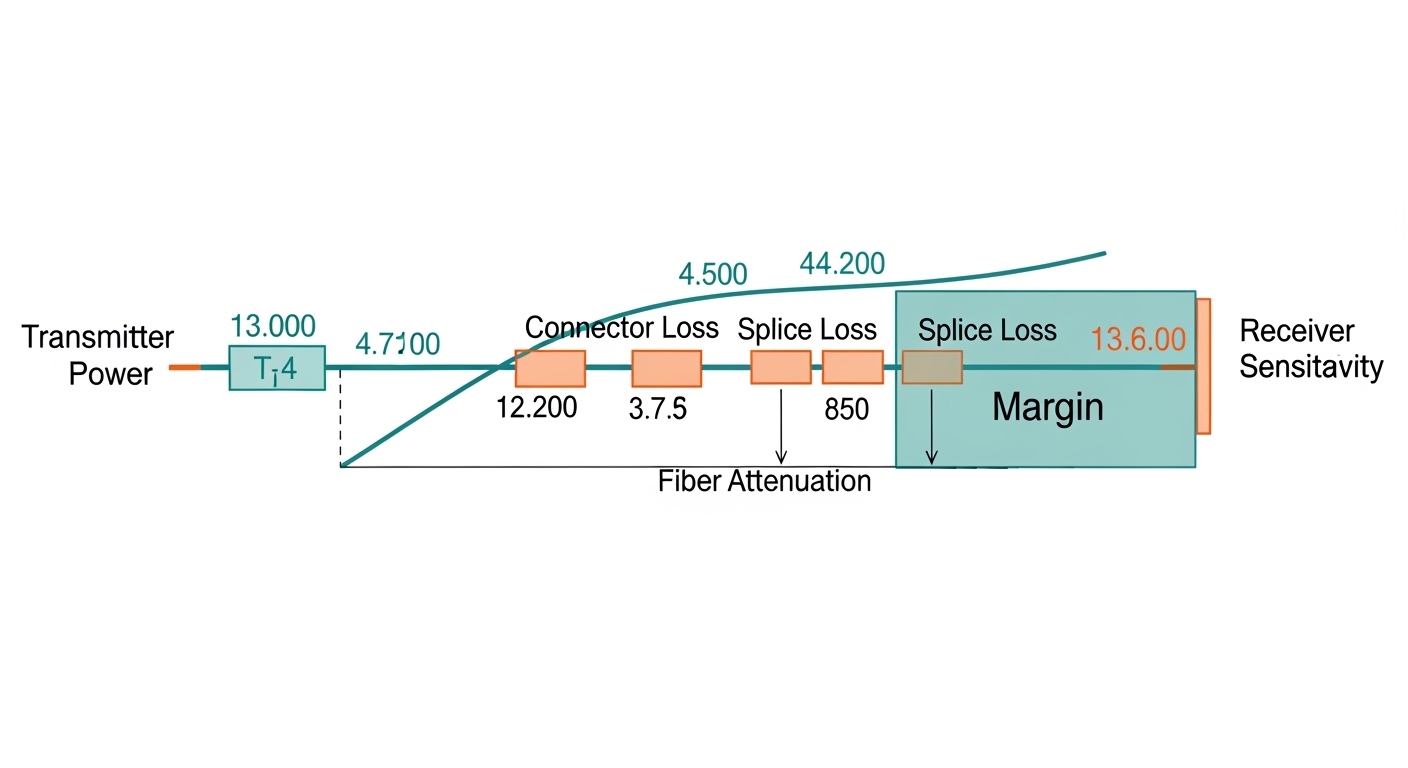

In AI clusters, running a link beyond its real optical margin can silently raise the bit error rate (BER) and cause link flaps that only show up under sustained load. Start with the link budget approach used in IEEE 802.3 PHY specifications: transmitter power, receiver sensitivity, fiber attenuation, and connector/splice losses. For multimode, modal bandwidth and launch conditions matter as much as the dB math.

- Key point: treat insertion loss and worst-case optics temperature drift as first-class variables.

- Typical targets: 10 km-class singlemode for many spine uplinks; shorter multimode for ToR-to-aggregation.

Pros: fewer surprises during burn-in. Cons: requires disciplined fiber measurement (OTDR/OLTS) and documentation.
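The link budget arithmetic described above can be sketched in a few lines of Python. This is a minimal illustration, not a planning tool: the class name `LinkBudget`, its fields, and every numeric value are assumptions chosen for the example, and real inputs should come from the optics datasheet and your OLTS/OTDR measurements.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Worst-case optical link budget; all values are illustrative inputs."""
    tx_power_min_dbm: float      # minimum (worst-case) launch power
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity
    fiber_km: float              # measured fiber length
    fiber_db_per_km: float       # attenuation at the operating wavelength
    connectors: int              # mated connector pairs in the path
    db_per_connector: float
    splices: int
    db_per_splice: float
    penalty_db: float = 0.0      # allowance for temperature drift and aging

    def channel_loss_db(self) -> float:
        """Passive loss of the fiber path: fiber + connectors + splices."""
        return (self.fiber_km * self.fiber_db_per_km
                + self.connectors * self.db_per_connector
                + self.splices * self.db_per_splice)

    def margin_db(self) -> float:
        """Margin left after channel loss and penalties are subtracted."""
        power_budget = self.tx_power_min_dbm - self.rx_sensitivity_dbm
        return power_budget - self.channel_loss_db() - self.penalty_db


# Hypothetical spine uplink: placeholder numbers, not taken from any datasheet.
uplink = LinkBudget(tx_power_min_dbm=-4.0, rx_sensitivity_dbm=-10.0,
                    fiber_km=8.0, fiber_db_per_km=0.4,
                    connectors=4, db_per_connector=0.3,
                    splices=2, db_per_splice=0.1, penalty_db=1.0)
print(f"channel loss: {uplink.channel_loss_db():.2f} dB")
print(f"margin: {uplink.margin_db():.2f} dB")
```

With these placeholder values the channel loss is 4.6 dB against a 6.0 dB power budget, leaving only 0.4 dB of margin once the 1.0 dB penalty is included. A link like this would be nominally "within reach" on a spec sheet yet leave little room for a dirty connector or a warm module.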

Choose transceiver families by interface speed and optics technology

AI fabrics often mix 100G and 400G, plus breakout modes at the edge. Plan your port map early: switch ASIC lane widths, optics form factor, and supported standards (for example, IEEE 802.3 Ethernet PHYs) determine what will work without vendor-specific workarounds.

Common real-world examples include Cisco SFP-10G-SR and Finisar FTLX8571D3BCL (both 10GBASE-SR SFP+ modules for 850 nm multimode) for legacy aggregation, and FS.com SFP-10GSR-85 when you need cost-effective SR optics with careful validation. Always verify DOM behavior and whether your switch firmware expects vendor-coded optics.

| Optics type (example) | Wavelength | Target reach | Connector | Data rate | DOM support | Operating temp (typ.) |
|---|---|---|---|---|---|---|
| 10G SR (SFP+) | 850 nm | Up to ~300 m on OM3, ~400 m on OM4 (depends on optics) | Duplex LC | 10G | Yes (vendor-dependent) | 0 to 70 C (typ.) |
| 100G SR4 (QSFP28) | 850 nm | Up to ~100 m (OM4 class) | MPO-12 (duplex LC on BiDi/SWDM4 variants) | 100G | Yes (SFF-8636; power class varies) | 0 to 70 C (typ.) |
| 100G LR4 (QSFP28) | ~1310 nm (4 wavelengths) | Up to ~10 km | Duplex LC | 100G | Yes | -5 to 70 C (typ.) |
| 400G DR4/FR4 (QSFP-DD/OSFP) | ~1310 nm (DR4: 4 parallel lanes; FR4: 4 CWDM wavelengths, 1271-1331 nm) | DR4 ~500 m; FR4 ~2 km (platform-specific) | DR4: MPO-12; FR4: duplex LC | 400G | Yes (CMIS) | 0 to 70 C (typ.) |

Pros: faster procurement and fewer compatibility issues. Cons: mixed optics across vendors can complicate troubleshooting and firmware updates.

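References: IEEE 802.3 Ethernet PHY specifications, supplemented by vendor interoperability notes; module management behavior (SFF-8472 for SFP+, SFF-8636 for QSFP28, CMIS for QSFP-DD/OSFP) is defined in those specifications and refined in vendor documentation. [Source: IEEE 802.3 Standard]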

Design the fiber plant for AI churn: pre-qualify, label, and measure

AI clusters evolve quickly: new racks, new accelerators, and shifting traffic patterns. Your fiber plant must tolerate churn without guesswork. Use OLTS/OTDR measurements to validate end-to-end loss and ensure patch cords and splices match the assumed loss model.

- Best practice: keep a fiber database with patch panel mapping, connector types, and measured loss.

- Operational detail: inspect LC/SC ferrules with a microscope every time you disconnect a fiber or remove optics, and clean them before reconnecting.

Pros: faster changes and fewer intermittent faults. Cons: requires process discipline and tooling.
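A fiber database can start as a list of records that pairs each link's assumed loss with its measured loss. The sketch below is one possible shape under stated assumptions: the `FiberLink` fields, the identifiers, the loss values, and the 0.5 dB tolerance are all hypothetical, so substitute your own schema and acceptance limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FiberLink:
    """One record in a (hypothetical) fiber plant database."""
    link_id: str
    panel_port: str          # patch panel mapping, e.g. "PP-A12/07"
    connector: str           # "LC", "MPO-12", ...
    fiber_type: str          # "OM4", "OS2", ...
    modeled_loss_db: float   # loss assumed in the link budget
    measured_loss_db: float  # latest OLTS result


def out_of_model(links, tolerance_db=0.5):
    """Return IDs of links whose measured loss exceeds the model by more
    than tolerance_db (an assumed acceptance limit)."""
    return [link.link_id for link in links
            if link.measured_loss_db - link.modeled_loss_db > tolerance_db]


# Placeholder records for illustration only.
plant = [
    FiberLink("row3-tor1-agg1", "PP-A12/07", "LC", "OM4", 1.5, 1.4),
    FiberLink("row3-tor2-agg1", "PP-A12/08", "LC", "OM4", 1.5, 2.3),
    FiberLink("agg1-spine2", "PP-B02/01", "LC", "OS2", 4.6, 4.9),
]
print(out_of_model(plant))
```

Running the check after every move, add, or change keeps the measured plant aligned with the loss model used during design.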

Control optical power and temperature to prevent “works in lab, fails in production”

Transceivers are sensitive to temperature gradients and airflow paths in high-density racks. In field deployments, I have seen link errors rise after adding adjacent hot equipment even when ambient room temperature seemed “within spec.” Use switch telemetry (DOM readings) and correlate with fan-speed profiles.

Pro Tip: If you see rising corrected errors only after a hardware refresh, compare DOM temperature deltas across optics positions. In many deployments, airflow obstruction changes the local module temperature more than the room value does, shifting the laser bias and receiver margin.

Pros: reduced downtime during expansions. Cons: requires telemetry collection and trend analysis.
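One way to compare DOM temperature deltas across optics positions is to measure each module against the median of its neighbors rather than against room temperature. The following is a minimal sketch: the function name `hot_modules`, the port names, the readings, and the 8 C threshold are assumptions for illustration, and the temperatures would come from whatever telemetry export your switches provide.

```python
from statistics import median


def hot_modules(temps_c: dict[str, float], delta_c: float = 8.0) -> dict[str, float]:
    """Return ports whose DOM temperature exceeds the median of all ports
    by at least delta_c, mapped to their delta above the median."""
    baseline = median(temps_c.values())
    return {port: temp - baseline
            for port, temp in temps_c.items()
            if temp - baseline >= delta_c}


# Placeholder DOM readings (degrees C) from one line card.
readings = {
    "Eth1/1": 41.0,
    "Eth1/2": 42.5,
    "Eth1/3": 43.0,
    "Eth1/4": 44.0,
    "Eth1/5": 55.5,  # position next to newly added hot equipment
}
print(hot_modules(readings))
```

Using the median as the baseline keeps a single hot module from dragging the reference upward, which a plain average would do.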

Automate compatibility checks: DOM, vendor IDs, and firmware quirks

AI fabrics run thousands of links, so compatibility checks have to be automated. Many outages trace back to a transceiver that is technically standards-compliant but not accepted by a specific switch firmware policy. Validate DOM format support, alert thresholds, and any vendor ID checks during staging.

For third-party optics, run a controlled soak test: verify link stability under repeated interface resets, confirm alarms clear correctly, and ensure the switch reports accurate Tx/Rx power. This prevents “green light but degraded BER” situations.

Pros: fewer surprises and faster deployments. Cons: more upfront validation effort.
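The soak-test criteria above can be turned into a small pass/fail evaluation. This sketch assumes you have already collected one observation per interface reset; the `ResetCycle` fields, the 1.0 dB agreement tolerance between switch-reported and reference-meter power, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ResetCycle:
    """Observation recorded after one interface reset (hypothetical schema)."""
    link_recovered: bool     # link came back up after the reset
    alarms_cleared: bool     # no optics alarms left active afterwards
    reported_rx_dbm: float   # Rx power as reported by the switch (DOM)
    reference_rx_dbm: float  # Rx power from an external power meter


def soak_failures(cycles, power_tolerance_db=1.0):
    """Return a list of human-readable failures; an empty list means pass."""
    failures = []
    for i, cycle in enumerate(cycles, start=1):
        if not cycle.link_recovered:
            failures.append(f"cycle {i}: link did not recover")
        if not cycle.alarms_cleared:
            failures.append(f"cycle {i}: alarms still active")
        if abs(cycle.reported_rx_dbm - cycle.reference_rx_dbm) > power_tolerance_db:
            failures.append(f"cycle {i}: reported Rx power disagrees with meter")
    return failures


# Placeholder soak data: the third reset leaves an alarm and a bad reading.
cycles = [
    ResetCycle(True, True, -3.1, -3.0),
    ResetCycle(True, True, -3.2, -3.0),
    ResetCycle(True, False, -6.5, -3.1),
]
for failure in soak_failures(cycles):
    print(failure)
```

Comparing reported power against an external meter is what catches a module that links up but reports misleading DOM values.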

Build latency-aware topologies using optics that support your traffic pattern

AI traffic is often east-west and bursty, with synchronized training phases that create transient congestion. Optical choices influence latency and its variance: serialization delay depends on line rate and propagation delay on fiber length (roughly 5 ns per meter), while reach and dispersion (modal on multimode, chromatic on long singlemode runs) affect signal integrity, which shows up as corrected errors, dropped frames, and upper-layer retransmissions.

- Scenario fit: use shorter-reach optics for leaf-to-spine where possible to keep margins large and reduce error recovery events.

- Spine uplinks: choose LR-class singlemode when distance forces it, but validate end-to-end loss and Rx power margin (and OSNR on amplified or coherent links, where the platform reports it).

Pros: steadier throughput under burst loads. Cons: topology changes may require re-cabling.
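The two deterministic latency terms, serialization and propagation, are easy to estimate. The sketch below assumes a group index of 1.468 for silica fiber (an approximate, commonly quoted value) and ignores FEC, switch pipeline, and queuing delay, which often dominate in practice; the function names and the example frame size are illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
GROUP_INDEX = 1.468  # assumed typical value for silica fiber


def serialization_delay_ns(frame_bytes: int, rate_gbps: float) -> float:
    """Time to clock one frame onto the wire: bits / (Gb/s) gives ns."""
    return frame_bytes * 8 / rate_gbps


def propagation_delay_ns(length_m: float, group_index: float = GROUP_INDEX) -> float:
    """Time for light to traverse the fiber, roughly 4.9 ns per meter."""
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e9


# Example: a 4096-byte frame over 100 m of fiber at two line rates.
for rate in (100, 400):
    total = serialization_delay_ns(4096, rate) + propagation_delay_ns(100)
    print(f"{rate}G: {total:.1f} ns (serialization + propagation only)")
```

At these lengths propagation is comparable to or larger than serialization, which is one reason shorter leaf-to-spine runs help even when the link budget is not the limiting factor.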

Operationalize failure handling: alarms, rollback plans, and maintenance windows

In production AI clusters, you need deterministic response when something breaks. Configure optical monitoring thresholds, alert on err-disable events, and define a rollback plan for firmware changes that could affect optics acceptance. Maintain spare optics matched to your switch lineup, including the exact form factor and wavelength grade.

Real-world deployment scenario: In a three-tier topology (ToR, aggregation, spine) with 48-port 10G ToR switches feeding 100G aggregation, we typically deploy OM4 multimode for ToR-to-agg on runs of about 70 m, and singlemode LR4 for agg-to-spine at roughly 6 to 9 km. During quarterly AI platform refreshes, we schedule a 2-hour staging window per row to verify DOM telemetry baselines and confirm that interface resets do not trigger persistent flaps. Measured result after stabilization: corrected-error spikes dropped significantly because optics and airflow profiles were validated before the rollout was widened.

Pros: faster MTTR and safer upgrades. Cons: needs disciplined change management.
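Optical monitoring thresholds usually come in four levels (low alarm, low warning, high warning, high alarm), and a deterministic response starts with classifying each reading against them. The sketch below is an assumption-laden illustration: the `Thresholds` class, the `classify` function, and the Rx power limits are hypothetical, and real limits should be read from the module or set by your platform policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Four-level threshold set for one monitored value (e.g. Rx power, dBm)."""
    low_alarm: float
    low_warn: float
    high_warn: float
    high_alarm: float


def classify(value: float, t: Thresholds) -> str:
    """Map a reading to 'alarm', 'warning', or 'ok'."""
    if value <= t.low_alarm or value >= t.high_alarm:
        return "alarm"
    if value <= t.low_warn or value >= t.high_warn:
        return "warning"
    return "ok"


# Placeholder Rx power thresholds in dBm, for illustration only.
rx_limits = Thresholds(low_alarm=-14.0, low_warn=-12.0,
                       high_warn=1.0, high_alarm=2.0)
for reading in (-5.0, -12.5, -15.0):
    print(reading, classify(reading, rx_limits))
```

Tying each class to a fixed action (for example, ticket on warning, page on alarm) is what makes the response predictable during a maintenance window or rollback.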

Selection criteria checklist for AI optical networking (engineer order)

- Distance and link budget: transmitter/receiver power, attenuation, and connector/splice loss.

- Fiber type and quality: OM3/OM4/OS2, plus measured OLTS/OTDR results.

- Switch compatibility: supported form factors, breakout modes, and optics acceptance policies.

- DOM and telemetry: whether the switch reads DOM fields and what alarms it triggers.

- Operating temperature and airflow: module temperature stability under load.

- Standards and interoperability: IEEE 802.3 PHY behavior and vendor interoperability guidance.

- Vendor lock-in risk: third-party validation effort, RMA experience, and spares strategy.

Reference: switch vendor transceiver compatibility matrices and IEEE PHY references. [Source: IEEE 802 Project]

Common mistakes and troubleshooting tips (with root cause + fix)

- Mistake: assuming “MM reach” from marketing claims.

  Root cause: patch cords, launch conditions, and OM grade mismatches reduce effective modal bandwidth.

  Solution: use OLTS/OTDR, standardize patch cord lengths, and validate with the exact switch optics pair in staging.

- Mistake: swapping optics without cleaning connectors.

  Root cause: contaminated LC ferrules cause elevated insertion loss and intermittent receive power below sensitivity.

  Solution: inspect ferrules with a microscope; clean with lint-free tools and approved solvent/cleaner; re-seat and re-check Rx power.

- Mistake: ignoring DOM temperature trends.

  Root cause: local airflow blockage or rack heat recirculation shifts module temperature, reducing margin and increasing corrected errors.

  Solution: correlate DOM temperature, fan telemetry, and corrected-error counters; adjust airflow baffles or reposition equipment.

- Mistake: mixing transceiver vendors in production without soak tests.

  Root cause: firmware optics acceptance policies and DOM field interpretations differ subtly.