AI workloads do not politely wait for your cabling plan. They surge, reorder, and demand low-latency bandwidth across leaf-spine fabrics, often pushing optics, power budgets, and switch compatibility past their comfort zones. This guide helps network and infrastructure engineers design, validate, and operate future-ready networks by pairing AI-aware traffic patterns with Ethernet optical best practices, including DOM telemetry and upgrade-safe transceiver choices.

Optical choices that survive AI traffic bursts

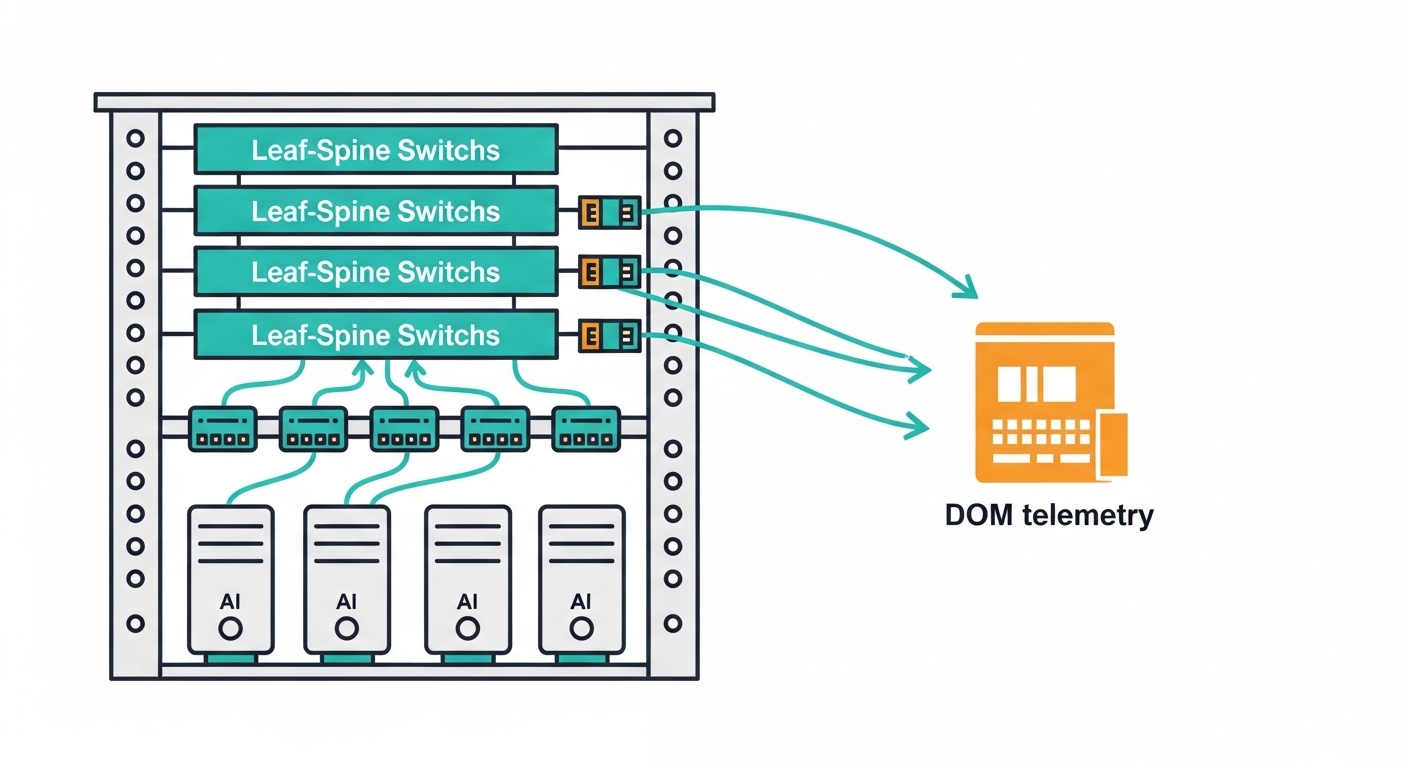

In AI clusters, microbursts and all-to-all communication can spike utilization and expose marginal optics as errors or link flaps. Ethernet over fiber must meet IEEE 802.3 requirements for signal integrity, while your transceivers must behave predictably under real temperature swings and vendor-specific controls. A field-tested approach is to standardize on a small set of optics profiles (for example, 10G SR for existing racks and 25G/50G SR or 100G SR4 for expansion) and treat DOM telemetry as a first-class monitoring signal.

Know what “compatible” means on the wire

Compatibility is not only “it lights up.” It is whether the link comes up at the expected speed and FEC mode, and whether the optics stay within vendor-recommended receive power ranges. For short-reach multimode, choose optics that match the 802.3 reach class and the fiber plant’s modal bandwidth (OM3 or OM4). For example, 10G SR uses 850 nm multimode optics such as Cisco SFP-10G-SR; 25G SR also uses 850 nm, in SFP28 modules such as FS.com SFP-25G-SR, and needs a switch platform with SFP28 ports.

Specs that matter: wavelength, reach, power, and temperature

When AI scale grows, the wrong optics choice becomes a silent tax: higher retransmissions, reduced mean time between failures, and sudden outages during maintenance windows. Use the table below as a quick reference for selecting transceivers for future-ready networks that must handle both near-term AI growth and later speed migrations.

| Module type | Typical wavelength | Target reach (OM3 / OM4) | Data rate | Connector | DOM telemetry | Operating temp (typ.) |
|---|---|---|---|---|---|---|
| SFP-10G-SR (ex: Cisco SFP-10G-SR) | 850 nm | ~300 m / ~400 m | 10G Ethernet | Duplex LC | Yes (SFF-8472) | 0 to 70 °C |
| SFP28-25G-SR (ex: FS.com SFP-25G-SR) | 850 nm | ~70 m / ~100 m | 25G Ethernet | Duplex LC | Yes (SFF-8472) | -5 to 70 °C |
| QSFP28-100G-SR4 (ex: compatible 100G SR4 optics) | 850 nm | ~70 m / ~100 m | 100G Ethernet (4 x 25G lanes) | MPO-12 | Yes (SFF-8636) | -5 to 70 °C |

Operationally, DOM support matters because you will want to alert on rx power, laser bias current, and temperature before errors become incidents. Most modules follow SFF-style digital diagnostics behavior; still, validate that your specific switch firmware correctly reads and exposes DOM fields.
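As a minimal sketch of the alerting described above, the following checks one DOM sample against limits. The class names, field names, and the numeric limits are illustrative assumptions, not values from any vendor datasheet; in practice you would copy limits from the module's own alarm and warning thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomReading:
    """One DOM sample for a single optical lane (field names are illustrative)."""
    rx_power_dbm: float
    bias_ma: float
    temp_c: float


@dataclass(frozen=True)
class DomLimits:
    """Alert limits; take real values from the module datasheet or its DOM thresholds."""
    rx_power_min_dbm: float
    rx_power_max_dbm: float
    bias_max_ma: float
    temp_max_c: float


def check_dom(reading: DomReading, limits: DomLimits) -> list[str]:
    """Return human-readable violations; an empty list means the sample is in range."""
    problems = []
    if reading.rx_power_dbm < limits.rx_power_min_dbm:
        problems.append("rx power low")
    elif reading.rx_power_dbm > limits.rx_power_max_dbm:
        problems.append("rx power high")
    if reading.bias_ma > limits.bias_max_ma:
        problems.append("bias current high")
    if reading.temp_c > limits.temp_max_c:
        problems.append("temperature high")
    return problems


# Placeholder limits for illustration only, not vendor figures.
EXAMPLE_LIMITS = DomLimits(
    rx_power_min_dbm=-9.0,
    rx_power_max_dbm=1.0,
    bias_max_ma=10.0,
    temp_max_c=70.0,
)

print(check_dom(DomReading(rx_power_dbm=-11.0, bias_ma=6.0, temp_c=72.0), EXAMPLE_LIMITS))
```

Keeping the limits in a separate object makes it easy to hold one set per optics profile rather than one global threshold.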

Pro Tip: During AI cluster burn-in, poll DOM at a short cadence (for example, every 30 to 60 seconds) and correlate falling rx power, rising bias current, or temperature drift with application-level retransmissions. A “perfect” link at install time can degrade faster than expected when you later reorganize airflow or replace patch panels.
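One way to turn polled samples into a drift signal is a least-squares slope over a time window. This is a sketch under assumptions: the function name and the `(unix_seconds, value)` sample format are hypothetical, and the right window length and alert slope depend on your optics and environment.

```python
def drift_per_hour(samples: list[tuple[float, float]]) -> float:
    """Least-squares slope of value against time, scaled to units per hour.

    samples: (unix_seconds, value) pairs, e.g. rx power in dBm or temperature in C.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    num = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("samples must span more than one timestamp")
    return num / den * 3600.0


# Rx power sampled every 30 minutes; a negative slope means the signal is weakening.
rx_samples = [(0.0, -3.0), (1800.0, -3.25), (3600.0, -3.5)]
print(drift_per_hour(rx_samples))
```

A slope is less noisy than comparing two individual readings, which matters when DOM values jitter by a few tenths of a dB between polls.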

Build-vs-buy: transceiver strategy for upgrade safety

For future-ready networks, you are buying a behavior model, not just an optical transmitter. OEM modules reduce compatibility friction, but third-party optics can cut costs if they are validated for your switch line cards and firmware. The decision should be grounded in measurable outcomes: link error rate, DOM read consistency, and field failure patterns.
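A validated optics list can be as simple as a lookup keyed by switch model and firmware. The sketch below is an assumption about how such a list might be stored; the switch model and firmware strings are hypothetical placeholders, and the part numbers are only the examples already used in this guide.

```python
# Hypothetical validated-optics matrix: (switch model, firmware) -> approved part numbers.
VALIDATED_OPTICS: dict[tuple[str, str], set[str]] = {
    ("switch-model-a", "fw-1.2"): {"SFP-10G-SR", "SFP-25G-SR"},
    ("switch-model-b", "fw-3.0"): {"SFP-25G-SR"},
}


def is_validated(switch_model: str, firmware: str, part_number: str) -> bool:
    """True only if this exact model, firmware, and part number combination was tested."""
    return part_number in VALIDATED_OPTICS.get((switch_model, firmware), set())


print(is_validated("switch-model-a", "fw-1.2", "SFP-25G-SR"))
print(is_validated("switch-model-a", "fw-2.0", "SFP-25G-SR"))
```

Keying on firmware as well as model means a firmware upgrade forces an explicit re-validation instead of silently inheriting the old approval.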

Practical decision checklist

- Distance and fiber type: confirm OM3 vs OM4, patch loss, and connector cleanliness targets before choosing SR vs LR.

- Switch compatibility: verify module part numbers against your specific switch platform and firmware release notes.

- DOM and monitoring: ensure your NMS supports the DOM fields your ops team needs (rx power, temp, bias).

- Operating temperature: account for hot aisle recirculation; check module temp range and switch airflow constraints.

- Vendor lock-in risk: plan a small “known-good” third-party pool, not a full rip-and-replace.

- Optical budget and margin: leave headroom for aging, cleaning, and future patching changes.
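The optical budget item in the checklist reduces to simple arithmetic: power budget minus expected loss. The sketch below assumes a basic loss model (fiber attenuation plus per-connector loss); the function name and all numeric inputs are illustrative placeholders, so substitute datasheet and measured values. Note that multimode reach is also limited by modal bandwidth, so a positive loss margin alone does not prove a run is within spec.

```python
def link_margin_db(
    tx_min_dbm: float,
    rx_sensitivity_dbm: float,
    length_m: float,
    fiber_db_per_km: float,
    connectors: int,
    db_per_connector: float,
) -> float:
    """Remaining margin in dB after fiber and connector losses are subtracted."""
    budget = tx_min_dbm - rx_sensitivity_dbm
    loss = length_m / 1000.0 * fiber_db_per_km + connectors * db_per_connector
    return budget - loss


# Placeholder inputs: 3 dB budget, 100 m of fiber, two mated connector pairs.
margin = link_margin_db(
    tx_min_dbm=-7.0,
    rx_sensitivity_dbm=-10.0,
    length_m=100.0,
    fiber_db_per_km=3.0,
    connectors=2,
    db_per_connector=0.5,
)
print(f"{margin:.2f} dB margin")
```

Running this per link during design makes it obvious how much each extra patch panel costs in headroom.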

Deployment scenario: leaf-spine AI fabric with safe optics rollouts

Consider a two-tier leaf-spine fabric with 48-port 25G ToR switches at the leaf, 96-port spine switches, and an AI workload running 8 GPUs per host. In week 1, you deploy 25G SR optics for east-west traffic, keeping every run inside the 25G SR reach envelope (roughly 70 m on OM3 and 100 m on OM4) and using DOM alerts to watch rx power. In week 3, you add a subset of 100G SR4 uplinks for the highest-bandwidth racks, ensuring the transceivers match the switch’s supported optics matrix. During the change window, you run a controlled traffic test: measure CRC errors and other interface counters alongside application-level throughput, while verifying the optics do not show abnormal temperature or bias drift.
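The controlled traffic test can be scored by snapshotting interface counters before and after the run and comparing the deltas. This is a sketch with hypothetical names; the pass threshold is a placeholder to set from your own acceptance criteria, and the code ignores counter wrap and resets for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IfCounters:
    """Snapshot of one interface's counters (field names are illustrative)."""
    rx_frames: int
    crc_errors: int


def error_ratio(before: IfCounters, after: IfCounters) -> float:
    """CRC errors per received frame over the test window."""
    frames = after.rx_frames - before.rx_frames
    errors = after.crc_errors - before.crc_errors
    if frames <= 0:
        raise ValueError("no traffic observed during the test window")
    return errors / frames


def link_passes(before: IfCounters, after: IfCounters, max_ratio: float = 1e-9) -> bool:
    """Pass if the observed error ratio stays at or below the chosen threshold."""
    return error_ratio(before, after) <= max_ratio


start = IfCounters(rx_frames=1_000_000, crc_errors=5)
clean = IfCounters(rx_frames=3_000_000, crc_errors=5)
noisy = IfCounters(rx_frames=3_000_000, crc_errors=7)
print(link_passes(start, clean), link_passes(start, noisy))
```

Using deltas rather than absolute counters keeps errors that predate the change window from failing the test.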

This approach keeps the fabric “upgrade-shaped”: you can expand bandwidth without rewriting your monitoring or replacing optics categories under pressure.

Common mistakes and troubleshooting that actually closes incidents

Optics failures often look like software problems until you inspect the physical and diagnostic truth. Here are frequent failure modes in future-ready networks and how to fix them fast.

- Mistake: “It linked at install time” with no DOM monitoring.

  Root cause: gradual laser bias drift or rx power degradation from patch panel rework.

  Solution: enable DOM polling and alert thresholds; schedule connector cleaning and verify rx power stays within vendor guidance.

- Mistake: Mixing transceiver vendors across the same switch port group.

  Root cause: subtle firmware interpretation differences for DOM fields or marginal vendor calibration.

  Solution: standardize by switch model and firmware; maintain a validated optics list and test each candidate in a staging rack.

- Mistake: Overlooking fiber plant loss and patch cord quality.

  Root cause: dirty connectors or higher-than-modeled attenuation reduces optical margin, causing intermittent errors under load.

  Solution: inspect with a fiber scope, clean with approved methods, replace suspect patch cords, and re-check link error counters during a traffic replay.

- Mistake: Ignoring temperature and airflow changes after cabling work.

  Root cause: hot spots increase module temperature and can shift transceiver performance.

  Solution: validate airflow paths; log module temperature from DOM during peak AI job windows.

FAQ

How do future-ready networks handle AI scaling without constant downtime?

Use optics categories aligned to your switch support matrix and validate them with DOM-based monitoring. Then roll out in batches during controlled traffic tests so you can detect drift early rather than after production load spikes.

Should we prefer OEM optics or third-party modules?

OEM reduces compatibility friction and support ambiguity. Third-party can be cost-effective, but only if you validate on your exact switch models and firmware, and you track DOM field behavior and error counters over time.

What fiber distance is safe for SR modules in AI data centers?

Safety depends on patch loss, connector quality, and the multimode class (OM3 vs OM4). Treat reach as “link margin,” not a single number; measure with the installed plant and leave headroom for growth.

What DOM metrics should we alert on first?

Start with rx power, laser bias current, and module temperature. Correlate these with interface error counters (CRC or symbol errors) so alerts reflect real risk, not just normal telemetry variation.
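Correlating DOM with error counters can start as a simple severity rule. The mapping below is an assumed policy for illustration, not a recommendation from any vendor; the function name and the "page" and "ticket" labels are hypothetical.

```python
def alert_severity(dom_out_of_range: bool, error_delta: int) -> str:
    """Combine a DOM threshold result with the error-counter change since the last poll."""
    if error_delta < 0:
        raise ValueError("error_delta must be non-negative (counter reset?)")
    if dom_out_of_range and error_delta > 0:
        return "page"    # telemetry and errors agree: likely a real optical problem
    if dom_out_of_range or error_delta > 0:
        return "ticket"  # one signal only: investigate during working hours
    return "ok"


print(alert_severity(True, 12), alert_severity(True, 0), alert_severity(False, 0))
```

Requiring both signals before paging is one way to keep normal telemetry variation from waking anyone up.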

Why do links sometimes flap only during AI job bursts?

Bursts push far more frames across a link, so a thin optical margin, an airflow hot spot, or a dirty connector that goes unnoticed at low load shows up as errors or flaps. Reproduce the issue with a traffic replay, then inspect DOM trends and fiber endpoints.

Building future-ready networks is a discipline of verification: validate optics against IEEE expectations, monitor DOM like an operational heartbeat, and plan upgrade paths that match your switch and fiber reality. Next step: review your existing optics inventory, map each transceiver type to your switch compatibility matrix, and confirm that each one is covered by DOM monitoring.

Author bio: I have deployed leaf-spine fabrics for AI clusters and debugged optics incidents using DOM telemetry, interface error counters, and fiber inspection workflows. I now help teams reduce tech debt in network hardware planning through pragmatic build-vs-buy decisions.