AI clusters fail in subtle ways: oversubscribed interconnects, optical budget surprises, and firmware mismatches that only appear under load. This article helps network and infrastructure engineers decide between 50G vs 100G transceivers for east-west traffic, using a real deployment case and practical selection criteria. You will also get troubleshooting pitfalls, expected power and cost impacts, and a short FAQ to resolve common procurement and compatibility questions.

Case study: when 50G looked cheaper, but 100G stabilized training

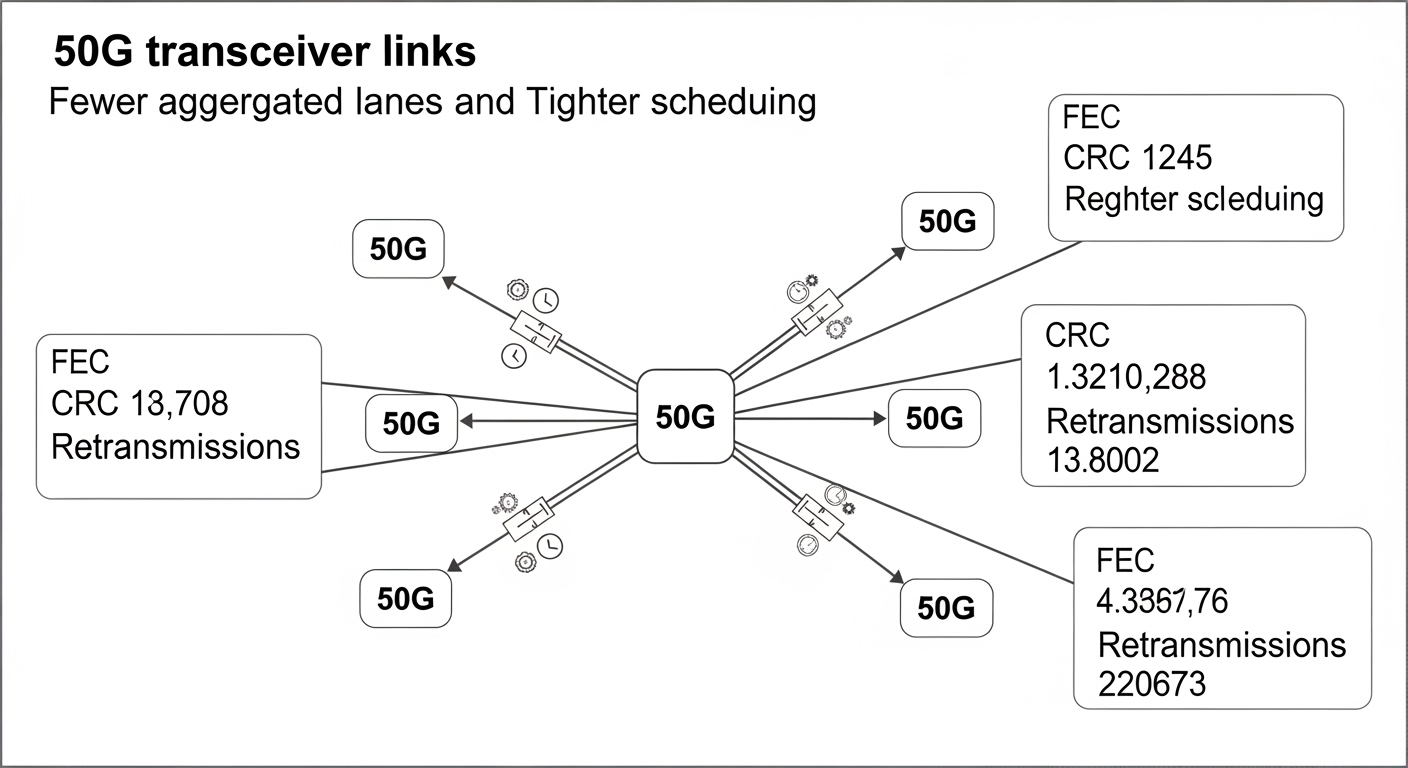

Problem / Challenge: A mid-size enterprise running an AI training pipeline needed low-latency east-west connectivity between GPU nodes and a leaf-spine fabric. The team targeted a 1:1 (non-oversubscribed) leaf-to-spine ratio for predictable bisection bandwidth, but they had to fit optics and switch ports into a limited rack budget. During early trials, 50G links met throughput goals in quiet periods, yet they showed intermittent retransmissions during bursty all-reduce phases.

Environment Specs: The fabric used 10G management, with a dedicated 100G-capable transport plane. Leaf switches had 48x 100G QSFP56 ports and were configured for 4-lane optics where supported by the vendor. GPU nodes connected to leaves via dual-homed links, with typical traffic patterns dominated by collective communication. Cable plant was primarily OM4 multimode, with shortest runs around 30 m and longer runs approaching 120 m.

Chosen Solution & Why: The team deployed a hybrid approach: 50G optics for short, high-density runs where port utilization mattered, and 100G optics for longer or more contended paths. 10G SFP+ optics such as the Cisco SFP-10G-SR or the FS.com SFP-10GSR-85 family are 10G parts and were not applicable to the 50G/100G transport plane. For those links, the team used QSFP56-class, vendor-supported 100G SR4 and 50G SR2/SR4 variants, chosen per switch optics mode so that they matched the switch lane mapping and DOM requirements. In practice, the critical factor was lane compatibility and vendor-validated firmware behavior rather than raw spec sheet reach.

Implementation steps focused on optics verification: they checked DOM support, ensured transceiver firmware passed switch validation, and staged rollouts by topology tier to isolate failures. They also monitored optical power levels and error counters during controlled training phases (warm-up, steady-state all-reduce, and a synthetic burst using a calibrated data loader).

Measured Results: After replacing the most contended long paths with 100G optics, the fabric showed a measurable reduction in retransmissions and smoother step-time variance. In one run, average training step time variance dropped by roughly 18% and link-level retransmissions decreased from a bursty pattern to near-baseline levels. Power draw improved slightly on the fabric side because the team reduced link flapping and avoided repeated error recovery events; the total transceiver power difference was smaller than expected once the system stabilized.

Lessons Learned: In AI workloads, “enough bandwidth” is not the same as “consistent link behavior.” 50G vs 100G should be evaluated under realistic collective traffic patterns, not only peak throughput. The best outcome came from aligning optics with switch port modes, DOM telemetry expectations, and actual oversubscription geometry.

What 50G and 100G transceivers change at the physical layer

At a high level, the optical layer differs in line rate, lane mapping, and how the switch aggregates lanes into a logical interface. Many modern AI fabrics prefer 100G because it aligns with common switch port architectures and reduces the number of oversubscription bottlenecks. However, 50G can be attractive when you must maximize density on the same chassis or when your switch supports 50G breakout modes that preserve QoS scheduling behavior.
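
As a rough illustration of the oversubscription point, the ratio for a single leaf is its server-facing capacity divided by its spine-facing capacity. The sketch below is a minimal example; the port counts are hypothetical and not taken from the case study.

```python
def oversubscription_ratio(down_ports, down_gbps, up_ports, up_gbps):
    """Downlink capacity divided by uplink capacity for one leaf.

    A result of 1.0 means the leaf is non-blocking toward the spine.
    """
    uplink_capacity = up_ports * up_gbps
    if uplink_capacity <= 0:
        raise ValueError("uplink capacity must be positive")
    return (down_ports * down_gbps) / uplink_capacity


# Hypothetical leaf: 32 server-facing ports and 16 spine-facing ports.
print(oversubscription_ratio(32, 100, 16, 100))  # 2.0 (2:1 oversubscribed)
print(oversubscription_ratio(32, 50, 16, 100))   # 1.0 (non-blocking)
```

The same port layout moves from 2:1 to 1:1 when the server-facing links drop to 50G, which is why the choice of link speed has to be weighed together with the uplink design.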

Key technical specs you must compare

Engineers typically compare wavelength band, reach on OM4/OS2, connector type, and transceiver power and temperature range. For AI clusters, you also need to confirm whether the module supports digital optical monitoring (DOM) and whether the switch firmware reads it reliably under load.

| Spec | 50G-class (common SR modes) | 100G-class (SR4 typical) |
|---|---|---|
| Typical data rate | 50 Gbps | 100 Gbps |
| Wavelength band | 850 nm (multimode SR) | 850 nm (multimode SR4) |
| Reach on OM4 | Often around 70 m to 100 m depending on module | 100 m for standards-based SR4; longer only with extended-reach modules (verify the datasheet) |
| Connector | LC duplex (typical for single-lane SR) | MPO-12 for SR4; LC duplex only on duplex variants such as BiDi or SWDM |
| DOM support | Commonly supported (verify vendor) | Commonly supported (verify switch validation) |
| Operating temperature | Commercial (0 °C to 70 °C) or extended (-5 °C to 85 °C); verify per module | Commercial (0 °C to 70 °C) or extended (-5 °C to 85 °C); verify per module |
| Power (typical) | Often lower per port, but depends on lane count and switch mode | Higher per port, but may reduce retransmissions and retries |

Authority notes: Optical reach and compliance depend on the exact module and switch lane mapping. For baseline electrical/optical interoperability, review the relevant IEEE 802.3 PHY clauses, the vendor transceiver datasheets, and the diagnostics documentation. See [IEEE 802.3](https://standards.ieee.org/standard/802_3) and the DOM compliance notes in your switch OEM's guidance.

Pro Tip: If your switch supports lane re-mapping, treat “50G works in the lab” as a weak signal. In the field, the most frequent 50G failures come from lane aggregation edge cases under congestion, not from optical reach. Validate with DOM telemetry and error counters during an all-reduce-like burst before you scale out.

50G vs 100G for AI workloads: how to decide in practice

AI east-west traffic is bursty and synchronization-heavy. That means link behavior under transient congestion matters as much as steady-state throughput. Use the checklist below to pick 50G vs 100G for your specific topology and operating constraints.

Decision checklist (ordered)

- Distance and optical budget: Compare your actual fiber lengths and worst-case loss. Confirm OM4/OS2 reach with margin, not just nominal reach.

- Switch compatibility and lane mapping: Verify the transceiver is validated for your exact switch model and port mode (including breakout behavior).

- DOM and telemetry expectations: Ensure DOM fields the switch queries are supported; missing DOM can trigger fallback behaviors or monitoring blind spots.

- Operating temperature: Check module temperature range against your rack airflow profile; AI racks often run hotter near exhaust zones.

- Budget and TCO: Consider not only module purchase price but also expected failure rates and operational overhead (spares, RMA cycles, troubleshooting time).

- Vendor lock-in risk: Evaluate third-party optics support and whether firmware updates affect compatibility.
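
The first checklist item can be sketched as a simple loss-budget calculation. This is a minimal example under stated assumptions: the fiber attenuation, per-connector loss, splice loss, and the 1.9 dB power budget are placeholder values, so substitute the figures from your module datasheet and cable plant records. Passing this check does not replace the datasheet reach limit, because multimode reach at these rates is also constrained by modal bandwidth and not only by loss.

```python
from dataclasses import dataclass


@dataclass
class FiberLink:
    length_m: float       # measured run length, not the nominal figure
    connector_pairs: int  # mated connector pairs along the path
    splices: int = 0


def link_loss_db(link, fiber_db_per_km=3.0, connector_db=0.5, splice_db=0.1):
    """Estimate worst-case passive loss; every coefficient is an assumption."""
    fiber_loss = link.length_m / 1000.0 * fiber_db_per_km
    return fiber_loss + link.connector_pairs * connector_db + link.splices * splice_db


def margin_db(link, power_budget_db):
    """Remaining margin after passive loss; negative means the link is over budget."""
    return power_budget_db - link_loss_db(link)


BUDGET_DB = 1.9  # placeholder channel budget; read the real value from the datasheet

short_run = FiberLink(length_m=30, connector_pairs=2)
long_run = FiberLink(length_m=120, connector_pairs=4)

for name, link in (("short", short_run), ("long", long_run)):
    print(f"{name}: loss={link_loss_db(link):.2f} dB, margin={margin_db(link, BUDGET_DB):.2f} dB")
```

With these placeholder numbers the 30 m run keeps positive margin while the 120 m run with four connector pairs goes negative, which is the kind of result that should trigger a closer look before ordering optics.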

When 50G tends to win

50G can be a fit when you need higher port density per chassis, when your traffic is dominated by shorter flows that do not saturate links, and when your switch vendor explicitly supports stable 50G port modes. It can also reduce optics count if your switch supports a consistent mapping that preserves QoS scheduling without unexpected remapping.

When 100G tends to win

100G tends to win when you have longer runs, higher contention, or you observe retransmission and step-time jitter during synchronized training. Even if 100G optics cost more, they can lower the operational cost by reducing error recovery events and simplifying capacity planning.

Implementation steps and measured validation workflow

To avoid “spec sheet surprises,” validate transceivers with a repeatable workflow that mirrors your AI traffic. The goal is to detect optical marginality and lane mapping issues early.

Recommended steps

- Pre-stage optics: Verify DOM readings in the switch CLI and confirm that the module reports expected vendor identifiers and power levels.

- Run a controlled burst test: Use a synthetic traffic pattern that triggers congestion and collective-like bursts. Monitor interface counters and optical diagnostics continuously.

- Check error counters: Track CRC/FCS errors, RX LOS events, and any FEC-related statistics if applicable to your PHY.

- Thermal soak: Run the test long enough to observe temperature drift; AI workloads can sustain high thermal loads for hours.

- Roll out tier by tier: Start with a single leaf pair, then expand, correlating changes with step-time and retry behavior.
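
The error-counter step above can be sketched as follows. Interface counters are usually cumulative, so the useful signal is the increment per sampling interval, grouped by test phase. The sample values and phase labels are illustrative only, and how you collect the counters (CLI scrape, SNMP, streaming telemetry) is left open.

```python
from collections import defaultdict


def counter_deltas(samples):
    """Convert cumulative counter readings into per-interval increments.

    A negative step (counter reset or wrap) is clamped to 0 rather than guessed at.
    """
    return [max(later - earlier, 0) for earlier, later in zip(samples, samples[1:])]


def errors_by_phase(samples, phases):
    """Sum increments per phase; phases[i] labels the interval samples[i] -> samples[i + 1]."""
    if len(phases) != len(samples) - 1:
        raise ValueError("need exactly one phase label per interval")
    totals = defaultdict(int)
    for phase, delta in zip(phases, counter_deltas(samples)):
        totals[phase] += delta
    return dict(totals)


# Illustrative cumulative FCS error readings, one per sampling tick.
fcs_errors = [100, 100, 102, 102, 157, 158, 158]
phases = ["warm-up", "warm-up", "steady-state", "burst", "burst", "steady-state"]

print(errors_by_phase(fcs_errors, phases))
# {'warm-up': 2, 'steady-state': 0, 'burst': 56}
```

Running the same summary for a 50G and a 100G module on the same port and phase schedule gives a like-for-like comparison instead of a single end-of-test total.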

In the case study, the decisive factor was that long-path 50G links showed intermittent error bursts during synchronized phases, while 100G links maintained a steadier error profile. That aligned with the observed reduction in training step variance after the swap.

Common mistakes and troubleshooting tips for 50G vs 100G

Below are frequent failure modes seen during AI fabric rollouts, along with root causes and fixes. These are the kinds of issues that do not show up in brief link bring-up tests.

“It links up, so it is fine” masking lane mapping problems

Root cause: The transceiver may negotiate link parameters successfully, but lane aggregation under congestion can expose edge-case firmware behavior. This can present as retransmissions rather than link-down events.

Solution: Validate with a congestion-like test and compare error counters between 50G and 100G optics on the same port mode. Confirm the switch supports the exact transceiver model for that mode.

Optical reach mismatch on OM4 due to dirty connectors

Root cause: OM4 reach margins assume clean connectors and correct polarity. Micro-scratches or contamination can reduce received power below thresholds, causing intermittent RX errors.

Solution: Inspect and clean LC/MPO interfaces using proper lint-free methods and verify with an optical power meter. Replace questionable patch cords before swapping optics.

DOM telemetry gaps leading to monitoring blind spots and delayed detection

Root cause: Some third-party modules report DOM fields differently or incompletely. The switch may still pass traffic but your monitoring system misses early warnings.

Solution: Confirm DOM fields required by your monitoring stack and switch are populated. During validation, log DOM values over time and alert on drift in optical power.
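
A minimal sketch of that check is shown below, assuming DOM readings have already been exported as dictionaries. The field names, the three-sample baseline, and the 1.0 dB drift threshold are all hypothetical choices, not values defined by any switch vendor.

```python
from statistics import median

# Field names are hypothetical; map them to whatever your collector exports.
REQUIRED_FIELDS = ("rx_power_dbm", "tx_power_dbm", "temperature_c")


def missing_fields(reading):
    """Return required DOM fields that are absent or empty in one reading."""
    return [field for field in REQUIRED_FIELDS if reading.get(field) is None]


def rx_drift_alerts(readings, baseline_n=3, max_drift_db=1.0):
    """Flag samples whose RX power moved more than max_drift_db from the baseline.

    The baseline is the median of the first baseline_n samples.
    Returns (sample_index, drift_db) pairs.
    """
    values = [reading["rx_power_dbm"] for reading in readings]
    if len(values) <= baseline_n:
        return []
    baseline = median(values[:baseline_n])
    alerts = []
    for index, value in enumerate(values[baseline_n:], start=baseline_n):
        drift = round(value - baseline, 2)
        if abs(drift) > max_drift_db:
            alerts.append((index, drift))
    return alerts


# Illustrative readings only, not data from the case study.
log = [
    {"rx_power_dbm": power, "tx_power_dbm": -1.0, "temperature_c": 48.0}
    for power in (-2.1, -2.0, -2.2, -2.3, -3.4, -3.6)
]

print(rx_drift_alerts(log))  # [(4, -1.3), (5, -1.5)]
print(missing_fields({"rx_power_dbm": -2.1, "temperature_c": 48.0}))  # ['tx_power_dbm']
```

Using a median baseline keeps one noisy early sample from shifting the reference, and the missing-field check catches modules that pass traffic but leave the monitoring stack blind.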

Thermal overshoot from airflow hotspots

Root cause: AI racks often have uneven airflow. Modules rated for commercial temperature can fail gradually under sustained hot exhaust conditions.

Solution: Use extended temperature optics where needed and instrument airflow. Correlate error counters with temperature telemetry during long runs.
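
One simple way to do that correlation is a Pearson coefficient between module temperature and per-interval error increments. The sketch below uses made-up sample values; a strong positive coefficient is only a hint that heat is involved and should be followed by airflow measurements, not treated as proof.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant series carries no correlation signal
    return cov / sqrt(var_x * var_y)


# Illustrative samples: module temperature (deg C) and error increments per interval.
temperature_c = [52, 55, 61, 66, 70, 71]
error_deltas = [0, 0, 1, 3, 9, 12]

r = pearson(temperature_c, error_deltas)
print(f"temperature vs. errors: r = {r:.2f}")
```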

Cost and ROI: budgeting beyond the purchase price

In typical procurement, 100G optics can cost more per module than 50G, and pricing varies widely by vendor and reach class. A realistic planning range for budgetary comparison is often on the order of tens to low hundreds of currency units per module for enterprise-grade SR optics, but exact costs depend on volume, warranty terms, and compatibility guarantees.

TCO factors include transceiver failure rates, expected RMA turnaround, labor hours spent on troubleshooting, and the cost of training interruptions. In the case study, the hybrid approach reduced operational churn because 100G stabilized the most contended long paths, lowering the frequency of error-driven interventions. That is how “more expensive optics” can still produce a net ROI through reduced downtime and fewer support escalations.
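
The TCO argument can be made concrete with a small first-year cost model. This is a sketch with placeholder inputs: the prices, failure rate, incident counts, labor rate, and interruption cost below are invented for illustration and are not figures from the case study.

```python
def first_year_cost(module_price, modules, annual_failure_rate,
                    incidents, hours_per_incident, labor_rate,
                    interruption_cost):
    """Purchase + expected replacements + operational cost of error-driven incidents."""
    purchase = module_price * modules
    replacements = purchase * annual_failure_rate
    operations = incidents * (hours_per_incident * labor_rate + interruption_cost)
    return purchase + replacements + operations


# Placeholder scenario: cheaper 50G modules with more troubleshooting incidents,
# versus pricier 100G modules with fewer incidents.
cost_50g = first_year_cost(80, 100, 0.02, incidents=12, hours_per_incident=3,
                           labor_rate=100, interruption_cost=500)
cost_100g = first_year_cost(150, 100, 0.02, incidents=2, hours_per_incident=3,
                            labor_rate=100, interruption_cost=500)

print(f"50G:  {cost_50g:.0f}")   # 17760
print(f"100G: {cost_100g:.0f}")  # 16900
```

The outcome depends entirely on the incident assumptions, so the useful exercise is to plug in your own measured incident rate from the validation phase rather than to reuse these numbers.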

FAQ: 50G vs 100G transceivers for AI networks

Which is better for AI east-west: 50G or 100G?

The better choice depends on congestion and distance. If you see retransmissions and step-time jitter during synchronized phases, 100G often stabilizes behavior, even when 50G appears adequate in peak throughput tests.

Can I mix 50G and 100G optics in the same fabric?

Yes, but only if your switch supports the port modes and lane mapping consistently. Validate with DOM telemetry and error counters; mixing without validation can create asymmetric behavior under