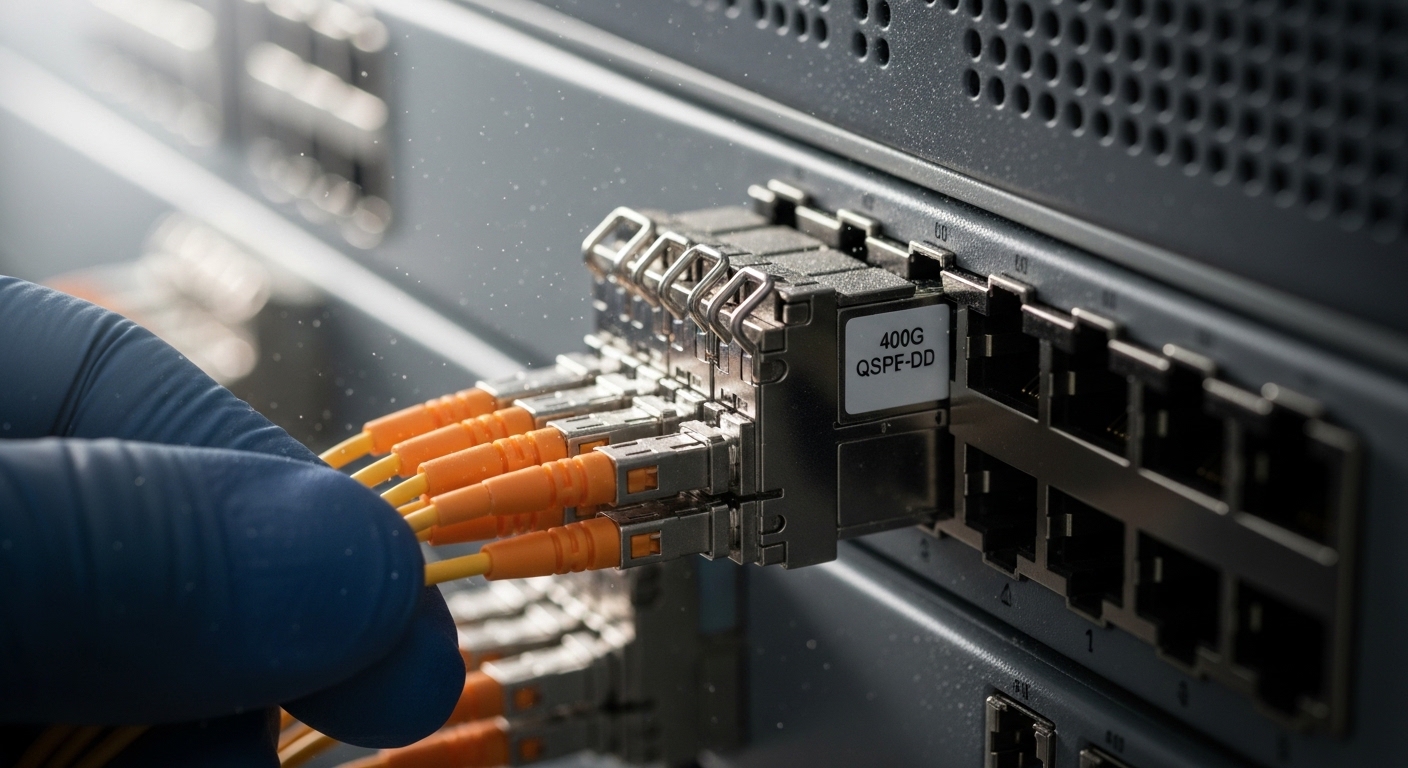

If your leaf-spine fabric is hitting congestion at peak hours, upgrading optics can beat chasing more compute. This reference helps operations and procurement teams evaluate data center transceivers for 400G ports using costs that actually show up in change windows, spares, and failure handling. You will get a selection checklist, a spec comparison table, and the common pitfalls field engineers see when modules move between switch generations.

Where 400G data center transceivers pay off in real deployments

In a two-tier leaf-spine topology, the usual KPIs are per-uplink utilization and oversubscription behavior. For example, in a cluster with 48-port ToR switches and 12 uplinks at 400G per leaf, a single optics refresh raises effective throughput per port, so fewer uplinks need to be active to carry the same peak load. That can delay adding new line cards or cabling runs, which often drives the biggest part of the ROI.
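To make the oversubscription KPI concrete, here is a minimal sketch of the per-leaf ratio. The 48 ports and 12 uplinks at 400G come from the example above; the 100G downlink speed is an assumption added for illustration, so substitute your own port speeds.

```python
def oversubscription_ratio(downlink_ports, downlink_gbps, uplink_ports, uplink_gbps):
    """Return total downlink capacity divided by total uplink capacity for one leaf."""
    if uplink_ports <= 0 or uplink_gbps <= 0:
        raise ValueError("uplink capacity must be positive")
    return (downlink_ports * downlink_gbps) / (uplink_ports * uplink_gbps)


# Assumed 100G server-facing ports; only the port counts and 400G uplinks are from the text.
before = oversubscription_ratio(48, 100, 12, 100)  # same leaf with 100G uplinks
after = oversubscription_ratio(48, 100, 12, 400)   # same leaf with 400G uplinks
print(f"100G uplinks: {before:.2f}:1, 400G uplinks: {after:.2f}:1")
```

A ratio at or below 1:1 means the uplinks can carry every downlink at line rate, which is the headroom that lets a team defer new line cards.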

Practically, teams justify 400G optics when they can keep the same fiber plant and avoid additional breakout transceivers. If you already have OM4 or OS2 infrastructure, selecting the right wavelength and reach (SR4/DR4/FR4 style) can minimize re-cabling. The cost levers usually include module price per port, power draw per transceiver, and the operational cost of optics swaps during maintenance windows.

For standards and compatibility baselines, start with IEEE Ethernet PHY expectations and vendor transceiver guidance. 400G Ethernet implementations map onto defined lanes and coding, while the physical-layer optics must match the switch vendor’s supported optics list. Reference points include IEEE 802.3 (the 400G Ethernet baseline) and vendor datasheets for specific transceivers and platforms.

Specs that determine reach, power, and compatibility

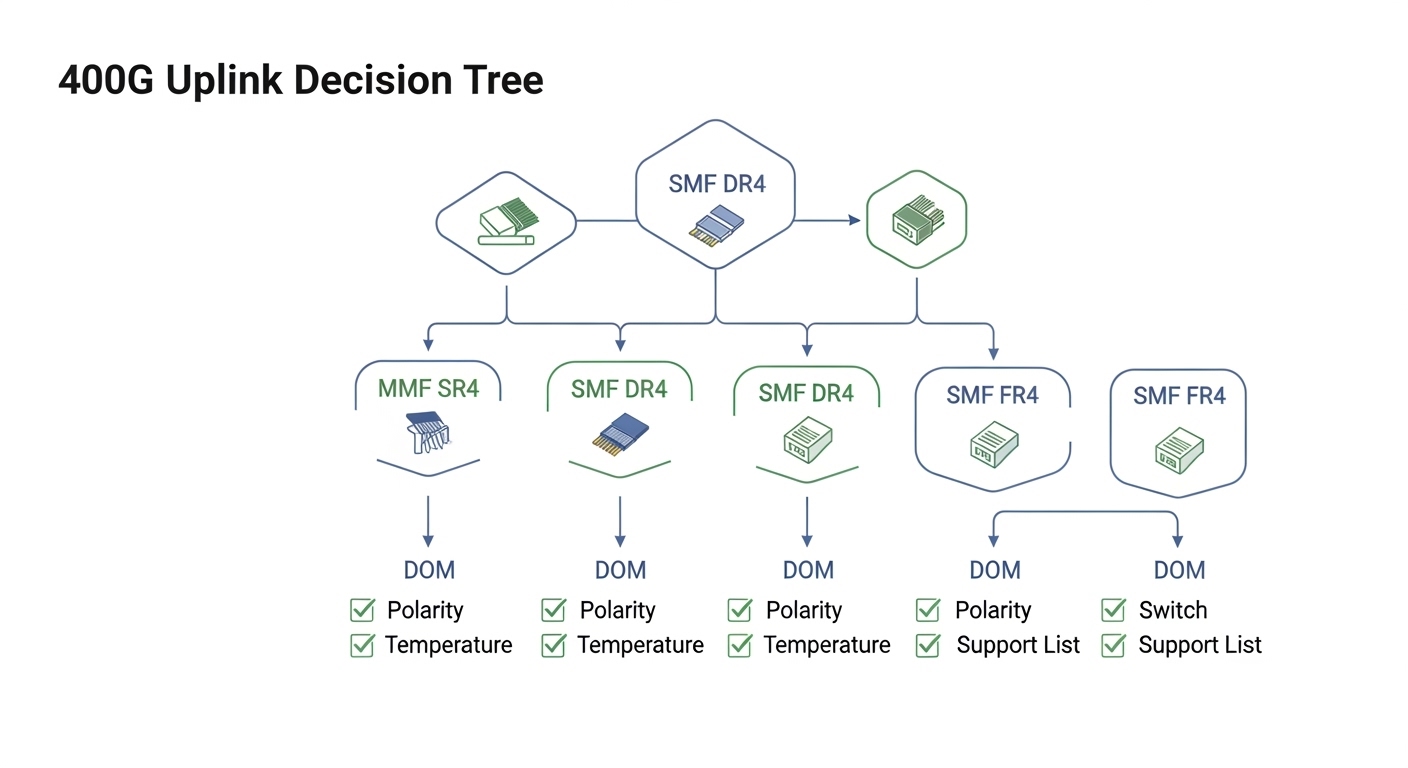

400G optics commonly fall into short-reach multimode variants (often SR4 class) and longer-reach single-mode variants (DR4/FR4 class). Your choice is less about “maximum distance” and more about link budget margin, transceiver temperature limits, and whether the switch expects a particular connector and lane mapping. A field engineer also checks optical diagnostics support (DOM) because it changes how quickly you detect aging or contamination.

The table below compares representative 400G data center transceivers by interface characteristics. Exact availability depends on switch vendor optic support lists and region, but these categories reflect common procurement choices.

| Type (common naming) | Typical wavelength | Reach class | Connector | Data rate | DOM / diagnostics | Operating temperature |
|---|---|---|---|---|---|---|
| 400G SR4 (MMF) | ~850 nm | Up to ~100 m on OM4 | MPO-12 or MPO-16 (varies by module) | 400G Ethernet | Usually supported | Commonly 0 to 70 °C (commercial) or -5 to 70 °C (extended) |
| 400G DR4 (SMF) | ~1310 nm | Up to ~500 m | MPO-12 (parallel single-mode, typically APC) | 400G Ethernet | Usually supported | Commonly 0 to 70 °C |
| 400G FR4 (SMF) | ~1310 nm band (four CWDM lanes, roughly 1271 to 1331 nm) | Up to ~2 km | LC duplex | 400G Ethernet | Usually supported | Commonly 0 to 70 °C |

What to verify before purchasing

- Switch compatibility: confirm the exact transceiver part number is supported by the switch model and firmware level.

- Connector and fiber type: MPO polarity and MMF grade (OM3 vs OM4) must match your installed plant.

- Reach margin: measure end-to-end loss and confirm the vendor’s link budget supports it with aging headroom.

- DOM and alarm thresholds: ensure the switch can read diagnostics and map them to actionable alerts.

- Temperature and airflow: validate the module operating range against actual cage airflow and inlet temperatures.
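The reach-margin check in the list above reduces to simple arithmetic. The sketch below assumes a hypothetical 3.0 dB channel budget and 1.0 dB of aging headroom; both are placeholders, so take the real budget from the vendor datasheet for the exact module.

```python
def link_margin_db(budget_db, measured_loss_db, aging_headroom_db=1.0):
    """Remaining margin after subtracting measured loss and reserved aging headroom."""
    return budget_db - measured_loss_db - aging_headroom_db


def link_passes(budget_db, measured_loss_db, aging_headroom_db=1.0):
    """True when the link still has non-negative margin."""
    return link_margin_db(budget_db, measured_loss_db, aging_headroom_db) >= 0


# Placeholder numbers: 3.0 dB budget, 1.4 dB measured end-to-end loss.
print(round(link_margin_db(3.0, 1.4), 2), link_passes(3.0, 1.4))
```

Recording the margin per link, rather than only pass or fail, makes it easier to spot which links will fail first as connectors age.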

Pro Tip: In many outages, “wrong optics” is not the root cause. The more common failure mode is an MPO polarity mismatch or a swapped transmit/receive fiber pair after patch panel rework. The port can still show link up, and DOM readings can look normal, while BER and error counters climb, so always check error statistics and not only link state.
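One way to act on this tip is to compare two counter snapshots instead of trusting link state alone. This is a rough sketch: `PortSample` and its fields are hypothetical names, and how you collect the counters (SNMP, gNMI, CLI scraping) depends on your platform.

```python
from dataclasses import dataclass


@dataclass
class PortSample:
    """Hypothetical snapshot of one port; field names are illustrative only."""
    link_up: bool
    rx_errors: int  # cumulative error counter as read from the switch


def errors_climbing(previous, current, max_new_errors=0):
    """True when the link stayed up but the error counter grew past the allowed delta.

    A counter that went backwards (reset or wrap) yields a negative delta and is
    not flagged; handle resets separately if your platform clears counters.
    """
    if not (previous.link_up and current.link_up):
        return False
    return (current.rx_errors - previous.rx_errors) > max_new_errors


earlier = PortSample(link_up=True, rx_errors=120)
later = PortSample(link_up=True, rx_errors=4500)
print(errors_climbing(earlier, later))
```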

Cost and ROI: how teams should price 400G optics decisions

To maximize ROI, treat optics as an operational system: purchase cost plus the cost of downtime, spares, and troubleshooting time. Typical street pricing varies by vendor and volume, but many teams see 400G transceivers priced roughly in the range of several hundred to over a thousand currency units per module depending on reach class (SR4 generally cheaper than DR4/FR4) and whether you buy OEM vs third-party. Use your own quotes, but model TCO over a 3 to 5 year horizon.

Include power in the model. If your platform supports power telemetry, compare average module draw under normal traffic. Even a small delta per port can matter at scale when you have hundreds or thousands of optics installed across a fabric. Also add risk costs: OEM optics may carry lower compatibility risk, while third-party options can reduce upfront spend but may require more validation cycles and may affect RMA processes.

Finally, account for spares strategy. In a leaf-spine rollout with hot-swappable optics, you might keep 1 to 2 spares per rack group, but the exact number depends on your failure history and how quickly you can source replacements. ROI improves when spares reduce mean time to repair (MTTR) and reduce maintenance window overruns.
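The cost levers in this section can be combined into a rough TCO model. The sketch below is illustrative only: every input is a placeholder to replace with your own quotes and telemetry, and it deliberately ignores cooling overhead and discounting.

```python
HOURS_PER_YEAR = 8760


def optics_tco(unit_price, module_count, spare_count, watts_per_module,
               price_per_kwh, years,
               outage_hours_per_year=0.0, cost_per_outage_hour=0.0):
    """Return a cost breakdown: purchase (including spares), power, downtime, total."""
    purchase = unit_price * (module_count + spare_count)
    # Spares sit on the shelf, so only installed modules draw power.
    energy_kwh = watts_per_module * module_count * HOURS_PER_YEAR * years / 1000
    power = energy_kwh * price_per_kwh
    downtime = outage_hours_per_year * cost_per_outage_hour * years
    return {
        "purchase": purchase,
        "power": power,
        "downtime": downtime,
        "total": purchase + power + downtime,
    }


# Placeholder inputs for a 4-year horizon; none of these figures come from a vendor.
oem = optics_tco(1000, 200, 10, 10, 0.12, 4, outage_hours_per_year=1, cost_per_outage_hour=5000)
third_party = optics_tco(600, 200, 10, 10, 0.12, 4, outage_hours_per_year=3, cost_per_outage_hour=5000)
print(oem["total"], third_party["total"])
```

Running the same function for an OEM quote and a third-party quote, with different downtime assumptions, shows how much validation risk a lower unit price can absorb.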

Decision checklist for selecting 400G data center transceivers

Use this ordered list during procurement and pre-install testing. It is designed to prevent the most expensive compatibility surprises.

- Distance first: map each link to the required reach class (MMF SR4 vs SMF DR4/FR4) based on measured fiber loss.

- Switch compatibility: confirm the switch model, line card, and firmware version support the exact optic SKU.

- Connector and polarity: verify MPO type and polarity requirements; document patch panel mapping before any swap.

- DOM support: ensure the switch reads diagnostics and that alarms route into your monitoring stack.

- Operating temperature and airflow: validate ambient and inlet temps in the actual rack aisle, not just the lab spec.

- Vendor lock-in risk: if you choose OEM-only optics, quantify the replacement premium for the next refresh cycle.

- RMA and warranty terms: compare turnaround time, shipping policies, and whether the vendor tests return optics with optics-specific procedures.
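The "distance first" step can be sketched as a lookup against the reach figures in the comparison table. This is a first-pass filter under stated assumptions: "MMF" here means OM4, the limits are the table's nominal values, and a real decision still needs measured loss, connector type, and the switch's supported optics list.

```python
# (reach class, fiber type, nominal max length in meters), taken from the table above.
REACH_LIMITS_M = [
    ("SR4", "MMF", 100),   # assumes OM4; OM3 reach is shorter
    ("DR4", "SMF", 500),
    ("FR4", "SMF", 2000),
]


def candidate_reach_class(fiber_type, length_m):
    """Return the shortest-reach class that covers the link, or None if none fits."""
    for name, fiber, max_m in REACH_LIMITS_M:
        if fiber == fiber_type and length_m <= max_m:
            return name
    return None


# Hypothetical link inventory: (link id, fiber type, length in meters).
links = [("leaf1-spine1", "MMF", 80), ("leaf9-spine2", "SMF", 350), ("pod-a-pod-b", "SMF", 1500)]
for link_id, fiber, length in links:
    print(link_id, candidate_reach_class(fiber, length))
```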

Common mistakes and troubleshooting tips in the field

Even experienced teams stumble during optics refreshes. Below are failure modes that repeatedly show up during migrations, cabling changes, and vendor swaps.

- Mistake: MPO polarity mismatch after patch changes

  Root cause: transmit/receive fiber mapping does not match the transceiver’s expected polarity.

  Solution: verify polarity using a polarity tester or documented patch map; confirm with bidirectional link tests and check error counters, not only link state.

- Mistake: Assuming “OM4 equals 100 m” without measuring loss

  Root cause: patch cords, connectors, and splices can push total loss beyond the link budget, especially after rework.

  Solution: measure with an OTDR or calibrated loss tester; apply vendor link budget margin and consider aging headroom.

- Mistake: Ignoring temperature and airflow constraints

  Root cause: modules can run hotter in front-to-back airflow reversals or high recirculation racks, leading to degraded optical power and higher BER.

  Solution: check inlet temperatures, ensure baffles are intact, and validate module diagnostics for optical power drift.

- Mistake: Third-party optics without full switch validation

  Root cause: switch platforms may accept the optics but apply different thresholds, causing intermittent errors under specific traffic patterns.

  Solution: test in a representative port group with realistic traffic load; confirm DOM alarm behavior and error counters during sustained runs.
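Several of the fixes above rely on spotting optical power drift in DOM data. A minimal sketch, assuming you have already collected per-lane Rx power in dBm at install time and again later; the 1.5 dB threshold is a placeholder, not a vendor figure.

```python
def flag_drifting_lanes(baseline_dbm, current_dbm, max_drop_db=1.5):
    """Return indexes of lanes whose Rx power fell by more than max_drop_db."""
    if len(baseline_dbm) != len(current_dbm):
        raise ValueError("baseline and current must cover the same lanes")
    return [
        lane
        for lane, (base, now) in enumerate(zip(baseline_dbm, current_dbm))
        if (base - now) > max_drop_db  # positive difference means power dropped
    ]


# Illustrative readings for a four-lane module.
baseline = [-2.0, -2.1, -1.9, -2.0]
current = [-2.2, -4.0, -2.0, -2.1]
print(flag_drifting_lanes(baseline, current))
```

A drop on a single lane often points at contamination or a damaged fiber in that position, while a uniform drop across lanes is more consistent with temperature or a shared connector.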

FAQ about data center transceivers for 400G ROI

Q1: Which 400G data center transceivers usually deliver the best ROI?

In most modern fabrics, the best ROI comes from selecting SR4 for short runs where the existing MMF plant supports it, because it typically costs less than single-mode DR4/FR4 optics and avoids cabling work. If your measured loss or distance exceeds the SR4 class and single-mode fiber is already in place, DR4/FR4 is usually cheaper than re-cabling.

Q2: Do I need DOM support for operations?

Yes, especially in large deployments. DOM enables proactive monitoring of optical power and error trends, which reduces MTTR when a module begins to degrade or when fiber contamination increases.

Q3: Can I mix OEM and third-party optics in the same switch?

Sometimes, but it depends on the switch vendor’s supported optics list and how the firmware handles diagnostics thresholds. Plan a validation run: confirm link stability, consistent BER/error counters, and correct DOM alarm reporting.

Q4: What measurements should I take before ordering?

Measure end-to-end loss and connector quality for each link, and document polarity and patch mapping. Then compare those numbers to the vendor link budget for the specific transceiver type you plan to install.

Q5: What is the fastest troubleshooting workflow when a 400G link won’t come up?

Check switch port status and DOM readings first, then confirm correct fiber type and polarity, and finally validate patch panel mapping. If the link is up but errors spike, focus on polarity and optical loss rather than assuming a defective module.

Q6: How should I plan spares for 400G optics?

Start with a baseline spare ratio for your environment and adjust based on your failure history and vendor lead times. The ROI gain usually comes from reducing downtime during maintenance windows, not from saving a few modules on day one.
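One way to turn failure history and lead time into a baseline spare count is a simple Poisson estimate. This is a sketch under loud assumptions: failures are treated as independent, and the annual failure rate and lead time shown are hypothetical inputs, not measured values.

```python
import math


def spares_needed(installed, annual_failure_rate, lead_time_days, confidence=0.95):
    """Smallest spare count that covers failures during one resupply lead time
    with the given probability, assuming Poisson-distributed failures."""
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    expected = installed * annual_failure_rate * lead_time_days / 365
    k = 0
    term = math.exp(-expected)  # P(exactly 0 failures)
    cdf = term
    while cdf < confidence:
        k += 1
        term *= expected / k    # P(exactly k failures) from P(k - 1)
        cdf += term
    return k


# Hypothetical fleet: 1000 modules, 2% annual failure rate, 30-day lead time.
print(spares_needed(1000, 0.02, 30))
```

Re-run the estimate whenever lead times change, since a longer resupply window raises the count even when the failure rate stays flat.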

For a practical next step, align your transceiver plan with your cabling standards and monitoring approach using fiber optic cabling best practices. That pairing is where 400G ROI typically becomes measurable within one maintenance cycle.

Author bio: I have worked on optics bring-up and fault isolation in high-density data centers, including DOM-driven alert tuning and patch panel polarity recovery. I write from field logs and vendor datasheets to help teams choose data center transceivers that stay stable under real traffic.