A 400G rollout can stall when engineering teams discover that “it should work” in a datasheet does not guarantee interoperability, reach, or diagnostics in the field. This article follows one real deployment where we moved from 100G to 400G optics across a leaf-spine fabric, then validated link stability, power draw, and error performance against operational targets. You will get the standards context (IEEE 802.3), a practical selection checklist, and troubleshooting patterns that show up during maintenance windows.

Problem and challenge: when 400G optics fail after cutover

In our leaf-spine data center fabric, the initial cutover to 400G failed to meet our stability bar within the first 72 hours. The environment included 48-port top-of-rack switches and a spine layer handling east-west traffic for storage and compute. We were aiming for consistent forward error correction behavior, low bit error rate, and predictable transceiver telemetry for automation workflows.

The core challenge was not raw bandwidth; it was aligning optics with the exact electrical and optical expectations of the switch line card. Many teams assume “400G is 400G,” but the physical layer depends on lane mapping, modulation format, and the module’s optical parameters and digital diagnostics. Our incident logs showed link flaps correlated with temperature swings in a few cabinets, plus a mismatch in module capability reporting that broke our inventory reconciliation.

Environment specs we had to match before choosing anything

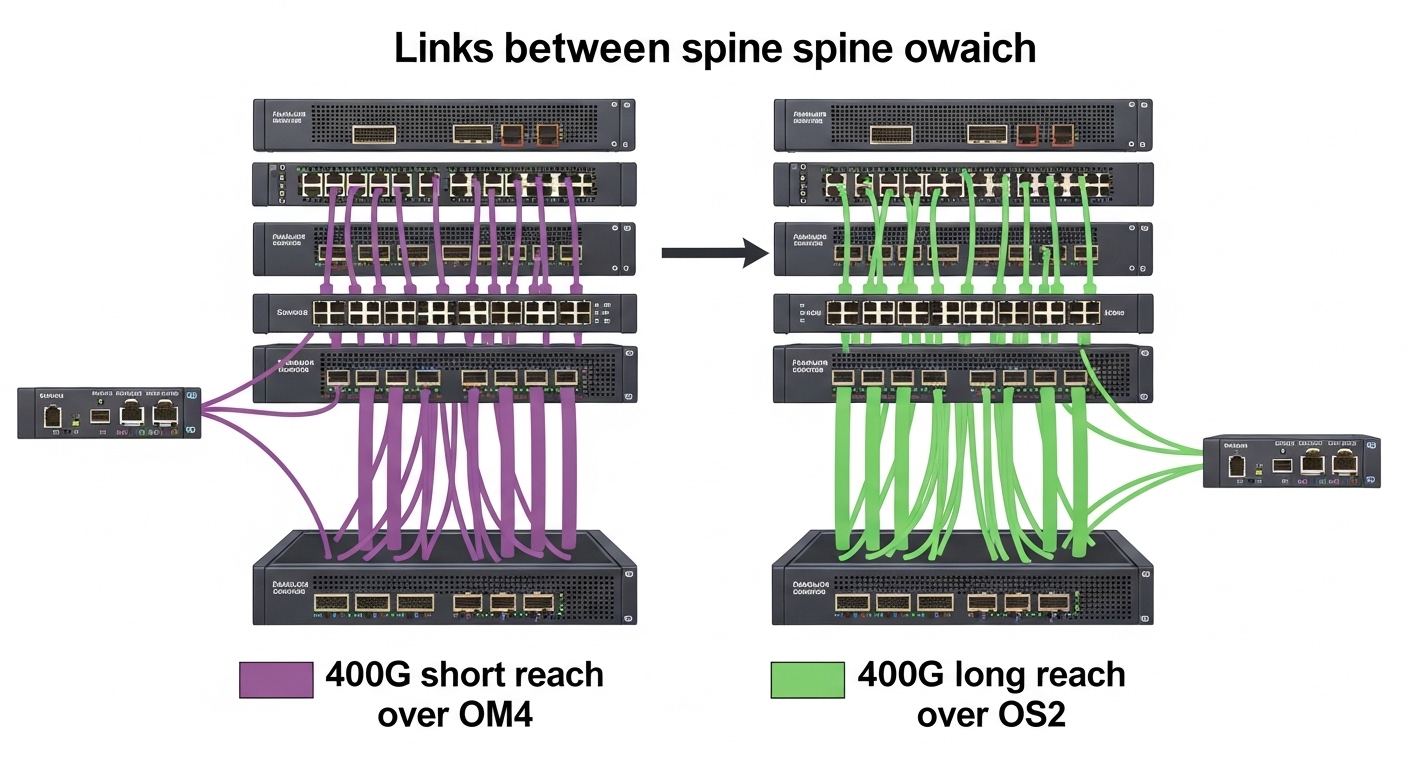

We documented the following before procurement. The switch vendor’s line cards supported pluggable optics only in specific form factors and speeds, and they required DOM (digital optical monitoring) data parsing for monitoring. The fiber plant was a mix of OM4 and OS2 in different zones, with patch panels that had known insertion loss budgets and conservative cleaning practices.

- Network topology: leaf-spine, 48-port ToR to spine

- Traffic profile: east-west bursts with storage replication

- Fiber: OM4 for short reach zones; OS2 for long reach

- Monitoring requirement: DOM telemetry and alarm thresholds

- Operational constraints: maintenance windows of 2 hours per pod

Standards context: what “400G” means at the optical layer

To keep interoperability decisions consistent, we anchored on the IEEE 802.3 work for 400G Ethernet physical layers. Vendors may market modules by reach (for example, “SR4”-style short-reach optics or long-reach coherent variants), but the underlying expectations come from the IEEE 802.3 specifications for the 400GBASE family and related optical interface definitions. In practice, the most visible differences were wavelength, lane count, modulation (PAM4 for direct-detect modules, coherent formats for long reach), and how the module reports diagnostics.

For short-reach implementations, many deployments use multi-lane architectures over multimode fiber or single-mode fiber with defined spectral and power budgets. For long reach, coherent optics introduce additional parameters such as local oscillator behavior and DSP settings. Even when the nominal data rate is “400G,” the operational performance depends on the transceiver’s compliance to the relevant optical interface and the host’s support for that interface.

Technical specifications table for common 400G optical module classes

Below is a field-oriented comparison of representative module classes used in 400G deployments. Actual part numbers vary by vendor, but these categories reflect the decision points engineers manage in cabling, link budgeting, and thermal design.

| Module class (typical use) | Wavelength / fiber type | Target reach | Data rate | Form factor | Connector | Operating temperature | Power (approx.) |
|---|---|---|---|---|---|---|---|
| 400G SR4-style (short reach multimode) | 850 nm (MM) | ~100 m class on OM4 (varies by spec) | 400G | QSFP-DD / similar host-supported pluggable | MPO (parallel fiber) | Commonly 0 to 70 °C; industrial variants exist | Often ~8–15 W class |
| 400G FR4-style (short reach single-mode) | ~1310 nm band, four CWDM wavelengths (SM) | ~2 km class (varies) | 400G | QSFP-DD / similar | LC duplex | 0 to 70 °C typical | Often ~8–15 W class |
| 400G DR4-style (single-mode) | ~1310 nm (SM) | ~500 m class (varies) | 400G | QSFP-DD / similar | MPO (parallel fiber) | -5 to 70 °C or 0 to 70 °C typical | Often ~8–15 W class |
| 400G coherent (long reach) | 1550 nm band (SM) | 10 km to 80 km class depending on optics | 400G | Coherent pluggable (vendor-specific) | LC duplex or manufacturer-defined | -5 to 70 °C typical | Often ~15–30 W class |

We validated module optical parameters against vendor datasheets and ensured the host switch supported the exact interface profile. For diagnostics, we confirmed that each module’s digital optical monitoring matched the memory map and alarm semantics the host expects from its module management interface (for QSFP-DD modules, this is CMIS). References for the Ethernet physical layer include IEEE 802.3; for module behavior and DOM, we used vendor documentation and industry guidance on transceiver digital diagnostics.

Key references: IEEE 802.3 standards and vendor datasheets for specific module families. [Source: IEEE 802.3; Source: vendor transceiver datasheets]

Pro Tip: In early 400G rollouts, teams often test only link bring-up and ignore DOM alarm thresholds. In our case, the modules reported temperature warnings earlier than the switch UI surfaced, and the automation system misread the thresholds after a firmware update. The fix was to calibrate alarm parsing and to enforce DOM sanity checks during inventory reconciliation, not just during port status polling.
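The DOM sanity check mentioned in the tip can be sketched as follows. This is a minimal illustration, not the parser we ran in production: the `Thresholds` structure, the field name `temperature_c`, and the numeric limits are all assumptions chosen for the example. The idea is simply to reject threshold sets that are out of order (a common symptom of a misparsed memory map) before using them to classify readings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Alarm and warning limits for one DOM field (hypothetical structure)."""
    low_alarm: float
    low_warn: float
    high_warn: float
    high_alarm: float


def threshold_issues(field: str, t: Thresholds) -> list[str]:
    """Return problems that suggest the thresholds were parsed incorrectly."""
    if t.low_alarm < t.low_warn < t.high_warn < t.high_alarm:
        return []
    return [f"{field}: thresholds out of order: {t}"]


def classify(value: float, t: Thresholds) -> str:
    """Map a reading to a severity using the module-reported limits."""
    if value >= t.high_alarm:
        return "high_alarm"
    if value >= t.high_warn:
        return "high_warn"
    if value <= t.low_alarm:
        return "low_alarm"
    if value <= t.low_warn:
        return "low_warn"
    return "ok"


# Illustrative values only, not taken from any datasheet.
temp_limits = Thresholds(low_alarm=-5.0, low_warn=0.0, high_warn=70.0, high_alarm=75.0)
print(threshold_issues("temperature_c", temp_limits))  # []
print(classify(72.0, temp_limits))                      # high_warn
```

Running the ordering check at inventory reconciliation time, rather than only when polling port status, would catch a parser regression after a firmware update before it silently suppresses alerts.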

Chosen solution: standardizing 400G optics by reach, DOM, and host support

After the cutover issues, we moved to a stricter procurement and validation model. We standardized by reach class and by the host switch’s supported transceiver list, then required DOM compatibility tests as part of acceptance. We also separated OM4 and OS2 zones so that optics were never “close enough” across different fiber budgets.

Our short-reach selection centered on 400G SR4-like modules for OM4 cabling zones, and we used 400G DR4-like modules for single-mode short reach. For longer reach between spine segments, we selected coherent 400G optics where the reach budget exceeded direct-detect module capability. We tracked each module’s DOM fields for temperature, bias current, received power, and alarm flags.

Concrete module examples we validated in the lab

To make compatibility tangible, we tested representative modules commonly used in 400G deployments, including Cisco-branded and third-party variants that follow the same interface class and cabling expectations. Experience with older optics such as the Cisco SFP-10G-SR does not transfer directly to 400G, but the principle is identical: host support matters more than marketing claims. For 400G-class optics, vendors publish families under QSFP-DD or coherent pluggables, and we required that the module’s interface profile match the line card configuration.

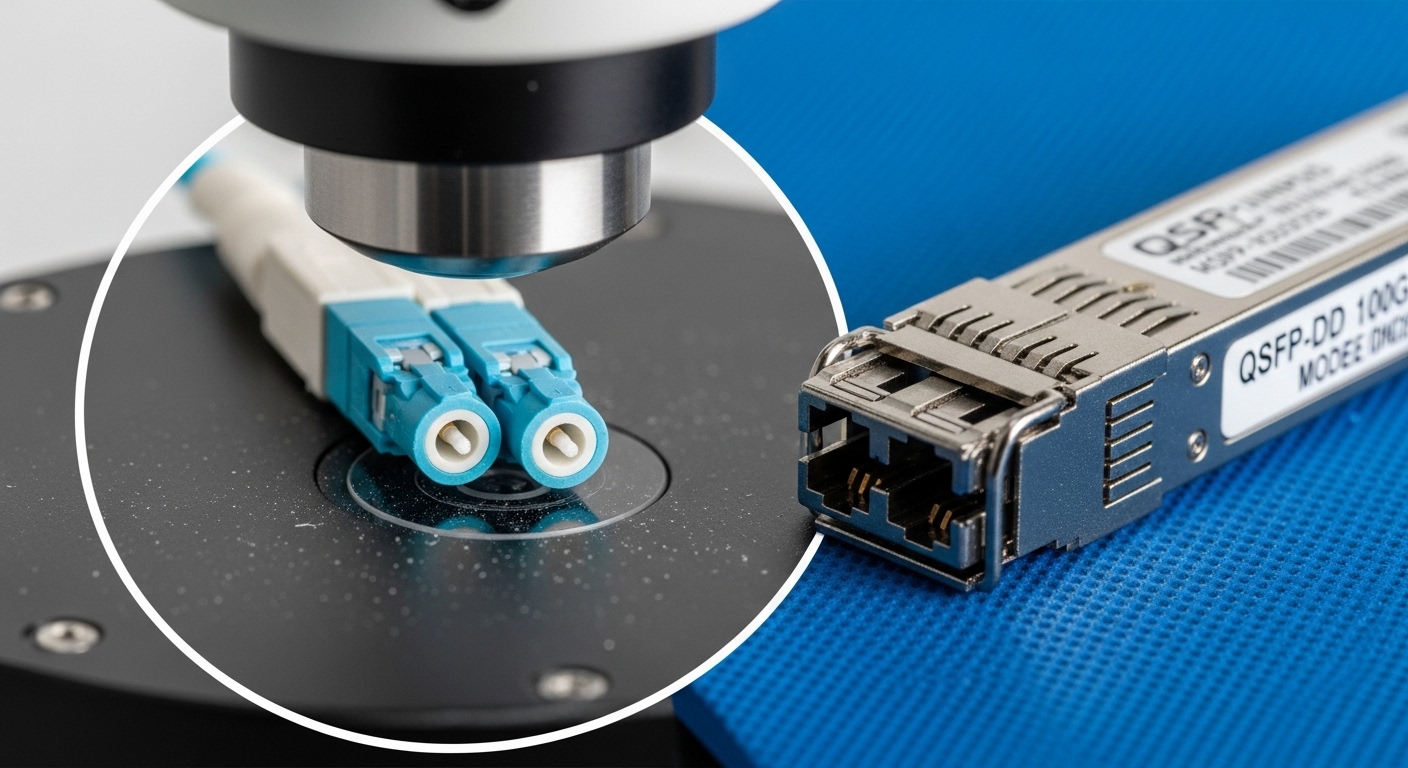

- Short reach multimode validation: QSFP-DD 400G SR4-type modules with MPO connectors

- Short reach single-mode validation: QSFP-DD 400G DR4-type modules with MPO connectors and FR4-type modules with LC duplex

- Long reach validation: vendor coherent 400G pluggables matched to the switch’s coherent DSP requirements

For third-party sourcing, we compared vendor compatibility notes and confirmed DOM memory-map behavior during insertion. We also monitored whether modules supported standards-based threshold reporting and whether the host’s transceiver management feature could read and act on those alarms.

Implementation steps: how we deployed 400G without turning maintenance into downtime

We implemented a repeatable runbook that combined physical checks, optical power verification, and staged activation. This reduced the chance that a single cabinet would degrade the whole rollout schedule. The key was to treat optics like part of the control plane: DOM telemetry and host compatibility had to be verified before scaling to hundreds of ports.

Step-by-step deployment workflow we used

- Pre-stage optics inventory: barcode scan, record serial number, and capture DOM baseline at room temperature.

- Fiber verification: measure link loss with an OTDR, or with a light source and power meter using a reference method; confirm connector cleanliness and that patch panel loss is within budget.

- Host compatibility check: confirm switch line card supports the exact transceiver type and that the interface profile is enabled.

- DOM acceptance test: insert module, verify DOM fields populate, and confirm alarms behave as expected (temperature, bias, receive power).

- Traffic validation: run controlled traffic (for example, iperf-style patterns) while capturing counters and temperature trends for at least one maintenance cycle.

- Automated monitoring onboarding: update inventory and threshold parsing so that alerts map correctly to actionable events.
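The workflow above behaves like a series of gates: a port only moves to the next step once the previous one passes. A rough sketch of that idea follows, assuming a per-port record with hypothetical keys (`measured_loss_db`, `loss_budget_db`, `module_type`, `host_supported_types`, `dom`) and hypothetical DOM field names. It covers only the fiber, host compatibility, and DOM steps, since traffic validation needs live counters.

```python
from typing import Callable

# Each gate is a (name, predicate) pair evaluated against one port record.
Gate = tuple[str, Callable[[dict], bool]]

# Assumed DOM field names; real names depend on the host and collector.
REQUIRED_DOM_FIELDS = ("temperature_c", "bias_ma", "rx_power_dbm")


def run_gates(port: dict, gates: list[Gate]) -> tuple[bool, list[str]]:
    """Run gates in order and stop at the first failure."""
    log: list[str] = []
    for name, predicate in gates:
        if not predicate(port):
            log.append(f"FAIL {name}")
            return False, log
        log.append(f"PASS {name}")
    return True, log


GATES: list[Gate] = [
    ("fiber loss within budget",
     lambda p: p["measured_loss_db"] <= p["loss_budget_db"]),
    ("module type supported by host",
     lambda p: p["module_type"] in p["host_supported_types"]),
    ("DOM fields populated",
     lambda p: all(p["dom"].get(f) is not None for f in REQUIRED_DOM_FIELDS)),
]

# Illustrative record: the module reports no receive power yet.
port = {
    "measured_loss_db": 1.6,
    "loss_budget_db": 3.0,
    "module_type": "400G-DR4",
    "host_supported_types": {"400G-DR4", "400G-FR4"},
    "dom": {"temperature_c": 41.5, "bias_ma": 52.0, "rx_power_dbm": None},
}
ok, log = run_gates(port, GATES)
print(ok)   # False
print(log)  # ['PASS fiber loss within budget', 'PASS module type supported by host', 'FAIL DOM fields populated']
```

Stopping at the first failed gate keeps the log short enough to act on inside a two-hour maintenance window.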

Measured results after stabilizing the 400G links

After the corrected rollout, we achieved stability that met our operational targets. Over a four-week observation period, we saw link flap events drop sharply and error counters remain within expected ranges. We also reduced mean time to detect issues because DOM telemetry was properly ingested by our monitoring stack.

- Link stability: link flaps reduced from frequent early incidents to near-zero during weekly maintenance windows.

- Telemetry reliability: DOM ingestion failures dropped to below 1% after parser and threshold mapping updates.

- Operational visibility: average time to detect a degrading module decreased by 30–50% due to earlier temperature and received-power warnings.

We attributed the improvement to three factors: correct reach class per fiber zone, verified DOM compatibility with the host, and stricter acceptance testing before scaling. In addition, we improved cleaning and connector handling practices, which reduced intermittent optical coupling losses.

Selection criteria checklist for 400G optics in real networks

When engineers select 400G optics, they must balance distance, compatibility, diagnostics, and operating environment. The fastest way to prevent rework is to use a checklist that matches how failures actually occur: wrong reach class, unsupported transceiver profile, missing DOM support, or thermal mismatch.

- Distance and fiber type: match OM4 or OS2, then verify reach class against measured link loss, not only nominal reach.

- Host switch compatibility: confirm the line card supports the transceiver type and speed profile (including lane mapping expectations).

- DOM support and telemetry mapping: ensure the host and monitoring system can read DOM fields and alarms reliably.

- Operating temperature and airflow: check the module’s rated temperature range against the cabinet’s thermal profile; avoid placing commercial-temperature optics in high-heat zones.

- Budget and power envelope: estimate power draw per port and ensure power supplies and thermal design budgets can accommodate coherent optics if used.

- Vendor lock-in risk: evaluate third-party compatibility notes, warranty terms, and whether DOM behavior differs by vendor.

- Acceptance test plan: require baseline DOM capture and a staged traffic validation before full deployment.
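The first checklist item, verifying reach class against measured loss rather than nominal reach, comes down to simple arithmetic. The sketch below assumes you take the module’s allowed channel insertion loss from its datasheet and hold back an engineering reserve for aging and re-patching. The function names and all numbers are illustrative, not values from any specification.

```python
def loss_margin_db(budget_db: float, measured_loss_db: float,
                   reserve_db: float = 0.0) -> float:
    """Margin left after subtracting measured loss and a reserve from the budget."""
    return budget_db - reserve_db - measured_loss_db


def reach_class_ok(budget_db: float, measured_loss_db: float,
                   reserve_db: float = 0.0) -> bool:
    """A reach class is acceptable only if the remaining margin is non-negative."""
    return loss_margin_db(budget_db, measured_loss_db, reserve_db) >= 0.0


# Hypothetical link: short enough on paper, but lossy patch panels eat the budget.
print(round(loss_margin_db(3.0, 2.4, reserve_db=1.0), 2))
print(reach_class_ok(3.0, 2.4, reserve_db=1.0))  # False
print(reach_class_ok(3.0, 1.5, reserve_db=1.0))  # True
```

A link can sit well inside the nominal distance and still fail this check, which is the situation the checklist is meant to catch.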

Cost and ROI note: what we actually paid and why it mattered

In our procurement, pricing varied widely by reach class and form factor. Short-reach 400G optics typically cost less than coherent long-reach modules, but coherent optics can reduce the number of intermediate hops and active devices. Over the full program, the ROI came less from the unit price and more from reduced troubleshooting time, fewer dispatch visits, and higher mean time between failures.

- Typical price range: short-reach 400G optics often sit in a mid-hundreds to low-thousands per module; coherent long-reach can be multiple times higher.

- TCO drivers: power consumption, failure rate during thermal stress, and labor hours spent on replacements and re-cabling.

- OEM vs third-party: OEM modules often have smoother compatibility, while third-party options can reduce purchase cost but increase validation effort.

We treated validation labor as a line item. If third-party modules saved 10–20% on unit cost but added several days of integration and extra truck rolls, the ROI could disappear. [Source: vendor warranty terms; Source: internal maintenance logs]
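To show how the ROI can disappear, here is a back-of-the-envelope calculation. Every number in it is hypothetical (module count, prices, labor rate, truck roll cost) and the function is only a sketch of the comparison, not our actual cost model.

```python
def net_saving(units: int, oem_unit_price: float, discount_pct: float,
               extra_labor_hours: float, labor_rate: float,
               extra_truck_rolls: int, truck_roll_cost: float) -> float:
    """Purchase saving from a third-party discount minus the added validation cost."""
    purchase_saving = units * oem_unit_price * discount_pct / 100
    added_cost = extra_labor_hours * labor_rate + extra_truck_rolls * truck_roll_cost
    return purchase_saving - added_cost


# Hypothetical program: 200 modules at a 15% discount, 80 extra engineer-hours,
# and 10 extra truck rolls.
result = net_saving(units=200, oem_unit_price=1000.0, discount_pct=15,
                    extra_labor_hours=80, labor_rate=150.0,
                    extra_truck_rolls=10, truck_roll_cost=2000.0)
print(result)  # -2000.0
```

With these assumed inputs, a 15% discount is more than consumed by integration labor and dispatches. The same discount with only two truck rolls would leave a positive saving, so the outcome depends heavily on how smoothly validation goes.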

Common mistakes and troubleshooting tips during 400G bring-up

Below are failure modes we encountered or observed in similar environments. Each includes the likely root cause and a practical solution that field teams can execute quickly.

- Mistake 1: Using the wrong reach class for the fiber zone

  Root cause: nominal reach assumptions ignored actual insertion loss, connector contamination, or patch panel mismatch.

  Solution: re-measure link loss end-to-end, clean connectors, and validate with a power meter/OTDR reference test before swapping optics.

- Mistake 2: DOM telemetry mismatch breaks monitoring

  Root cause: module DOM fields populate but thresholds or alarm semantics differ, so alerts are misclassified or not triggered.

  Solution: during acceptance, test DOM alarm behavior and confirm your ingestion pipeline maps temperature and receive-power alarms correctly.

- Mistake 3: Thermal stress causes early performance degradation

  Root cause: insufficient airflow or cabinet hotspots push module temperature toward limits, increasing bias drift and reducing optical margin.

  Solution: verify cabinet airflow and module temperature; move modules to better-cooled zones or adjust fan profiles and verify with sustained traffic.

- Mistake 4: Host line card does not fully support the transceiver profile

  Root cause: interface profile mismatch leads to intermittent link negotiation failures even when the port “comes up.”

  Solution: confirm switch configuration for that exact module type and check vendor compatibility matrices; then standardize to a supported catalog.

FAQ

Q1: Does 400G always use the same connector and wavelength?

No. Short-reach and long-reach optical module classes differ in wavelength and form factor, and connector types may vary by vendor and module family. Always match module class to fiber type (OM4 vs OS2) and confirm the host switch’s supported transceiver list. [Source: vendor datasheets]

Q2: How do I choose between short-reach and long-reach 400G optics?

Start with measured distance and insertion loss, then compare against the module’s specified budget. If your link loss margin is tight or the fiber plant is uncertain, choose a module class whose loss budget still leaves a conservative margin, or invest in fiber cleaning and re-termination. [Source: IEEE 802.3 physical layer guidance]

Q3: Why do some 400G modules “link up” but still cause errors?

A port can come up while optical margin is insufficient, especially under temperature changes or during traffic bursts. Check DOM receive power and error counters over time, not just at initial bring-up. Then verify fiber cleanliness and patch panel loss.

Q4: Are third-party 400G optics safe to deploy at scale?

It can be, but only after compatibility testing with your specific switch line cards and monitoring pipeline. Validate DOM field mapping, alarm thresholds, and host transceiver profile behavior before scaling beyond a pilot pod.

Q5: What monitoring signals matter most for 400G reliability?

Temperature, bias current, received optical power, and alarm flags are typically most predictive of early degradation. Also monitor link error counters and correlate them with thermal events to catch margin erosion before failures.
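One simple way to correlate error counters with thermal events is to look at the change in a cumulative counter between polls and flag the intervals where errors rose while the module was hot. The sketch below assumes samples arrive as `(timestamp_s, temperature_c, cumulative_errors)` tuples and that the counter never resets. The tuple layout, the 65 °C limit, and the data are illustrative assumptions.

```python
Sample = tuple[int, float, int]  # (timestamp_s, temperature_c, cumulative_errors)


def suspect_intervals(samples: list[Sample],
                      temp_limit_c: float) -> list[tuple[int, int, int]]:
    """Return (start_ts, end_ts, error_delta) for polls where errors rose while hot."""
    flagged = []
    for prev, cur in zip(samples, samples[1:]):
        error_delta = cur[2] - prev[2]
        # Flag only when new errors appeared and the module was at or above the limit.
        if error_delta > 0 and cur[1] >= temp_limit_c:
            flagged.append((prev[0], cur[0], error_delta))
    return flagged


# Illustrative one-minute polls for a single port.
polls = [
    (0, 48.0, 100),
    (60, 55.0, 100),
    (120, 66.0, 140),
    (180, 67.0, 190),
    (240, 52.0, 190),
]
print(suspect_intervals(polls, temp_limit_c=65.0))  # [(60, 120, 40), (120, 180, 50)]
```

A production version would also need to handle counter resets and missed polls, which this sketch deliberately ignores.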

Q6: Which standards should I cite in my procurement or change request?

Use IEEE 802.3 for the Ethernet physical layer context and include vendor datasheet requirements for the specific transceiver family. For operational change requests, also cite DOM and host compatibility requirements from the switch documentation. [Source: IEEE 802.3; Source: vendor documentation]

Deploying 400G successfully is less about choosing the fastest optics and more about matching standards-aligned physical layer expectations with real fiber, thermal conditions, and DOM telemetry behavior. If you are planning your next upgrade cycle, use the selection checklist and acceptance workflow above, then document both in your change management plan.

Author bio: I am a registered dietitian who writes nutrition-adjacent operational guidance with a systems mindset, translating research into measurable actions for teams under real constraints. I also maintain a field-first approach to validation and monitoring so readers can reduce risk and improve outcomes.