We had a pretty classic problem: an all-flash VMware vSAN cluster was growing fast, but optics procurement was turning into a scramble. Different racks used mixed transceivers, some with weak DOM data, and one site had intermittent link flaps that pushed maintenance windows into overtime. This article walks through how to choose and qualify a vSAN fiber SFP plan that balances compatibility, cost, lead time, and supply-chain risk—based on a real deployment we ran and the checks field engineers actually use.

Problem and challenge: link flaps during vSAN growth

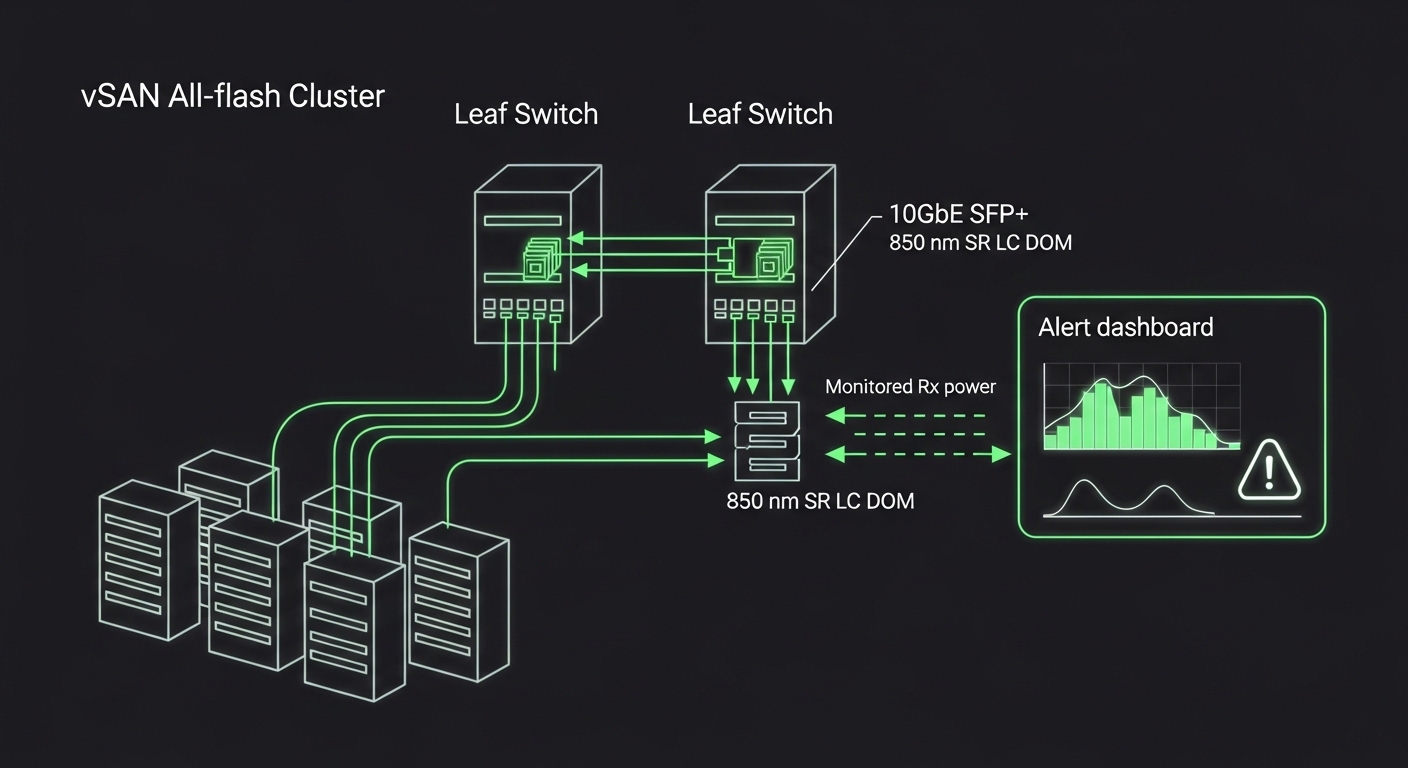

In our environment, vSAN was running on an all-flash cluster with 3 hosts per fault domain and a leaf-spine fabric. The growth plan added 2 additional nodes per phase, each requiring multiple 10GbE fiber uplinks for storage traffic and management. The first wave used whatever transceivers were available at the time, so we ended up with inconsistent optics across hosts and switch ports. After a cable move, we saw intermittent link down/up events that coincided with vSAN resync pressure, and the monitoring team flagged CRC errors rising from near-zero to sustained bursts.

The procurement challenge was simple to describe and hard to fix: we needed transceivers that matched the switch vendor’s expectations, supported accurate Digital Optical Monitoring (DOM), and were available with short lead times. We also needed to reduce supply-chain risk—if one transceiver SKU went backordered, we could not stall the next vSAN expansion.

Environment specs: what the optics had to satisfy

Before we picked any part numbers, we captured the physical and electrical constraints: link speed, fiber type, connector style, and the expected optical budget. For vSAN fiber SFP, that usually means 1GbE/10GbE SFP or SFP+ optics depending on the host NIC and the fabric design. In our case, host uplinks were 10GbE to a pair of leaf switches, using OM3 multimode for shorter runs and structured cabling with LC connectors.

We validated the network side against IEEE standards and switch datasheets. Link behavior and optical interoperability are grounded in the optical/electrical interface requirements defined by IEEE 802.3 (10GBASE-SR and related clauses). Module behavior, including DOM, is described in vendor documentation and, for SFP/SFP+ modules, in the SFF-8472 specification. For 10GBASE-SR electrical and optical compliance expectations, refer to the IEEE 802.3 standard itself.

| Key spec | Target for our vSAN fiber SFP | Common options you will see |
|---|---|---|
| Form factor | SFP+ (10GbE) | SFP vs SFP+ depending on NIC |
| Wavelength | 850 nm (multimode SR) | 1310 nm (LR), 1550 nm (ER/ZR) |
| Reach | Up to 300 m on OM3 (typical SR limit) | 300 m on OM3; 400 to 550 m on OM4 depending on spec |
| Connector | LC | LC is common; some older gear uses SC |
| Data rate | 10.3125 Gbps line rate (10GbE) | 1GbE SFP (1.25 Gbps) also exists |
| DOM | Required for monitoring and alerts | Some low-cost modules omit or misreport it |
| Operating temp | 0 to 70 °C (typical enterprise) | Industrial ranges exist if you have hot zones |

Chosen solution: standardize on one SR optics profile

We standardized on 10GBASE-SR 850 nm SFP+ modules with LC connectors and DOM support. We also made the selection “switch-aware,” because compatibility is where many rollouts fail. Some switch vendors apply transceiver vendor ID checks or have stricter DOM parsing, so we aligned our module selection with the switch platform’s documented compatibility guidance. Where documentation was unclear, we did a staged validation: insert modules on two non-production hosts, verify link stability for 24 hours, and confirm DOM telemetry in the switch and monitoring stack.
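The 24-hour staged validation described above comes down to a pass/fail check over periodic polls of each candidate port. The sketch below assumes you already collect link state, the cumulative CRC counter, and DOM Rx power at a fixed interval. The `PortSample` record and `evaluate_soak` function are hypothetical names, and the zero-CRC default is a strict placeholder you may want to loosen for your environment.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class PortSample:
    """One poll of a switch port during the soak window (hypothetical record shape)."""
    timestamp_s: float             # seconds since the soak started
    link_up: bool                  # operational link state at poll time
    crc_errors: int                # cumulative CRC counter read from the switch
    rx_power_dbm: Optional[float]  # DOM Rx power; None if the read returned nothing


def evaluate_soak(samples: list[PortSample],
                  min_hours: float = 24.0,
                  max_crc_delta: int = 0) -> list[str]:
    """Return the reasons a candidate module fails the soak; an empty list means pass."""
    if not samples:
        return ["no samples collected"]
    reasons = []
    hours = (samples[-1].timestamp_s - samples[0].timestamp_s) / 3600
    if hours < min_hours:
        reasons.append(f"soak too short: {hours:.1f} h < {min_hours} h")
    # Any poll that caught the link down counts against the module.
    down_polls = sum(1 for s in samples if not s.link_up)
    if down_polls:
        reasons.append(f"link down in {down_polls} poll(s)")
    # Compare the cumulative counter at the start and end of the window.
    crc_delta = samples[-1].crc_errors - samples[0].crc_errors
    if crc_delta < 0:
        reasons.append("CRC counter went backwards (cleared?); rerun the soak")
    elif crc_delta > max_crc_delta:
        reasons.append(f"CRC errors increased by {crc_delta}")
    # DOM must answer on every poll, not just at insertion time.
    missing_dom = sum(1 for s in samples if s.rx_power_dbm is None)
    if missing_dom:
        reasons.append(f"DOM Rx power missing in {missing_dom} poll(s)")
    return reasons
```

Returning a list of reasons rather than a boolean makes it easier to paste the result into the change ticket that approves or rejects the SKU.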

On the procurement side, we compared OEM vs third-party supply. OEM modules had the lowest surprise rate but were expensive and sometimes had longer lead times. Third-party modules were cheaper and often available immediately, but we treated them as a managed risk: we restricted to vendors with strong DOM behavior and a published track record, and we required consistent lot traceability for returns. For vSAN fiber SFP, this “controlled substitution” approach reduced downtime risk while still meeting budget and timeline targets.
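One way to make “controlled substitution” enforceable is to check every candidate module against a per-switch-model approved list and reject anything without DOM support or a recorded lot ID. This is a minimal sketch: the catalog contents, switch model names, and part numbers below are placeholders rather than real SKUs, and `approval_issues` is a hypothetical helper.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# Hypothetical catalog: switch model -> approved (vendor, part number) pairs.
# Model names and part numbers are placeholders, not real SKUs.
APPROVED_CATALOG: dict[str, set[tuple[str, str]]] = {
    "leaf-model-a": {("OEM", "OEM-SR-PLACEHOLDER"),
                     ("ThirdPartyX", "X-SR-PLACEHOLDER")},
}


@dataclass
class CandidateModule:
    vendor: str
    part_number: str
    supports_dom: bool
    lot_id: Optional[str]  # lot/batch identifier used for RMA traceability


def approval_issues(switch_model: str, module: CandidateModule,
                    catalog: dict[str, set[tuple[str, str]]] = APPROVED_CATALOG) -> list[str]:
    """List the reasons a module cannot be used on this switch model; empty means OK."""
    issues = []
    approved = catalog.get(switch_model)
    if approved is None:
        issues.append(f"no approved catalog for switch model {switch_model!r}")
    elif (module.vendor, module.part_number) not in approved:
        issues.append("vendor/part number not approved for this switch model")
    if not module.supports_dom:
        issues.append("module does not support DOM")
    if not module.lot_id:
        issues.append("no lot ID recorded for traceability")
    return issues
```

Keying the catalog by switch model rather than keeping one global list reflects the point that compatibility rules differ per platform.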

Pro Tip: Before you approve any optic SKU, validate that the module’s DOM fields (especially received power and temperature) populate cleanly in your monitoring system. A module can pass link training yet still misreport thresholds, which delays detection of marginal optics before they trigger CRC spikes.
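To check that DOM fields populate cleanly, it helps to know how raw readings map to engineering units. Assuming an internally calibrated SFP+ module that follows SFF-8472, temperature is a signed 16-bit word in steps of 1/256 °C, and Rx power is an unsigned 16-bit word in steps of 0.1 µW. The sketch decodes both and flags implausible values. Reading the raw words from your switch or host is out of scope, and the alert bounds are placeholders to replace with your module datasheet's thresholds.

```python
import math


def decode_temperature_c(raw: int) -> float:
    """Internally calibrated SFF-8472 temperature: signed 16-bit word, 1/256 degC per LSB."""
    if raw >= 0x8000:  # two's-complement sign bit set
        raw -= 0x10000
    return raw / 256.0


def decode_rx_power_dbm(raw: int) -> float:
    """Internally calibrated SFF-8472 Rx power: unsigned 16-bit word, 0.1 uW per LSB."""
    milliwatts = raw / 10000.0  # 10,000 LSBs of 0.1 uW = 1 mW
    if milliwatts <= 0.0:
        return float("-inf")  # zero light reported: dark link or unpopulated DOM
    return 10.0 * math.log10(milliwatts)


def dom_problems(temp_c: float, rx_dbm: float,
                 temp_range=(0.0, 70.0), rx_range=(-12.0, 0.0)) -> list:
    """Flag readings outside placeholder bounds (replace with your datasheet values)."""
    problems = []
    if not temp_range[0] <= temp_c <= temp_range[1]:
        problems.append(f"temperature {temp_c:.1f} C outside {temp_range}")
    if math.isinf(rx_dbm):
        problems.append("Rx power reads as zero light")
    elif not rx_range[0] <= rx_dbm <= rx_range[1]:
        problems.append(f"Rx power {rx_dbm:.2f} dBm outside {rx_range}")
    return problems
```

A zero-light Rx reading on a port whose link is up is one concrete form of misreporting: traffic flows, but any alert keyed on Rx power sees a dark fiber.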

Implementation steps we actually used

- Inventory and classify existing optics by wavelength, DOM presence, connector type, and switch port mapping.

- Lock the fiber profile per run length: OM3 SR at 850 nm for short links, and only allow other wavelengths when cabling and budgets require it.

- Run a compatibility matrix test on one leaf switch pair: insert candidate modules and verify link stability, DOM readings, and error counters for 24 to 48 hours.

- Stage rollout by rack group: replace transceivers in one fault domain at a time to reduce blast radius if a module behaves unexpectedly.

- Enable alerts on DOM-driven thresholds (Rx power, temperature) and correlate with interface CRC and FEC/encoding error counters.
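The inventory step and the staged rollout step above fit together in a small script: classify each installed optic by wavelength, connector, and DOM presence, then group the off-profile ones by fault domain so each replacement wave touches only one domain. The `Optic` record, the example host and port names, and the 850 nm/LC/DOM standard profile encoded here are assumptions for illustration; feed it from whatever inventory source you already have.

```python
from __future__ import annotations

from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Optic:
    host: str
    fault_domain: str
    switch_port: str
    wavelength_nm: int
    connector: str
    has_dom: bool


# Assumed standard profile for this rollout: 850 nm SR, LC connector, DOM present.
STANDARD_PROFILE = (850, "LC", True)


def profile(optic: Optic) -> tuple:
    return (optic.wavelength_nm, optic.connector, optic.has_dom)


def summarize(inventory: list[Optic]) -> Counter:
    """Count installed optics per (wavelength, connector, DOM) profile."""
    return Counter(profile(o) for o in inventory)


def replacement_waves(inventory: list[Optic]) -> dict[str, list[Optic]]:
    """Group off-profile optics by fault domain, one rollout wave per domain."""
    waves: dict[str, list[Optic]] = defaultdict(list)
    for optic in inventory:
        if profile(optic) != STANDARD_PROFILE:
            waves[optic.fault_domain].append(optic)
    return dict(sorted(waves.items()))
```

Sorting the waves by fault-domain name is only a deterministic default; in practice you would order them by whichever domain has the most headroom for a resync.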

Measured results: fewer flaps, faster expansions, tighter control

After standardization, we measured the difference in two ways: physical link health and operational friction. In the first month post-rollout, link flaps dropped from sporadic events (roughly 5 to 10 incidents per week during cabling changes) to near-zero in steady state. CRC errors fell dramatically, and the monitoring dashboard showed that Rx power stayed within expected ranges instead of drifting toward marginal levels.

On the operational side, the procurement cycle shortened. Instead of searching for “whatever matches,” we stocked a single approved SR profile for the next two expansion phases, which reduced emergency purchases. Lead time risk also improved: OEM-only procurement created occasional pauses when distributors ran dry, while our controlled third-party option kept us moving with shorter replenishment windows.

Common mistakes and troubleshooting tips

These are the failure modes we saw (and what fixed them), written the way field engineers explain it after the incident call.

- Mistake: Mixing SR modules across different fiber types (OM3 vs OM4) without re-checking the optical budget.

  Root cause: Reduced margin leads to higher bit error rates as temperature and aging shift.

  Solution: Confirm the fiber type per run, then validate the optical budget and the DOM Rx power trend before and after installation.

- Mistake: Approving modules that “link up” but provide unreliable DOM telemetry.

  Root cause: Some third-party optics misreport received power or temperature, which breaks your alerting logic.

  Solution: Validate DOM fields in your monitoring stack, not just link status, and require consistent telemetry formatting.

- Mistake: Overlooking switch platform compatibility rules for transceiver identification.

  Root cause: Some platforms check the vendor ID and may log errors, or refuse to enable the port, when the module’s identification fields don’t match what the platform expects.

  Solution: Test candidate optics on a lab or staging switch, and keep a small approved catalog per switch model.

- Mistake: Cleaning or re-terminating fiber inconsistently during swaps.

  Root cause: Dirty LC end faces cause intermittent loss that presents as link flaps and CRC bursts.

  Solution: Use approved fiber cleaning tools and inspect with a scope; re-clean before you assume the optics are faulty.
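The optical-budget and dirty-connector failure modes above are easy to confuse during an incident, because both show up as CRC bursts. A heuristic sketch of the decision order described above (clean before blaming the optic) could look like this. The 2 dB drop threshold and the `next_step` helper are hypothetical, and the baseline is assumed to be the DOM Rx power recorded right after installation.

```python
def next_step(baseline_rx_dbm: float, current_rx_dbm: float, crc_delta: int,
              drop_threshold_db: float = 2.0) -> str:
    """Suggest the next troubleshooting step for one link (heuristic sketch)."""
    rx_drop_db = baseline_rx_dbm - current_rx_dbm
    if rx_drop_db >= drop_threshold_db:
        # Loss rose since install: the cheapest suspect is a dirty LC end face.
        return "inspect and re-clean LC connectors, then re-check Rx power"
    if crc_delta > 0:
        # Errors without a visible power drop: margin or telemetry problem.
        return "re-check fiber type and optical budget, verify DOM accuracy, then swap the module"
    return "no action: keep monitoring"
```

The ordering is deliberate: cleaning is cheap and non-destructive, so it comes before any module swap or RMA.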

Cost and ROI note: what the spreadsheet needs to include

Typical pricing varies by volume and channel, but in many enterprise deals, OEM 10GBASE-SR SFP+ modules often land in the two-digit to low three-digit USD range per unit, while third-party options can be meaningfully lower. The real ROI isn’t just unit price; it’s total cost of ownership (TCO): fewer truck rolls, fewer troubleshooting hours, and less downtime risk during vSAN expansion windows.

We modeled TCO using failure and return rates from our own RMA history plus vendor reliability indicators. The “best” economic choice depended on how quickly lead times could impact the schedule. When a backorder would delay adding capacity, the cost of delay (lost runway for vSAN growth and operational strain) outweighed the module price delta.
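The TCO comparison above reduces to a simple formula: purchase cost, plus labor spent on failed units, plus the cost of schedule delay. The sketch below assumes you estimate failure rates from your own RMA history and put a dollar value on each day of delayed expansion. Every input is yours to supply, and the `SourcingOption` fields are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class SourcingOption:
    name: str
    unit_price_usd: float
    units: int
    failure_rate: float         # expected fraction of units returned over the horizon
    hours_per_failure: float    # troubleshooting + swap labor per failed unit
    expected_delay_days: float  # schedule slip expected from lead time or backorder


def tco_usd(opt: SourcingOption, labor_rate_usd_per_h: float,
            delay_cost_usd_per_day: float) -> float:
    """Purchase cost + failure-handling labor + cost of schedule delay."""
    purchase = opt.unit_price_usd * opt.units
    failure_labor = opt.units * opt.failure_rate * opt.hours_per_failure * labor_rate_usd_per_h
    delay = opt.expected_delay_days * delay_cost_usd_per_day
    return purchase + failure_labor + delay
```

As an illustration with made-up inputs (not measured results): 20 units, a $100 OEM price with a 5% failure rate and 5 days of backorder delay, versus a $40 third-party price with a 10% failure rate and no delay, at 4 labor hours per failure, $100/h, and $500 per day of delay. The OEM option totals $4,900 and the third-party option $1,600; most of the OEM total here comes from the delay term, which is the effect described above.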

FAQ

What does vSAN fiber SFP need to support beyond basic connectivity?

At minimum, it must meet the correct optical profile for your fiber type and distance (for SR, usually 850 nm with the right reach for OM3/OM4). In practice, you also want DOM support so your monitoring can detect marginal optics before they cause CRC bursts.

Can I use third-party vSAN fiber SFP modules with VMware and my switch?

Often yes, but compatibility is platform-specific. Validate in staging by checking link stability and DOM telemetry, and confirm your switch does not block or misinterpret transceiver identification.

How do I estimate whether my run length fits 10GBASE-SR?

Start from the fiber type and the manufacturer’s SR reach guidance, then account for connector loss, patch panel loss, and any splices. The safest approach is to measure the installed link (or verify it against cabling documentation) and then confirm the DOM Rx power trend after installation.
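That estimate is a loss-budget calculation: add up fiber attenuation, mated connector pairs, and splices, then compare the total against the channel limit for your fiber type. The defaults below (3.5 dB/km at 850 nm, 0.5 dB per connector pair, 0.3 dB per splice) and the OM3/OM4 limits are planning assumptions; confirm them against IEEE 802.3 Clause 52, your cabling vendor's figures, and the optic datasheet.

```python
from __future__ import annotations


def channel_loss_db(length_m: float, connector_pairs: int, splices: int,
                    fiber_db_per_km: float = 3.5, connector_db: float = 0.5,
                    splice_db: float = 0.3) -> float:
    """Estimated insertion loss: fiber attenuation + mated connector pairs + splices."""
    return ((length_m / 1000.0) * fiber_db_per_km
            + connector_pairs * connector_db
            + splices * splice_db)


# Planning limits commonly cited for 10GBASE-SR; confirm against IEEE 802.3
# Clause 52 and your optic vendor's datasheet before relying on them.
SR_LIMITS = {"OM3": (300.0, 2.6), "OM4": (400.0, 2.9)}  # (max reach m, max loss dB)


def fits_10gbase_sr(fiber: str, length_m: float, connector_pairs: int,
                    splices: int) -> tuple[bool, float]:
    """Return (fits, margin_db) for one run; both reach and loss must be within limits."""
    max_reach_m, max_loss_db = SR_LIMITS[fiber]
    margin_db = max_loss_db - channel_loss_db(length_m, connector_pairs, splices)
    return length_m <= max_reach_m and margin_db >= 0.0, margin_db
```

For example, a 150 m OM3 run with two connector pairs and no splices estimates to about 1.5 dB of loss, leaving roughly 1.1 dB of margin under these assumptions. A run can also be within reach but fail on loss if it crosses several patch panels, which is why both checks are applied.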

Why do I see link flaps after swapping optics even when the module is correct?

The most common causes are dirty connectors or inconsistent cleaning during re-seat operations. A second frequent cause is DOM misreporting that hides early degradation until errors spike.

What operating temperature range matters for data centers?

Most enterprise modules are specified for 0 to 70 °C, but local hot spots around densely packed switches can push modules close to that limit. If you have high-heat zones, consider modules rated for a wider temperature range and verify airflow around the affected switches.

How should procurement reduce supply-chain risk for vSAN expansions?

Standardize on one approved SR profile per fiber type, keep a small buffer stock, and maintain an alternate approved vendor path. Also require lot traceability so you can isolate bad batches quickly during RMAs.

If you want the rollout to go smoothly, treat vSAN fiber SFP as a compatibility and monitoring project, not just a part number swap. Next step: map your existing optics to your switch models and fiber runs, then use a transceiver compatibility checklist to build an approved, low-risk catalog.

Author bio: I’ve run hands-on optics standardization across leaf-spine fabrics supporting vSAN all-flash clusters, including DOM telemetry validation and staged cutovers. I focus on measurable link health, realistic lead-time planning, and procurement decisions that hold up during expansion windows.