An AI optical network lives or dies by visibility: link health, optics drift, and signal margins must be measurable while the fabric scales. This article helps network engineers and field techs select transceivers that support analytics for an AI-driven optical network management workflow. You will compare common module options, learn practical selection criteria, and troubleshoot telemetry and optics issues that frequently appear during rollouts.

Telemetry-first comparison: digital optics vs simple pluggables

In an AI optical network, the biggest operational difference is whether the transceiver exposes enough diagnostics to support closed-loop management. Many “works on day one” modules still hide key signals like received power trend, temperature stability, or laser bias. For AI-driven optical network management, you typically want standardized digital diagnostics plus management access through your switch, controller, or an out-of-band telemetry pipeline.

Start by distinguishing modules by interface and diagnostic capability. Most modern pluggables implement Digital Diagnostic Monitoring (DDM, also called DOM) parameters such as Tx bias, Tx power, Rx power, module temperature, and supply voltage. For higher observability, some vendors also provide vendor-specific pages or telemetry extensions that your platform can read and export to time-series systems.
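To make the parameter list concrete, the sketch below decodes the five real-time DDM values from a raw diagnostic page. It assumes an SFP/SFP+ module that follows the SFF-8472 layout (A2h page, bytes 96 to 105) with internal calibration; QSFP-family modules use a different memory map, and how you obtain the raw bytes is platform-specific. The function name `decode_ddm` and the output keys are illustrative, not part of any vendor API.

```python
import math
import struct


def decode_ddm(a2_page: bytes) -> dict:
    """Decode real-time diagnostics from an SFF-8472 A2h page.

    Assumes an internally calibrated module, so raw values map
    directly to physical units without extra calibration constants.
    """
    # Bytes 96-105, big-endian: temperature (signed 16-bit), then
    # Vcc, Tx bias, Tx power, Rx power (all unsigned 16-bit).
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack_from(
        ">hHHHH", a2_page, 96
    )

    def to_dbm(raw: int) -> float:
        # Power fields are in units of 0.1 microwatt (0.0001 mW).
        if raw == 0:
            return float("-inf")  # no light detected
        return 10.0 * math.log10(raw * 0.0001)

    return {
        "temperature_c": temp_raw / 256.0,  # 1/256 degree C per LSB
        "vcc_v": vcc_raw * 100e-6,          # 100 microvolts per LSB
        "tx_bias_ma": bias_raw * 0.002,     # 2 microamps per LSB
        "tx_power_dbm": to_dbm(tx_raw),
        "rx_power_dbm": to_dbm(rx_raw),
    }
```

Converting power to dBm at decode time keeps later trend and threshold logic in the same units that datasheets and optical budgets use.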

What engineers actually measure during AI traffic spikes

When an AI cluster shifts workloads, you often see bursty east-west traffic and rapid link utilization changes. Engineers correlate telemetry with error counters and link state to detect early degradation. In practice, you will watch Rx power drift, temperature excursions, and error rate counters on the switch for the same port over hours, not just minutes.

Head-to-head: typical transceiver analytics profiles

Below is a practical comparison of common optical families used in AI optical network deployments. Values vary by vendor, but these ranges match what field teams use to plan optics budgets and operational guardrails.

| Category | Example module | Wavelength | Reach (typical) | Data rate | Connector | Temperature range | Telemetry readiness |
|---|---|---|---|---|---|---|---|
| SR over MMF | Cisco SFP-10G-SR / Cisco QSFP-40G-SR4 | 850 nm | 10G: ~300 m (OM3) to ~400 m (OM4); 40G SR4: ~100 m (OM3) to ~150 m (OM4) | 10G / 40G | LC duplex (SFP+) / MPO (QSFP+ SR4) | Commercial (0 to 70 C) | DDM supported on most platforms |
| ER over SMF | Finisar FTLX1671D3BCL (example family) | 1550 nm | ~40 km (fiber and budget dependent) | 10G / 25G / 100G variants | LC | Industrial options exist | DDM supported; vendor pages vary |
| LR over SMF | FS.com SFP-10GLR-31 (example) | 1310 nm | ~10 km (variant dependent) | 10G | LC | Commercial/extended available | DDM supported; check switch compatibility |
| Active optical (AOC) | Vendor AOC assemblies (fiber cable with fixed transceiver ends) | 850 to 1310 nm depending on design | ~5 m to 100 m class | 25G to 400G class | Integrated (no separable fiber connector) | Varies | Telemetry may be limited vs pluggables |

Key takeaway: for AI optical network analytics, prioritize modules that reliably expose DDM to your specific switch model and firmware. If your switch can’t read vendor-specific pages, you may lose the “extra” value even if the optics hardware supports it.

Pro Tip: During pilot runs, export DDM readings every 30 seconds and compute a rolling slope for Rx power (dB/hour). A slow downward trend often appears days before port errors, giving you time to clean connectors, re-seat optics, or plan a proactive replacement.
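One way to compute that slope is an ordinary least-squares fit over the samples in the window. The sketch below is a minimal version under that assumption; the function name and argument layout are illustrative, and a production pipeline would likely run the equivalent query inside its time-series database.

```python
from typing import Sequence


def rx_slope_db_per_hour(
    timestamps_s: Sequence[float], rx_dbm: Sequence[float]
) -> float:
    """Least-squares slope of Rx power over a window, in dB per hour.

    timestamps_s are sample times in seconds; rx_dbm are the matching
    Rx power readings in dBm. A negative result means power is falling.
    """
    n = len(timestamps_s)
    if n < 2 or n != len(rx_dbm):
        raise ValueError("need at least two paired samples")

    mean_t = sum(timestamps_s) / n
    mean_p = sum(rx_dbm) / n
    var_t = sum((t - mean_t) ** 2 for t in timestamps_s)
    if var_t == 0:
        raise ValueError("timestamps must not all be identical")

    cov = sum(
        (t - mean_t) * (p - mean_p) for t, p in zip(timestamps_s, rx_dbm)
    )
    # cov / var_t is dB per second; scale to dB per hour.
    return cov / var_t * 3600.0
```

A fit over the whole window is less sensitive to a single noisy reading than subtracting the first sample from the last.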

For standards context, Ethernet physical layer behavior and link bring-up timing are defined across IEEE 802.3 amendments, while diagnostics and management are typically driven by the pluggable interface specifications (for example SFF-8472 for SFP/SFP+, SFF-8636 for QSFP+/QSFP28, and CMIS for QSFP-DD and OSFP) and by vendor implementations. For deeper platform-level expectations, always validate against the switch vendor’s optics compatibility guide and the transceiver datasheet.

Selection criteria: choosing modules that make analytics actionable

Engineers rarely fail because they picked the wrong wavelength alone. They fail because telemetry is incomplete, incompatible with the switch’s management plane, or too slow to support operational decisions. Use this checklist to select transceivers for an AI optical network that depends on analytics for uptime.

- Distance vs optical budget: confirm fiber type, attenuation at wavelength, and connector loss. For MMF SR, verify OM3/OM4 and patch cord quality; for SMF, validate end-to-end budget including splices.

- Data rate and FEC mode: ensure the optics match the port speed (10G/25G/40G/100G) and that the switch uses the expected coding/FEC behavior. Mismatches can surface as intermittent link flaps.

- Switch compatibility: check the exact switch model and firmware. Some platforms accept third-party optics for basic link but restrict advanced diagnostics, or only log a warning.

- DDM/DOM support and visibility: verify DDM parameter support and whether the switch exports it to telemetry. Confirm you can read Tx bias and Rx power through your monitoring stack.

- Operating temperature range: AI racks can create localized hot spots where airflow is restricted near the top of the rack. Prefer extended or industrial temperature options if module case temperatures at the port side approach the 70 C commercial limit for sustained periods.

- Vendor lock-in risk: decide whether you can tolerate vendor-specific telemetry pages. If you rely on analytics pipelines, consider requiring standardized DDM fields at minimum.
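The distance-versus-budget item in the checklist above reduces to simple arithmetic, sketched below. All parameter names are illustrative, and the numbers in the usage example are placeholders rather than values for any specific module; take minimum Tx power and Rx sensitivity from the transceiver datasheet and losses from your own fiber measurements.

```python
def link_margin_db(
    tx_min_dbm: float,
    rx_sensitivity_dbm: float,
    fiber_km: float,
    atten_db_per_km: float,
    connectors: int,
    connector_loss_db: float,
    splices: int = 0,
    splice_loss_db: float = 0.0,
    penalty_db: float = 0.0,
) -> float:
    """Return remaining optical margin in dB (negative means over budget)."""
    # Power budget: worst-case launch power minus receiver sensitivity.
    budget = tx_min_dbm - rx_sensitivity_dbm
    # Total path loss: fiber attenuation plus every connector and splice,
    # plus any extra penalty you choose to reserve (aging, repairs).
    loss = (
        fiber_km * atten_db_per_km
        + connectors * connector_loss_db
        + splices * splice_loss_db
        + penalty_db
    )
    return budget - loss


# Placeholder inputs for illustration only.
margin = link_margin_db(
    tx_min_dbm=-8.0,
    rx_sensitivity_dbm=-14.0,
    fiber_km=10.0,
    atten_db_per_km=0.4,
    connectors=2,
    connector_loss_db=0.5,
    splices=2,
    splice_loss_db=0.1,
)
print(f"margin: {margin:.1f} dB")
```

A small positive margin on paper is a signal to watch that link's Rx power trend closely once it is in service.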

Cost and ROI: what analytics-ready optics change in TCO

In many deployments, transceivers are a small line item compared to downtime and maintenance labor, but the analytics layer changes the cost curve. Third-party optics can reduce purchase price, yet you must include engineering time for compatibility testing and potential monitoring gaps. OEM optics may cost more per unit, but they often reduce rollout risk and speed up RMA workflows.

Typical street pricing varies by region and speed class. As a rule of thumb, 10G SR modules cost less than 25G or 100G modules, and ER/long-reach SMF optics cost more due to laser complexity and yield. For TCO, include monitoring infrastructure effort, spares strategy (how many spare modules you keep on hand), and the expected failure rate under your thermal profile. If analytics lets you replace optics before they cause packet loss, you can avoid costly incident response and reduce mean time to repair.

ROI also depends on how your AI optical network management is implemented. If you already have time-series ingestion, DDM-based alerting can be low incremental cost. If you do not, budget for telemetry collection, correlation logic, and alert tuning to avoid noise during normal thermal variation.

Deployment scenario: telemetry-driven maintenance in a leaf-spine AI fabric

Consider a three-tier data center fabric in which 48-port 10G ToR (leaf) switches feed an aggregation tier and then a spine. Suppose each leaf has 40 active 10G links and you run an AI training workload that shifts traffic every 10 to 20 minutes due to job scheduling. Over 30 days, field teams observe that certain links show a gradual Rx power decrease of 0.2 to 0.4 dB/day before errors appear. By correlating DDM trends with interface counters, they schedule connector cleaning and optic reseating during low-traffic windows instead of after an outage.

Operationally, the workflow looks like this: poll DDM via switch telemetry every 30 seconds, store port-level time series, and alert when the Rx power slope exceeds a threshold and the port error counter keeps incrementing. In one rollout, this prevented multiple “mystery flaps” caused by contaminated LC end faces near a hot aisle. The team still kept a standard RMA path, but they reduced reactive truck rolls by catching degradation early.
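The alert condition in this workflow can be sketched as a small rule that requires both signals at once. The `PortWindow` structure and the default threshold are assumptions for illustration: the default of 0.2 dB/day is simply the lower end of the drift range in the scenario above, converted to dB/hour, and should be tuned against your own data.

```python
from dataclasses import dataclass


@dataclass
class PortWindow:
    """Summary of one port over an evaluation window (hypothetical schema)."""
    rx_slope_db_per_hour: float  # negative means Rx power is falling
    errors_start: int            # cumulative error counter at window start
    errors_end: int              # cumulative error counter at window end


def should_alert(w: PortWindow, max_drop_db_per_hour: float = 0.2 / 24) -> bool:
    """Alert only when Rx power is drifting down AND errors are growing."""
    error_delta = w.errors_end - w.errors_start
    if error_delta < 0:
        # Counter was cleared or wrapped during the window; as a simplifying
        # assumption, count everything seen since the reset.
        error_delta = w.errors_end

    drifting = w.rx_slope_db_per_hour <= -max_drop_db_per_hour
    impacted = error_delta > 0
    return drifting and impacted
```

Using the counter delta rather than the raw counter value avoids alerting forever on a port that logged a few errors weeks ago.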

Common mistakes and troubleshooting for transceiver analytics

Even when optics are correct, analytics can fail due to operational or compatibility issues. Here are field-proven failure modes with root causes and solutions.

Link works but telemetry is blank or incomplete

Root cause: the switch firmware may not support the optics’ diagnostic pages, or the monitoring pipeline only subscribes to certain MIB/telemetry fields. Some third-party modules expose DDM but not the vendor-specific extensions your tooling expects.

Solution: confirm DDM fields are readable for the exact switch model and firmware. Update monitoring to parse the standardized DDM fields first, then add vendor-specific pages if available.
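A collector can apply the "standardized fields first" approach by checking each telemetry record for a fixed set of required keys and treating anything else as optional. The field names below are hypothetical; map them to whatever your switch telemetry or MIB export actually calls these values.

```python
from typing import Dict, List, Tuple

# Hypothetical normalized names for the standardized DDM values.
REQUIRED_DDM_FIELDS = (
    "rx_power_dbm",
    "tx_power_dbm",
    "tx_bias_ma",
    "temperature_c",
    "vcc_v",
)


def missing_ddm_fields(record: Dict[str, object]) -> List[str]:
    """Return required DDM fields that are absent or null in a record."""
    return [f for f in REQUIRED_DDM_FIELDS if record.get(f) is None]


def split_record(
    record: Dict[str, object],
) -> Tuple[Dict[str, object], Dict[str, object]]:
    """Separate standardized DDM fields from vendor-specific extras."""
    standard = {k: record[k] for k in REQUIRED_DDM_FIELDS if k in record}
    extras = {k: v for k, v in record.items() if k not in REQUIRED_DDM_FIELDS}
    return standard, extras
```

Running `missing_ddm_fields` against every port during staging gives a quick per-module list of telemetry gaps before the optics reach production.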

Frequent link flaps after re-seating optics

Root cause: fiber end-face contamination or connector geometry mismatch. In high-density AI racks, a reseat can disturb a marginal connector or slightly misalign LC ferrules, raising insertion loss and back-reflection until received power falls below the receiver’s margin.

Solution: inspect and clean connectors using approved fiber cleaning tools, then re-seat with consistent latch engagement. If possible, test the same fiber path with a known-good transceiver.

Alerts trigger too often due to temperature noise

Root cause: overly sensitive thresholds on Rx power or temperature that do not account for normal chassis variation. Airflow in dense AI racks can create localized gradients near certain cages.

Solution: implement hysteresis and use rolling averages (for example, 10-minute windows). Correlate alerts with error counters so that alerts fire only when both optics drift and link impact occur.
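A minimal sketch of the hysteresis-plus-rolling-average idea follows. The class name and thresholds are illustrative assumptions; at a 30-second polling interval, a 10-minute window corresponds to 20 samples.

```python
from collections import deque


class HysteresisAlarm:
    """Low-value alarm on a rolling mean, with separate trip and clear levels."""

    def __init__(self, trip_below: float, clear_above: float, window: int):
        if clear_above <= trip_below:
            raise ValueError("clear_above must be greater than trip_below")
        self.trip_below = trip_below
        self.clear_above = clear_above
        self.samples = deque(maxlen=window)  # oldest samples drop off
        self.active = False

    def update(self, value: float) -> bool:
        """Add one sample and return the current alarm state."""
        self.samples.append(value)
        avg = sum(self.samples) / len(self.samples)
        if not self.active and avg < self.trip_below:
            self.active = True
        elif self.active and avg > self.clear_above:
            # Only clear once the mean has recovered past the upper level,
            # so values hovering near the trip point do not flap the alarm.
            self.active = False
        return self.active


# Example: Rx power alarm trips below -10 dBm, clears above -9 dBm.
alarm = HysteresisAlarm(trip_below=-10.0, clear_above=-9.0, window=20)
```

The gap between the trip and clear levels should be wider than the normal thermal swing you observe on a healthy port.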

Misconfigured speed or FEC expectation

Root cause: the module and port come up in a speed or FEC mode other than the one intended. Some modules support multiple rates, but the switch port profile might default to a mode that the far end does not match or that leaves less margin than the design assumed.

Solution: lock port configuration to the intended speed and verify FEC mode. Validate with optical power readings and error counters immediately after change.

Decision matrix: which transceiver option fits your AI optical network

Use this matrix to align module choice with operational priorities. The goal is not “best optics,” but “best analytics reliability plus compatible optics budget.”

| Your priority | Best-fit option | Why it fits | Main trade-off |
|---|---|---|---|
| Maximum telemetry consistency | OEM pluggables with verified DOM/DDM | Highest likelihood that your switch exports the same diagnostic fields every time | Higher unit cost |
| Lower purchase cost with acceptable analytics | Third-party pluggables validated in your specific switch firmware | Can reduce CAPEX if monitoring is adapted to standardized DDM | Extra validation effort; possible telemetry gaps |
| Short-reach within racks | AOC or SR pluggables depending on airflow and cabling constraints | Faster deployment, fewer fiber handling issues | Telemetry may be limited vs pluggables |
| Distance beyond MMF limits | SMF ER/LR optics with validated optical budget | Supports required reach with stable wavelength performance | Higher cost and careful budget planning |

Which Option Should You Choose?

If you are building a production AI optical network where telemetry drives proactive maintenance, choose modules with guaranteed readable DDM fields in your switch firmware, even if that means starting with OEM optics for the first pilot. If you are optimizing for budget and can tolerate an initial validation phase, third-party optics can work well when you confirm standardized DDM visibility and validate thresholds against real thermal conditions. If your primary constraint is rack-level cabling speed and fiber handling, consider AOC where telemetry is sufficient for your monitoring goals, but do not assume it matches pluggable diagnostics.

Next step: align your optics plan with your AI optical network monitoring architecture so telemetry becomes an operational control loop rather than a dashboard.

FAQ

Q: How do I confirm a transceiver supports analytics for an AI optical network?

Start by verifying DDM fields like Rx power, Tx bias, temperature, and voltage are readable on your exact switch model and firmware. Then confirm your telemetry collector exports those fields into your time-series system so alerts can be correlated with port error counters.

Q: Are third-party optics safe to use for telemetry-driven operations?

They can be, but validate in a staging environment that matches your firmware and monitoring parsing. The main risk is not link bring-up; it is missing or inconsistent diagnostic fields that your analytics pipeline expects.

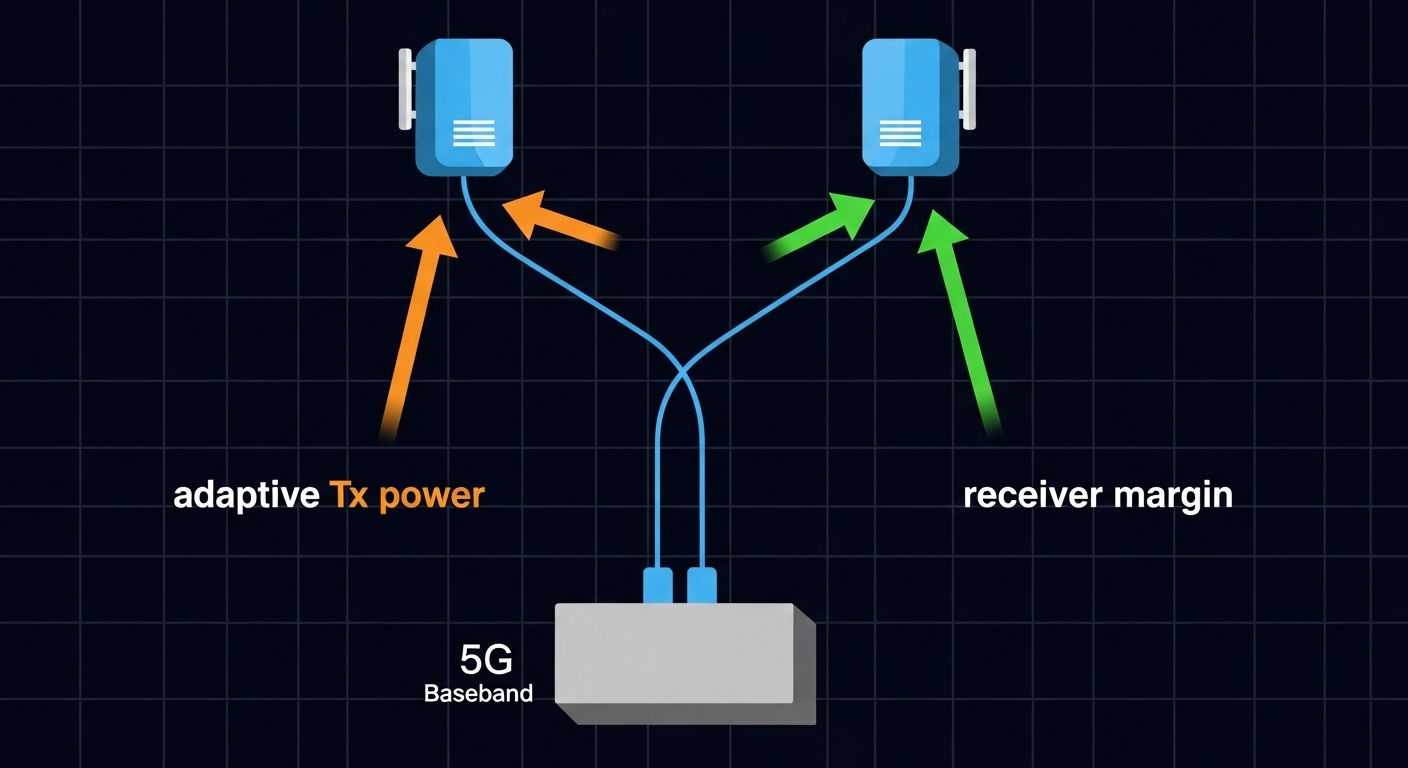

Q: What distance matters most: fiber reach or measured optical power?

Both matter, but measured optical power is what determines receiver margin under real conditions. Use the vendor optical budget as a starting point, then validate with DDM readings after installation and during thermal changes.

Q: Why do we see telemetry drift without immediate link errors?

Optics aging and connector contamination often show up as gradual Rx power changes before packet errors. That is why slope-based alerting and correlation with error counters can prevent outages.

Q: What temperature range should we plan for in AI racks?

Plan for worst-case localized port-side temperatures, not just average ambient. If your environment can exceed typical commercial ranges, consider extended or industrial temperature optics and verify airflow with measurements.

Q: Can switching platforms limit what diagnostics you can read?

Yes. Some