In a 5G radio access network, a few nanoseconds of timing drift can turn into real operational pain: misaligned TDD slots, degraded phase noise performance, and extra troubleshooting cycles. This article helps network and field engineers choose telecom timing optics that support G.8273.2 Class C requirements, focusing on how transceiver characteristics influence end-to-end timing. You will get an implementation checklist, a spec comparison table, and hands-on troubleshooting for the most common failure modes. Updated: 2026-04-30.

Prerequisites: what you must verify before swapping timing optics

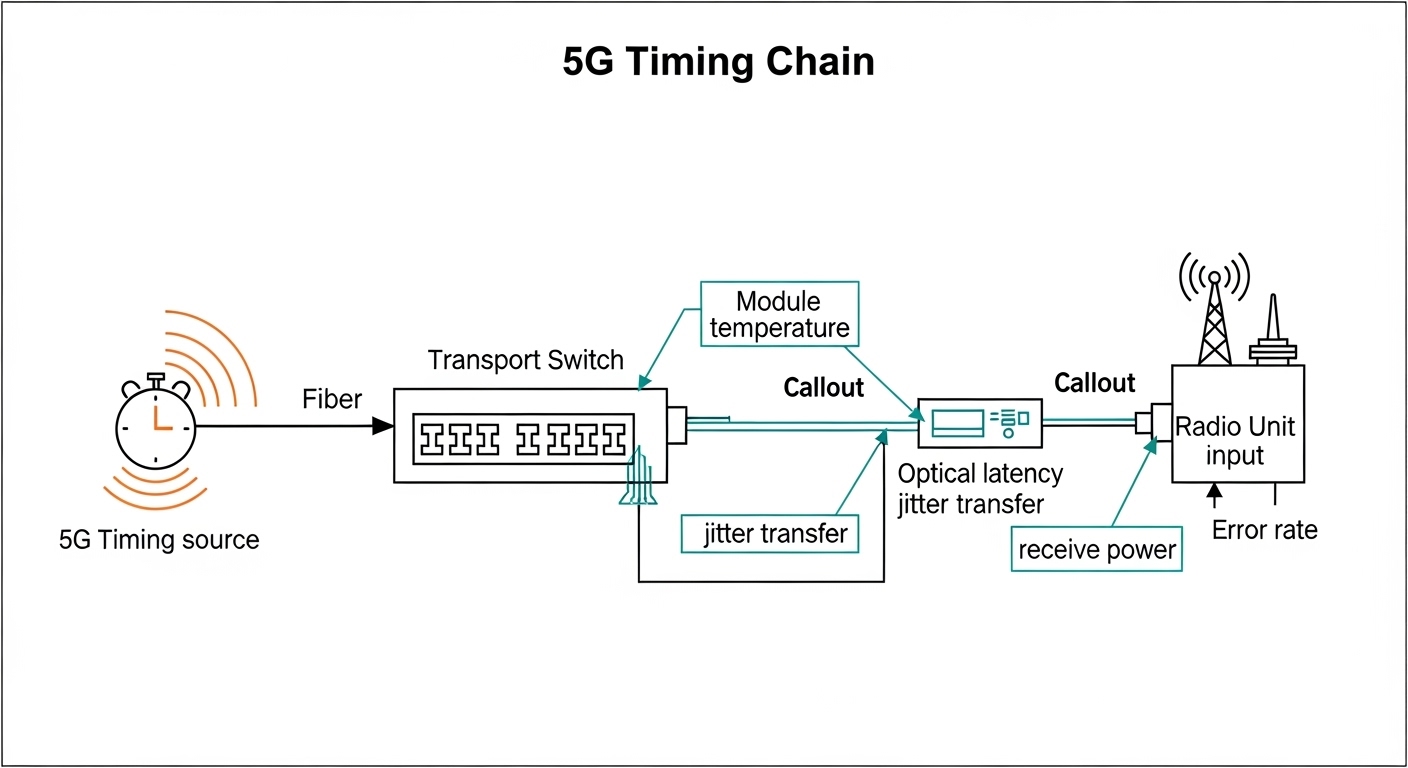

Before selecting or deploying optics, confirm your timing architecture and measurement approach. For G.8273.2 Class C, the optical link is part of an end-to-end timing chain that includes synchronization source quality, transport delay behavior, and jitter transfer characteristics. If you cannot measure jitter or phase stability at the demarcation points, you will end up validating by symptoms rather than by compliance evidence.

Gather these inputs

- Network topology: fronthaul or midhaul, point-to-point spans, and whether you use active Ethernet (including EEC/SyncE) or a dedicated timing distribution.

- Expected fiber reach: confirm planned span length in kilometers and whether you use single-mode fiber with APC or UPC mating.

- Switch and transceiver compatibility: vendor support matrix for timing and DOM reporting.

- Optical budget targets: measured receive power at the far end, including aging margin.

- Operational environment: rack airflow, ambient temperature range, and whether the site sees condensation or dust.

Expected outcome: You will have a clear “timing chain map” and the minimum data needed to select optics without guessing.

How telecom timing optics influence G.8273.2 Class C timing in practice

In timing distribution, optics are not just “data pipes.” Their physical and electrical interfaces affect the timing chain through deterministic latency, sensitivity to differential delay, and the way the module handles retiming and clock recovery. For G.8273.2 Class C, the compliance focus is on end-to-end performance, but transceiver selection still matters because different modules can add different amounts of optical-to-electrical conversion delay and jitter under real operating conditions.

Key mechanisms engineers watch

- Deterministic latency and delay variation: differences between nominal and actual path delay matter when you budget synchronization phase.

- Jitter transfer and bounded wander: the module can contribute to jitter accumulation, especially when the link is stressed near optical power limits.

- Temperature sensitivity: small wavelength drift and receiver sensitivity changes can increase error rates, which leads to dropped frames (including timing packets) and added buffering effects upstream.

- Link quality under margin: running too close to the receive power threshold increases bit errors and can destabilize higher-layer timing behavior.
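To make the delay-variation point concrete, the sketch below estimates how a length mismatch between the transmit and receive fibers of a link becomes a static time error in a two-way time exchange. It assumes a group index of about 1.468 for standard single-mode fiber (roughly 4.9 ns per meter) and relies on the general result that an uncompensated path asymmetry shifts the recovered time by half the asymmetry. The function names and the example span are illustrative and do not come from any standard or datasheet.

```python
# Sketch: static time error caused by unequal forward/reverse fiber lengths.
# Assumption: group index ~1.468 (typical single-mode fiber); check your
# fiber datasheet for the real value.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
GROUP_INDEX = 1.468  # assumed value, not from the article


def fiber_delay_ns(length_m: float, group_index: float = GROUP_INDEX) -> float:
    """One-way propagation delay through a fiber, in nanoseconds."""
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e9


def asymmetry_time_error_ns(forward_m: float, reverse_m: float) -> float:
    """Time error from delay asymmetry in a two-way exchange.

    Two-way time transfer assumes equal delay in both directions, so an
    uncompensated asymmetry appears as an offset of half its size.
    """
    asymmetry = fiber_delay_ns(forward_m) - fiber_delay_ns(reverse_m)
    return asymmetry / 2.0


if __name__ == "__main__":
    # Hypothetical 1.5 km span whose two fibers differ by 2 m.
    error = asymmetry_time_error_ns(1500.0, 1502.0)
    print(f"Delay per meter: {fiber_delay_ns(1.0):.2f} ns")
    print(f"Static time error: {error:.2f} ns")
```

Under these assumptions, a 2 m mismatch produces close to 5 ns of static offset, which is why patch-cord lengths deserve attention when the timing budget is counted in nanoseconds.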

Pro Tip: In field tests, timing “mysteries” often trace back to optics running with insufficient optical margin rather than to the timing source. If you can only check one thing, measure receive power and optical module temperature at the far end during peak load; weak margin can silently worsen jitter transfer even when the link stays “up.”

Specs that actually matter: compare timing-capable transceivers

Different module families can meet basic data rates, but timing performance depends on optical reach, wavelength stability, receiver sensitivity, and module diagnostics. Use the comparison table below as a starting point for engineering discussions with your vendor or integrator, then confirm the exact performance claims against the transceiver datasheet and the system’s timing test results.

Baseline comparison (example classes you may encounter)

| Parameter | 10G SR (example) | 10G LR (example) | 25G SR (example) |
|---|---|---|---|
| Typical wavelength | 850 nm | 1310 nm | 850 nm |
| Reach (typical) | Up to ~300 m OM3 / ~400 m OM4 | Up to ~10 km SMF | Up to ~70 m OM3 / ~100 m OM4 |
| Connector | LC | LC | LC |
| Data rate | 10.3125 Gb/s | 10.3125 Gb/s | 25.78125 Gb/s |
| DOM support | Commonly available (temperature, bias, power) | Commonly available | Commonly available |
| Temperature range | 0 to 70 °C typical or -40 to 85 °C (extended, varies by vendor) | 0 to 70 °C typical or -40 to 85 °C (varies) | 0 to 70 °C typical or -40 to 85 °C (varies) |
| Timing relevance | Margin and jitter under load; watch optical power drift | Deterministic latency stability over longer spans | Receiver sensitivity and thermal behavior |

Note: The table uses representative categories. For compliance work, validate against the specific part numbers you plan to buy and the system’s timing test evidence. Common examples you might see in deployments include vendor and third-party optics such as Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, or FS.com SFP-10GSR-85, but always confirm compatibility with your exact switch model and the module’s firmware behavior.

Standards and reference points

- ITU-T G.8273.2 for the timing characteristics of telecom boundary clocks and telecom time slave clocks, including the Class C performance objectives. [Source: ITU-T G.8273.2]

- IEEE 802.3 for Ethernet physical layer specifications relevant to module behavior and link operation. [Source: IEEE 802.3]

- Vendor datasheets for optical parameters, DOM readings, and temperature ranges. [Source: Cisco, Finisar, FS.com datasheets]

Step-by-step selection guide for telecom timing optics in Class C projects

This numbered workflow is designed for teams deploying optics for 5G synchronization where G.8273.2 Class C compliance is required or strongly expected. The goal is to choose optics that are stable under real temperature and power conditions, not just those that pass a lab “link up” test.

Implementation steps

Step 1: Lock the timing transport method

Decide whether your sync is distributed over the same Ethernet fabric or a dedicated timing path. Confirm your equipment supports the needed synchronization mode and that optics are not introducing unsupported retiming behavior.

Expected outcome: You know the physical layer requirements and where optical latency/jitter matters.

Step 2: Match reach and fiber type

Use single-mode fiber for multi-kilometer links (for example, 1310 nm LR-style optics) and OM3/OM4 multimode fiber for short reach (850 nm SR-style optics). Keep a conservative power margin so the receiver is not operating near its sensitivity limit during hot/cold extremes.

Expected outcome: Your chosen wavelength and reach align with the planned span.

Step 3: Confirm optical budget with real measurements

Calculate the budget from fiber attenuation, connector loss, splice loss, and expected aging. Then validate with an optical power meter at installation and again after burn-in.

Expected outcome: You can show that receive power stays within spec across the temperature range.

Step 4: Require DOM and logging access

Select optics that provide DOM telemetry (at minimum: module temperature, TX bias current, and TX/RX power). Ensure the switch exposes those values via your management plane.

Expected outcome: You can correlate timing issues with optical module health.

Step 5: Validate temperature range for the site

If the rack ambient can hit 50 to 60 °C during summer peaks, prefer extended temperature optics where your vendor supports them. Avoid mixing module temperature classes across the same timing chain if you can.

Expected outcome: Optical behavior stays stable during worst-case conditions.

Step 6: Check vendor lock-in and compatibility risk

Confirm your switch model accepts the optics form factor (SFP, SFP+, SFP28, QSFP, QSFP28) and supports the required diagnostics. If you use third-party optics, test a small batch and verify there are no “unsupported optics” warnings or reduced functionality.

Expected outcome: Reduced risk of operational surprises during maintenance windows.
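The budget calculation in Step 3 can be sketched as a small helper. This is a minimal illustration, not a vendor tool: the class name, field names, and every numeric value in the example are placeholders, and the real launch power, sensitivity, and loss figures should come from the transceiver datasheet and your own measurements.

```python
# Sketch: optical link budget check for Step 3.
# All numbers used with this class are placeholders to be replaced with
# datasheet values and measured losses.
from dataclasses import dataclass


@dataclass
class LinkBudget:
    tx_power_min_dbm: float      # worst-case launch power (datasheet)
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity (datasheet)
    fiber_km: float              # span length
    fiber_loss_db_per_km: float  # fiber attenuation at the operating wavelength
    connectors: int              # number of mated connector pairs
    connector_loss_db: float     # loss per mated pair
    splices: int                 # number of splices
    splice_loss_db: float        # loss per splice
    aging_margin_db: float       # allowance for aging and repairs

    def total_loss_db(self) -> float:
        """Sum of fiber, connector, splice, and aging losses."""
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.connector_loss_db
                + self.splices * self.splice_loss_db
                + self.aging_margin_db)

    def expected_rx_dbm(self) -> float:
        """Worst-case receive power at the far end."""
        return self.tx_power_min_dbm - self.total_loss_db()

    def margin_db(self) -> float:
        """Headroom between expected receive power and sensitivity."""
        return self.expected_rx_dbm() - self.rx_sensitivity_dbm


if __name__ == "__main__":
    # Hypothetical 1.5 km single-mode span; values are illustrative only.
    budget = LinkBudget(
        tx_power_min_dbm=-8.2, rx_sensitivity_dbm=-14.4,
        fiber_km=1.5, fiber_loss_db_per_km=0.4,
        connectors=4, connector_loss_db=0.5,
        splices=2, splice_loss_db=0.1,
        aging_margin_db=1.0,
    )
    print(f"Total loss: {budget.total_loss_db():.1f} dB")
    print(f"Margin: {budget.margin_db():.1f} dB")
```

Comparing the computed margin with the power-meter reading taken at installation shows whether the span behaves as planned or whether an unexpected loss is hiding in the path.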

Decision checklist engineers use

- Distance (km or meters) vs optical reach and fiber type

- Budget (capex for optics vs expected replacement interval)

- Switch compatibility (form factor, vendor support, rate matching)

- DOM support and whether telemetry is accessible for monitoring

- Operating temperature and airflow constraints

- Vendor lock-in risk (supported optics list; behavior on “unsupported” modules)

Deployment scenario: Class C optics in a 5G leaf-spine transport

In a 3-tier data center and edge transport design, a regional 5G aggregation site uses 48-port 10G ToR switches in a leaf-spine topology, with two redundant uplinks per rack. Assume 12 racks, each with two radio unit feeds, and each uplink spans 1.5 km of single-mode fiber using LC connectors. During commissioning, the team installs LR-class optics (1310 nm) and verifies receive power at the far end with a calibrated meter, targeting a mid-range value (for example, staying more than 1 to 2 dB above the minimum sensitivity). They then enable DOM polling and export module temperature and optical power to a time-series system so that any timing anomalies can be correlated with thermal or optical drift events.

After 72 hours of burn-in, the links remain error-free while module temperature stays within the supported range, and the system’s synchronization measurement shows stable performance consistent with G.8273.2 Class C objectives. The key operational detail is that the optics were not selected only by reach; they were selected by documented stability and monitored continuously using DOM telemetry.
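The monitoring step in this scenario could look something like the sketch below, which scans exported DOM samples and flags those with thin optical margin or high module temperature. The `DomSample` structure, the 2 dB margin threshold, and the 70 °C limit are assumptions chosen for illustration; use the limits from your own datasheet and monitoring policy.

```python
# Sketch: flag DOM samples that indicate thin margin or high temperature.
# The data structure and thresholds are assumptions for illustration.
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class DomSample:
    timestamp_s: float    # sample time, seconds since start of burn-in
    rx_power_dbm: float   # receive power reported by the module
    temperature_c: float  # module temperature reported by the module


def flag_samples(
    samples: List[DomSample],
    rx_sensitivity_dbm: float,
    min_margin_db: float = 2.0,   # assumed policy threshold
    max_temp_c: float = 70.0,     # assumed commercial-grade limit
) -> List[Tuple[float, List[str]]]:
    """Return (timestamp, reasons) for every sample outside the limits."""
    flagged = []
    for sample in samples:
        reasons = []
        if sample.rx_power_dbm - rx_sensitivity_dbm < min_margin_db:
            reasons.append("low_margin")
        if sample.temperature_c > max_temp_c:
            reasons.append("over_temp")
        if reasons:
            flagged.append((sample.timestamp_s, reasons))
    return flagged
```

The flagged timestamps can then be lined up against the synchronization measurement log to see whether timing excursions coincide with optical or thermal events.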

Common mistakes and troubleshooting for timing optics that miss expectations

Even when optics are “correct” on paper, real installations can fail timing expectations. Below are three concrete failure modes that show up in the field, with root causes and fixes.

Link stays up but timing performance degrades

Root cause: Optical margin is too tight; RX power is near sensitivity during temperature peaks, increasing error rates and jitter transfer. The link may still report healthy status while timing quality worsens.

Solution: Measure RX power at the far end during peak thermal load. Replace optics or improve patching to restore margin (clean connectors, reduce bends, shorten spans, or use higher-budget modules). Also compare DOM temperatures between redundant links.

Intermittent alarms after maintenance

Root cause: Connector contamination or fiber damage from repeated unplugging; dust increases loss and can cause sudden degradation.

Solution: Inspect with a fiber microscope, clean using lint-free wipes and isopropyl alcohol (IPA) or approved cleaning tools, then re-measure optical power. If loss keeps increasing across repeated measurements, verify fiber condition and replace compromised patch cords.
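One way to decide whether a loss increase is consistent rather than a one-off reading is to compare recent measurements with the commissioning baseline. The sketch below is an assumption-laden example: the function name, the 0.5 dB threshold, and the three-reading window are arbitrary choices, not values from a standard.

```python
# Sketch: detect a persistent loss increase relative to a baseline.
# Threshold and window size are assumed values; tune them to your process.
from typing import List


def consistent_loss_increase(
    baseline_rx_dbm: float,
    readings_dbm: List[float],
    threshold_db: float = 0.5,
    min_readings: int = 3,
) -> bool:
    """True when each of the last `min_readings` measurements sits more
    than `threshold_db` below the commissioning baseline."""
    if len(readings_dbm) < min_readings:
        return False  # not enough evidence yet
    recent = readings_dbm[-min_readings:]
    return all(baseline_rx_dbm - reading > threshold_db for reading in recent)
```

A single low reading after maintenance points toward a dirty connector; a run of low readings is a stronger hint that the patch cord or fiber itself needs attention.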

Compatibility warnings or missing telemetry

Root cause: Third-party optics not fully supported by the switch’s DOM handling or optics qualification logic. Some modules may operate at the correct rate but provide incomplete diagnostics or different power reporting.

Solution: Use the vendor compatibility list for your exact switch model. During validation, confirm DOM fields populate correctly and that telemetry collection works in your monitoring system. If needed, standardize on optics from a single validated supply chain.
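During validation, a quick completeness check on the collected telemetry helps catch modules that report only part of the DOM set. The field names below are hypothetical keys for whatever your collector emits; they mirror the minimum set named earlier (module temperature, TX bias current, TX power, RX power).

```python
# Sketch: check that a DOM reading contains every required field.
# Field names are hypothetical and depend on your collector's schema.
from typing import Dict, List, Optional

REQUIRED_DOM_FIELDS = (
    "temperature_c",
    "tx_bias_ma",
    "tx_power_dbm",
    "rx_power_dbm",
)


def missing_dom_fields(reading: Dict[str, Optional[float]]) -> List[str]:
    """Return required fields that are absent or reported as None."""
    return [name for name in REQUIRED_DOM_FIELDS if reading.get(name) is None]


if __name__ == "__main__":
    # Hypothetical reading from a third-party module with no RX power value.
    reading = {
        "temperature_c": 41.5,
        "tx_bias_ma": 6.2,
        "tx_power_dbm": -2.1,
        "rx_power_dbm": None,
    }
    print(missing_dom_fields(reading))
```

Running this against every module in a trial batch gives a simple pass/fail list before the optics are approved for wider deployment.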

Cost and ROI note for telecom timing optics

Pricing varies widely by vendor, temperature grade, and form factor. As a rough planning range, 10G SR and 10G LR optics commonly cost from tens to a couple hundred currency units per module depending on brand and grade, while extended-temperature or system-qualified optics can cost more. OEM optics may reduce compatibility risk and troubleshooting time, while third-party optics can cut capex but increase validation workload and potentially raise failure or incompatibility rates.

For TCO, include optics plus the labor cost of cleaning, testing, and swap events. In timing-sensitive 5G deployments, the ROI often comes from fewer truck rolls and fewer commissioning cycles, not just from module price. If your monitoring and burn-in process is strong, third-party optics can be economical; if it is weak, the “cheaper module” can become the most expensive line item.

FAQ

How do telecom timing optics relate to G.8273.2 Class C?

They are part of the transport path that contributes delay and jitter behavior to the end-to-end synchronization chain. Even if the timing source is excellent, optics can worsen performance if they operate near sensitivity or have unstable thermal behavior. [Source: ITU-T G.8273.2]

Which transceiver type is best for timing over short vs long spans?

Short in-rack or campus segments often use 850 nm SR optics over OM3/OM4. Multi-kilometer links typically use 1310 nm LR optics over single-mode fiber. The “best” choice is the one that provides sufficient optical margin and stable diagnostics for your environment.

Do I need DOM support for timing compliance work?

DOM is not a standards requirement by itself, but it is operationally critical for correlating timing anomalies with optical health. Without temperature and power telemetry, you cannot reliably prove that the link stayed within stable operating conditions.

Can third-party optics meet timing requirements?

They can, but you must validate them in your specific switch and fiber environment. Confirm compatibility, DOM behavior, optical budget performance, and stability across temperature. Your vendor may require system-qualified optics for formal compliance evidence.

What is the fastest troubleshooting path when timing alarms appear?

Start with receive power measurement at the far end under current load and temperatures. Then check DOM readings for sudden changes in TX/RX power or module temperature. Finally, inspect connectors and fiber patch cords for contamination or damage.

Where can I find authoritative timing guidance?

Use ITU-T G.8273.2 for class definitions and objectives, and IEEE 802.3 for Ethernet physical layer behavior. For practical optics parameters, rely on transceiver datasheets and your switch vendor’s optics compatibility guidance. [Source: ITU-T G.8273.2] [Source: IEEE 802