In many network rooms, transceivers silently degrade for weeks before a link flaps. This article shows how to build a Python transceiver monitor that reads DOM metrics, logs them to a time series store, and triggers actionable alerts. It helps network engineers and automation builders who need repeatable diagnostics across SFP and QSFP optics without waiting on vendor tools. I also include failure modes I have seen on real racks, plus a checklist for safe module and switch compatibility.

How DOM data becomes a reliable Python transceiver monitor

Digital Optical Monitoring (DOM) exposes optical and electrical telemetry such as received power, transmit power, laser bias current, laser temperature, and module supply voltages. In the field, the biggest challenge is not reading the values once, but turning them into trustworthy signals with timestamps, units, thresholds, and correlation to link events. A well-designed Python transceiver monitor should treat DOM reads as sampled instrumentation, not as guaranteed truth. I typically align each DOM poll with link state and interface counters so alerts explain what changed and when.

DOM metrics you should log every poll

Start with a fixed schema so downstream dashboards and alert rules remain stable. For SFP/SFP+ and QSFP/QSFP+ modules, common fields include: Rx power (dBm), Tx power (dBm), Laser bias current (mA), Module temperature (C), and Supply voltage (V). If your platform exposes them, also capture LOS/Link status and DDMI alarm flags or vendor-equivalent status bits. When you standardize around these fields, you can compare modules across vendors like Finisar, Cisco, and FS.com without rewriting logic.
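One way to pin that schema down is a frozen dataclass that every reader must fill in. This is a minimal sketch: the field names are my own illustrative choices, not any vendor's API. Optional fields stay `None` when a module or platform does not expose them.

```python
from dataclasses import dataclass, asdict
from typing import Optional

# Hypothetical fixed schema for one DOM poll; names are illustrative only.
@dataclass(frozen=True)
class DomSample:
    port: str                            # e.g. "Ethernet1/1"
    module_id: str                       # part number or serial
    ts_unix: float                       # poll timestamp, seconds since epoch
    rx_power_dbm: Optional[float] = None
    tx_power_dbm: Optional[float] = None
    bias_ma: Optional[float] = None
    temp_c: Optional[float] = None
    vcc_v: Optional[float] = None
    link_up: Optional[bool] = None
    alarm_flags: Optional[int] = None    # raw alarm/warning bits, if exposed

    def to_row(self) -> dict:
        """Flatten to a plain dict for a time series or CSV writer."""
        return asdict(self)

sample = DomSample(port="Ethernet1/1", module_id="FTLX8571D3BCL",
                   ts_unix=1_700_000_000.0, rx_power_dbm=-2.3,
                   tx_power_dbm=-2.1, bias_ma=6.8, temp_c=34.5,
                   vcc_v=3.29, link_up=True)
print(sample.to_row()["rx_power_dbm"])
```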

Polling strategy that avoids false alarms

DOM sampling rates vary by module and platform, but in practice you want a cadence that balances overhead against detection speed. In my deployments, 10- to 60-second intervals work well for thermal and gradual power drift, while link flap correlation is handled by event-driven reads. If you poll too frequently, you risk I2C or sysfs contention and increased CPU load; if you poll too slowly, you miss the onset of a thermal runaway. A practical compromise is scheduled polling plus immediate reads on interface up/down transitions.
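The "scheduled polling plus immediate reads" compromise can be sketched with a standard-library queue: the loop sleeps until the next scheduled sweep but wakes early when a link event arrives. The `link_events` queue is an assumption; in practice it would be fed by whatever link-state notification your platform offers. A sustained flood of events would delay the sweep, so treat this as a starting point rather than a finished scheduler.

```python
import queue
import time
from typing import Callable, Optional

def run_poller(poll_all: Callable[[], None], poll_port: Callable[[str], None],
               link_events: "queue.Queue[str]", interval_s: float = 30.0,
               max_cycles: Optional[int] = None) -> None:
    """Sweep every port on a fixed cadence; read one port immediately
    whenever a link up/down event for it is queued."""
    next_poll = time.monotonic()
    cycles = 0
    while max_cycles is None or cycles < max_cycles:
        # Block until the next scheduled sweep, waking early for events.
        timeout = max(0.0, next_poll - time.monotonic())
        try:
            port = link_events.get(timeout=timeout)
        except queue.Empty:
            poll_all()                      # scheduled sweep of all ports
            cycles += 1
            # Keep a fixed cadence; if a sweep overran, restart from now.
            next_poll = max(next_poll + interval_s, time.monotonic())
            continue
        poll_port(port)                     # immediate, event-driven read

events: "queue.Queue[str]" = queue.Queue()
events.put("Ethernet1/1")                   # simulated link-down event
full_polls: list = []
port_polls: list = []
run_poller(lambda: full_polls.append(time.monotonic()), port_polls.append,
           events, interval_s=0.01, max_cycles=3)
```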

Pro Tip: Many teams set alert thresholds directly on raw DOM values, then spend hours chasing noise from temperature swings. A more stable approach is to alert on rate of change (for example, Rx power dropping more than 0.5 dB over 10 minutes) plus a confirmation condition like rising laser bias current or active link errors. This reduces nuisance alerts while catching real aging or fiber issues earlier.
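Here is a minimal sketch of the rate-of-change rule from the tip, assuming one Rx reading per poll and boolean confirmation signals computed elsewhere. `RxDropDetector` is a hypothetical name, and the 0.5 dB / 10-minute defaults simply mirror the example above.

```python
from collections import deque
from typing import Deque, Tuple

class RxDropDetector:
    """Alert when Rx power falls more than `drop_db` below its peak within
    the last `window_s` seconds AND a confirming signal is present."""

    def __init__(self, drop_db: float = 0.5, window_s: float = 600.0):
        self.drop_db = drop_db
        self.window_s = window_s
        self.history: Deque[Tuple[float, float]] = deque()  # (ts, rx_dbm)

    def update(self, ts: float, rx_dbm: float,
               bias_rising: bool, link_errors: bool) -> bool:
        self.history.append((ts, rx_dbm))
        # Discard samples that have aged out of the window.
        while self.history and ts - self.history[0][0] > self.window_s:
            self.history.popleft()
        peak = max(v for _, v in self.history)
        dropped = peak - rx_dbm > self.drop_db   # dBm difference is in dB
        return dropped and (bias_rising or link_errors)
```

A drop on its own is only recorded; the alert fires once bias current or link errors corroborate it.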

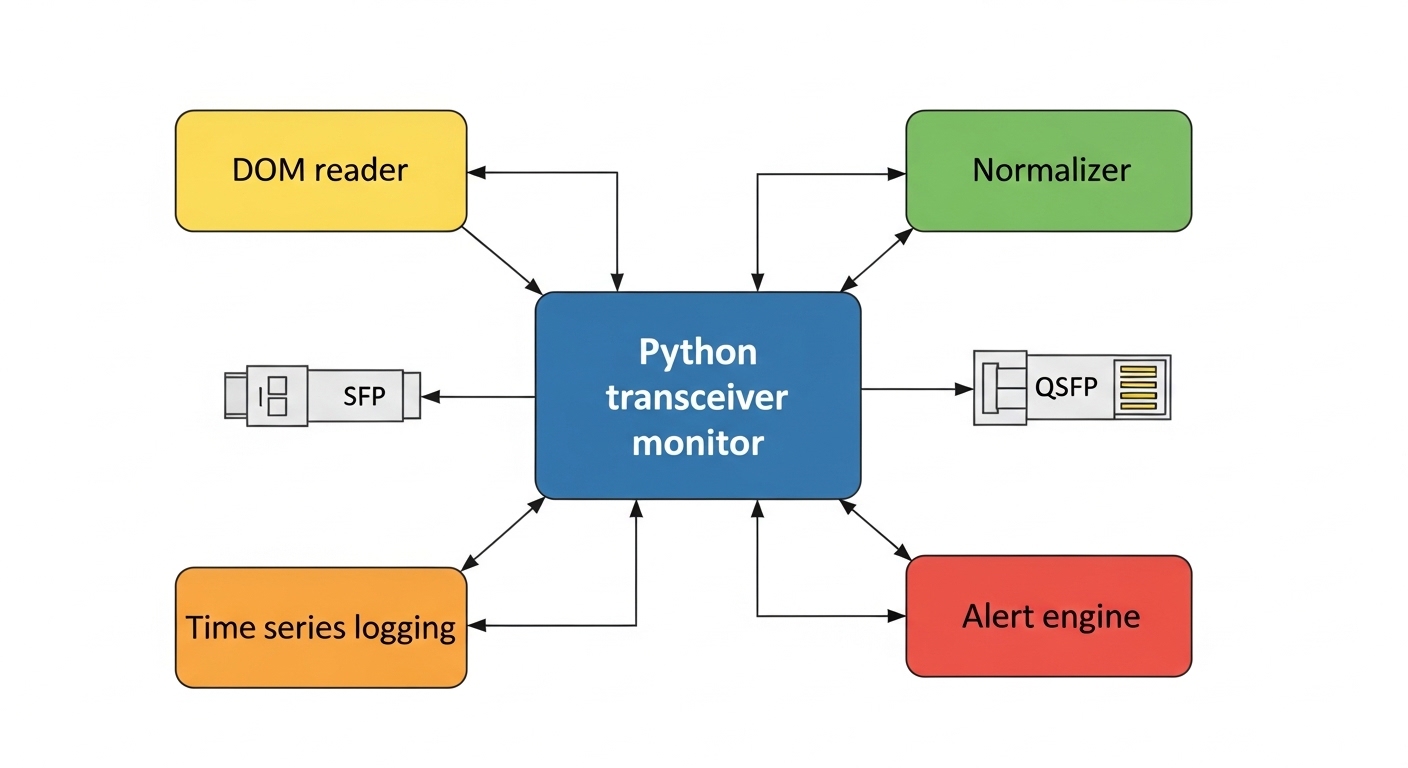

Reference implementation pattern: log, normalize, alert

You can implement the Python transceiver monitor logic in different ways depending on platform access. The common pattern is: (1) read DOM values from an interface to the module (often via I2C through a sysfs or vendor API), (2) normalize units and names, (3) write to a storage backend, and (4) evaluate alert rules. I recommend separating the concerns into modules: a reader layer, a normalization layer, a persistence layer, and an alerting layer. This structure makes it easier to swap out data sources when you move from lab switches to production chassis.
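The four layers can be expressed as small `typing.Protocol` interfaces so that each one can be swapped independently. This is a sketch under my own naming; the fake reader, normalizer, sink, and rule at the bottom are hypothetical stand-ins that only exist to show the wiring.

```python
from typing import Dict, Iterable, List, Protocol

RawReading = Dict[str, float]   # platform-specific keys and scaling
Sample = Dict[str, float]       # normalized keys and units

class Reader(Protocol):
    def read(self, port: str) -> RawReading: ...

class Normalizer(Protocol):
    def normalize(self, raw: RawReading) -> Sample: ...

class Sink(Protocol):
    def write(self, port: str, sample: Sample) -> None: ...

class AlertRule(Protocol):
    def evaluate(self, port: str, sample: Sample) -> List[str]: ...

def poll_once(ports: Iterable[str], reader: Reader, normalizer: Normalizer,
              sink: Sink, rules: Iterable[AlertRule]) -> List[str]:
    """One pass of read -> normalize -> persist -> alert."""
    alerts: List[str] = []
    rule_list = list(rules)
    for port in ports:
        sample = normalizer.normalize(reader.read(port))
        sink.write(port, sample)
        for rule in rule_list:
            alerts.extend(rule.evaluate(port, sample))
    return alerts

# Hypothetical stand-ins for each layer.
class FakeReader:
    def read(self, port: str) -> RawReading:
        return {"rx_tenth_dbm": -35.0}          # 0.1 dB units

class TenthsNormalizer:
    def normalize(self, raw: RawReading) -> Sample:
        return {"rx_power_dbm": raw["rx_tenth_dbm"] / 10}

class ListSink:
    def __init__(self) -> None:
        self.rows: list = []
    def write(self, port: str, sample: Sample) -> None:
        self.rows.append((port, sample))

class RxFloor:
    def evaluate(self, port: str, sample: Sample) -> List[str]:
        return [f"{port}: rx below floor"] if sample["rx_power_dbm"] < -3.0 else []

sink = ListSink()
alerts = poll_once(["Ethernet1/1"], FakeReader(), TenthsNormalizer(), sink, [RxFloor()])
```

Moving from a lab switch to a production chassis then means writing a new `Reader`, while the alert rules stay untouched.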

DOM access options (what actually works)

On Linux-based network operating systems, DOM values are frequently exposed through sysfs paths or vendor SDK abstractions. Some platforms provide per-port I2C device nodes; others map transceiver telemetry to standardized files. On switch OS variants, you may use an API that returns normalized DOM metrics. Regardless of access method, the monitoring script should record: module identifier, port identifier, poll timestamp, and read latency.
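The metadata requirement is easy to enforce at the read boundary. This sketch assumes telemetry is exposed as a readable file. The file name in the example is hypothetical, not a real sysfs path, and your platform may need an SDK or API call instead.

```python
import tempfile
import time
from pathlib import Path
from typing import Dict, Union

def timed_read(port: str, module_id: str, path: Union[str, Path]) -> Dict[str, object]:
    """Read one telemetry value and attach module id, port id,
    poll timestamp, and read latency."""
    ts = time.time()                      # wall-clock time for storage
    start = time.perf_counter()           # high-resolution clock for latency
    value = Path(path).read_text().strip()
    latency_ms = (time.perf_counter() - start) * 1000.0
    return {"port": port, "module_id": module_id, "ts_unix": ts,
            "raw": value, "read_latency_ms": latency_ms}

with tempfile.TemporaryDirectory() as d:
    node = Path(d) / "rx_power"           # stand-in for a hypothetical node
    node.write_text("-2.31\n")
    reading = timed_read("Ethernet1/1", "SFP-10G-SR", node)
```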

Normalization and schema choices

Normalization matters because different vendors label fields differently and sometimes use different scaling. For example, temperature might be reported as an integer in tenths of a degree, while power might be reported as a signed integer in 0.1 dB increments. Use a conversion layer so your alert rules operate on consistent units: dBm, C, mA, and V. Also add metadata like SFP/QSFP type, wavelength (if known), and fiber type (SR for multimode, LR/ER for single-mode) so triage is faster during incidents.
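As a concrete example of scaling, the SFF-8472 diagnostic page (A2h, bytes 96 to 105, internally calibrated modules) encodes temperature as a signed value in 1/256 C, Vcc in 100 uV, Tx bias in 2 uA, and Tx/Rx power as linear values in 0.1 uW. The sketch below converts those raw bytes to the units above. It assumes your platform hands you the raw bytes; many OS abstractions have already done this conversion for you, and externally calibrated modules also need calibration constants.

```python
import math
import struct
from typing import Dict

def sff8472_to_units(raw: bytes) -> Dict[str, float]:
    """Convert A2h bytes 96-105 (big-endian) into C, V, mA, and dBm."""
    temp, vcc, bias, tx, rx = struct.unpack(">hHHHH", raw[:10])

    def tenth_uw_to_dbm(v: int) -> float:
        mw = v * 0.0001                  # 0.1 uW per LSB -> mW
        # Zero light maps to -inf here; clamp it if your backend cannot store it.
        return 10 * math.log10(mw) if mw > 0 else float("-inf")

    return {
        "temp_c": temp / 256.0,          # signed, 1/256 C per LSB
        "vcc_v": vcc * 100e-6,           # 100 uV per LSB
        "bias_ma": bias * 0.002,         # 2 uA per LSB
        "tx_power_dbm": tenth_uw_to_dbm(tx),
        "rx_power_dbm": tenth_uw_to_dbm(rx),
    }
```

Because optical power arrives in linear units, the dBm conversion requires a logarithm. A fixed multiplier will never be correct here, which is one common source of the scaling bugs described later.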

Alerting model: thresholds plus context

Absolute thresholds catch obvious failures, while trend alerts catch early degradation. For example, you might define: Rx power below a safe floor, Tx bias current above a maximum, or module temperature above a high-water mark. Then add context: if Rx power drops while link errors rise, classify as fiber attenuation or connector contamination; if temperature rises with stable power, classify as ventilation or module heating. This classification reduces time-to-fix for field engineers.
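The two classification rules above translate directly into a function over trend deltas. The thresholds here are illustrative placeholders, not vendor values, and "delta" means the current value minus a baseline such as the reading from 10 minutes earlier.

```python
from typing import Optional

def classify_event(rx_delta_db: float, tx_delta_db: float, temp_delta_c: float,
                   link_errors_rising: bool, rx_drop_db: float = 0.5,
                   temp_rise_c: float = 5.0, stable_db: float = 0.2) -> Optional[str]:
    """Return a probable-cause label for triage, or None if no rule matches."""
    power_stable = abs(rx_delta_db) < stable_db and abs(tx_delta_db) < stable_db
    if rx_delta_db <= -rx_drop_db and link_errors_rising:
        return "fiber attenuation or connector contamination"
    if temp_delta_c >= temp_rise_c and power_stable:
        return "ventilation or module heating"
    return None
```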

Choosing optics and DOM support: SFP vs QSFP constraints that matter

Not every transceiver exposes the same DOM fields, and not every switch platform reads DOM identically. Before you build a Python transceiver monitor at scale, verify that your exact module models and your exact switch software version expose the telemetry you plan to use. In my experience, the most common compatibility issues are missing fields (for example, no bias current), different alarm flag encodings, or read failures under load. Build a small validation matrix in a lab with the same OS and module SKUs you will deploy.

Key spec table to ground expectations

The table below compares representative 10G and 40G short-reach optics. Use it to confirm reach and connector fit, then validate DOM field availability on your platform.

| Module example | Data rate | Wavelength | Reach | Connector | Typical DOM support | Operating temp |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G | 850 nm | Up to ~300 m (MMF) | LC duplex | DDMI/DOM: Tx/Rx power, bias, temp, voltage | 0 to 70 C (varies by SKU) |
| Finisar FTLX8571D3BCL | 10G | 850 nm | Up to ~300 m (MMF) | LC duplex | DOM fields typically present | Commercial range (varies) |
| FS.com SFP-10GSR-85 | 10G | 850 nm | Up to ~400 m (MMF, depends on fiber) | LC duplex | DOM availability depends on exact model | Commercial range (varies) |
| Common QSFP+ SR (various vendors) | 40G | 850 nm | Up to ~100 m (MMF; depends on spec) | MPO-12 | DOM: per-lane power and temp (often) | Varies by vendor |

What to verify before production

For each switch model, confirm: (1) DOM reads succeed consistently, (2) values fall within expected ranges (no scaling mistakes), (3) alarm flags map correctly, and (4) the monitoring agent can recover from transient read errors. Also confirm whether your platform supports third-party optics without disabling DOM access. Some environments enforce optics compatibility policies that can prevent certain transceivers from exposing full telemetry.

Selection criteria checklist for a Python transceiver monitor

When you are deploying a monitor across hundreds of ports, selection is as important as scripting. Use this ordered checklist to avoid late surprises.

- Distance and fiber type: validate SR vs LR vs ER expectations against your actual MMF/SMF plant and measured link loss.

- Switch compatibility: confirm the switch OS and driver expose DOM to your monitoring method (sysfs, SDK, or API).

- DOM field completeness: ensure the module models provide Rx power, Tx power, temperature, and bias current if your alerts depend on them.

- DOM alarm semantics: confirm whether the platform reports DDMI alarm thresholds or only raw telemetry, and whether scaling matches your conversions.

- Operating temperature: compare transceiver spec temperature range to your rack airflow measurements; many failures correlate to poor ventilation.

- Vendor lock-in risk: check whether third-party optics provide equivalent telemetry and whether the platform blocks them.

- Read latency and overhead: test poll intervals under peak traffic; confirm your agent does not cause interface flaps or CPU spikes.

- Data retention and cost: estimate storage growth from poll frequency; decide whether you store raw data or only aggregated points.
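For the data retention item, the arithmetic is simple enough to script. The bytes-per-point figure depends heavily on the backend (compression, tags, indexes), so treat it as an assumption to measure rather than a constant.

```python
def estimate_storage_gb(ports: int, fields: int, poll_interval_s: float,
                        bytes_per_point: float, retention_days: int) -> float:
    """Raw storage estimate: ports x fields x polls/day x days x bytes/point."""
    polls_per_day = 86_400 / poll_interval_s
    points = ports * fields * polls_per_day * retention_days
    return points * bytes_per_point / 1e9
```

For example, 480 ports (ten 48-port ToRs) with five DOM fields at a 30-second interval, an assumed 16 bytes per point, and 90 days of retention comes to roughly 10 GB of raw points. Halving the interval doubles that.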

Common pitfalls and troubleshooting (with root cause and fixes)

Below are issues I have encountered while rolling out DOM telemetry monitoring. Each includes the root cause and a practical mitigation.

Rx power looks “flat” or jumps by 10x

Root cause: unit scaling mismatch between what the platform reports and what your normalization layer assumes (for example, 0.1 dB vs 1 dB increments). Sometimes the field is already converted by the OS, but your script converts again. Solution: validate by comparing one live read against vendor documentation or against a known-good dashboard; add sanity checks like expected value ranges and alert if values exceed physical limits.
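A physical-limits check is a cheap first line of defense. The bounds below are illustrative and should be tightened from your module datasheets. A range check catches gross errors, such as tenths of a degree treated as degrees, but not every double conversion, so the comparison against a known-good read is still needed.

```python
from typing import Dict, List, Optional

# Illustrative plausibility bounds in normalized units; tune per datasheet.
PLAUSIBLE = {
    "rx_power_dbm": (-40.0, 10.0),
    "tx_power_dbm": (-40.0, 10.0),
    "temp_c": (-40.0, 100.0),
    "bias_ma": (0.0, 131.1),   # SFF-8472 bias field cannot encode more
    "vcc_v": (2.5, 4.0),
}

def sanity_violations(sample: Dict[str, Optional[float]]) -> List[str]:
    """Names of fields outside plausible ranges; a hit usually signals a
    scaling bug rather than a real optical event. None fields are skipped."""
    bad = []
    for field, (lo, hi) in PLAUSIBLE.items():
        v = sample.get(field)
        if v is not None and not (lo <= v <= hi):
            bad.append(field)
    return bad
```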

Alerts fire during maintenance and cause noise storms

Root cause: monitoring logic evaluates DOM thresholds even when ports are administratively down, optics are being reseated, or the link is in a transitional state. The DOM values can briefly go out of range during warm-up or when the module is not fully initialized. Solution: gate alerts on interface operational state and include a “stabilization window” after link-up (for example, wait 2 to 3 polls) before evaluating power and temperature thresholds.
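The gating and stabilization window can be tracked per port with a counter of consecutive "up" polls. `AlertGate` and `skip_polls` are hypothetical names. Any poll where the port is administratively or operationally down resets the window.

```python
from typing import Dict

class AlertGate:
    """Allow DOM threshold evaluation only after a port has been admin-up
    and oper-up for more than `skip_polls` consecutive polls."""

    def __init__(self, skip_polls: int = 3):
        self.skip_polls = skip_polls
        self.up_streak: Dict[str, int] = {}

    def should_evaluate(self, port: str, admin_up: bool, oper_up: bool) -> bool:
        if admin_up and oper_up:
            self.up_streak[port] = self.up_streak.get(port, 0) + 1
        else:
            self.up_streak[port] = 0   # reset on shutdown, reseat, or flap
        return self.up_streak[port] > self.skip_polls
```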

Read failures under load or after 24/7 runtime

Root cause: file descriptor leaks, blocking I/O, or contention on shared I2C buses. Some platforms also throttle sensor reads when the system is under CPU pressure. Solution: implement timeouts, retry with exponential backoff, and ensure the monitoring loop is non-blocking. Add metrics for read latency and error counts; if errors correlate with CPU spikes, reduce poll frequency or move polling to a dedicated host closer to the switch management plane.

Third-party optics show “missing DOM” fields

Root cause: the module does not support the full DOM feature set your code expects, or the switch OS only exposes partial telemetry for that transceiver type. Solution: design your Python transceiver monitor to degrade gracefully: mark absent fields as null, adjust alert rules to require only fields that exist, and keep a compatibility map per module model.
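Graceful degradation can be driven by a per-model capability map built from lab validation. The map below is illustrative: `THIRD-PARTY-SR-X` is a placeholder SKU, and the entries should come from your own validation matrix.

```python
from typing import Dict, Optional, Set

# Illustrative capability map; populate it from lab validation results.
COMPAT: Dict[str, Set[str]] = {
    "SFP-10G-SR": {"rx_power_dbm", "tx_power_dbm", "bias_ma", "temp_c", "vcc_v"},
    "THIRD-PARTY-SR-X": {"rx_power_dbm", "temp_c"},   # placeholder SKU
}
ALL_FIELDS = ("rx_power_dbm", "tx_power_dbm", "bias_ma", "temp_c", "vcc_v")

def fill_missing(reading: Dict[str, float]) -> Dict[str, Optional[float]]:
    """Emit every schema field, using None for anything not reported."""
    return {f: reading.get(f) for f in ALL_FIELDS}

def rule_applies(model: str, required: Set[str]) -> bool:
    """Evaluate a rule only if the model exposes every field it needs;
    unknown models match no rule until they have been validated."""
    return required <= COMPAT.get(model, set())
```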

Real-world deployment scenario: leaf-spine with DOM-driven alerts

In a 3-tier data center leaf-spine topology, I monitored 48-port 10G ToR switches connecting to aggregation and a pair of spines. Each ToR had 10G SR optics to server NICs over multimode fiber; we deployed a Python transceiver monitor agent that polled DOM every 30 seconds and wrote normalized values to a time series database with 15-second scrape granularity for link state events. Over a quarter, the system caught two degradations early: one module's Rx power dropped by about 1.0 dB over 12 minutes while bias current rose, and another showed temperature excursions correlated with a failing fan tray. In both cases, we scheduled maintenance ahead of the next regular change window and avoided unplanned link drops.

Cost and ROI note: where the savings really come from

Price varies widely by environment, but a practical estimate is that third-party optics run roughly 20% to 60% less than OEM equivalents, while OEM modules may come with tighter compatibility guarantees. The monitoring agent itself is usually low cost: a small Linux host, open source tooling, and your engineering time. The ROI typically comes from reduced downtime and fewer truck rolls: catching a degrading transceiver via DOM trend alerts can prevent a link outage that would otherwise impact storage replication or east-west traffic. Total cost of ownership also improves when you avoid rework caused by missing DOM telemetry; that is why the selection checklist and compatibility validation matter.

FAQ

What exactly does a Python transceiver monitor read from SFP and QSFP modules?

Most deployments read DOM values such as Rx power, Tx power, laser bias current, module temperature, and supply voltage, plus link or alarm indicators when available. The exact field set depends on the module model and how your switch OS exposes telemetry. Your monitor should normalize units so alert rules remain consistent across vendors.

Can I monitor third-party optics reliably, or will DOM be missing?

Often you can, but it is not guaranteed. I have seen partial telemetry on some third-party models, especially when the switch enforces compatibility checks. To reduce risk, test the exact module SKU with your switch software version in a staging rack before broad rollout.

How often should the monitor poll DOM values?

A common starting point is 10 to 60 seconds per port for thermal and power drift detection. For incident response, you can also trigger immediate reads on interface up/down transitions. Avoid aggressive polling without measuring read latency and CPU impact on the management plane.

What thresholds should I use for alerts?

Use vendor documentation and your link budget as the baseline for absolute thresholds, then add trend-based rules for early warning. For example, alerting on a sustained Rx power drop combined with rising bias current tends to be more actionable than a single static threshold. Calibrate thresholds with historical data so you do not overreact to normal variation.

What is the fastest way to troubleshoot false alerts?

First verify normalization and scaling by comparing one port’s DOM values to a known-good source. Next, confirm gating logic: ensure alerts are evaluated only when the interface is operational and after a stabilization window on link-up. Finally, check read error rates and timestamps to ensure you are not alerting on stale or failed reads.

Do I need a time series database, or can I just write CSV logs?

CSV logs can work for small deployments, but time series databases make it easier to build trend alerts and retention policies. If you expect growth to hundreds of ports, aggregations and fast queries become important. In either case, store timestamps and normalized units so future engineers can interpret data correctly.
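If you start with CSV, you can keep the files self-describing by putting units in column names and writing UTC ISO-8601 timestamps. This is a sketch assuming rows shaped like the normalized samples used earlier (with a `ts_unix` key). Missing fields become empty cells.

```python
import csv
import io
from datetime import datetime, timezone
from typing import Iterable, TextIO

FIELDS = ["ts_utc", "port", "rx_power_dbm", "tx_power_dbm", "bias_ma", "temp_c", "vcc_v"]

def write_csv(rows: Iterable[dict], fh: TextIO) -> None:
    """Write normalized samples; extra keys (such as ts_unix) are dropped."""
    writer = csv.DictWriter(fh, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    for row in rows:
        out = dict(row)
        out["ts_utc"] = datetime.fromtimestamp(row["ts_unix"], tz=timezone.utc).isoformat()
        writer.writerow(out)

buf = io.StringIO()
write_csv([{"ts_unix": 0, "port": "Ethernet1/1", "rx_power_dbm": -2.3}], buf)
```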

If you want your monitoring to be dependable, focus on normalization, compatibility validation, and alert logic that includes link context. Next, build on this by adding a resilience layer and correlating DOM trends with interface error counters.

Author bio: I design and deploy Python-based network monitoring agents that read optical telemetry and turn it into incident-ready alerts. Field-tested across data center racks, I prioritize measurable reliability over vendor-specific dashboards.