A regional ISP was paying recurring costs for leased wavelengths, yet still had to manage optics replacement, spares, and downtime. This article walks through a real deployment where the team shifted from leased optical transceivers to an ownership model on DWDM dark fiber. It helps network engineers and CTOs evaluate build-versus-buy decisions with measurable outcomes, not guesswork.

Problem: leased optics costs versus operational control on dark fiber

The challenge started with a common pattern: the provider had long-haul capacity available but relied on a third party to supply transceivers and manage wavelength provisioning. Over 18 months, the ISP saw rising monthly recurring charges and inconsistent lead times for replacement modules after link flaps. Their ops team also lacked visibility into optical power budgets, DOM events, and remote alarms tied to the vendor’s equipment. As a result, the team could restore service, but they could not reliably prevent repeat failures.

In a typical DWDM dark fiber model, the fiber pair is “dark” until you light it with optics, and DWDM splits one fiber into multiple narrow wavelength channels. IEEE 802.3 defines Ethernet PHY behavior, while DWDM performance depends heavily on ITU-T channel grids, transceiver specifications, and the optical link budget. The decision was whether to keep leasing transceivers and accept lower control, or to own optics and internalize monitoring and change management.

Environment specs: what the network actually looked like

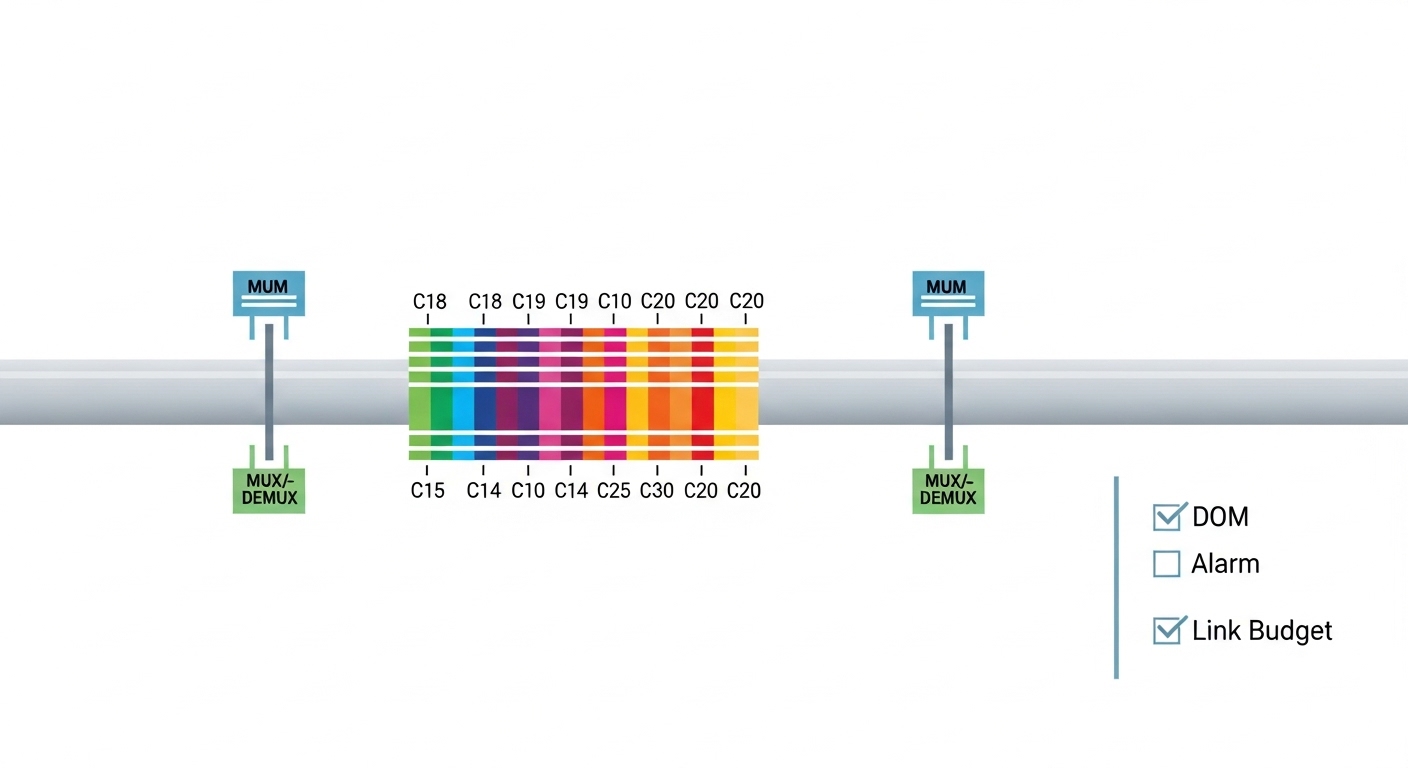

The deployment ran between two metro sites connected by a routed fiber path of 74 km. Each site had a 100G coherent core and a 10G/25G aggregation layer, but the DWDM segment carried multiple 10G channels on a shared wavelength plan. The team targeted ITU-T 100 GHz spacing (common in C-band) and used a link budget sized for worst-case connector loss, aging, and temperature drift.

Optical assumptions and channel plan

- Fiber type: G.652D single-mode

- Band: C-band

- Channel spacing: 100 GHz grid

- Target reach: 80 km class to include margin

- Transceiver family: 10G DWDM SFP+ or pluggable equivalents with ITU grid alignment

- Monitoring: DOM with alarm thresholds and optical power telemetry
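The assumptions above feed a worst-case link budget. The sketch below is illustrative only: the attenuation coefficient, connector and splice counts, per-item losses, and the module Tx power and Rx sensitivity are assumed placeholder values, not figures from this deployment. Replace them with OTDR measurements and datasheet numbers.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Worst-case span loss model. Every default below is an assumed placeholder."""
    length_km: float
    fiber_db_per_km: float = 0.22   # assumed G.652D attenuation near 1550 nm
    connectors: int = 4             # assumed number of mated connector pairs
    connector_db: float = 0.5       # assumed worst-case loss per connector pair
    splices: int = 10               # assumed number of fusion splices
    splice_db: float = 0.1          # assumed loss per splice
    other_db: float = 0.0           # e.g. passive mux/demux insertion loss, if any

    def total_loss_db(self) -> float:
        """Sum of fiber, connector, splice, and other passive losses."""
        return (self.length_km * self.fiber_db_per_km
                + self.connectors * self.connector_db
                + self.splices * self.splice_db
                + self.other_db)

    def margin_db(self, tx_min_dbm: float, rx_sensitivity_dbm: float) -> float:
        """Power budget (minimum Tx minus Rx sensitivity) minus total span loss."""
        return (tx_min_dbm - rx_sensitivity_dbm) - self.total_loss_db()


span = LinkBudget(length_km=74)
# Assumed datasheet values for an 80 km class module: 0 dBm min Tx, -24 dBm sensitivity.
margin = span.margin_db(tx_min_dbm=0.0, rx_sensitivity_dbm=-24.0)
print(f"span loss {span.total_loss_db():.2f} dB, remaining margin {margin:.2f} dB")
```

The remaining margin is what has to absorb aging, temperature drift, and future repairs. If it falls below your policy value, the span needs cleaner connectors, a higher-budget module, or amplification.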

Technical specifications comparison (owned vs leased optics)

| Spec | Owned DWDM dark fiber optics (example) | Leased optics (third-party typical) |
|---|---|---|
| Data rate | 10.3125 Gb/s (10G) | 10.3125 Gb/s (10G) |
| Wavelength | C-band ITU grid channel (e.g., 193.1–196.1 THz range) | Pre-assigned channel, grid locked |
| Reach class | 80 km (with margin) | Often stated as 80 km, but varies by vendor config |
| Connector | LC duplex | LC duplex |
| DOM support | Yes (Tx/Rx power, temperature, voltage) | Partial or vendor-only visibility |
| Operating temperature | 0 to 70 °C typical for pluggables; verify datasheet | Varies; may include extended options |
| Power class | Defined Tx power and sensitivity per datasheet | Defined in lease contract, sometimes opaque |

For concrete reference, many vendors publish datasheets for 10G C-band ITU grid DWDM pluggables. Do not confuse these with widely deployed short-reach parts: the Finisar FTLX8571D3BCL and the Cisco SFP-10G-SR, for example, are 850 nm multimode modules, not DWDM optics. DWDM models are separate part numbers that differ by wavelength and reach. Always validate against the exact datasheet for your ITU channel number and optics class. For standards context on Ethernet PHY requirements, see [Source: IEEE 802.3]. For optical channel grid concepts, use vendor DWDM product notes and [Source: ITU-T recommendations].
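When checking a datasheet against a channel plan, it helps to convert between grid frequency and wavelength. The sketch below uses the ITU-T G.694.1 definition for the 100 GHz grid (193.1 THz anchor plus an integer number of steps). The `vendor_channel_to_thz` helper is an assumption: it encodes one common labeling convention in which channel 31 is 193.1 THz, so confirm the numbering your vendor actually uses.

```python
C_M_PER_S = 299_792_458   # speed of light in vacuum (exact by SI definition)
ANCHOR_THZ = 193.1        # ITU-T G.694.1 anchor frequency


def grid_frequency_thz(n: int, spacing_ghz: float = 100.0) -> float:
    """Frequency of grid slot n, counted in steps from the 193.1 THz anchor."""
    return ANCHOR_THZ + n * spacing_ghz / 1000.0


def wavelength_nm(freq_thz: float) -> float:
    """Vacuum wavelength in nanometres for a frequency in THz."""
    return C_M_PER_S / (freq_thz * 1e12) * 1e9


def vendor_channel_to_thz(channel: int) -> float:
    """ASSUMED labeling convention: channel 31 -> 193.1 THz on a 100 GHz grid."""
    return 190.0 + channel * 0.1


for ch in (21, 31, 41):
    f = vendor_channel_to_thz(ch)
    print(f"channel {ch}: {f:.1f} THz, {wavelength_nm(f):.2f} nm")
```

If the wavelength printed for a channel does not match the module label to two decimal places, treat that as a numbering mismatch to resolve before installation.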

Chosen solution: own the optics, keep the provisioning disciplined

The team chose to own the DWDM transceivers on DWDM dark fiber while using a structured provisioning workflow. The key was not just purchasing modules; it was operationalizing optics governance: inventory control, channel assignment, DOM monitoring, and a repeatable acceptance test. The provider still collaborated with a carrier for fiber construction and patching, but they removed recurring charges tied to transceiver leasing.

Why owning worked better in this case

- Telemetry ownership: DOM readings were ingested into their monitoring stack, enabling alerting on Tx power drift and Rx power degradation.

- Faster spares: they stocked a small pool of hot spares per ITU channel, reducing mean time to repair.

- Change control: optical channel swaps followed a documented process, reducing accidental mismatch risk.

- Budget predictability: the amortized cost of optics replacements became stable versus ongoing lease fees.

Pro Tip: In DWDM links, most “mystery outages” are not total fiber failures; they are usually a subtle optical power budget shift from connector or patch panel swaps, or a channel mismatch during maintenance. DOM trending (Tx/Rx power over time) can reveal the drift days before the link drops, so you can intervene before the link-down alarms fire.
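One way to act on DOM trending is to fit a straight line to recent Rx power readings and estimate when it would cross an alarm level. This is a minimal sketch under stated assumptions: the sample values and the -21 dBm threshold are invented for illustration, and real drift is often not linear, so treat the estimate as a prompt to investigate, not a prediction.

```python
from typing import Optional, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_threshold(days: Sequence[float], rx_dbm: Sequence[float],
                         threshold_dbm: float) -> Optional[float]:
    """Days from the last sample until the fitted line reaches the threshold.

    Returns None when the trend is flat or improving.
    """
    slope, intercept = fit_line(days, rx_dbm)
    if slope >= 0:
        return None
    crossing_day = (threshold_dbm - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Illustrative daily Rx power samples (dBm); not data from this deployment.
sample_days = [0, 1, 2, 3, 4]
sample_rx = [-18.0, -18.2, -18.4, -18.6, -18.8]
print(days_until_threshold(sample_days, sample_rx, threshold_dbm=-21.0))
```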

Implementation steps: how the team rolled out safely

The rollout ran in phases to avoid service disruption. Each phase used a controlled maintenance window, a rollback plan, and validation at both optical and Ethernet layers.

Phase 1: acceptance tests on the dark fiber lighting

- Fiber verification: OTDR traces and end-to-end loss checks confirmed the link budget assumptions for the 74 km route.

- Channel mapping: ITU channel assignments were recorded against physical patch panel ports to prevent wavelength confusion.

- Optical verification: Tx output power was measured at the transmitter and received power at the far-end receiver, then both were compared against the datasheet Tx range and Rx sensitivity using vendor-supported test procedures.

- Ethernet verification: link came up cleanly under IEEE 802.3-compatible configuration, with error counters observed for stability.

Phase 2: monitoring and alarm thresholds

- DOM telemetry was polled for Tx power, Rx power, temperature, and voltage.

- Thresholds were set using observed baseline values from the first stable week, not factory defaults.

- Alarms were correlated with maintenance tickets to distinguish expected transients from real degradation.
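Before thresholds can be applied, raw DOM values usually need converting into engineering units. The sketch below assumes internally calibrated modules that follow the SFF-8472 encoding (optical power in units of 0.1 µW, temperature as a signed 16-bit value in 1/256 °C, supply voltage in units of 100 µV). How the raw words are read (SNMP, CLI scraping, streaming telemetry) is platform-specific and not shown; the function names are illustrative.

```python
import math


def power_raw_to_dbm(raw: int) -> float:
    """Convert a 16-bit optical power word (LSB = 0.1 uW) to dBm."""
    milliwatts = raw / 10_000.0
    if milliwatts <= 0:
        return float("-inf")   # no light detected
    return 10.0 * math.log10(milliwatts)


def temperature_raw_to_c(raw: int) -> float:
    """Convert a 16-bit two's-complement temperature word (LSB = 1/256 C)."""
    signed = raw - 0x10000 if raw >= 0x8000 else raw
    return signed / 256.0


def voltage_raw_to_v(raw: int) -> float:
    """Convert a 16-bit supply voltage word (LSB = 100 uV) to volts."""
    return raw / 10_000.0


print(power_raw_to_dbm(100), temperature_raw_to_c(0x1900), voltage_raw_to_v(33000))
```

Working in dBm rather than milliwatts keeps drift, loss, and margin on the same additive scale as the link budget.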

Phase 3: spares and operational readiness

- They stocked spares by ITU channel and connector type (LC) to reduce “wrong module” repairs.

- Field technicians received a one-page runbook: check channel label, confirm DOM readings, verify interface mapping.

- They documented vendor part numbers for the exact optics class to reduce compatibility surprises.

Measured results: what improved after switching to owned DWDM optics

After the cutover, the ISP tracked service reliability, incident response, and costs. The results were practical and measurable: fewer prolonged outages, faster replacements, and better visibility into optical health.

Operational metrics

- Mean time to repair: dropped from about 6.5 hours to 2.1 hours because spares were on hand and DOM confirmed which side degraded.

- Incident frequency: link-related events decreased by ~35% over the next quarter as the team used DOM trending to catch drift early.

- Change success rate: improved to 98% for maintenance windows because channel mapping and acceptance tests were standardized.

Cost and TCO note

Pricing varied by vendor and channel count, but a realistic range for 10G C-band DWDM pluggables in the market was roughly $800 to $2,000 per module for reputable third-party or OEM equivalents, plus installation labor and test time. Leased optics often appeared cheaper month-to-month but accumulated recurring fees and sometimes required vendor-only replacements. Over a 3-year horizon, the ISP estimated TCO favored ownership once they accounted for spares, downtime cost, and the reduced operational burden. Power consumption differences between pluggables were usually minor; the bigger savings came from removing recurring lease charges and cutting outage duration.
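A cumulative-cost comparison makes the ownership break-even point explicit. Every input below is a hypothetical placeholder chosen for illustration, including the lease fee, channel count, spares count, labor, and internal operations cost; only the module price falls inside the range quoted above. The ISP's actual figures are not given in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TcoInputs:
    """All values are hypothetical; substitute your own contract and quotes."""
    channels: int = 8
    spare_modules: int = 2
    module_price: float = 1400.0          # within the $800 to $2,000 range above
    install_per_channel: float = 300.0    # assumed labor and test time
    ops_per_channel_month: float = 20.0   # assumed internal monitoring cost
    lease_per_channel_month: float = 300.0  # assumed lease fee


def owned_cost(p: TcoInputs, months: int) -> float:
    """Upfront modules (two per channel plus spares) and install, plus ops."""
    modules = p.channels * 2 + p.spare_modules
    upfront = modules * p.module_price + p.channels * p.install_per_channel
    return upfront + months * p.channels * p.ops_per_channel_month


def leased_cost(p: TcoInputs, months: int) -> float:
    """Recurring lease fees only."""
    return months * p.channels * p.lease_per_channel_month


def break_even_month(p: TcoInputs, horizon: int = 36) -> Optional[int]:
    """First month where cumulative owned cost is at or below leased cost."""
    for month in range(1, horizon + 1):
        if owned_cost(p, month) <= leased_cost(p, month):
            return month
    return None


inputs = TcoInputs()
print(break_even_month(inputs), owned_cost(inputs, 36), leased_cost(inputs, 36))
```

The model deliberately leaves out downtime cost and module failures; adding them as extra terms would let you test how sensitive the break-even month is to each assumption.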

Limitations: ownership does not remove vendor dependencies entirely. You still need compatible optics with your switch or line system, and you must respect wavelength and grid constraints. If you own optics, you also own the responsibility for inventory accuracy and the operational discipline to prevent mismatched channel installs.

Common mistakes and troubleshooting tips

Even strong teams hit predictable failure modes with DWDM dark fiber. Here are the most common issues seen in the field, with root causes and fixes.

Wrong ITU channel or swapped wavelength pair

Root cause: a module installed into the wrong port during patching or maintenance, or labels faded over time. DWDM channels are narrow, so a mismatch can prevent optical lock.

Solution: implement a channel-to-port register and scan labels before insertion. Validate DOM Tx/Rx power immediately after link bring-up and compare against baseline.
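A channel-to-port register can be as simple as a lookup table that is checked before a module goes into a port. The sketch below is a minimal illustration: the site names, port labels, and channel numbers are hypothetical, and a production register would live in an inventory system, not in code.

```python
from typing import Dict, Tuple

PortKey = Tuple[str, str]   # (site, patch-panel port)

# Hypothetical register entries for illustration only.
REGISTER: Dict[PortKey, int] = {
    ("site-a", "pp1/01"): 31,
    ("site-a", "pp1/02"): 32,
    ("site-b", "pp1/01"): 31,
    ("site-b", "pp1/02"): 32,
}


def check_insertion(register: Dict[PortKey, int], site: str, port: str,
                    module_channel: int) -> Tuple[bool, str]:
    """Compare the channel on a module label with the register entry for a port."""
    expected = register.get((site, port))
    if expected is None:
        return False, f"{site} {port}: port is not in the register"
    if expected != module_channel:
        return False, (f"{site} {port}: expected channel {expected}, "
                       f"module is channel {module_channel}")
    return True, f"{site} {port}: channel {module_channel} matches the register"


print(check_insertion(REGISTER, "site-a", "pp1/02", 31))
```

Scanning the module label into `module_channel` instead of typing it removes one more chance for a transcription error.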

DOM alarm thresholds left at factory defaults

Root cause: factory thresholds may not match your specific link budget, connector quality, or aging profile. This leads to noisy alerts or missed drift.

Solution: build baseline telemetry during the first stable week and set alert thresholds as a measured drop from that baseline, expressed in dB (for example, a conservative alarm band well before link loss). Correlate alerts with maintenance windows.
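Baseline-derived thresholds can be computed directly from the first week of Rx power samples. In the sketch below, the 1.5 dB warning drop, the 3 dB critical drop, and the 2 dB floor above receiver sensitivity are assumed example values, and the samples are invented; tune them to your own link budget.

```python
from statistics import median
from typing import Dict, Sequence


def thresholds_from_baseline(rx_samples_dbm: Sequence[float],
                             rx_sensitivity_dbm: float,
                             warn_drop_db: float = 1.5,     # assumed
                             crit_drop_db: float = 3.0,     # assumed
                             floor_margin_db: float = 2.0   # assumed
                             ) -> Dict[str, float]:
    """Derive warning and critical Rx thresholds from observed baseline power.

    Thresholds are never allowed below sensitivity plus a floor margin, so an
    alarm always fires before the receiver reaches its limit.
    """
    baseline = median(rx_samples_dbm)
    floor = rx_sensitivity_dbm + floor_margin_db
    return {
        "baseline_dbm": baseline,
        "warn_dbm": max(baseline - warn_drop_db, floor),
        "crit_dbm": max(baseline - crit_drop_db, floor),
    }


# Illustrative first-week samples (dBm); not data from this deployment.
week_one = [-18.1, -18.0, -17.9, -18.0, -18.2]
print(thresholds_from_baseline(week_one, rx_sensitivity_dbm=-24.0))
```

The median is used instead of the mean so that a single transient reading during the baseline week does not shift the thresholds.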

Connector and patch panel loss growth after “simple” moves

Root cause: dust or micro-scratches on fiber end faces after frequent patching. Even a small increase in loss can push the receiver near sensitivity limits.

Solution: use end-face inspection before mating, adopt cleaning SOPs, and re-measure optical power after any fiber handling. If you see Rx power decline without temperature change, suspect cleanliness or connector damage.

Switch compatibility surprises with pluggables

Root cause: some line cards enforce transceiver vendor or revision checks, or they expect specific optics parameterization. This can show up as link not coming up or intermittent errors.

Solution: validate compatibility in a lab using the same switch model and firmware. Confirm the module conforms to expected interface electrical characteristics and supported optical wavelength plan.

FAQ

What does DWDM dark fiber mean in practice?

It means the physical fiber is installed but not carrying active wavelengths until you deploy optics and a DWDM system. In practice, you light multiple narrow wavelength channels across one pair of fibers, typically in C-band with ITU grid spacing. The result is higher capacity without trenching new fiber.

Is owning DWDM optics always cheaper than leasing?

Not always. If you have very low channel counts, short contract terms, or minimal maintenance requirements, leasing can be competitive. Ownership tends to win when you value telemetry control, spares availability, and predictable multi-year TCO.

How do we ensure optical compatibility with our switches or line cards?

Start with the vendor datasheets and your equipment compatibility matrix. Verify wavelength plan, reach class, connector type, and DOM behavior. Then run a pilot in the same firmware environment before scaling.

What should we monitor with DOM on a DWDM dark fiber link?

Track Tx power, Rx power, temperature, and voltage. The most actionable signal is Rx power trending over time: it shows degradation long before hard link failure. Set thresholds based on baseline measurements rather than factory defaults.

What are the biggest risks when managing channelized optics internally?

Channel mismatch during maintenance and optical budget drift due to connector issues are the top two risks. Mitigate them with a channel-to-port register, strict labeling, end-face inspection, and acceptance testing after any fiber handling.

Which standards should we reference for design and validation?

For Ethernet PHY behavior, use [Source: IEEE 802.3]. For DWDM channel grid concepts and optical system alignment, rely on ITU-T guidance (the DWDM frequency grid is specified in ITU-T G.694.1) and the optics vendor’s DWDM application notes. Your link budget should follow standard optical engineering practices and vendor sensitivity/power specs.

If you want the next step, map your current lease terms into a 3-year TCO model and pair it with a pilot plan for owned optics on DWDM dark fiber. You will move faster with fewer surprises when telemetry, channel governance, and acceptance tests are designed upfront.

Author bio: I am a hands-on CTO focused on optical networking strategy, reliability engineering, and build-versus-buy decisions. I have deployed DWDM and pluggable optics in production environments with measurable MTTR, telemetry baselines, and controlled change management.