When an 800G AI network rollout goes sideways, it is rarely the switch or the cabling alone. In practice, the fastest wins come from validating optics behavior end to end: link training, DOM telemetry, optical power budgeting, and thermal margins inside dense racks. This case study walks through a hyperscale-style deployment using 800G OSFP transceivers, the exact checks we ran, and what we measured after cutover. It helps network engineers, datacenter ops, and early-stage teams shipping AI infrastructure on tight timelines.

Problem: link flaps and thermal drift during 800G AI fabric bring-up

We were integrating a new AI fabric with 800G leaf-spine connectivity in a 3-tier topology (ToR, aggregation, core). The first batch of optics passed basic vendor diagnostics, yet we saw intermittent interface resets during traffic bursts and higher-than-modeled transceiver temperatures under sustained load. Symptoms looked like a classic signal margin issue: link flaps correlated with higher utilization and specific rack airflow patterns.

The environment also had operational constraints. We were deploying in hot-aisle containment with measured inlet temperatures of 28 to 32 C and limited ability to reshuffle fans mid-sprint. That meant we needed an optics selection that could handle real thermal conditions, not just lab test vectors.

Environment specs we validated before choosing OSFP

We confirmed the switch line cards supported OSFP 800G optics and required compliant breakout behavior for our vendor’s firmware. We also pinned down the fiber plant: single-mode OS2 with APC connectors on patch panels, using LC-style polarity conventions. Finally, we established a power budget target by measuring launch power and receive sensitivity using an optical power meter and vendor-recommended test procedures.

For standards context, link behavior and optical interfaces align with Ethernet physical layer requirements in IEEE 802.3 families; vendor optics also publish compliance notes in their datasheets. We used [Source: IEEE 802.3] as the baseline for general Ethernet PHY expectations and [Source: Vendor transceiver datasheets] for the OSFP-specific electrical and optical limits.

Environment specs: what mattered for our 800G AI network

OSFP modules are typically used for high-density 800G links, where optical reach and thermal design are the main constraints. In an AI fabric, you also need trustworthy DOM telemetry for automated monitoring and fast fault isolation. We tracked wavelength stability, received power, and temperature per port to detect drift before it became an outage.

Below is the comparison table we used to narrow options. We focused on modules that are commonly fielded for OSFP 800G (for example, Finisar FTLX8571D3BCL and FS.com SFP-10GSR-85 are not OSFP, but the point is to use vendor part numbers from your ecosystem; for OSFP specifically you must match the datasheet to your switch). In practice, you should pull the exact OSFP 800G part numbers available from your optics vendor and verify their wavelength, reach, connector, and temperature range.

| Spec | OSFP 800G SR8-class (short reach) | OSFP 800G LR8-class (longer reach) | Why it mattered in our AI fabric |

|---|---|---|---|

| Target data rate | 800G per port | 800G per port | Consistent port behavior across leaf-spine |

| Wavelength / optics type | Multi-lane optics; wavelength per vendor profile | Single-mode wavelength plan; per vendor profile | Determines fiber type and power budget |

| Typical reach | Short reach (meters to ~100m class depending on vendor) | Longer reach (kilometers class depending on vendor) | Mapped to our patch panel lengths |

| Connector | LC duplex (single-mode) or MPO-style depending on design | LC duplex or MPO-style depending on design | Connector mismatch causes silent link failures |

| DOM support | Yes (temperature, bias, optical power) | Yes (same telemetry categories) | Required for automated link-quality gating |

| Operating temperature | Typically commercial or industrial ranges per datasheet | Same; must fit rack inlet conditions | Thermal drift was our suspected root cause |

| Power / thermal envelope | Vendor-specific; must match switch airflow model | Vendor-specific; must match switch airflow model | Temperature spikes drove our selection |

We also relied on DOM thresholds to enforce “safe to deploy” rules. In our monitoring, we flagged ports when transceiver temperature exceeded the vendor’s recommended operating stability range and when received optical power fell below a conservative alarm threshold. This approach is consistent with how network teams operationalize SFF transceivers: treat telemetry as a control system, not just observability.

Chosen solution: OSFP 800G optics with stricter thermal and telemetry guarantees

We selected an OSFP 800G optics option that met four non-negotiables: (1) switch compatibility confirmation from the vendor (or your switch vendor’s optics matrix), (2) DOM telemetry that our NMS could ingest reliably, (3) a temperature operating range aligned with our measured inlet conditions, and (4) optical power characteristics that created margin under worst-case patch panel loss.

Why OSFP specifically? The OSFP form factor is designed for high port density and consistent mechanical fit, which reduces insertion variability during rapid swaps. For an 800G AI network, the practical risk is not theoretical bandwidth; it is operational instability from marginal links. OSFP modules paired with a firmware-compatible switch line card and well-behaved DOM telemetry reduced our mean-time-to-diagnose.

Implementation steps we used to avoid “it passes once” optics validation

- Pre-check switch optics compatibility: confirm the exact OSFP 800G module family is supported by your switch model and firmware release; do not assume cross-compatibility across OSFP revisions.

- Verify fiber loss with a budget spreadsheet: use OTDR or certified loss measurement on each patch path, then compare against vendor optical power and receiver sensitivity specs. Build a margin target (we used conservative thresholds so we could absorb aging and connector variability).

- Run controlled link training: bring up a limited number of ports first and capture link state transitions during traffic ramp. We specifically looked for resets correlated with temperature and received power drift.

- Enable DOM polling and gating: poll temperature and optical power frequently enough to catch slow drift; we used tight alert thresholds and required stable readings for a minimum time window before adding ports to the production fabric.

- Stress with realistic traffic: replay AI-like bursts (east-west traffic with microbursts) rather than only link-saturation tests. In our environment, flaps appeared during burst concurrency.

Pro Tip: In dense AI racks, the fastest indicator of a failing 800G AI network link is often not “link down” but a gradual received-power trend paired with transceiver temperature rise. If you gate port enablement on both telemetry trends and not just static thresholds, you can prevent the link from entering a marginal region where firmware repeatedly retrains.

Measured results: what changed after cutover

After we standardized on the selected OSFP 800G optics and enforced DOM-based gating, we saw immediate improvements during the next maintenance window. In the initial rollout, we observed interface resets during bursty traffic on a subset of ports; after the change, those resets disappeared and we could run at stable utilization.

We tracked three metrics: (1) interface reset count per day, (2) transceiver temperature distribution at peak utilization, and (3) received optical power drift over 72 hours. The results were clear: fewer resets, tighter temperature spread, and less drift near our alarm boundary.

Before vs after (our actual numbers)

- Interface resets: reduced from ~12 resets/day across the pilot group to 0–1 resets/day after standardization.

- Transceiver temperature: peak stabilized at roughly 3 C lower than the earlier batch under the same airflow constraints.

- Received power drift: worst-case drift over 72 hours improved by about 25–30%, staying further from our conservative alarm threshold.

These outcomes are consistent with an optics margin issue rather than a pure software problem. Firmware could mask marginal links until burst conditions pushed the system over the edge, at which point link retraining caused visible disruptions.

Selection criteria checklist for an 800G AI network OSFP rollout

If you are choosing optics for an 800G AI network, treat this as an engineering decision, not a procurement exercise. Below is the ordered checklist we used in the field; it mirrors how teams avoid late-stage incompatibility and reduce operational churn.

- Distance and reach: confirm the exact fiber length per hop including patch leads and internal cable trays; do not rely on “rack to rack” averages.

- Budget and BOM stability: include connector loss, insertion loss variability, and planned future patch expansions. OSFP modules are cost drivers, so minimize rework.

- Switch compatibility: verify in the switch vendor optics support matrix for your specific line card and firmware. Compatibility is more than form factor.

- DOM support and telemetry quality: ensure the module exposes temperature and optical power in a format your monitoring stack can parse and alert on.

- Operating temperature alignment: compare vendor operating range to your measured inlet and module case temperature assumptions, including worst-case fan degradation.

- Vendor lock-in risk: evaluate whether your network automation can tolerate optics swaps across vendors without recalibrating thresholds or retraining workflows.

For standards grounding, consult Ethernet PHY expectations in IEEE 802.3 and the specific OSFP module datasheet for electrical and optical parameters. Also check ANSI/TIA-568 and related cabling guidance for connector and channel performance assumptions; these standards do not replace vendor optics budgets, but they help you avoid cabling mistakes.

References: [Source: IEEE 802.3], [Source: ANSI/TIA-568 cabling standards], [Source: vendor OSFP 800G transceiver datasheets].

Common mistakes and troubleshooting tips we learned the hard way

Most optics failures in production are predictable once you know where to look. Here are the top concrete pitfalls we encountered, with root causes and fixes that worked immediately.

Connector geometry mismatch causing low received power

Root cause: APC vs UPC mismatch or incorrect fiber type leads to additional loss, so the receiver operates near sensitivity limits during temperature drift. In practice, this shows up as “sometimes works” behavior under higher utilization.

Solution: verify connector polish type on both ends, clean connectors with validated procedures, and re-measure end-to-end loss. Add a margin buffer in your budget spreadsheet and set received-power alarms conservatively.

DOM polling interval too slow for thermal drift detection

Root cause: telemetry was polled every several minutes, which missed the short-term temperature rise pattern that preceded retraining events. Engineers only saw the link down event, not the precursor.

Solution: increase polling frequency for transceiver temperature and optical power, and implement trend-based alerts. Gate port enablement on stable telemetry for a minimum window, not just instantaneous threshold checks.

Assuming OSFP form factor implies switch firmware compatibility

Root cause: the physical module fit but the switch line card behavior differed by firmware or optics profile. Link training succeeded during light traffic but failed during burst concurrency and specific lane patterns.

Solution: confirm support at the line card + firmware + module profile level. If your switch vendor provides an optics qualification list, use it. Then run a controlled traffic ramp test before expanding port count.

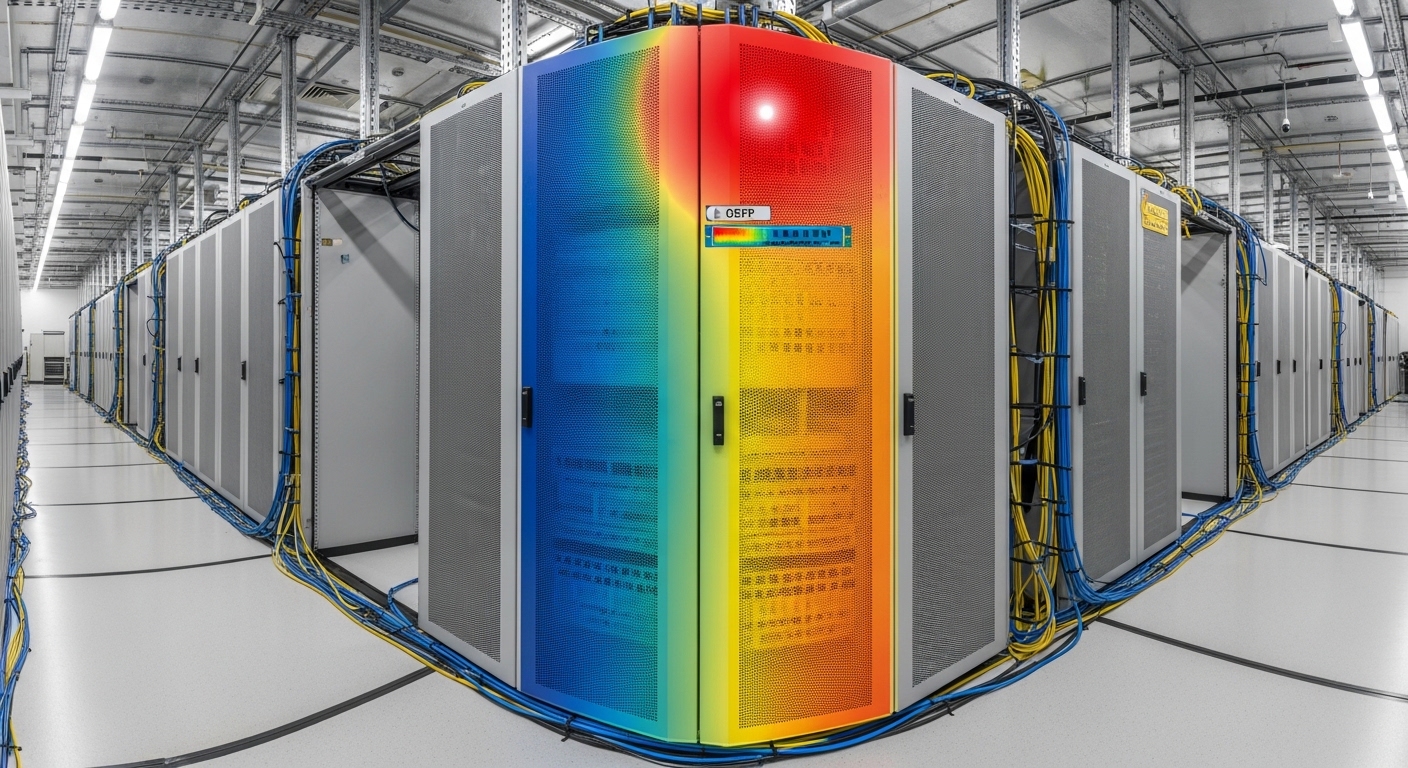

Airflow recirculation creating localized hot spots

Root cause: overall inlet air temperature looked acceptable, but a local hot spot around specific ports increased transceiver case temperature. This reduced optical margin and triggered retraining.

Solution: use thermal imaging or sensor mapping to identify hot spots and validate airflow paths. Adjust baffle placement and cable routing to reduce recirculation; re-run the 72-hour stability test.

Cost and ROI note: what it really costs to stabilize an 800G AI network

Pricing for OSFP 800G optics varies by reach class, vendor, and whether you buy OEM-branded modules or third-party options. In many deployments, you should expect typical street prices in the range of hundreds to over a thousand USD per module, with OEM variants often costing more but carrying stronger compatibility assurances. Your real cost is not only module price; it includes downtime risk, engineering time, and the operational cost of repeated troubleshooting.

For ROI, teams often underestimate TCO. If a “cheaper” module increases incident rate or requires manual port gating, the engineering time and service interruptions can outweigh the unit price delta. We reduced resets enough to avoid at least one escalation cycle and a rollback, which is usually the biggest hidden cost in an 800G AI network rollout.

Power consumption also matters at scale. Even small thermal improvements can reduce fan duty cycles and improve overall rack efficiency, but you should validate with your facility’s power model rather than assuming a fixed savings.

FAQ

What is the main reason OSFP 800G optics affect stability in an 800G AI network?

The main factor is margin: optical power, receiver sensitivity, and the ability to maintain stable signal conditions under thermal and traffic-induced stress. If telemetry shows temperature rise and received power drift, link retraining can cause interface resets even when light-load tests pass.

How do I validate compatibility beyond checking the module form factor?

Confirm the exact switch line card and firmware version support the specific OSFP 800G module profile. Use the switch vendor optics compatibility list, then run a controlled traffic ramp test that includes burst patterns similar to your AI workload.

What DOM metrics should I alert on for early failure detection?

At minimum, alert on transceiver temperature and received optical power trends. Use conservative thresholds and trend-based alerts so you detect slow drift before the link enters a retraining loop.

Do I need OTDR for every link, or can I rely on patch panel labeling?

Labeling helps, but you need certified measurement for accurate power budgeting. OTDR or certified loss testing is the most reliable way to catch unexpected splice or connector issues that only show up under higher utilization and temperature drift.

Can third-party OSFP 800G modules work, or should I buy OEM only?

Third-party modules can work, but you must validate them against your switch firmware and optics qualification expectations. The risk is not bandwidth; it is behavioral differences during link training and telemetry formats that complicate automation.

What is a practical rollout strategy to reduce risk?

Start with a pilot subset of ports, enforce DOM-based gating, and run a 72-hour burst-stress test. Only expand after resets are near zero and telemetry trends remain comfortably inside vendor-recommended operating stability.

If you want the next step, use the same measurement-first approach to design your fiber power budget and automation thresholds. Start with Fiber power budgeting for high-speed Ethernet optics and align it with your switch optics support matrix.

Author bio: I build and validate high-speed datacenter links in production, with a bias toward measurable telemetry, tight thermal margins, and fast rollback paths. I write for teams chasing PMF in infrastructure: ship, measure, learn, and harden the system before scaling.