When you move from Traditional RAN to Open RAN, the biggest surprise is often not radio software, but fiber optics. More functional splits, more remote units, and more midhaul paths can change how many links you need, what reach you can afford, and which optics survive real deployment conditions. This article helps network and infrastructure engineers plan fronthaul, midhaul, and backhaul fiber with the right transceiver strategy and fewer field failures.

Why Open RAN shifts fiber demand beyond “more cables”

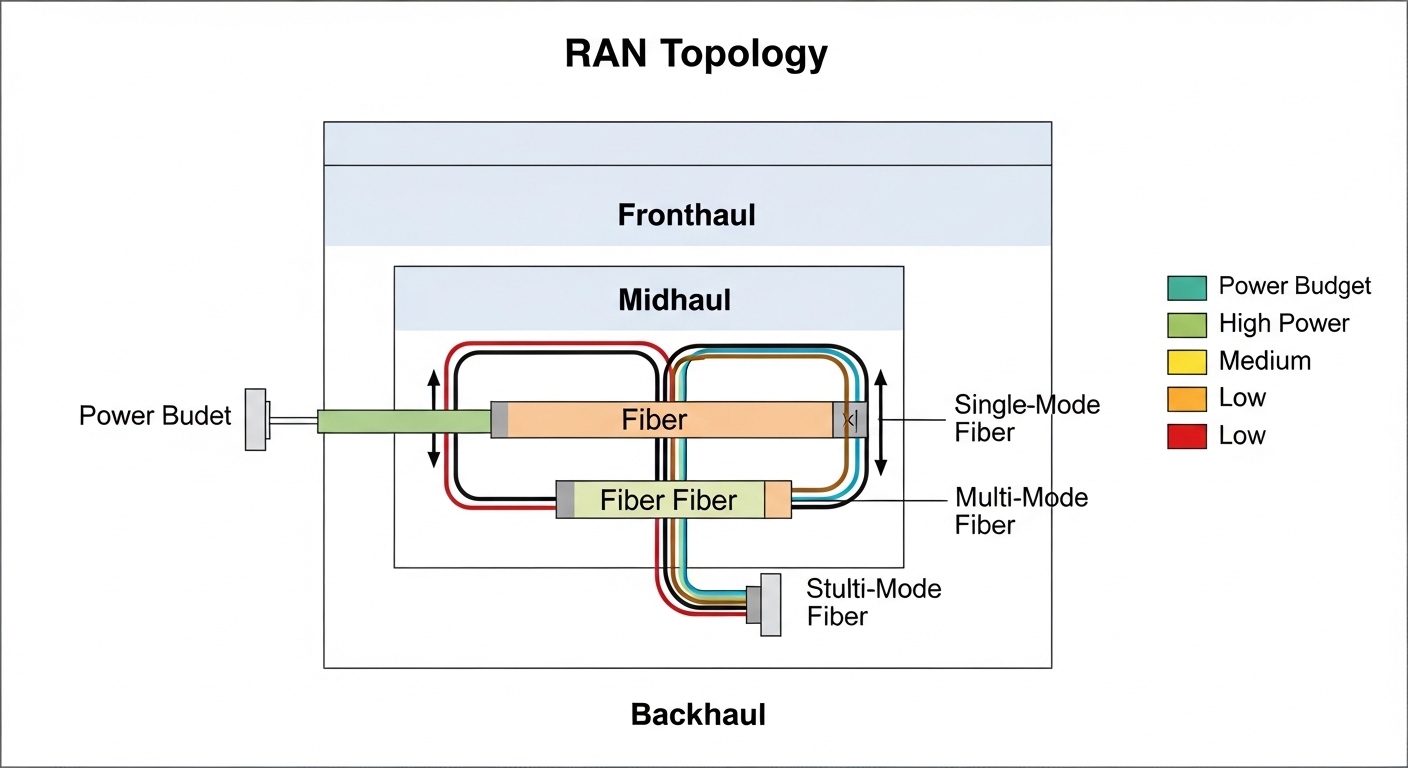

Traditional RAN deployments often centralize baseband processing and keep a simpler physical topology between radio sites and the aggregation/core. With Open RAN, the architecture can introduce additional network segments and functional split options, meaning different latency, bandwidth, and synchronization requirements across fronthaul, midhaul, and backhaul. In practice, that can increase the number of optical interfaces per site and change the mix of short-reach versus longer-reach links.

From a standards perspective, the industry commonly references the functional split options studied in 3GPP (for example, the higher-layer split between centralized and distributed units, and lower-layer splits closer to the radio), carried over Ethernet-based transport. On the physical layer, engineers still work within IEEE 802.3 Ethernet PHY behavior and vendor-specific optics support constraints, especially for 10G, 25G, and 100G optics and their management features. If you treat fiber planning as a static “site count times link count” exercise, you risk stocking too few transceiver types while over-buying expensive long-reach optics.

Open RAN transport mapping: fronthaul, midhaul, backhaul

Open RAN is not one single transport pattern; it is a set of disaggregated components where the split point drives what must traverse the fiber. For engineers, the key is to identify which segments carry time-critical signals (often fronthaul), which carry aggregation and scheduling traffic (midhaul), and which carry less timing-sensitive traffic (backhaul). That classification determines whether you can use cost-optimized short-reach optics or whether you must plan for higher budget per link due to reach, dispersion tolerance, and optical power margin.

Fronthaul planning implications

Fronthaul segments tend to be the most sensitive to latency and synchronization. Even when the transport is “Ethernet-like,” the operational requirement can push you toward more deterministic behavior, tighter jitter budgets, and careful link engineering. Field engineers often discover that optics selection is only half the story; patch panel cleanliness, connector inspection, and fiber end-face quality can make or break link stability under temperature swings.

Midhaul and backhaul planning implications

Midhaul and backhaul are usually more tolerant, so reach and cost become dominant. Here you can often consolidate traffic, choose higher-count links, and standardize transceivers to reduce spares complexity. However, Open RAN can increase the number of intermediate aggregation points, so you may still end up with more optical interfaces overall, even if each link is “easier.”

Key optics parameters that determine whether your Open RAN fiber plan holds

Once you know which segment carries fronthaul versus midhaul/backhaul, you can match optics to fiber type, reach, and operational environment. Engineers frequently underestimate power budget and temperature derating. Many transceiver datasheets specify optical power and receiver sensitivity under reference conditions; in real sites, you must account for aging, connector loss, and temperature variation.

The comparison table below focuses on common short-reach and extended-reach choices used in RAN-adjacent designs. Use it as a sanity check for wavelength, reach class, connector type, and typical operating conditions. Always validate against the exact vendor datasheet and your switch or OLT compatibility list.

| Optics type (example models) | Data rate | Wavelength | Typical reach class | Connector / fiber | Typical temperature range | Power (typical) and notes |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR / Finisar FTLX8571D3BCL / FS.com SFP-10GSR-85 | 10G | 850 nm (MM) | ~300 m (OM3) / ~400 m (OM4) | LC, multimode (OM3/OM4) | 0 to 70 °C (varies by vendor) | Low-cost, short-reach; sensitive to patch loss and cleanliness |
| QSFP28 SR4 class (vendor-specific SR4 modules) | 100G | 850 nm (MM) | ~70 m (OM3) / ~100 m (OM4) | MPO/MTP, multimode (OM3/OM4) | 0 to 70 °C (varies) | Higher port density; requires careful MPO polarity and cleaning |
| QSFP28 LR4 class (vendor-specific LR4 modules) | 100G | 1310 nm band, 4 WDM lanes (SM) | ~10 km (typical) | LC, single-mode (OS2) | -5 to 70 °C (varies) | Higher BOM cost; more margin for longer spans |
| SFP+ 10G ER / ZR class (vendor-specific) | 10G | 1550 nm (SM) | ~40 km (ER) / ~80 km+ (ZR, varies) | LC, single-mode (OS2) | -5 to 70 °C (varies) | Designed for long spans; check link budget and optics vendor compatibility |

For authority and baseline behavior, Ethernet PHY and optical transport assumptions should be aligned with IEEE Ethernet standards and the vendor datasheets for the specific transceiver part numbers. For connector and fiber loss engineering, consult ANSI/TIA fiber cabling standards for loss measurement practices and test methods. [Source: IEEE 802.3] [Source: ANSI/TIA-568]

Pro Tip: In Open RAN rollouts, the most common “mystery link flaps” are not caused by the transceiver at all; they come from marginal connector end-face cleanliness and patch panel rework. Treat every MPO/MTP and LC cleaning event as a controlled maintenance step, and verify with an inspection scope before blaming optics reach or switch configuration.

Decision checklist: choosing optics and fiber strategy for Open RAN

To keep fiber infrastructure stable as the architecture evolves, engineers should use a repeatable selection process. Below is an ordered checklist that field teams can apply per site and per link type. This is designed to reduce tech debt and avoid “swap later” decisions that become expensive once you trench fiber or populate racks.

- Distance and fiber type: Determine whether you are on OM3/OM4 multimode or OS2 single-mode, and measure actual installed length including patching and slack.

- Budget and optical margin: Calculate link budget with connector loss, splice loss, and worst-case temperature/aging. Do not rely on “datasheet max reach” as a planning number.

- Switch compatibility: Confirm the transceiver is supported by the exact switch model and firmware. Many platforms read the module's identification data (vendor, part number) and may reject or restrict optics that are not on their supported list.

- DOM support and monitoring: Prefer modules with digital optical monitoring (DOM) and ensure your monitoring stack can read and alert on thresholds (for example, laser bias current and RX power).

- Operating temperature and airflow: Outdoor cabinets and indoor shelters can exceed spec. Validate that the module temperature range matches the installation environment.

- Vendor lock-in risk: Evaluate whether third-party optics are accepted and stable long-term. Lock-in risk grows when firmware updates tighten compatibility rules.

- Spare strategy: Plan spares by part number, not by “equivalent reach.” Mixed spares complicate incident response during outages.
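
The distance, fiber type, and margin steps of the checklist can be sketched as a small shortlisting helper. This is a minimal illustration, not a sizing tool: the `CATALOG` entries use nominal reach classes for 10G optics, the 0.8 derating factor is an assumed planning margin, and `shortlist` is a hypothetical name. Always confirm the result against a measured link budget and the vendor datasheet.

```python
from typing import NamedTuple, Optional


class OpticsFamily(NamedTuple):
    name: str
    fiber: str            # fiber type the reach figure applies to
    nominal_reach_m: int  # nominal reach class, not a guaranteed distance


# Illustrative catalog of 10G families with nominal reach classes.
CATALOG = [
    OpticsFamily("10G SR", "OM3", 300),
    OpticsFamily("10G SR", "OM4", 400),
    OpticsFamily("10G LR", "OS2", 10_000),
    OpticsFamily("10G ER", "OS2", 40_000),
    OpticsFamily("10G ZR", "OS2", 80_000),
]


def shortlist(fiber: str, installed_m: float,
              derate: float = 0.8) -> Optional[OpticsFamily]:
    """Return the shortest-reach family that still covers the installed
    length after derating, or None if nothing in the catalog fits.

    derate is an assumed planning factor (use only 80% of nominal reach).
    """
    candidates = [
        family for family in CATALOG
        if family.fiber == fiber
        and installed_m <= family.nominal_reach_m * derate
    ]
    return min(candidates, key=lambda f: f.nominal_reach_m, default=None)


fronthaul_choice = shortlist("OM4", 180)    # 180 m span on OM4
midhaul_choice = shortlist("OS2", 2_500)    # 2.5 km span on OS2
too_long_for_om3 = shortlist("OM3", 280)    # exceeds the derated OM3 reach
```

Picking the shortest-reach family that fits keeps cost down and avoids overdriving receivers on short spans, while a `None` result signals that the span needs a different fiber type or a closer look at the budget.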

When you compare Open RAN versus Traditional RAN, the checklist remains the same, but the number of decisions increases because the topology is more distributed. That is why standardization matters: selecting a smaller set of optics families (for example, one MM SR for short links and one SM LR for longer segments) can cut operational risk.

Open RAN vs Traditional RAN: practical fiber architecture differences

In a Traditional RAN design, a common pattern is fewer functional split points and a more centralized baseband, which can reduce the number of distinct fiber segments and optical interfaces per site. With Open RAN, disaggregation can increase the number of hops and introduce midhaul aggregation layers. That changes how you allocate fiber pairs, how you plan rack-to-shelter patching, and how you design for future capacity upgrades without re-splicing.

Concrete deployment scenario

Consider a 3-tier data center leaf-spine topology with 48-port 10G ToR switches at the leaf, 100G uplinks to spine, and a separate edge aggregation layer for radio sites. Suppose you deploy Open RAN where 12 radio units per cluster connect to a near-edge unit, and each cluster needs 8 fronthaul links plus 4 midhaul aggregation links. If each fronthaul span is 180 m on OM4 and each midhaul span is 2.5 km on OS2, you may use 10G SR optics for fronthaul and 10G LR/ER or equivalent for midhaul depending on reach and budgets.

Operationally, if you assume 12 such clusters, that scenario translates into 12 clusters × 8 fronthaul links = 96 fronthaul links plus 12 clusters × 4 midhaul links = 48 midhaul links, or 144 optical links in total. Because each link needs a transceiver at both ends, the module count is roughly double the link count. Even if each link is “short,” the total optics count increases, and so does the probability of failure events. That is why monitoring thresholds, connector inspection routines, and standardized part numbers become part of the architecture, not just procurement.
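
The per-cluster figures in the scenario can be turned into a quick count. This is a minimal sketch: the 12-cluster count and the two-transceivers-per-link assumption are inputs you should replace with your own design values, and `optics_count` is a hypothetical helper name.

```python
from typing import Dict


def optics_count(clusters: int, fronthaul_per_cluster: int,
                 midhaul_per_cluster: int,
                 ends_per_link: int = 2) -> Dict[str, int]:
    """Count links and transceivers for a set of identical clusters.

    ends_per_link=2 assumes a pluggable module at both ends of every link.
    """
    fronthaul_links = clusters * fronthaul_per_cluster
    midhaul_links = clusters * midhaul_per_cluster
    total_links = fronthaul_links + midhaul_links
    return {
        "fronthaul_links": fronthaul_links,
        "midhaul_links": midhaul_links,
        "total_links": total_links,
        "transceivers": total_links * ends_per_link,
    }


# 8 fronthaul and 4 midhaul links per cluster, as in the scenario;
# 12 clusters is an assumed deployment size.
scenario = optics_count(clusters=12, fronthaul_per_cluster=8,
                        midhaul_per_cluster=4)
print(scenario)
```

Keeping the count parameterized makes it easy to rerun when the split point or cluster size changes, which is usually when spares and monitoring plans fall out of date.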

Common mistakes and troubleshooting patterns in Open RAN fiber rollouts

Even experienced teams can stumble when Open RAN changes the link mix. Below are common pitfalls with likely root causes and practical solutions.

Using “reach” from the datasheet without adding connector and patch loss

Root cause: Engineers plan using maximum reach but ignore connector loss, patch panel wear, and additional splices from rework. In Open RAN, more intermediate panels mean more loss sources. Solution: Build a link budget using measured fiber attenuation plus conservative splice and connector loss assumptions, then include a margin for aging and temperature.
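
The link budget described in the solution can be sketched as follows. All numeric values in the example are placeholders chosen for illustration, not datasheet figures; take transmit power and receiver sensitivity from the worst-case columns of your module's datasheet and use measured attenuation where you have it. The `LinkBudget` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    tx_power_min_dbm: float      # worst-case transmit power (datasheet)
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity (datasheet)
    fiber_km: float              # installed length, including patching and slack
    fiber_loss_db_per_km: float  # measured or specified attenuation
    connectors: int              # mated connector pairs in the path
    connector_loss_db: float     # conservative loss per mated pair
    splices: int                 # splices in the path, including rework
    splice_loss_db: float        # conservative loss per splice
    aging_temp_margin_db: float  # reserve for aging and temperature

    def available_db(self) -> float:
        """Power budget between worst-case transmitter and receiver."""
        return self.tx_power_min_dbm - self.rx_sensitivity_dbm

    def path_loss_db(self) -> float:
        """Sum of fiber, connector, and splice losses."""
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.connector_loss_db
                + self.splices * self.splice_loss_db)

    def remaining_margin_db(self) -> float:
        """Margin left after path loss and the aging/temperature reserve."""
        return (self.available_db() - self.path_loss_db()
                - self.aging_temp_margin_db)


# Placeholder values for a 2.5 km single-mode span with four patch points.
midhaul = LinkBudget(
    tx_power_min_dbm=-8.2, rx_sensitivity_dbm=-14.4,
    fiber_km=2.5, fiber_loss_db_per_km=0.4,
    connectors=4, connector_loss_db=0.5,
    splices=2, splice_loss_db=0.1,
    aging_temp_margin_db=2.0,
)
print(f"remaining margin: {midhaul.remaining_margin_db():.1f} dB")
```

A negative or near-zero remaining margin means the link depends on every connector staying clean, which is exactly the condition that produces intermittent faults after rework.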

MPO/MTP polarity and ribbon orientation errors on high-density QSFP28 SR4 links

Root cause: High-density multimode links are unforgiving when MPO polarity is reversed, especially after maintenance. The result can be intermittent link loss or “link up but high BER.” Solution: Use polarity-aware patching, label both ends consistently, and verify with an MPO polarity tester and optical power checks at commissioning.
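
To see why polarity matters on SR4 links, the sketch below models a single 12-fiber MPO trunk between two modules. It assumes the common MPO-12 SR4 lane layout (transmit on positions 1 to 4, receive on positions 9 to 12) and the usual straight-through (Type A) and reversed (Type B) trunk mappings; verify both against your module datasheet and cabling documentation. Real channels with several segments or cassettes need the mapping applied end to end, which this simplified model does not cover.

```python
# Assumed MPO-12 lane layout for an SR4 module.
TX_POSITIONS = {1, 2, 3, 4}
RX_POSITIONS = {9, 10, 11, 12}


def far_end_position(position: int, trunk_type: str) -> int:
    """Map a fiber position at the near end to the far end of one trunk.

    Type A is straight-through (1 -> 1); Type B is reversed (1 -> 12).
    """
    if not 1 <= position <= 12:
        raise ValueError("MPO-12 positions run from 1 to 12")
    if trunk_type == "A":
        return position
    if trunk_type == "B":
        return 13 - position
    raise ValueError(f"unsupported trunk type: {trunk_type!r}")


def tx_lands_on_rx(trunk_type: str) -> bool:
    """True if every transmit lane arrives on a receive position."""
    arrivals = {far_end_position(p, trunk_type) for p in TX_POSITIONS}
    return arrivals == RX_POSITIONS


type_b_ok = tx_lands_on_rx("B")  # reversed trunk: Tx 1-4 arrive on 12-9
type_a_ok = tx_lands_on_rx("A")  # straight trunk: Tx arrives on Tx positions
```

Under these assumptions a single reversed trunk lines transmit up with receive, while a single straight-through trunk does not, which is why swapping one trunk or cassette during maintenance can silently break a link that previously worked.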

Assuming optics are interchangeable across switch models

Root cause: Some switches enforce digital diagnostic thresholds, supported vendor IDs, or specific optic capability profiles. After a firmware update, previously working optics may degrade or fail to initialize. Solution: Maintain an optics compatibility matrix per switch model and firmware version; test optics in a staging environment before production changes.
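
A compatibility matrix can be as simple as a lookup keyed by switch model and firmware version. The sketch below is a minimal illustration: the switch model `leaf-model-x`, the firmware versions, and the validation results are hypothetical placeholders, and the function names are not from any vendor tool.

```python
from typing import Dict, Iterable, List, Set, Tuple

# (switch model, firmware version) -> part numbers validated in staging.
# Entries here are hypothetical examples, not real validation results.
COMPAT_MATRIX: Dict[Tuple[str, str], Set[str]] = {
    ("leaf-model-x", "1.0"): {"SFP-10G-SR", "FTLX8571D3BCL"},
    ("leaf-model-x", "2.0"): {"SFP-10G-SR"},
}


def is_validated(model: str, firmware: str, part_number: str) -> bool:
    """True if this part was validated on this exact model and firmware."""
    return part_number in COMPAT_MATRIX.get((model, firmware), set())


def parts_needing_revalidation(model: str, target_firmware: str,
                               parts_in_use: Iterable[str]) -> List[str]:
    """List deployed parts with no validation record on the target firmware."""
    validated = COMPAT_MATRIX.get((model, target_firmware), set())
    return sorted(set(parts_in_use) - validated)


# Before upgrading from firmware 1.0 to 2.0, check what is deployed.
pending = parts_needing_revalidation(
    "leaf-model-x", "2.0", ["SFP-10G-SR", "FTLX8571D3BCL"]
)
print(pending)
```

Treating a missing record as "not validated" rather than "probably fine" is the point of the design: the upgrade is gated on staging tests instead of on field failures.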

Skipping DOM monitoring and only reacting to link-down alarms

Root cause: Laser power drift and receiver sensitivity changes can precede link outages. If you only alert on link state, you lose the early warning window. Solution: Enable alerts on DOM metrics like RX power and laser bias current, and set thresholds based on your baseline commissioning values.
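
Baseline-relative alerting can be sketched as a comparison between commissioning values and the latest DOM reading. This is a minimal illustration that assumes readings are already collected by your monitoring system; the 2 dB RX drop and 20% bias rise thresholds are hypothetical defaults, and `DomReading` and `dom_alerts` are illustrative names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DomReading:
    rx_power_dbm: float   # received optical power
    laser_bias_ma: float  # laser bias current


def dom_alerts(baseline: DomReading, current: DomReading,
               max_rx_drop_db: float = 2.0,
               max_bias_rise_pct: float = 20.0) -> List[str]:
    """Compare a reading with the commissioning baseline.

    Thresholds are assumed defaults; tune them per optics family.
    """
    alerts = []
    rx_drop = baseline.rx_power_dbm - current.rx_power_dbm
    if rx_drop > max_rx_drop_db:
        alerts.append(f"RX power dropped {rx_drop:.1f} dB from baseline")
    bias_rise_pct = ((current.laser_bias_ma - baseline.laser_bias_ma)
                     / baseline.laser_bias_ma * 100.0)
    if bias_rise_pct > max_bias_rise_pct:
        alerts.append(f"laser bias rose {bias_rise_pct:.0f}% from baseline")
    return alerts


commissioned = DomReading(rx_power_dbm=-3.0, laser_bias_ma=6.0)
latest = DomReading(rx_power_dbm=-5.5, laser_bias_ma=6.3)
alerts = dom_alerts(commissioned, latest)
print(alerts)
```

Alerting on change from each link's own baseline catches a contaminated connector or a degrading laser on a link that is still well inside the absolute alarm limits reported by the module.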

Cost and ROI: what changes when Open RAN increases optics count

Open RAN can increase the number of optical interfaces per site due to more distributed units and additional midhaul paths. That can raise upfront costs, but it can still be ROI-positive if you standardize optics, reduce truck rolls, and avoid expensive fiber rework. Typical street pricing varies widely by region and vendor, but engineers often see 10G SR modules in the low tens of USD to low hundreds depending on brand and temperature grade, while 100G LR4 modules can cost several hundred to over a thousand USD each.

For TCO, include transceiver failure rates, field cleaning consumables, spare inventory holding costs, and the labor cost of troubleshooting. Third-party optics can reduce BOM cost, but they may introduce compatibility risk and faster obsolescence during firmware updates. In many deployments, the best ROI comes from a controlled mix: third-party optics for non-critical segments with stable compatibility, and OEM or tightly validated optics for fronthaul segments where stability and monitoring are paramount.

For cabling lifecycle costs, remember that fiber infrastructure is often the longest-lived asset. The cheapest transceiver is irrelevant if your fiber plant is under-tested or your connector hygiene is inconsistent. [Source: ANSI/TIA-568]

FAQ

How does Open RAN affect fronthaul versus backhaul optics choices?

Open RAN can introduce additional functional split points, which changes where timing-sensitive traffic flows. That typically makes fronthaul planning more sensitive to latency, jitter, and optical link stability, while backhaul can be more cost-optimized with standard Ethernet-class optics.

Should we use multimode or single-mode for Open RAN fiber links?

Use multimode (OM3/OM4) for shorter spans when your measured link budget supports it and you can maintain strict connector hygiene. Use single-mode (OS2) for longer spans, higher consolidation, or when you want fewer failures on links that operate close to their reach limit after maintenance.

What are the biggest causes of link instability in real deployments?

The top causes are usually connector cleanliness issues, patch panel rework mistakes, MPO polarity problems, and insufficient link budget margins. A secondary cause is optics compatibility drift after switch firmware updates.

How do we reduce vendor lock-in risk with Open RAN?

Maintain a tested optics compatibility list per switch model and firmware baseline, and require DOM support so you can monitor performance. Where feasible, standardize on a small set of optics families and require acceptance testing before expanding third-party vendors.

Do we need DOM monitoring for every optics type?

DOM is strongly recommended for critical fronthaul and any link where you cannot tolerate frequent outages. For less critical backhaul links, you may still benefit from DOM to detect degradation early and reduce mean time to repair.

What testing should we do at commissioning?

Perform end-to-end attenuation checks, verify connector inspection results, and validate BER/packet error rates under expected traffic profiles. For MPO links, confirm polarity and run optical power verification before declaring acceptance.

If you are mapping an Open RAN rollout to a fiber plan, start by classifying segments (fronthaul/midhaul/backhaul) and then apply the optics decision checklist with measured link budgets. Next, align your transceiver compatibility matrix and DOM monitoring approach with your switch fleet and your site’s maintenance process.

Author bio: CTO-focused infrastructure writer with hands-on experience designing and operating fiber-based transport for disaggregated radio and enterprise Ethernet networks. Previously led field rollouts where optics compatibility, DOM monitoring, and connector hygiene reduced outage rates in production.