A modernization program can stall on a simple question: which transceivers will reliably carry fronthaul and midhaul traffic in an Open RAN build? This article helps radio access network engineers and field deployment teams choose Open RAN transceivers that survive real temperature swings, tight optical budgets, and switch quirks. You will leave with a step-by-step implementation plan, a practical checklist, and failure-mode troubleshooting grounded in IEEE Ethernet optics behavior.

Prerequisites for Open RAN selection (so choices do not fail in the rack)

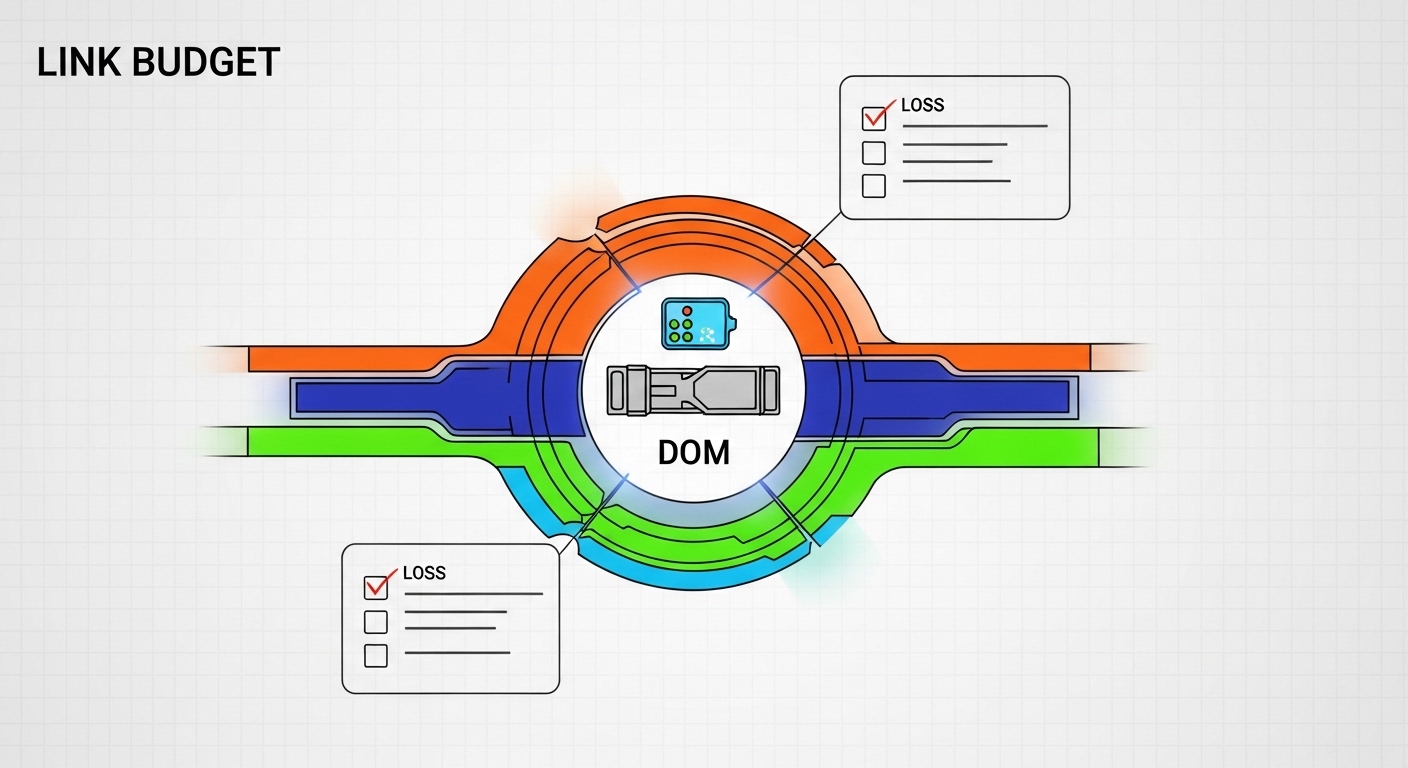

Before you touch part numbers, align on what your Open RAN deployment actually needs: interfaces, fiber plant, timing, and operational constraints. In many builds, the transceiver choice is dictated less by “what works in a lab” and more by optical budget margins, DOM telemetry support, and switch vendor interoperability.

What to gather on day one

Document the interface map (e.g., eCPRI over 10GBASE-R/25GBASE-R), the expected distances, and whether you are using active equipment with strict optics compatibility lists. Pull the exact switch model and line card port type, then verify whether it supports vendor-agnostic optics or only whitelisted modules.

Measure the fiber plant: record link length, fiber type (OM3, OM4, OS2), and connector losses (typical field values: ~0.3 dB per mated LC pair, plus patch panel and splice allowances). If you cannot measure, assume conservative connector and splice losses and keep margin for aging and cleaning quality.
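
When measurements are not yet available, the loss tally described above can be sketched as a simple sum. This is a minimal planning sketch, not a substitute for a light-source or OTDR test: the 0.3 dB per mated LC pair comes from the text, while the per-kilometer attenuation table and the 0.1 dB splice allowance are assumed conservative values that you should replace with figures from your own cable datasheets.

```python
# Assumed worst-case planning attenuation in dB/km (not measured values).
ASSUMED_ATTENUATION_DB_PER_KM = {
    ("OM3", 850): 3.5,
    ("OM4", 850): 3.5,
    ("OS2", 1310): 0.4,
    ("OS2", 1550): 0.4,
}


def estimate_link_loss_db(fiber_type, wavelength_nm, length_m,
                          connector_pairs, splices,
                          connector_loss_db=0.3, splice_loss_db=0.1):
    """Sum fiber, connector, and splice losses for one link, in dB."""
    per_km = ASSUMED_ATTENUATION_DB_PER_KM[(fiber_type, wavelength_nm)]
    fiber_loss = per_km * length_m / 1000.0
    connector_loss = connector_pairs * connector_loss_db
    splice_loss = splices * splice_loss_db
    return fiber_loss + connector_loss + splice_loss


# Hypothetical link: 150 m of OM4, four mated LC pairs, no splices.
loss = estimate_link_loss_db("OM4", 850, 150, connector_pairs=4, splices=0)
print(f"estimated loss: {loss:.3f} dB")
```

On short multimode runs the connector count usually dominates the total, which is why patch-panel hops deserve as much attention as cable length.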

Confirm optical policy: decide if the network requires Digital Optical Monitoring (DOM) for alarm thresholds, and whether you will enforce vendor-specific diagnostics in operations. For Open RAN transceiver selection, DOM matters because field failures often show up first as receive power drifting toward its alarm thresholds, not as total link-down events.
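
To make the idea of DOM alarm thresholds concrete, here is a small sketch that classifies a single reading against a four-level threshold set (low alarm, low warning, high warning, high alarm). The class name, function name, and every threshold value below are hypothetical; real thresholds come from the module itself or from your switch configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Four-level threshold set for one DOM parameter."""
    low_alarm: float
    low_warning: float
    high_warning: float
    high_alarm: float


def classify(value, t):
    """Return the severity label for a reading against its thresholds."""
    if value < t.low_alarm:
        return "low-alarm"
    if value < t.low_warning:
        return "low-warning"
    if value > t.high_alarm:
        return "high-alarm"
    if value > t.high_warning:
        return "high-warning"
    return "ok"


# Hypothetical receive-power thresholds in dBm, for illustration only.
rx_thresholds = Thresholds(low_alarm=-13.9, low_warning=-9.9,
                           high_warning=-1.0, high_alarm=0.0)
print(classify(-10.5, rx_thresholds))
```

Deciding up front which severities page an engineer and which only open a ticket is part of the optical policy, not an afterthought.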

Step-by-step implementation guide: Open RAN selection that matches reach, power, and telemetry

The six steps below are how I would run the decision in a production rollout, from requirements to installed optics. Most steps end with an expected outcome so you can validate progress and avoid late surprises.

Lock the Ethernet PHY and data rate profile

Identify the exact line rate and PHY mapping used by your Open RAN transport. For example, if your leaf-spine fabric uses 25G/10G uplinks and your fronthaul/midhaul design carries eCPRI over Ethernet with specific transport encapsulations, your optics must match the port type (e.g., 25G SFP28, 10G SFP+, or 100G QSFP28).

Expected outcome: You eliminate incompatible module form factors and PHY families before any procurement.
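
A first-pass filter for this step can be as simple as matching form factor and line rate. The sketch below assumes exact matching only; some platforms accept lower-rate modules in higher-rate cages, but that is platform-specific and deliberately not modeled here. The `Module` type and the two `EXAMPLE-` SKUs are hypothetical.

```python
from typing import NamedTuple


class Module(NamedTuple):
    sku: str
    form_factor: str   # e.g. "SFP+", "SFP28", "QSFP28"
    rate_gbps: int


def candidates_for_port(port_form_factor, port_rate_gbps, modules):
    """Keep only modules whose form factor and line rate match the port."""
    return [
        m for m in modules
        if m.form_factor == port_form_factor and m.rate_gbps == port_rate_gbps
    ]


catalog = [
    Module("Cisco SFP-10G-SR", "SFP+", 10),
    Module("EXAMPLE-25G-SR", "SFP28", 25),      # hypothetical SKU
    Module("EXAMPLE-100G-SR4", "QSFP28", 100),  # hypothetical SKU
]
shortlist = candidates_for_port("SFP+", 10, catalog)
print([m.sku for m in shortlist])
```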

Choose the fiber type and wavelength family

For short-reach multimode links, use 850 nm optics paired with OM3/OM4 fiber. For longer reach or single-mode deployments (common for aggregation spans), select 1310 nm or 1550 nm variants depending on your reach, loss budget, and optics availability.

Compute an optical budget with real margins

Do not work from the datasheet reach figure alone, because reach is stated in meters and your losses are in dB. Start with the module's power budget (minimum transmit power minus receiver sensitivity, both from the vendor datasheet), then subtract measured link losses. Include conservative allowances: patch cord loss, connector insertion loss, and splice loss. In field practice, I often keep at least 3 dB of margin beyond calculated loss for cleaning variability and aging; if the environment is harsh, plan 4–6 dB.

Expected outcome: You can justify why a specific reach class (SR vs LR vs ER) will remain stable after maintenance cycles.
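
The budget arithmetic in this step can be sketched as follows. The 3 dB default margin comes from the text; the transmit power, receiver sensitivity, and link loss in the example are hypothetical placeholders, and the `penalties_db` parameter is an assumed catch-all for dispersion or other link penalties your datasheet may list.

```python
def power_budget_db(tx_min_dbm, rx_sensitivity_dbm):
    """Available budget: minimum launch power minus receiver sensitivity."""
    return tx_min_dbm - rx_sensitivity_dbm


def remaining_margin_db(tx_min_dbm, rx_sensitivity_dbm, link_loss_db,
                        penalties_db=0.0):
    """Margin left after subtracting link loss and any link penalties."""
    budget = power_budget_db(tx_min_dbm, rx_sensitivity_dbm)
    return budget - link_loss_db - penalties_db


def meets_margin(tx_min_dbm, rx_sensitivity_dbm, link_loss_db,
                 required_margin_db=3.0, penalties_db=0.0):
    """True when the remaining margin is at least the required margin."""
    margin = remaining_margin_db(tx_min_dbm, rx_sensitivity_dbm,
                                 link_loss_db, penalties_db)
    return margin >= required_margin_db


# Hypothetical datasheet values, for illustration only.
margin = remaining_margin_db(tx_min_dbm=-5.0, rx_sensitivity_dbm=-11.0,
                             link_loss_db=1.7)
print(f"margin: {margin:.1f} dB, ok: {meets_margin(-5.0, -11.0, 1.7)}")
```

If the check fails, the options are the ones the step already names: move to a higher-reach class or remove loss from the path.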

Verify switch compatibility and optics policy

Transceiver selection for Open RAN is not only about optics physics; it is also about how the switch validates transceivers. Some platforms enforce strict firmware checks, while others allow third-party modules only if the module's vendor ID and DOM fields pass the platform's validation.

Check the exact switch and line card documentation, then test with a small pilot. If the switch supports “DOM monitoring,” confirm whether the network management system will read alarms such as laser bias current and received power.

Expected outcome: You avoid a pilot where links flap because the switch refuses modules or misinterprets diagnostics.

Pick concrete transceiver models (and keep spares aligned)

Procure modules with the same optical class, DOM behavior expectations, and temperature rating. In real deployments, I have seen teams mix “equivalent” SR modules that differ in laser safety class or threshold calibration, causing inconsistent alarm behavior during outages.

Example module families you may consider: Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, or FS.com SFP-10GSR-85 (model availability varies by vendor catalog and region). For 25G, you might use SFP28 SR parts or 10G-to-25G designs depending on your transport architecture.

Validate with acceptance tests before mass install

Perform link bring-up tests, check DOM telemetry, and validate error counters. On many switches, you can confirm operational health by monitoring interface counters (CRC errors, FCS errors) and by reading DOM registers through the management plane. Keep an eye on whether receive power is trending toward alarm thresholds.

Expected outcome: Every installed port has a baseline and an alert profile, reducing mean time to repair.
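
One way to turn the acceptance test into a recorded baseline is sketched below. It assumes you have already collected the error counter before and after a soak period and one DOM receive-power reading; how you collect them depends on your switch and is not shown. The function name, the 3 dB headroom default, and the example values are assumptions.

```python
def acceptance_result(crc_before, crc_after, rx_power_dbm,
                      rx_low_alarm_dbm, min_headroom_db=3.0):
    """Evaluate one port after a soak test and return a baseline record."""
    reasons = []
    crc_delta = crc_after - crc_before
    if crc_delta > 0:
        reasons.append(f"CRC/FCS errors increased by {crc_delta} during soak")
    headroom_db = rx_power_dbm - rx_low_alarm_dbm
    if headroom_db < min_headroom_db:
        reasons.append(f"only {headroom_db:.1f} dB above the low alarm")
    return {
        "passed": not reasons,
        "crc_delta": crc_delta,
        "baseline_rx_dbm": rx_power_dbm,
        "headroom_db": headroom_db,
        "reasons": reasons,
    }


# Hypothetical readings for two ports.
good = acceptance_result(120, 120, rx_power_dbm=-3.2, rx_low_alarm_dbm=-12.0)
bad = acceptance_result(0, 5, rx_power_dbm=-10.5, rx_low_alarm_dbm=-12.0)
print(good["passed"], bad["reasons"])
```

Storing the returned record per port gives later troubleshooting something to compare against.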

Transceiver specs that matter most in Open RAN selection

Engineers often compare only reach, but Open RAN selection succeeds when you align reach, wavelength, connector type, power class, and temperature range to the site reality. The table below compares common transceiver options used in Ethernet-based Open RAN transport. Always confirm the exact SKU and compliance statement in the vendor datasheet.

| Module example | Data rate / form factor | Wavelength | Typical reach | Fiber type | Connector | Power (typ.) | DOM support | Operating temperature |
|---|---|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR | 10G SFP+ | 850 nm | ~300 m (OM3) / ~400 m (OM4) | MMF | LC | About 1 W or less (check datasheet) | Yes (DOM) | Commercial range (check datasheet) |
| Finisar FTLX8571D3BCL | 10G SFP+ | 850 nm | ~300 m (OM3) / ~400 m (OM4) | MMF | LC | About 1 W or less (check datasheet) | Yes (DOM) | Commercial/industrial options vary |
| FS.com SFP-10GSR-85 | 10G SFP+ | 850 nm | ~300 m (OM3) / ~400 m (OM4) | MMF | LC | About 1 W or less (check datasheet) | Varies by SKU (confirm) | Industrial options may exist |

Standards and reference points: Ethernet optical interfaces are governed by IEEE physical layer behavior; for 10GBASE-SR and related optical mechanisms, consult IEEE 802.3 for baseline performance expectations. For operational telemetry and management behavior, rely on vendor datasheets and DOM documentation. [Source: IEEE 802.3] [Source: Vendor transceiver datasheets]

Pro Tip: In Open RAN pilots, do not only verify “link up.” Query DOM and record baseline receive power and laser bias current at installation time. A module that is merely “within spec” can still drift faster than expected, and that drift often shows up as receive power creeping toward its alarm threshold months before a hard failure.
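
A baseline becomes more useful once you fit a trend to it. The sketch below uses an ordinary least-squares slope over (day, receive power) samples and extrapolates linearly to a low-alarm threshold. Real drift is rarely linear, so treat the result as a prompt to inspect, not a prediction. The sample data and the threshold are hypothetical.

```python
def slope_per_day(samples):
    """Least-squares slope of rx power (dBm) versus time (days)."""
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    return sxy / sxx


def days_until_low_alarm(samples, low_alarm_dbm):
    """Linear extrapolation from the last sample; None if not declining."""
    slope = slope_per_day(samples)
    if slope >= 0:
        return None
    _, last_value = samples[-1]
    return (low_alarm_dbm - last_value) / slope


# Hypothetical history: (days since install, rx power in dBm).
history = [(0, -3.0), (30, -3.3), (60, -3.6), (90, -3.9)]
print(days_until_low_alarm(history, low_alarm_dbm=-12.0))
```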

Real-world Open RAN deployment scenario: how choices play out in the field

Consider a regional Open RAN build in a 3-tier data center leaf-spine topology with 48-port 10G ToR switches at the edge and 2x 100G spine uplinks for aggregation. The fronthaul-to-distributed unit (DU) zone uses 10GBASE-SR over OM4 with typical patch-to-rack distances of 120–180 m, including patch panels and cross-connects. Engineers target a conservative optical budget: measured fiber loss of ~3.5 dB plus ~1.5 dB for connectors and splices, a total of ~5 dB that must be checked against the module's specified power budget rather than against the reach figure alone.

In this scenario, Open RAN selection is constrained by switch optics policy: a pilot shows that one third-party SR module variant passes link bring-up but does not expose DOM alarms correctly through the management system, delaying fault localization. The fix is not to “buy another brand at random,” but to align DOM behavior and thresholds with what the switch firmware expects, then standardize on temperature-rated SKUs for the DU room where airflow is uneven.

Selection criteria checklist for Open RAN selection (engineer priorities)

Use this ordered checklist like a gate review. If you can answer each item with evidence, your Open RAN selection is likely to survive both audits and outages.

- Distance and fiber plant: confirm OM3/OM4/OS2, measured loss, and connector/splice assumptions.

- Data rate and PHY mapping: ensure the optics match the port type (SFP+, SFP28, QSFP28) and line rate.

- Wavelength compatibility: 850 nm for MMF short reach; 1310/1550 nm for SMF depending on reach and loss budget.

- Switch compatibility: validate optics whitelisting policy and firmware behavior for DOM and diagnostics.

- DOM support and telemetry: confirm alarms, thresholds, and whether your monitoring stack can ingest them.

- Operating temperature: choose commercial vs industrial rating based on enclosure airflow and worst-case ambient.

- Vendor lock-in risk: weigh OEM modules with predictable support against third-party modules with controlled compatibility.

- Connector cleanliness plan: ensure field procedures for inspection and cleaning exist; optics fail more from contamination than from “bad specs.”
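
If you want the gate review to be repeatable, the checklist above can be held as ordered data and evaluated against recorded evidence. This is a minimal sketch: the item names mirror the list, while the function name and the evidence strings are hypothetical.

```python
CHECKLIST = [
    "Distance and fiber plant",
    "Data rate and PHY mapping",
    "Wavelength compatibility",
    "Switch compatibility",
    "DOM support and telemetry",
    "Operating temperature",
    "Vendor lock-in risk",
    "Connector cleanliness plan",
]


def first_open_gate(evidence):
    """Return the first checklist item without evidence, or None if all pass."""
    for item in CHECKLIST:
        if not evidence.get(item, "").strip():
            return item
    return None


# Hypothetical evidence log: only the first three gates are documented.
evidence = {
    "Distance and fiber plant": "loss report, rack row A",
    "Data rate and PHY mapping": "port map v2",
    "Wavelength compatibility": "850 nm on OM4 confirmed",
}
print(first_open_gate(evidence))
```

Because the list is ordered, the review stops at the earliest unanswered item, which matches how the priorities are meant to be read.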

Common mistakes and troubleshooting tips (root cause, fix, and how to prevent repeats)

When Open RAN selection goes wrong, it often does so in predictable ways. The best teams treat these as design flaws in the process, not just “bad optics.”

Pitfall 1: Choosing SR for a link that sits closer to the loss limit than the datasheet reach suggests

Root cause: Underestimated connector loss, unmeasured patch cords, or optimistic reach assumptions for OM4. Solution: remeasure fiber length and end-to-end loss; add a margin (commonly 3 dB or more), and if needed, move to a higher-reach optic class or reduce patch complexity.

Pitfall 2: Module works but alarms and monitoring are missing or misleading

Root cause: DOM behavior differs across vendor SKUs; switch firmware may interpret thresholds differently, or the monitoring system may not map DOM fields. Solution: during acceptance testing, verify DOM telemetry visibility in the same management path used in production; standardize on modules that expose consistent alarm registers.
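
A quick way to catch this pitfall during acceptance is to check that every DOM field you depend on actually arrives with a value in the production monitoring path. The field names below are hypothetical; map them to whatever your monitoring system exposes.

```python
# Hypothetical field names; adapt to your monitoring system's schema.
REQUIRED_DOM_FIELDS = (
    "temperature_c",
    "vcc_v",
    "tx_bias_ma",
    "tx_power_dbm",
    "rx_power_dbm",
)


def missing_dom_fields(reading):
    """List required DOM fields that are absent or None in a reading."""
    return [name for name in REQUIRED_DOM_FIELDS if reading.get(name) is None]


# Hypothetical payload as seen by the monitoring system for one port.
nms_reading = {
    "temperature_c": 41.5,
    "vcc_v": 3.29,
    "tx_bias_ma": 6.1,
    "tx_power_dbm": -2.4,
    "rx_power_dbm": None,   # field present but never populated
}
print(missing_dom_fields(nms_reading))
```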

Pitfall 3: Intermittent link flaps after maintenance or cleaning

Root cause: Dirty connectors, damaged ferrules, or incorrect polarity/duplex pairing. Solution: inspect with a scope, clean with approved methods, and confirm Tx/Rx polarity and patching discipline. Re-terminate or replace any connector showing scratches.

Pitfall 4: Temperature-related degradation in edge enclosures

Root cause: Using commercial temperature modules in warmer DU rooms or enclosures with poor airflow, causing laser bias drift and increased error rates. Solution: select industrial temperature-rated optics and improve airflow; monitor DOM for early drift.
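
The grade decision can be sketched as a range check with headroom. The ranges below (0 to 70 °C commercial, -40 to 85 °C industrial) are commonly quoted case-temperature ratings but are treated here as assumptions, as are the 15 °C case rise over ambient and the 5 °C guard band. Confirm all of them against the datasheet for your SKU and against measurements in the enclosure.

```python
# Assumed case-temperature ranges in degrees C; confirm per datasheet.
ASSUMED_GRADES_C = (
    ("commercial", 0.0, 70.0),
    ("industrial", -40.0, 85.0),
)


def pick_temperature_grade(min_ambient_c, max_ambient_c,
                           case_rise_c=15.0, guard_c=5.0):
    """Return the first grade covering the site's range, else None."""
    worst_case_hot = max_ambient_c + case_rise_c + guard_c
    for name, low, high in ASSUMED_GRADES_C:
        if min_ambient_c >= low and worst_case_hot <= high:
            return name
    return None


print(pick_temperature_grade(5, 45))     # conditioned room
print(pick_temperature_grade(-10, 55))   # outdoor-style enclosure
```

A `None` result means neither assumed grade covers the site, so the fix is airflow or enclosure design rather than a different module.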

Cost and ROI note: balancing OEM certainty with third-party flexibility

In typical procurement channels, OEM 10G SFP+ SR modules often cost materially more than third-party equivalents, while QSFP-class optics can be more sensitive to lead times and firmware compatibility. A realistic ROI lens includes not only unit price but also total cost of ownership (TCO): downtime, truck rolls, and troubleshooting time when DOM telemetry is incomplete.

In practice, I have seen third-party modules reduce purchase cost but increase operational overhead if compatibility and DOM expectations are not validated in the acceptance test plan. The safest ROI comes from standardizing module SKUs per switch family, keeping a small “known-good” inventory of spares, and measuring failure rates over the first maintenance cycle rather than relying on vendor claims.
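
The trade-off can be written as simple arithmetic: purchase cost plus the expected cost of incidents. Every number in the example is a placeholder chosen to show the calculation, not market data; substitute your own quotes and the failure rates you measure over the first maintenance cycle.

```python
def total_cost(unit_price, quantity, expected_incidents, cost_per_incident):
    """Purchase cost plus expected incident cost over the period."""
    return unit_price * quantity + expected_incidents * cost_per_incident


# Placeholder inputs for illustration only (arbitrary currency units).
option_a = total_cost(unit_price=100, quantity=48,
                      expected_incidents=1, cost_per_incident=500)
option_b = total_cost(unit_price=40, quantity=48,
                      expected_incidents=4, cost_per_incident=500)
print(option_a, option_b)
```

The result swings on the incident terms, which is why measured failure rates and realistic truck-roll costs matter more than the unit price gap.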

FAQ: Open RAN selection questions engineers ask before ordering

Q1: Do I need DOM for Open RAN selection?

DOM is strongly recommended if your operations team relies on proactive monitoring. Without it, you may detect failures only after link loss, which increases mean time to repair. Confirm that your switch and monitoring stack can ingest DOM fields reliably for the specific SKU.

Q2: Is 850 nm always the right choice for fronthaul?

Not always. 850 nm SR optics fit short-reach multimode links, but if your spans approach the reach limit or you use single-mode fiber, 1310/1550 nm optics are usually safer. The decisive factor is measured optical loss plus margin, not distance alone.

Q3: Can I mix OEM and third-party transceivers on the same switch?

You can, but it depends on the switch firmware’s optics policy and how it validates DOM and diagnostics. A mixed environment can work, yet it complicates troubleshooting if alarm behavior differs. For stability, standardize per switch model and run a pilot to confirm compatibility.

Q4: What is the fastest way to validate an optics choice?

Do an acceptance test that includes link bring-up, DOM telemetry capture, and sustained error-counter monitoring under realistic traffic patterns. If possible, run the test for long enough to reveal early drift rather than a single-minute “it links” check.

Q5: What should I monitor after deployment?

Track receive power alarms, laser bias current (if available), and interface error counters like CRC/FCS errors. Also monitor environmental indicators like enclosure temperature and airflow changes, because thermal stress can manifest as gradual optical drift.

Q6: How do I avoid transceiver failures caused by fiber issues?

Implement connector inspection and cleaning procedures, and verify polarity and patching discipline during every maintenance event. Many “optics failures” are actually contamination or connector damage that a scope would reveal immediately.

Open