When an Open RAN rollout slips, the radio software is rarely the cause. In practice, the bottleneck is often optics: mismatched wavelengths, missing Digital Optical Monitoring (DOM), or fiber plants that only look clean on a cable tester. This article helps telecom and infrastructure teams select Open RAN optics for 2026 by walking through a real deployment workflow, selection criteria, and troubleshooting outcomes.

Problem and challenge: why optics failed during an Open RAN beta

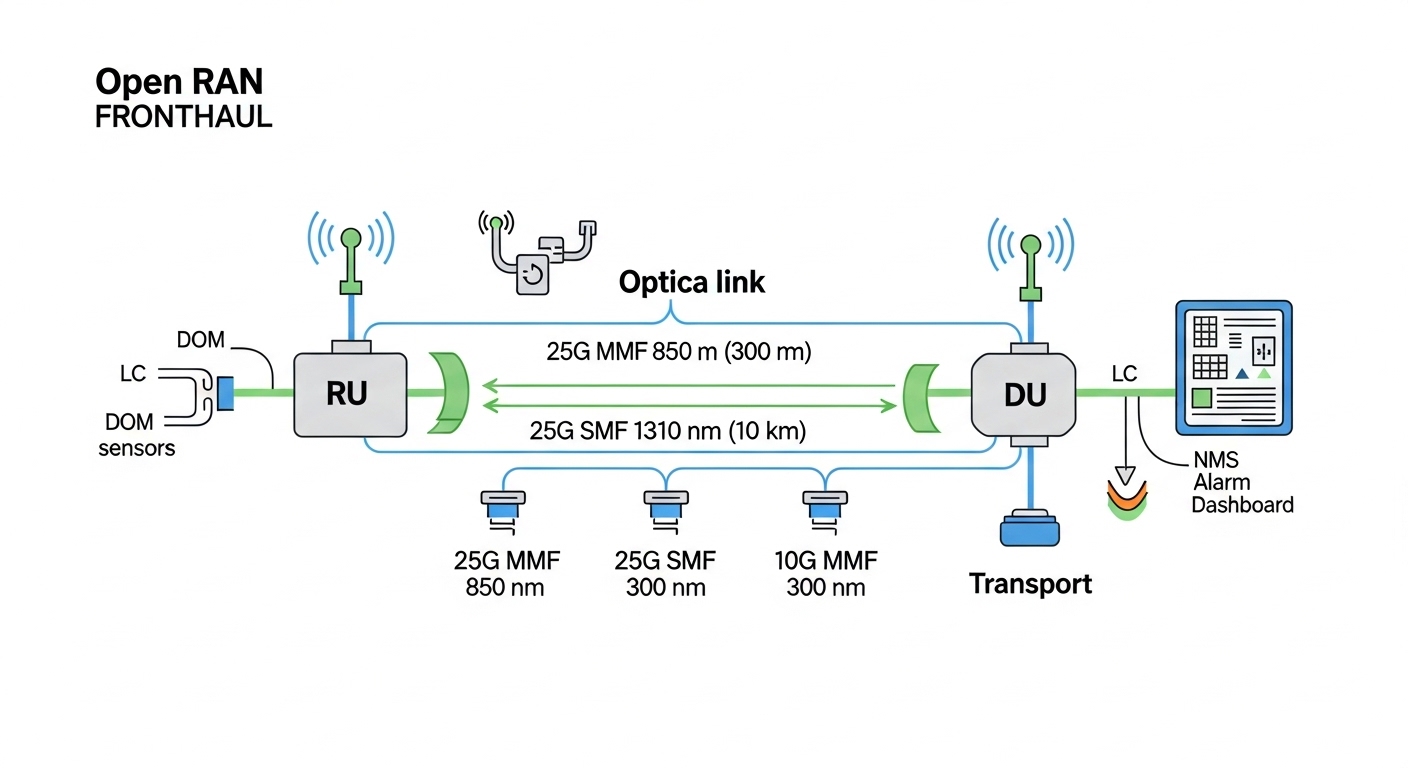

In a multi-vendor Open RAN beta, the operator targeted a 3-tier topology with distributed units (DU) at aggregation sites and radio units (RU) at rooftops. The original plan used a mix of vendor optics to hit timeline milestones. By week four, two symptoms appeared: intermittent link flaps on fronthaul and a gradual rise in CRC errors on the transport side. Field teams traced recurring alarms to marginal optical power levels, connector contamination, and at least one transceiver that did not provide consistent DOM thresholds to the switch.

The environment included 25G Ethernet fronthaul segments and 10G management/control uplinks. The network team needed optics that met the DU aggregation switch vendor's compatibility requirements, supported DOM reads for alarms, and tolerated the thermal swings of cabinets in coastal weather. The goal for 2026 was simple: standardize optics behavior across vendors while keeping service impact predictable.

Environment specs: what the fiber and switches demanded

The operator standardized on two optics “families” aligned to IEEE Ethernet optics behavior and typical fronthaul transport constraints. For fronthaul, they ran 25G over multimode fiber (MMF) in shorter spans and over single-mode fiber (SMF) where distance and growth required it. For management and OAM, 10G links were used to reduce cost while maintaining operational coverage.

Key constraints were validated in the field using OTDR traces, link budget calculations, and switch transceiver diagnostics. They also required that each module expose DOM data in a way the switch could interpret for thresholding (for example, high/low Rx power, laser bias, and temperature). When DOM reporting was inconsistent, the team could not automate “early warning” actions.
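The thresholding described above can be sketched in a few lines of Python. This is a minimal illustration, not the operator's tooling: the `Thresholds` and `classify` names are hypothetical, and the Rx power limits are placeholder values. Real limits come from the module datasheet or from the thresholds the module itself reports.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    OK = "ok"
    WARNING = "warning"
    ALARM = "alarm"


@dataclass(frozen=True)
class Thresholds:
    """High/low alarm and warning limits for one DOM field."""
    low_alarm: float
    low_warn: float
    high_warn: float
    high_alarm: float


def classify(value: float, t: Thresholds) -> Severity:
    """Return the severity of a single DOM reading against its limits."""
    if value <= t.low_alarm or value >= t.high_alarm:
        return Severity.ALARM
    if value <= t.low_warn or value >= t.high_warn:
        return Severity.WARNING
    return Severity.OK


# Placeholder Rx power limits in dBm (assumption, not from any datasheet).
RX_POWER_DBM = Thresholds(low_alarm=-14.0, low_warn=-12.0,
                          high_warn=0.0, high_alarm=2.0)

for reading in (-6.5, -12.8, -15.1):
    print(reading, classify(reading, RX_POWER_DBM).value)
```

The warning band is what makes "early warning" possible: a reading that crosses the warning limit but not the alarm limit can open a ticket before the link starts dropping frames.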

| Link role | Data rate | Fiber type | Target reach | Wavelength | Module example models | Connector | DOM support | Operating temp |
|---|---|---|---|---|---|---|---|---|
| Fronthaul | 25G | SMF | 10 km | 1310 nm | Cisco SFP-25G-LR, FS.com SFP-25GLR | LC | Yes (threshold alarms required) | -5 to 70 °C typical |
| Fronthaul | 25G | MMF | 70 m (OM3) / 100 m (OM4) | 850 nm | FS.com SFP-25GSR, Cisco SFP-25G-SR | LC | Yes (DOM reads validated) | 0 to 70 °C typical |
| Management/OAM | 10G | MMF | 300 m (OM3) | 850 nm | Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, FS.com SFP-10GSR-85 | LC | Yes (temperature and Rx power) | 0 to 70 °C typical |

Selection targets were based on practical interoperability and on documented behavior: IEEE 802.3 for the optical specifications, and vendor datasheets for each transceiver's electrical and optical limits across the SFP+ and SFP28 form factors. For field behavior and monitoring expectations, the team also used switch vendor transceiver compatibility notes and third-party optics interoperability reports.

Chosen solution: how they standardized Open RAN optics without vendor lock-in

The operator selected optics in a “known-good” matrix: module type, DOM behavior, and switch compatibility were validated before bulk rollout. Rather than mixing optics freely, they built a compatibility list per switch platform and per optics cage type, then required DOM reads to match expected ranges. This reduced the risk of silent failures where the link appears up but alarms cannot trigger.

Solution design principles

- Distance-first choice: SMF for longer fronthaul segments (10 km targets) and MMF for shorter rooftop-to-site runs, using 1310 nm and 850 nm families respectively. The 300 m MMF target applies to 10GBASE-SR on OM3; 25GBASE-SR is specified for 70 m on OM3 and 100 m on OM4.

- DOM as an operational requirement: DOM thresholds had to be readable and stable so the NMS could preemptively alert on aging optics or fiber degradation.

- Switch compatibility gating: each module SKU was tested in the exact DU aggregation switch model and firmware revision used in production.

- Thermal tolerance: cabinets in the coastal environment required modules that stayed within temperature operating limits during summer peaks.

Why specific module categories won

For SMF fronthaul, the operator prioritized 1310 nm LR-class SFP28 modules with predictable link budgets and stable DOM reporting. For MMF, they used 850 nm SR-class modules selected against the measured plant loss, not just the nominal reach. On management links, they standardized 10G 850 nm optics where fiber plant and budget allowed, reserving higher-cost optics for the fronthaul, where performance margin mattered most.

Pro Tip: In Open RAN optics deployments, “it links at boot” is not a pass criterion. Validate DOM stability over time: poll temperature and Rx power every 30 to 60 seconds for at least 30 minutes under normal traffic load. A module that later drifts outside threshold ranges can cause late link flaps that are invisible during initial commissioning.
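The check in the tip above can be sketched as a small Python function. It is a hedged illustration: `dom_stability`, its return labels, and the `max_drift` value are assumptions made for this example, and how the samples are collected (SNMP, CLI scraping, streaming telemetry) is left out because it depends on the switch platform. Treating a perfectly constant value as suspicious is also a judgment call, since a coarse sensor can legitimately report the same value for a long time.

```python
from statistics import mean
from typing import Optional, Sequence


def dom_stability(samples: Sequence[Optional[float]],
                  max_drift: float,
                  min_samples: int = 30) -> str:
    """Classify one DOM field polled at a fixed interval.

    samples:   readings in time order; None marks a failed read.
    max_drift: largest acceptable change between the first and last
               quarter of the window, in the field's own unit.
    """
    if any(s is None for s in samples):
        return "missing-reads"
    if len(samples) < min_samples:
        return "too-few-samples"
    if max(samples) == min(samples):
        # A value that never changes may be a cached or unmapped reading.
        return "static"
    quarter = max(1, len(samples) // 4)
    drift = mean(samples[-quarter:]) - mean(samples[:quarter])
    return "drifting" if abs(drift) > max_drift else "stable"


# Synthetic Rx power traces in dBm: 60 samples = 30 minutes at 30 s polling.
steady = [-6.0 + 0.01 * (i % 3) for i in range(60)]
fading = [-6.0 - 0.05 * i for i in range(60)]

print(dom_stability(steady, max_drift=0.5))
print(dom_stability(fading, max_drift=0.5))
```

Comparing the first and last quarter of the window, rather than only the minimum and maximum, keeps a single noisy sample from failing an otherwise healthy module.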

Implementation steps: the measured rollout workflow

The team treated optics selection like an engineering qualification program. They separated “lab compatibility” from “field performance” and captured metrics that correlated with outages rather than assuming optics would behave the same across sites. Their steps below are the workflow a field engineer would recognize.

Map the fiber plant and budget real margins

- Run OTDR from each RU patch panel to the DU cage termination and record event loss and reflectance anomalies.

- Measure connector insertion loss with a light source and power meter, then confirm end-face cleanliness after any reconnection.

- Compute a conservative link budget: include patch cords, splices, connector loss, and aging margin.
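The link budget in the last step is simple arithmetic, and writing it down as code keeps every site calculated the same way. The sketch below is illustrative only: the `LinkBudget` class is hypothetical and every number in the example is a placeholder, not a datasheet or field value. Where OTDR and power-meter measurements exist, they should replace the per-element estimates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkBudget:
    tx_power_min_dbm: float      # worst-case transmit power (datasheet)
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity (datasheet)
    fiber_km: float
    fiber_loss_db_per_km: float
    connectors: int              # mated pairs, including patch cords
    loss_per_connector_db: float
    splices: int
    loss_per_splice_db: float
    aging_margin_db: float

    def plant_loss_db(self) -> float:
        """Total passive loss between transmitter and receiver."""
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.loss_per_connector_db
                + self.splices * self.loss_per_splice_db)

    def margin_db(self) -> float:
        """Remaining margin after plant loss and aging allowance."""
        available = self.tx_power_min_dbm - self.rx_sensitivity_dbm
        return available - self.plant_loss_db() - self.aging_margin_db


# Placeholder inputs for one SMF fronthaul span (assumptions).
budget = LinkBudget(tx_power_min_dbm=-5.0, rx_sensitivity_dbm=-12.0,
                    fiber_km=8.0, fiber_loss_db_per_km=0.4,
                    connectors=4, loss_per_connector_db=0.5,
                    splices=2, loss_per_splice_db=0.1,
                    aging_margin_db=1.0)

print(f"plant loss {budget.plant_loss_db():.2f} dB, "
      f"margin {budget.margin_db():.2f} dB")
```

A small positive margin like the one in this example is exactly the "links at boot, flaps in summer" case: the link is within budget on paper, but two extra patch cords or one dirty connector would consume it.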

Build a transceiver compatibility matrix

- Pick the DU aggregation switch model and firmware version.

- Insert candidate optics modules and verify link bring-up, speed negotiation behavior, and DOM readout.

- Confirm that DOM alarms (high/low Rx power, temperature) populate in the NMS. If DOM is present but not mapped, you will lose early warning.
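One way to record the outcome of these checks is a lookup keyed by switch model, firmware revision, and module part number. The following is a minimal sketch under that assumption; the switch name, firmware strings, and part numbers are invented placeholders, and a real matrix would more likely live in a spreadsheet or inventory database.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Qualification:
    link_up: bool        # link bring-up at the expected speed
    dom_readable: bool   # switch reads temperature, Rx power, bias
    alarms_in_nms: bool  # DOM alarms actually appear in the NMS

    @property
    def approved(self) -> bool:
        return self.link_up and self.dom_readable and self.alarms_in_nms


# Key: (switch model, firmware revision, module part number).
Matrix = Dict[Tuple[str, str, str], Qualification]

MATRIX: Matrix = {
    ("AGG-SW-A", "1.2.3", "EXAMPLE-25G-LR"): Qualification(True, True, True),
    # DOM is present but not mapped into the NMS, so no early warning.
    ("AGG-SW-A", "1.2.3", "EXAMPLE-25G-SR"): Qualification(True, True, False),
}


def is_approved(matrix: Matrix, switch: str, firmware: str,
                part_number: str) -> bool:
    """Untested combinations are rejected, not assumed to work."""
    result = matrix.get((switch, firmware, part_number))
    return result is not None and result.approved


print(is_approved(MATRIX, "AGG-SW-A", "1.2.3", "EXAMPLE-25G-LR"))
print(is_approved(MATRIX, "AGG-SW-A", "1.3.0", "EXAMPLE-25G-LR"))
```

Including the firmware revision in the key is deliberate: a firmware upgrade produces a lookup miss, which forces re-qualification instead of silently inheriting the old result.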

Contamination and handling controls

- Adopt a standard cleaning kit: lint-free wipes, isopropyl alcohol where approved, and compressed air usage rules.

- Use an inspection scope after every fiber re-termination and at least once per week in high-change sites.

- Replace any patch cords with repeated high-loss events or visible end-face damage.

Staged deployment with time-based validation

- Deploy to three representative sites first: one with best fiber quality, one average, one worst-case.

- Monitor error counters and optical telemetry for 72 hours under normal traffic.

- Only then expand to the full rollout, using the same optics part numbers and approved patch cord SKUs.
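The expansion decision can be made explicit with a small gate over the pilot sites. This sketch assumes the counters have already been collected as deltas over the observation window; the `SiteSoak` record, the zero-tolerance defaults, and the site names are illustrative choices, not the operator's actual acceptance criteria.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class SiteSoak:
    name: str
    hours_observed: float
    link_flaps: int   # flaps during the window
    crc_errors: int   # CRC error counter delta during the window
    dom_alerts: int   # DOM threshold alerts during the window


def blocking_sites(sites: Iterable[SiteSoak],
                   min_hours: float = 72.0,
                   max_crc_errors: int = 0) -> List[str]:
    """Return the names of pilot sites that should block the full rollout."""
    blocked = []
    for s in sites:
        if (s.hours_observed < min_hours
                or s.link_flaps > 0
                or s.crc_errors > max_crc_errors
                or s.dom_alerts > 0):
            blocked.append(s.name)
    return blocked


pilot = [
    SiteSoak("best-fiber", 72.0, 0, 0, 0),
    SiteSoak("average", 74.5, 0, 0, 0),
    SiteSoak("worst-case", 72.0, 1, 37, 2),
]

print(blocking_sites(pilot))
```

An empty list means the rollout can expand; anything else names the sites whose fiber or optics need another look first.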

Measured results after standardization

After adopting the new optics standard, the operator saw a measurable reduction in fronthaul instability. Link flaps dropped from 6 events per week during early beta to 0 to 1 events per month once the compatibility matrix and DOM validation were enforced. CRC errors on fronthaul segments fell by over 80%, and the mean time to detect optics degradation improved because Rx power and temperature thresholds began triggering alerts. In addition, the team reduced truck rolls for "mystery outages" by approximately 25% by catching degrading optics earlier via telemetry.

Common mistakes and troubleshooting tips from the field

Most optics issues are predictable once you know the failure modes. Below are the recurring mistakes the operator made before standardization, with root cause and the practical fix.

Link comes up, but DOM is unusable

Root cause: The transceiver exposes DOM data, but the DU switch expects a specific DOM mapping, threshold format, or vendor-specific calibration behavior. Result: alarms do not fire, and the NMS shows missing or static values.

Solution: Validate DOM integration during acceptance testing. Confirm that the switch reads temperature and Rx power and that thresholds are configurable and meaningful in the NMS.

“Wrong wavelength class” or mis-labeled inventory

Root cause: A 1310 nm module is installed where an 850 nm MMF path was engineered, or vice versa, because inventory labels have drifted from what is actually in the bin. Even if the link comes up, optical power levels can be out of spec for the plant.

Solution: Enforce part-number scanning at installation, and require a fiber-path check (SMF vs MMF) before optics insertion. Keep a site-specific bill of materials and audit it quarterly.
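The scan-and-check step can be sketched as a lookup against the site bill of materials. This is a minimal illustration with invented part numbers; `check_install` and `APPROVED_PARTS` are hypothetical names, and a real deployment would read the bill of materials from the inventory system rather than a hard-coded dictionary.

```python
from enum import Enum
from typing import Dict, Optional


class Fiber(Enum):
    SMF = "SMF"
    MMF = "MMF"


# Hypothetical site bill of materials: part number -> validated fiber type.
APPROVED_PARTS: Dict[str, Fiber] = {
    "EXAMPLE-25G-LR": Fiber.SMF,
    "EXAMPLE-25G-SR": Fiber.MMF,
}


def check_install(scanned_part: str, path_fiber: Fiber,
                  approved: Dict[str, Fiber] = APPROVED_PARTS) -> Optional[str]:
    """Return None if the module may be inserted, else the reason it may not."""
    part = scanned_part.strip().upper()
    expected = approved.get(part)
    if expected is None:
        return f"{part} is not on the site bill of materials"
    if expected is not path_fiber:
        return (f"{part} is validated for {expected.value}, "
                f"but this path is {path_fiber.value}")
    return None


print(check_install("example-25g-lr ", Fiber.SMF))
print(check_install("EXAMPLE-25G-LR", Fiber.MMF))
print(check_install("UNKNOWN-PART", Fiber.SMF))
```

Returning a reason string instead of a bare boolean gives the installer something actionable at the cabinet, and the same message can be logged for the quarterly audit.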

Connector contamination after maintenance

Root cause: End-face dirt or micro-scratches create excess loss that only becomes visible under certain traffic patterns or temperature shifts. This leads to intermittent errors rather than immediate failure.

Solution: Inspect every connector end-face with a scope before re-testing, clean with an approved process, and replace patch cords that show recurring high-loss events.

Overstated reach assumptions

Root cause: Teams select optics based on nominal reach without accounting for patch cord quality, splice count, and aging margin. In dense rooftop deployments, fiber plant loss accumulates faster than expected.

Solution: Use OTDR-derived loss and add a conservative margin. If the plant is near the limit, switch to the higher-reach optics class or reduce patch cord count.

Cost and ROI note: what it really costs to standardize Open RAN optics

Prices vary by vendor and volume, but realistic ranges for enterprise and telecom procurement are roughly $20 to $60 each for 10G SR (850 nm) SFP+ modules, $60 to $140 for 25G SR SFP28, and $120 to $300 for 25G LR (1310 nm) SFP28. OEM modules can cost more, but the ROI often comes from fewer truck rolls and faster fault isolation when DOM telemetry is reliable.

For TCO, include not just module purchase price, but also spares inventory, cleaning supplies, inspection scope labor time, and the cost of downtime during fronthaul instability. In this case, the operator’s reduced maintenance trips and fewer outage minutes offset the incremental validation work within a single rollout cycle.

Selection criteria checklist for Open RAN optics in 2026

Use this ordered checklist during procurement and site acceptance. It reflects the factors engineers weigh when balancing interoperability, performance, and operational visibility.

- Distance and fiber type: confirm SMF vs MMF, then match wavelength class (1310 nm for LR, 850 nm for SR) and target reach.

- Switch compatibility: validate against the exact DU aggregation switch model and firmware revision.

- DOM support and alarm mapping: require stable temperature and Rx power reads, and confirm NMS threshold behavior.

- Operating temperature and cabinet conditions: check module temperature range against measured cabinet intake and solar loading.

- Power budget and link margin: use OTDR and connector measurements; do not rely on nominal reach alone.

- Vendor lock-in risk: if using third-party optics, require a compatibility matrix and a return/RMA process with traceability.

- Spare strategy: keep spares that match the validated part numbers, not “equivalent” optics with different DOM behavior.

FAQ

What makes Open RAN optics different from generic data center optics?

Open RAN optics deployments emphasize operational visibility: reliable DOM telemetry and predictable error behavior on fronthaul. In many cases, switches and DU platforms require consistent DOM mapping for alarms, not just basic link bring-up.

Can third-party Open RAN optics work in a multi-vendor DU environment?

Yes, but only after platform-specific validation. The key is to test the exact switch model and firmware version for DOM reads, threshold handling, and stable link performance under real traffic and temperature conditions.

How do I choose between 850 nm and 1310 nm optics?

Pick based on fiber type and distance. 850 nm SR optics target shorter MMF segments (300 m for 10GBASE-SR on OM3, but only 70 m on OM3 or 100 m on OM4 for 25GBASE-SR), while 1310 nm LR optics target longer SMF spans (often around 10 km with appropriate budgeting).

What DOM fields should I require during acceptance testing?

At minimum, validate temperature and Rx optical power reads and ensure alarms are meaningful in the NMS. Also confirm that the switch does not treat DOM as “present but unmapped,” which prevents early warning.

Why do optics issues show up as intermittent errors instead of total link loss?

Intermittent CRC and flapping commonly result from marginal optical power, connector contamination, or reach being slightly over budget. Temperature-driven drift can push a borderline link beyond thresholds, creating errors that appear only after hours or days.

What is the fastest way to troubleshoot an Open RAN optics outage?

Start with DOM telemetry and error counters, then inspect and clean the fiber ends. If DOM indicates power drift or temperature anomalies, swap optics using validated spare part numbers and re-test with the same test traffic profile.

If you want to replicate this approach, the next step is to align optics selection with your fiber plant and switch acceptance criteria, starting with a documented optics compatibility matrix.

Expert author bio: I have led field qualification of pluggable optics in telecom access and aggregation networks, focusing on DOM-driven alarm reliability and fiber plant measurement workflows. My work blends IEEE-aligned interoperability testing with practical troubleshooting methods used during live deployments.