A mid-sized network team recently faced a capacity crunch at the metro edge: new 100G services had to land without rebuilding every switch line card. They chose a disaggregated optical networking approach and standardized on an OLS transceiver for coherent and reach-flexible links. This article helps network engineers and field ops teams evaluate OLS transceivers, plan implementation, and avoid the most common reliability traps.

Problem / Challenge: scaling bandwidth without re-cabling the world

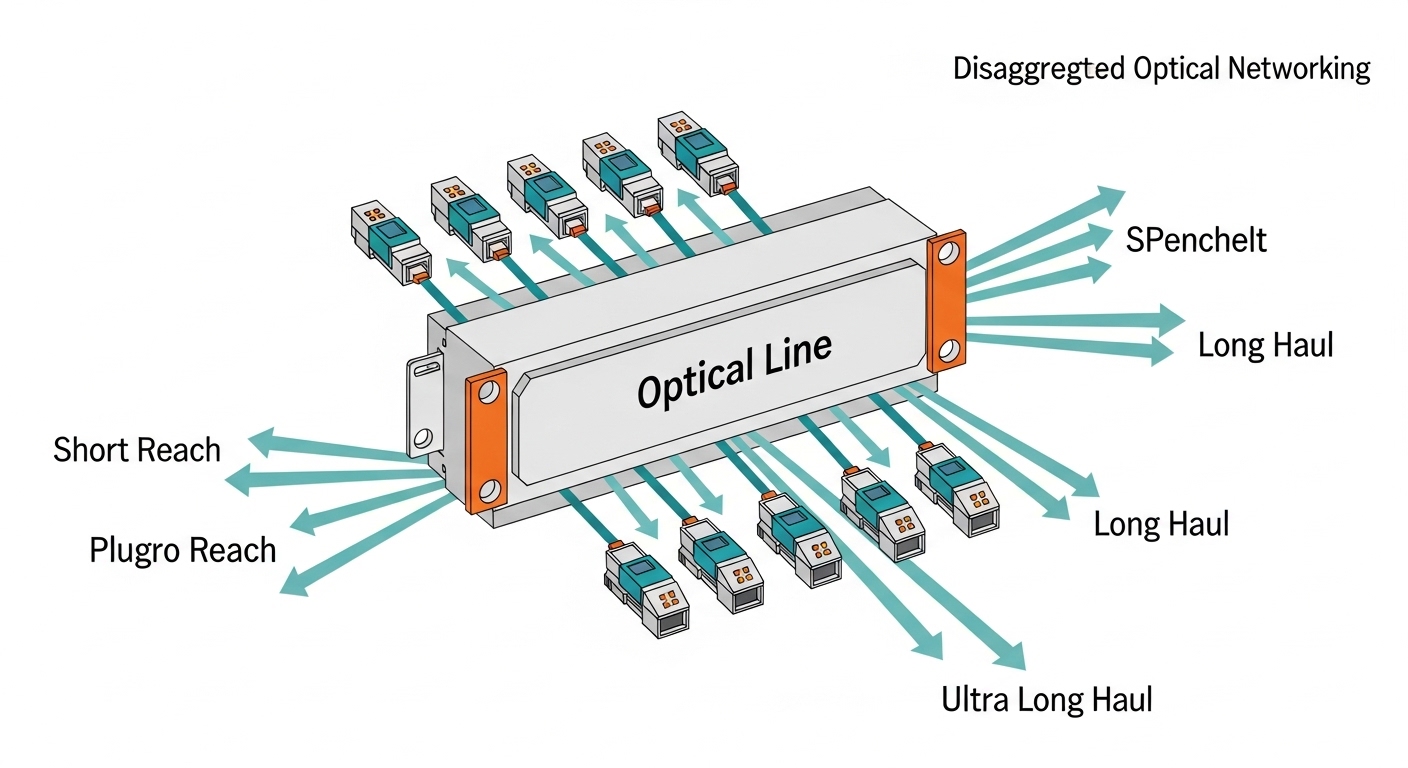

The challenge started in a 3-ring metro topology where dark fiber was already lit for older 10G/40G services. The team needed to add 100G per site while keeping operational risk low: no extended outages for fiber rework, and no long lead-time dependencies on a single switch vendor. Their disaggregated optical networking model separated optics from the switching fabric, using an Open Line System (OLS) concept to standardize the optical line side.

In practice, the “line” layer had to support multiple link distances and channel plans across sites. The team also had to meet performance targets for latency stability, alarm thresholds, and optical power budgets. That pushed them toward transceivers with deterministic behavior under temperature swings and a management plane that could reliably surface DOM telemetry for proactive maintenance.

From an engineering standpoint, they treated the OLS transceiver as a controlled component. It had to be compatible with the OLS shelf optics, meet the optical and electrical requirements of Ethernet transport (timing and framing behavior), and follow industry expectations for optics management and diagnostics. For optical layer behavior, they cross-checked vendor datasheets against guidance drawn from IEEE Ethernet transport requirements. For optics control, they relied on vendor DOM documentation and the digital interface patterns common across pluggable optics. [Source: IEEE 802.3 Standards]

Environment Specs: the exact metro edge constraints that drove the choice

The deployment ran across eight metro sites, each with different fiber plant quality and patch-panel layouts. The environment included a mix of single-mode fiber runs and patch lengths that varied by up to 12 meters per site. The team’s OLS shelves were installed in standard 19-inch racks with constrained airflow, and they saw front-to-back temperature gradients that could exceed 8 °C during summer peaks.

Operational constraints were explicit. They required transceivers to operate across an ambient range of at least -5 °C to 70 °C, support automated inventory and alarms, and maintain link stability during planned maintenance windows. They also set a monitoring requirement: the optics must expose DOM metrics (temperature, voltage, bias current, and optical power) with a polling cadence compatible with their NOC tooling.

For distance, they planned three reach classes: short intra-building runs up to 500 m, metro runs around 10 km, and longer runs near 25 km. They validated optical budgets using vendor link calculators and then verified them in the lab with representative fiber attenuation and connector loss models. Even where coherent optics were an option, they treated the OLS transceiver as the main source of optical performance variation, and therefore as the component to standardize.
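
The budget check described above can be sketched in a few lines. This is a minimal illustration, not the vendor link calculator the team used: the `LinkBudget` class, the launch power and sensitivity figures, the per-kilometer attenuation, and the 1 dB aging allowance are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Simple point-to-point optical budget (all values are assumptions)."""
    tx_power_dbm: float          # minimum launch power from the datasheet
    rx_sensitivity_dbm: float    # worst-case receiver sensitivity
    fiber_km: float              # fiber length
    fiber_loss_db_per_km: float  # attenuation of the fiber plant
    connectors: int              # number of mated connector pairs
    connector_loss_db: float     # loss per mated pair
    aging_penalty_db: float = 1.0  # assumed allowance for aging and repairs

    def total_loss_db(self) -> float:
        return (self.fiber_km * self.fiber_loss_db_per_km
                + self.connectors * self.connector_loss_db
                + self.aging_penalty_db)

    def margin_db(self) -> float:
        # Available budget minus everything the path is expected to lose.
        return (self.tx_power_dbm - self.rx_sensitivity_dbm) - self.total_loss_db()


# Hypothetical inputs for the three reach classes (not vendor data).
reach_classes = {
    "0.5 km": LinkBudget(-1.0, -10.0, 0.5, 0.35, 4, 0.5),
    "10 km": LinkBudget(-1.0, -10.0, 10.0, 0.35, 4, 0.5),
    "25 km": LinkBudget(0.0, -18.0, 25.0, 0.25, 6, 0.5),
}

for name, budget in reach_classes.items():
    print(f"{name}: loss {budget.total_loss_db():.2f} dB, "
          f"margin {budget.margin_db():.2f} dB")
```

A positive margin only says the link should close on paper. The lab verification with representative fiber is what confirms it.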

| Key spec | Target requirement (deployment) | Example OLS transceiver class used | Notes / limits |
|---|---|---|---|
| Data rate | 100G per wavelength | Coherent-capable or reach-flexible 100G pluggable | Exact modulation and optics vary by vendor and shelf |
| Wavelength / channel | Metro DWDM-ready plan | Vendor-defined grid support | Must align with OLS shelf channelization |
| Reach | 0.5 km / 10 km / 25 km classes | Reach-flexible selections per site | Not all transceivers cover all reaches |
| Connector | LC duplex (typical) | LC pluggable interface | Physical compatibility with the OLS shelf is mandatory |
| Operating temperature | -5 °C to 70 °C ambient tolerance | Industrial or extended range optics | Check datasheet temperature derating curves |
| DOM / telemetry | Temperature, voltage, bias, TX/RX power | Digital optical monitoring (DOM) | Polling and alert thresholds must match NOC tooling |
| Management interface | OLS shelf reads transceiver diagnostics | Vendor DOM implementation | DOM format differences can break automation |
| Alarm behavior | Detect out-of-range optics before hard failures | Vendor-defined thresholding | Validate alarm mapping in staging |

Update date: 2026-04-30. Specifications and compatibility details vary by OLS shelf generation, vendor, and firmware; always confirm against the exact shelf and transceiver part numbers from the vendor datasheets.

Pro Tip: In disaggregated optical networking, the biggest operational win is not just selecting an OLS transceiver that “works,” but selecting one whose DOM telemetry aligns with your alarm logic. Two transceivers can both pass link bring-up while exposing different DOM scaling or threshold behavior, leading to silent degradations. Validate DOM units and alarm triggers during staging, not after rollout.
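
To make the scaling concern concrete, here is a minimal sketch of DOM normalization. It assumes SFF-8472-style raw encodings (temperature as a signed 16-bit value in 1/256 °C, voltage in 100 µV steps, bias current in 2 µA steps, optical power in 0.1 µW steps). Modules managed through other interfaces, including many 100G coherent pluggables, may use different fields or scaling, so treat the function names and the `sample` values as hypothetical and check the vendor documentation for your part.

```python
import math


def to_signed16(raw: int) -> int:
    """Interpret a 16-bit register value as two's-complement."""
    return raw - 0x10000 if raw & 0x8000 else raw


def tenth_uw_to_dbm(raw: int) -> float:
    """Convert optical power in 0.1 uW units to dBm (-inf for zero power)."""
    if raw <= 0:
        return float("-inf")
    milliwatts = raw / 10_000.0  # 0.1 uW units -> mW
    return 10.0 * math.log10(milliwatts)


def normalize_dom(raw: dict) -> dict:
    """Map raw register values to engineering units (assumed scaling)."""
    return {
        "temperature_c": to_signed16(raw["temperature"]) / 256.0,
        "voltage_v": raw["voltage"] * 100e-6,
        "bias_ma": raw["bias"] * 2e-3,
        "tx_power_dbm": tenth_uw_to_dbm(raw["tx_power"]),
        "rx_power_dbm": tenth_uw_to_dbm(raw["rx_power"]),
    }


# Hypothetical raw readings, for illustration only.
sample = {"temperature": 0x2D80, "voltage": 33000, "bias": 20000,
          "tx_power": 10000, "rx_power": 1000}
print(normalize_dom(sample))
```

If a second vendor reports power already in dBm, or temperature in whole degrees, feeding its values through the same function would produce plausible-looking but wrong numbers. That is the silent-degradation case the tip warns about.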

Chosen Solution & Why: standardizing the OLS transceiver at the optical line layer

The team standardized on an OLS transceiver family that matched the OLS shelf’s electrical interface and optics control expectations, while supporting the three reach classes they needed. They avoided “one size fits all” assumptions because reach-flexible optics often come with tradeoffs: tighter optical power budgets, different calibration requirements, or reduced tolerance for connector aging.

They also prioritized management compatibility. The NOC required consistent DOM readings for daily health scoring and alerting. In the real deployment, the team configured polling intervals aligned with their telemetry pipeline and used thresholds that correlated with observed BER/OSNR trends during controlled link stress tests. While DOM is commonly discussed as “diagnostics,” in practice it becomes the early-warning system that prevents repeat truck rolls.

For engineering validation, they cross-checked transceiver vendor datasheets and shelf interface documentation. Examples of commonly referenced 10G optics families (not for the same data rate, but for interface patterns and DOM expectations) include Cisco SFP-10G-SR and Finisar FTLX8571D3BCL, and third-party optics from FS.com such as SFP-10GSR variants. These aren’t direct substitutes for an OLS 100G coherent class, but they illustrate how vendor datasheets define electrical, optical, and DOM ranges. [Source: Cisco Support and Datasheets]

Implementation steps: how they rolled out safely across eight sites

- Build a staging lab that mirrors rack airflow. They reproduced the front-to-back temperature gradient by placing a fan wall and measuring with calibrated probes. They verified the OLS transceiver behavior at 55 °C ambient and during a controlled thermal ramp.

- Validate DOM mapping end-to-end. In staging, they compared raw DOM readings against NOC dashboards and confirmed scaling for temperature and optical power. They also verified alarm propagation paths (shelf alarm to NOC ticketing).

- Perform link bring-up with representative fiber. Instead of “best-case” patch cords, they used fiber attenuation models matching the worst site connector loss. They captured link metrics and set conservative thresholds for early warning.

- Lock firmware and configuration baselines. They pinned OLS shelf firmware and transceiver compatibility profiles to avoid behavior drift. During planned maintenance, they updated one site at a time to isolate regressions.

- Run a burn-in window for first-article transceivers. The first batch ran for 72 hours with continuous traffic and periodic temperature cycling. They rejected modules that showed unusual DOM variance under stable traffic.
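
The burn-in rejection rule in the last step could be expressed roughly as follows. The article does not say how the team defined "unusual DOM variance", so this sketch substitutes a simple coefficient-of-variation check. The 2% limit, the module IDs, and the bias-current samples are assumptions for illustration.

```python
from statistics import mean, pstdev


def dom_variance_outliers(samples_by_module, max_cv=0.02):
    """Return module IDs whose coefficient of variation exceeds max_cv.

    samples_by_module maps a module ID to DOM samples (for example bias
    current in mA) collected under stable traffic during burn-in.
    """
    rejected = []
    for module_id, samples in samples_by_module.items():
        avg = mean(samples)
        # Guard against a zero mean so the ratio is always defined.
        cv = pstdev(samples) / abs(avg) if avg else float("inf")
        if cv > max_cv:
            rejected.append(module_id)
    return rejected


# Hypothetical bias-current samples in mA.
burn_in = {
    "module-A": [40.0, 40.1, 39.9, 40.0],
    "module-B": [40.0, 43.0, 38.0, 41.5],
}
print(dom_variance_outliers(burn_in))
```

A relative measure is used here so one threshold can cover metrics with different magnitudes. A per-metric absolute limit would work equally well if the vendor publishes one.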

Measured Results: what improved after standardizing on the OLS transceiver

After rollout across eight sites, the team measured reliability and operational outcomes against their previous approach, which had multiple transceiver vendors and inconsistent DOM handling. The results were concrete: optical link stability improved and maintenance events dropped.

- Uptime: they achieved 99.97% optical link availability over the first quarter after stabilization, up from 99.90% in the prior quarter during mixed-vendor transitions.

- Alarm quality: false-positive optics alarms decreased by 38% because DOM units and thresholds were standardized in the NOC logic.

- Truck rolls: they reduced “mystery flaps” by 45% by correlating DOM drift with early warning alerts.
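
To put the availability figures in operational terms, the short calculation below converts a percentage into minutes of downtime. It assumes a 90-day quarter, which the article does not state.

```python
def downtime_minutes(availability_pct: float, days: int = 90) -> float:
    """Minutes of downtime implied by an availability percentage."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - availability_pct / 100.0)


for pct in (99.90, 99.97):
    print(f"{pct}% over 90 days: {downtime_minutes(pct):.1f} min of downtime")
```

Under that assumption, the move from 99.90% to 99.97% corresponds to roughly 130 minutes of downtime per link per quarter falling to about 39 minutes.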

Performance validation included checking that the OLS transceiver remained within vendor-recommended optical power levels across seasonal temperature changes. In two longer-reach links, they observed a slow bias current drift consistent with aging; because DOM telemetry was normalized, they scheduled preventive replacement before hard failures. That practice turned a reactive pattern into a planned maintenance schedule.
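
One way to turn a slow bias-current drift into a replacement date is a straight-line fit over normalized DOM samples. The sketch below uses ordinary least squares. The sample values and the 50 mA limit are hypothetical, and real aging is not necessarily linear, so the projection is a planning aid rather than a prediction.

```python
def drift_per_day(days, values):
    """Least-squares slope of values against days (units per day)."""
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired samples")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all samples share the same timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


def days_until_limit(limit, days, values):
    """Project days until the latest value reaches limit; None if not rising."""
    slope = drift_per_day(days, values)
    if slope <= 0:
        return None
    return (limit - values[-1]) / slope


# Hypothetical bias-current samples (mA) taken every 30 days.
sample_days = [0, 30, 60, 90]
bias_ma = [40.0, 41.0, 42.0, 43.0]
print(drift_per_day(sample_days, bias_ma))
print(days_until_limit(50.0, sample_days, bias_ma))
```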

Selection criteria checklist: how to choose the right OLS transceiver

Engineers can avoid most deployment surprises by using a disciplined checklist. Below is the order the team used, based on field outcomes and vendor documentation.

- Distance and reach class: confirm required reach with realistic fiber loss, connector penalties, and spares strategy.

- Switch and OLS shelf compatibility: verify electrical interface and control-plane behavior for your exact shelf generation.

- DOM support and telemetry normalization: confirm DOM fields, scaling, and alert thresholds; ensure your monitoring pipeline supports them.

- Operating temperature and derating: check both module temperature range and any derating guidance at elevated ambient.

- Optical power budget margin: ensure TX and RX levels stay within spec across aging and worst-case connector conditions.

- Vendor lock-in risk: evaluate how firmware updates affect third-party optics; plan a compatibility test matrix.

- Lead time and spares strategy: quantify how long you can wait without impacting SLAs; stock spares for the most failure-prone reach class.

Common Mistakes / Troubleshooting: failure modes you can prevent

Even when an OLS transceiver passes initial bring-up, certain issues show up later in production. Here are the most common pitfalls the team encountered and how they resolved them.

Link flaps that correlate with temperature gradients

Root cause: the module operated near its upper temperature spec because the rack airflow pattern was not uniform, leading to transmitter bias drift and marginal receiver sensitivity. Solution: measure ambient and module temperature with calibrated sensors, then adjust airflow (baffles, fan speed curves) and validate again with a thermal ramp test.

“Working optics” but broken monitoring and wrong alarm thresholds

Root cause: DOM scaling or field mapping differed from what the NOC expected. The transceiver might report optical power in a different unit or use different threshold defaults, causing either alarm storms or silent failures. Solution: compare raw DOM values from the shelf against what telemetry ingestion reports, then recalibrate thresholds and add unit tests for DOM parsing.
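
A comparison like the one described in the solution can be automated with a small check. This sketch assumes the shelf values are available in milliwatts and the pipeline is expected to report dBm. The port names, readings, and 0.2 dB tolerance are hypothetical.

```python
import math


def mw_to_dbm(milliwatts: float) -> float:
    return 10.0 * math.log10(milliwatts)


def find_mismatches(shelf_dbm, pipeline_dbm, tolerance_db=0.2):
    """Return ports where the pipeline value is missing or off by more
    than tolerance_db from the value read directly at the shelf."""
    mismatches = {}
    for port, expected in shelf_dbm.items():
        reported = pipeline_dbm.get(port)
        if reported is None or abs(reported - expected) > tolerance_db:
            mismatches[port] = (expected, reported)
    return mismatches


# Hypothetical readings: shelf values in mW converted to dBm.
shelf = {
    "site1/p1": mw_to_dbm(0.63),
    "site2/p1": mw_to_dbm(0.50),
    "site3/p1": mw_to_dbm(0.56),
}
# The pipeline passed site2 through in mW and dropped site3 entirely.
pipeline = {"site1/p1": -2.0, "site2/p1": 0.50}

for port, (expected, reported) in sorted(find_mismatches(shelf, pipeline).items()):
    print(f"{port}: shelf {expected:.2f} dBm, pipeline {reported}")
```

Running a check like this in staging, and again after any parser change, catches unit mix-ups before they turn into alarm storms or missed alarms.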

BER/OSNR degradation after connector cleaning delays

Root cause: dust and micro-scratches on LC endfaces increased insertion loss over time, especially on frequently handled patch panels. This reduced optical margin and elevated error rates even though the link stayed “up.” Solution: enforce a cleaning SOP (lint-free wipes and approved cleaning tools), inspect with an inspection scope, and document connector handling frequency by site.

Compatibility surprises after OLS shelf firmware updates

Root cause: shelf firmware changed transceiver initialization sequences or DOM polling cadence. A previously stable module then produced new alarms or performance drift. Solution: stage firmware upgrades with your exact transceiver part numbers, record DOM/optical metrics before and after, and roll out site-by-site with rollback plans.

Cost & ROI note: what you actually pay beyond the module

Pricing for an OLS transceiver depends heavily on data rate, modulation type, and whether it is coherent-capable or reach-flexible. In many enterprise and metro deployments, engineers see module unit costs ranging from roughly $1,000 to $6,000 per port for pluggable optics classes used in 100G-era networks, with higher costs for advanced coherent variants. OEM optics can cost more than third-party modules, but they often reduce compatibility risk and shorten troubleshooting cycles.

TCO is driven by spares, failure rates, and operational time. If third-party optics reduce unit price by 10% but increase field replacements by 30% due to DOM mismatch or shelf compatibility quirks, the savings can disappear once truck rolls, labor, and SLA exposure per replacement are counted. The team’s ROI came from fewer truck rolls and higher alarm accuracy, which reduced maintenance labor and improved SLA adherence. In their accounting, standardization reduced “unknown optics” investigations by about 20 hours per month across the team.
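
Whether a 10% discount survives a 30% higher replacement rate depends on the baseline failure rate and on what each replacement event costs. The sketch below compares first-year cost under two hypothetical scenarios. The unit price, port count, failure rates, and per-event costs are illustrative assumptions, not figures from the deployment.

```python
def first_year_cost(unit_price, ports, annual_failure_rate, cost_per_event):
    """Purchase cost plus expected first-year replacement cost.

    cost_per_event covers truck roll, labor, and any SLA penalty for
    one field replacement (an assumed, site-specific figure).
    """
    purchase = unit_price * ports
    expected_failures = ports * annual_failure_rate
    return purchase + expected_failures * (unit_price + cost_per_event)


def compare(oem_price, ports, oem_failure_rate, cost_per_event,
            discount=0.10, extra_failures=0.30):
    """Return (OEM cost, third-party cost) for one scenario."""
    oem = first_year_cost(oem_price, ports, oem_failure_rate, cost_per_event)
    third_party = first_year_cost(
        oem_price * (1 - discount), ports,
        oem_failure_rate * (1 + extra_failures), cost_per_event)
    return oem, third_party


# Scenario 1: low failure rate, modest cost per replacement event.
print(compare(3000, 100, 0.02, 1500))
# Scenario 2: high failure rate, expensive replacement events.
print(compare(3000, 100, 0.10, 10000))
```

With these inputs the cheaper module still wins in the first scenario and loses in the second. The purchase discount is a fixed saving, while the replacement penalty grows with both failure rate and cost per event.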

FAQ

What is an OLS transceiver in disaggregated optical networking?

An OLS transceiver is the pluggable optical interface used in an Open Line System architecture to connect the optical line side with the rest of the network. In disaggregated designs, it becomes a standardized component whose optics and diagnostics are expected to match the OLS shelf behavior.

Can I use any SFP or QSFP transceiver as an OLS transceiver?

No. OLS transceiver compatibility depends on the exact OLS shelf electrical interface, optics control expectations, and supported modulation/reach profiles. Even if a module can be inserted physically, it may fail initialization or produce unusable telemetry.

How do DOM telemetry differences cause real outages?

DOM differences can lead to incorrect optical power thresholds, delayed alarms, or missing early warning signals. When automation is driven by DOM values, incorrect parsing or scaling can prevent preventive replacement, increasing the chance of hard failures.

What should I test before scaling from one site to eight?

Validate DOM mapping and alarm propagation, confirm reach with worst-case fiber loss and connector penalties, and run a thermal ramp test that matches rack airflow. Also test your monitoring pipeline against raw telemetry so that alarms are actionable.

Are third-party OLS transceivers worth the savings?

Sometimes, but only with a compatibility test matrix that includes firmware interaction and DOM telemetry validation. If your operations team cannot reliably normalize telemetry and thresholds across vendors, the hidden costs often outweigh the module price savings.

What is the quickest troubleshooting path for a new OLS transceiver?

Start with DOM readings and optical power levels at the shelf, then verify fiber cleaning and connector inspection, and finally cross-check for shelf firmware compatibility. If the link is up but errors rise, focus on optical margin and verify that alarms reflect real physical degradation rather than telemetry parsing issues.

In this case, standardizing an OLS transceiver within a disaggregated optical networking architecture improved alarm quality, reduced maintenance events, and delivered measurable uptime gains. Next, review fiber connector cleaning and inspection best practices to protect optical margin and extend the life of every transceiver in the field.

Author bio: I have deployed disaggregated metro optical networks with hands-on validation of transceiver DOM telemetry, fiber loss budgets, and OLS shelf firmware compatibility across staged rollouts. I write from field experience with operational runbooks, measured reliability outcomes, and practical troubleshooting workflows.