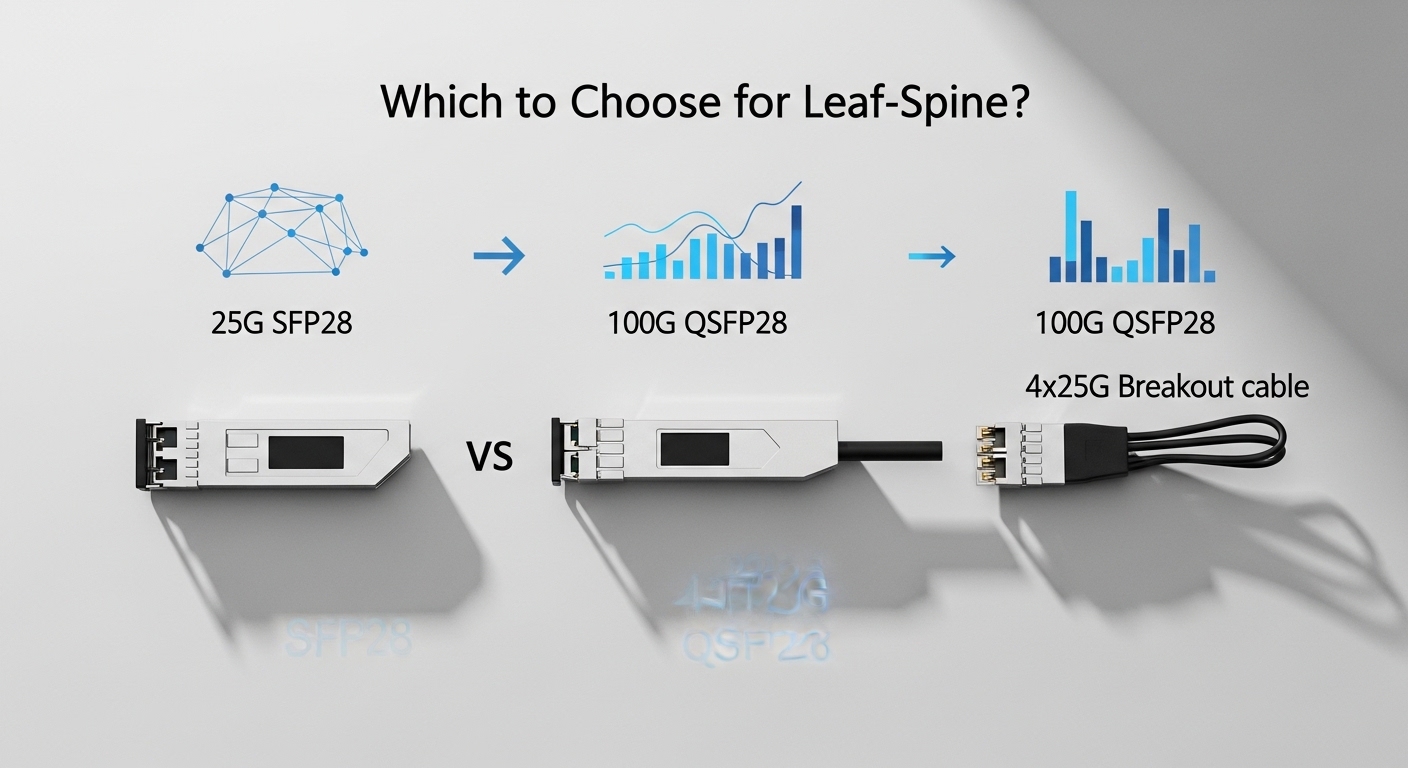

In leaf-spine data centers, choosing between 25G SFP28 and 100G QSFP28 optics can make or break port density, oversubscription math, and fault-domain size. This guide helps network engineers and field teams decide when 4x25G breakout beats native 100G QSFP28, and when it does not. You will get a practical checklist, a real deployment example, and troubleshooting patterns you can apply at the rack.

How leaf-spine traffic patterns change the decision

Leaf-spine designs often use 25G or 100G links depending on switch silicon, ToR density, and budget. With 25G SFP28 plus 4x25G breakout, you can land more downstream endpoints per line card, which matters when most servers have 25G NICs. With 100G QSFP28, you reduce optics count and simplify cabling, but you may get fewer total “useful” server-facing ports if you have to consolidate at the leaf.

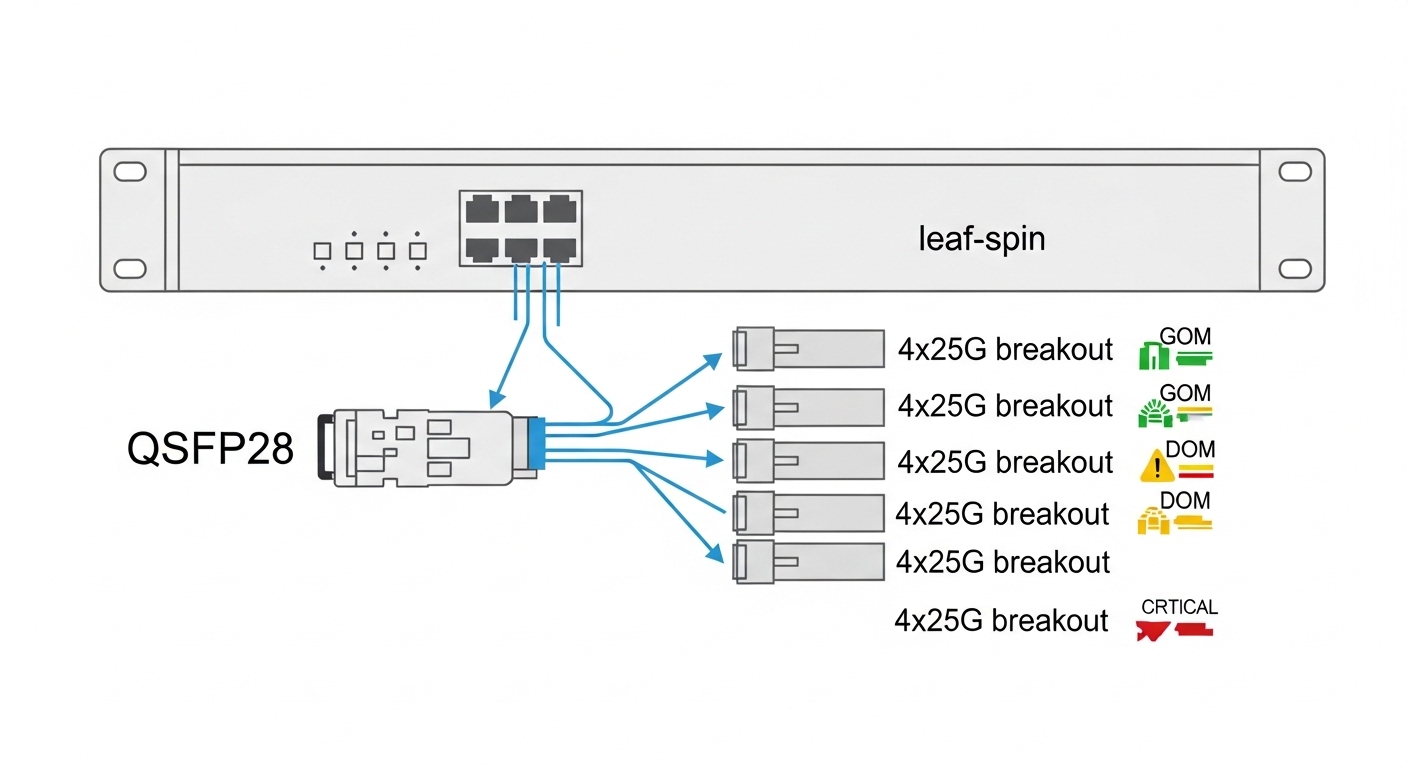

Operationally, breakout increases granularity: a single QSFP28 module or cable failure can translate into up to four link events, but a fault confined to one lane shows up on a single 25G interface instead of degrading a whole 100G link. Conversely, native 100G puts more bandwidth behind each single point of failure, yet leaves fewer physical transceiver instances to manage.

Field note: fault domain and troubleshooting time

During burn-in in a 2-stage leaf-spine, we saw mean time to repair drop when 25G breakout was used with per-lane visibility: link LEDs and counters mapped cleanly to four separate 25G interfaces. With 100G, a single optics swap restored service faster when the failure was clearly in the module, but diagnosing intermittent errors could involve more systematic rollback across the 100G interface.

Key optics specs: 25G SFP28 vs 100G QSFP28

Most leaf-spine deployments use short-reach multimode for intra-building runs. The main differences are form factor, lane count, connector, and power; wavelength and reach class are the same for SR and SR4. Ensure you match the switch vendor’s approved optics list and the transceiver electrical interface (SFP28 carries one 25G lane, QSFP28 carries four 25G lanes for 100G).

| Parameter | 25G SFP28 (typical SR) | 100G QSFP28 (typical SR4) |
|---|---|---|
| Data rate | 25.78125 Gb/s (single lane) | 103.125 Gb/s aggregate (4 x 25.78125 Gb/s) |
| Fiber type | OM4 MMF (common) | OM4 MMF (common) |
| Wavelength | ~850 nm | ~850 nm (all four lanes) |
| Reach (typical) | Up to 70 m on OM3, 100 m on OM4 (varies by vendor) | Up to 70 m on OM3, 100 m on OM4 (varies by vendor) |
| Connector | LC duplex (typical) | MPO-12 (typical for SR4; depends on model) |
| Power (typical) | ~1.0 to 2.0 W | ~3.0 to 5.5 W |
| Temperature range | Often 0 to 70 °C | Often 0 to 70 °C |
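The data rates in the table follow from 64b/66b line encoding: each lane signals at 66/64 of its 25 Gb/s payload rate, and a QSFP28 carries four such lanes. A minimal sketch of that arithmetic (the function name is illustrative only):

```python
from fractions import Fraction

# 64b/66b encoding sends 66 bits on the wire for every 64 payload bits.
ENCODING_OVERHEAD = Fraction(66, 64)


def line_rate_gbps(payload_gbps: int, lanes: int = 1) -> float:
    """Return the aggregate signaling rate in Gb/s across all lanes."""
    return float(payload_gbps * ENCODING_OVERHEAD * lanes)


print(line_rate_gbps(25))           # 25.78125 (one SFP28 lane)
print(line_rate_gbps(25, lanes=4))  # 103.125  (four QSFP28 lanes)
```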

Examples of commonly deployed parts include Cisco SFP-25G-SR-S (25G SR) and Cisco QSFP-100G-SR4-S (100G SR4); Finisar, FS.com, and other OEMs sell equivalent 25G SR SFP28 and 100G SR4 QSFP28 modules. Older part numbers such as Cisco SFP-10G-SR and FS.com SFP-10GSR-85 are 10G SFP+ modules with similar naming and are not substitutes. Always validate the exact ordering code and DOM support against your switch.

Standards alignment matters: the Ethernet physical layer and optical interface requirements are defined by IEEE 802.3 (25GBASE-SR was added in 802.3by, 100GBASE-SR4 in 802.3bm), while the optics themselves must also satisfy the switch vendor’s electrical and optical specifications for signal integrity. Reference: IEEE 802.3.

4x25G breakout vs native 100G: a decision checklist

Use this ordered checklist on every leaf-spine port plan. The goal is to avoid surprises in optics compatibility, cabling, and power budgets.

- Distance: confirm the fiber class (OM3 or OM4) and the patch cord loss budget; leave margin for connector aging and added patch points.

- Budget and port economics: compare optics cost per active 25G lane vs per 100G port.

- Switch compatibility: verify the exact transceiver model in the vendor interoperability list; do not assume “SR is SR.”

- DOM support: ensure the optics provide readable digital optical monitoring (DOM) and that the switch supports it.

- Operating temperature: check vendor module spec and your airflow; leaf-spine exhaust zones can exceed expected ambient.

- Vendor lock-in risk: evaluate third-party optics acceptance; plan for replacement spares with matching firmware/DOM behavior.

- Management and cabling: MPO vs LC complexity; breakout increases the number of patch points and labeling requirements.
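The distance item in the checklist can be sketched as a simple loss-budget calculation. The attenuation figure, per-connector loss, and maximum channel loss below are placeholder assumptions, not values from this guide; take the real numbers from your optic's datasheet and your fiber plant test records.

```python
def channel_loss_db(length_m: float, mated_pairs: int,
                    fiber_db_per_km: float = 3.0,
                    loss_per_pair_db: float = 0.5) -> float:
    """Estimate end-to-end loss: fiber attenuation plus connector losses.

    fiber_db_per_km and loss_per_pair_db are assumed planning values.
    """
    return length_m / 1000 * fiber_db_per_km + mated_pairs * loss_per_pair_db


def margin_db(length_m: float, mated_pairs: int,
              max_channel_loss_db: float, **kwargs) -> float:
    """Return the remaining margin; a negative value means over budget."""
    return max_channel_loss_db - channel_loss_db(length_m, mated_pairs, **kwargs)


# Hypothetical link: 60 m of fiber, two mated connector pairs,
# and an assumed 1.9 dB maximum channel loss for the optic.
print(round(channel_loss_db(60, 2), 2))                     # 1.18
print(round(margin_db(60, 2, max_channel_loss_db=1.9), 2))  # 0.72
```

A link that passes on paper with only a few tenths of a dB of margin is a candidate for shorter patch cords or fewer patch points before it goes into production.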

Pro Tip: In mixed 25G and 100G environments, breakout can reduce operational risk if you standardize optics labels and maintain per-lane interface mapping in your CMDB. When a single 100G link degrades, you often have to treat it as a whole; with 4x25G, you can pinpoint which lane is noisy from the per-interface counters before swapping anything.
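The per-lane comparison in the tip can be sketched as follows. The interface names, counter fields, and error-ratio threshold are hypothetical; in practice the counters would come from your switch CLI, SNMP, or streaming telemetry.

```python
from dataclasses import dataclass


@dataclass
class LaneCounters:
    interface: str   # hypothetical breakout interface name
    rx_frames: int   # frames received during the sample window
    fcs_errors: int  # frames received with a bad checksum


def noisy_lanes(samples, max_error_ratio=1e-6):
    """Return (interface, error_ratio) pairs above the threshold, worst first."""
    flagged = []
    for s in samples:
        if s.rx_frames == 0:
            continue  # no traffic in the window, so no ratio to judge
        ratio = s.fcs_errors / s.rx_frames
        if ratio > max_error_ratio:
            flagged.append((s.interface, ratio))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical counters for the four lanes of one breakout port.
samples = [
    LaneCounters("Eth1/49/1", 10_000_000, 0),
    LaneCounters("Eth1/49/2", 10_000_000, 2),
    LaneCounters("Eth1/49/3", 10_000_000, 4_500),
    LaneCounters("Eth1/49/4", 0, 0),
]
for interface, ratio in noisy_lanes(samples):
    print(f"{interface}: error ratio {ratio:.2e}")
```

If only one lane is flagged, cleaning or reseating the matching fiber pair is a reasonable first step; errors on all four lanes point more toward the shared module or trunk.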

Real-world leaf-spine scenario with measured constraints

Consider a 2-tier leaf-spine topology: 48-port ToR switches with 12 uplink slots per leaf, each server NIC at 25G, and traffic dominated by east-west microbursts rather than north-south flows. If you deploy native 100G QSFP28 uplinks (SR4), each leaf uses fewer optics and each uplink carries more bandwidth; however, the leaf line card may expose fewer physical server-facing ports when you allocate breakout resources elsewhere. If instead you use 25G SFP28 with 4x25G breakout for uplinks, you can align uplink granularity to server NIC speeds and apply QoS per 25G interface.
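The oversubscription math for this scenario can be sketched as below, assuming all 48 server-facing ports run at 25G and every uplink slot is populated. The function names are illustrative only.

```python
def oversubscription_ratio(server_ports: int, server_gbps: int,
                           uplinks: int, uplink_gbps: int) -> float:
    """Downstream bandwidth divided by upstream bandwidth (1.0 means 1:1)."""
    return (server_ports * server_gbps) / (uplinks * uplink_gbps)


def capacity_lost_per_link(uplinks: int) -> float:
    """Fraction of uplink capacity lost when one equal-speed uplink fails."""
    return 1 / uplinks


# Native 100G: 12 uplinks at 100G each.
print(oversubscription_ratio(48, 25, 12, 100))   # 1.0
# Same slots broken out as 4x25G: 48 uplinks at 25G each.
print(oversubscription_ratio(48, 25, 48, 25))    # 1.0
# Only half of the 100G slots populated.
print(oversubscription_ratio(48, 25, 6, 100))    # 2.0

print(f"{capacity_lost_per_link(12):.1%}")       # 8.3%
print(f"{capacity_lost_per_link(48):.1%}")       # 2.1%
```

Under these assumptions both options give the same aggregate bandwidth; what changes is how much capacity a single link failure removes.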

In practice, teams budget optics power and cabling labor. A typical 100G QSFP28 SR4 can draw roughly 3 to 5.5 W versus 1 to 2 W per 25G SFP28; breakout uses more modules but can still be acceptable if your switch ports are the bottleneck. TCO often hinges on field swap speed, spare inventory, and how often optics are replaced due to connector wear and dust in high-turnover racks.
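A rough sketch of the optics power comparison, using the per-module ranges quoted above and assuming 12 uplink slots per leaf with four discrete SFP28 modules carrying each 100G of capacity. Actual draw depends on the specific modules and on how the breakout is cabled.

```python
def power_range_w(modules: int, per_module_w: tuple) -> tuple:
    """Return (low, high) total power in watts for a number of modules."""
    low, high = per_module_w
    return (modules * low, modules * high)


QSFP28_SR4_W = (3.0, 5.5)  # per-module range quoted in the text
SFP28_SR_W = (1.0, 2.0)    # per-module range quoted in the text

print(power_range_w(12, QSFP28_SR4_W))  # (36.0, 66.0)
print(power_range_w(48, SFP28_SR_W))    # (48.0, 96.0)
```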

Common mistakes and troubleshooting patterns

These issues show up repeatedly during acceptance testing and later maintenance windows.

- Mismatched fiber type or loss budget: Root cause is OM3 vs OM4 mismatch or dirty patch cords. Solution: verify fiber plant labeling, clean with approved procedures, and re-measure insertion loss with a light source and power meter, or with an OTDR where the run is long enough to resolve individual events.

- Connector and polarity confusion (LC/MPO): Root cause is incorrect polarity mapping on SR4/MPO or reversed LC duplex. Solution: use polarity adapters per vendor guidance; document lane mapping and validate with link up plus receive power thresholds.

- DOM/compatibility mismatch: Root cause is third-party optics that report DOM fields differently or lack required EEPROM behavior. Solution: test optics models in a staging switch; confirm DOM reads and alarms are normal before rollout.

- Temperature and airflow violations: Root cause is poor airflow or blocked vents causing module derating. Solution: measure inlet/outlet temperatures, enforce minimum clearance, and monitor optical power for drift.

- Assuming “SR equivalence” across vendors: Root cause is different receiver sensitivity and link budget assumptions. Solution: use vendor reach tables and validate with your exact switch and patch cord lengths.
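Several of the fixes above end with checking receive power. A minimal sketch of that check, assuming you already have per-lane receive power readings in milliwatts; the readings and thresholds are hypothetical, and real thresholds should be read from the module's own DOM data.

```python
import math


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    if power_mw <= 0:
        return float("-inf")  # no light detected
    return 10 * math.log10(power_mw)


def classify_rx_power(rx_dbm: float, low_warn_dbm: float,
                      low_alarm_dbm: float) -> str:
    """Classify a receive power reading against low-side thresholds."""
    if rx_dbm <= low_alarm_dbm:
        return "alarm"
    if rx_dbm <= low_warn_dbm:
        return "warn"
    return "ok"


# Hypothetical readings and thresholds for one SR4 module.
readings_mw = {"lane1": 0.50, "lane2": 0.45, "lane3": 0.09, "lane4": 0.48}
LOW_WARN_DBM, LOW_ALARM_DBM = -9.0, -11.0

for lane, mw in readings_mw.items():
    dbm = mw_to_dbm(mw)
    status = classify_rx_power(dbm, LOW_WARN_DBM, LOW_ALARM_DBM)
    print(f"{lane}: {dbm:.2f} dBm -> {status}")
```

One lane reading well below its neighbors usually suggests a dirty or damaged fiber on that lane rather than a failing module.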

Cost and ROI: what usually wins in TCO

Street pricing varies by volume, but the pattern is consistent: a 25G SFP28 SR module costs less per unit than a 100G QSFP28 SR4, while 100G QSFP28 reduces the total optics count. Breakout can increase the number of optics spares you must stock, yet it may lower risk per failure and improve maintenance efficiency. ROI typically improves when breakout aligns with server NIC speeds