When a fiber module reaches end of life in your vendor's lifecycle window, the failure mode is rarely a “sudden brick.” More often it is a slow drift: constrained availability, longer lead times, changed firmware or optics bins, and rising error rates. This article helps network and data-center teams plan optical transceiver migrations with measurable controls, so that link budgets, optics temperatures, and BER margins stay intact. It targets builders of leaf-spine and metro aggregation networks who need an operational playbook, not marketing guidance.

Top 8 migration actions when fiber module end of life is announced

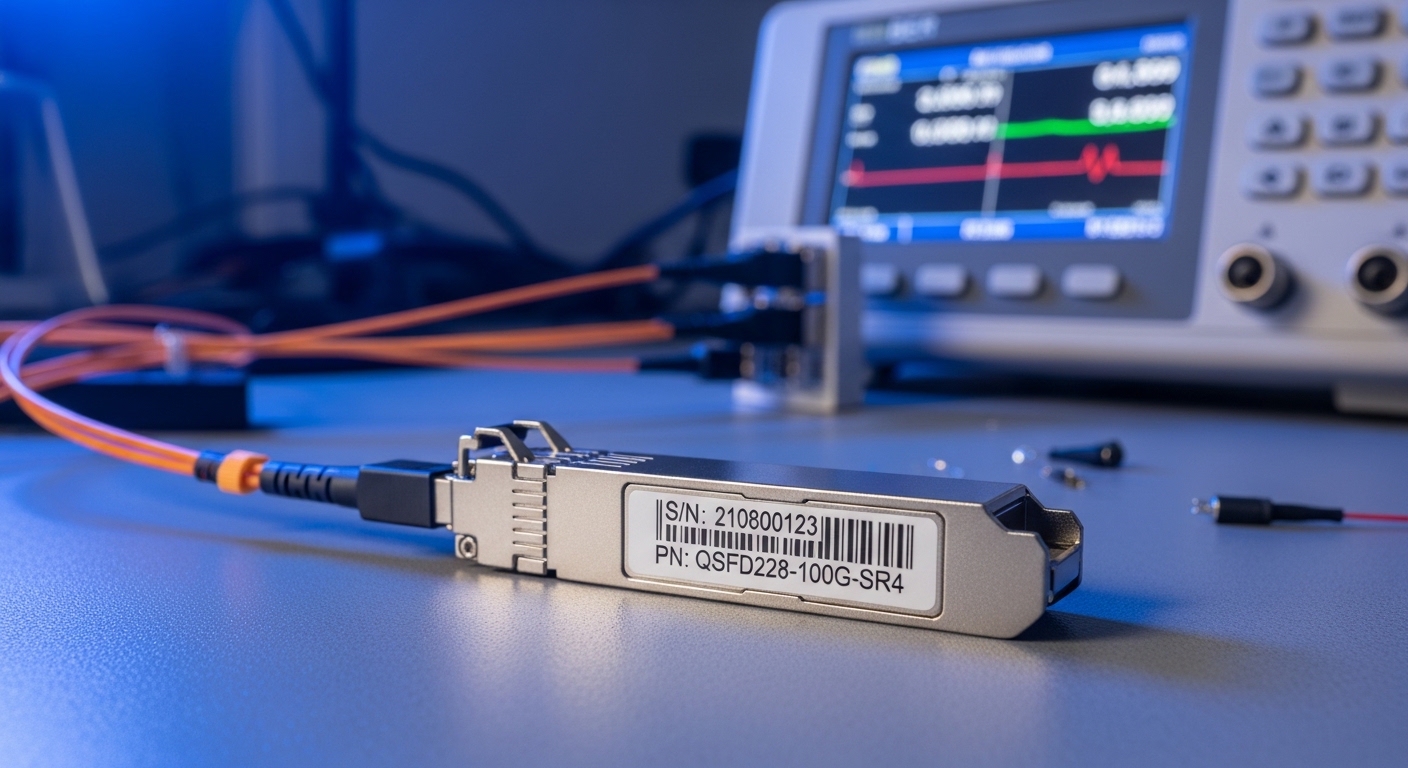

Inventory by exact part number, not by form factor

Start with a bill of materials keyed to the transceiver SKU and serial traceability, because replacements can differ in vendor calibration, DOM behavior, and optical output power bins. Pull data from switch DOM tables and optics management systems, then normalize to the exact models you deploy (examples: Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, FS.com SFP-10GSR-85). Correlate each port to patch panels and fiber route IDs so you can estimate which links will be impacted first by lead-time risk.

Pros: reduces “looks compatible” surprises. Cons: requires disciplined CMDB hygiene.
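As a rough illustration of keying the inventory to the exact SKU, the sketch below normalizes part numbers from exported DOM rows and groups ports by SKU and fiber route. The row layout and field names (`switch`, `port`, `part_number`, `serial`, `route_id`) are assumptions for the example, not a vendor schema.

```python
from collections import Counter, defaultdict

# Hypothetical rows, as they might be exported from switch DOM tables.
# Field names are assumptions for this sketch, not a vendor schema.
dom_rows = [
    {"switch": "leaf-01", "port": "Eth1/1", "part_number": " sfp-10g-sr ",
     "serial": "ABC1001", "route_id": "R-12"},
    {"switch": "leaf-01", "port": "Eth1/2", "part_number": "FTLX8571D3BCL",
     "serial": "FNS2002", "route_id": "R-12"},
    {"switch": "leaf-02", "port": "Eth1/1", "part_number": "SFP-10G-SR",
     "serial": "ABC1003", "route_id": "R-40"},
]


def normalize_part_number(raw: str) -> str:
    """Trim whitespace and upper-case so the same SKU always compares equal."""
    return raw.strip().upper()


def build_inventory(rows):
    """Count modules per exact SKU and list the ports that carry each SKU."""
    counts = Counter()
    ports_by_sku = defaultdict(list)
    for row in rows:
        sku = normalize_part_number(row["part_number"])
        counts[sku] += 1
        ports_by_sku[sku].append(
            (row["switch"], row["port"], row["route_id"], row["serial"])
        )
    return counts, dict(ports_by_sku)


counts, ports_by_sku = build_inventory(dom_rows)
for sku, n in counts.most_common():
    routes = sorted({entry[2] for entry in ports_by_sku[sku]})
    print(sku, n, routes)
```

Grouping by SKU first, then by route ID, makes it easier to see which fiber routes depend on a part number that is about to go end of life.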

Validate optics class using IEEE 802.3 and vendor specs

For 10G/25G/40G/100G, ensure the replacement supports the same modulation and electrical interface expectations used by the host ASIC. Cross-check against IEEE 802.3 clauses for the relevant PHY (for example, 10GBASE-SR, 25GBASE-SR, or 100GBASE-SR4) and the vendor datasheet for wavelength, receiver sensitivity, and launch power compliance. Also confirm connector type: LC versus MPO/MTP for SR4 and parallel optics.

Pros: prevents silent link instability. Cons: can limit third-party options.

Use a link budget delta test before changing optics

Compute a baseline budget for each affected path: transmitter launch power (from the datasheet or measured DOM), fiber attenuation, connector loss, splice loss, and receiver sensitivity. Then repeat the calculation using the candidate optics' parameters, including worst-case temperature drift. Compare the two margins and accept the candidate only if the remaining headroom still covers aging and cleaning variance, especially where patch cords are repeatedly reworked.

Pros: makes migration quantifiable. Cons: requires accurate loss documentation.
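The delta calculation can be sketched as follows. All numeric values are illustrative placeholders rather than datasheet figures, and the class and function names are made up for the example; margin is taken as worst-case launch power minus path loss minus receiver sensitivity, with an optional temperature penalty.

```python
from dataclasses import dataclass


@dataclass
class Optic:
    name: str
    min_tx_dbm: float   # worst-case launch power (datasheet or measured DOM)
    rx_sens_dbm: float  # worst-case receiver sensitivity


@dataclass
class Path:
    fiber_km: float
    atten_db_per_km: float
    connectors: int
    loss_per_connector_db: float
    splices: int
    loss_per_splice_db: float


def path_loss_db(path: Path) -> float:
    """Total passive loss: fiber attenuation plus connector and splice losses."""
    return (path.fiber_km * path.atten_db_per_km
            + path.connectors * path.loss_per_connector_db
            + path.splices * path.loss_per_splice_db)


def margin_db(optic: Optic, path: Path, temp_penalty_db: float = 0.0) -> float:
    """Remaining margin after path loss and an assumed temperature penalty."""
    return (optic.min_tx_dbm - temp_penalty_db
            - path_loss_db(path) - optic.rx_sens_dbm)


# Placeholder values only; fill these from measured losses and datasheets.
path = Path(fiber_km=0.15, atten_db_per_km=3.0, connectors=2,
            loss_per_connector_db=0.5, splices=0, loss_per_splice_db=0.1)
incumbent = Optic("incumbent", min_tx_dbm=-7.0, rx_sens_dbm=-10.0)
candidate = Optic("candidate", min_tx_dbm=-7.5, rx_sens_dbm=-10.5)

baseline = margin_db(incumbent, path)
proposed = margin_db(candidate, path, temp_penalty_db=0.5)
delta = proposed - baseline
print(f"baseline {baseline:.2f} dB, candidate {proposed:.2f} dB, delta {delta:+.2f} dB")
```

With these placeholder inputs the candidate loses about 0.5 dB of margin relative to the incumbent, which is the kind of figure a change-control reviewer can weigh against the headroom reserved for aging and cleaning variance.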

Compare candidate modules with a spec matrix

Create a matrix that engineers can review quickly during change control. Include wavelength, reach, optical power range, receiver sensitivity, DOM feature set, connector, and temperature class. Below is a representative comparison template for SR-class modules; values vary by vendor and speed grade, so always fill from datasheets.

| Parameter | 10GBASE-SR SFP+ | 25GBASE-SR SFP28 | 100GBASE-SR4 QSFP28 |
|---|---|---|---|
| Nominal wavelength | 850 nm (MM) | 850 nm (MM) | 850 nm (MM, 4-lane) |
| Typical reach | 300 m (OM3), 400 m (OM4) | 70 m (OM3), 100 m (OM4) | 70 m (OM3), 100 m (OM4) |
| Optical interface | LC | LC | MPO/MTP |
| DOM support | Yes (vendor-specific thresholds) | Yes (calibrated alarms) | Yes (per-lane telemetry) |
| Temperature range | 0 to 70 °C (typical) | 0 to 70 °C (typical) | 0 to 70 °C (typical) |
| Key compatibility risk | RX power and DOM alarm thresholds | Host PHY tolerance and vendor coding | MPO polarity and lane mapping |

Pros: speeds approvals and reduces change churn. Cons: incomplete matrices cause false confidence.
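One way to guard against incomplete matrices is to check each candidate row programmatically before review. The sketch below assumes a simple dictionary per module with hypothetical field names; it flags fields that are missing from the candidate or that differ from the incumbent.

```python
# Hypothetical field names for one row of the spec matrix.
REQUIRED_FIELDS = ("wavelength_nm", "reach_m_om4", "connector",
                   "temp_range_c", "dom")


def compare_specs(incumbent: dict, candidate: dict, fields=REQUIRED_FIELDS):
    """Return (field, status) pairs where the candidate is missing or differs."""
    findings = []
    for field in fields:
        if candidate.get(field) is None:
            findings.append((field, "missing"))
        elif candidate[field] != incumbent.get(field):
            findings.append((field, "differs"))
    return findings


# Illustrative entries; real values must come from the datasheets.
incumbent = {"wavelength_nm": 850, "reach_m_om4": 400, "connector": "LC",
             "temp_range_c": (0, 70), "dom": True}
candidate = {"wavelength_nm": 850, "reach_m_om4": 300, "connector": "LC",
             "dom": True}

findings = compare_specs(incumbent, candidate)
print(findings)
```

A "differs" finding is not necessarily a blocker, but a "missing" finding means the matrix is not ready for change control.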

Run a port-level acceptance test using BER and telemetry

Before mass swap, stage a controlled test on representative ports in the same switch model and firmware revision. Use a traffic generator or built-in error counters to validate BER or packet loss under load. Confirm DOM readings: transmit power, receive power, bias current, and temperature. If the host uses threshold-based alarms, ensure the candidate optics’ DOM scaling does not trigger nuisance events that can lead to automated shutdown policies.

Pros: catches interoperability defects early. Cons: costs test time and requires spare optics.

Plan lead time and stocking strategy around end-of-life windows

When fiber module end of life is announced, treat it like a supply-chain risk event. For critical links, place last-time-buy orders before the vendor's cutoff date and stage spares by location and speed grade. Then define a “minimum viable stock” based on your annual failure rate and typical maintenance turnaround. Include the logistics cost of moving spares to remote sites, where optics availability can become the bottleneck.

Pros: reduces operational downtime. Cons: ties up working capital if projections are wrong.
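A minimum viable stock figure can be estimated from the installed base, the annual failure rate, and the time it takes to replenish a spare. The formula and the safety factor below are assumptions for illustration; adjust them to your own failure data and risk tolerance.

```python
import math


def min_viable_stock(installed: int, annual_failure_rate: float,
                     replenish_days: float, safety_factor: float = 2.0,
                     floor: int = 1) -> int:
    """Spares to hold per site and SKU.

    Expected failures during one replenishment window, scaled by an assumed
    safety factor, rounded up, and never below `floor`.
    """
    expected = installed * annual_failure_rate * replenish_days / 365.0
    return max(floor, math.ceil(expected * safety_factor))


# Example inputs only: 400 modules, 2% annual failure rate, 90-day replenishment.
spares = min_viable_stock(installed=400, annual_failure_rate=0.02,
                          replenish_days=90)
print(spares)
```

With these example inputs the result is 4 spares. Small remote sites fall back to the floor of one spare, which reflects the point above that logistics, not failure rate, is often the constraint there.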

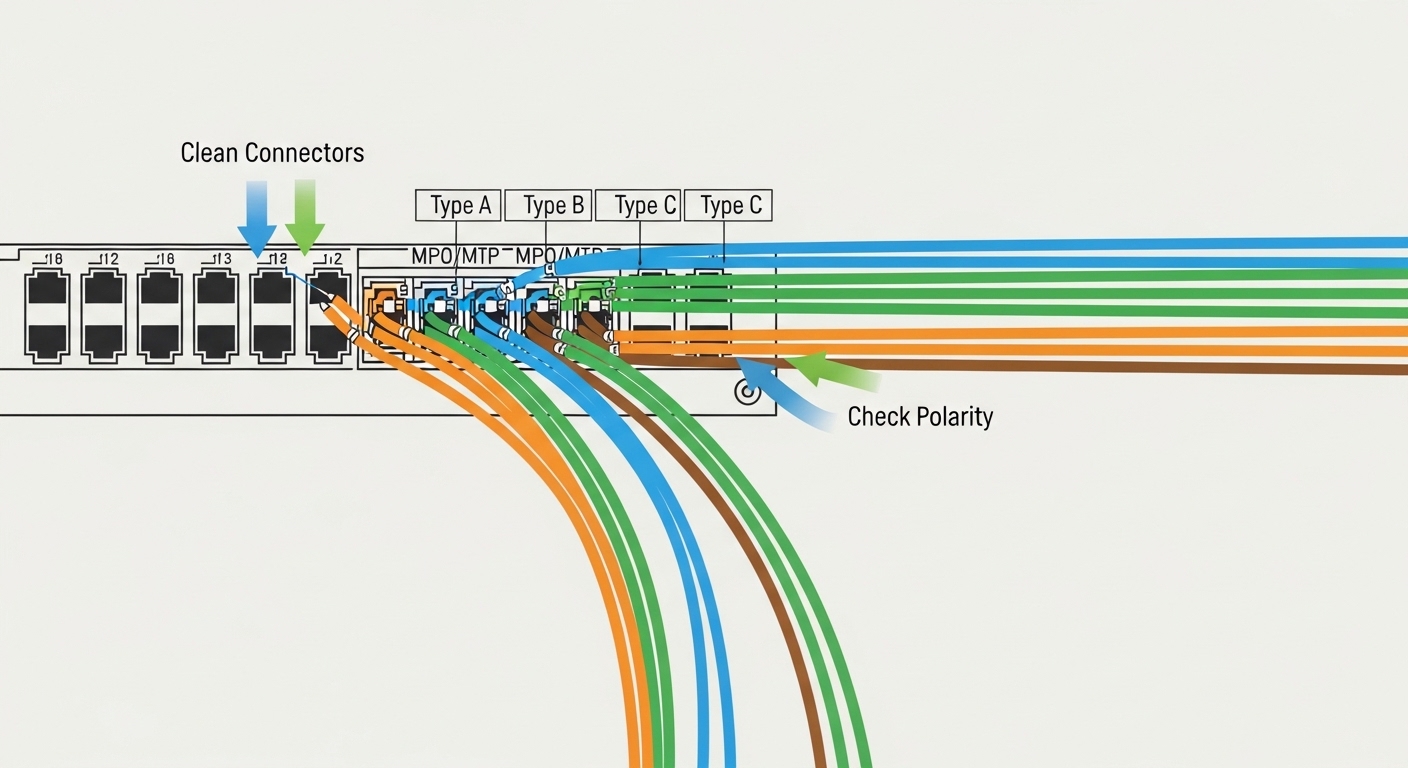

Implement fiber hygiene and polarity verification as part of migration

Many optics swaps fail not because the module is wrong, but because the optical path changes during maintenance. For MPO/MTP, verify polarity and lane mapping with a polarity checker, and follow a standardized connector-cleaning process. For LC, inspect endfaces and re-clean them with lint-free materials before testing. Tie this to your migration SOP so field teams do the same steps every time.

Pros: improves link stability independent of vendor choice. Cons: adds procedural steps and tooling.

Control change risk with rollback criteria and documentation

Define rollback triggers: sustained CRC error growth, rising DOM temperature beyond spec, receive power falling outside acceptable thresholds, or BER degradation under a defined traffic profile. Document the exact host firmware version, switch port mapping, transceiver SKU, and test results. This is essential for root-cause analysis if a future optics batch behaves differently due to manufacturing binning.

Pros: reduces MTTR. Cons: requires disciplined runbooks.
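The rollback triggers can be encoded so that the decision is mechanical rather than a judgment call during the change window. This is a minimal sketch with hypothetical limit values and data structures; it covers CRC growth, DOM temperature, and receive power, and leaves BER under a traffic profile to the test tooling.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    crc_errors: int  # cumulative CRC counter at poll time
    temp_c: float    # DOM module temperature
    rx_dbm: float    # DOM receive power


@dataclass
class Limits:
    max_crc_delta: int  # allowed CRC growth per polling interval
    max_temp_c: float
    min_rx_dbm: float
    max_rx_dbm: float


def rollback_reasons(samples, limits):
    """Return the list of triggered rollback reasons (empty means keep the change)."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to judge a trend")
    reasons = []
    deltas = [b.crc_errors - a.crc_errors for a, b in zip(samples, samples[1:])]
    # "Sustained" is interpreted here as every interval exceeding the limit.
    if all(d > limits.max_crc_delta for d in deltas):
        reasons.append("sustained CRC error growth")
    latest = samples[-1]
    if latest.temp_c > limits.max_temp_c:
        reasons.append("DOM temperature above limit")
    if not (limits.min_rx_dbm <= latest.rx_dbm <= limits.max_rx_dbm):
        reasons.append("receive power outside thresholds")
    return reasons


# Placeholder limits and samples; take real limits from datasheets and policy.
limits = Limits(max_crc_delta=10, max_temp_c=70.0, min_rx_dbm=-9.0, max_rx_dbm=0.5)
samples = [Sample(0, 41.0, -4.1), Sample(25, 42.5, -6.8), Sample(60, 43.0, -9.6)]
reasons = rollback_reasons(samples, limits)
print(reasons)
```

Logging the returned reasons alongside the firmware version, port mapping, and SKU gives the root-cause record described above.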

Pro Tip: In the field, teams often assume “same wavelength, same reach” guarantees stability. In practice, the host switch may apply vendor-specific or DOM-threshold-based behaviors; a replacement that reports slightly different Tx bias or alarm thresholds can trigger link flaps or maintenance automation even when the physical layer would otherwise pass BER.

Common mistakes and troubleshooting during fiber module end of life

- Mistake: Replacing by speed/form factor only (e.g., swapping SR SFP+ without validating DOM behavior).

  Root cause: host PHY and alarm thresholds can be sensitive to DOM scaling; some optics report different calibration ranges.

  Fix: run port-level acceptance tests and verify DOM telemetry ranges before production.

- Mistake: Ignoring MPO polarity and lane mapping.

  Root cause: incorrect polarity can manifest as low or intermittent receive power per lane, causing high error bursts.

  Fix: use a polarity checker, validate lane map, and re-terminate or re-patch when per-lane Rx power is asymmetric.

- Mistake: Skipping fiber cleaning between swaps.

  Root cause: microfilm or scratches on endfaces increase insertion loss and can shift the link budget outside receiver sensitivity.

  Fix: enforce endface inspection and standardized cleaning; re-test receive power after each maintenance action.

- Mistake: Treating datasheet “max reach” as usable margin.

  Root cause: real deployments have variable patch cord lengths, connector aging, and temperature drift.

  Fix: compute budget using measured losses and include worst-case temperature and power drift.
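The per-lane asymmetry check from the MPO fix above can be sketched as a comparison of each lane's Rx power against the strongest lane. The 2 dB spread limit is a hypothetical value for illustration, not a figure from a standard or datasheet.

```python
def suspect_lanes(lane_rx_dbm, max_spread_db=2.0):
    """Return indexes of lanes more than max_spread_db below the strongest lane."""
    if not lane_rx_dbm:
        raise ValueError("no lane readings supplied")
    best = max(lane_rx_dbm)
    return [i for i, rx in enumerate(lane_rx_dbm) if best - rx > max_spread_db]


# Example per-lane Rx readings (dBm) for a 4-lane SR4 module.
lanes = [-2.1, -2.4, -6.9, -2.0]
flagged = suspect_lanes(lanes)
print(flagged)
```

Here lane index 2 is flagged, which points to a single dirty or misaligned fiber position in the MPO rather than a module-wide problem.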

Cost and ROI note for migration and stocking

Typical street pricing varies by vendor and speed grade: third-party optics for common SR modules may range from roughly $20 to $80 per unit, while OEM-branded modules can be higher, often $80 to $250 or more depending on product line and volume. TCO is driven by failure rates, spares logistics, and downtime cost during swaps. If you can reduce field visits through better acceptance testing and stock the right SKUs early, ROI often comes from fewer outages and lower MTTR rather than from the unit price alone.

FAQ

How do I determine if a specific optics part number is actually approaching fiber module end of life?

Use vendor lifecycle notices tied to the exact SKU and check your procurement history for last-time-order dates. Then validate that your current modules still report stable DOM telemetry and do not show rising error counters in the same environmental conditions. [Source: vendor lifecycle bulletins and transceiver datasheets]

Are third-party optics safe replacements during migration?

They can be, but you must validate electrical and optical compatibility with your switch model and firmware. The critical checks are BER/packet loss, DOM telemetry stability, and alarm threshold behavior. [Source: [IEEE 802.3](https://standards.ieee.org/standard/) and switch vendor compatibility notes]

What DOM fields matter most for diagnosing end-of-life related issues?

Focus on Tx power, Rx power, bias current, and temperature. If you see drift trends, correlate them with link errors and physical maintenance events. Also watch for threshold-based alarm triggers that can cause automated actions in some platforms.
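Drift trends are easier to act on when they are expressed as a rate. The sketch below fits a least-squares line to DOM readings over time and reports the slope; the sample readings are invented for illustration, and deciding what slope counts as concerning is left to your own thresholds.

```python
def slope_per_day(days, values):
    """Least-squares slope of values against days (units per day)."""
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need two or more paired samples")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all samples share the same timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


# Invented example: Rx power (dBm) polled every 30 days on one port.
days = [0, 30, 60, 90]
rx_dbm = [-3.0, -3.2, -3.4, -3.6]
drift = slope_per_day(days, rx_dbm)
print(f"Rx power drift: {drift * 30:+.2f} dB per 30 days")
```

The same function works for Tx power, bias current, or temperature; correlating the slope with link error counters and maintenance events is what separates aging from a one-off disturbance.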