When you plug third-party coherent or transponder optics into an existing DWDM plant, a DWDM alien channel can look “compatible” on paper and still fail in the field. This article helps network engineers and field techs validate wavelength alignment, optical power budgets, and management features before lighting additional services. Expect hands-on troubleshooting patterns, concrete spec targets, and a decision checklist you can apply during cutovers.

What “alien channel” means in modern DWDM control planes

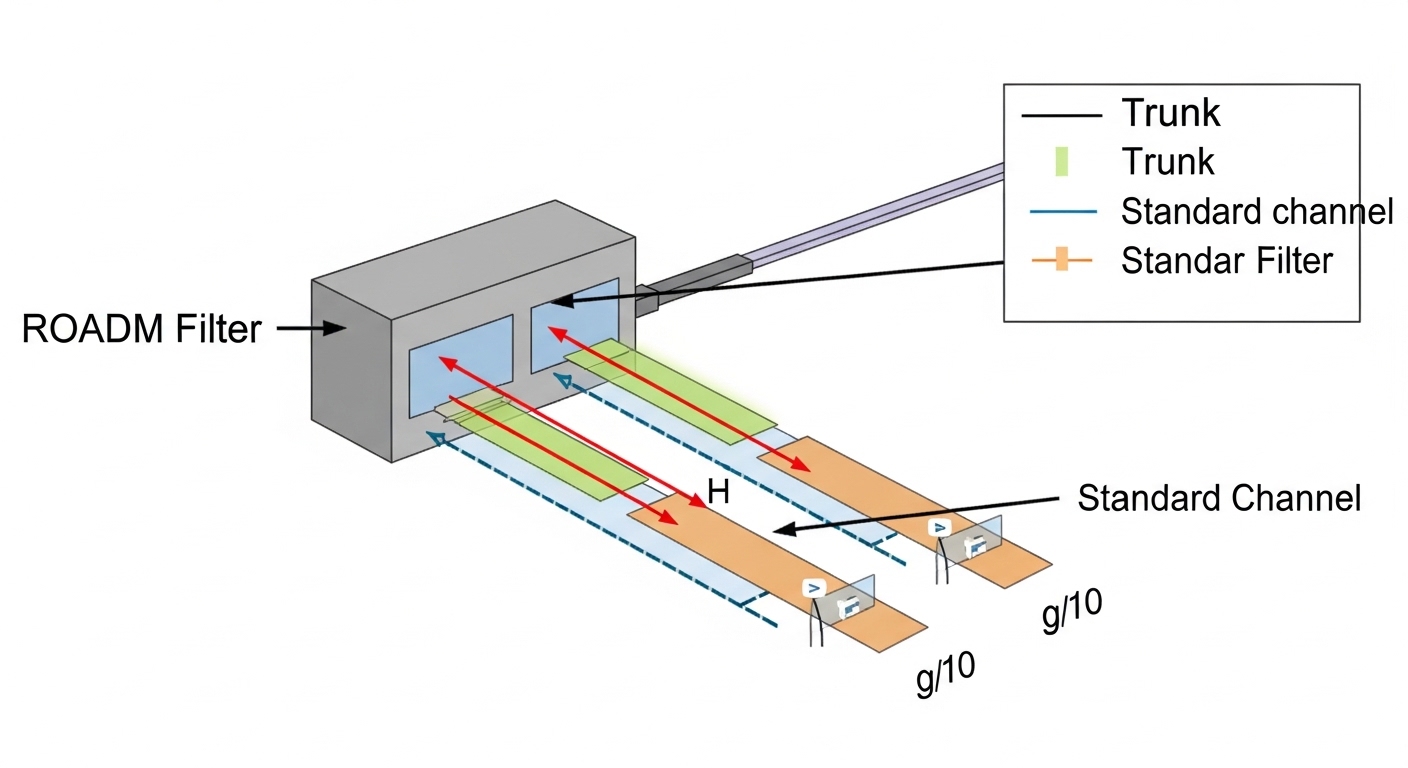

An alien channel is a wavelength carrier that originates from a device not originally provisioned as part of the DWDM system vendor’s managed optical chain, yet is inserted into the same fiber plant and wavelength grid. In practice, you may add a third-party transponder to a ROADM or a fixed mux/demux system and rely on wavelength plan settings rather than vendor-specific provisioning. The key risk is not only whether the wavelength exists, but whether the grid, power, and spectral shape match what the multiplexing, filtering, and receiver DSP tolerances expect. This is why the term comes up in vendor interoperability notes, and why engineers track it similarly to “non-native” optics in DWDM transport.

From a standards perspective, the electrical side often follows IEEE 802.3 for Ethernet framing rates, while the optical transport is typically aligned to ITU-T wavelength grids (commonly 50 GHz or 100 GHz). The optical layer behavior is governed more by datasheet-defined parameters (laser linewidth, side-mode suppression, OSNR sensitivity) than by Ethernet standards. For deeper wavelength planning context, see [Source: ITU-T G.694.1]. For coherent receiver and performance constraints, vendor coherent optics datasheets and ROADM filtering specifications are the primary references.

- Best-fit scenario: Adding third-party transponders to a deployed ROADM or mux/demux plant with established channel spacing.

- Pros: Faster vendor diversification, potential capex reduction.

- Cons: Interop gaps in wavelength accuracy, spectral mask, and telemetry expectations.

Wavelength grid, tuning accuracy, and spectral purity targets

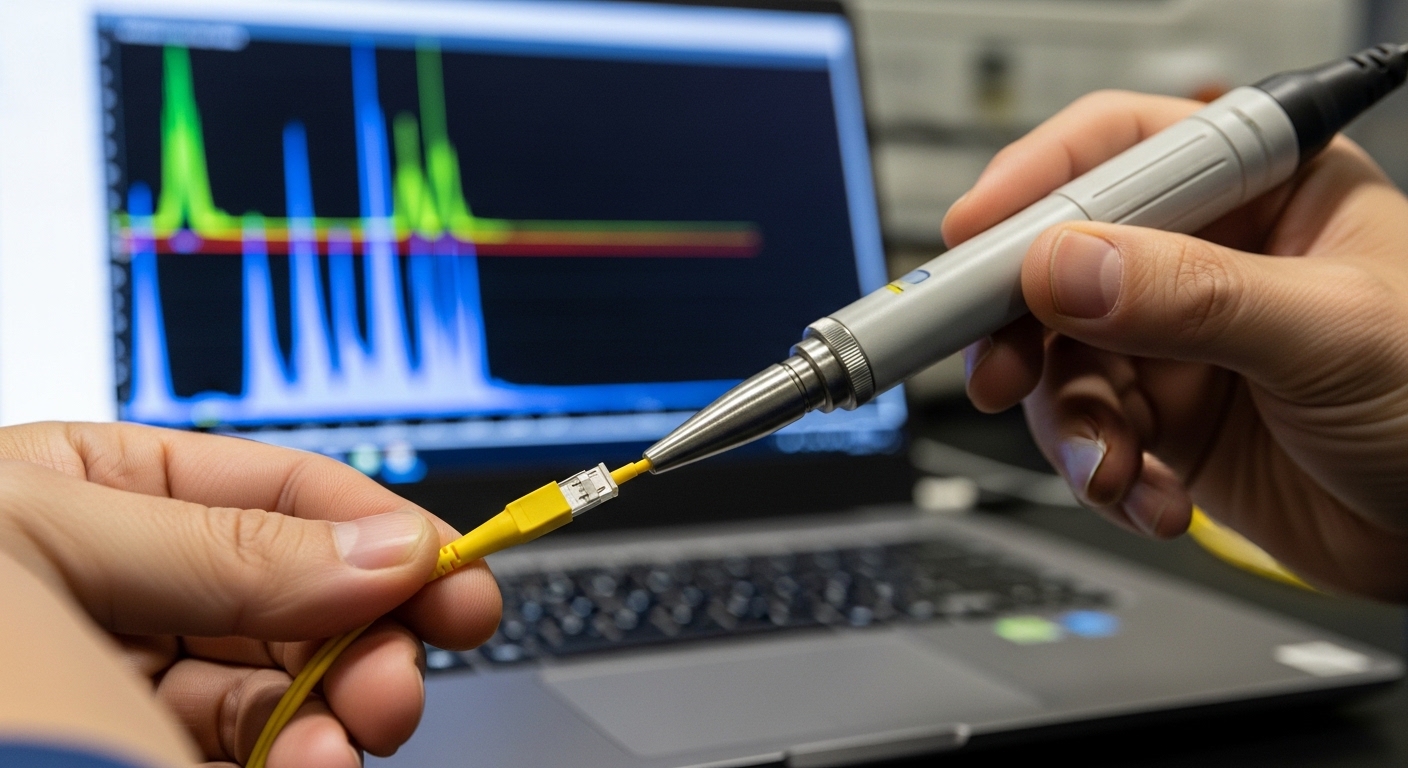

Most alien-channel outages trace back to wavelength plan mismatch: wrong channel number, wrong grid (50 GHz vs 100 GHz), or tuning drift beyond the filter passband. During acceptance tests, treat wavelength as an optical control variable with measurable tolerances. Typical field practice is to verify the emitted carrier using an optical spectrum analyzer (OSA) and confirm the center frequency lands within the system’s expected tolerance window.
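To make the grid arithmetic concrete, here is a minimal Python sketch of the fixed-grid rule in ITU-T G.694.1, where nominal central frequencies are 193.1 THz plus an integer multiple of the channel spacing. The function names are my own, and the index `n` here is the grid integer from the standard, not any vendor's channel number; vendor numbering needs its own mapping from the platform documentation.

```python
C_M_PER_S = 299_792_458   # speed of light in vacuum (exact by definition)
ANCHOR_THZ = 193.1        # anchor frequency of the G.694.1 fixed grids


def grid_frequency_thz(n: int, spacing_ghz: float) -> float:
    """Nominal central frequency for grid integer n (n may be negative)."""
    return ANCHOR_THZ + n * spacing_ghz / 1000.0


def frequency_to_wavelength_nm(freq_thz: float) -> float:
    """Convert an optical frequency to its vacuum wavelength in nm."""
    return C_M_PER_S / (freq_thz * 1e12) * 1e9


def offset_from_nominal_ghz(measured_thz: float, n: int, spacing_ghz: float) -> float:
    """Signed offset of a measured carrier from its intended grid slot."""
    return (measured_thz - grid_frequency_thz(n, spacing_ghz)) * 1000.0


if __name__ == "__main__":
    # The same index lands on different frequencies on different grids,
    # which is how a grid mix-up puts a carrier in the wrong slot.
    for spacing in (50.0, 100.0):
        f = grid_frequency_thz(10, spacing)
        print(f"n=10 @ {spacing:.0f} GHz grid: {f:.3f} THz, "
              f"{frequency_to_wavelength_nm(f):.3f} nm")
```

Comparing the OSA reading against `grid_frequency_thz` for the slot you intended, rather than against the label shown in the transponder UI, is what catches index and grid mix-ups early.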

Key spectral parameters that matter for third-party insertion are center wavelength accuracy, linewidth, side-mode suppression ratio, and the spectral width relative to ROADM filter bandwidth. If the plant uses narrow ROADM filters, a third-party transponder that meets “laser spec” in isolation can still fail after mux/demux filtering. In coherent systems, the receiver’s DSP can tolerate some impairment, but it still depends on OSNR and adjacent-channel interference.

| Parameter | What to verify for DWDM alien channel | Typical target (rule-of-thumb) | Why it matters |
|---|---|---|---|
| Channel spacing / grid | 50 GHz or 100 GHz plan; channel numbering | Match plant grid exactly | Prevents filter mismatch and routing errors |
| Center wavelength accuracy | Laser tuning setpoint vs measured carrier | Within ±5 to ±10 pm (depends on grid) | Ensures carrier lands inside ROADM passband |
| Laser linewidth | Linewidth and stability over temperature | Low linewidth (vendor-defined) | Affects coherent demod and spectral overlap |
| Spectral mask / side suppression | Out-of-band emissions and side lobes | Meets ROADM filter spec | Limits crosstalk into adjacent channels |
| Optical output power | Launch power into span and overall OSNR | Within plant power budget | Prevents receiver overload or OSNR collapse |
| Operating temp range | Transponder and optics thermal envelope | Match chassis spec | Prevents tuning drift and telemetry faults |
| DOM / telemetry | Laser temp, bias, power, alarm thresholds | DOM supported and trusted | Enables safe monitoring and automated rollback |

Reference note: ITU-T grid definitions are summarized in [Source: ITU-T G.694.1]. For acceptance testing procedures, vendor transponder/ROADM interoperability guides and OSA measurements are the practical source of truth.
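Wavelength tolerances are usually quoted in picometers while grids are defined in GHz, so it helps to convert between the two. The sketch below uses the small-offset relation Δf ≈ c·Δλ/λ² and assumes a 1550 nm center wavelength as a representative C-band value; the function name is illustrative.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def pm_to_ghz(delta_pm: float, center_nm: float = 1550.0) -> float:
    """Approximate frequency offset (GHz) for a small wavelength offset (pm)."""
    wavelength_m = center_nm * 1e-9
    delta_m = delta_pm * 1e-12
    return C_M_PER_S * delta_m / wavelength_m ** 2 / 1e9


if __name__ == "__main__":
    for tol_pm in (5.0, 10.0):
        ghz = pm_to_ghz(tol_pm)
        print(f"±{tol_pm:.0f} pm ≈ ±{ghz:.2f} GHz "
              f"({ghz / 50 * 100:.1f}% of a 50 GHz slot, "
              f"{ghz / 100 * 100:.1f}% of a 100 GHz slot)")
```

Near 1550 nm, 10 pm works out to roughly 1.25 GHz, which is a larger share of a 50 GHz slot than of a 100 GHz slot. Whether that is acceptable depends on the actual ROADM passband, which only the platform datasheet can tell you.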

- Best-fit scenario: You have OSA access and can confirm carrier placement before cutover.

- Pros: Predictable behavior when grid and spectral mask align.

- Cons: Requires time for measurement and sometimes firmware tuning.

Power budgets, OSNR, and how “looks aligned” still fails

Even with correct wavelength, alien-channel performance can collapse if the launched power and spectral shape create unexpected interference. In a DWDM system, OSNR is influenced by span loss, amplifier noise figure, channel loading, and relative power levels. A third-party transponder might be calibrated for a different expected launch condition, leading to either receiver saturation or elevated nonlinear penalties depending on fiber type and span design.
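As a rough planning aid, the widely used first-order estimate for a chain of identical EDFA spans is OSNR ≈ 58 + P_ch − L_span − NF − 10·log10(N), in dB with a 0.1 nm reference bandwidth near 1550 nm. The sketch below encodes that approximation. It assumes every amplifier exactly compensates its span loss and it ignores nonlinear penalties and filtering, so treat the result as a sanity check rather than a prediction. The example numbers are hypothetical.

```python
import math


def estimate_osnr_db(launch_dbm: float, span_loss_db: float,
                     noise_figure_db: float, n_spans: int) -> float:
    """First-order OSNR estimate (0.1 nm reference bandwidth) for N identical spans."""
    if n_spans < 1:
        raise ValueError("n_spans must be at least 1")
    return (58.0 + launch_dbm - span_loss_db - noise_figure_db
            - 10.0 * math.log10(n_spans))


if __name__ == "__main__":
    # Hypothetical plant: 4 spans of 22 dB each, 5.5 dB amplifier noise figure.
    for launch in (-2.0, 0.0, 2.0):
        osnr = estimate_osnr_db(launch, 22.0, 5.5, 4)
        print(f"launch {launch:+.1f} dBm -> estimated OSNR {osnr:.1f} dB")
```

The linear model suggests more launch power always helps, which is exactly the trap: it says nothing about nonlinear penalties or crosstalk into neighbors, so the plant's established per-channel operating point should still win.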

In coherent deployments, the requirement is usually stated as the minimum OSNR needed for a target BER or FEC margin. In direct-detect systems, the power budget is more straightforward: you check received power against sensitivity, then verify link margin after connector/splice losses and aging. For both, the field approach is to align the alien channel’s launch power to the plant’s established per-channel operating point. If your plant uses Raman amplification or has variable gain, you must re-check per-span conditions at the time of installation.
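For the direct-detect case, the budget check is simple enough to sketch in a few lines of Python. The function name, the loss breakdown, and all numbers are illustrative assumptions; take sensitivity and overload values from the receiver datasheet.

```python
from typing import Iterable, Tuple


def link_margin_db(launch_dbm: float, losses_db: Iterable[float],
                   rx_sensitivity_dbm: float,
                   aging_margin_db: float = 0.0) -> Tuple[float, float]:
    """Return (received power in dBm, remaining margin in dB)."""
    received_dbm = launch_dbm - sum(losses_db)
    margin_db = received_dbm - rx_sensitivity_dbm - aging_margin_db
    return received_dbm, margin_db


if __name__ == "__main__":
    # Hypothetical link: fiber, mux plus demux, connectors and splices.
    losses = [18.0, 5.0, 1.0]
    rx, margin = link_margin_db(launch_dbm=3.0, losses_db=losses,
                                rx_sensitivity_dbm=-24.0, aging_margin_db=2.0)
    print(f"received {rx:.1f} dBm, margin {margin:.1f} dB")
    if margin < 0:
        print("Budget does not close: revisit losses or launch power.")
```

A positive margin only says the receiver has enough light. It is worth also checking the received power against the receiver's overload limit, since an alien channel launched hotter than its neighbors can fail on that side instead.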

Practical field values: in many EDFA-amplified metro spans of 80 km to 120 km, engineers aim to keep each channel within the transponder’s allowed launch window (often only a few dB wide, though exact values are vendor-specific). A common mistake is to set launch power “to maximum” because the transponder supports it; the plant may already be near its OSNR optimum due to existing channel loading.

- Best-fit scenario: You can measure received power per channel and compare against baseline before and after insertion.

- Pros: Reduces risk of nonlinear and crosstalk issues.

- Cons: Requires accurate plant loss and amplifier configuration data.

Pro Tip: If your alien channel passes initial OSA center-frequency checks but the downstream FEC margin collapses, treat it as an OSNR and spectral-mask problem first. I have seen cases where the wavelength was within tolerance, yet the adjacent-channel crosstalk increased because the third-party transmitter’s out-of-band emissions did not match the ROADM’s implicit spectral assumptions.

Management and interoperability: DOM, alarms, and rollback behavior

Operationally, the alien-channel risk is often not physical layer only; it is the management plane. Many DWDM platforms rely on transponder telemetry (laser bias current, temperature, output power, alarm flags) to trigger protection switching or to quarantine a failing channel. Third-party optics may implement DOM, but the alarm thresholds, scaling, or field naming can differ, causing false positives or missed critical alarms.

In the field, I recommend validating DOM behavior by comparing telemetry readings against a known-good native module in the same slot. If the platform supports standard interfaces, ensure the optical module uses the expected management mechanism (for example, SFF-style memory maps for pluggables, or transponder vendor-specific MSA equivalents). When DOM is absent or partially implemented, you may lose the automated rollback logic, forcing manual intervention during cutover.
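The same-slot comparison can be scripted once you have both sets of readings. The sketch below is a minimal illustration: the `DomReading` fields, the tolerance values, and the sample numbers are all hypothetical, and how you actually pull the readings (CLI scrape, SNMP, vendor API) depends on your platform.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class DomReading:
    temperature_c: float
    tx_power_dbm: float
    rx_power_dbm: float


# Hypothetical acceptance tolerances; tune these to your own baseline spread.
DEFAULT_TOLERANCES: Dict[str, float] = {
    "temperature_c": 5.0,
    "tx_power_dbm": 1.0,
    "rx_power_dbm": 1.0,
}


def compare_dom(native: DomReading, candidate: DomReading,
                tolerances: Dict[str, float] = DEFAULT_TOLERANCES) -> Dict[str, float]:
    """Return the fields whose deviation from the native baseline exceeds tolerance."""
    deviations = {}
    for field, tolerance in tolerances.items():
        delta = getattr(candidate, field) - getattr(native, field)
        if abs(delta) > tolerance:
            deviations[field] = delta
    return deviations


if __name__ == "__main__":
    native = DomReading(temperature_c=45.0, tx_power_dbm=0.5, rx_power_dbm=-9.0)
    third_party = DomReading(temperature_c=47.0, tx_power_dbm=0.4, rx_power_dbm=-12.0)
    for field, delta in compare_dom(native, third_party).items():
        print(f"{field}: deviates by {delta:+.1f} from native baseline")
```

A large received-power deviation on the same fiber and slot points at scaling or calibration in the module's reporting rather than at the plant. It is also worth checking reported transmit power against an external power meter, since a DOM value can be self-consistent and still wrong.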

For concrete module examples that engineers commonly test: 10G short-reach optics like Cisco SFP-10G-SR or Finisar FTLX8571D3BCL are not DWDM coherent alien-channel optics, but the lesson transfers: DOM compliance and threshold mapping matter. For true DWDM channel insertion, you will be dealing with coherent transponders or DWDM transceiver families from your vendor and the third party; always align to the host chassis compatibility matrix.

- Best-fit scenario: You need safe automation: alarm thresholds, telemetry dashboards, and protection triggers.

- Pros: Faster troubleshooting when telemetry is trustworthy.

- Cons: DOM mismatch can lead to misleading alarms and longer MTTR.

Cutover workflow: a repeatable acceptance test for alien channels

During cutovers, the fastest path to stability is a staged workflow that isolates wavelength, power, and signal-quality checks. Start with an out-of-service validation: confirm the transponder is configured for the correct channel plan, then measure the optical spectrum to verify center frequency and side lobes. Next, validate optical power at the mux/terminal and compare to the baseline of the channel plan. Only then bring the service into service and confirm FEC and error counters remain within expected ranges.

A practical sequence for a metro DWDM plant with multiple spans: (1) confirm chassis slot support for the third-party transponder model; (2) set channel frequency using the same numbering convention as the DWDM controller; (3) verify OSA peak at the expected center; (4) adjust launch power to match baseline per-channel level; (5) enable the service and watch FEC/BER for at least 30 minutes while monitoring temperature and alarms. If the system supports per-channel OSNR readings, log them at 5-minute intervals to detect transient instability.
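The soak step at the end of that sequence is easier to judge consistently if the pass criteria are written down before cutover. Below is a minimal sketch that evaluates samples logged at 5-minute intervals. The `SoakSample` fields, the 1 dB OSNR swing limit, and the pre-FEC BER limit are hypothetical placeholders; the real BER threshold depends on the FEC mode in use.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class SoakSample:
    minute: int         # minutes since the service was enabled
    osnr_db: float      # per-channel OSNR reading, if the platform exposes one
    pre_fec_ber: float  # pre-FEC bit error ratio
    active_alarms: int  # number of active alarms on the channel


def evaluate_soak(samples: Sequence[SoakSample], min_minutes: int = 30,
                  max_osnr_swing_db: float = 1.0,
                  ber_limit: float = 1e-3) -> Tuple[bool, List[str]]:
    """Return (passed, reasons); reasons lists every criterion that failed."""
    if not samples:
        return False, ["no samples logged"]
    reasons: List[str] = []
    duration = samples[-1].minute - samples[0].minute
    if duration < min_minutes:
        reasons.append(f"soak covered {duration} min, need {min_minutes}")
    osnr_values = [s.osnr_db for s in samples]
    swing = max(osnr_values) - min(osnr_values)
    if swing > max_osnr_swing_db:
        reasons.append(f"OSNR swing {swing:.1f} dB exceeds {max_osnr_swing_db} dB")
    if any(s.pre_fec_ber > ber_limit for s in samples):
        reasons.append("pre-FEC BER exceeded limit")
    if any(s.active_alarms > 0 for s in samples):
        reasons.append("alarms raised during soak")
    return (not reasons), reasons


if __name__ == "__main__":
    log = [SoakSample(m, 24.0, 1e-5, 0) for m in range(0, 31, 5)]
    passed, why = evaluate_soak(log)
    print("PASS" if passed else f"FAIL: {why}")
```

Returning every failed criterion, rather than stopping at the first, keeps the cutover log useful when more than one thing drifts at once.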

Use the same measurement instruments you trust during baseline collection. If you do not have OSA, a high-quality wavelength meter with sufficient accuracy can help, but it will not fully reveal spectral mask compliance. For safety, keep a rollback plan: disable the alien channel, revert to the last known-good transponder, and restore pre-cutover amplifier settings if you changed them.

- Best-fit scenario: You are adding a handful of services and need controlled risk.

- Pros: Lower MTTR and fewer “mystery” outages.

- Cons: Requires disciplined test time and logging.

Common mistakes and troubleshooting patterns that actually save hours

Alien-channel issues are rarely random. Below are failure modes I have seen repeatedly in field deployments, along with root causes and fixes.

- Mistake: Channel frequency set using the wrong grid or numbering convention.

  Root cause: Mixing 50 GHz and 100 GHz plans or confusing “channel index” between controller and transponder configuration.

  Solution: Verify measured carrier frequency on OSA and map it back to ITU grid expectations; do not rely solely on configuration labels. Reapply the correct channel plan and re-check spectral peak position.

- Mistake: Launch power configured to maximum to “ensure signal.”

  Root cause: Overshooting the plant’s OSNR optimum or receiver linear range, increasing nonlinear penalties and adjacent-channel crosstalk.

  Solution: Set launch power to the baseline operating point used by native channels; confirm received power and FEC margin after insertion. If possible, read OSNR per channel and tune to the plant’s sweet spot.

- Mistake: Assuming DOM presence means alarms are trustworthy.

  Root cause: DOM scaling/threshold mismatches cause false alarms or missed critical temperature/bias events.

  Solution: Cross-check telemetry against a known-good module in the same slot; validate alarm behavior by inducing non-destructive conditions where allowed (for example, monitoring in steady state across temperature variation).

- Mistake: Skipping spectral mask verification when wavelength peak looks correct.

  Root cause: Out-of-band emissions can be suppressed enough to pass a center-frequency check but still violate ROADM filter assumptions, elevating crosstalk.

  Solution: Use OSA to inspect side lobes and out-of-band roll-off; compare against ROADM filter characteristics from the platform datasheet or interoperability note.

- Best-fit scenario: You are diagnosing intermittent drops, alarms, or FEC degradation after a seemingly correct insertion.

- Pros: Faster isolation of wavelength vs power vs telemetry causes.

- Cons: Requires disciplined measurements and log correlation.
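For the grid and numbering mistake in particular, mapping a measured carrier back to the ITU grid can be done mechanically. This sketch snaps an OSA reading to the nearest slot on a given spacing, using the 193.1 THz anchor from G.694.1, and reports the residual offset. The function names and the 2.5 GHz tolerance are illustrative assumptions, not values from any platform.

```python
from typing import Tuple

ANCHOR_THZ = 193.1  # anchor frequency of the G.694.1 fixed grids


def nearest_slot(measured_thz: float, spacing_ghz: float) -> Tuple[int, float]:
    """Return (grid integer n, signed offset in GHz) for the closest grid slot."""
    n = round((measured_thz - ANCHOR_THZ) * 1000.0 / spacing_ghz)
    nominal_thz = ANCHOR_THZ + n * spacing_ghz / 1000.0
    return n, (measured_thz - nominal_thz) * 1000.0


def is_on_grid(measured_thz: float, spacing_ghz: float,
               tolerance_ghz: float = 2.5) -> bool:
    """True if the carrier sits within tolerance of some slot on this grid."""
    _, offset = nearest_slot(measured_thz, spacing_ghz)
    return abs(offset) <= tolerance_ghz


if __name__ == "__main__":
    measured = 193.6502  # THz, hypothetical OSA reading
    for spacing in (50.0, 100.0):
        n, offset = nearest_slot(measured, spacing)
        print(f"{spacing:.0f} GHz grid: n={n}, offset {offset:+.1f} GHz, "
              f"on grid: {is_on_grid(measured, spacing)}")
```

A carrier that sits cleanly on the 50 GHz grid but almost 50 GHz away from any 100 GHz slot is the signature of a transponder tuned with the wrong plan, even though its configuration label may look plausible.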

Real-world deployment scenario: leaf-spine metro with phased alien-channel rollout

In a 3-tier metro network with a leaf-spine topology, a carrier interconnects two data center sites using a DWDM transport layer. The plant runs 100 GHz channel spacing over multiple EDFA-amplified spans totaling 96 km in each direction, with 32 active wavelengths and per-channel launch power held near the vendor-recommended operating point. A field team adds two new services using third-party coherent transponders for cost and supply-chain reasons, inserting them as alien channels into the existing ROADM-managed spectrum. Initial OSA checks confirm center frequency alignment, but FEC margin dips by about 2 dB for one of the two new channels after traffic bursts.

The root cause was identified as a launch power mismatch: the third-party transponder defaulted to a calibration profile intended for a different amplifier noise figure and channel loading assumption. The team adjusted launch power down by a small step (within the transponder’s allowed range) and re-validated OSNR readings and FEC margin for 30 minutes. After the change, error counters stabilized and alarms ceased, confirming the alien-channel issue was power/impairment interaction rather than a pure wavelength error.

- Best-fit scenario: You manage a live plant and roll out services in phases with measurable baseline comparisons.

- Pros: Minimizes downtime and prevents broad spectrum instability.

- Cons: Requires coordination with amplifier settings and baseline documentation.

Selection criteria checklist for DWDM alien channel acceptance

Work through this list in order as a practical pre-flight checklist. If you can’t satisfy an item, treat it as a risk until you have test evidence.

- Distance and span losses: Confirm reach against transponder specs and your actual span attenuation plus aging margin.

- Grid and channel plan: Verify channel spacing, ITU alignment, and controller numbering conventions.

- Switch or chassis compatibility: Confirm the host platform supports the third-party model in that exact slot type and firmware level.

- Optical power budget and OSNR: Confirm allowed launch power and check OSNR sensitivity for the chosen modulation and FEC mode.

- DOM and telemetry mapping: Ensure the management plane can read critical alarms and does not mis-scale thresholds.

- Operating temperature range: Validate the module thermal envelope matches the chassis environment, especially if the plant uses hot-aisle or variable airflow.

- Vendor lock-in risk: Consider whether the DWDM platform restricts optics by cryptographic identity, firmware gating, or service provisioning workflows.

- Test instrumentation availability: Ensure you have OSA or equivalent spectral verification and a method to validate FEC/BER under load.
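If you track acceptance in a script or ticket template, the “risk until you have test evidence” rule is easy to encode. The sketch below is one hypothetical way to do it; the `ChecklistItem` structure and the evidence strings are assumptions, and the item names simply mirror the list above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ChecklistItem:
    name: str
    evidence: Optional[str] = None  # e.g. a reference to an OSA trace or test report


def build_checklist() -> List[ChecklistItem]:
    names = [
        "Distance and span losses",
        "Grid and channel plan",
        "Switch or chassis compatibility",
        "Optical power budget and OSNR",
        "DOM and telemetry mapping",
        "Operating temperature range",
        "Vendor lock-in risk",
        "Test instrumentation availability",
    ]
    return [ChecklistItem(name) for name in names]


def open_risks(items: List[ChecklistItem]) -> List[str]:
    """Items without recorded evidence are treated as open risks."""
    return [item.name for item in items if not item.evidence]


checklist = build_checklist()
checklist[1].evidence = "OSA trace attached, channel plan verified"
for risk in open_risks(checklist):
    print("RISK (no evidence yet):", risk)
```

The point of the structure is that an item cannot be marked done by assertion alone; it stays on the risk list until someone attaches a measurement or document.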

- Best-fit scenario: You are writing an internal acceptance plan or performing vendor qualification.

- Pros: Reduces “works in lab” surprises during field cutover.

- Cons: Requires upfront documentation and measurement time.

Cost, TCO, and when third-party optics are worth the operational overhead

Third-party optics can reduce module purchase cost and improve supply-chain resilience, but TCO depends on operational risk and replacement logistics. In many DWDM environments, a coherent transponder or DWDM channel module can cost several times a basic short-reach pluggable, so failure rates and downtime have meaningful financial impact. Field experience suggests that if you lack testing tools and interoperability documentation, the labor and downtime costs can erase any per-unit savings.

Typical budgeting reality: third-party coherent optics may offer a noticeable discount versus OEM, but you should include costs for acceptance testing, spare strategy, and potential firmware or management plane integration work. If your plant already has mature procedures for native optics, the marginal cost of adding alien channels is lower because you can reuse baseline measurements and alarm handling. For TCO modeling, track MTTR, mean time between failures, and the cost of a failed cutover window (including traffic reroutes and maintenance staffing).
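A back-of-the-envelope model makes the trade-off easier to discuss with finance. The sketch below is deliberately simplistic and every number in it is a hypothetical placeholder: it nets the per-unit discount against acceptance testing, one-off integration work, and the expected cost of failed cutover windows. It does not model MTTR or MTBF directly, which you would want in a fuller TCO model.

```python
def net_savings(units: int, oem_price: float, third_party_price: float,
                acceptance_cost_per_unit: float, integration_cost: float,
                failed_cutover_probability: float,
                failed_cutover_cost: float) -> float:
    """Expected net savings of third-party optics versus OEM (same currency units)."""
    capex_saving = units * (oem_price - third_party_price)
    expected_failure_cost = units * failed_cutover_probability * failed_cutover_cost
    overhead = (units * acceptance_cost_per_unit + integration_cost
                + expected_failure_cost)
    return capex_saving - overhead


if __name__ == "__main__":
    # All figures below are made up for illustration only.
    result = net_savings(units=10, oem_price=20_000, third_party_price=14_000,
                         acceptance_cost_per_unit=1_000, integration_cost=15_000,
                         failed_cutover_probability=0.05,
                         failed_cutover_cost=40_000)
    print(f"expected net savings: {result:,.0f}")
```

Running the same model with a higher failure probability, as you might assume for a team without OSA access, shows how quickly the per-unit discount disappears.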

- Best-fit scenario: You have strong operational maturity and measurement capability.

- Pros: Potential capex reduction and supply flexibility.

- Cons: Higher integration effort and possible compatibility constraints.

Summary ranking: which alien-channel factors matter most first

When you are deciding whether a particular DWDM alien channel insertion is likely to succeed, prioritize factors in the order below. This ranking reflects field failure frequency: wavelength plan and spectral/power interaction are usually earlier culprits than telemetry details.

| Priority | Factor | Primary failure symptom | Validation method |
|---|---|---|---|
| 1 | Wavelength grid and center frequency | No lock, no signal, ROADM filter attenuation | OSA or wavelength meter peak verification |
| 2 | Launch power vs OSNR and crosstalk | FEC margin collapse, intermittent bursts | OSNR/FEC logging and power adjustment |
| 3 | Spectral mask and out-of-band emissions | Adjacent-channel degradation | OSA side-lobe and roll-off inspection |
| 4 | DOM and alarm mapping | False alarms or missed critical events | Telemetry comparison to native baseline |
| 5 | Thermal and operating envelope | Drift over time, tuning instability | Temperature monitoring and stability testing |
| 6 | Chassis compatibility and provisioning | Module not recognized, provisioning failure | Host compatibility matrix and firmware checks |

If you want the next step after this checklist, I recommend reviewing how to validate optical budgets and alarms systematically across your transponder fleet: DWDM optical power budget validation.

FAQ

Q: Can a DWDM alien channel work without OEM configuration changes?

Often yes, provided the third-party transponder is configured to the exact ITU grid and the host platform supports the optics model. However, you may still need to align launch power targets and verify spectral behavior because ROADM filtering assumptions are not always documented in full.

Q: What measurement should I trust first: OSA center peak or FEC margin?

Start with OSA center peak and spectral shape to confirm the carrier is where the plant expects it. Then validate FEC margin under realistic traffic load; center-frequency correctness does not guarantee OSNR or crosstalk compliance.

Q: How do DOM issues show up during cutover?

They can appear as missing alarms, incorrect power readings, or protection logic not triggering when expected. Compare telemetry against a native baseline in the same slot and confirm that alarm thresholds behave consistently.

Q: Does channel spacing choice (50 GHz vs 100 GHz) affect alien-channel risk?

Yes. Narrower grids typically increase filter sensitivity to wavelength error and spectral mask violations, raising the importance of accurate tuning and out-of-band suppression.

Q: Are third-party optics always cheaper once you include integration labor?

Not always. If you have mature acceptance testing and baseline telemetry, third-party optics can reduce capex. If you lack OSA/OSNR validation workflows or the host platform has provisioning friction, labor and downtime can erase savings.

Q: What is the fastest rollback trigger during a problematic alien-channel insertion?

Rollback when you see persistent FEC margin collapse coupled with stable OSA wavelength peak, or when alarm patterns indicate telemetry/optical safety thresholds are being