A fast-growing inter-data-center network can fail in subtle ways: link flaps from marginal optics, unexpected DOM mismatches, and lead-time surprises that stall cutovers. This case study walks through how a procurement and field engineering team evaluated data center interconnect SFP options for 100G and 400G connectivity, then shipped a working rollout with measured outcomes. If you buy or qualify transceivers for DCI, you will get a practical checklist, a spec comparison, and troubleshooting patterns you can reuse.

Problem / challenge: DCI cutover blocked by optics lead time and link instability

In a multi-site setup, we needed to extend leaf-spine traffic between two buildings connected over dark fiber. The target was 100G for aggregation and 400G for core bursts, with planned maintenance windows of only four hours per site. Early vendor quotes created a problem: the exact optics part numbers were available, but the promised ship dates slipped by 3 to 6 weeks, and field tests showed occasional receiver power margin shortfalls at temperature extremes.

From a procurement standpoint, we also faced supply chain risk. OEM-only sourcing looked “safe,” yet it concentrated risk in a single manufacturing lane. We needed a sourcing strategy that preserved compatibility with switch optics management (DOM reporting, lane mapping, and vendor-specific quirks) while still meeting the DCI schedule.

Environment specs: what the fiber and switches demanded

Our environment combined strict optics requirements with operational realities. The interconnect path used single-mode fiber (SMF) with a measured end-to-end loss budget including patch cords and fusion splices. We targeted two link classes: one set of 100G long-reach links and another set of 400G high-throughput links.

Link and infrastructure parameters used for qualification

- Fiber type: SMF per typical DCI deployments (validated by OTDR, with splice and connector losses included in budget).

- 100G reach target: up to ~10 km-class long-reach optics.

- 400G reach target: up to ~2 km-class short reach for dense core aggregation.

- Switch optics behavior: DOM readout required by telemetry; vendor-qualified transceiver list for baseline compatibility; strict alarms on RX power thresholds.

- Operating conditions: chassis and room temperatures varied seasonally; we validated at both nominal and worst-case inlet temperatures.

Chosen solution: aligning SFP optics specs with DCI reach, power, and DOM

We treated this as a procurement-and-qualification project, not a simple “buy the cheapest module” decision. For DCI we needed optics that could pass receiver sensitivity at the far end, maintain stable laser bias over temperature, and report DOM values consistently so monitoring could correlate link health.

Although “data center interconnect SFP” is often used broadly, DCI at 400G commonly uses QSFP-DD- or CFP2-class optics rather than SFP. Strictly speaking, SFP+ is a 10G form factor and SFP28 a 25G one, and most 100G ports take QSFP28-class modules, so confirm the exact form factor against each host's port type before ordering. In our rollout, we used SFP-family optics for the 100G tier and ran a parallel 400G optics selection for the higher-capacity links. The key procurement lesson was to evaluate each link class against its own electrical and optical constraints while keeping the vendor management process unified.

Technical specifications table (100G SFP long-reach vs 400G short-reach)

The table below summarizes the spec dimensions that mattered most during qualification. Exact model numbers vary by switch vendor and optics program, but the parameters below are what procurement teams and field engineers should request from datasheets and test reports.

| Optics type | Typical data rate | Wavelength | Target reach | Connector | DOM support | Operating temperature | Key risk to check |
|---|---|---|---|---|---|---|---|
| 100G long-reach, SFP-class (10G SR parts do not apply) | 100G aggregate; lane scheme depends on the module and host mapping | 1310 nm (typical for long-reach) | ~10 km-class | LC | Required for monitoring | Industrial-grade or wider (request full range) | RX power margin at temperature extremes |
| 400G optics (usually QSFP-DD class; not true SFP) | 400G (lane aggregation) | 1310 nm-band parallel lanes or CWDM lanes on SMF; 850 nm SR-class applies to multimode fiber only | ~0.1 to 2 km-class (short reach) | LC or MPO (module-specific) | Required for telemetry | Industrial-grade or wider (request full range) | Lane-to-lane mapping, polarity, and MTP/MPO cleanliness |

Concrete module examples we used during sourcing

To stay grounded, we requested and tested known module families that match common DCI patterns. On the 100G tier, we evaluated OEM and third-party long-reach optics from vendors such as Finisar and FS-style catalog modules, including examples like Finisar FTLX8571D3BCL where compatible with the host. Check each part number against its datasheet before it enters the matrix: FTLX8571D3BCL is commonly listed as an 850 nm 10GBASE-SR SFP+ module, not a 1310 nm long-reach part. On the 400G tier, we focused on QSFP-DD-class optics appropriate for short-reach or CWDM patterns rather than forcing SFP modules into a lane map they cannot support. Where we used FS.com-style modules, we validated DOM behavior and switch compatibility before approving bulk buys.

Pro Tip: In DCI environments, the most common “it passes at room temp” failure is not optical loss alone. It is laser bias drift and receiver sensitivity shift over temperature, combined with DOM threshold interpretation differences between vendors, which can trigger alarms even when the BER is acceptable. Always test across your expected inlet temperature range and confirm the switch’s alarm thresholds match the module’s DOM reporting behavior.

[Source: IEEE 802.3 Ethernet PHY requirements summary and vendor DOM behavior notes; see also transceiver datasheets for alarm/threshold implementation.]

Implementation steps: how we reduced supply risk and cut over safely

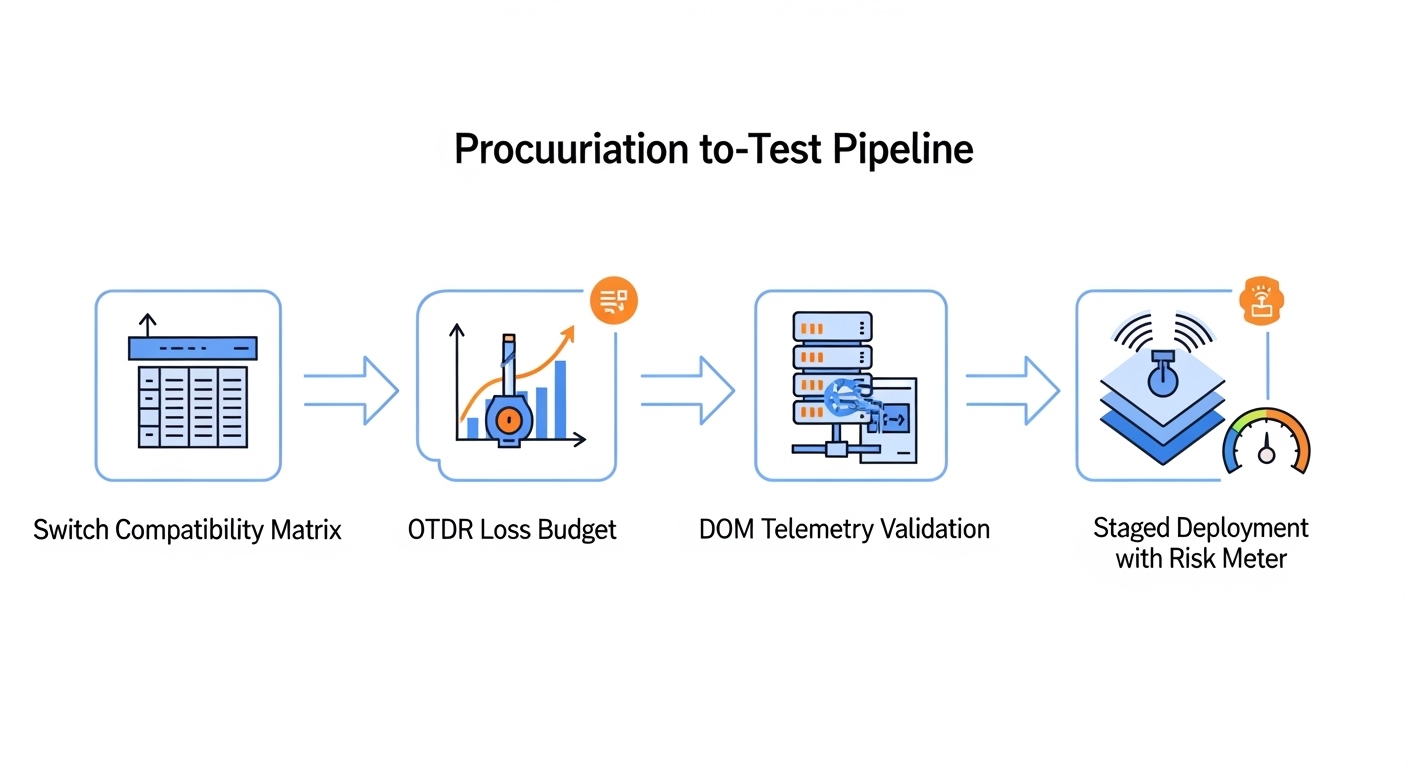

We used a staged rollout that procurement and field teams could both support. The goal was to avoid “big bang” risk while still meeting maintenance windows. Each step below had a measurable exit criterion.

Build a compatibility matrix before ordering

- Extract the switch vendor’s optics compatibility list and map it to your intended reach and wavelength.

- Require DOM readout samples (not just photos of a dashboard) and confirm key fields: temperature, bias current, transmit power, received power, and laser status flags.

- Document lane mapping expectations for the 400G tier so you do not discover polarity issues at cutover.
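A compatibility matrix like the one described above can be kept as structured data rather than a spreadsheet screenshot. The following is a minimal sketch, not the team's actual tooling: the `MatrixEntry` fields, the switch model `SW-A`, the firmware string, and the `OPT-*` part numbers are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatrixEntry:
    switch_model: str    # hypothetical host identifier
    firmware: str        # firmware release the module was tested on
    part_number: str     # hypothetical optics part number
    reach_km: float      # datasheet reach class
    wavelength_nm: int   # nominal wavelength
    dom_verified: bool   # True once a DOM readout sample was reviewed


def approved_parts(matrix, switch_model, firmware, min_reach_km, wavelength_nm):
    """Return part numbers that pass host, reach, wavelength, and DOM checks."""
    return sorted(
        e.part_number
        for e in matrix
        if e.switch_model == switch_model
        and e.firmware == firmware
        and e.reach_km >= min_reach_km
        and e.wavelength_nm == wavelength_nm
        and e.dom_verified
    )


# Placeholder rows for illustration only.
matrix = [
    MatrixEntry("SW-A", "1.2", "OPT-LR-001", 10.0, 1310, True),
    MatrixEntry("SW-A", "1.2", "OPT-LR-002", 10.0, 1310, False),
    MatrixEntry("SW-A", "1.2", "OPT-SR-003", 0.3, 850, True),
]
print(approved_parts(matrix, "SW-A", "1.2", 8.0, 1310))
```

Keying each row on both switch model and firmware reflects the point that a module approved on one release is not automatically approved on another.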

Qualify with a loss budget and a margin target

- Run OTDR to validate reach assumptions and identify high-loss events.

- Use a receiver power margin target (for example, reserve several dB beyond “minimum spec” to handle real patch cord wear and connector aging).

- Test optics in pairs and at both nominal and worst-case temperature inlet conditions.
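The margin check in the steps above comes down to simple arithmetic: power budget minus total path loss, compared against a reserve target. Here is a rough sketch under stated assumptions. The function names, the 3 dB default target, and every input value are illustrative placeholders, not figures from this rollout or from any datasheet.

```python
def link_margin_db(tx_min_dbm, rx_sens_dbm, fiber_km, fiber_db_per_km,
                   n_connectors, connector_db, n_splices, splice_db):
    """Worst-case margin: (min TX power - RX sensitivity) minus path loss."""
    budget_db = tx_min_dbm - rx_sens_dbm
    loss_db = (fiber_km * fiber_db_per_km
               + n_connectors * connector_db
               + n_splices * splice_db)
    return budget_db - loss_db


def meets_target(margin_db, target_db=3.0):
    """True when the margin covers the reserve kept for aging and patch swaps."""
    return margin_db >= target_db


# Placeholder inputs: replace with datasheet values and measured losses.
margin = link_margin_db(
    tx_min_dbm=-4.0, rx_sens_dbm=-10.0,
    fiber_km=8.0, fiber_db_per_km=0.4,
    n_connectors=4, connector_db=0.5,
    n_splices=2, splice_db=0.1,
)
print(f"margin: {margin:.2f} dB, meets target: {meets_target(margin)}")
```

With these placeholder numbers the link has positive margin, so it would likely come up, yet it falls short of the reserve target. That is the situation the margin target is meant to catch before cutover.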

Dual-source procurement for lead time resilience

- Split orders across two approved supply channels where possible (OEM and an approved third-party).

- Negotiate lead times with explicit ship date guarantees and return/replace terms.

- Keep a small “activation kit” of spare modules per site to absorb unexpected DOA or DOM incompatibility.

Staged deployment with telemetry gates

We deployed in waves: first a single link pair, then scaled to the full cluster. Telemetry gates included link error counters, RX power stability, and absence of DOM alarm events under sustained traffic. We also verified that monitoring systems correlated link health correctly with DOM fields.
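A telemetry gate of this kind can be expressed as a small pass/fail function over polled samples. The sketch below assumes a hypothetical sample format (a cumulative error counter, an RX power reading in dBm, and a DOM alarm flag per poll), and its default limits are placeholders rather than the values used in this rollout.

```python
from statistics import pstdev


def gate_passes(samples, max_new_errors=0, max_rx_stdev_db=0.5):
    """Evaluate one wave's telemetry gate.

    samples: list of dicts with keys 'errors' (cumulative counter),
    'rx_dbm' (received power), and 'dom_alarm' (bool), in poll order.
    """
    new_errors = samples[-1]["errors"] - samples[0]["errors"]
    rx_stdev = pstdev([s["rx_dbm"] for s in samples])
    any_alarm = any(s["dom_alarm"] for s in samples)
    return (new_errors <= max_new_errors
            and rx_stdev <= max_rx_stdev_db
            and not any_alarm)


# Placeholder samples from a hypothetical soak test under sustained traffic.
soak = [
    {"errors": 12, "rx_dbm": -6.1, "dom_alarm": False},
    {"errors": 12, "rx_dbm": -6.0, "dom_alarm": False},
    {"errors": 12, "rx_dbm": -6.2, "dom_alarm": False},
]
print(gate_passes(soak))
```

The gate compares the first and last counter values instead of the absolute count, so errors accumulated before the soak window do not fail the wave.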

Measured results: what improved after the DCI optics selection

After rollout, we saw measurable improvements in both operational stability and schedule reliability. The biggest win came from aligning optics selection to the actual loss budget and validating DOM behavior against the host alarm interpretation.

Lead time: dual-sourcing reduced schedule risk, with the final bulk shipment arriving within the planned window rather than slipping 3 to 6 weeks.

Link stability: during a 30-day observation window, we reduced link flap incidents from an initial pilot baseline to near-zero on the validated optics set. Receiver power readings stayed within expected variance bands across temperature swings.

Operational overhead: support tickets tied to “optics alarm but no traffic outage” dropped because DOM thresholds and telemetry alerts matched the module behavior after qualification.

Cost and TCO: third-party modules offered a meaningful unit cost reduction on the 100G tier without increasing failure rate beyond the acceptable threshold once we constrained purchases to validated part numbers and required DOM verification. Total cost of ownership improved because fewer maintenance interventions were required and cutover windows were met.

Common mistakes and troubleshooting tips for DCI SFP optics

These are the failure modes we repeatedly saw during qualification and the fixes that worked. Use them as a checklist during acceptance testing and post-install troubleshooting.

Mixing reach classes and assuming “SMF is SMF”

Root cause: a reach-class mismatch can still “link up” while leaving the link fragile. Short-reach optics on a long run erode power margin and increase sensitivity to connector aging and patch cord swaps, while long-reach optics on a short run can push received power toward the receiver's overload limit unless the path is attenuated. In DCI, even small loss changes can push the receiver close to its sensitivity knee.

Solution: calculate a full loss budget using OTDR and include patch cord and splice losses; enforce a margin target beyond the minimum optical budget in the datasheet.

DOM mismatch triggers alarms despite acceptable link BER

Root cause: the host switch may interpret DOM thresholds differently or expect specific DOM field ranges. Operators then see alarming telemetry and initiate unnecessary swaps.

Solution: validate DOM fields during acceptance testing and confirm that alarm thresholds are consistent. If your monitoring stack uses DOM-based triggers, align those triggers with the module’s documented behavior.
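One way to make the alignment step concrete is to diff the thresholds the module advertises against the triggers configured in the monitoring stack. This is a sketch only: the field names, the 0.5 dB tolerance, and the threshold values are hypothetical, and how you export thresholds from your switch or monitoring system is left out.

```python
def threshold_mismatches(module_thresholds, monitor_thresholds, tolerance=0.5):
    """Return fields whose monitoring trigger is missing or differs from the
    module's advertised threshold by more than `tolerance`.

    Result maps field name -> (module_value, monitor_value).
    """
    mismatches = {}
    for field, module_value in module_thresholds.items():
        monitor_value = monitor_thresholds.get(field)
        if monitor_value is None or abs(monitor_value - module_value) > tolerance:
            mismatches[field] = (module_value, monitor_value)
    return mismatches


# Placeholder thresholds for illustration.
module = {"rx_low_alarm_dbm": -14.0, "rx_high_alarm_dbm": 2.0}
monitor = {"rx_low_alarm_dbm": -10.0, "rx_high_alarm_dbm": 2.0}
print(threshold_mismatches(module, monitor))
```

In this example the monitoring trigger fires 4 dB before the module's own low-power alarm, which is the kind of gap that produces "optics alarm but no traffic outage" tickets.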

MPO/MTP polarity and cleanliness issues on high-lane-count optics

Root cause: for 400G optics that use MPO/MTP connectors, polarity errors or dirty end faces can cause intermittent lane failures. The symptom can look like “random” flaps under load.

Solution: enforce a cleaning workflow, use polarity-verified cassettes, and test with deterministic traffic patterns while monitoring per-lane error counters.
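Per-lane monitoring is easier to act on when two counter snapshots are compared directly. The sketch below assumes you can export one error counter per lane before and after a deterministic traffic run; the four-lane layout and counter values are placeholders, and the counter source is hypothetical.

```python
def suspect_lanes(before, after, threshold=0):
    """Return indexes of lanes whose error counter grew by more than
    `threshold` between two snapshots taken around a traffic run."""
    if len(before) != len(after):
        raise ValueError("snapshots must cover the same lanes")
    return [
        lane
        for lane, (b, a) in enumerate(zip(before, after))
        if a - b > threshold
    ]


# Placeholder snapshots for a hypothetical four-lane module.
before_run = [0, 0, 5, 0]
after_run = [0, 0, 5, 120]
print(suspect_lanes(before_run, after_run))  # prints [3]
```

A single lane accumulating errors while its neighbors stay flat points toward a dirty or misaligned fiber position rather than a module-wide fault, so cleaning and polarity checks come before a module swap.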

Room-temperature-only testing that misses inlet extremes

Root cause: modules that pass at room conditions can drift under worst-case inlet temperatures. Laser bias and receiver sensitivity change with temperature, affecting stability.

Solution: run traffic tests at both nominal and worst-case inlet temperatures expected for the chassis environment.
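The temperature check can be recorded as a simple headroom test across the inlet range. This sketch assumes RX power readings keyed by inlet temperature and a single low-power alarm threshold; the 2 dB headroom default and all readings are illustrative placeholders.

```python
def passes_temperature_sweep(readings, rx_low_alarm_dbm, min_headroom_db=2.0):
    """readings maps inlet temperature (deg C) to RX power (dBm).

    Passes only if every reading stays at least `min_headroom_db`
    above the low-power alarm threshold.
    """
    return all(
        rx_dbm - rx_low_alarm_dbm >= min_headroom_db
        for rx_dbm in readings.values()
    )


def worst_case_drift_db(readings, nominal_temp_c):
    """Largest absolute RX power change relative to the nominal reading."""
    nominal = readings[nominal_temp_c]
    return max(abs(rx - nominal) for rx in readings.values())


# Placeholder readings at nominal and worst-case inlet temperatures.
sweep = {25: -6.0, 45: -7.5}
print(passes_temperature_sweep(sweep, rx_low_alarm_dbm=-10.0))
print(worst_case_drift_db(sweep, nominal_temp_c=25))
```

Tracking drift separately from the pass/fail result helps when comparing module lots, since two lots can both pass while one sits much closer to the alarm threshold.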

Selection criteria checklist: how to choose the right DCI optics without regret

When you are selecting data center interconnect SFP optics for 100G and planning a parallel 400G strategy, engineers typically weigh the following factors in order:

- Distance and link budget: confirm reach class, wavelength, and margin using OTDR and measured patch cord losses.

- Switch compatibility: verify the module is supported by the specific switch model and firmware; confirm lane mapping where applicable.

- DOM support and monitoring expectations: ensure DOM telemetry fields and alarm behavior match your operations tooling.

- Operating temperature range: request full temperature specs and validate in your chassis inlet conditions.

- Cost and supply chain risk: evaluate unit price, expected failure rate, warranty terms, and lead time variability.

- Vendor lock-in risk: mitigate by dual-sourcing approved part numbers and maintaining a tested spare pool per site.

Cost and ROI note: what to budget for and how to justify the spend

In practice, optics pricing varies by reach class, brand, and volume. For 100G long-reach optics, you may see OEM pricing that is materially higher than third-party catalog options; third-party modules often reduce unit cost, but only if you constrain purchases to validated part numbers and require DOM and compatibility proof. For 400G short-reach optics, the module class and connector type (MPO/MTP vs LC) usually drive higher unit cost, so the ROI case often comes from minimizing downtime and avoiding repeated cutovers.

From a TCO view, the best ROI is rarely “lowest unit price.” It comes from meeting cutover windows, reducing truck rolls, and lowering the number of optics swaps triggered by avoidable alarm events. For budgeting, include shipping, spares, cleaning consumables, and acceptance testing labor as part of the optics program.
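To compare sourcing options on more than unit price, the cost items above can be folded into one rough figure. The formula below is a simplified sketch, and every number in it is a placeholder chosen for easy arithmetic, not pricing or failure data from this rollout.

```python
def optics_tco(unit_price, quantity, spares, annual_failure_rate,
               years, cost_per_swap, program_costs):
    """Rough total cost: modules and spares, expected swap labor, and
    fixed program costs (shipping, cleaning consumables, acceptance testing)."""
    purchase = unit_price * (quantity + spares)
    expected_swaps = quantity * annual_failure_rate * years
    return purchase + expected_swaps * cost_per_swap + program_costs


# Placeholder inputs only; substitute your own quotes and failure history.
option_a = optics_tco(1000.0, 40, 4, 0.01, 3, 500.0, 2000.0)
option_b = optics_tco(400.0, 40, 4, 0.02, 3, 500.0, 4000.0)
print(round(option_a, 2), round(option_b, 2))
```

The model treats swap cost as a flat per-event figure. If a missed cutover window is the dominant risk, add that as its own term, because it can outweigh the unit price difference.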

FAQ

Do data center interconnect SFP modules work for 400G links?

Usually not. Most 400G DCI links use QSFP-DD or similar higher-density module formats rather than SFP. You can still use SFP-class optics for the 100G tier while selecting a separate, compatible optics family for 400G.

What DOM fields should we verify during acceptance testing?

Verify temperature, bias current, transmit power, and received power, plus any laser status and alarm indicators. The goal is to ensure the switch and monitoring stack interpret values consistently so you do not get false positives or missed alarms.
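When a vendor supplies raw DOM readout samples, decoding them yourself avoids relying on a dashboard's interpretation. The sketch below assumes an SFP-class module that follows the SFF-8472 diagnostic layout with internal calibration, where the real-time values sit at bytes 96 to 105 of the A2h page. QSFP-class and QSFP-DD modules use different management maps, and externally calibrated modules need extra conversion steps that are not shown. The function name and sample values are hypothetical.

```python
import math
import struct


def decode_sff8472_diagnostics(a2_page: bytes) -> dict:
    """Decode internally calibrated real-time diagnostics (A2h bytes 96-105)."""
    # Big-endian: signed temperature, then four unsigned 16-bit fields.
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack_from(
        ">hHHHH", a2_page, 96
    )

    def to_dbm(raw):
        milliwatts = raw / 10000.0  # LSB is 0.1 microwatt
        return 10 * math.log10(milliwatts) if milliwatts > 0 else float("-inf")

    return {
        "temperature_c": temp_raw / 256.0,      # LSB is 1/256 deg C
        "vcc_v": vcc_raw / 10000.0,             # LSB is 100 microvolts
        "tx_bias_ma": bias_raw * 2 / 1000.0,    # LSB is 2 microamps
        "tx_power_dbm": to_dbm(tx_raw),
        "rx_power_dbm": to_dbm(rx_raw),
    }


# Synthetic page for illustration: 25 C, 3.3 V, 6 mA, 1 mW TX, 0.1 mW RX.
page = bytearray(256)
struct.pack_into(">hHHHH", page, 96, 6400, 33000, 3000, 10000, 1000)
print(decode_sff8472_diagnostics(bytes(page)))
```

Comparing these decoded values with what the switch reports for the same module is a quick way to spot scaling or interpretation differences during acceptance testing.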

How do we estimate optics margin for a real DCI fiber path?

Use OTDR to measure end-to-end attenuation and identify problematic events. Then add connector and patch cord loss from measured data, and include aging and operational variability so you keep a buffer beyond the datasheet minimum budget.

What is the most common cause of intermittent link flaps?

For higher-lane optics, the most common causes are cleanliness and polarity errors on MPO/MTP connections. For SFP/SFP+ optics, the frequent culprit is insufficient power margin at temperature extremes or a DOM/alarm threshold mismatch that triggers operational interventions.

Should we standardize only on OEM optics?

OEM optics can reduce compatibility uncertainty, but OEM-only sourcing can lengthen lead times and concentrates supply risk in one channel. A better approach is dual-source procurement using pre-qualified, switch-compatible part numbers with DOM validation and staged rollout.

How can procurement reduce lead time risk for DCI cutovers?

Negotiate ship-date guarantees, split orders across approved suppliers, and keep validated spares per site. Then run a short acceptance test batch so you can quickly replace any DOA modules without missing the cutover window.

If you are preparing a DCI optics program, the next step is to build your compatibility and acceptance test template around measured loss budgets and DOM behavior, not just reach claims. Use a data center transceiver compatibility matrix to standardize qualification across switch models and optics supply sources.

Author bio: I have led transceiver procurement and field acceptance testing for DCI rollouts across multi-vendor switching platforms, focusing on DOM telemetry alignment and receiver margin verification. I translate datasheet specs into operational acceptance criteria that reduce cutover risk and improve supply chain resilience.