A storage-and-compute team planning a leaf-spine upgrade faced a familiar dilemma: should they standardize on 400G optics for near-term ports, or move directly to 800G optics to reduce cabling and switch port pressure? This case study walks through the decision using real deployment constraints: switch vendor line cards, reach, power budgets, and optics management requirements. It is written for network engineers and product managers who must deliver capacity fast without creating an RMA and interoperability mess.

Problem and challenge: port scarcity meets cabling reality

The trigger was predictable. In a 3-tier data center leaf-spine fabric, the team hit a wall: new workloads required more east-west bandwidth, while the top-of-rack (ToR) switches were already at 92 percent port utilization. They also faced a practical cabling limit: each additional 1RU-to-3RU run of high-density fiber bundles lengthened restore time during maintenance windows. Their goal was to add capacity while keeping the number of optics SKUs manageable and avoiding incompatible DOM behavior.

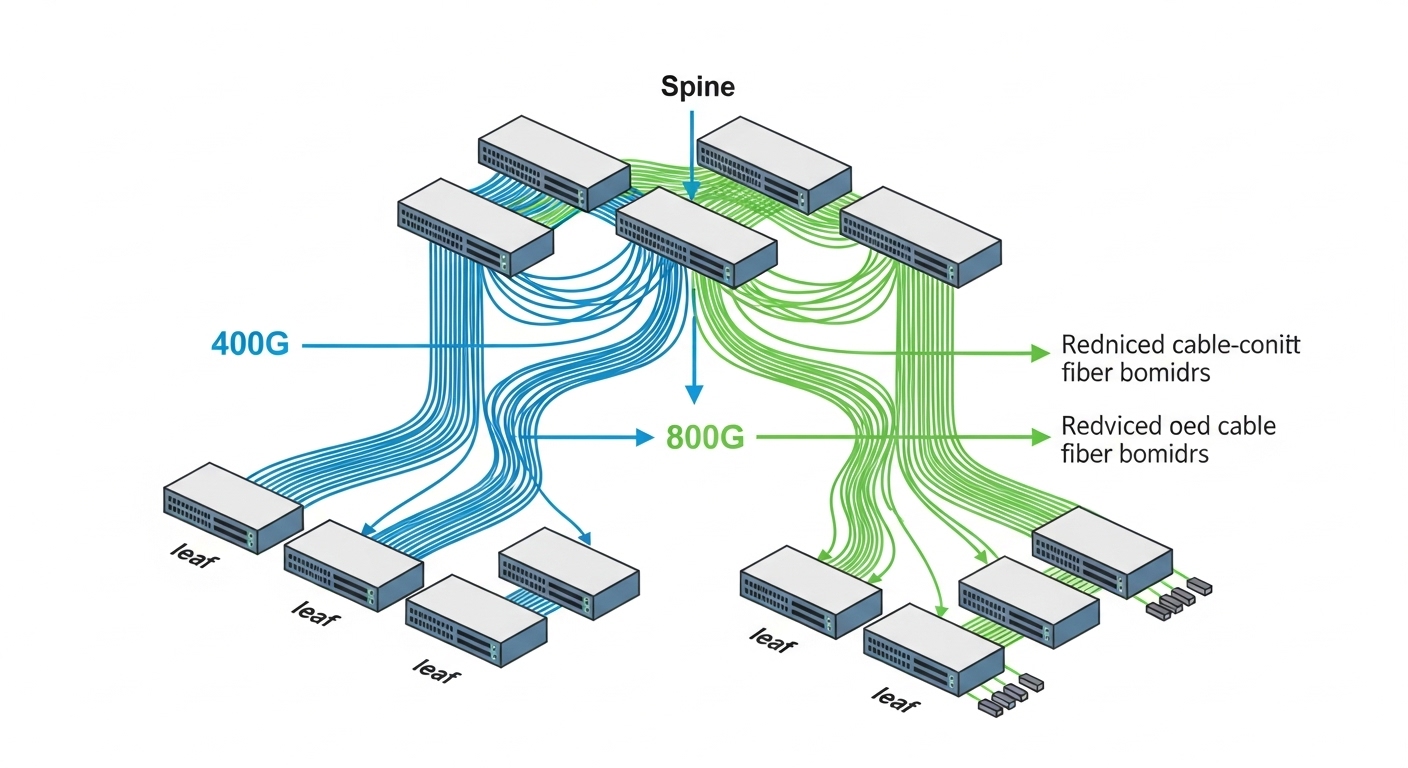

They evaluated two upgrade paths: (1) expand using 400G optics now, then migrate later, or (2) consolidate with 800G optics to halve the number of active uplink ports for the same aggregate throughput. The hard part was not theoretical bandwidth; it was operational fit with existing switch optics cages, thermal constraints, and the actual link budget over installed fiber plant.

Environment specs: what the network actually demanded

They deployed in a leaf-spine topology with 48-port ToR switches feeding two spine tiers. The target uplink rate per ToR was 384 Gbps aggregated, with four uplinks per ToR. Required optics reach covered short to mid-span runs: 50 to 150 m on OM4 in some zones, and up to 300 m in others after patching and connector losses. Switch platforms supported pluggable optics through vendor-published compatibility lists and required DOM polling for monitoring.

| Key spec | 400G optics (typical) | 800G optics (typical) |
|---|---|---|
| Target data rate | 400 Gbps | 800 Gbps |
| Form factor | QSFP-DD or similar | OSFP or similar high-density pluggable |
| Common wavelengths | 850 nm (SR), or CWDM/LR variants | 850 nm (SR) for short reach; other variants for longer reach |
| Reach example | OM4: ~100 m class (vendor dependent) | OM4: ~100 m class (vendor dependent); some vendors rate higher with specific fiber plant |
| Connector type | MPO (typical for multimode SR); LC duplex for single-mode FR4/LR4 | MPO for multimode SR; MPO or dual LC duplex for single-mode variants, depending on vendor design |
| DOM / monitoring | Required by platform; vendor-specific thresholds | Required by platform; similar DOM model expectations |
| Operating temperature | Commercial or industrial variants; check cage thermal limits | Commercial or industrial variants; verify power and airflow margins |

In practice, both 400G and 800G SR options often live at 850 nm, but the connectorization and power envelope differ. That is why the team treated 800G optics as both a bandwidth and thermal/power-management project, not only a line-rate upgrade.

Chosen solution and why: consolidate with 800G where fiber budget allows

The final decision used a hybrid strategy. For uplinks with known OM4 performance and patch loss below the vendor’s link budget, they standardized on 800G optics to reduce port count and improve cable density. For zones with uncertain plant quality or longer reach, they kept a smaller footprint of 400G optics until patching and polishing could be verified.

They also selected optics with strong documentation and consistent DOM behavior. During vendor qualification, they checked module EEPROM fields, confirmed support for optical power monitoring, and verified that the switch accepted each optic without “unknown device” events. Where possible, they standardized on widely used parts from reputable vendors, such as Finisar, or from other manufacturers that publish detailed datasheets and DOM/management notes (for example, modules built to IEEE 802.3 short-reach or coherent PHY specifications, depending on type). For standards context, they relied on IEEE 802.3 for Ethernet physical-layer behavior and on vendor documentation for interoperability expectations.
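The EEPROM field check described above can be sketched in a few lines. This is a minimal illustration, assuming a CMIS-managed module in which byte 0 of the lower memory page holds the SFF-8024 identifier (0x18 for QSFP-DD, 0x19 for OSFP) and byte 1 holds the CMIS revision (major version in the upper nibble). How the raw bytes are obtained is platform-specific (on Linux-based systems, `ethtool -m` can dump module EEPROM); the function names and the minimum-revision policy are hypothetical.

```python
from typing import Set

# Subset of SFF-8024 identifier codes relevant to 400G/800G pluggables.
SFF8024_IDENTIFIERS = {
    0x18: "QSFP-DD",
    0x19: "OSFP",
}


def describe_module(eeprom: bytes) -> dict:
    """Decode the identifier and CMIS revision bytes from a raw EEPROM dump."""
    if len(eeprom) < 2:
        raise ValueError("EEPROM dump must contain at least 2 bytes")
    identifier = eeprom[0]
    revision = eeprom[1]
    return {
        "identifier": identifier,
        # None means the identifier is not in our (partial) table.
        "form_factor": SFF8024_IDENTIFIERS.get(identifier),
        # Upper nibble = major, lower nibble = minor (e.g. 0x50 -> "5.0").
        "cmis_revision": f"{revision >> 4}.{revision & 0x0F}",
    }


def passes_id_check(eeprom: bytes, allowed_form_factors: Set[str],
                    min_cmis_major: int = 4) -> bool:
    """Hypothetical qualification gate: allowed form factor and minimum CMIS major revision."""
    info = describe_module(eeprom)
    major = eeprom[1] >> 4
    return info["form_factor"] in allowed_form_factors and major >= min_cmis_major
```

For example, `describe_module(bytes([0x19, 0x50]))` decodes to an OSFP module reporting CMIS 5.0. A real qualification script would go on to read vendor name, part number, and DOM pages, whose offsets should be taken from the CMIS specification rather than from this sketch.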

Pro Tip: In field rollouts, the biggest 800G “gotcha” is not reach; it is power and airflow margin in the optics cage. Even when the module is within its datasheet temperature range, a partially blocked intake or a different fan curve can push it toward elevated error rates long before link flaps appear.

Implementation steps: from qualification to staged cutover

Validate fiber plant with loss and polarity checks

They audited patch panels, measured insertion loss, and verified polarity end to end. Engineers used OTDR and connector inspection to confirm that installed loss fit within the vendor’s rated link budget for the chosen SR class. Any patch segment outside tolerance was rebuilt, and its MPO/LC connectors were inspected and cleaned before re-verification.
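The loss-budget comparison in this step reduces to simple arithmetic. The sketch below is illustrative only: the attenuation, per-connector loss, channel budget, and rated reach constants are assumed planning values, not figures from the case study or any specific datasheet, and `Segment` and `check_link` are hypothetical names. It prefers a measured loss when one exists and checks distance separately, because multimode SR reach is limited by modal bandwidth as well as by loss.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed planning values for illustration; replace with vendor specs.
FIBER_ATTEN_DB_PER_KM = 3.5   # common worst-case figure for OM4 at 850 nm
CONNECTOR_LOSS_DB = 0.5       # per mated connector pair, conservative planning value
CHANNEL_BUDGET_DB = 1.9       # hypothetical SR channel insertion-loss budget
RATED_REACH_M = 100.0         # hypothetical rated OM4 reach for the chosen SR module


@dataclass
class Segment:
    """One fiber run between patch points (hypothetical record)."""
    length_m: float
    connector_pairs: int
    measured_loss_db: Optional[float] = None  # test-set or OTDR result, if taken


def segment_loss_db(seg: Segment) -> float:
    """Use the measured loss when available; otherwise estimate from length and connectors."""
    if seg.measured_loss_db is not None:
        return seg.measured_loss_db
    return seg.length_m / 1000.0 * FIBER_ATTEN_DB_PER_KM + seg.connector_pairs * CONNECTOR_LOSS_DB


def check_link(segments: List[Segment]) -> dict:
    """Sum loss and length over the channel and compare against budget and reach."""
    total_len = sum(s.length_m for s in segments)
    total_loss = sum(segment_loss_db(s) for s in segments)
    margin = CHANNEL_BUDGET_DB - total_loss
    return {
        "length_m": total_len,
        "loss_db": round(total_loss, 3),
        "margin_db": round(margin, 3),
        # Both conditions must hold: loss within budget and length within reach.
        "ok": margin >= 0 and total_len <= RATED_REACH_M,
    }
```

As a usage example, a 60 m run measured at 0.9 dB followed by an unmeasured 30 m run with one connector pair estimates to about 1.5 dB total, leaving roughly 0.4 dB of margin under these assumed constants.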

Run a controlled burn-in and DOM monitoring baseline

Before touching production, they ran a 72-hour traffic and error-rate soak. They monitored DOM telemetry: transmit/receive power, bias current, and temperature. They also compared platform-reported link state counters to ensure there were no hidden retrains or intermittent CRC spikes.
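A baseline from the 72-hour soak can be as simple as a mean and spread per DOM metric, plus a count of polling intervals in which the CRC counter moved. The `DomSample` schema and helper names below are hypothetical; in practice the values would come from the platform's DOM polling and interface counters.

```python
import statistics
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class DomSample:
    """One DOM poll plus the interface CRC counter (hypothetical schema)."""
    tx_power_dbm: float
    rx_power_dbm: float
    bias_ma: float
    temp_c: float
    crc_errors: int  # cumulative counter read from the switch


METRICS = ("tx_power_dbm", "rx_power_dbm", "bias_ma", "temp_c")


def soak_baseline(samples: List[DomSample]) -> Dict[str, Tuple[float, float]]:
    """Return (mean, sample standard deviation) per DOM metric over the soak window."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to compute a spread")
    baseline = {}
    for name in METRICS:
        values = [getattr(s, name) for s in samples]
        baseline[name] = (statistics.mean(values), statistics.stdev(values))
    return baseline


def crc_intervals(samples: List[DomSample]) -> int:
    """Count polling intervals in which the cumulative CRC counter increased.

    A decrease (for example, a counter reset) is not counted as errors.
    """
    return sum(1 for prev, cur in zip(samples, samples[1:])
               if cur.crc_errors > prev.crc_errors)
```

A nonzero result from `crc_intervals` during the soak is the kind of intermittent CRC spike the team wanted to catch before production, even if link state never changed.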

Stage the cutover by zone and keep a rollback path

They migrated one pod per maintenance window. Each pod had a rollback plan that swapped optics back to the known-good 400G profile if telemetry showed rising temperature or receive power drift beyond the established baseline. This reduced blast radius and prevented “fleet-wide” replacement churn.
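The rollback trigger can be expressed as a comparison of current telemetry against the soak baseline. This is a sketch under stated assumptions: the 5 °C temperature-rise and 1 dB receive-power-drift limits are placeholders (the case study does not publish its thresholds), and `rollback_reasons` is a hypothetical helper.

```python
from typing import Dict, List

# Hypothetical rollback thresholds; tune them from your own burn-in data.
MAX_TEMP_RISE_C = 5.0
MAX_RX_DRIFT_DB = 1.0


def rollback_reasons(current: Dict[str, float], baseline_mean: Dict[str, float]) -> List[str]:
    """Return reasons to revert a pod to the 400G profile (empty list = keep 800G)."""
    reasons = []
    # Only a temperature *rise* counts against the module.
    temp_rise = current["temp_c"] - baseline_mean["temp_c"]
    if temp_rise > MAX_TEMP_RISE_C:
        reasons.append(f"temperature up {temp_rise:.1f} C over baseline")
    # Receive power drift in either direction is treated as suspicious.
    rx_drift = current["rx_power_dbm"] - baseline_mean["rx_power_dbm"]
    if abs(rx_drift) > MAX_RX_DRIFT_DB:
        reasons.append(f"rx power drifted {rx_drift:+.1f} dB from baseline")
    return reasons
```

Returning a list of reasons rather than a boolean keeps the rollback decision auditable, which matters when the change ticket has to justify swapping optics mid-window.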

Measured results: capacity gains with fewer active ports

After rollout, the team achieved the targeted uplink bandwidth with fewer optics per ToR. For the same aggregate throughput, 800G optics reduced the number of active uplink ports by roughly half, which lowered per-pod optics count and simplified patch management. Operationally, they saw a reduction in mean time to restore during maintenance because fewer cables needed to be reseated and labeled.
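The port-count arithmetic behind "roughly half" is a ceiling division. The targets below are hypothetical examples, not the team's figures; the only modeling assumption is one module at each end of every point-to-point link, and the helper names are illustrative.

```python
def uplinks_needed(target_gbps: int, port_rate_gbps: int) -> int:
    """Ceiling division: ports required to reach the target aggregate rate."""
    return -(-target_gbps // port_rate_gbps)


def optics_needed(target_gbps: int, port_rate_gbps: int) -> int:
    """Each point-to-point link needs one module at each end."""
    return 2 * uplinks_needed(target_gbps, port_rate_gbps)


# Hypothetical per-ToR uplink targets in Gbps (not from the case study).
for target in (3200, 2800):
    p400 = uplinks_needed(target, 400)
    p800 = uplinks_needed(target, 800)
    print(f"{target} Gbps: {p400} x 400G vs {p800} x 800G ports, "
          f"{optics_needed(target, 400)} vs {optics_needed(target, 800)} modules")
```

For 3200 Gbps the port count halves exactly (8 versus 4 ports, 16 versus 8 modules); for 2800 Gbps, rounding up gives 7 versus 4 ports, which is why the saving is only roughly half when the target is not a multiple of 800 Gbps.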

On reliability, the measured outcomes were credible rather than optimistic. During the first quarter post-cutover, optics-related incidents dropped compared to the prior 400G-only refresh wave, mainly because the team standardized connector handling and kept a tight DOM monitoring threshold policy. They still kept a controlled stock of both 400G and 800G optics to handle spares for different reach requirements and to avoid a single-vendor supply bottleneck.

Common mistakes and troubleshooting: what broke and how they fixed it

- Mistake: Assuming “OM4 is OM4” across the entire building.

  Root cause: Patch panel damage and connector contamination increased insertion loss beyond the vendor’s SR budget.

  Solution: Rebuild and inspect connectors, and verify with OTDR before blaming the optics.

- Mistake: Ignoring optics cage airflow differences between switch models and fan curves.

  Root cause: Reduced airflow raised module temperature, which drove error counters up while the link itself stayed up.

  Solution: Compare temperature and bias telemetry across cages, and keep airflow baffles and intake filters maintained.

- Mistake: Treating DOM as “set and forget.”

  Root cause: Thresholds not aligned with the platform’s monitoring expectations delayed detection of receive power degradation.

  Solution: Establish alert baselines after burn-in, then tune thresholds for both 400G and 800G optics.

- Mistake: Mixing optics SKUs across reach classes without documenting patch maps.

  Root cause: Incorrect labeling and polarity mapping led to swapped lanes and persistently high BER.

  Solution: Enforce a patch-map workflow with photos, labels, and post-change verification of lane polarity.
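Comparing temperature (or bias current) across cages is essentially peer outlier detection. The sketch below swaps in a standard robust technique, the modified z-score based on the median absolute deviation (MAD), rather than anything the team is documented as using; the 3.5 limit is a common rule of thumb, while the 0.5 °C MAD floor and the port names in the usage example are assumptions.

```python
import statistics
from typing import Dict, List


def hot_cage_outliers(temps_c: Dict[str, float], z_limit: float = 3.5,
                      min_mad_c: float = 0.5) -> List[str]:
    """Flag ports whose module temperature is anomalously high versus peers.

    Uses the modified z-score 0.6745 * (x - median) / MAD. MAD is floored at
    min_mad_c so a very uniform set of cages does not flag sub-degree noise.
    Only hotter-than-peer readings are flagged.
    """
    values = list(temps_c.values())
    med = statistics.median(values)
    mad = max(statistics.median([abs(v - med) for v in values]), min_mad_c)
    return sorted(port for port, t in temps_c.items()
                  if 0.6745 * (t - med) / mad > z_limit)
```

For example, with cages reading 45.0, 46.0, 45.5, 46.5, and 58.0 °C, only the 58.0 °C port is flagged. The median-based approach keeps a single hot module from inflating the spread and hiding itself, which a mean and standard deviation would do on small groups of cages.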

Selection criteria checklist: 400G vs 800G optics decision guide

- Distance and link budget: Confirm measured fiber loss and connector loss against vendor reach specs for the exact SR type.

- Switch compatibility: Use the platform’s optics compatibility list; verify form factor and cage power limits.

- DOM support and monitoring: Ensure DOM fields and alerting behavior match what your NMS expects.

- Operating temperature: Validate thermal margins under your airflow conditions, not only the module datasheet range.

- Budget and incremental capex: Compare port count economics and the optics price per link.

- Vendor lock-in risk: Prefer optics with clear documentation and broad interoperability; qualify at least one second source.
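The technical items on this checklist can be encoded as a go/no-go gate that mirrors the hybrid strategy: 800G only where every check passes, otherwise hold at 400G pending remediation. This is a hypothetical sketch; the field names and default limits (1.9 dB loss budget, 100 m reach, 5 °C thermal margin) are assumptions to be replaced with datasheet and platform values, and the budget and vendor lock-in criteria are left as human judgment calls.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LinkCandidate:
    """Inputs for one uplink (hypothetical fields mirroring the checklist)."""
    measured_loss_db: float
    length_m: float
    on_compat_list: bool
    dom_fields_ok: bool
    thermal_margin_c: float      # cage temperature headroom under real airflow
    module_power_w: float
    cage_power_limit_w: float


@dataclass
class Decision:
    optic: str
    blockers: List[str] = field(default_factory=list)


def choose_optic(c: LinkCandidate, loss_budget_db: float = 1.9, reach_m: float = 100.0,
                 min_thermal_margin_c: float = 5.0) -> Decision:
    """Recommend 800G only when every technical gate passes; otherwise hold at 400G."""
    blockers = []
    if c.measured_loss_db > loss_budget_db:
        blockers.append("loss over budget")
    if c.length_m > reach_m:
        blockers.append("beyond rated reach")
    if not c.on_compat_list:
        blockers.append("not on platform compatibility list")
    if not c.dom_fields_ok:
        blockers.append("DOM fields/alerts not validated")
    if c.thermal_margin_c < min_thermal_margin_c:
        blockers.append("insufficient thermal margin")
    if c.module_power_w > c.cage_power_limit_w:
        blockers.append("module power exceeds cage limit")
    return Decision("800G" if not blockers else "400G", blockers)
```

Keeping the list of blockers, rather than only the verdict, tells the team which remediation (re-patching, airflow work, or a different SKU) would move a link into the 800G pool later.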