When your AI cluster grows from a few racks to a full leaf-spine, the transceiver question stops being theoretical. You start seeing congestion, higher retransmits, and “why did we miss BOM risk” conversations during bring-up. This article helps network and infra engineers choose between 50G and 100G transceivers for AI workloads by grounding the decision in optics specs, switch compatibility, and measured operational outcomes.

Prerequisites before you decide 50G vs 100G

Before swapping optics, make sure you can validate link budget and optics behavior under load. AI traffic is bursty: short flows during all-reduce phases can create microbursts that expose marginal optics or mismatched optics profiles. You also need to know what your switches can actually run on each port.

What you should have on hand

- Switch model and port speed capability (for example, Cisco Nexus, Arista EOS, Juniper QFX, or vendor-specific 50G/100G port modes).

- Fiber plant details: single-mode vs OM4/OM5, patch panel type, connector cleanliness policy, and measured link attenuation (OTDR or vendor loss spreadsheet).

- Transceiver inventory candidates with explicit part numbers and DOM support. Limit the list to 50G/100G form factors your ports accept, such as SFP56 (50G) or QSFP28 (100G) variants; 10G parts such as Cisco SFP-10G-SR equivalents are not relevant here.

- Test tools: a traffic generator or an AI workload harness, plus switch telemetry (error counters, CRC/FCS drops, optical power alarms).

- Operating environment constraints: airflow direction, max chassis inlet temperature, and any “hot aisle” limits.

Expected outcome: You should be able to map each physical link in your fabric to a candidate optics type and validate it with repeatable measurements, not hope.

AI workload behavior: why link rate alone is not the whole story

In AI clusters, the “right” transceiver is the one that keeps your fabric stable under synchronized traffic. Many training frameworks use collective communication (all-reduce/all-gather) that can push traffic into coordinated bursts. If a link has too little receive-sensitivity margin, it may still show as “up” while its error counters climb during peak phases.

How 50G and 100G show up in practice

With 50G, you gain port flexibility: you can pack more links per switch block or align with 50G-capable port modes. With 100G, you need fewer parallel links for the same aggregate throughput and each frame serializes in half the time, but every failed or marginal link now takes out twice the capacity. In real fabrics, the choice is usually a capacity-per-port planning decision plus optics margin risk.
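The capacity side of that trade-off is plain arithmetic. The sketch below is illustrative only: the function names and the example numbers (1600 Gb/s aggregate, 4096-byte frames) are assumptions chosen for the example, not values from a specific fabric.

```python
import math

def links_needed(aggregate_gbps: float, link_gbps: float) -> int:
    """Parallel links required to carry an aggregate bandwidth."""
    return math.ceil(aggregate_gbps / link_gbps)

def serialization_delay_us(frame_bytes: int, link_gbps: float) -> float:
    """Time to clock one frame onto the wire, in microseconds."""
    bits = frame_bytes * 8
    return bits / (link_gbps * 1e3)  # Gb/s * 1e3 = bits per microsecond

if __name__ == "__main__":
    for rate in (50, 100):
        print(
            f"{rate}G: {links_needed(1600, rate)} links, "
            f"{serialization_delay_us(4096, rate):.3f} us per 4096-byte frame"
        )
```

Doubling the link rate halves both the link count and the per-frame serialization time. The sketch deliberately leaves out what it costs you in failure blast radius and optics margin.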

Pro Tip: In AI fabrics, the fastest way to compare 50G vs 100G is not iperf alone. Run a workload that triggers collective phases (for example, multi-node training with synchronized gradients) and watch CRC/FCS errors and optical RX power alarm thresholds during those phases. Links that look perfect under steady traffic often fail during burst synchronization.
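One way to act on this tip is to split error-counter growth into "collective phase" and "steady" buckets. The following is a minimal sketch: it assumes you already export cumulative CRC counters with timestamps and that your workload harness logs the start and end of each collective phase. The `Sample` type and `errors_by_phase` function are hypothetical names, not part of any switch API.

```python
from dataclasses import dataclass

@dataclass
class Sample:
    t: float          # seconds since test start
    crc_errors: int   # cumulative counter read from the switch

def errors_by_phase(samples, collective_windows):
    """Attribute CRC error growth to 'collective' or 'steady' intervals.

    An interval between two consecutive samples counts as 'collective'
    when its midpoint falls inside any (start, end) window.
    """
    totals = {"collective": 0, "steady": 0}
    for prev, cur in zip(samples, samples[1:]):
        # Clamp negative deltas so a counter reset does not skew totals.
        delta = max(cur.crc_errors - prev.crc_errors, 0)
        mid = (prev.t + cur.t) / 2
        in_burst = any(start <= mid < end for start, end in collective_windows)
        totals["collective" if in_burst else "steady"] += delta
    return totals
```

If nearly all error growth lands in the collective bucket, suspect optics margin rather than a permanently bad link.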

Core specs comparison: 50G vs 100G optics you will actually deploy

Optics selection is governed by wavelength, reach, connector type, and power consumption, plus vendor-specific electrical interface behavior. For AI data centers, you usually care about short-reach optics over OM4/OM5 or longer reach over single-mode. The same “marketing reach” can hide different link budgets, so you should confirm the datasheet’s minimum receive sensitivity and transmitter output power.
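A link-budget check makes this concrete. The sketch below subtracts path losses from the power budget implied by the datasheet's minimum transmit power and receive sensitivity. All numbers in the example call are placeholders, not values from any real datasheet; substitute your module's figures and your measured patch loss.

```python
def link_margin_db(
    tx_min_dbm: float,             # datasheet minimum transmitter output power
    rx_sensitivity_dbm: float,     # datasheet minimum receive sensitivity
    fiber_km: float,
    fiber_loss_db_per_km: float,
    connector_losses_db: list,     # one entry per mated pair or splice
    penalties_db: float = 0.0,     # extra allowance you choose to reserve
) -> float:
    """Remaining margin in dB; negative means the link is out of budget."""
    budget = tx_min_dbm - rx_sensitivity_dbm
    path_loss = (
        fiber_km * fiber_loss_db_per_km
        + sum(connector_losses_db)
        + penalties_db
    )
    return budget - path_loss

if __name__ == "__main__":
    # Placeholder inputs: 80 m of fiber and two patch-panel connections.
    margin = link_margin_db(-6.0, -9.0, 0.08, 3.0, [0.5, 0.5])
    print(f"margin: {margin:.2f} dB")
```

Using the minimum transmit power rather than the typical value is deliberate: it is the worst case the datasheet guarantees.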

Key optics parameters that matter

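- Data rate and lane mapping: 50G and 100G optics use different lane counts and modulation depending on module family. For example, SFP56 carries 50G as one PAM4 lane, while QSFP28 carries 100G as four 25G NRZ lanes; confirm which form factor your ports accept.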

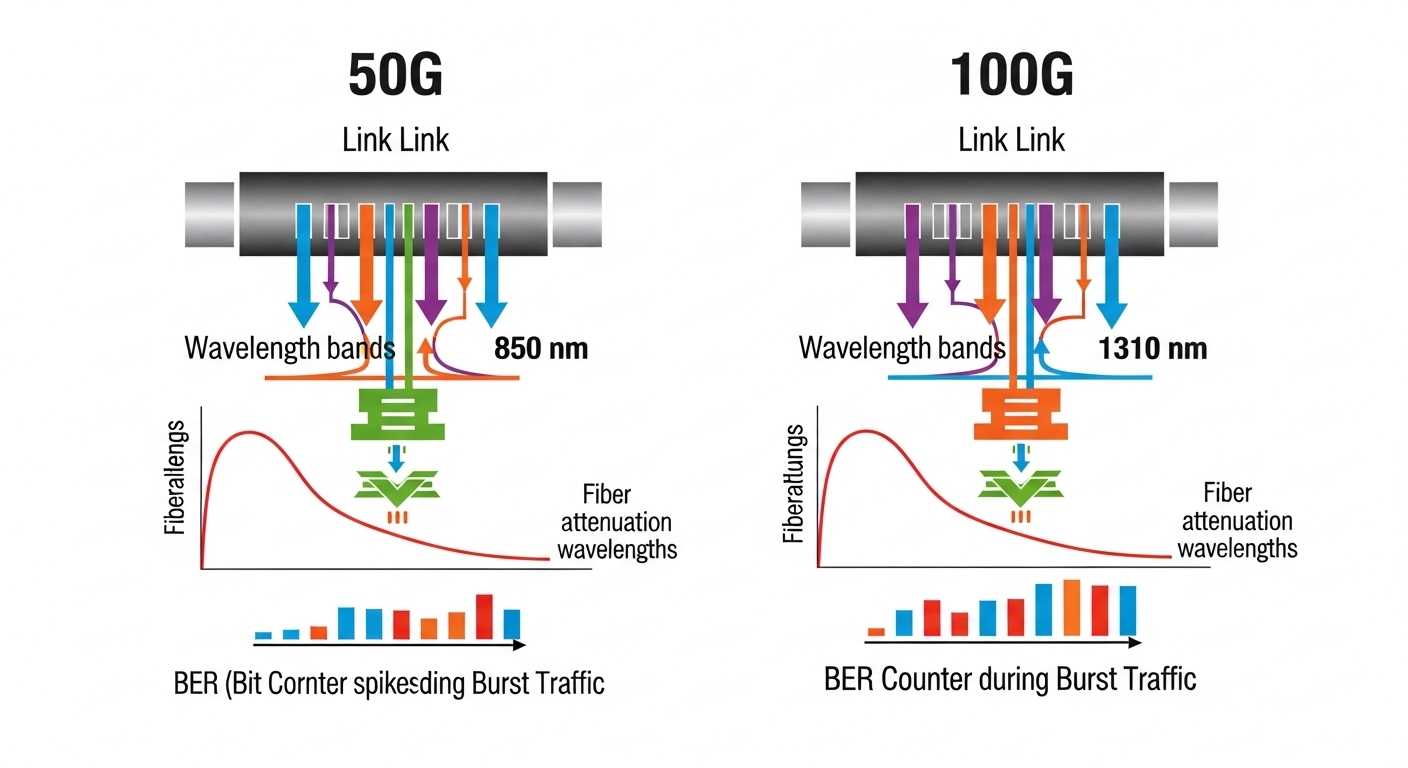

- Wavelength: 850 nm for multimode (short reach), 1310/1550 nm for single-mode.

- Reach: OM4 vs OM5 changes feasible distance for 50G/100G.

- Connector: LC is common for duplex optics, while parallel multimode optics such as 100GBASE-SR4 use MPO; confirm polarity and cleanliness requirements.

- DOM and monitoring: DOM presence affects how quickly you detect drift or marginal optics.

- Temperature range: AI racks can run hot; ensure module spec matches your airflow and inlet temperature.

| Parameter | 50G Transceiver (typical) | 100G Transceiver (typical) |
|---|---|---|
| Target use | Short-reach aggregation, port flexibility | Higher per-link throughput, fewer links for same bandwidth |
| Wavelength options | 850 nm (MM), 1310/1550 nm (SM) | 850 nm (MM), 1310/1550 nm (SM) |
| Typical reach (MM) | OM4: up to about 100 m for 50GBASE-SR; OM5 varies by vendor | OM4: up to about 100 m for 100GBASE-SR4; other module families and FEC profiles differ |
| Typical connector | LC | LC (duplex) or MPO (parallel optics such as SR4) |
| DOM support | Common; verify vendor compatibility | Common; verify vendor compatibility |
| Power draw | Often lower per module than 100G, but more modules may be needed | Higher per module; fewer modules for same throughput |
| Temperature range | Check datasheet; many are commercial/industrial variants | Check datasheet; ensure it matches hot aisle conditions |

Expected outcome: You can explain the link-budget variables and avoid choosing optics based only on “reach” marketing claims.

Before trusting any threshold, confirm how your switch interprets optical monitoring values and alarms. The baseline references are IEEE 802.3 for Ethernet PHY and FEC behavior, and the vendor datasheet for the exact module family you plan to buy.

Decision guide: how to choose 50G vs 100G for AI workloads

This is the part where teams usually disagree in Slack. The trick is to turn the argument into an ordered checklist tied to your fabric constraints. Below is the sequence engineers should use when selecting 50G vs 100G transceivers for AI workloads.

- Distance and fiber type: confirm OM4 vs OM5 vs single-mode and the actual patch loss. If you are near the edge of the reach spec, prefer the option with more margin.

- Switch port mode compatibility: some switches support both 50G and 100G but require specific breakout profiles (and sometimes specific optics families). Validate in a lab or with a staged rollout.

- Budget vs resiliency: 50G optics can reduce per-port oversubscription, but may increase total module count. More modules means more failure points and more spares to stock.

- DOM and telemetry integration: ensure your monitoring stack can read DOM fields and your switch surfaces key alarms. If you rely on automation, missing DOM fields can break your alerting.

- Operating temperature: verify module temperature range and chassis airflow. In hot aisle deployments, a marginal module can pass weeks of traffic then fail under sustained AI runs.

- FEC and link behavior: confirm the switch’s forward error correction profile and whether optics require specific settings or firmware compatibility.

- Vendor lock-in risk: third-party optics can work, but validate with your switch vendor’s optics compatibility list when available. Plan for a fallback path if a module family is later found incompatible.

Expected outcome: You end with a defensible choice tied to measured constraints, not a preference for “bigger is better.”
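The checklist above can be encoded so every candidate is evaluated in the same order. This is a sketch under stated assumptions: the `Candidate` fields, the 1.0 dB margin floor, and the 60 °C worst-case temperature are hypothetical defaults to replace with your own. The budget-versus-resiliency item is left out because it is a judgment call, not a pass/fail check.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    margin_db: float            # computed or measured link margin
    port_mode_validated: bool   # tested on the exact switch model and port
    dom_readable: bool          # monitoring stack parses its DOM fields
    max_case_temp_c: float      # rated maximum from the module datasheet
    fec_matches_switch: bool    # FEC profile agrees on both ends
    on_compat_list: bool        # vendor list or your own validation record

def blocking_issues(c, min_margin_db=1.0, worst_case_temp_c=60.0):
    """Return failed checks in checklist order; an empty list means go."""
    checks = [
        ("link margin below floor", c.margin_db >= min_margin_db),
        ("port mode not validated", c.port_mode_validated),
        ("DOM not readable by monitoring", c.dom_readable),
        ("temperature rating too low", c.max_case_temp_c >= worst_case_temp_c),
        ("FEC profile mismatch", c.fec_matches_switch),
        ("no compatibility evidence", c.on_compat_list),
    ]
    return [label for label, passed in checks if not passed]
```

Returning every failed check, rather than stopping at the first, gives the team one list to argue about instead of a series of surprises.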

Real deployment scenario: 3-tier AI fabric choosing between 50G and 100G

In a 3-tier data center fabric (leaf, aggregation, spine) with 48-port 100G-capable ToR switches, our AI workload ran multi-node training with frequent all-reduce bursts. The leaf layer had dense GPU servers connected through aggregation, and the spine links were the main bandwidth backbone. For leaf-to-aggregation, we chose 50G optics on specific port groups to match switch port modes and reduce oversubscription during early scaling. For aggregation-to-spine, we used 100G optics to keep the spine from becoming the bottleneck as the cluster grew from 256 to 512 GPUs over two quarters.

Operationally, we staged the rollout: first two racks, then ten, then full deployment. We monitored switch counters for CRC/FCS errors, optics DOM alarms, and link flaps. After three weeks, the 50G links showed fewer congestion events due to better mapping to traffic patterns, while the 100G links relieved bandwidth pressure at the spine. The key was that both optics types stayed inside their link-budget margin, with clean LC connectors and consistent patch-loss accounting.

Common mistakes and troubleshooting for 50G vs 100G links

Optics failures are rarely mysterious; they are usually predictable once you know the failure modes. Here are the top issues teams hit when deploying 50G vs 100G transceivers for AI traffic.

Link is “up” but training jobs show intermittent drops

Root cause: marginal optical power or receive sensitivity caused by patch panel loss, dirty connectors, or a run near the edge of the reach spec. AI bursts amplify the symptom because error bursts cluster during collective phases.

Solution: clean LC connectors, inspect with a fiber microscope, re-measure loss with an OTDR, and compare DOM readings (TX bias, RX power). If RX power is near the threshold, shorten the patch path or switch to a different reach class.
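The "near the threshold" judgment is easier to apply consistently as a small classifier. The sketch below assumes you can read RX power and the module's low-alarm and low-warning thresholds from DOM. The 1.0 dB guard band and the function names are assumptions for illustration.

```python
import math

def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    return 10 * math.log10(power_mw)

def rx_power_status(rx_dbm, low_alarm_dbm, low_warn_dbm, guard_db=1.0):
    """Classify RX power against DOM low thresholds plus a guard band."""
    if rx_dbm <= low_alarm_dbm:
        return "alarm"
    if rx_dbm <= low_warn_dbm:
        return "warning"
    if rx_dbm <= low_warn_dbm + guard_db:
        return "marginal"  # still inside spec, but the first to fail under bursts
    return "ok"
```

Links classified as "marginal" are the ones worth cleaning and re-measuring before the next training run, even though the switch reports no alarm.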

Switch reports incompatibility or flaps during link negotiation

Root cause: optics family mismatch with port mode, missing firmware compatibility, or unsupported electrical interface behavior. Some switches require specific module families for 50G vs 100G lane mapping.

Solution: confirm the optics compatibility list for your exact switch model and port. Update switch firmware if vendor guidance requires it, and test one known-good module before scaling.

DOM alerts but monitoring automation shows “unknown module”

Root cause: DOM field differences between OEM and third-party optics, or your telemetry collector expects a specific DOM schema.

Solution: validate DOM parsing with a single module type, update your collector mapping, and ensure your alert thresholds are aligned to the fields the switch actually exports.
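A collector mapping can be as simple as an alias table that normalizes vendor spellings into one schema and reports which fields are missing. The field names below are hypothetical examples, not the actual export format of any switch or module; populate the table from what your devices really emit.

```python
# Hypothetical vendor spellings; replace with what your switches export.
ALIASES = {
    "rx_power_dbm": ("rx_power_dbm", "rxPower", "RX Power"),
    "tx_bias_ma": ("tx_bias_ma", "txBias", "TX Bias"),
    "temperature_c": ("temperature_c", "temp", "Temperature"),
}

def normalize_dom(raw: dict):
    """Map a raw DOM record to canonical keys.

    Returns (normalized, missing), where missing lists the canonical
    fields that no alias matched, so alerting can flag the gap instead
    of silently treating the module as unknown.
    """
    normalized, missing = {}, []
    for canonical, names in ALIASES.items():
        for name in names:
            if name in raw:
                normalized[canonical] = float(raw[name])
                break
        else:
            missing.append(canonical)
    return normalized, missing
```

Surfacing the `missing` list per module type during validation tells you which alerts will never fire for that optic.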

Expected outcome: Faster recovery because you can isolate optics margin vs compatibility vs telemetry issues quickly.

Cost and ROI: where the money really goes

Price varies by vendor, reach, and whether you buy OEM vs third-party. In many deployments, 100G optics have a higher unit cost, but they can reduce the number of modules needed for a fixed aggregate bandwidth. 50G optics can be cost-effective when they prevent oversubscription and reduce congestion-driven retransmits, but the total module count can increase spares and operational overhead.

As a realistic planning range, many teams see OEM short-reach optics land in the mid-hundreds to low-thousands USD per module, while third-party can be meaningfully lower but requires compatibility validation and potentially higher burn-in time. TCO should include spares inventory, field replacement labor, and the cost of downtime during AI training windows. If a module family has a higher early failure rate in your environment, the “cheaper” option becomes expensive quickly.
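A rough TCO model helps compare the two options on the same terms. The sketch below is a simplification: every input in the example (unit prices, spares ratio, failure rate, per-replacement cost) is a placeholder rather than measured data, and the model ignores power and discounting.

```python
import math

def optics_tco(
    modules: int,
    unit_cost: float,
    spares_ratio: float,          # fraction of installed modules held as spares
    annual_failure_rate: float,   # expected failures per module per year
    cost_per_replacement: float,  # labor plus downtime for one swap
    years: float,
) -> float:
    """Purchase cost including spares, plus expected replacement cost."""
    spares = math.ceil(modules * spares_ratio)
    capex = (modules + spares) * unit_cost
    expected_failures = modules * annual_failure_rate * years
    return capex + expected_failures * cost_per_replacement

if __name__ == "__main__":
    # Same aggregate bandwidth: 32 x 50G modules vs 16 x 100G modules.
    print("50G :", optics_tco(32, 400.0, 0.10, 0.02, 250.0, 3))
    print("100G:", optics_tco(16, 700.0, 0.10, 0.02, 250.0, 3))
```

The result is most sensitive to the failure rate and the cost per replacement, which is why burn-in data from your own environment matters more than list price.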

Expected outcome: A quantified ROI argument: either capacity efficiency (50G) or reduced module count and consistent backbone throughput (100G), backed by your telemetry.

FAQ

Is 50G enough for most AI training fabrics?

Often yes for leaf-to-aggregation segments, especially when your switch port modes and traffic mapping align well. If your spine becomes the bottleneck or you expect rapid growth in synchronized bursts, 100G can provide more headroom.

What fiber types usually decide 50G vs 100G?

In data centers, multimode OM4/OM5 typically drives short-reach choices, while single-mode decides longer hops. If you are near reach limits, margin matters more than headline distance.

Do I need DOM for AI optics monitoring?

Strongly recommended. DOM helps you detect drift (TX bias, RX power) before link errors spike. Without DOM, you often learn about marginal optics only after training instability.

Can I mix OEM and third-party optics in the same fabric?

You can, but compatibility and telemetry schema differences can bite. Validate with your switch model, confirm DOM behavior, and test one module type end-to-end before mixing at scale.

What should I monitor during the first AI workload tests?

Track CRC/FCS errors, link flaps, optics DOM alarms, and retransmit indicators (as exposed by your switch or telemetry stack). Also watch for performance variance across training epochs, not just link status.

How do I reduce risk during rollout?

Use a staged deployment: a small set of racks, a defined burn-in window, and rollback criteria. Keep a curated spares pool for the optics family you install first.
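Rollback criteria work best when they are written down before the burn-in starts. The sketch below shows one way to express a stage gate. The 72-hour window and zero-tolerance thresholds are hypothetical defaults, and `BurnInResult` is an illustrative type you would fill from your own telemetry.

```python
from dataclasses import dataclass

@dataclass
class BurnInResult:
    hours: float      # elapsed burn-in time under AI workload
    link_flaps: int
    crc_errors: int
    dom_alarms: int

def rollout_decision(r, min_hours=72, max_flaps=0, max_crc=0, max_dom_alarms=0):
    """Return 'rollback', 'continue_burn_in', or 'promote' for one stage."""
    breached = (
        r.link_flaps > max_flaps
        or r.crc_errors > max_crc
        or r.dom_alarms > max_dom_alarms
    )
    if breached:
        return "rollback"  # a breach ends the stage regardless of elapsed time
    if r.hours < min_hours:
        return "continue_burn_in"
    return "promote"
```

Checking for breaches before elapsed time means a bad module family is pulled at hour 5 rather than waiting out the window.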

Updated: 2026-04-30. I write these decisions the way I wish vendors documented them: with operational constraints, measurable signals, and a bias toward fast validation. If you want the next step, start with Choosing fiber optic transceivers for AI clusters and build a repeatable test plan.

Author bio: I have deployed AI network fabrics in production, debugging optics margin issues with field telemetry and staged rollouts. I focus on PMF for infrastructure decisions: ship fast, measure hard, and iterate until the failure modes are understood.