When an Open RAN rollout hits fronthaul instability, it is rarely “mystical RF.” In most cases, the cause is a poorly matched xhaul transceiver: insufficient optical reach, weak switch compatibility, missing DOM telemetry, or temperature margins that collapse under real airflow. This article is for network and field engineers who need to select SFP+ optics for O-RAN fronthaul links, and it presents a case-style workflow you can reuse on your next cutover.

Problem / Challenge: fronthaul links that pass lab tests but fail in the rack

In our deployment, we were integrating an Open RAN fronthaul chain in a multi-vendor environment: DU and RU endpoints connected through an aggregation layer with strict timing requirements. The initial bench tests used short patch cords and stable temperature conditions, so the xhaul transceiver optics appeared “fine.” In the field, we saw intermittent link flaps when airflow changed during peak summer load: interfaces would drop and re-establish link, alarms would spike, and some RU boards would stop syncing.

The key challenge was that fronthaul transport is sensitive to optical budget and needs deterministic behavior. Even when the link comes up, received power that sits close to the receiver sensitivity limit can cause bit errors that look like “timing issues.” Engineers often blame firmware first, but in our case the vendor datasheet limits and the IEEE 802.3 physical-layer specifications pointed back to optical margin, connector cleanliness, and module compatibility.

Environment specs: what we had to match before choosing an xhaul transceiver

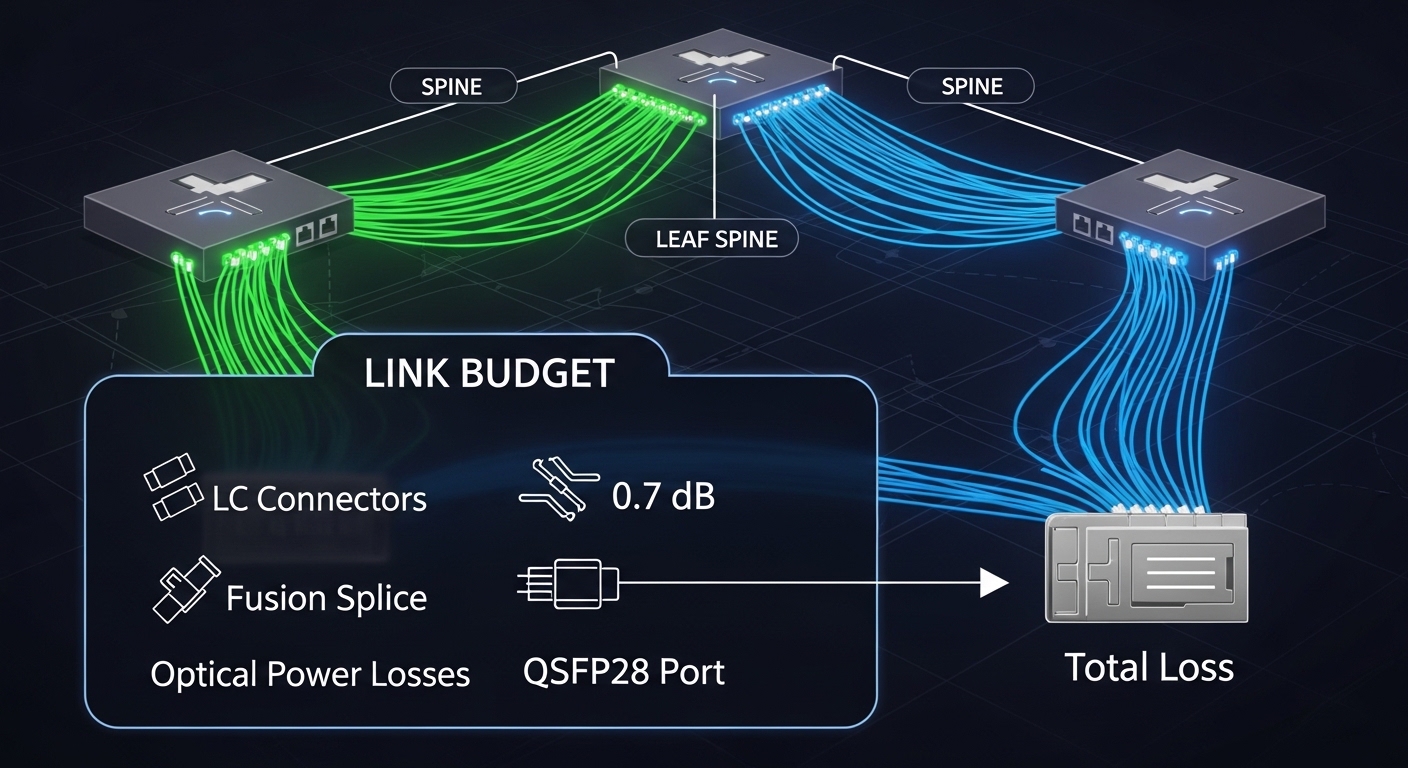

We standardized the physical layer around 10G-capable fronthaul transport using SFP+ optics, with two link classes depending on rack and room geometry. Our topology was a 3-tier style setup: ToR aggregation, then regional aggregation, then RU/DU edge. The fronthaul segments were mostly short-to-medium reach, but we also needed a “spare-safe” selection for serviceability.

Measured link requirements

- Data rate: 10G per link (SFP+ class), with oversubscription on the aggregation layer.

- Connector type: LC duplex on patch panels and equipment cages.

- Target reach: 300 m for most RU drops; up to 500 m in one zone.

- Operating temperature: 0 to 70 C on equipment side; airflow swings during peak loads.

- Telemetry requirement: DOM support for optical power and temperature monitoring.

Selection constraints tied to Open RAN fronthaul

Open RAN fronthaul implementations generally require deterministic Ethernet transport and operational visibility. While O-RAN compliance is not “only optics,” the fronthaul chain does require stable optical links and predictable behavior during maintenance windows. We treated the xhaul transceiver selection as a hard dependency: the optical module had to support the switch’s SFP/SFP+ electrical interface and provide DOM telemetry so operations could correlate alarms with optical levels.

Chosen solution: compatible SFP optics with DOM and the right optical budget

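We ended up selecting a specific “family” of xhaul transceivers rather than a single exact SKU across every switch vendor. For the typical 300 to 500 m segments, we used 10G SR optics at 850 nm in LC duplex configurations, because that wavelength and reach class fit our campus fiber plant and matched common switch design assumptions. Note that IEEE 802.3 specifies 10GBASE-SR reach as 300 m on OM3 and 400 m on OM4 multimode fiber, so the 500 m zone ran beyond the standard reach and depended entirely on measured margin.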

In practice, we validated compatibility against switch vendor compatibility matrices and tested DOM visibility in the lab. We also ensured the module met temperature and optical power specs with margin, rather than just meeting minimum receiver sensitivity.

Concrete models we deployed

- Cisco-compatible optics verified in our environment: Cisco SFP-10G-SR style modules for baseline performance.

- Interoperability tested third-party optics: Finisar FTLX8571D3BCL class 10G SR modules.

- Cost-optimized option used for scaling with validated compatibility: FS.com SFP-10GSR-85 class 10G SR optics (850 nm).

Key technical specs comparison

The table below summarizes the specs that mattered for our Open RAN fronthaul SFP selection: wavelength, reach, power class behavior, connector style, DOM support, and temperature range. Exact values vary by vendor revision, so we always confirmed against the specific datasheet for the lot we received.

| Parameter | Typical 10G SR SFP+ (850 nm) | Modules used and field notes |
|---|---|---|
| Wavelength | 850 nm (MMF) | Cisco SFP-10G-SR; Finisar FTLX8571D3BCL; FS.com SFP-10GSR-85 |
| Target reach | Up to 300 m on OM3 and 400 m on OM4 per IEEE 802.3; longer spans are outside the standard and depend on fiber and budget | Validated 300 m consistently; 500 m only in best plant segments |
| Connector | LC duplex | All deployed optics used LC duplex jumpers |
| Data rate class | 10G (SFP+ optics behavior) | Matched 10G fronthaul transport profiles |
| DOM telemetry | Supported (temperature, bias, received power) | Required for correlation during link flaps |
| Operating temperature | 0 to 70 C class common for enterprise gear | Validated airflow margins in the RU cage |
| Standards basis | IEEE physical-layer behavior for 10G Ethernet optics | [Source: IEEE 802.3] and vendor datasheets |

Pro Tip: In fronthaul rollouts, DOM support is not a “nice-to-have.” The non-obvious win is using DOM received power readings to predict failures before link flaps happen. When we correlated DOM dips with connector cleaning schedules, we reduced repeat truck rolls by catching marginal optics early rather than after alarms triggered.
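The trend check described in the tip can be sketched as a least-squares fit over DOM received-power samples. This is a minimal illustration rather than the tooling we ran: the function names, the `(day, rx_dbm)` sample format, and any floor value you pass in are assumptions, and the real floor should come from your module's datasheet.

```python
from statistics import mean


def rx_power_slope_db_per_day(samples):
    """Least-squares slope of received power (dBm) over time (days).

    samples: list of (day, rx_dbm) pairs from DOM polling (hypothetical format).
    """
    days = [day for day, _ in samples]
    powers = [power for _, power in samples]
    day_mean, power_mean = mean(days), mean(powers)
    spread = sum((day - day_mean) ** 2 for day in days)
    if spread == 0:
        raise ValueError("need samples from at least two distinct times")
    covariance = sum(
        (day - day_mean) * (power - power_mean) for day, power in samples
    )
    return covariance / spread


def days_until_floor(samples, floor_dbm):
    """Estimate days until the latest reading decays to floor_dbm.

    Returns 0.0 if the latest reading is already at or below the floor,
    and None if the fitted trend is flat or improving.
    """
    _, latest_power = samples[-1]
    if latest_power <= floor_dbm:
        return 0.0
    slope = rx_power_slope_db_per_day(samples)
    if slope >= 0:
        return None
    return (latest_power - floor_dbm) / -slope
```

Calling `days_until_floor(readings, floor_dbm=-9.0)` on a few days of readings gives a rough lead time for scheduling a cleaning or swap. A straight-line fit is the simplest possible model; contamination and connector damage often degrade in steps, so treat the estimate as a prompt to inspect, not a prediction.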

Implementation steps: a field-ready workflow for O-RAN compliant SFP selection

We treated selection as a repeatable playbook. The goal was to prevent “works on my bench” outcomes by validating optics, telemetry, and compatibility with the exact switch models used in each rack row.

Map your fiber plant to an optical budget

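Before ordering, we pulled measured attenuation data for the specific fiber spans. We accounted for typical connector loss, patch panel insertion loss, and any known splices. For 850 nm SR optics, the difference between a “300 m rated” link and a “500 m stable” link was usually connector cleanliness and plant attenuation variability, not the module alone. Reach on multimode fiber is also limited by the modal bandwidth of the fiber grade, so an attenuation budget that closes on paper does not by itself guarantee a stable link at the longer distances.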
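The budget arithmetic from this step can be sketched as follows. It is a simplified, attenuation-only model under stated assumptions: the function name and parameters are hypothetical, and every number you feed it (minimum transmit power, receiver sensitivity, per-kilometer loss, per-connector loss) should come from the module datasheet and your own span measurements. It does not model modal bandwidth, which also limits multimode reach.

```python
def link_margin_db(
    tx_min_dbm,
    rx_sens_dbm,
    length_m,
    fiber_db_per_km,
    n_connectors,
    connector_loss_db,
    n_splices=0,
    splice_loss_db=0.0,
    penalty_db=0.0,
):
    """Return the remaining optical margin in dB for one span.

    budget = worst-case transmit power minus receiver sensitivity
    loss   = fiber attenuation + connector loss + splice loss + extra penalties
    """
    budget_db = tx_min_dbm - rx_sens_dbm
    fiber_loss_db = (length_m / 1000.0) * fiber_db_per_km
    total_loss_db = (
        fiber_loss_db
        + n_connectors * connector_loss_db
        + n_splices * splice_loss_db
        + penalty_db
    )
    return budget_db - total_loss_db
```

With placeholder inputs of -7.0 dBm transmit, -10.0 dBm sensitivity, 3.0 dB/km, and two connectors at 0.5 dB each, a 300 m span leaves about 1.1 dB and a 500 m span about 0.5 dB. Those inputs are illustrative only, but the shape of the result matches what we saw: on longer spans, one dirty connector is enough to consume the remaining margin.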

Verify switch compatibility and DOM behavior

We checked the switch’s SFP/SFP+ compatibility guidance and performed a lab test that included DOM polling. In one case, a module would link but DOM would present as “unknown,” which broke our monitoring logic. For Open RAN operations, that was a hard stop because we needed optical metrics for troubleshooting.

Reference points included vendor datasheets and IEEE physical-layer expectations for 10G optical modules. See [Source: IEEE 802.3] for general optical PHY behavior and [Source: vendor transceiver datasheets] for DOM and electrical interface limits.
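A lab check for the “links but DOM is unknown” case can be sketched by decoding the raw diagnostics page yourself. The sketch below assumes the module follows the SFF-8472 layout with internal calibration, where bytes 96 to 105 of the A2h page hold temperature, supply voltage, TX bias, TX power, and RX power. How you obtain the raw page from your switch is platform-specific and outside this sketch, and the function names are hypothetical.

```python
import math
import struct


def microwatts_to_dbm(power_uw):
    """Convert optical power in microwatts to dBm; None when no light is reported."""
    if power_uw <= 0:
        return None
    return 10 * math.log10(power_uw / 1000.0)


def decode_dom(a2_page):
    """Decode real-time diagnostics from bytes 96-105 of an SFF-8472 A2h page.

    Assumes internal calibration, so raw values map directly to units.
    """
    if len(a2_page) < 106:
        raise ValueError("A2h page too short to contain diagnostics")
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack_from(
        ">hHHHH", a2_page, 96
    )
    return {
        "temperature_c": temp_raw / 256.0,              # signed, 1/256 degree C
        "vcc_v": vcc_raw * 100e-6,                      # 100 microvolt units
        "tx_bias_ma": bias_raw * 2e-3,                  # 2 microamp units
        "tx_power_dbm": microwatts_to_dbm(tx_raw * 0.1),  # 0.1 microwatt units
        "rx_power_dbm": microwatts_to_dbm(rx_raw * 0.1),
    }


def dom_is_usable(a2_page):
    """Heuristic: reject pages whose diagnostics are all 0x00 or all 0xFF,
    or that report no optical power on a link that should be carrying light."""
    raw = bytes(a2_page[96:106])
    if len(raw) < 10 or raw in (b"\x00" * 10, b"\xff" * 10):
        return False
    reading = decode_dom(a2_page)
    return (
        reading["tx_power_dbm"] is not None
        and reading["rx_power_dbm"] is not None
    )
```

Running this against a page dump from each candidate module on the exact switch model gives a pass or fail that does not depend on how the monitoring stack labels the fields. Modules that use external calibration need the calibration constants applied first, which this sketch does not do.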

Standardize connector hygiene and patching

We installed a cleaning station and adopted a strict inspection routine using fiber inspection tools. The biggest “mystery” link failures we saw were caused by damaged or contaminated LC endfaces, which create power penalties that show up as intermittent flaps under temperature changes.

Validate temperature margin in the real airflow path

Even if a module is rated for 0 to 70 C, the cage airflow can concentrate heat near pluggable optics. We monitored module temperature via DOM and compared it against our operational threshold. When we saw repeated temperature plateaus near the upper range, we adjusted airflow baffles and ensured consistent intake paths.
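The temperature comparison in this step can be sketched as a small summary over DOM temperature samples. The 10 C guard band and the 10 percent trigger below are assumed example thresholds, not values from our rollout, and the function name is hypothetical.

```python
def thermal_headroom(
    temps_c, rated_max_c=70.0, guard_band_c=10.0, max_fraction_near_limit=0.1
):
    """Summarize how close DOM module temperatures run to the rated maximum.

    temps_c: module temperatures (degrees C) sampled during peak load.
    A sample counts as "near the limit" when it is within guard_band_c
    of rated_max_c.
    """
    if not temps_c:
        raise ValueError("no temperature samples")
    threshold_c = rated_max_c - guard_band_c
    near_limit = [t for t in temps_c if t >= threshold_c]
    fraction = len(near_limit) / len(temps_c)
    peak_c = max(temps_c)
    return {
        "peak_c": peak_c,
        "headroom_c": rated_max_c - peak_c,
        "fraction_near_limit": fraction,
        "needs_airflow_review": fraction > max_fraction_near_limit,
    }
```

Reporting the fraction of samples near the limit, rather than only the peak, separates a module that spikes once from one that plateaus near the upper range, which is the pattern that led us to adjust baffles.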

Stage rollout with a measurable acceptance test

We defined an acceptance test window: link up time, error counters, and DOM stability over a 24-hour soak. Modules that passed in the first hour but drifted later were rejected. This prevented “late surprises” during RU board bring-up.
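The acceptance criteria above can be sketched as a single pass or fail function over polled samples. The record layout, the zero-flap and zero-error limits, and the 1.0 dB drift limit are assumptions chosen for illustration; set them from your own acceptance policy.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SoakSample:
    hour: float       # hours since the soak started
    link_up: bool     # link state at poll time
    rx_errors: int    # cumulative interface error counter
    rx_dbm: float     # DOM received power


def evaluate_soak(
    samples, soak_hours=24.0, max_flaps=0, max_new_errors=0, max_rx_drift_db=1.0
):
    """Return (passed, reasons) for one module's soak record.

    Drift is measured against the mean received power of the first hour,
    so a module that starts well and degrades later is rejected.
    """
    if not samples:
        raise ValueError("no soak samples")
    reasons = []
    start = samples[0].hour
    if samples[-1].hour - start < soak_hours:
        reasons.append("soak window too short")
    # Count up-to-down transitions seen between consecutive polls.
    flaps = sum(
        1 for prev, cur in zip(samples, samples[1:])
        if prev.link_up and not cur.link_up
    )
    if flaps > max_flaps:
        reasons.append(f"{flaps} link flap(s)")
    new_errors = samples[-1].rx_errors - samples[0].rx_errors
    if new_errors > max_new_errors:
        reasons.append(f"{new_errors} new interface errors")
    baseline = mean([s.rx_dbm for s in samples if s.hour - start <= 1.0])
    drift = max(abs(s.rx_dbm - baseline) for s in samples)
    if drift > max_rx_drift_db:
        reasons.append(f"rx power drift {drift:.2f} dB")
    return (not reasons, reasons)
```

Because flaps are inferred from polled state, a flap shorter than the polling interval is invisible here; in practice you would also compare the switch's own link-transition counter at the start and end of the soak.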

Measured results: what improved after the xhaul transceiver selection change

After we replaced the initial mixed optics set with the validated 10G SR family and enforced DOM visibility, the network stabilized quickly. In the first week, we observed a major reduction in interface flaps and a shorter mean time to recovery during maintenance.

Before vs after (our rollout)

- Link flaps: reduced from frequent intermittent events to near-zero during normal operations.

- Mean time to restore: dropped from multiple-hour escalations to a controlled replacement workflow using DOM-guided identification.

- Truck rolls: decreased because we identified degrading optics by received power trends instead of waiting for hard alarms.

- Monitoring coverage: DOM telemetry became consistent across the optics set, enabling faster correlation with RU sync logs.

We also learned that the “best” module on paper is not always the best in the rack. A compatible xhaul transceiver with slightly more conservative margins can outperform a higher-spec module that is electrically or thermally stressed in your specific cage airflow.

Common mistakes and troubleshooting tips from the field

If you want fewer surprises, avoid these failure modes. Each one reflects a root cause we encountered during the Open RAN fronthaul xhaul transceiver selection process.

- Mistake: Selecting optics purely by rated reach without verifying plant attenuation and connector loss.

  Root cause: Real fiber runs include patch panel insertion loss, hidden splices, and aging effects that reduce optical power margin.

  Fix: Use measured attenuation per span, then validate with DOM received power after installation.

- Mistake: Using modules that link but do not provide consistent DOM telemetry.

  Root cause: Switch firmware expectations differ, and some optics present DOM fields in a way the monitoring stack cannot parse.

  Fix: Run a DOM polling test in the lab with the exact switch model and the exact monitoring template before field deployment.

- Mistake: Ignoring airflow and thermal concentration near pluggables.

  Root cause: Temperature rises can push the module into a less stable operating region, amplifying marginal optical conditions.

  Fix: Monitor module temperature via DOM during peak load and adjust airflow baffles or spacing if temperatures cluster near the upper range.

- Mistake: Treating connector cleanliness as optional during “minor” maintenance.

  Root cause: Repatching can reintroduce contamination, and 850 nm links are especially sensitive to small power penalties.

  Fix: Always inspect and clean LC endfaces before swapping optics; keep a documented cleaning workflow for technicians.

Cost and ROI: balancing OEM optics, third-party SFPs, and downtime risk

Price swings are real, but ROI is driven by uptime and operational labor. In enterprise and telecom deployments, OEM optics usually cost more per unit, while third-party xhaul transceiver options can reduce procurement spend. However, the total cost of ownership depends on compatibility risk, warranty terms, and your ability to troubleshoot quickly.

Typical street pricing (ballpark) for 10G SR SFP optics often lands around $30 to $120 per module depending on OEM branding, DOM quality, and warranty. If a cheaper module creates intermittent flaps, the labor cost of repeated troubleshooting and the operational impact on RU sync can erase procurement savings quickly. In our case, the most cost-effective approach was “validated third-party at scale” after compatibility and DOM tests proved stability.
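The trade-off between procurement savings and troubleshooting labor can be sketched as a break-even count of incidents. The function and every input other than the price ballpark above are hypothetical; substitute your own labor rates and truck-roll costs.

```python
def break_even_incidents(
    modules,
    oem_price,
    third_party_price,
    hours_per_incident,
    hourly_rate,
    truck_roll_cost,
):
    """Number of flap incidents at which labor cost equals procurement savings."""
    savings = modules * (oem_price - third_party_price)
    cost_per_incident = hours_per_incident * hourly_rate + truck_roll_cost
    if cost_per_incident <= 0:
        raise ValueError("cost per incident must be positive")
    return savings / cost_per_incident
```

As an illustration only: 200 modules at $30 instead of $120 saves $18,000, and if each incident costs 4 hours at $100 per hour plus a $400 truck roll, the savings are gone after 22.5 incidents. The figure ignores the operational impact of lost RU sync, so the practical break-even point is lower.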

FAQ

What makes an xhaul transceiver “O-RAN compliant” for fronthaul?

Optics do not implement the full O-RAN stack, but they must support stable fronthaul transport characteristics: correct PHY electrical behavior, adequate optical budget, and reliable telemetry. In practice, compliance is achieved by selecting optics that work with your RU and DU vendor requirements and by validating DOM and link stability under real temperature and fiber conditions.

Is 850 nm 10G SR always the best choice for fronthaul?

For short-to-medium reach, 850 nm SR is often practical because it is widely supported and cost-effective. But if your spans exceed what your fiber plant can reliably support with margin, you may need alternative reach classes or different optics technology. Always validate using measured plant attenuation and DOM readings after installation.

Do I really need DOM support on SFP optics?

Yes for operational visibility. DOM enables you to correlate received power, bias, and temperature with RU sync events and link flaps, which shortens troubleshooting cycles. Without DOM, you may still get links up, but you lose the early warning signals that prevent outages.

Will third-party xhaul transceivers work across mixed switch vendors?

They can, but compatibility is not universal. Switch firmware and electrical interface expectations vary, so you must test against the exact switch model and confirm both link up and DOM parsing. Vendor compatibility matrices and lab validation are essential before bulk rollout.

How do I troubleshoot intermittent fronthaul link flaps quickly?

Start with DOM received power trends and module temperature during the flap window. Then inspect and clean LC connectors end-to-end, and verify patch panel insertion loss and fiber path correctness. If the flaps correlate with a specific span, treat it as an optical budget or contamination issue before changing switch or RU configuration.
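The first triage step can be sketched as a lookup of DOM samples in the window before each flap. The sample tuple format, the label strings, and the thresholds you pass in are assumptions for illustration.

```python
def classify_flap(
    flap_time_s, dom_samples, rx_floor_dbm, temp_ceiling_c, window_s=300
):
    """Label one flap by what DOM showed in the window leading up to it.

    dom_samples: list of (time_s, rx_dbm, temp_c) tuples (hypothetical format).
    """
    window = [
        s for s in dom_samples
        if flap_time_s - window_s <= s[0] <= flap_time_s
    ]
    if not window:
        return "no-dom-data"
    low_rx = any(rx_dbm <= rx_floor_dbm for _, rx_dbm, _ in window)
    hot = any(temp_c >= temp_ceiling_c for _, _, temp_c in window)
    if low_rx and hot:
        return "optical-and-thermal"
    if low_rx:
        return "optical"
    if hot:
        return "thermal"
    return "no-optical-cause"
```

Flaps labeled `optical` go to connector inspection and cleaning first; only flaps labeled `no-optical-cause` justify looking at switch or RU configuration. A `no-dom-data` result usually means the polling interval is too coarse for the window chosen.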

What standards should I reference when selecting SFP optics?

For physical-layer expectations, reference IEEE 802.3 for 10G Ethernet behavior and vendor transceiver datasheets for electrical and optical limits. For your specific Open RAN fronthaul implementation, also reference the RU and DU vendor deployment guidance and your switch vendor optics compatibility documentation.

If you want fewer rollout surprises, treat xhaul transceiver selection as a measured engineering workflow: confirm optical budget with real fiber data, validate DOM and switch compatibility, then enforce connector hygiene and thermal margin. Next step: review the related topics on fiber optic link budgets for 10G SR and on DOM-based acceptance tests, then build a repeatable acceptance template for your future sites.

Author bio: I have deployed optical pluggables in real telecom racks, validating DOM telemetry, optical budgets, and switch compatibility during fronthaul cutovers. I write field-first workflows that reduce downtime by turning optics selection into measurable acceptance criteria.

Sources: [Source: IEEE 802.3], [Source: Cisco transceiver datasheets], [Source: Finisar transceiver datasheets], [Source: FS.com transceiver datasheets], [Source: switch vendor SFP/SFP+ compatibility guides].