Long-distance fiber links fail in predictable ways: wrong wavelength pairing, marginal optical power budgets, incompatible DOM, or temperature stress that shows up only after weeks. This guide helps network engineers and field teams choose a telecom transceiver that can actually hold link stability over distance. You will get a practical selection checklist, a specs comparison table, common failure modes, and a short FAQ for buying decisions.

What “telecom-grade” really means for long-distance optics

For long-haul and metro environments, a “telecom-grade” transceiver usually implies tighter process control, better optical stability, and more predictable aging than the lowest-cost enterprise modules. In practice, buyers verify this through vendor datasheets (optical output power, receiver sensitivity, OSNR assumptions), qualification notes, and the presence of management features like Digital Optical Monitoring (DOM). The goal is repeatability under real plant conditions: connector loss, splice variability, temperature cycling, and aging of lasers.

Standards and what to validate

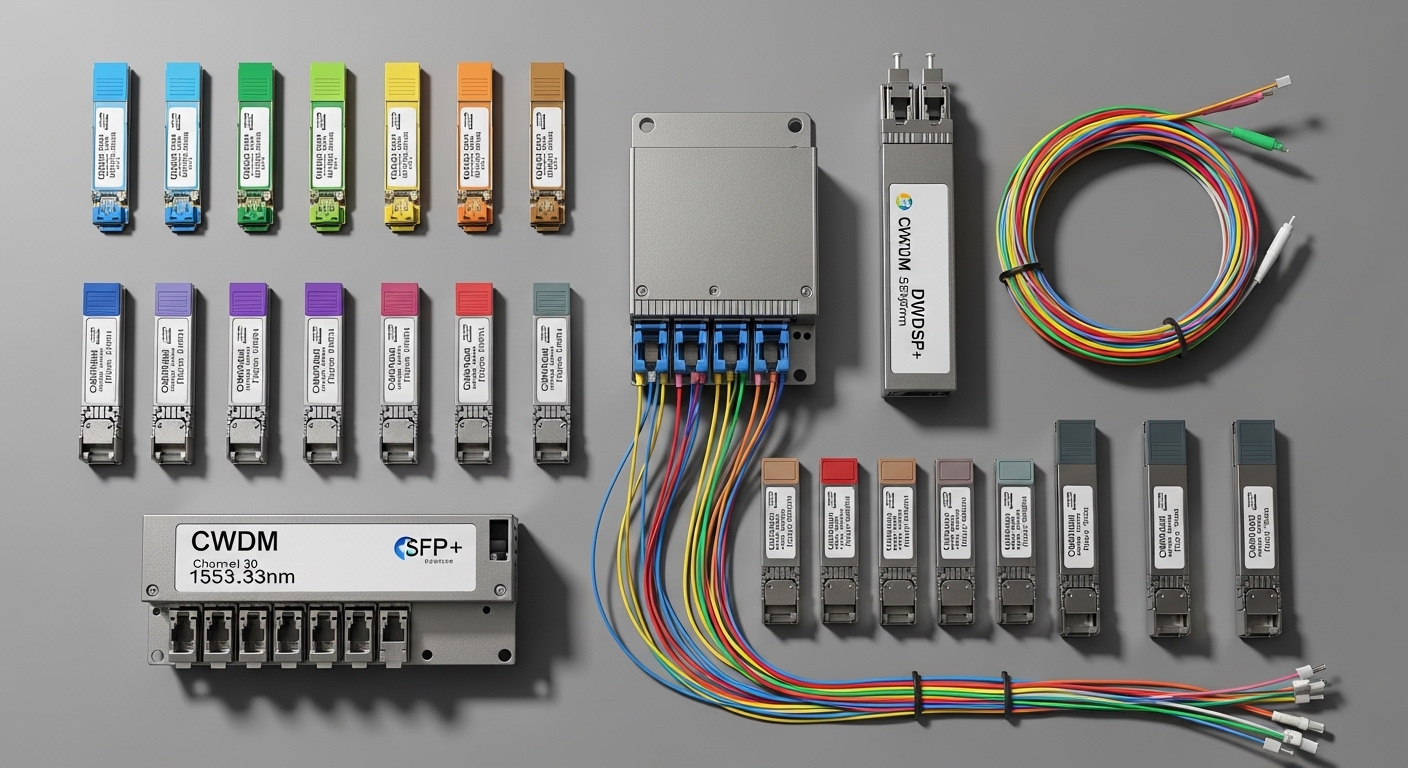

Most transceivers you buy for Ethernet transport align to IEEE 802.3 physical layer definitions (for example, 10GBASE-LR/ER or 100GBASE-LR4, depending on the rate). For telecom optics, the key is that the module must match the interface type your switch or router expects: electrical lane mapping, modulation format, and optical interface (single-mode fiber, wavelength band, and connector type). For wavelength-division multiplexing systems, you also need to align with your WDM plan and channel grid (for dense systems, misalignment can look like “random packet loss”).

DOM and why it matters in operations

DOM is often the difference between “it works today” and “we can diagnose why it degraded.” Field teams typically poll DOM via the switch CLI or telemetry stack to track Tx bias current, Tx/Rx power, and module temperature. When a link starts failing late at night or during cold snaps, DOM trends frequently reveal whether the issue is optical power drift, aging, or fiber damage.

Pro Tip: Many “mystery” long-link failures are not total optical power problems; they are budget erosion caused by aging lasers plus connector micro-loss. If your vendor exposes DOM, compare historical Tx bias and Rx power trends before declaring fiber faults. A healthy module often shows slow drift; a sudden step change usually indicates a connector or patch panel issue.
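The drift-versus-step distinction can be sketched in a few lines. This is a minimal illustration, assuming you already export Rx power history in dBm (oldest sample first); the function name `classify_rx_trend` and the 1.0 dB and 0.5 dB thresholds are hypothetical and should be tuned to your own plant.

```python
def classify_rx_trend(rx_dbm, step_db=1.0, drift_db=0.5):
    """Label an Rx power history (dBm, oldest first) as 'step', 'drift', or 'stable'.

    step_db and drift_db are illustrative thresholds, not vendor values.
    """
    if len(rx_dbm) < 2:
        return "stable"
    # Largest loss between two consecutive samples (positive means power dropped).
    biggest_drop = max(a - b for a, b in zip(rx_dbm, rx_dbm[1:]))
    # Net loss across the whole observation window.
    total_drop = rx_dbm[0] - rx_dbm[-1]
    if biggest_drop >= step_db:
        return "step"    # sudden change: suspect connector or patch panel
    if total_drop >= drift_db:
        return "drift"   # gradual change: suspect aging or slow contamination
    return "stable"


print(classify_rx_trend([-8.0, -8.1, -8.0, -10.4, -10.5]))
print(classify_rx_trend([-8.0, -8.2, -8.4, -8.6, -8.8]))
```

The first history contains a 2.4 dB jump between adjacent samples and is labeled a step; the second loses 0.8 dB in small increments and is labeled drift.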

Key specs that determine long-distance performance

Long-distance success is a math problem plus operational constraints. You need to match data rate, wavelength, reach, and optical power budget to your fiber plant, accounting for connector and splice losses. Then verify the transceiver meets the temperature range and DOM requirements of your platform.

Representative telecom transceiver specs (what to compare)

Below is a comparison across common telecom-grade single-mode optics. Exact values vary by vendor and part number; always verify the datasheet for your specific module.

| Module example | Data rate | Wavelength | Typical reach | Connector | DOM | Operating temp |
|---|---|---|---|---|---|---|
| Cisco SFP-10G-SR (for contrast) | 10G | 850 nm (MMF) | ~300 m class | LC | Varies by platform | 0 to 70 °C typical |
| 10GBASE-ER class SFP+ (part number is vendor-dependent) | 10G | ~1550 nm (SMF) | ~40 km class | LC | Yes (commonly) | -5 to 70 °C typical |
| FS.com SFP-10GSR-85 (for contrast) | 10G | 850 nm (MMF) | ~400 m class | LC | Often yes | -5 to 70 °C typical |
| Common 25G LR/ER and 100G LR4/ER4 SMF optics (vendor-dependent) | 25G or 100G | ~1310 nm band (format-dependent) | 10 km to 40 km class | LC | Yes (many) | -5 to 70 °C typical |

Notice how the “contrast” rows include short-reach optics to highlight the trap: an SFP-10G-SR class module is not a long-distance telecom transceiver, even if it fits physically. For long-distance, you typically need single-mode fiber (SMF) and wavelengths in the 1310 nm or 1550 nm region, plus an optic type that matches your transport rate and modulation.

Power budget and receiver sensitivity (the real gating factor)

Datasheets typically provide transmit power range and receiver sensitivity for a specified BER target (often 1E-12 or similar). You compare these against your fiber plant loss: fiber attenuation (dB/km), plus splice loss and connector loss. If you are close to the edge, the link may come up but fail under temperature extremes or after aging.
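A minimal sketch of that comparison follows. It assumes the worst-case (minimum) Tx power and the receiver sensitivity come from the datasheet; the function name `link_margin_db`, the penalty term, and every number in the example are placeholders for illustration, not values for any real module.

```python
def link_margin_db(tx_min_dbm, rx_sensitivity_dbm, plant_loss_db, penalty_db=0.0):
    """Worst-case margin in dB: power budget minus plant loss and penalties."""
    # Power budget is the gap between the weakest guaranteed launch power
    # and the weakest signal the receiver can handle at the target BER.
    power_budget_db = tx_min_dbm - rx_sensitivity_dbm
    return power_budget_db - plant_loss_db - penalty_db


# Hypothetical datasheet and plant values, for illustration only.
margin = link_margin_db(tx_min_dbm=-1.0, rx_sensitivity_dbm=-14.0,
                        plant_loss_db=10.0, penalty_db=1.0)
required_margin_db = 3.0  # assumed engineering margin for aging and repairs
print(f"margin = {margin:.1f} dB, ok = {margin >= required_margin_db}")
```

With these placeholder numbers the budget is 13 dB and the remaining margin is 2 dB, which falls short of the assumed 3 dB target: the kind of link that comes up on day one and then fails at temperature extremes.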

DOM thresholds and alarm behavior

Some vendors expose alarm thresholds that are conservative; others let you read values but not configure thresholds. In long-distance operations, you want visibility into at least: module temperature, Tx bias current, Tx optical power, and Rx optical power. If your switch firmware logs DOM events, you can correlate alarms with environmental changes.
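When the platform only lets you read values, the threshold comparison can live in your own tooling. The sketch below assumes DOM readings have already been collected into a dictionary; the field names and the (low, high) limits are hypothetical and do not come from any particular switch or module.

```python
def dom_alarms(readings, thresholds):
    """Return the DOM fields whose value falls outside its (low, high) limits."""
    alarms = []
    for field, value in readings.items():
        low, high = thresholds[field]
        if value < low or value > high:
            alarms.append(field)
    return alarms


# Hypothetical limits and one polled sample, for illustration only.
thresholds = {
    "temperature_c": (-5.0, 70.0),
    "tx_bias_ma": (10.0, 90.0),
    "tx_power_dbm": (-4.0, 4.0),
    "rx_power_dbm": (-14.0, 1.0),
}
sample = {
    "temperature_c": 41.0,
    "tx_bias_ma": 38.0,
    "tx_power_dbm": 0.5,
    "rx_power_dbm": -16.2,
}
print(dom_alarms(sample, thresholds))
```

Here only the Rx power reading is outside its limits, so it is the single field reported.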

Real-world deployment: metro ring with 40 km spans

Consider a metro ring topology connecting two aggregation sites with a pair of 100G links over SMF. Each span is 38 km of SMF plus 6 splices and 2 patch-panel connectors per direction. Engineers budget 0.20 dB/km fiber loss, 0.10 dB per splice, and 0.50 dB per connector, totaling about 9.2 dB plant loss (38 km * 0.20 = 7.6 dB of fiber, 6 * 0.10 = 0.6 dB of splices, 2 * 0.50 = 1.0 dB of connectors). They select a telecom transceiver rated for the same wavelength band and a reach class that supports the required optical margin, with DOM enabled for monitoring.
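The per-element figures in this example can be summed with a short sketch. The function name `plant_loss_db` is illustrative; the inputs are the span length, splice count, connector count, and unit losses stated in the scenario.

```python
def plant_loss_db(length_km, fiber_db_per_km, splices, splice_db,
                  connectors, connector_db):
    """Total one-way plant loss in dB: fiber + splices + connectors."""
    fiber = length_km * fiber_db_per_km
    splice = splices * splice_db
    connector = connectors * connector_db
    return fiber + splice + connector


# 38 km at 0.20 dB/km, 6 splices at 0.10 dB, 2 connectors at 0.50 dB.
loss = plant_loss_db(38, 0.20, 6, 0.10, 2, 0.50)
print(f"{loss:.1f} dB")  # prints "9.2 dB" (7.6 fiber + 0.6 splices + 1.0 connectors)
```

Keeping the three terms separate makes it easy to rerun the sum with worst-case splice or connector values before choosing a reach class.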

During acceptance testing, the team confirms link stability at both ends using BER counters (on the switch/router optics page) and verifies DOM telemetry. Two weeks later, a cold snap drops chassis temperature and the module temperature readings shift. If Tx bias moves with temperature while Tx and Rx power stay flat, the module is compensating normally and is healthy. If Rx power drops sharply while the far-end Tx bias and Tx power stay steady, the likely root cause is connector micro-loss or fiber damage, not “mysterious network congestion.”

Selection criteria checklist for telecom transceiver buys

Use this ordered checklist to avoid last-mile surprises.

- Distance and link budget: Confirm fiber type, attenuation (dB/km), splice count and loss, connector loss, and required optical margin. Do not rely only on “reach” marketing.

- Wavelength and fiber compatibility: Match the module wavelength to SMF plant. Ensure you are not accidentally buying an MMF short-reach optic (common procurement mistake).

- Data rate and interface type: Verify the transceiver supports your exact rate and electrical interface (SFP+/QSFP28/QSFP-DD depending on platform).

- Switch or router compatibility: Check vendor compatibility lists and transceiver form factor support. Some platforms enforce vendor IDs or require specific EEPROM layouts.

- DOM support and telemetry mapping: Confirm the platform reads DOM fields. Validate alarms you can actually view in your ops tooling.

- Operating temperature range: For outdoor huts, confirm a telecom transceiver supports the minimum ambient temperature at the module location. Heat inside cabinets can exceed ambient.

- Vendor lock-in risk: Decide whether you can tolerate switch vendor authentication behavior. If you use third-party optics, plan for a controlled rollout and test with spares.

- Optical safety and power levels: Ensure Tx power class and connector cleanliness match your plant. High-power optics plus dirty connectors can accelerate failure.

Common mistakes and troubleshooting tips

Field failures are usually repeatable. Here are the most common telecom transceiver pitfalls with root cause and fixes.

Link comes up but errors spike after temperature changes

Root cause: Optical margin is too tight or the transceiver is operating near a sensitivity edge. Temperature affects laser output and receiver characteristics. Solution: Recalculate the optical budget with worst-case loss, then replace with a module that provides more margin (better receiver sensitivity or higher Tx power within spec).

“No link” or flapping immediately after install

Root cause: Wrong wavelength or wrong fiber type (MMF vs SMF) or swapped Tx/Rx fibers. This is especially common during patch panel rework. Solution: Verify the transceiver wavelength band, confirm SMF use, and perform a fiber polarity check. Clean connectors and inspect endfaces with a scope.

DOM shows alarming temperatures but link performance is inconsistent

Root cause: The module is not seated correctly or airflow is restricted. Some chassis have port-level thermal constraints that are sensitive to blocked vents. Solution: Reseat the module, check fan status, and verify that nearby optics are not causing localized heating. Compare DOM temperature trends between ports.

Third-party optics work in the lab but fail under production authentication

Root cause: Platform enforces EEPROM/vendor checks or expects a specific DOM capability set. Solution: Validate with your exact switch/router model and firmware version. Roll out in phases using a canary pair and keep OEM spares for fallback.

Slow degradation: increasing error rates over months

Root cause: Connector or splice contamination, or aging of optics under higher-than-expected stress. Solution: Use DOM trend analysis. If Rx power falls while the far-end Tx bias and Tx power stay steady, inspect and clean the connectors along the path. If Tx bias climbs steadily over months (the laser is working harder to hold its output), plan a preventive replacement during the next maintenance window.

Cost and ROI: what to budget for long-distance optics

Pricing depends heavily on data rate, wavelength band, and vendor. As a rough range, single-mode 10G telecom transceivers often fall in the tens to low hundreds of dollars per module (OEM tends higher; third-party can be lower). For higher rates like 25G and 100G, module cost can rise significantly, and the total cost of ownership is dominated by downtime risk and spares strategy.

ROI comes from avoiding truck rolls and extended outages. If DOM telemetry reduces mean time to repair (MTTR) from, say, 4 hours to 45 minutes during optical incidents, the savings can quickly outweigh a higher unit cost. However, third-party optics can increase compatibility risk; treat that as an engineering cost and manage it the way you would validate any new product: run small pilots, measure outcomes, and scale only when failure rates are acceptable.
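The MTTR argument reduces to simple arithmetic. In the sketch below, the 4 hour and 45 minute figures come from the example above; the incident count and the cost per outage hour are placeholders you would replace with your own numbers, and the function name is illustrative.

```python
def annual_mttr_savings(mttr_before_h, mttr_after_h,
                        incidents_per_year, cost_per_outage_hour):
    """Yearly savings from shorter repairs: hours saved times hourly outage cost."""
    hours_saved = (mttr_before_h - mttr_after_h) * incidents_per_year
    return hours_saved * cost_per_outage_hour


# 4 h -> 0.75 h per incident; 6 incidents/year and 500 per hour are assumed values.
savings = annual_mttr_savings(4.0, 0.75, incidents_per_year=6,
                              cost_per_outage_hour=500.0)
print(f"{savings:.0f} per year")
```

Each incident saves 3.25 hours, so under these assumed inputs the yearly figure is 9750 in whatever currency the hourly cost is expressed; compare that against the price premium across the modules you plan to buy.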

FAQ

How do I know if my telecom transceiver is truly long-distance capable?

Check the datasheet for single-mode wavelength and a reach class that matches your fiber loss and link budget. “Reach” alone is insufficient; you must confirm optical power budget, receiver sensitivity, and required margin for your BER target.

Can I mix vendors for telecom transceivers on the same link?

Often yes if the modules match the same interface standard and wavelength, and the switch accepts both. In practice, mixing can introduce DOM/alarm differences; validate with a controlled test before deploying broadly.

What DOM readings are most useful during long-link troubleshooting?

Track Tx bias current, Tx optical power, Rx optical power, and module temperature. Sudden Rx power drops with stable temperature often point to connector or fiber issues; gradual drift can indicate aging or slow contamination.

Is an LR or ER telecom transceiver always the right choice?

Not always. LR/ER are naming conventions that map to reach classes, but your plant loss and margin determine success. Select based on your calculated dB budget and the module’s stated transmit/receive characteristics for your exact rate.

What temperature range should I require for outdoor or harsh environments?

Require the module’s specified operating temperature range that covers the lowest expected ambient at the module location, not just the cabinet average. Also consider airflow and heat soak; some failures show up only when the chassis runs hot.

What is the fastest way to isolate fiber vs transceiver faults?

Swap transceivers in a known-good port pair and use DOM trend comparison. If the problem follows the transceiver, replace it; if the issue stays on the fiber path, inspect and test the patch panel, connectors, and splices.

If you want to move from “module installed” to “link guaranteed,” start with a budget-based selection and validate with DOM telemetry. Next, use your optical power budget and DOM metrics to standardize your acceptance test so every long-distance telecom transceiver behaves predictably in production.

Author bio: I build and deploy early networking products and obsess over PMF through rapid field validation, including optics acceptance tests and telemetry-driven MTTR reduction. I write from hands-on experience working with real switch platforms, DOM data, and fiber plant constraints.