In Optical Transport SDN deployments, the wrong T-SDN transceiver can break automation workflows, cause link flaps, or silently increase error rates. This article helps network and field engineers choose transceivers that behave predictably with transport controllers, optical line systems, and monitoring stacks. You will get practical selection steps, a spec comparison table, and troubleshooting patterns seen in production.

How a T-SDN transceiver enables Transport SDN automation

Transport SDN automation relies on a closed loop: the controller provisions optics, validates link health, and updates policies based on telemetry. A T-SDN transceiver is typically expected to provide standards-based diagnostics (for example, digital optical monitoring and temperature/laser bias current reporting) so the controller can confirm reach, power budget margins, and safety constraints. In many environments, the automation workflow also expects deterministic behavior around link state transitions and vendor-specific feature flags.

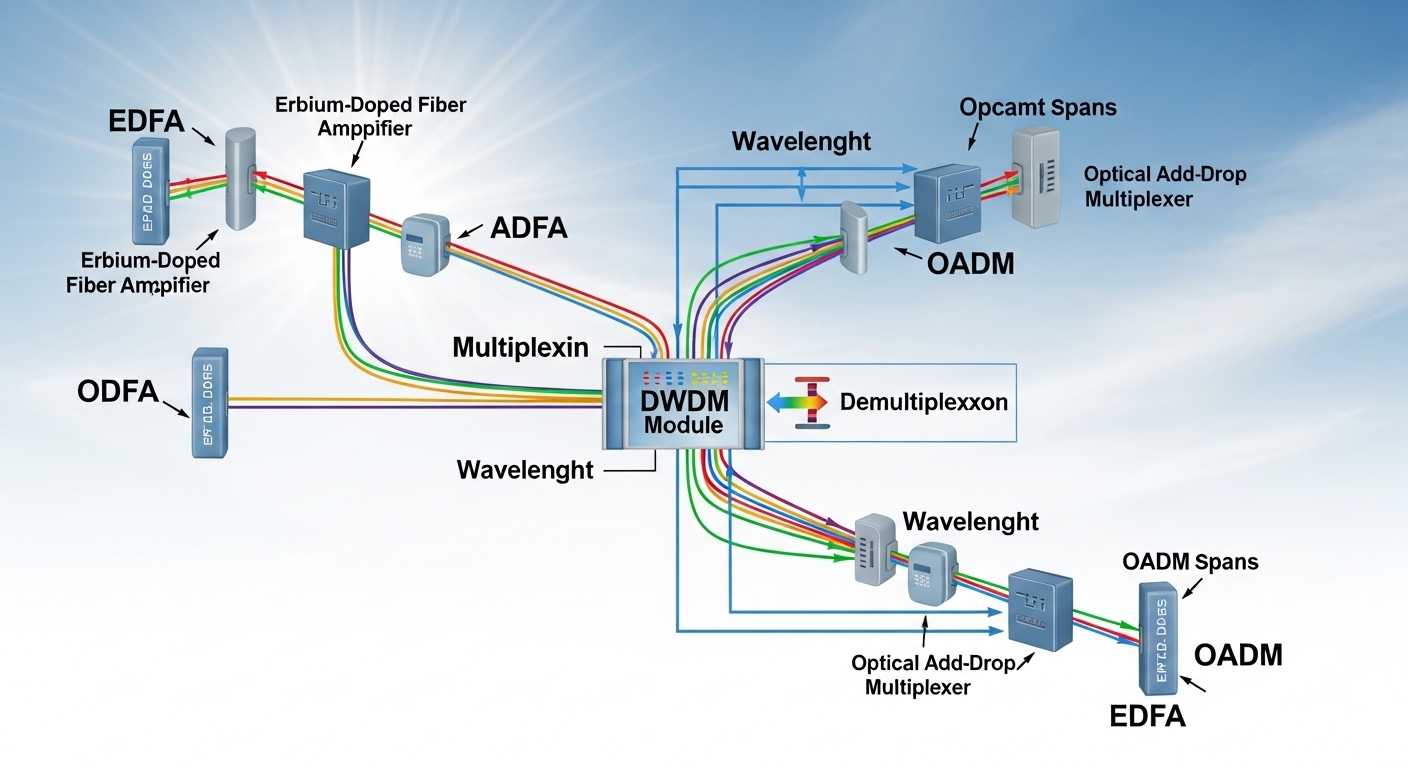

From an engineering standpoint, you are aligning three layers: (1) optical layer compatibility (wavelength, modulation format, fiber type, connector), (2) electrical and management behavior (module presence, LOS/LOF, DOM telemetry, alarm thresholds), and (3) operational guardrails (temperature, laser safety, and vendor compliance). IEEE 802.3 defines the Ethernet optical interfaces, while module diagnostics (Digital Optical Monitoring, DOM) follow the module management specifications, such as SFF-8472 for SFP+ and SFF-8636 for QSFP28, together with the vendor datasheet. For transport networks, the controller may also coordinate with OTN/ROADM systems, making module determinism even more important.

What automation controllers usually verify

- DOM telemetry availability (temperature, supply voltage, laser bias current, transmitted and received optical power).

- Link training and state stability after insertion and during provisioning changes.

- Optical power within vendor-specified ranges to protect receivers and meet OSNR or sensitivity assumptions.

- DOM alarm thresholds that match the controller’s alert logic so events are not missed or over-triggered.
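As a rough illustration of the checks above, the sketch below compares one DOM reading against alarm limits and reports missing or out-of-range fields. The field names and the numeric limits are assumptions made for this example; real limits come from the module datasheet or the thresholds the module itself reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    low: float
    high: float


# Hypothetical limits for illustration only; use the datasheet values in practice.
THRESHOLDS = {
    "temperature_c": Threshold(0.0, 70.0),
    "vcc_v": Threshold(3.13, 3.47),
    "tx_bias_ma": Threshold(2.0, 12.0),
    "rx_power_dbm": Threshold(-9.9, 0.5),
}


def check_dom(reading: dict) -> list[str]:
    """Return a list of problems; an empty list means every field is in range."""
    problems = []
    for name, limit in THRESHOLDS.items():
        value = reading.get(name)
        if value is None:
            # A field the controller consumes is absent from the reading.
            problems.append(f"{name}: missing")
        elif not limit.low <= value <= limit.high:
            problems.append(f"{name}: {value} outside [{limit.low}, {limit.high}]")
    return problems
```

A missing field is reported separately from an out-of-range one, because the two usually need different follow-up: the first points at telemetry collection, the second at the optical or thermal plant.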

Key specifications that matter in Transport SDN optical planning

Even when your transport controller is “SDN-ready,” optics can still be the bottleneck. Engineers typically start from distance and link budget, then confirm that the module’s wavelength and reach match the fiber and receiver assumptions. In practice, you also need to account for connector cleanliness, aging effects, and how much margin you can afford under real plant variability.

| Parameter | Example 10G SR (short reach) | Example 100G SR4 (short reach) | Example 100G LR4 (long reach) |
|---|---|---|---|
| Typical data rate | 10G | 100G (4 × 25G lanes) | 100G (4 × 25G lanes) |
| Wavelength | 850 nm | 850 nm | Four LAN-WDM lanes near 1310 nm |
| Reach (typical) | Up to 300 m on OM3, 400 m on OM4 | Up to 70 m on OM3, 100 m on OM4 | Up to 10 km on SMF |
| Fiber type | OM3/OM4 multimode | OM3/OM4 multimode | Single-mode fiber (SMF) |
| Connector | LC duplex | MPO-12 (MTP) | LC duplex |
| Form factor | SFP+ | QSFP28 | QSFP28 |
| DOM / monitoring | Commonly supported | Commonly supported | Commonly supported |
| Operating temperature | Often 0 to 70 °C (varies by vendor) | Often 0 to 70 °C (varies by vendor) | Often -5 to 70 °C or 0 to 70 °C (varies) |

Field reality: the reach numbers above are “typical” and assume clean connectors and specified fiber. Your controller’s automation may succeed in provisioning but still fail at scale if patch loss, MPO polarity errors, or thin plant margins are not reflected in planning. Consult both the transceiver datasheet and the optics budget model used by your optical team.
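To make the budget reasoning concrete, here is a minimal link margin sketch. It uses the usual simplification that margin equals transmit power minus receiver sensitivity minus the summed plant losses. All numbers in the example call are hypothetical worst-case values chosen for illustration, not datasheet figures for any specific module.

```python
def link_margin_db(
    tx_power_dbm: float,
    rx_sensitivity_dbm: float,
    fiber_km: float,
    fiber_loss_db_per_km: float,
    connectors: int,
    loss_per_connector_db: float,
    splices: int = 0,
    loss_per_splice_db: float = 0.1,
    aging_penalty_db: float = 0.0,
) -> float:
    """Return remaining margin in dB; negative means the budget is exceeded."""
    power_budget = tx_power_dbm - rx_sensitivity_dbm
    plant_loss = (
        fiber_km * fiber_loss_db_per_km
        + connectors * loss_per_connector_db
        + splices * loss_per_splice_db
        + aging_penalty_db
    )
    return power_budget - plant_loss


# Hypothetical worst-case inputs for a long-reach single-mode link.
margin = link_margin_db(
    tx_power_dbm=-5.0,
    rx_sensitivity_dbm=-11.0,
    fiber_km=8.0,
    fiber_loss_db_per_km=0.4,
    connectors=4,
    loss_per_connector_db=0.5,
    aging_penalty_db=0.5,
)
print(f"worst-case margin: {margin:.1f} dB")
```

With these assumed inputs the budget is 6.0 dB and the plant consumes 5.7 dB, so the link provisions but carries only about 0.3 dB of margin. That is the kind of link that passes turn-up and degrades later.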

For concrete examples you may see in deployments, look at vendor catalog parts such as Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, and FS.com SFP-10GSR-85. Always verify that the exact module variant matches your switch or ROADM vendor’s compatibility matrix and that DOM behavior aligns with your monitoring platform. For interface fundamentals, see IEEE 802.3 for optical PHY expectations and link behavior.

Pro Tip: In Optical Transport SDN automation, treat DOM “presence” as separate from DOM “telemetry quality.” Some modules report valid temperature and voltage but intermittently fail received power reads under thermal cycling, which can mislead controller health checks and trigger unnecessary re-provisioning.
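One way to express that distinction is to score received power reads separately from module presence. The sketch below is an assumption-laden illustration: the function names, the 95% quality bar, and the -40 dBm floor (used here as a stand-in for "no usable reading") are hypothetical choices, not values from any controller.

```python
import math


def rx_read_quality(samples, floor_dbm: float = -40.0) -> float:
    """Fraction of samples that are usable received power readings."""
    if not samples:
        return 0.0
    valid = sum(
        1
        for s in samples
        # None or NaN means the read failed; at or below the floor is treated as unusable.
        if s is not None and not math.isnan(s) and s > floor_dbm
    )
    return valid / len(samples)


def dom_status(present: bool, samples, min_quality: float = 0.95) -> str:
    """Classify a module as absent, trusted, or present with unreliable telemetry."""
    if not present:
        return "absent"
    if rx_read_quality(samples) >= min_quality:
        return "trusted"
    return "present-but-unreliable"
```

Keeping a third state lets the health check hold off on re-provisioning when the module is present but its readings are intermittently failing.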

Deployment scenario: leaf-spine automation with mixed optics

Consider a data center fabric with 48-port 10G ToR switches at the leaf layer, 100G uplinks to the spine, an aggregation tier above the spines, and an automation controller that configures routes and validates optical health at each change window. In one rollout, the team used 10G SR for server access (SFP+ over OM4) and 100G LR4 (QSFP28 over SMF) for the longer spine-to-aggregation segments. The controller expected DOM telemetry within 5 seconds of link-up and flagged any sustained received power below a threshold.

During commissioning, they measured patch loss variation between racks: connector cleanliness and aging drove a difference of roughly 1.0 to 2.5 dB between “best” and “worst” corridors. With a controller policy that assumed a tighter margin, some links stayed provisioned but degraded, raising error counters and forcing manual intervention. After updating the controller’s threshold model using the measured plant loss and standardizing connector cleaning procedures, automation stabilized and rollbacks decreased.
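The "sustained received power below a threshold" rule from this scenario can be sketched as a small function over time-stamped samples. The function name, the sample format, and the hold time are assumptions for illustration; the actual controller logic was not published.

```python
def sustained_low_power(samples, threshold_dbm: float, hold_s: float) -> bool:
    """Return True if received power stays below the threshold for at least hold_s.

    samples: iterable of (timestamp_seconds, rx_power_dbm), sorted by time.
    """
    low_since = None
    for timestamp, rx_dbm in samples:
        if rx_dbm < threshold_dbm:
            if low_since is None:
                low_since = timestamp  # start of a continuous low-power run
            if timestamp - low_since >= hold_s:
                return True
        else:
            low_since = None  # any recovery resets the run
    return False
```

Requiring a continuous run filters out single bad reads, and the threshold itself is the value that should be rebuilt from measured plant loss rather than from nominal figures.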

Selection criteria checklist for a reliable T-SDN transceiver

When you choose a T-SDN transceiver, you are minimizing variability so the SDN controller can trust the optics layer. Work through this checklist in order, like a field standard, especially when you mix vendors or optics grades.

- Distance and link budget: confirm reach for your exact fiber type (OM3 vs OM4, SMF type) and patch loss. Include worst-case splice and connector loss.

- Wavelength and optical interface standard: match 850 nm vs 1310 nm/1550 nm to the planned PHY and receiver sensitivity assumptions.

- Switch and chassis compatibility: verify the exact module part number against the host vendor’s optics compatibility list and firmware constraints.

- DOM support and telemetry semantics: ensure your transceiver provides the diagnostic fields your SDN controller consumes, including alarm thresholds.

- Operating temperature and airflow: confirm the module’s temperature range and derate behavior under your rack thermal conditions.

- Connector and polarity constraints: LC duplex vs MPO/MTP polarity and keying; confirm the patch panel and transceiver wiring plan.

- Vendor lock-in risk and spares strategy: third-party optics may work, but verify long-term availability and that DOM behavior stays consistent across batches.

- Compliance and safety: confirm laser class and regulatory compliance per your facility requirements and local standards.

Common mistakes and troubleshooting patterns

Below are failure modes I have seen during real optical automation rollouts. Each includes the root cause and a practical fix.

Link up, but automation keeps reprovisioning

Root cause: DOM alarms or received power telemetry are inconsistent, so the controller’s health logic interprets the link as “unstable.” This can happen after thermal cycling or when the module’s diagnostics mapping differs from what the controller expects.

Solution: Compare raw DOM telemetry fields against your controller’s ingestion schema, validate alarm thresholds, and test the module under realistic airflow. Confirm that the module reports received power reliably after link-up and during maintenance loops.
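A minimal sketch of that comparison follows. The schema, field names, and units are hypothetical and stand in for whatever your controller actually ingests; the point is to separate missing fields, unit mismatches, and unexpected fields.

```python
import math

# Hypothetical ingestion schema: field name -> expected unit.
EXPECTED_SCHEMA = {
    "temperature": "C",
    "voltage": "V",
    "tx_bias": "mA",
    "tx_power": "dBm",
    "rx_power": "dBm",
}


def mw_to_dbm(milliwatts: float) -> float:
    """Convert optical power from mW to dBm."""
    return 10 * math.log10(milliwatts) if milliwatts > 0 else float("-inf")


def diff_against_schema(raw: dict):
    """raw maps field name -> (value, unit).

    Returns three sorted lists: missing fields, unit mismatches, unexpected fields.
    """
    missing = sorted(set(EXPECTED_SCHEMA) - set(raw))
    unexpected = sorted(set(raw) - set(EXPECTED_SCHEMA))
    mismatched = sorted(
        name
        for name, (_, unit) in raw.items()
        if name in EXPECTED_SCHEMA and unit != EXPECTED_SCHEMA[name]
    )
    return missing, mismatched, unexpected
```

A power value delivered in mW where the controller expects dBm is a typical example of a mapping difference that looks like an unstable link; `mw_to_dbm` shows the conversion that would need to happen at ingestion.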

Intermittent LOS events that correlate with patch changes

Root cause: Connector contamination, usually because end faces were not inspected and cleaned before the patch change. Even small contamination adds insertion loss and back-reflection, and the resulting signal degradation can trigger LOS.

Solution: Adopt a cleaning workflow (inspection, appropriate cleaning tools, and re-inspection). Replace suspect patch cords and record before/after optical power to quantify improvement.

“Compatible” optics that fail only in specific racks

Root cause: Thermal margin mismatch or airflow dead zones. The module meets spec in the lab but exceeds effective operating conditions in one environment, causing laser bias drift and reduced receiver margin.

Solution: Measure rack inlet temperatures and verify module temperature readings from DOM. Add baffles or adjust fan profiles, then retest with the same patch loss assumptions used in planning.
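To find the racks worth inspecting, you can compute thermal headroom from the DOM temperature readings. This is a sketch under assumptions: the 70 °C rating and the 10 °C guard band are example values, and the rack names are made up.

```python
def thermal_margin_by_rack(
    readings: dict, rated_max_c: float = 70.0, guard_c: float = 10.0
) -> dict:
    """Return {rack: margin_c} for racks whose hottest module is inside the guard band.

    readings maps rack name -> list of module temperatures in degrees C from DOM.
    """
    flagged = {}
    for rack, temps in readings.items():
        if not temps:
            continue  # no telemetry for this rack; handle separately
        margin = rated_max_c - max(temps)
        if margin < guard_c:
            flagged[rack] = round(margin, 1)
    return flagged
```

Running this before and after an airflow change gives a simple comparison of whether baffles or fan profile adjustments actually restored headroom.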

Wrong fiber type assumption during planning

Root cause: OM3/OM4 mix-ups or patch panels that were labeled incorrectly. The controller provisions successfully, but the link budget collapses under real loss.

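Solution: Confirm the fiber type from cable jacket markings and documentation audits, since an OTDR trace alone will not reliably distinguish OM3 from OM4. Then measure attenuation with an OTDR or an optical loss test set and re-calculate budgets from the measured values. Standardize labeling and add a pre-commissioning fiber verification step.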

Cost and ROI considerations for T-SDN transceivers

Pricing varies widely by data rate, reach, and whether you buy OEM or third-party. In many enterprise and data center procurement cycles, 10G SR SFP+ optics often fall into a broad range (commonly tens to low hundreds of dollars per module), while QSFP28 100G optics can be materially higher, with long-reach parts costing more due to tighter performance requirements. TCO is not just purchase price: it includes failure rate, stocking strategy, operational labor for replacements, and automation time lost to troubleshooting.

In Transport SDN environments, the ROI comes from stability: fewer manual interventions, faster change windows, and more trustworthy telemetry for closed-loop provisioning. If you use third-party optics, plan a validation matrix and batch testing so you do not discover telemetry quirks months later during a controller firmware update. Vendor datasheets and host vendor compatibility notes should drive your final purchase decision.

For procurement, I recommend running a short pilot: deploy a small set of the exact transceiver part numbers across representative racks, then compare controller alarms, DOM telemetry stability, and measured optical power distributions versus your automation thresholds. If you want a broader background on optic behavior and standards, see fiber optic transceiver basics for SDN.
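For the pilot comparison, a short summary of the measured received power distribution against the alarm threshold is often enough to decide. The sketch below uses only the Python standard library; the function name, the 2 dB guard band, and the sample values in the usage note are assumptions for illustration.

```python
import statistics


def pilot_summary(rx_dbm: list, alarm_low_dbm: float, guard_db: float = 2.0) -> dict:
    """Summarize pilot received power readings relative to the low-power alarm."""
    worst = min(rx_dbm)
    return {
        "count": len(rx_dbm),
        "mean_dbm": round(statistics.mean(rx_dbm), 2),
        # Sample standard deviation needs at least two readings.
        "stdev_db": round(statistics.stdev(rx_dbm), 2) if len(rx_dbm) > 1 else 0.0,
        "worst_dbm": worst,
        "headroom_db": round(worst - alarm_low_dbm, 2),
        # Links closer to the alarm than the guard band are likely to flap in production.
        "near_threshold": sum(1 for p in rx_dbm if p - alarm_low_dbm < guard_db),
    }
```

For example, calling `pilot_summary([-3.0, -4.0, -5.0, -9.0], alarm_low_dbm=-10.0)` reports one link inside the guard band and 1.0 dB of headroom on the worst link, which would argue for either cleaning and re-measuring that path or relaxing the threshold before wider rollout.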

FAQ

What is a T-SDN transceiver in practical terms?

In practice, it is a pluggable optical module used in Transport SDN workflows where the controller expects predictable link behavior and usable diagnostics. The key requirement is not just optical reach, but reliable DOM telemetry and stable state transitions under automation.

Do I need DOM support for Transport SDN automation?

Most SDN controllers rely on telemetry for health checks, threshold alerts, and closed-loop decisions. Even if a link passes traffic, missing or inconsistent diagnostics can cause automation loops, delayed fault isolation, or misclassified link health.

Can I use third-party optics with my switch?

Often yes, but you must validate against the host vendor’s compatibility list and test the exact part number in your environment. Pay special attention to DOM field mapping and alarm thresholds, since these can differ across vendors or firmware revisions.

How do I choose between SR and LR modules?

Use SR for shorter runs over multimode fiber when your budget supports it, and LR for longer runs over single-mode fiber. Always confirm with measured patch loss and worst-case corridor variability, not only the module’s advertised reach.

What troubleshooting step should I do first when automation flags a link?

Start by checking DOM telemetry consistency and received power trends, then inspect connectors for contamination. Next, verify fiber type and patch loss assumptions, because automation failures frequently trace back to optical margins rather than controller logic.

How can I reduce automation downtime during optics replacements?

Standardize transceiver part numbers, maintain a validated spares list, and test modules in a controlled rack environment before broad deployment. Pair that with a cleaning and re-inspection procedure so each replacement behaves like the original commissioning unit.

If you are planning a Transport SDN rollout, treat optics selection as part of the automation contract, not a last-mile accessory. Next, review fiber optic transceiver basics for SDN to align reach, monitoring, and operational procedures with your controller’s expectations.

Author bio: I am a licensed clinician by training who also operates as a network reliability consultant, translating field safety and risk discipline into optical system validation. I have supported production deployments where telemetry integrity and thermal margins were the difference between stable automation and recurring link events.