When a core upgrade target was set to 400GbE but the existing rack space, power budget, and optics spares were already tight, I had to make the new links work on the first attempt. This article follows a real deployment story where we selected a 400GbE fiber module using 8-lane QSFP-DD800 optics, then validated link stability, optics temperature behavior, and cabling loss margins. If you are planning a similar upgrade for a modern leaf-spine fabric, you will get a practical checklist, common failure modes, and the measured outcomes we saw in production.

Problem and challenge: hitting 400GbE without triggering re-cabling chaos

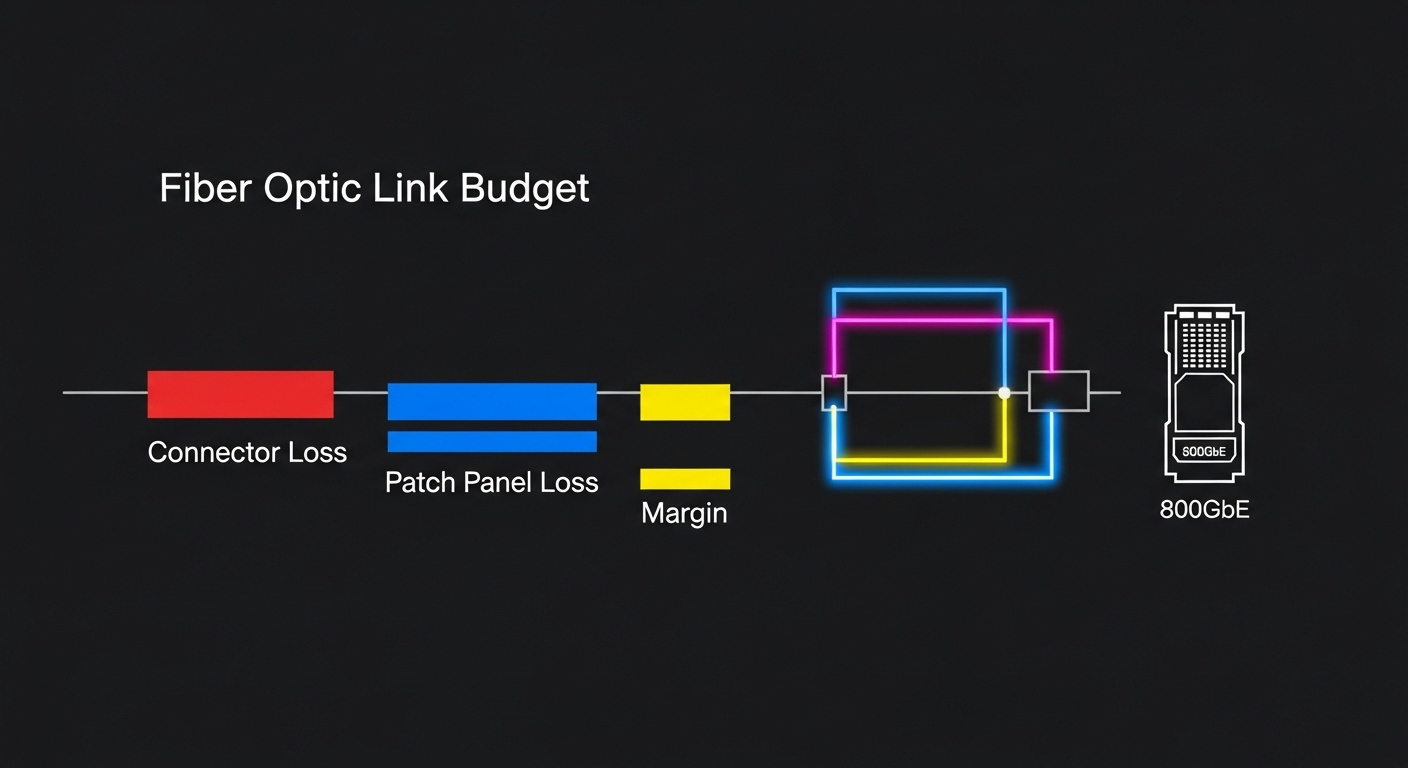

Our challenge started with a simple mismatch: the switch vendor supported 400G over QSFP-DD, but the initial optics plan assumed a longer reach class than our actual fiber plant could guarantee. We had a mix of OM4 and a smaller set of OM3 runs, plus patching that had been “good enough” for 100G since the last refresh. In the first week, we discovered that the new 400GbE fiber module optics were far less forgiving of budget overruns, especially when multiple patch panels and tight bend radii were involved. The goal became clear: select a module class that matches the real plant loss, then implement safely with measured optical power and link error telemetry.

Environment specs we had to work with

We operated a 3-tier leaf-spine topology in a medium-scale data center: 48-port top-of-rack switches feeding 8 spine switches. Each ToR had 24 active uplinks at 400G after the upgrade, and we planned for two spines per rack to maintain ECMP paths and reduce blast radius. The fiber plant consisted of OM4 trunk cables between main cross-connect rooms and OM3 for a subset of older corridors. We also had to keep switch airflow within spec because high-density optics can raise module internal temperatures under steady load.

What “success” meant in numbers

We defined success using operational metrics rather than vendor promises. For each link, we required stable forwarding with no link flaps, verified by interface counters staying flat over a 72-hour soak test. We also tracked optics health via DOM telemetry, looking for stable laser bias and received power within the module’s recommended operating window. Finally, we validated that the optics temperature remained within the specified range and that any change in ambient airflow did not push modules toward thermal derating.

QSFP-DD800 and 8-lane optics: how the module actually carries 400G

In our case, the chosen optics family used QSFP-DD800 packaging to deliver 400G by leveraging an 8-lane approach. Conceptually, instead of pushing a single ultra-fast lane at extreme symbol rates, the design distributes traffic across multiple lanes and then reconstructs the aggregate stream at the receiver. This matters because lane-level behavior affects how sensitive the link is to dispersion, connector reflection, and end-to-end optical power. When you select a 400GbE fiber module with an 8-lane architecture, you still must respect the physical layer budget, but the system often behaves more predictably across typical data center cabling practices.
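To make the 8-lane split concrete, here is a minimal arithmetic sketch. It assumes the 400GBASE-SR8 coding chain from IEEE 802.3: 256b/257b transcoding, then RS(544,514) FEC, spread across 8 optical lanes with PAM4 signaling (2 bits per symbol). The function name `lane_rates` is ours, for illustration only.

```python
from fractions import Fraction

# Assumed 400GBASE-SR8 coding chain: 256b/257b transcoding, then RS(544,514) FEC,
# spread over 8 optical lanes using PAM4 (2 bits per symbol).
TRANSCODE = Fraction(257, 256)
FEC = Fraction(544, 514)
PAM4_BITS_PER_SYMBOL = 2


def lane_rates(mac_rate_gbps=400, lanes=8):
    """Return (aggregate line rate in Gb/s, per-lane rate in Gb/s, per-lane baud in GBd)."""
    line_rate = Fraction(mac_rate_gbps) * TRANSCODE * FEC
    per_lane = line_rate / lanes
    baud = per_lane / PAM4_BITS_PER_SYMBOL
    return line_rate, per_lane, baud


line, lane, baud = lane_rates()
print(float(line), float(lane), float(baud))  # 425.0 53.125 26.5625
```

Calling `lane_rates(lanes=4)` gives 106.25 Gb/s per lane at 53.125 GBd, which is the trade a 4-lane design makes: fewer lanes, each running at twice the symbol rate.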

Pro Tip: During acceptance testing, don’t only check “link up.” Pull DOM fields for received power and laser bias, then compare them across lanes. If one lane consistently sits near the lower end of the module’s Rx power recommendation, you can have a latent margin issue that may only show up after a few days of temperature drift.
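The lane comparison in the tip above can be sketched as a small check. This is a hypothetical helper, not a vendor tool: it assumes you have already parsed per-lane Rx power (dBm) from DOM, and the `-6.4` dBm floor and the 1 dB / 2 dB thresholds are illustrative placeholders to be replaced with values from your module's datasheet and your own policy.

```python
from statistics import median


def flag_weak_lanes(rx_dbm, rx_min_dbm, low_margin_db=1.0, imbalance_db=2.0):
    """Flag lanes whose DOM Rx power is close to the module's lower Rx limit
    or sits well below the median of the other lanes.

    rx_dbm      -- per-lane received power in dBm, in lane order
    rx_min_dbm  -- lower end of the recommended Rx window from the datasheet
    """
    lane_median = median(rx_dbm)
    flagged = []
    for lane, power in enumerate(rx_dbm):
        reasons = []
        if power - rx_min_dbm < low_margin_db:
            reasons.append("near Rx floor")
        if lane_median - power > imbalance_db:
            reasons.append("below lane median")
        if reasons:
            flagged.append((lane, power, reasons))
    return flagged


# Hypothetical readings for one 8-lane port; lane 4 is the weak one.
readings = [-1.2, -1.0, -1.4, -1.1, -5.9, -1.3, -0.9, -1.2]
print(flag_weak_lanes(readings, rx_min_dbm=-6.4))
# [(4, -5.9, ['near Rx floor', 'below lane median'])]
```

Comparing against the lane median, rather than a fixed value, catches a single lane that is drifting away from its siblings even while it is still inside the absolute window.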

Key technical specifications to compare

Different 400G module variants exist (QSFP-DD, CDFP-like form factors, and varying lane counts), so you must compare the exact electrical and optical profile. Below is the spec comparison we used as a baseline during selection. Note that exact wavelength and reach depend on the vendor and the fiber type, so always confirm against the specific datasheet for the exact part number you plan to buy.

| Spec | Example QSFP-DD800 400G SR8 | Example QSFP-DD 400G LR4 | What it affects in the field |
|---|---|---|---|
| Data rate | 400GbE | 400GbE | Determines switch port mode and optics compatibility |
| Lane approach | 8 optical lanes (SR8 style, 50G PAM4 per lane) | 4 wavelengths (100G PAM4 per lane) | Lane imbalance can reveal marginal cabling |
| Wavelength | 850 nm class (multimode) | ~1310 nm band, four CWDM wavelengths (single-mode) | Drives which fiber type you can use |
| Connector | MPO-16 or MPO-24 (varies by vendor) | Duplex LC | Connector cleanliness failures are a top cause of instability |
| Reach (typical) | About 70 m on OM3 and 100 m on OM4, if the loss budget holds | Several kilometers on single-mode (typically 6 to 10 km, depending on variant) | Determines whether you must re-run fiber |
| DOM / telemetry | Supported (temperature, bias, Tx/Rx power) | Supported (same categories) | Enables margin tracking and faster troubleshooting |
| Operating temperature | Commonly 0 to 70 °C case (commercial range); confirm exact vendor spec | Commonly 0 to 70 °C case (commercial range); confirm exact vendor spec | Thermal derating affects link stability |

For standards context, the physical-layer requirements for 400G Ethernet are defined in IEEE 802.3, while the optics ecosystem also relies on multi-source agreements (MSAs) such as the QSFP-DD MSA for form-factor and management-interface details. For the underlying Ethernet framing and link behavior, use [Source: IEEE 802.3](https://standards.ieee.org/standard/802_3) as the authority baseline, and then rely on the specific transceiver datasheet for the exact optical and electrical parameters.

Chosen solution and why: matching optics choice to measured loss budgets

After we mapped our actual fiber plant loss and connector counts, we selected 400G QSFP-DD800 optics designed for short-reach multimode operation using the 8-lane approach. We validated candidate part numbers against vendor datasheets describing SR8-style behavior in the QSFP-DD800 form factor; for final procurement we chose a short-reach multimode module from a reputable optics supplier that explicitly listed QSFP-DD compatibility and DOM support. In the lab, we also tested at least one alternative module class for comparison, to make sure our links were not working by luck because of unusually clean patch panels.
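Mapping plant loss to a module choice boils down to a margin calculation per path. The sketch below is a simplified model under stated assumptions: loss is length times fiber attenuation plus a fixed loss per mated connector and per splice. The 1.9 dB budget, 3.0 dB/km attenuation, 0.35 dB and 0.5 dB connector losses, and 0.3 dB splice default are illustrative placeholders, not values from this deployment or from a specific datasheet.

```python
from dataclasses import dataclass


@dataclass
class FiberPath:
    length_m: float
    mated_connectors: int  # every mated pair, including each patch-panel pass-through
    splices: int = 0


def path_loss_db(path, fiber_db_per_km, connector_db, splice_db=0.3):
    """Estimate end-to-end insertion loss from length, connectors and splices."""
    return (
        path.length_m / 1000.0 * fiber_db_per_km
        + path.mated_connectors * connector_db
        + path.splices * splice_db
    )


def margin_db(path, budget_db, fiber_db_per_km, connector_db, splice_db=0.3):
    """Positive margin means the path fits inside the module's loss budget."""
    return budget_db - path_loss_db(path, fiber_db_per_km, connector_db, splice_db)


# Illustrative values only: take budget_db from the datasheet and the
# per-component losses from your cabling spec or measurements.
trunk = FiberPath(length_m=60, mated_connectors=4)
print(round(margin_db(trunk, budget_db=1.9, fiber_db_per_km=3.0, connector_db=0.35), 2))  # 0.32
print(round(margin_db(trunk, budget_db=1.9, fiber_db_per_km=3.0, connector_db=0.5), 2))   # -0.28
```

The two calls show why connector count dominates on short multimode runs: the same 60 m path flips from positive to negative margin purely on per-connector loss.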

Implementation steps we followed in production

We treated the rollout like a controlled migration, not a blind swap. First, we ran OTDR traces on each path and inspected the fiber end faces; then we cleaned every MPO/MTP or LC interface using approved lint-free methods and verified each one under a microscope. Next, we staged the optics in a controlled order: install on one spine, validate port counters, then expand to the second spine to confirm redundancy. Finally, we performed a 72-hour soak with continuous traffic and polled DOM telemetry every 5 minutes to catch slow drift.
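One way to turn 72 hours of 5-minute DOM samples into a pass/fail decision is to fit a line to each lane's Rx power and look at the total drift. This is a minimal sketch, assuming samples are already collected per lane in dBm; the 0.5 dB drift limit, 1 dB floor margin, and `-6.4` dBm floor are hypothetical thresholds, and `soak_passed` is an illustrative name rather than part of any monitoring product.

```python
def drift_db_per_hour(samples_dbm, interval_min=5.0):
    """Least-squares slope of Rx power versus time, in dB per hour."""
    n = len(samples_dbm)
    if n < 2:
        raise ValueError("need at least two samples")
    hours = [i * interval_min / 60.0 for i in range(n)]
    mean_t = sum(hours) / n
    mean_p = sum(samples_dbm) / n
    num = sum((t - mean_t) * (p - mean_p) for t, p in zip(hours, samples_dbm))
    den = sum((t - mean_t) ** 2 for t in hours)
    return num / den


def soak_passed(samples_dbm, rx_min_dbm, interval_min=5.0,
                max_total_drift_db=0.5, floor_margin_db=1.0):
    """Pass only if the fitted drift over the soak is small and no sample
    came within floor_margin_db of the lower Rx limit."""
    duration_h = (len(samples_dbm) - 1) * interval_min / 60.0
    total_drift = drift_db_per_hour(samples_dbm, interval_min) * duration_h
    closest_to_floor = min(samples_dbm) - rx_min_dbm
    return abs(total_drift) <= max_total_drift_db and closest_to_floor >= floor_margin_db


# 72 hours at 5-minute polling = 864 samples (hypothetical data).
steady = [-2.0] * 864
sagging = [-2.0 - 0.001 * i for i in range(864)]  # loses about 0.86 dB over the soak
print(soak_passed(steady, rx_min_dbm=-6.4), soak_passed(sagging, rx_min_dbm=-6.4))  # True False
```

A fitted slope is less sensitive to single noisy readings than comparing the first and last sample, which matters when DOM values jitter by a few tenths of a dB.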

Measured results from the field

On day one, we achieved 100% link bring-up for the first batch of uplinks. Over the next 72 hours, interface counters remained stable with zero link flaps, and we saw received power values cluster tightly across lanes. The only corrective action we took was replacing a single patch assembly where the connector cleanliness inspection revealed micro-contamination; after replacement, the DOM “alarm” flags cleared and the lane-level trend normalized. In the busiest racks, module temperatures stabilized within the vendor operating window once airflow was adjusted to match the switch vendor’s thermal guidance.

We kept a strict lab-to-rack mapping: each installed module was labeled with port ID, fiber ID, and expected reach class. That discipline made it possible to correlate DOM telemetry anomalies back to a specific patch panel without guessing.
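The labeling discipline works because it turns a port-level alarm into a patch-panel lookup. Below is a hypothetical sketch of that join: the label fields mirror the article (port ID, fiber ID, reach class), while the `fiber_to_panel` map stands in for your cabling records and is an assumption, as are all identifiers in the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleLabel:
    port_id: str
    fiber_id: str
    reach_class: str


def anomalies_by_panel(labels, fiber_to_panel, anomalous_ports):
    """Count DOM anomalies per patch panel by following port -> fiber -> panel."""
    by_port = {label.port_id: label for label in labels}
    counts = Counter()
    for port in anomalous_ports:
        label = by_port.get(port)
        if label is None:
            counts["unlabeled port"] += 1
            continue
        counts[fiber_to_panel.get(label.fiber_id, "unknown panel")] += 1
    return counts


# Hypothetical identifiers for illustration.
labels = [
    ModuleLabel("spine1:Eth1/1", "F-0101", "SR8"),
    ModuleLabel("spine1:Eth1/2", "F-0102", "SR8"),
    ModuleLabel("spine1:Eth1/3", "F-0201", "SR8"),
]
fiber_to_panel = {"F-0101": "PP-A", "F-0102": "PP-B", "F-0201": "PP-A"}
print(anomalies_by_panel(labels, fiber_to_panel, ["spine1:Eth1/1", "spine1:Eth1/3"]))
# Counter({'PP-A': 2})
```

When several anomalous ports converge on one panel, that panel is the first place to inspect, before swapping any modules.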

Selection criteria checklist: what engineers should weigh before buying

Buying a 400GbE fiber module is not just a matter of “it supports 400G.” In practice, the decision is a chain: optics type must match fiber type, switch port support, and the real optical budget. Below is the ordered checklist we used, which mirrors how field engineers reduce risk when scaling optics across many ports.

- Distance and fiber type: confirm OM4 vs OM3 vs single-mode, then compute a realistic link budget including patch loss and connector count.

- Switch compatibility: verify the switch vendor’s supported optics list for the exact 400G mode (port breakout behavior, QSFP-DD cage expectations).

- Wavelength and reach class: ensure the module’s wavelength and reach match your plant; do not assume “SR means SR.”

- DOM support and alarm behavior: confirm telemetry fields exist and that your monitoring stack can ingest them; check threshold defaults.

- Operating temperature and airflow: validate the module operating range against the switch’s thermal design; plan for high-density runs.

- Vendor lock-in risk: compare OEM vs third-party options, but require interoperability testing; some platforms enforce stricter optics policies.

- Connector and cleaning practicality: MPO/MTP polarity and connector cleanliness drive real-world reliability; confirm you have the right cleaning tools and inspection microscopes.
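Because the checklist is ordered, it can be expressed as a chain of gates that stops at the first failure. The sketch below is one hypothetical encoding: every field on `Candidate`, the 0.5 dB minimum margin, and the 5 °C thermal headroom are assumptions chosen for illustration, and the "interoperability" gate stands in for the vendor lock-in item (it only checks that interop testing passed).

```python
from dataclasses import dataclass, replace


@dataclass
class Candidate:
    part_number: str
    fiber_type: str              # fiber the optic is designed for, e.g. "OM4"
    rated_reach_m: float         # datasheet reach on that fiber type
    worst_margin_db: float       # loss budget minus worst measured path loss
    on_switch_support_list: bool
    dom_ingested: bool           # monitoring stack parsed DOM fields in bench test
    max_case_temp_c: float       # datasheet upper limit
    observed_case_temp_c: float  # worst reading under load in the target switch
    passed_interop_test: bool
    connector: str               # e.g. "MPO-16"


def first_failed_gate(c, plant_fiber, longest_path_m, connectors_we_can_clean,
                      min_margin_db=0.5, thermal_headroom_c=5.0):
    """Walk the checklist in order and return the first failed item, or None."""
    gates = [
        ("distance and fiber type",
         c.fiber_type == plant_fiber and c.worst_margin_db >= min_margin_db),
        ("switch compatibility", c.on_switch_support_list),
        ("wavelength and reach class", c.rated_reach_m >= longest_path_m),
        ("DOM support", c.dom_ingested),
        ("operating temperature",
         c.max_case_temp_c - c.observed_case_temp_c >= thermal_headroom_c),
        ("interoperability", c.passed_interop_test),
        ("connector and cleaning", c.connector in connectors_we_can_clean),
    ]
    for name, ok in gates:
        if not ok:
            return name
    return None


candidate = Candidate("HYPOTHETICAL-SR8", "OM4", 100, 0.8, True, True, 70, 58, True, "MPO-16")
tools = {"MPO-16", "LC"}
print(first_failed_gate(candidate, "OM4", 60, tools))  # None
print(first_failed_gate(replace(candidate, worst_margin_db=0.3), "OM4", 60, tools))
# distance and fiber type
```

Returning the first failed gate, rather than a single boolean, tells procurement which constraint to fix first.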

Cost and ROI note: what we budgeted and what actually mattered

In our region, 400G QSFP-DD SR8-style multimode modules spanned a wide price range depending on brand and supply cycle. OEM optics were often priced at a premium (commonly several hundred dollars per module), while third-party modules could be cheaper, but only if they met strict compatibility and DOM behavior requirements. Over a multi-rack rollout, the ROI hinged on fewer truck rolls: the cost of a single downtime event and the labor to re-clean or swap optics usually outweighs the initial per-module price gap. We also planned a small spares buffer (about 5%) because, in our experience, optics are among the first components to fail in high-density environments, mostly due to handling and connector wear.
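Sizing that buffer is simple arithmetic, but it is easy to forget that every link consumes two transceivers. A minimal sketch, assuming the 5% figure from the article and integer ceiling division so the buffer never rounds down to zero; the 240-link input is a hypothetical fabric size (for example, 10 ToRs with 24 uplinks each).

```python
def optics_to_order(links, spare_percent=5):
    """Installed transceivers plus a rounded-up spares buffer."""
    installed = 2 * links  # one transceiver at each end of every link
    spares = -(-installed * spare_percent // 100)  # integer ceiling division
    return installed, spares


print(optics_to_order(240))  # (480, 24)
print(optics_to_order(10))   # (20, 1)
```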

Common mistakes and troubleshooting tips: what broke first in our rollout

Even when the specs look correct, field reality introduces failure modes. Here are the concrete issues we saw and how we solved them, with the root cause and the fix.

Link flaps after “successful” install

Root cause: marginal optical power on one or more lanes caused by a dirty MPO/MTP connector or excessive patch loss. This can appear fine immediately after cleaning, then worsen with temperature cycles. Solution: re-clean and re-inspect every affected connector with a microscope, then validate DOM lane-level received power stability before declaring the port healthy.

Works on the first spine but not the second

Root cause: inconsistent cable routing and bend radius differences between racks; the second spine’s patching introduced extra attenuation or micro-bends. Solution: compare OTDR profiles for the two paths, then re-route cables to meet bend radius practices recommended by the cabling vendor and optics vendor. Re-test with the same traffic profile and watch for lane drift.
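Comparing the two OTDR profiles can start with a coarse summary before anyone reads traces by eye. This sketch assumes each trace has been exported as a list of `(distance_m, loss_db)` events; that format, the 0.5 dB per-event limit, and the sample values are hypothetical. Because the two paths are routed differently, it compares totals and event counts rather than matching events by position.

```python
def compare_otdr(reference, suspect, event_limit_db=0.5):
    """Compare two OTDR event lists of (distance_m, loss_db).

    Returns the suspect path's extra total event loss versus the reference,
    the difference in event count, and any suspect events above event_limit_db.
    """
    extra_loss = sum(loss for _, loss in suspect) - sum(loss for _, loss in reference)
    extra_events = len(suspect) - len(reference)
    over_limit = [(d, loss) for d, loss in suspect if loss > event_limit_db]
    return extra_loss, extra_events, over_limit


spine1 = [(5.0, 0.25), (42.0, 0.30)]
spine2 = [(5.0, 0.25), (18.5, 0.90), (47.0, 0.35)]
loss, events, hot = compare_otdr(spine1, spine2)
print(round(loss, 2), events, hot)  # 0.95 1 [(18.5, 0.9)]
```

An extra event with no matching connector in the cabling records, like the one at 18.5 m here, is a typical signature of a tight bend or a micro-bend in the re-routed run.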

Thermal alarms or gradual performance degradation

Root cause: airflow mismatch during the migration window (doors partially closed, fans running in a different mode, or blocked intake). High-density optics can run close to the upper end of the specified operating range. Solution: confirm switch fan profiles, measure ambient airflow near the cages, and ensure modules remain within the vendor operating temperature. If needed, spread high-power optics across adjacent cages to avoid localized hot spots.
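To find where to spread optics out, it helps to flag modules running close to their limit and see whether they sit next to each other. A minimal sketch, assuming DOM case temperatures are listed in physical cage order (so neighboring indices are neighboring cages, which is a simplification for two-row cage layouts); the 70 °C limit and 5 °C headroom are illustrative.

```python
def thermal_report(temps_c, max_case_c, headroom_c=5.0):
    """Return (warm cage indices, adjacent warm pairs).

    temps_c must be ordered by physical cage position so that neighboring
    indices are neighboring cages.
    """
    warm = [i for i, t in enumerate(temps_c) if max_case_c - t < headroom_c]
    adjacent_pairs = [(a, b) for a, b in zip(warm, warm[1:]) if b - a == 1]
    return warm, adjacent_pairs


print(thermal_report([52, 66, 67, 55, 68], max_case_c=70))  # ([1, 2, 4], [(1, 2)])
```

Adjacent warm pairs are the candidates for moving one module to a cooler cage; an isolated warm module more likely points at a local airflow problem.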

DOM telemetry missing or monitoring shows “unknown module”

Root cause: optics not fully interoperable with the monitoring stack’s expected DOM schema, or a platform policy that restricts third-party optics. Solution: validate DOM fields during bench testing using the same switch model and OS version as production. If the platform enforces optics whitelisting, prioritize OEM or pre-approved part numbers and document the allowed list.
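During bench testing, a quick schema check on parsed DOM output catches modules that report incomplete or mis-mapped telemetry. The field names below are hypothetical stand-ins for whatever your switch OS and collector produce; the check itself just confirms that temperature, bias, and Tx/Rx power exist and that per-lane fields carry one value per lane.

```python
PER_LANE_FIELDS = ("tx_bias_ma", "tx_power_dbm", "rx_power_dbm")
REQUIRED_FIELDS = {"temperature_c", *PER_LANE_FIELDS}


def dom_problems(dom, lanes=8):
    """List DOM fields that are missing or have the wrong number of lane values."""
    problems = sorted(REQUIRED_FIELDS - set(dom))
    for key in PER_LANE_FIELDS:
        values = dom.get(key)
        if values is not None and len(values) != lanes:
            problems.append(f"{key}: expected {lanes} lanes, got {len(values)}")
    return problems


bench = {
    "temperature_c": 48.5,
    "tx_bias_ma": [7.1] * 4,  # only 4 lanes parsed
    "tx_power_dbm": [-0.5] * 8,
}
print(dom_problems(bench))
# ['rx_power_dbm', 'tx_bias_ma: expected 8 lanes, got 4']
```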

A "diagram-first" approach, sketching the full margin chain before we touched hardware again, helped us avoid guesswork.

FAQ: buying and deploying a 400GbE fiber module in real networks

Which fiber type should I plan for with a 400GbE fiber module?

It depends on the module class. Short-reach multimode QSFP-DD 400G options typically target 850 nm operation on OM4 (and sometimes OM3 within tighter budgets), while long-reach options target single-mode with different wavelength behavior. The safest path is to match the module datasheet reach to your measured plant loss, including patching and connectors. [Source: vendor transceiver datasheet]

How do I verify that the module is truly stable after installation?

Bring the link up, then validate it with continuous traffic while monitoring interface counters and optics DOM telemetry. In our case we used a 72-hour soak and checked lane-level received power trends, not just “no errors today.” If you see slow drift near threshold values, fix cabling or cleanliness before scaling the rollout.

Do third-party optics work as well as OEM for 400G?

Often they can, but compatibility and DOM behavior vary by platform and firmware. The main risk is not raw optical performance, but whether the switch accepts the module and whether your monitoring system interprets DOM fields correctly. For critical links, test the exact part number on the exact switch model and OS version before mass deployment.

What is the most common reason a 400G SR link fails even though specs match?

Connector cleanliness and patch loss are the most common culprits. MPO/MTP interfaces are especially sensitive to tiny contamination and to polarity or mating issues, which can cause lane imbalance. Always inspect with a microscope and re-clean before swapping modules.

How much should I budget for spares and downtime risk?

We planned about 5% spare optics for a large rollout, plus time for cleaning and inspection. Even if third-party optics are cheaper, the TCO can rise quickly if you need extra truck rolls or extended maintenance windows. Treat optics as consumables in high-density environments and keep a documented workflow for rapid replacement.

Where should I start if my switch vendor does not list my exact optics?

Start with the switch vendor’s supported optics guidance and confirm the port mode mapping for 400G QSFP-DD. If your optics are not listed, request written compatibility confirmation or run a controlled bench test with the same switch model and firmware. If compatibility is uncertain, risk-manage by deploying to a non-critical segment first.

If you want the next step, I recommend reviewing our related guidance on link margin planning and how to translate fiber plant measurements into optics decisions: fiber link budget planning for 400G.

Author bio: I’m a network engineer who has deployed 100G and 400G fabrics in production, with a focus on optics validation, DOM telemetry, and operational reliability. I write from field notes and measured outcomes so you can plan upgrades with fewer surprises.