When a Ceph object storage cluster starts falling behind, the culprit is often a hidden part of the I/O path: the optical transceivers between the OSD hosts and the leaf switches. This article uses "object storage network SFP" as shorthand for those modules, although at 100G they are typically QSFP28 rather than SFP form factor. It helps storage and network engineers size and select 100G optical transceivers for Ceph with practical compatibility checks, measurable link-budget constraints, and failure-mode troubleshooting. If you are planning a migration from 10G to 100G or validating a new rack build, you will get a decision checklist you can execute in under an hour per switch model.

Top 8 selection items for object storage network SFP in 100G Ceph

In a Ceph cluster, your “transceiver choice” is not just optics; it is also switch optics compatibility, power and thermal behavior, and how reliably you can sustain congestion-controlled traffic under failure. Below are eight items that field teams repeatedly use to avoid late-stage surprises during rollout. Each item includes key specs, a best-fit scenario, and quick pros/cons to support rapid validation.

Pick the right optical lane rate and standard for 100G Ceph

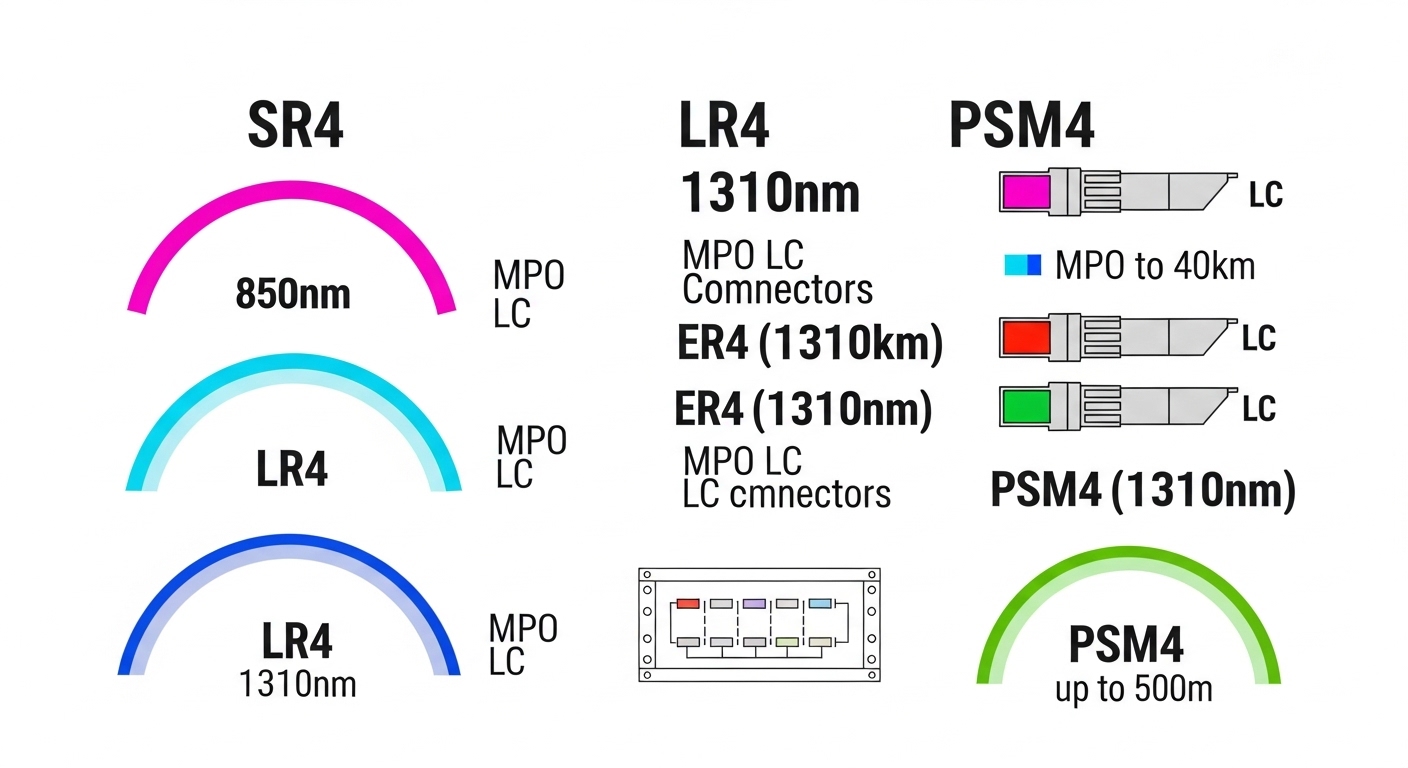

Ceph traffic patterns (small object reads and writes plus replication and recovery) can be sensitive to headroom and microburst behavior. For 100G, choose transceivers that implement an IEEE 802.3 Ethernet PHY type supported by your switch ports and ASIC lane mapping. Both common options carry 100G as four lanes of roughly 25 Gb/s: 100GBASE-SR4 uses four parallel multimode fiber pairs for short reach, while 100GBASE-LR4 multiplexes four wavelengths onto a single pair of singlemode fibers for long reach. In practice, match the transceiver to the interface type the switch expects.

Best-fit scenario: a leaf-spine data center where ToR to storage leaf uses MMF OM4 for sub-100m links and you want to minimize cost and simplify field spares.

- Pros: SR4 is usually the cheapest per port for short links.

- Cons: MMF reach depends heavily on patching quality and connector cleanliness.

Choose SR4 vs LR4 based on measured distance and link budget

Your reach decision should be based on measured fiber plant reality, not just transceiver “headline” specs. For SR4, verify OM4/OM3 support and ensure your cabling loss plus margin stays within the vendor’s link budget. For LR4, confirm attenuation and chromatic dispersion constraints and keep an eye on whether your optics require specific singlemode fiber types.

Best-fit scenario: a rack build where OSD hosts connect to ToR over 35m to 60m using trunked OM4 cabling; SR4 is typically appropriate. If you must traverse multiple rows or cross a corridor with 120m to 300m, LR4 becomes the safer selection.

Pro Tip: In Ceph rollouts, the “fiber distance” you measure at commissioning often excludes the effect of patch panel mating cycles and dirty-connector events. Treat your margin as a reliability budget: if the vendor link budget says you have 2 dB of margin, plan for cleaning and potential re-termination rather than assuming the link will stay stable for years. [Source: IEEE 802.3 Ethernet PHY documentation and vendor link budget guidance]

- Pros: Link-budget alignment reduces link flaps during recovery storms.

- Cons: LR4 optics can be materially more expensive than SR4.
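The link-budget check described above can be sketched in a few lines of Python. This is a minimal illustration, not a substitute for a certified loss test: the class name `LinkPlan`, the attenuation figure, the per-connector loss, and the maximum channel loss are all placeholder assumptions that should be replaced with values from your cable plant records and the transceiver datasheet.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    """Hypothetical container for one fiber channel's loss inputs."""
    length_m: float             # measured fiber length in metres
    fiber_db_per_km: float      # attenuation at the operating wavelength
    mated_pairs: int            # connector pairs in the channel
    db_per_mated_pair: float    # assumed loss per mated connector pair
    max_channel_loss_db: float  # allowed channel loss from the datasheet

    def estimated_loss_db(self) -> float:
        fiber_loss = self.length_m / 1000.0 * self.fiber_db_per_km
        connector_loss = self.mated_pairs * self.db_per_mated_pair
        return fiber_loss + connector_loss

    def margin_db(self) -> float:
        # Positive margin means the estimate fits inside the budget.
        return self.max_channel_loss_db - self.estimated_loss_db()


# Placeholder values for a 52 m multimode run through two patch panels.
plan = LinkPlan(length_m=52, fiber_db_per_km=3.0, mated_pairs=2,
                db_per_mated_pair=0.5, max_channel_loss_db=1.9)
print(f"estimated loss: {plan.estimated_loss_db():.2f} dB")
print(f"remaining margin: {plan.margin_db():.2f} dB")
```

With inputs like these, the connectors dominate the loss rather than the fiber itself, which is why patching quality matters more than raw distance on short multimode links.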

Validate switch compatibility: optics, DOM, and vendor-specific quirks

Switch vendors frequently gate support by transceiver type and firmware expectations. Confirm that your object storage network SFP modules are supported on the exact switch model and port (including breakout mode settings). Also validate Digital Optical Monitoring (DOM) fields your platform reads—vendors may interpret thresholds differently, which can trigger false alarms or suppress real degradation.

Best-fit scenario: you are deploying the same transceiver across multiple switch SKUs (leafs from two vendors, or two hardware revisions). You should test DOM reporting and alarm thresholds in a staging rack before scaling.

- Pros: Prevents “works in lab, fails in field” surprises.

- Cons: Compatibility matrices can lag behind third-party availability.
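One way to check DOM units in staging is to compare the optical power your telemetry pipeline reports against a value derived from the raw module reading. The sketch below assumes the raw receive-power count uses a least significant bit of 0.1 microwatt, which is common in SFF management interfaces but should be confirmed for your modules; the function names are hypothetical.

```python
import math


def mw_to_dbm(power_mw: float) -> float:
    """Convert optical power in milliwatts to dBm."""
    if power_mw <= 0:
        return float("-inf")  # no light detected
    return 10.0 * math.log10(power_mw)


def raw_to_dbm(raw: int, lsb_uw: float = 0.1) -> float:
    """Convert a raw DOM power count to dBm.

    lsb_uw is the assumed size of one count in microwatts; confirm it
    against the module's management-interface documentation.
    """
    return mw_to_dbm(raw * lsb_uw / 1000.0)


def units_agree(reported_dbm: float, raw: int, tolerance_db: float = 0.2) -> bool:
    """True if the telemetry value matches the value derived from the raw count."""
    return abs(reported_dbm - raw_to_dbm(raw)) <= tolerance_db


# A raw count of 5000 is 0.5 mW, or about -3 dBm.
print(round(raw_to_dbm(5000), 2))
print(units_agree(-3.0, 5000))  # pipeline reports dBm correctly
print(units_agree(0.5, 5000))   # pipeline passed milliwatts through as dBm
```

A mismatch like the last case is the kind of scaling error that makes thresholds fire too late or too often, so it is worth catching before the optics reach production.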

Compare real 100G optics: wavelength, reach, connector, temperature

Below is a comparison table using representative 100G transceiver models commonly used in short-reach and mid-reach data center deployments. Treat the “reach” as an engineering starting point; always check the vendor datasheet for link budget and supported fiber types.

| Category | Example part | Wavelength / Mode | Target reach | Connector | Data rate | Power (typ.) | Operating temp |
|---|---|---|---|---|---|---|---|
| 100GBASE-SR4 (MMF) | Cisco QSFP-100G-SR4-S (example OEM class) | 850 nm, 4 parallel lanes | Up to ~70 m on OM3, ~100 m on OM4 | MPO-12 | 100G | ~2 to 3.5 W class (varies) | 0 to 70 C typical |
| 100GBASE-LR4 (SMF) | Finisar QSFP28 LR4 (verify exact SKU; FTLX8571D3BCL is a 10G SFP+ SR part, not 100G LR4) | ~1310 nm, 4 WDM lanes | Up to ~10 km class | LC duplex | 100G | ~3.5 to 4.5 W class (varies) | 0 to 70 C typical |
| 100GBASE-SR4 (third-party) | FS.com and similar MSA-compatible QSFP28 SR4 (verify exact SKU; SFP-10GSR-85 is a 10G part, not 100G SR4) | 850 nm, 4 parallel lanes | Vendor datasheet dependent | MPO-12 | 100G | Varies | Varies |

Best-fit scenario: if your object storage network SFP selection is for OSD host uplinks at 40m to 80m, SR4 is usually the most cost-effective path. If you have mixed rack layouts with longer runs, LR4 reduces the need for expensive active cabling.

- Pros: Spec comparison helps you avoid “wrong connector” and “wrong temperature class” events.

- Cons: Third-party parts require careful validation of DOM and firmware compatibility.

Set power, thermal, and airflow expectations per rack

In Ceph clusters, the optics are not the only heat source; you also have dense NICs, GPUs (sometimes), and high fan-out switch modules. Still, transceiver power and thermal behavior matter during sustained recovery traffic, when link utilization stays high for longer periods. Confirm the transceiver’s typical and maximum power draw and verify it matches the switch’s thermal design limits.

Best-fit scenario: a storage leaf handling 100G at full line rate for long recovery windows. You should ensure the rack’s airflow can maintain optics within the datasheet temperature range.

- Pros: Reduces “intermittent link loss” tied to temperature excursions.

- Cons: Over-optimistic airflow assumptions can still fail under seasonal changes.
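As a rough illustration of the power and thermal check, the sketch below totals worst-case optics power for a switch and flags modules whose DOM temperature is close to the datasheet limit. The port names, the 3.5 W figure, the 70 C limit, and the 10 C headroom threshold are assumptions for the example, not vendor values.

```python
from typing import Dict, List


def optics_power_w(ports: int, max_power_w: float) -> float:
    """Worst-case optics power for one switch, in watts."""
    return ports * max_power_w


def thermal_headroom_c(module_temp_c: float, max_operating_c: float) -> float:
    """Degrees Celsius between a DOM reading and the datasheet limit."""
    return max_operating_c - module_temp_c


def flag_hot_modules(temps_c: Dict[str, float], max_operating_c: float,
                     min_headroom_c: float) -> List[str]:
    """Return the ports whose modules have less headroom than required."""
    return sorted(
        port for port, temp in temps_c.items()
        if thermal_headroom_c(temp, max_operating_c) < min_headroom_c
    )


# Placeholder readings taken during a sustained recovery window.
readings = {"Eth1/1": 45.0, "Eth1/2": 63.5}
print(optics_power_w(ports=48, max_power_w=3.5))
print(flag_hot_modules(readings, max_operating_c=70.0, min_headroom_c=10.0))
```

Running the same check in summer and winter gives a simple view of how much seasonal airflow changes eat into the headroom.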

Decide OEM vs third-party: risk model for Ceph uptime

OEM transceivers tend to have the smoothest switch compatibility path, while third-party options can cut unit price but increase validation effort. For object storage network SFP procurement, treat this as a risk-managed trade: measure failure rates (where available), validate DOM behavior, and confirm return/RMA processes are workable for your ops team.

Best-fit scenario: you need to deploy 200+ ports quickly and can stage-test at least 10 to 20 ports across switch models before scaling.

- Pros: Third-party can materially reduce capex for large Ceph rollouts.

- Cons: Higher chance of DOM mismatch, threshold alarms, or subtle firmware incompatibilities.

Plan for DOM-driven monitoring and alert thresholds

DOM is the bridge between “it seems fine” and “it will fail soon.” Ensure your monitoring stack can ingest DOM metrics such as received optical power, bias current, and temperature, and map them into actionable alerts. Your goal is to detect degradation early—especially for multimode SR4 where connector contamination can cause gradual receive power drift.

Best-fit scenario: you run Prometheus/Grafana or vendor telemetry and want to correlate DOM dips with Ceph OSD latency spikes. Configure alerts that trigger on sustained changes rather than single-sample anomalies.

- Pros: Early warning shortens MTTR.

- Cons: Misconfigured thresholds can create alert fatigue and hide real faults.
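Alerting on sustained change rather than single samples can be approximated by comparing two adjacent windows of receive-power readings. The following is a minimal sketch under assumed parameters (window length and drop threshold are placeholders to tune per link); the function name is hypothetical and it is not tied to any particular monitoring product.

```python
from statistics import mean
from typing import Sequence


def sustained_drop(samples_dbm: Sequence[float], window: int, drop_db: float) -> bool:
    """True if the latest `window` samples average at least `drop_db` below
    the average of the `window` samples that preceded them."""
    if len(samples_dbm) < 2 * window:
        return False  # not enough history to compare two full windows
    baseline = mean(samples_dbm[-2 * window:-window])
    recent = mean(samples_dbm[-window:])
    return baseline - recent >= drop_db


steady = [-2.0] * 12
one_bad_sample = [-2.0] * 11 + [-6.0]
drifting = [-2.0] * 6 + [-3.5] * 6

print(sustained_drop(steady, window=6, drop_db=1.0))          # False
print(sustained_drop(one_bad_sample, window=6, drop_db=1.0))  # False
print(sustained_drop(drifting, window=6, drop_db=1.0))        # True
```

Averaging over a window means a single bad reading does not page anyone, while a shift that persists across the whole window does.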

Size for Ceph traffic and failure events, not just average throughput

The object storage network SFP sizing decision should reflect Ceph’s worst-case behavior: rebalance after OSD replacement, backfill after disk failures, and client read/write bursts. Instead of only checking “100G is enough,” estimate the recovery bandwidth needed and ensure your switch fabric and uplink oversubscription remain within safe bounds.

Best-fit scenario: a three-tier network with ToR-to-aggregation oversubscription of 2:1 and a planned Ceph recovery window. You should validate that even during backfill, per-host NIC utilization does not saturate and cause packet loss, which Ceph clients will experience as latency and backpressure.

- Pros: Prevents performance collapse during failure windows.

- Cons: Requires traffic modeling and careful switch configuration review.
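A first-pass sizing estimate needs only two pieces of arithmetic: the oversubscription ratio of a switch and the time needed to move a given amount of data at a sustained recovery rate. The sketch below uses hypothetical host counts, uplink counts, and data volumes, and it ignores protocol overhead and Ceph recovery throttling, so treat the result as a lower bound on recovery time.

```python
def oversubscription(hosts: int, host_gbps: float,
                     uplinks: int, uplink_gbps: float) -> float:
    """Ratio of host-facing capacity to uplink capacity on one switch."""
    return (hosts * host_gbps) / (uplinks * uplink_gbps)


def recovery_hours(data_tb: float, recovery_gbps: float) -> float:
    """Hours to move data_tb (decimal terabytes) at a sustained rate in Gb/s."""
    bits = data_tb * 8e12
    seconds = bits / (recovery_gbps * 1e9)
    return seconds / 3600.0


# Placeholder inputs: 24 hosts at 100G behind 12 x 100G uplinks,
# and 100 TB to backfill at a sustained 40 Gb/s.
print(oversubscription(hosts=24, host_gbps=100, uplinks=12, uplink_gbps=100))
print(round(recovery_hours(data_tb=100, recovery_gbps=40), 2))
```

If the recovery time at the bandwidth you can actually spare is longer than the window you planned, either the oversubscription ratio or the recovery window has to change.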

Common mistakes and troubleshooting for object storage network SFP

Even strong engineering teams hit predictable failure modes. Below are common pitfalls with root causes and practical solutions, aligned to how Ceph clusters behave under load.

- Mistake: Assuming MMF reach from datasheets without checking patch loss and connector quality.

  Root cause: Patch panels, adapter cleanliness, and aging connectors can add several dB beyond commissioning measurements.

  Solution: Use an OTDR or certified loss test at install, clean connectors before swapping optics, and keep spare patch cords for fast A/B testing.

- Mistake: Deploying third-party optics without validating DOM telemetry and thresholds.

  Root cause: DOM values can differ in scaling/units, causing alerts to trigger too late or too often.

  Solution: In staging, confirm DOM fields map correctly in your telemetry pipeline and adjust thresholds for sustained drift detection.

- Mistake: Ignoring switch port mode requirements for 100G lane mapping.

  Root cause: Some platforms expect specific lane ordering or break-out settings; mismatches can lead to link flaps or negotiated-down behavior.

  Solution: Verify port configuration against the switch optics guide and confirm link speed and FEC mode after deployment.

- Mistake: Underestimating thermal impact during Ceph recovery storms.

  Root cause: High sustained traffic raises ambient and module temperature; optics may temporarily drop out when they exceed operating limits.

  Solution: Validate rack airflow, monitor transceiver temperature via DOM, and schedule maintenance around seasonal airflow changes.

Real-world deployment scenario: 100G Ceph with object storage network SFP

In a leaf-spine data center topology, a team deployed 48-port 100G ToR switches connecting to 96 OSD hosts using 100G uplinks. Each OSD host had a single 100G NIC and connected to the ToR via OM4 MPO cabling measured at 52m from server patch panel to switch patch bay. They initially rolled out third-party SR4 optics, then ran into sporadic link drops during Ceph backfill after an OSD replacement; DOM showed received power drifting downward over 48 hours in two racks. After connector cleaning, patch cord replacement, and re-tuning alert thresholds for sustained DOM drift, link stability returned and Ceph recovery time improved because the cluster stopped triggering client retry storms.

Selection criteria checklist engineers actually use

When you need to choose object storage network SFP modules quickly for Ceph, work this list in order. It compresses validation time and reduces backtracking later.

- Distance and fiber type: measured link length, OM4/OM3 grades, or SMF specs; confirm patch loss budget.

- Data rate and Ethernet PHY match: ensure the transceiver is aligned to 100GBASE-SR4 or LR4 as required by your ports.

- Switch compatibility: confirm exact switch model and port support; validate FEC and any required settings.

- DOM support: confirm telemetry ingestion, units, and threshold behavior in your monitoring stack.

- Operating temperature and thermal headroom: verify transceiver operating range vs rack ambient and airflow plan.

- Vendor lock-in risk: compare OEM vs third-party availability, RMA workflow, and your ability to stage-test quickly.

- Spare strategy: decide how many optics you keep per rack or per switch to minimize downtime during failures.

- Ceph traffic headroom: validate recovery-bandwidth needs and ensure uplink oversubscription won’t create loss.

Cost and ROI note for object storage network SFP in Ceph

For 100G SR4 optics, typical street pricing often lands in the low-to-mid hundreds of dollars per module for third-party and higher for OEM, while LR4 can be significantly higher depending on vendor and supply. TCO should include: (1) optics cost, (2) labor and downtime risk during swaps, (3) cleaning and patch management, and (4) potential performance impact if link flaps extend Ceph recovery windows. In practice, teams that stage-test and invest in fiber hygiene often reduce incident-driven labor enough that the price delta between OEM and third-party optics is no longer the deciding factor.
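The TCO components listed above can be turned into a simple comparison. Everything in this sketch is a placeholder: the class `OpticsOption`, the prices, labor rate, incident counts, and hygiene costs are hypothetical inputs, and the performance impact of extended recovery windows (item 4) is not modeled.

```python
from dataclasses import dataclass


@dataclass
class OpticsOption:
    """Hypothetical cost inputs for one sourcing option."""
    unit_price: float             # price per module
    staging_hours: float          # one-time validation labor
    incidents_per_year: float     # expected optics-related incidents
    hours_per_incident: float     # labor per incident, including swaps
    hygiene_cost_per_year: float  # cleaning supplies and spare patch cords


def total_cost(option: OpticsOption, ports: int, years: float,
               labor_rate: float) -> float:
    """Sum optics, staging, incident labor, and fiber hygiene over the period."""
    optics = ports * option.unit_price
    staging = option.staging_hours * labor_rate
    incidents = (option.incidents_per_year * option.hours_per_incident
                 * labor_rate * years)
    hygiene = option.hygiene_cost_per_year * years
    return optics + staging + incidents + hygiene


# Placeholder inputs only; substitute your own quotes and incident history.
oem = OpticsOption(unit_price=600, staging_hours=8, incidents_per_year=2,
                   hours_per_incident=4, hygiene_cost_per_year=500)
third_party = OpticsOption(unit_price=300, staging_hours=40, incidents_per_year=6,
                           hours_per_incident=4, hygiene_cost_per_year=500)

for name, option in (("OEM", oem), ("third-party", third_party)):
    print(name, total_cost(option, ports=200, years=3, labor_rate=100))
```

The useful part of this exercise is the sensitivity, not the totals: changing the incident rate or staging hours shows how much validation effort a given unit-price saving can absorb.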

Summary ranking table: fastest path to a safe object storage network SFP choice

Use this table to rank options during evaluation. “Best” assumes short reach, stable fiber hygiene, and verified switch compatibility; adjust based on your measured distance and monitoring maturity.

| Rank | Option | Best for | Main advantage | Main risk |
|---|---|---|---|---|
| 1 | 100GBASE-SR4 on OM4 with verified switch support | Sub-100m Ceph uplinks | Lower cost and simpler cabling | Connector cleanliness and patch loss |
| 2 | 100GBASE-LR4 on SMF with validated DOM | Longer intra-facility runs | More forgiving fiber distance | Higher module cost |
| 3 | OEM 100G optics with strict compatibility | Mixed switch environments | Lowest compatibility friction | Higher capex |
| 4 | Third-party optics after staging validation | Large rollouts with test capacity | Lower capex | DOM and firmware quirks |

FAQ

Q1: What does object storage network SFP mean in a Ceph context?

A: It refers to the transceiver modules and optical interfaces that carry Ceph client, replication, and recovery traffic between storage nodes and switches. In 100G designs, you typically use 100G optics (